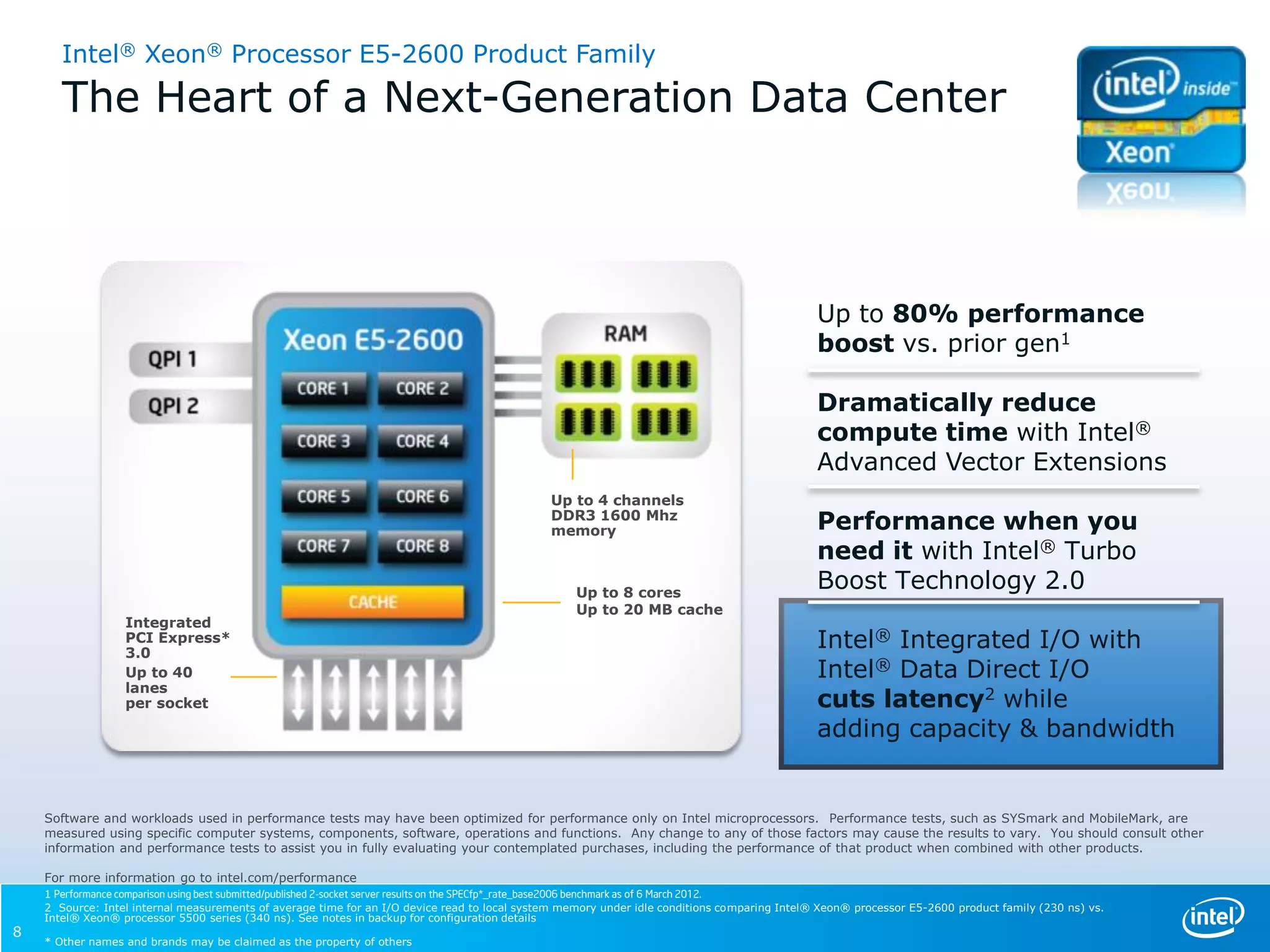

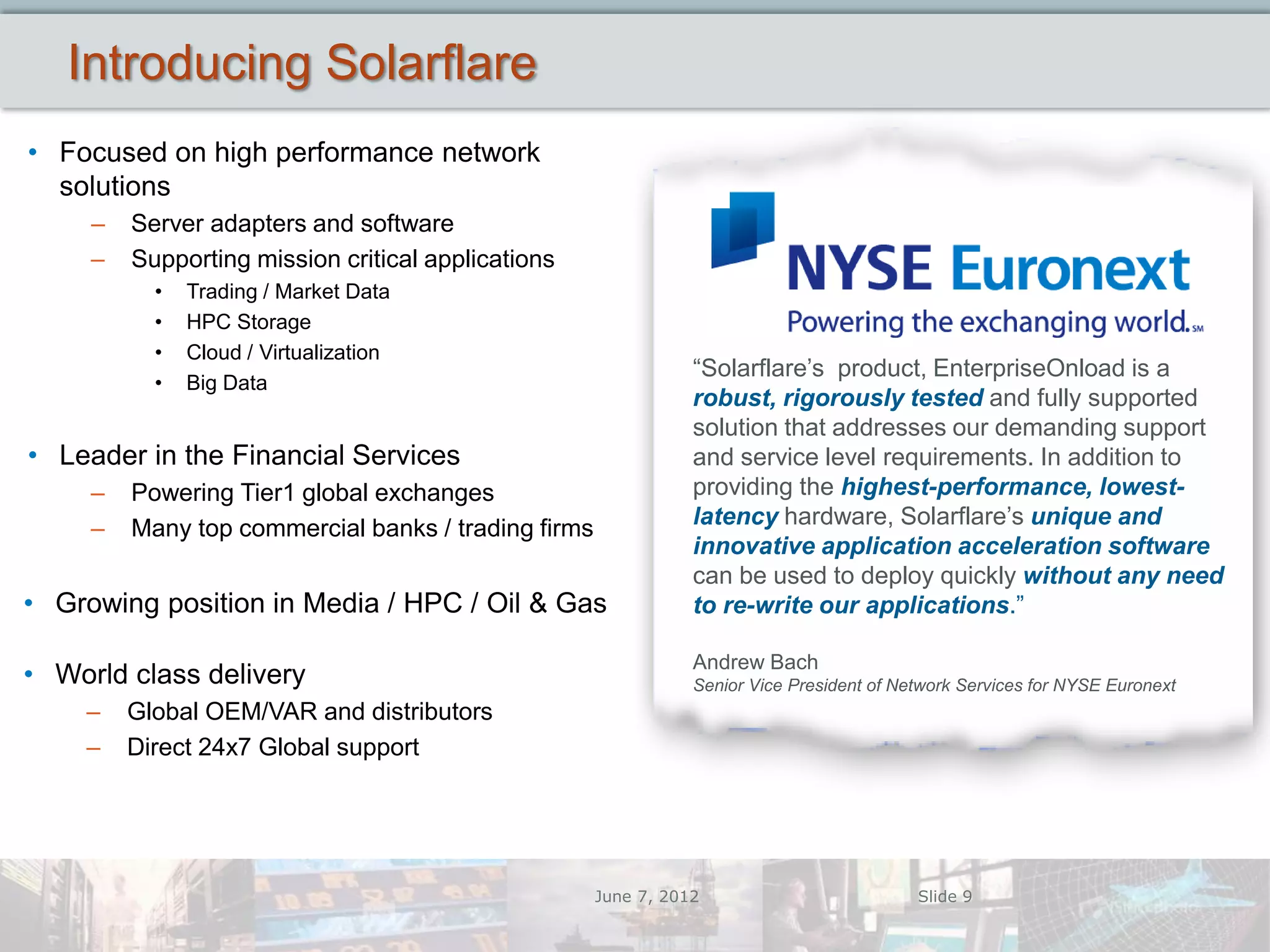

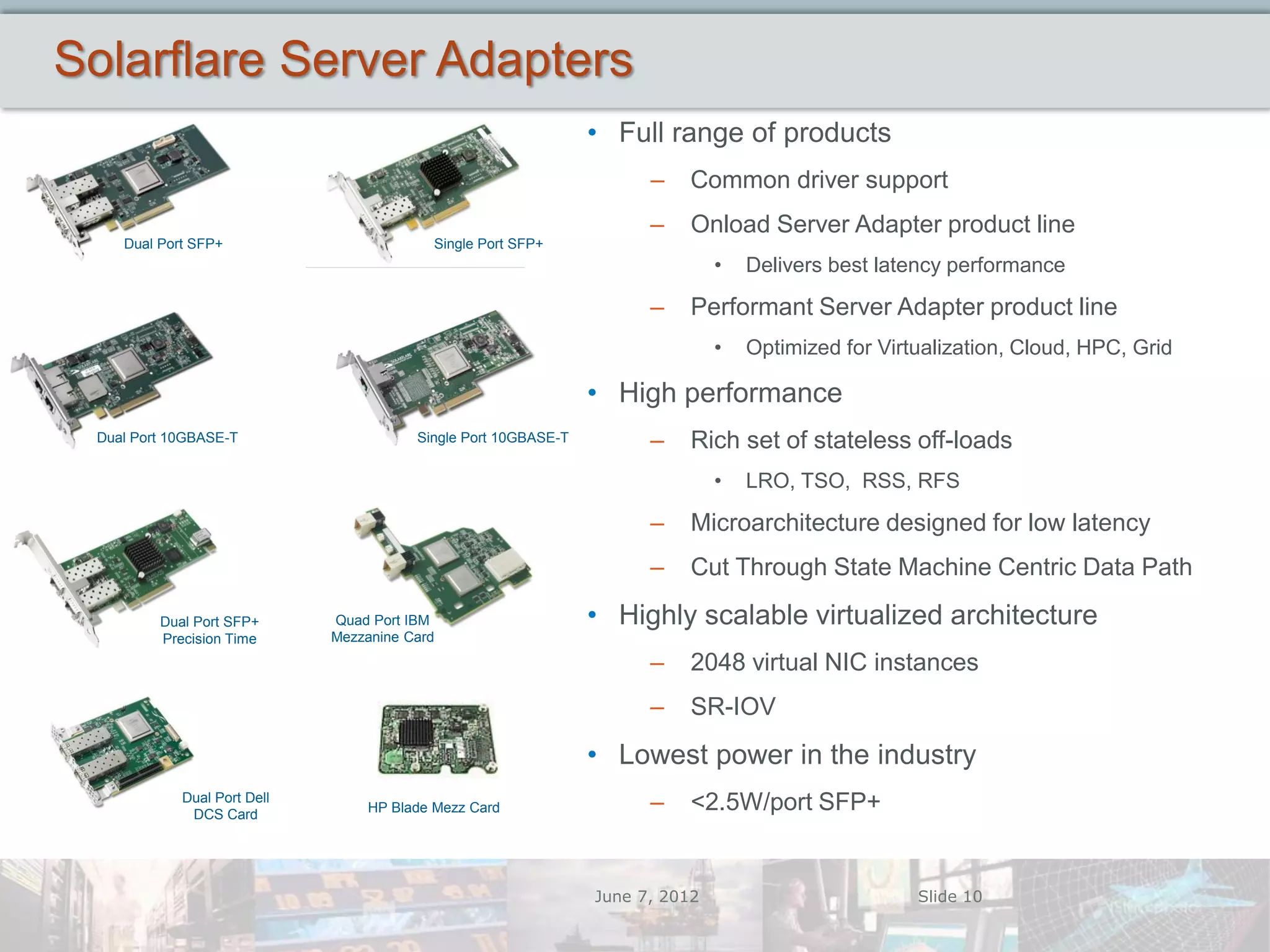

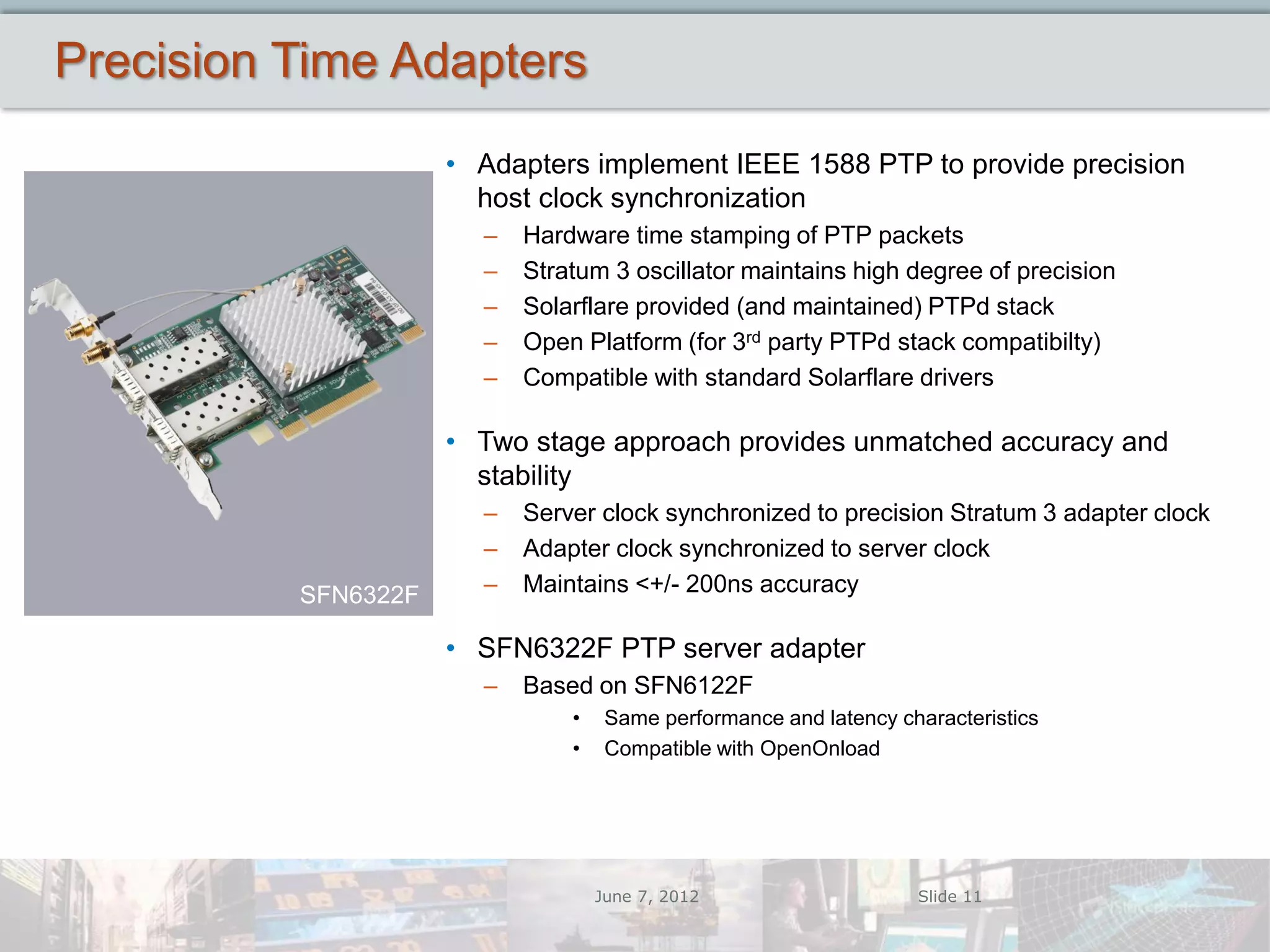

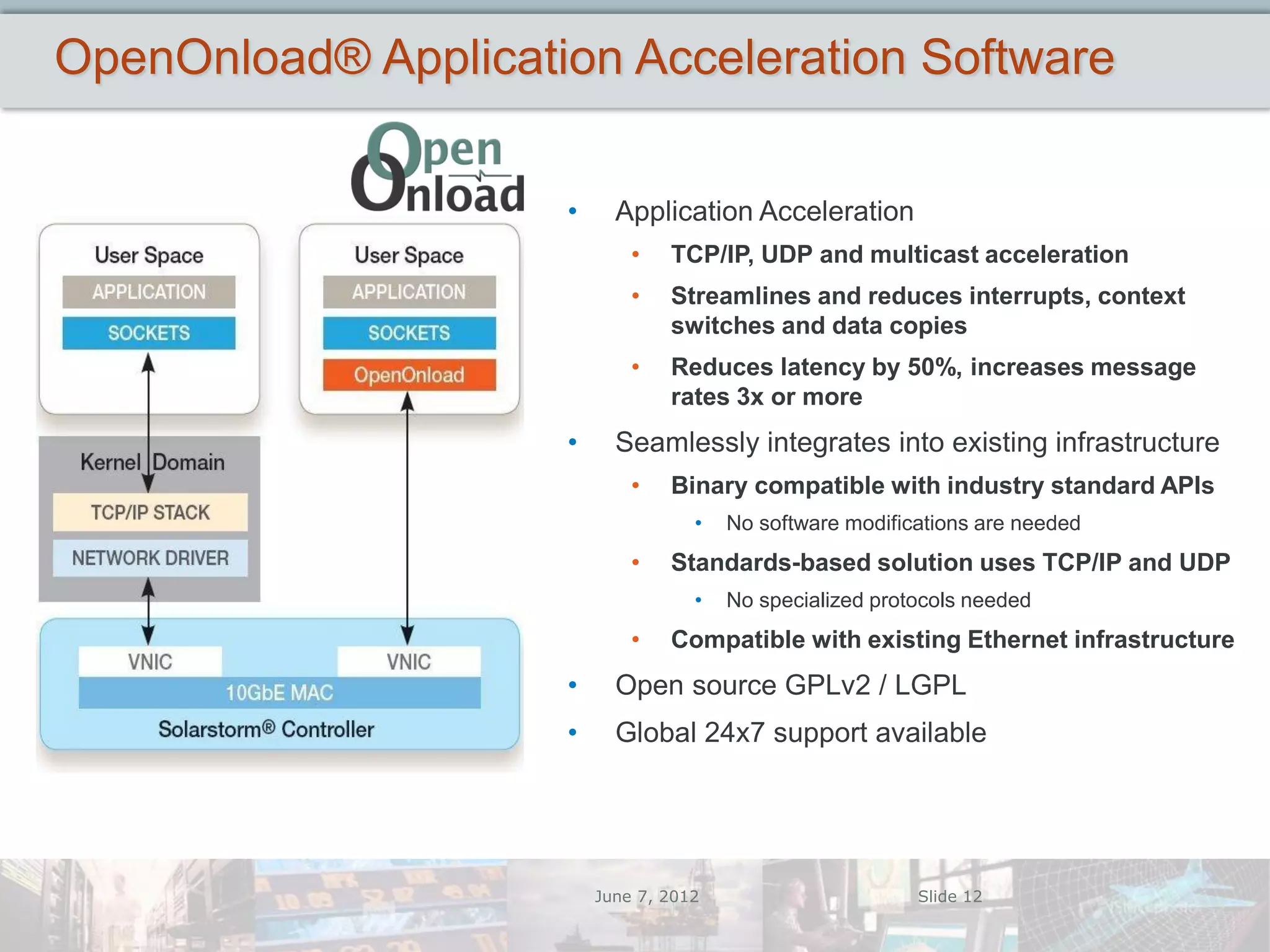

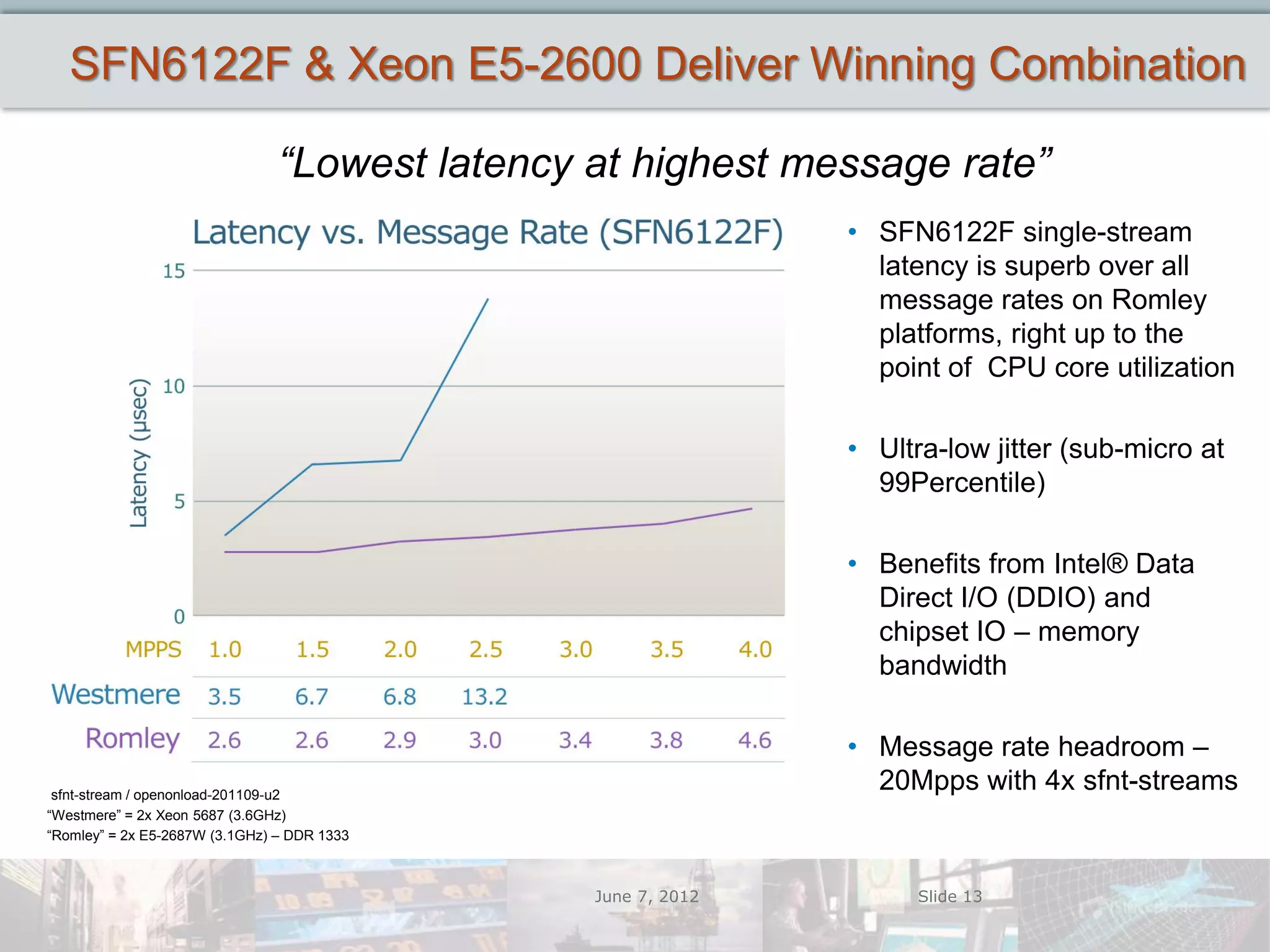

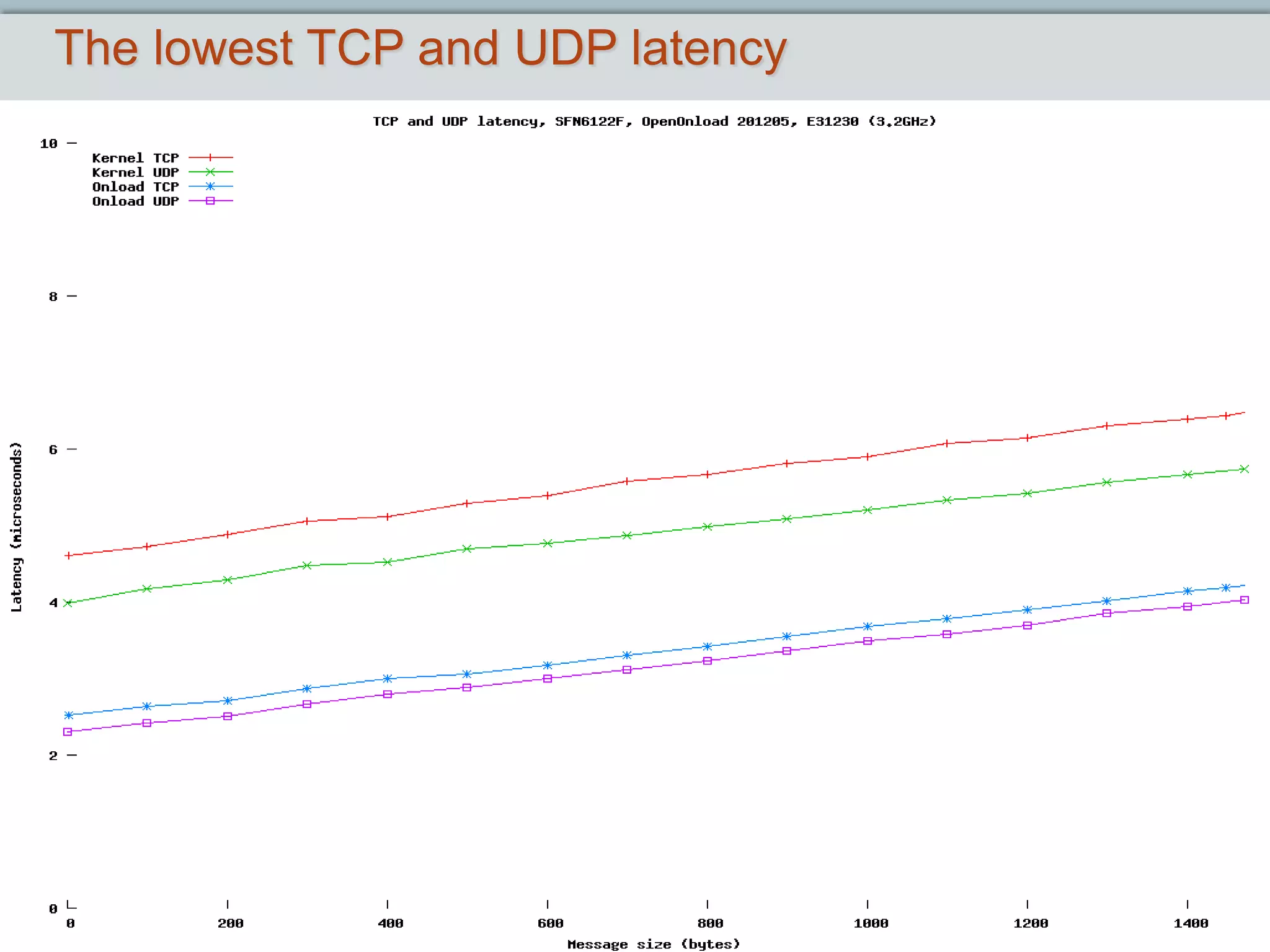

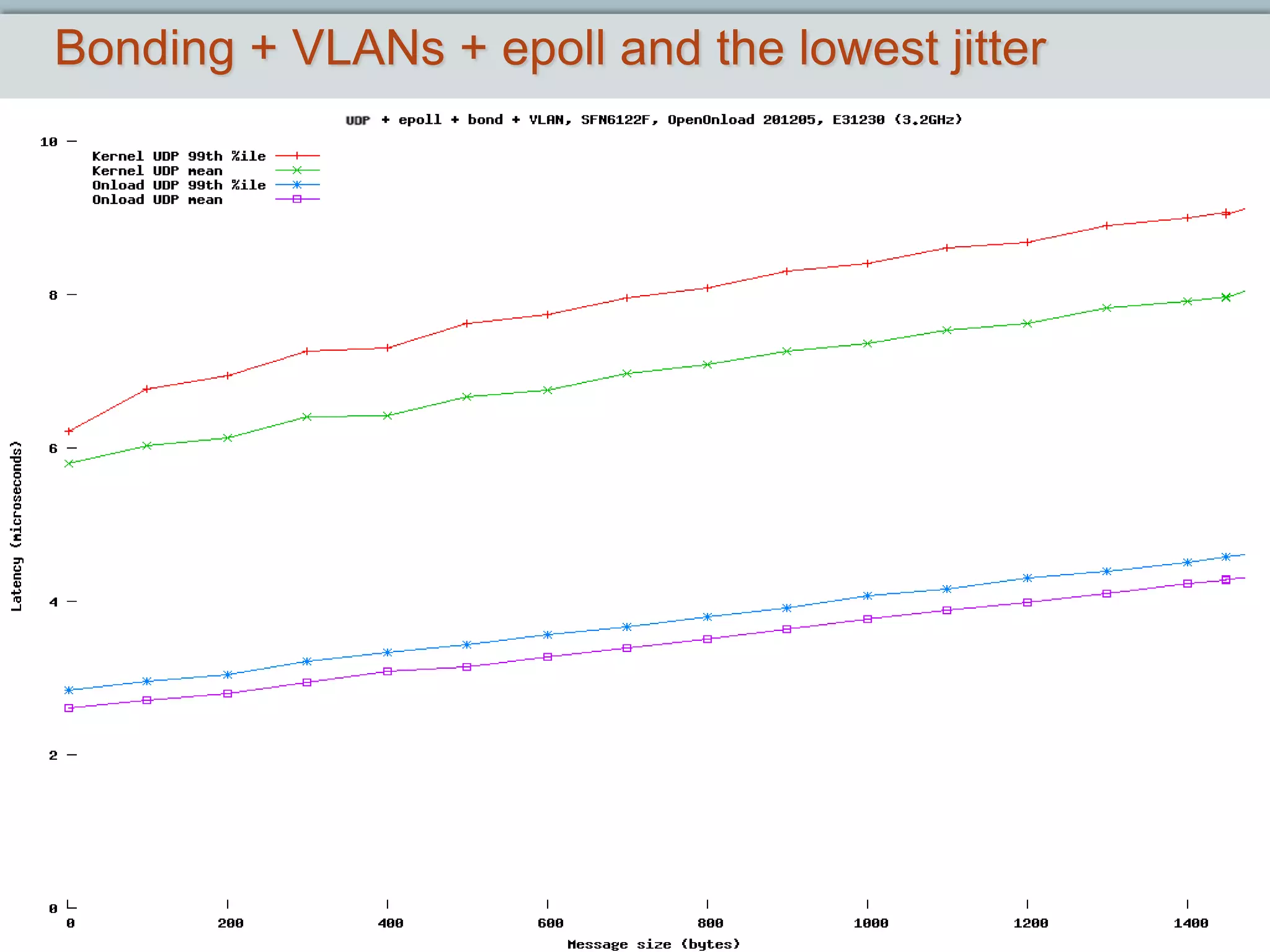

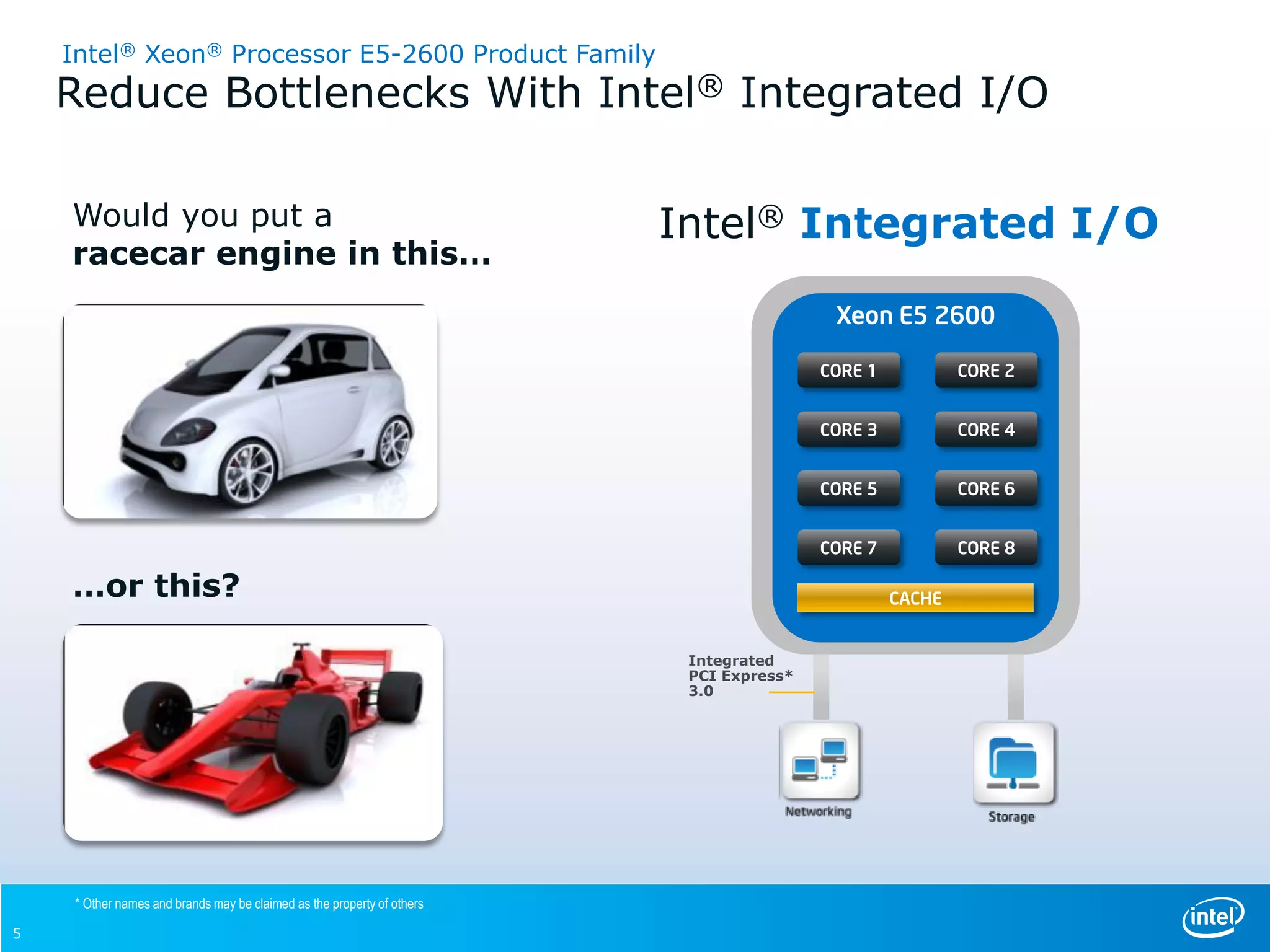

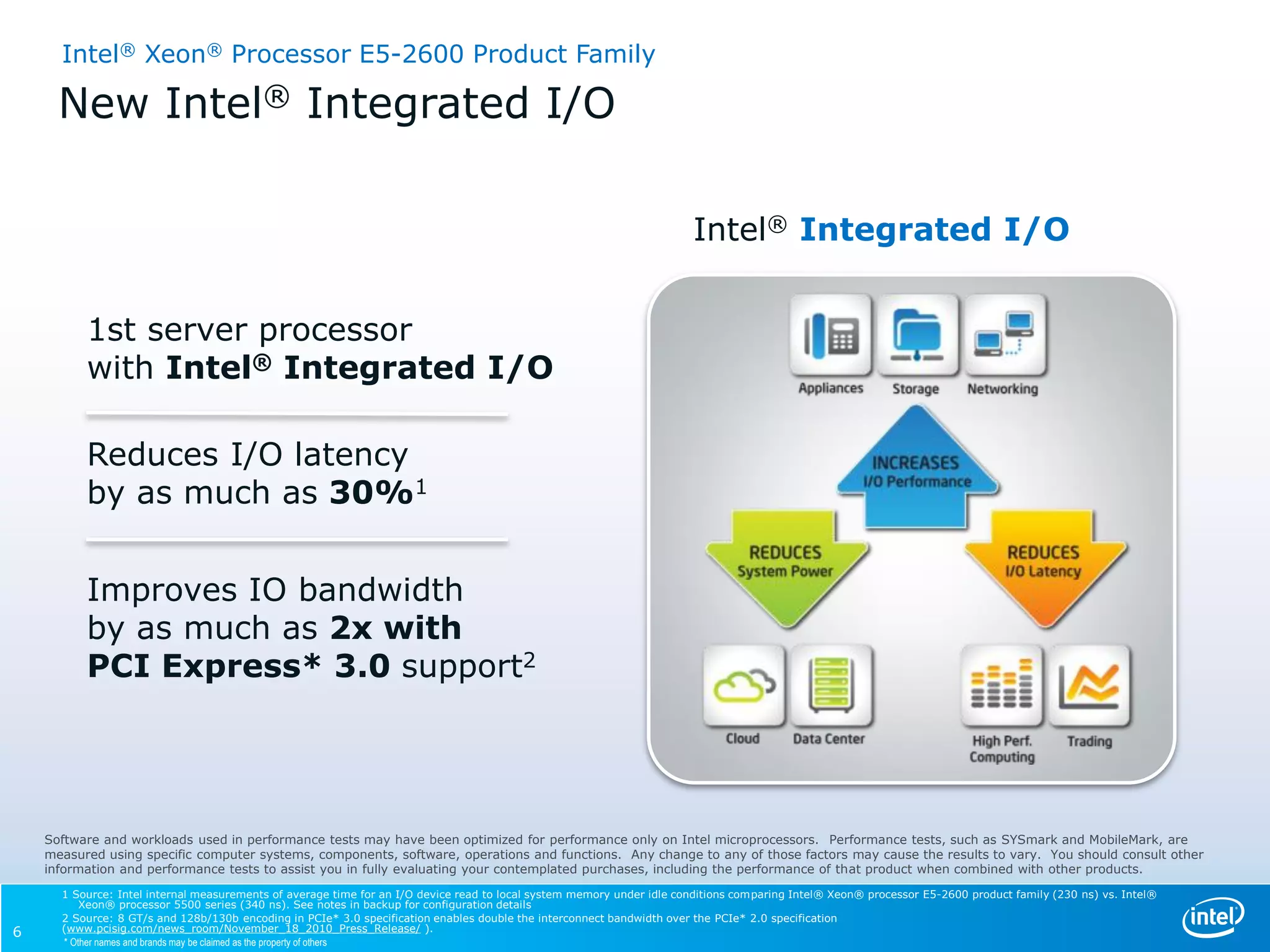

The webinar gave an overview of how the Intel Xeon E5-2600 processor and Solarflare network adapters can together achieve the lowest latencies at the highest message rates. The agenda covered the Intel Xeon E5-2600 platform features that reduce latency, such as integrated I/O and Data Direct I/O (DDIO). Solarflare's adapters and OpenOnload software were presented as the means of optimizing network performance. The presenters emphasized that combining the Intel processors with the Solarflare products reduces jitter and increases message rates. The webinar concluded with a Q&A session.

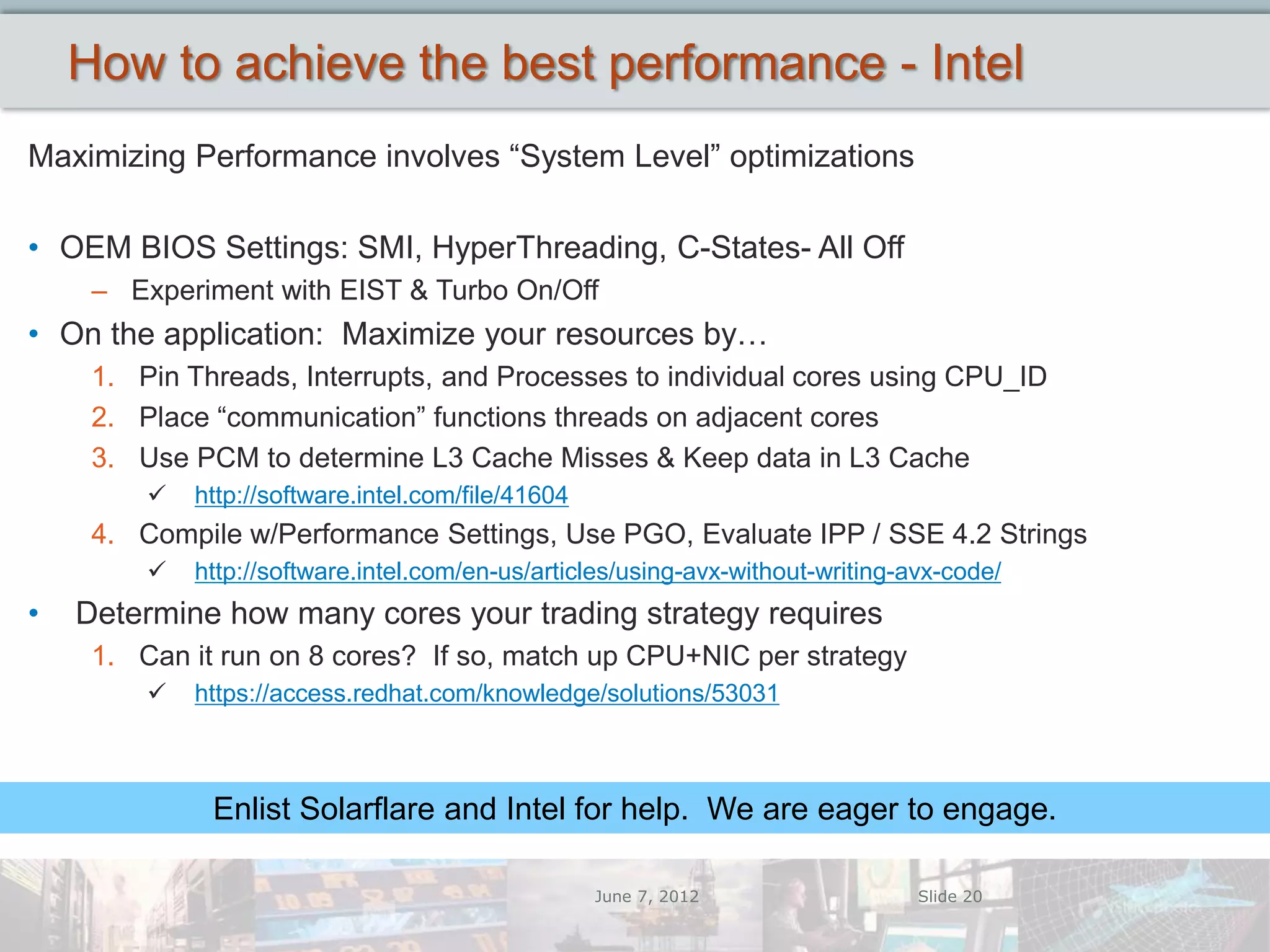

![Intel® Xeon® Processor E5-2600 Product Family: New Intel® Data Direct I/O Technology (Intel® DDIO)](https://image.slidesharecdn.com/solarflareintelwebcastjune072012-120607103428-phpapp02/75/Achieving-Lowest-Latencies-at-Highest-Message-Rates-Solarflare-Intel-webcast-7-2048.jpg)

- Can more than double I/O performance¹
- Send I/O directly to and from processor cache for all I/O traffic types
- Can allow system memory to remain in low power state
- Reduce latency by eliminating unneeded trips to memory

Chart: transactions per second, Xeon 2600 Family vs. Xeon 5600 Series.

Software and workloads used in performance tests may have been optimized for performance only on Intel microprocessors. Performance tests, such as SYSmark and MobileMark, are measured using specific computer systems, components, software, operations and functions. Any change to any of those factors may cause the results to vary. You should consult other information and performance tests to assist you in fully evaluating your contemplated purchases, including the performance of that product when combined with other products.

¹ Up to 2.3x I/O performance is 1S with a Xeon processor 5600 series vs. 1S Xeon Processor E5-2600 data for L2 forwarding test using 8x10GbE ports. See notes in backup for configuration details.