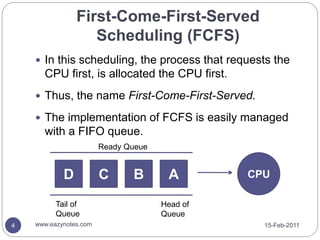

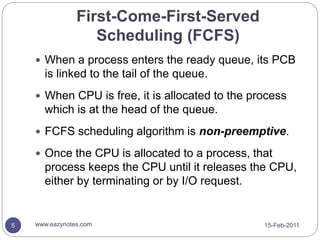

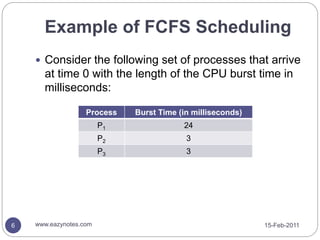

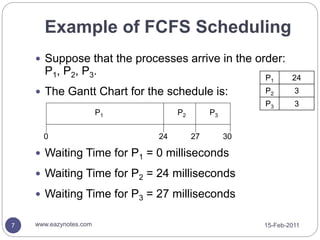

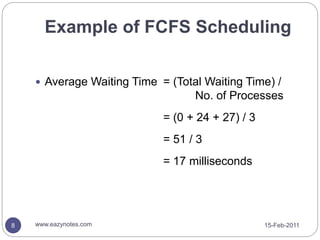

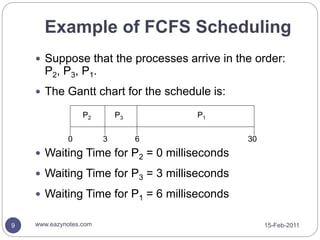

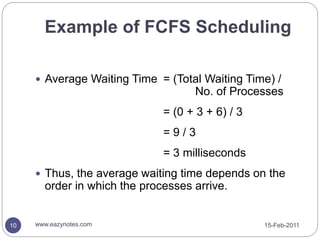

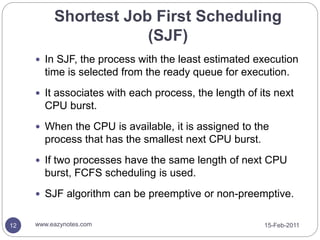

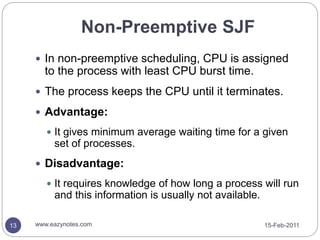

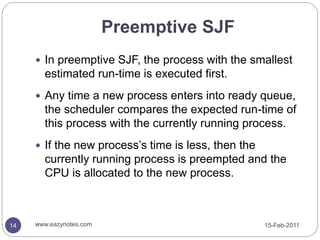

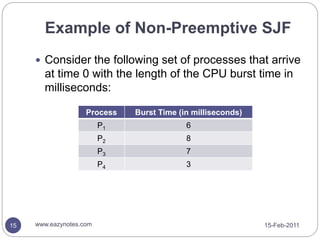

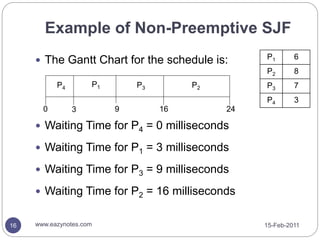

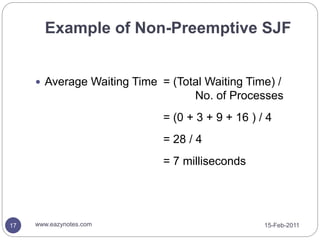

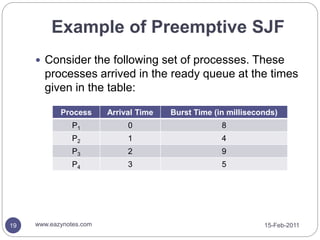

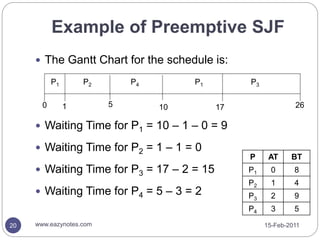

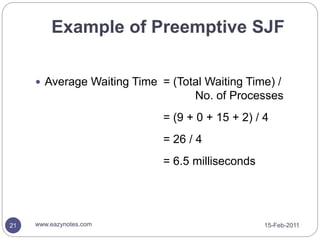

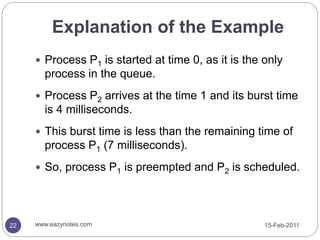

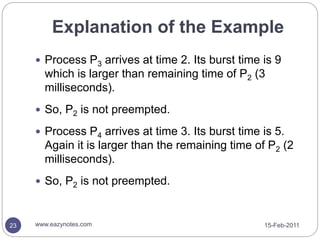

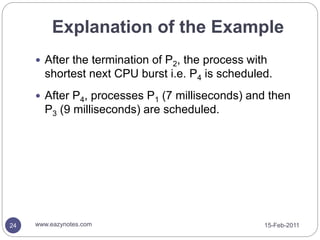

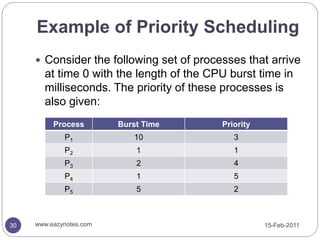

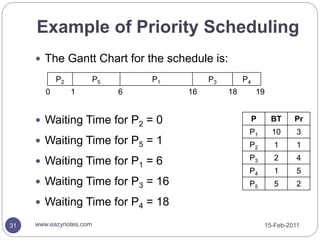

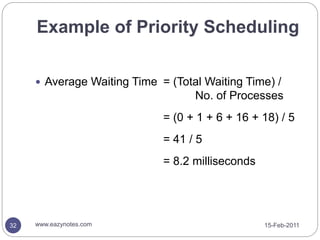

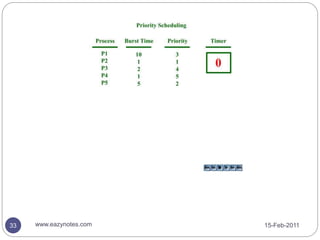

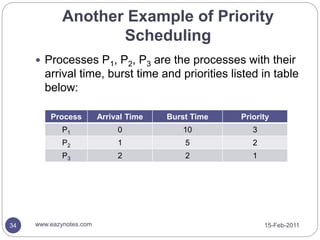

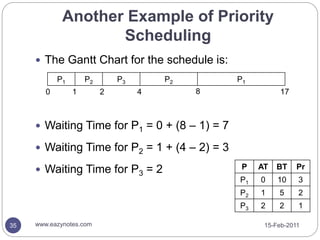

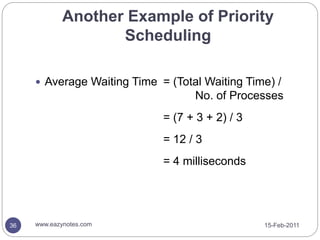

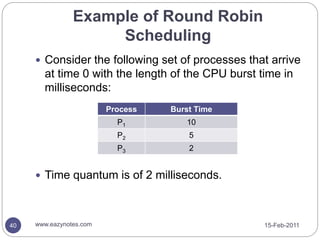

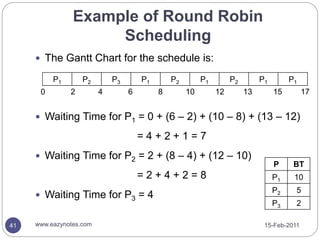

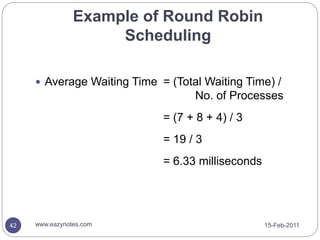

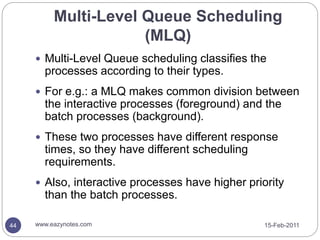

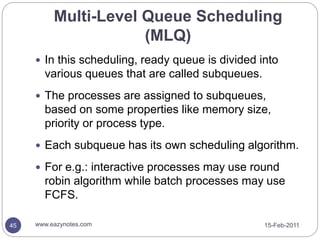

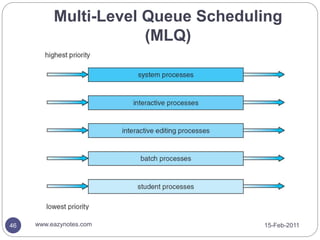

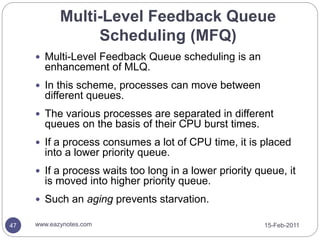

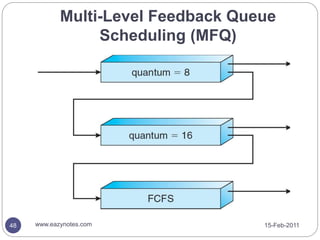

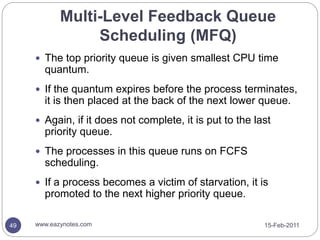

This document covers five CPU scheduling algorithms: First Come First Serve (FCFS), Shortest Job First (SJF), Priority Scheduling, Round Robin Scheduling, and Multi-Level Queue Scheduling. Examples illustrate how each algorithm works. The key points are how each algorithm decides which process gets the CPU next, whether it is preemptive, and how to calculate waiting times.
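As a rough illustration of the waiting-time calculations mentioned above, the following Python sketch computes per-process waiting times for FCFS, non-preemptive SJF, and Round Robin. It is a simplified model, not the slides' own worked examples: it assumes every process arrives at time 0 and ignores context-switch overhead. The function names, the burst times, and the quantum value are all hypothetical choices made for this example.

```python
from collections import deque


def fcfs_waiting_times(bursts):
    """FCFS: processes run in list order; each waits for all earlier bursts."""
    waits, clock = [], 0
    for burst in bursts:
        waits.append(clock)   # time spent waiting before first (and only) run
        clock += burst
    return waits


def sjf_waiting_times(bursts):
    """Non-preemptive SJF: shortest burst runs first (ties keep list order)."""
    order = sorted(range(len(bursts)), key=lambda i: bursts[i])
    waits, clock = [0] * len(bursts), 0
    for i in order:
        waits[i] = clock
        clock += bursts[i]
    return waits


def round_robin_waiting_times(bursts, quantum):
    """Round Robin: each process runs for at most `quantum` units per turn."""
    if quantum <= 0:
        raise ValueError("quantum must be positive")
    remaining = list(bursts)
    waits = [0] * len(bursts)
    queue = deque(range(len(bursts)))
    clock = 0
    while queue:
        i = queue.popleft()
        run = min(quantum, remaining[i])
        clock += run
        remaining[i] -= run
        if remaining[i] > 0:
            queue.append(i)                 # preempted: back of the queue
        else:
            waits[i] = clock - bursts[i]    # completion time minus CPU time
    return waits


# Hypothetical workload: three processes with bursts of 24, 3, and 3 units.
bursts = [24, 3, 3]
print(fcfs_waiting_times(bursts))             # [0, 24, 27]
print(sjf_waiting_times(bursts))              # [6, 0, 3]
print(round_robin_waiting_times(bursts, 4))   # [6, 4, 7]
```

On this workload the average waiting time is 17 units under FCFS, 3 under SJF, and about 5.67 under Round Robin with a quantum of 4, which shows how strongly execution order affects waiting time when a long job is queued ahead of short ones.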