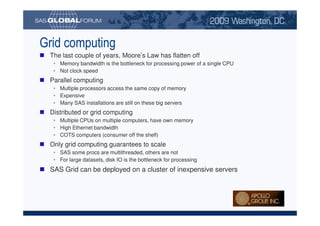

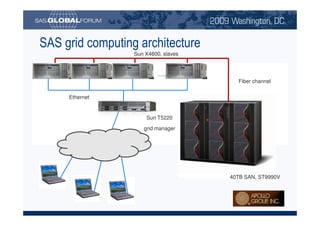

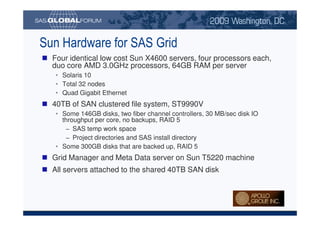

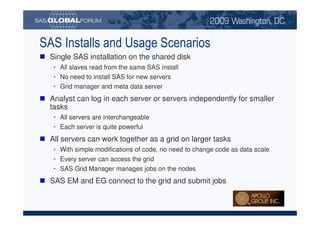

The University of Phoenix uses SAS Grid Computing to handle large-scale data processing for marketing and student retention, drawing insights from extensive datasets. Built on a cluster of cost-effective servers, the system is scalable and fault-tolerant and supports sophisticated analyses, which in turn improve educational experiences and operational efficiency. Key advantages include fast processing of very large data volumes, optimized model performance, and support for a wide range of analytical tasks.