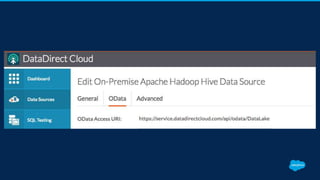

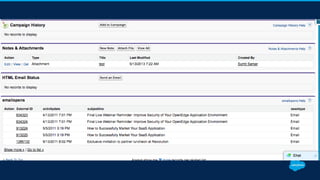

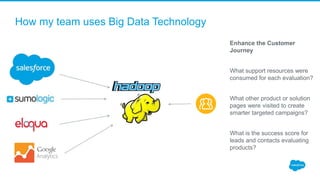

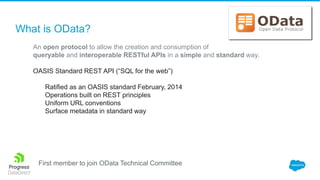

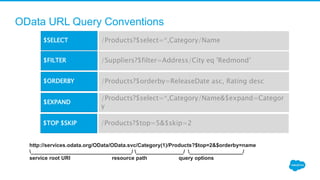

The document introduces Salesforce Connect, which gives Salesforce real-time access to external big data without duplicating it. It covers key components such as OData, best practices for accessing big data from Salesforce, and lessons learned during implementation. It also emphasizes performance tuning for external objects and the importance of agile data governance.

![Demo (lookups, search, write, reporting): a data lake behind the Progress corporate firewall, exposed over OData through the D2C On-premises Connector using HTTPS and UDP firewall traversal](https://image.slidesharecdn.com/df16-bigdata-161005045443/85/Salesforce-External-Objects-for-Big-Data-15-320.jpg)