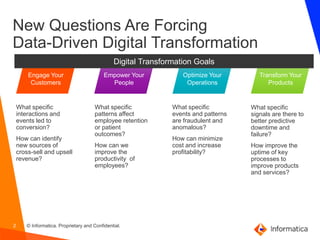

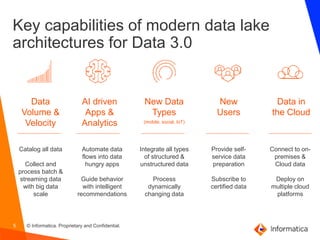

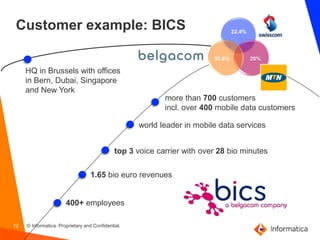

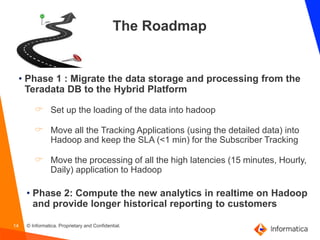

This document discusses building intelligent data lakes and the challenges of data-driven digital transformation. It outlines the goals of engaging customers, optimizing operations, transforming products, and empowering employees. It then describes the generational market disruption underway and the challenges posed by data volume and velocity, new users, new data types, and data in the cloud. It presents key capabilities of modern data lake architectures that address these challenges, and recommends building a data catalog, using an abstraction layer, and choosing a tightly integrated platform. It also provides an example customer, BICS, and its roadmap for migrating data storage and processing from Teradata to a hybrid platform.