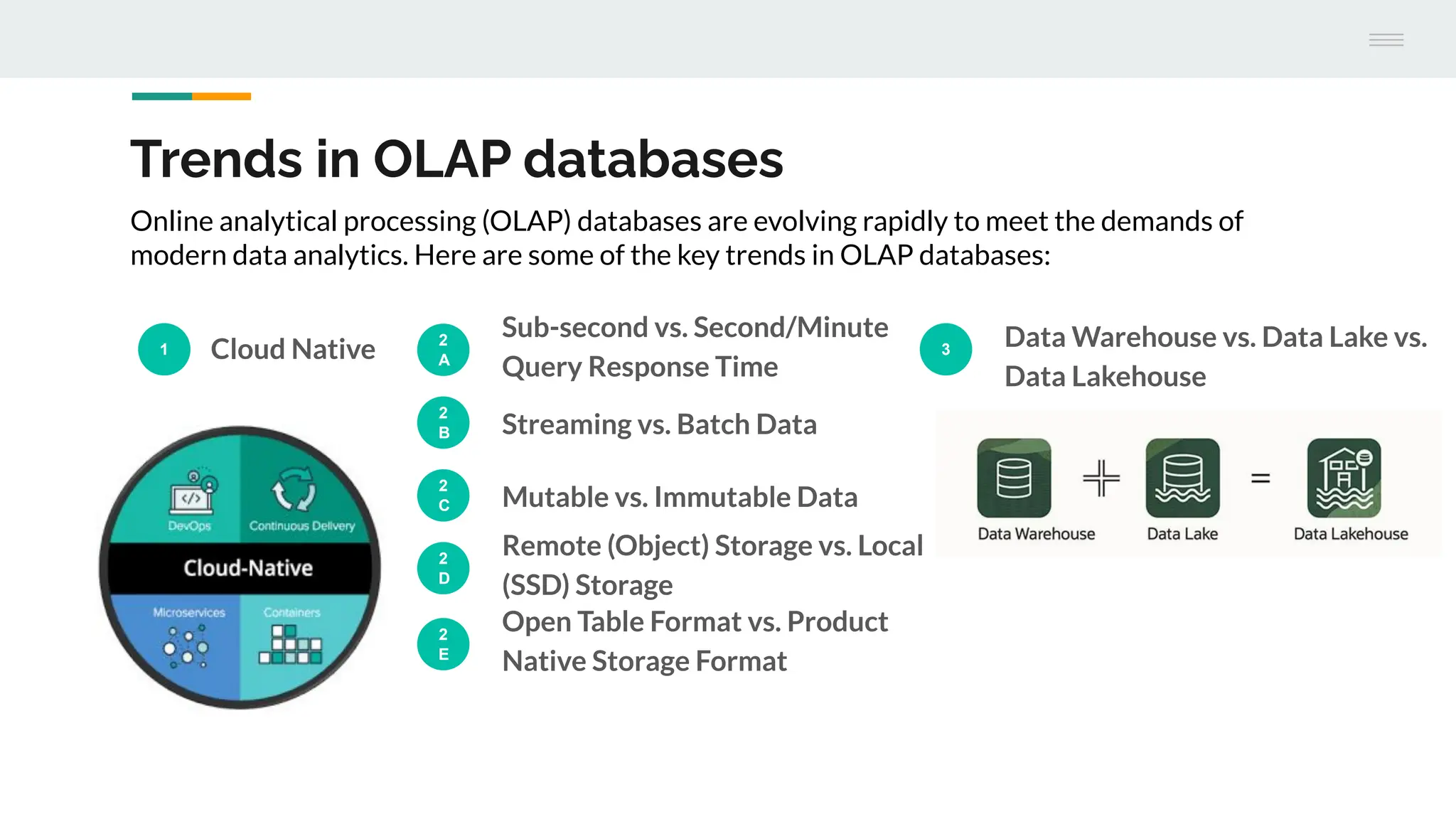

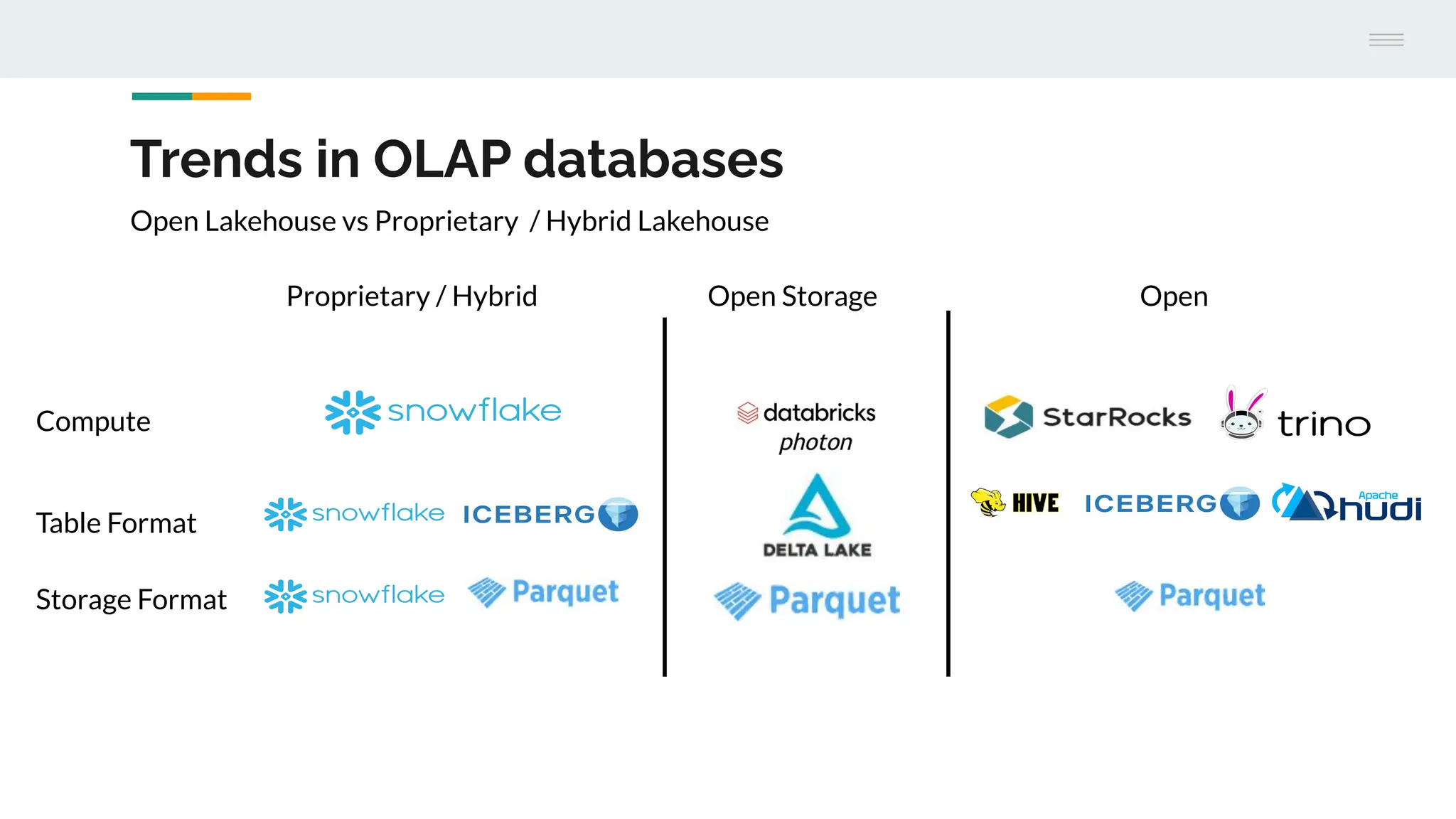

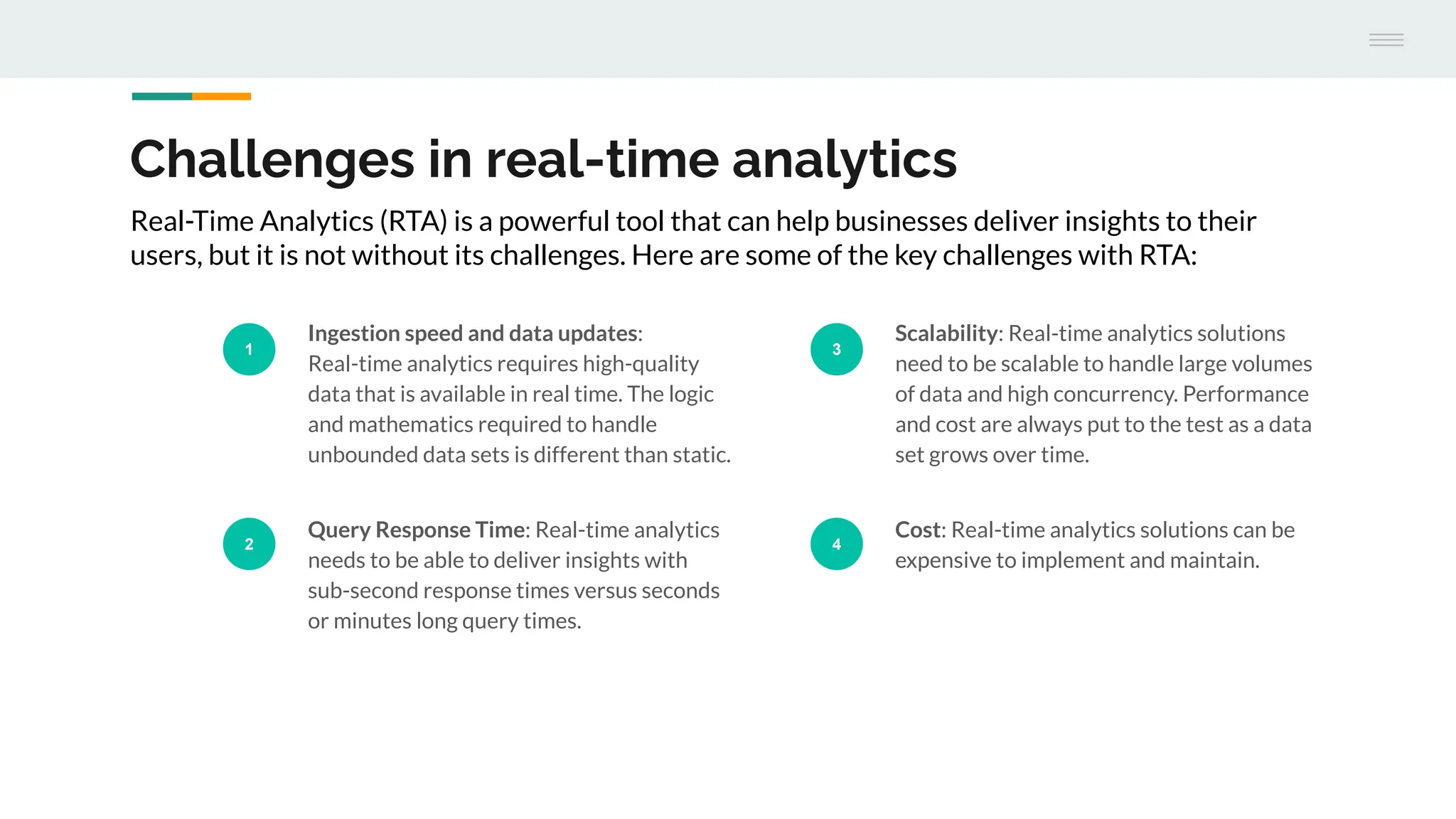

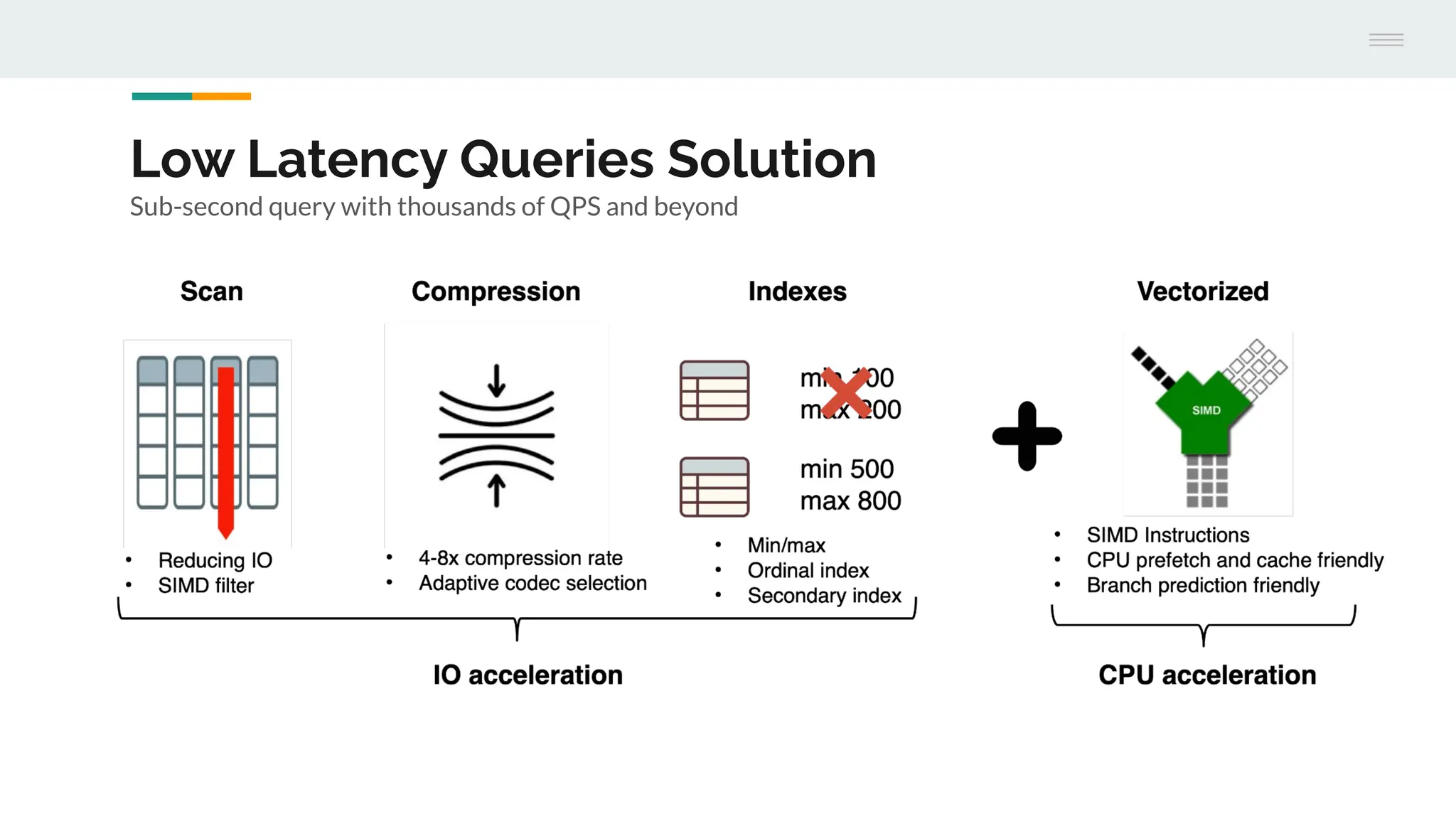

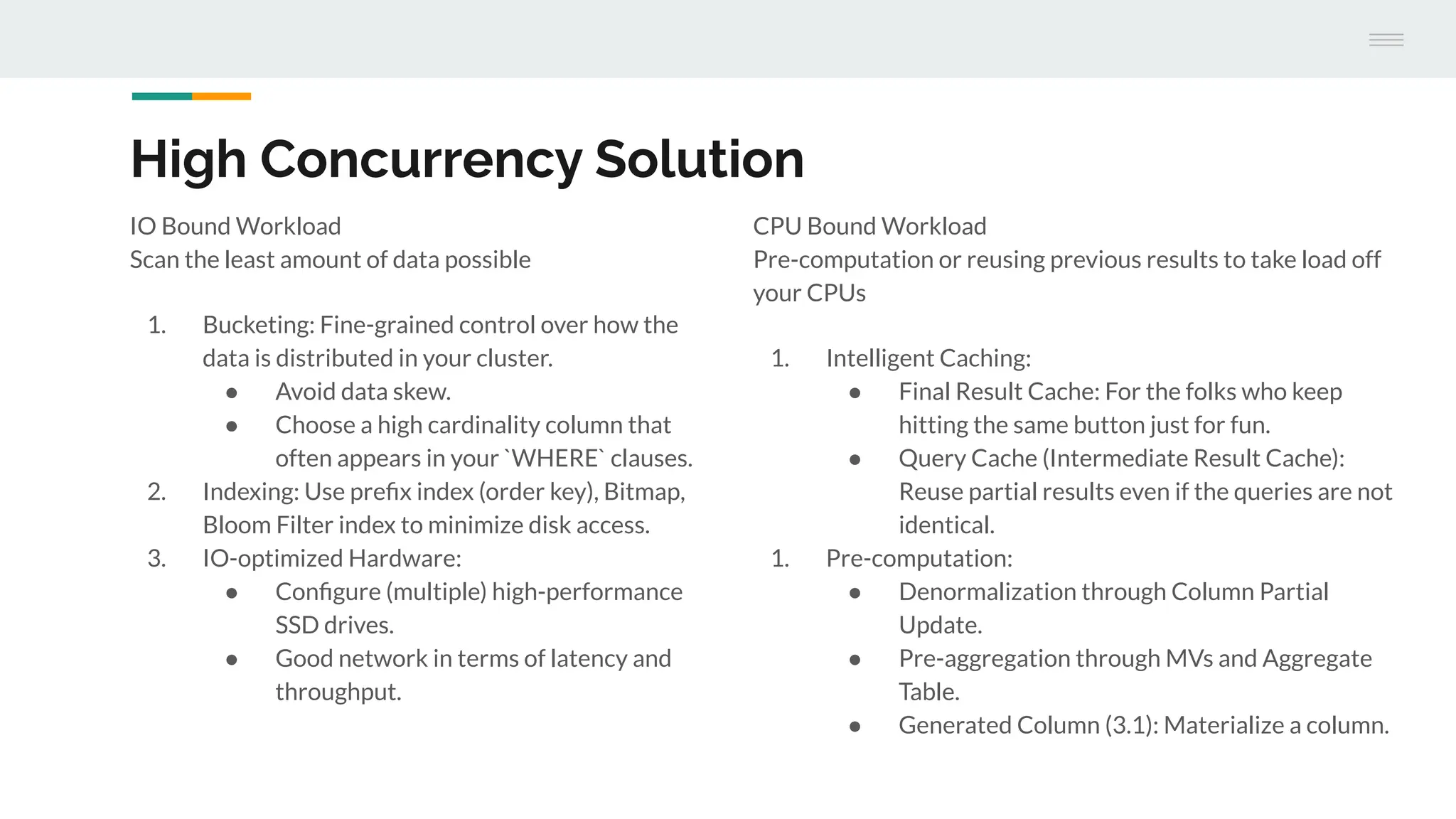

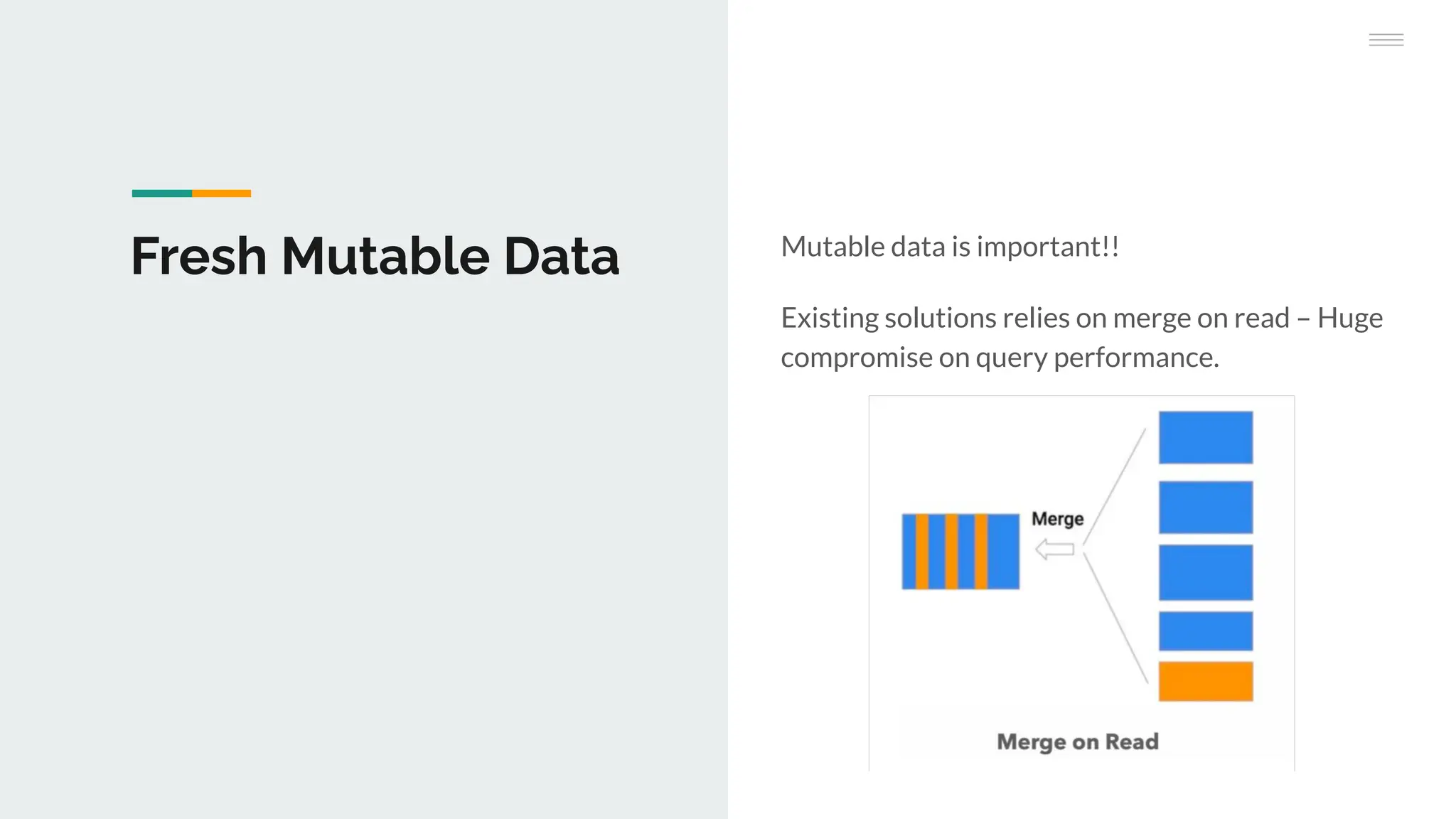

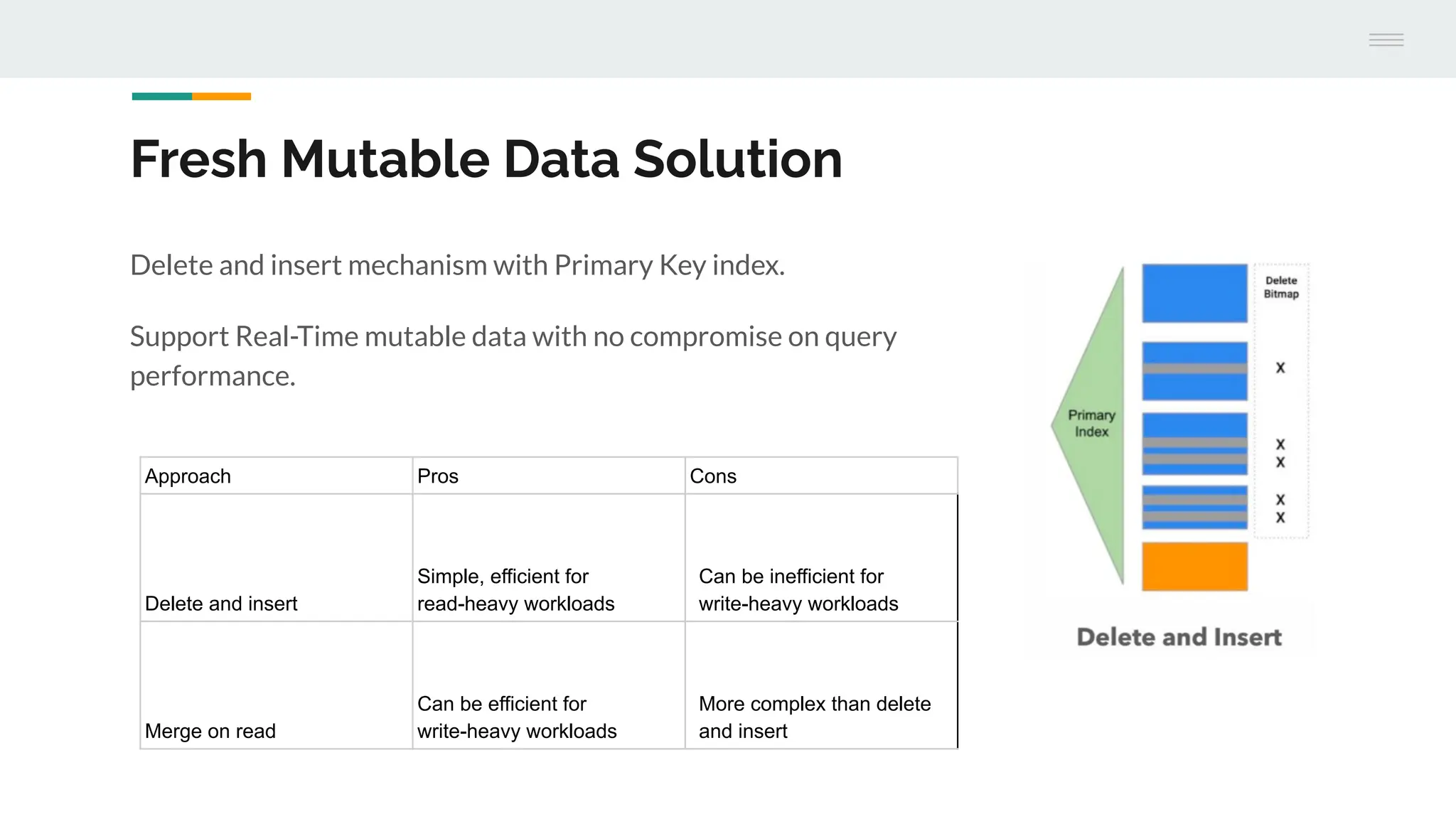

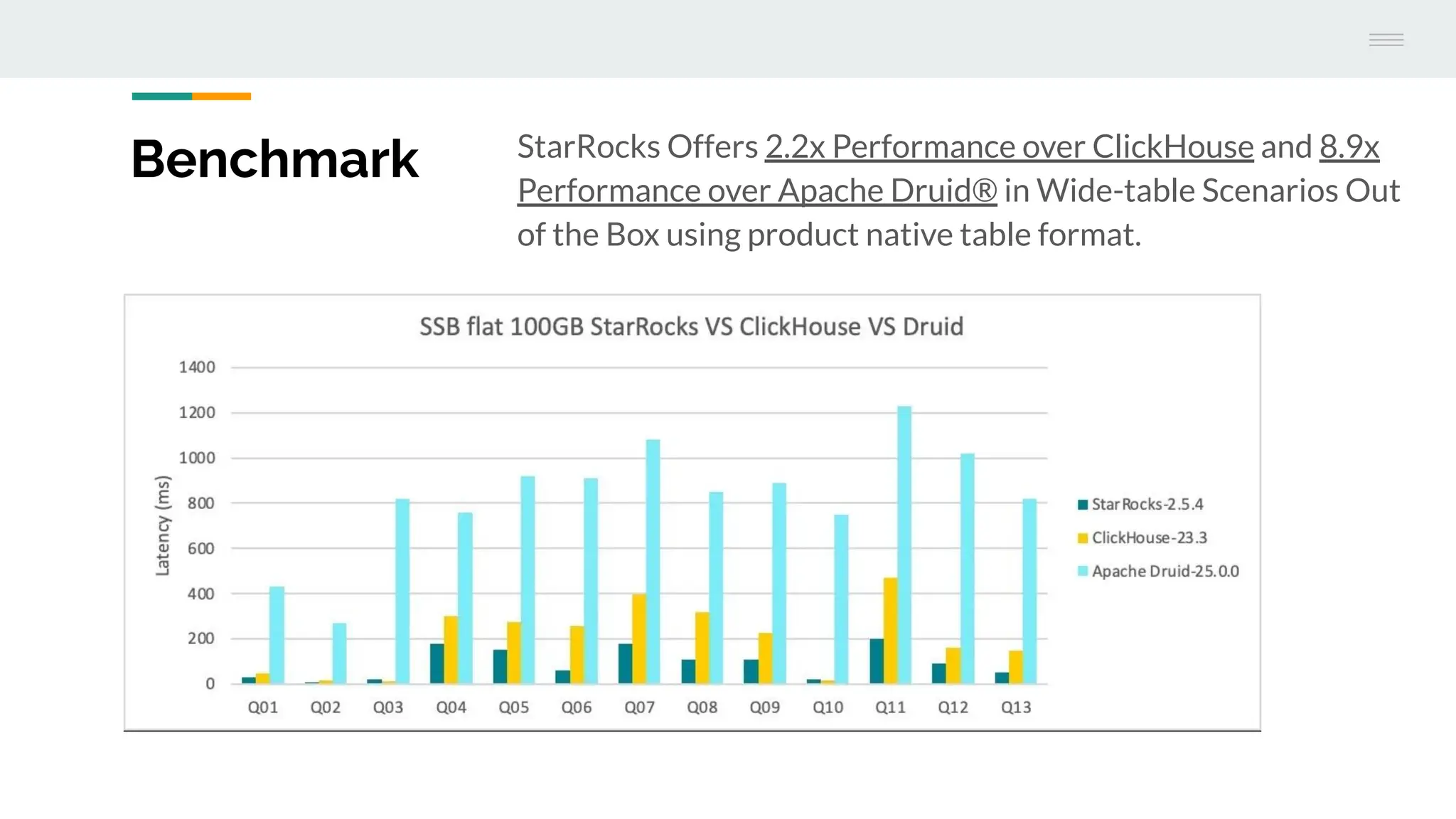

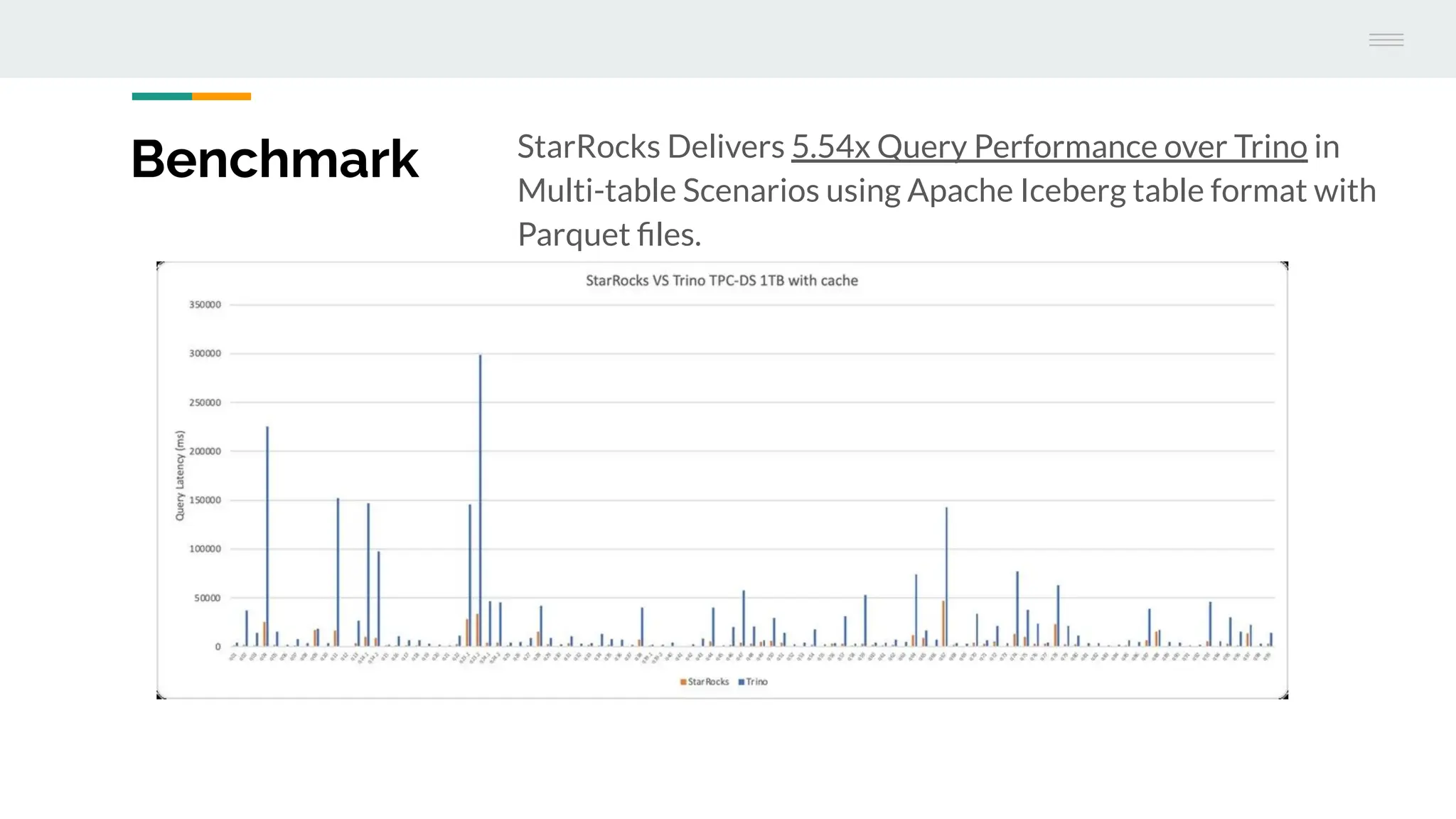

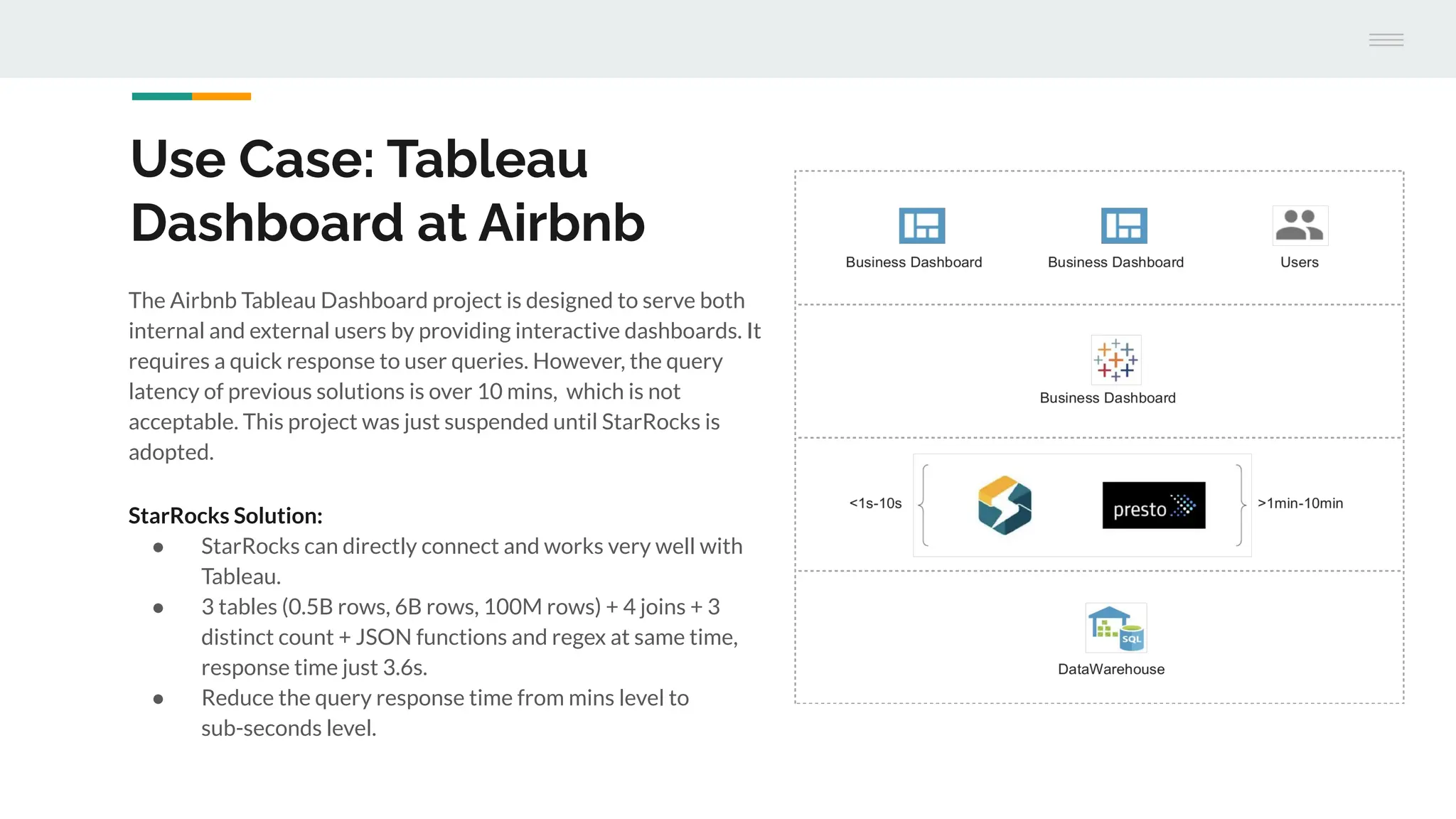

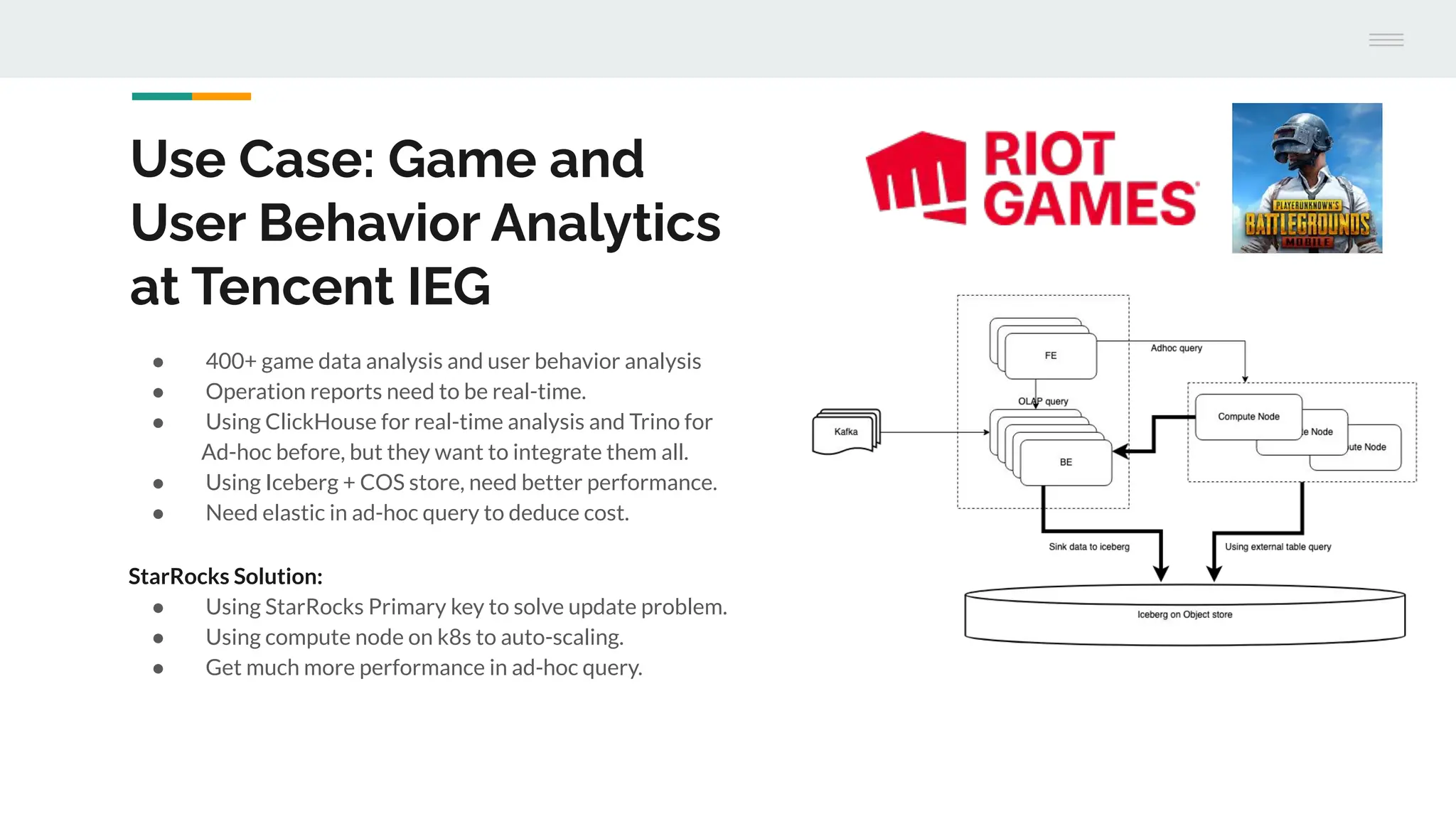

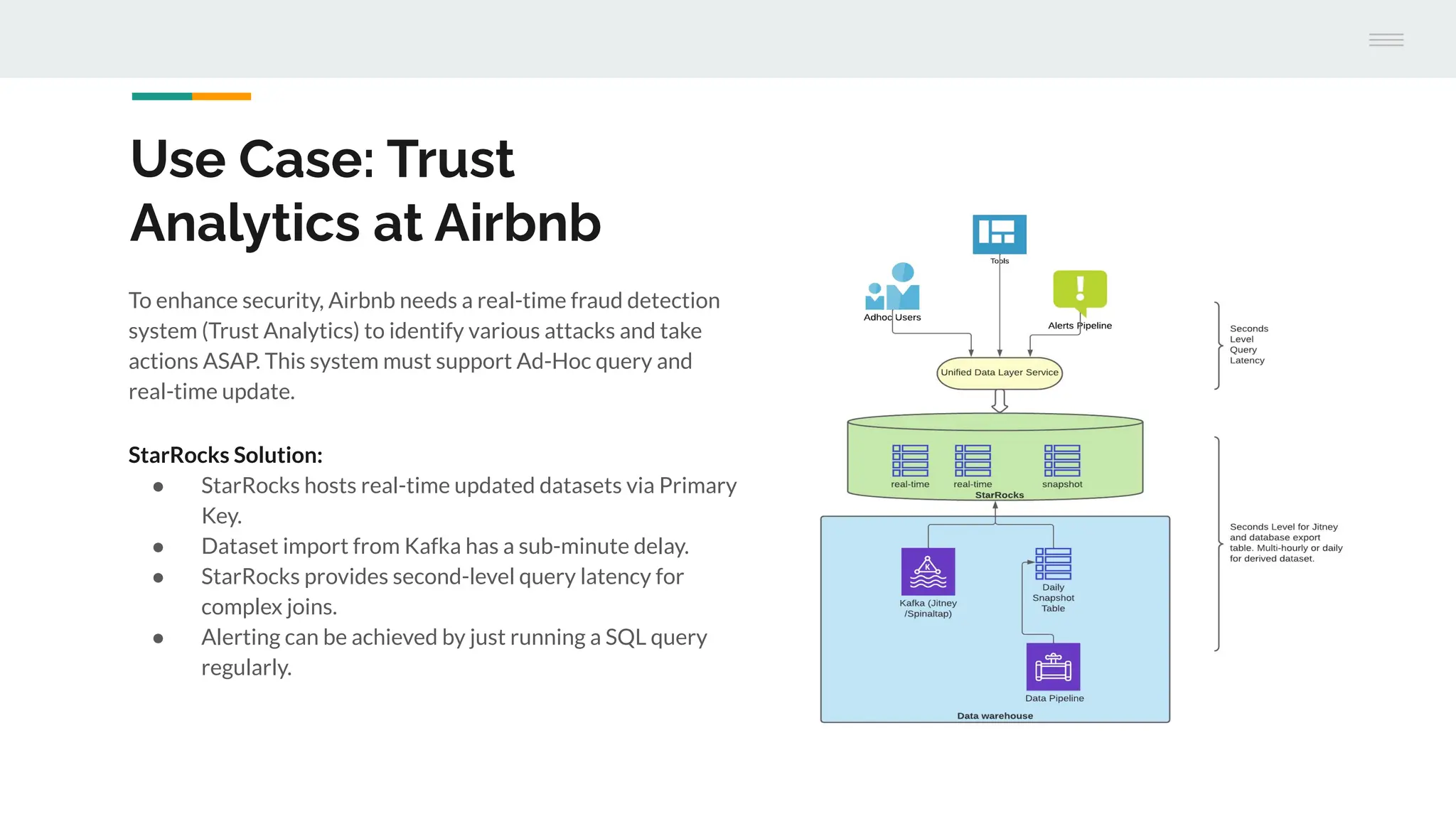

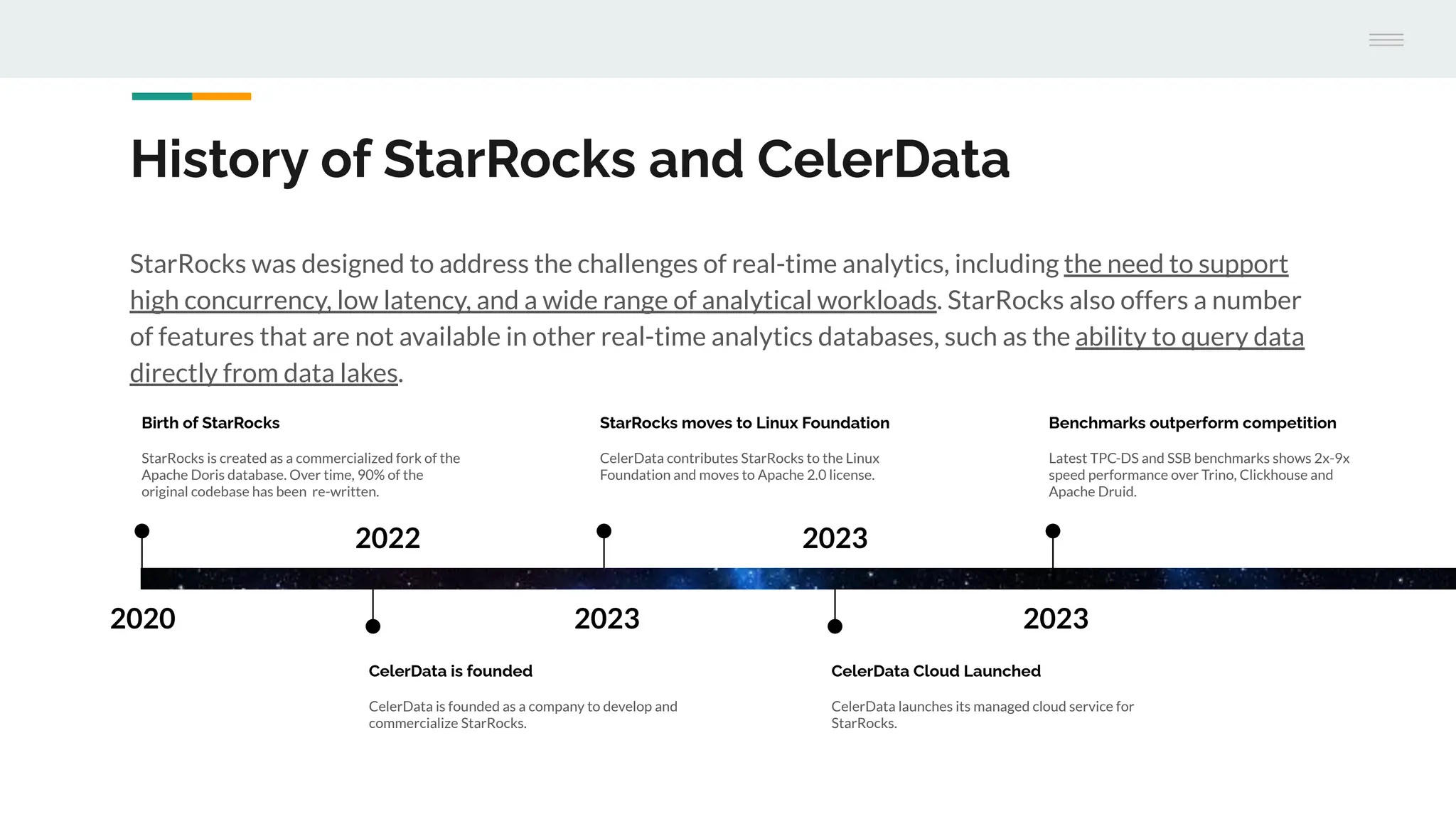

The document discusses StarRocks, a real-time analytics solution designed to meet requirements such as high concurrency, low latency, and scalability in data processing. It highlights StarRocks' ability to query data directly in data lakes, run complex queries with sub-second response times, and allocate resources more efficiently to reduce cost. Several case studies show how organizations such as Airbnb and Tencent apply StarRocks to a range of analytics needs.