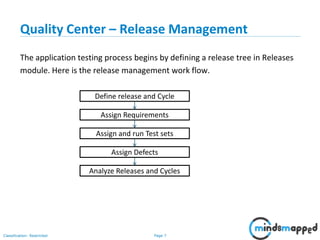

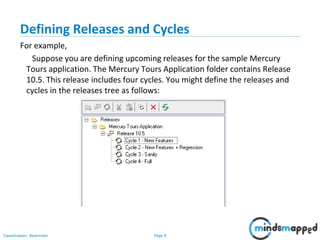

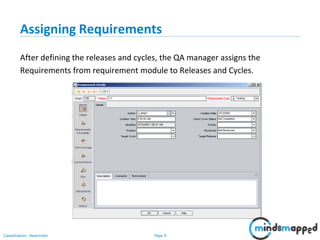

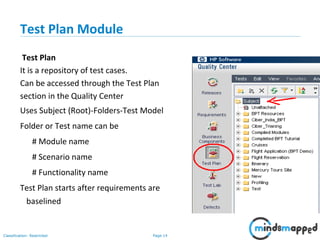

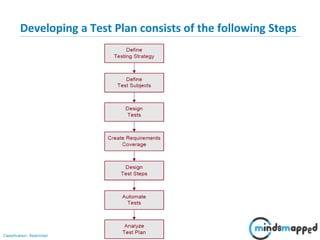

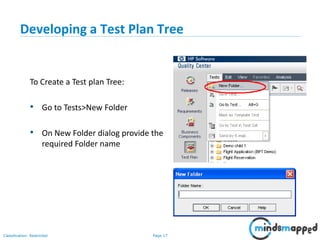

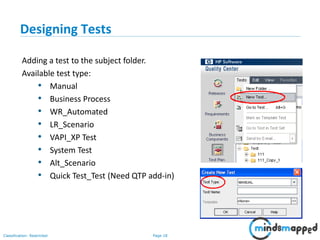

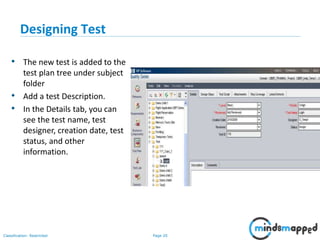

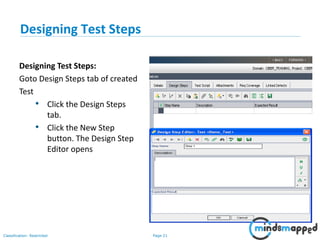

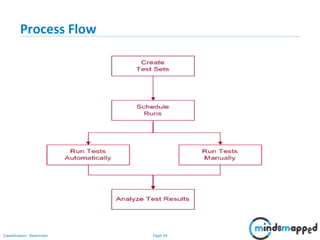

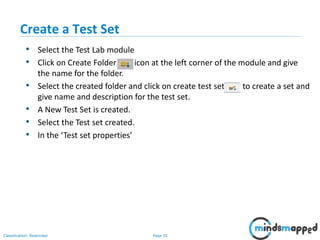

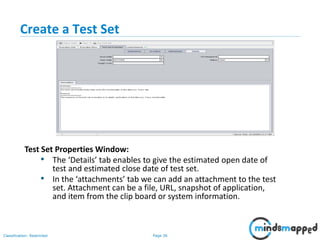

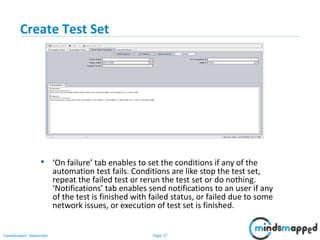

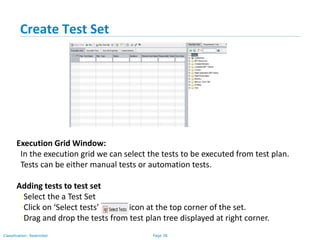

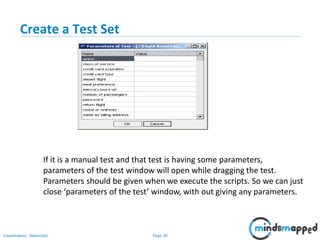

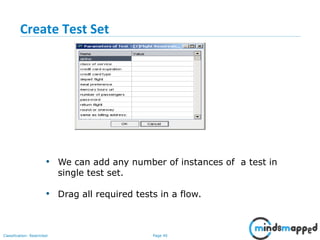

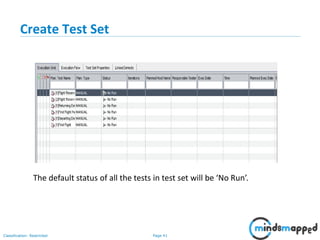

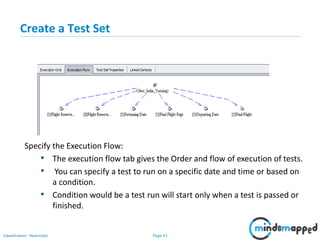

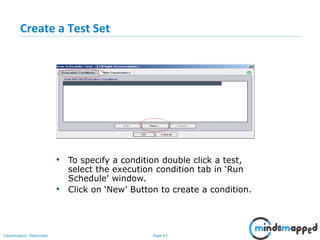

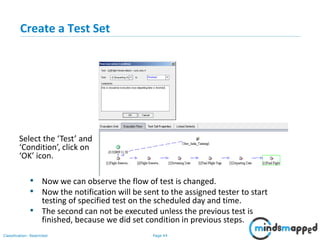

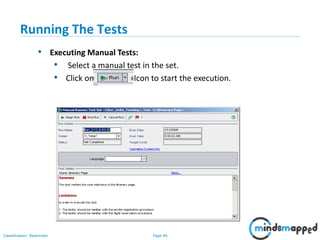

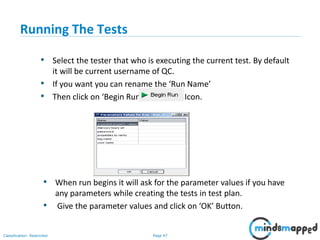

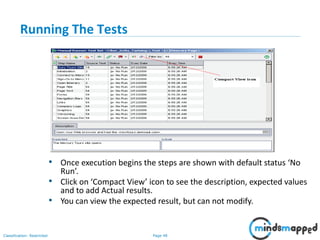

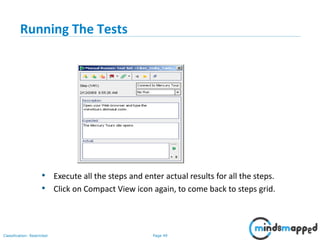

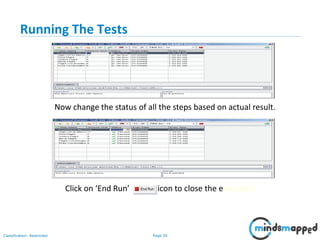

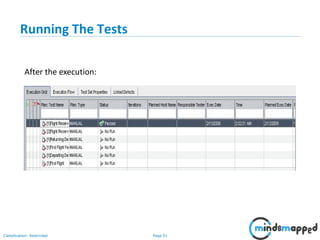

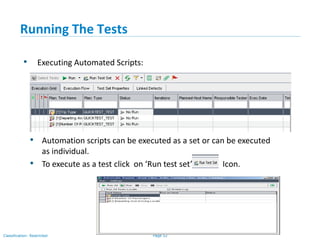

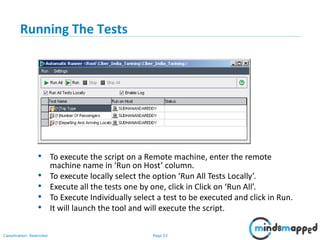

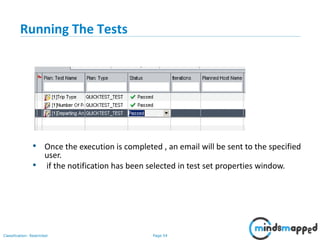

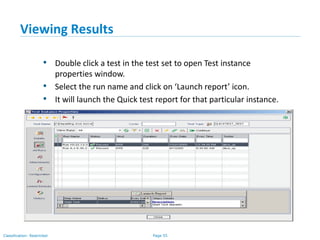

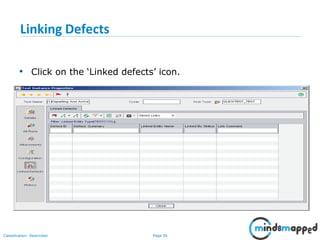

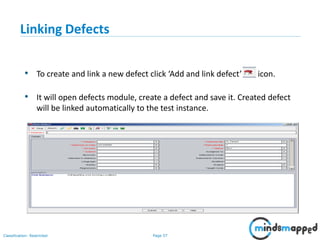

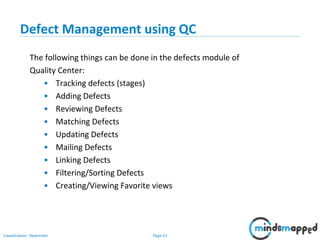

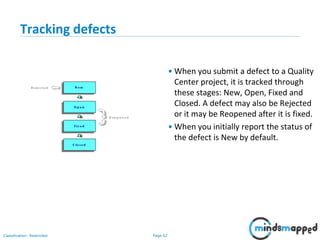

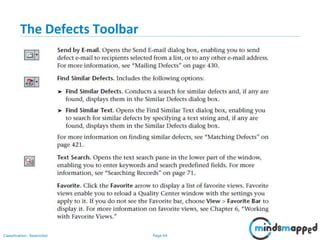

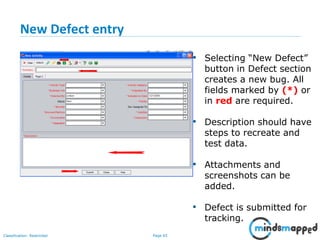

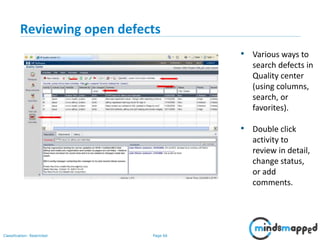

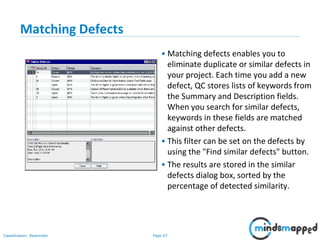

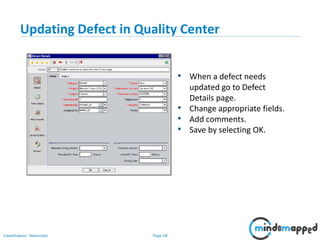

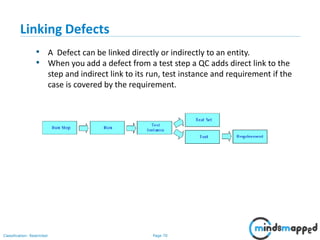

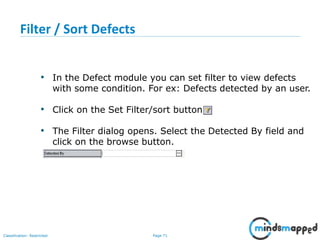

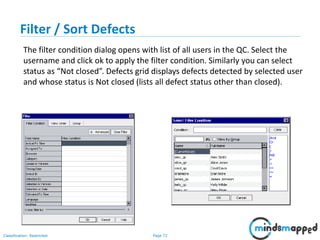

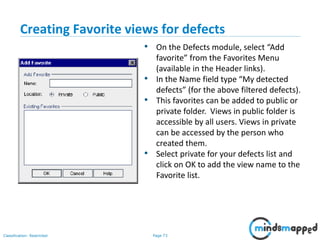

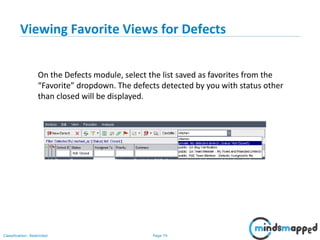

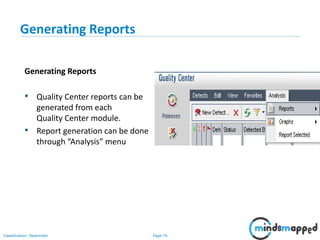

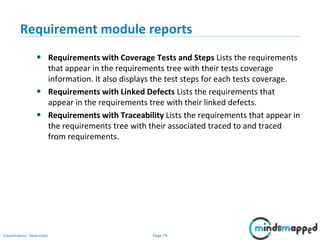

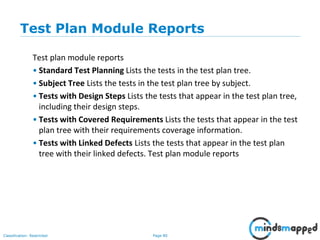

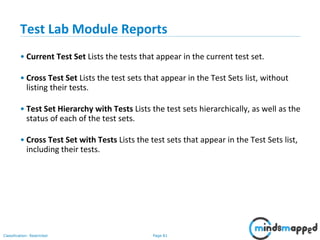

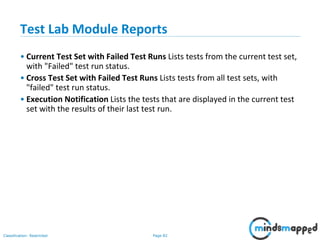

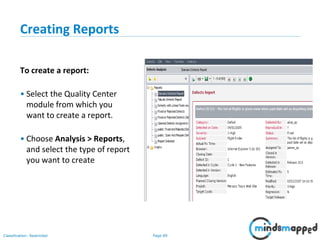

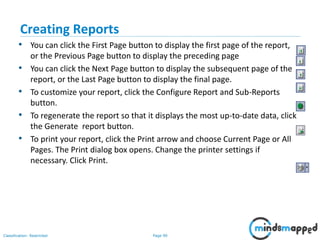

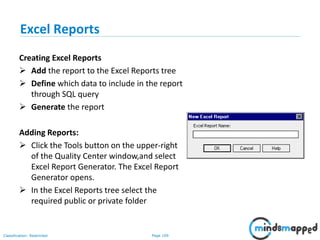

The document provides an overview of HP Quality Center, a web-based test management tool that streamlines the testing process through modules for release management, test planning, defect management, and reporting. It outlines the functionality of these modules, including test lab operations, defect tracking, and integration with automated testing tools, and details the steps for creating test sets, executing tests, and managing defects. The document also emphasizes the benefits of using Quality Center for improved analysis, management, and tracking throughout the testing lifecycle.