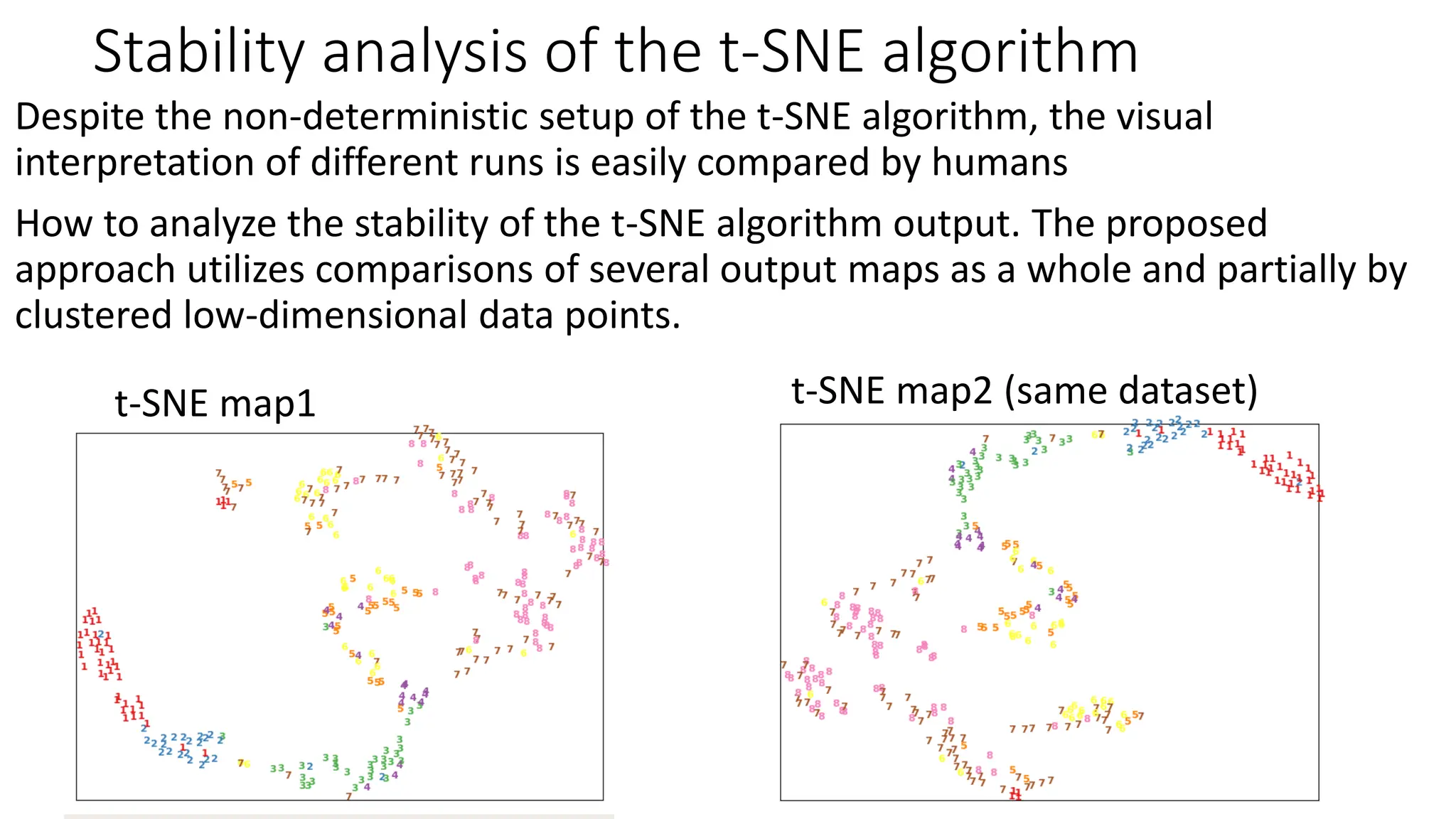

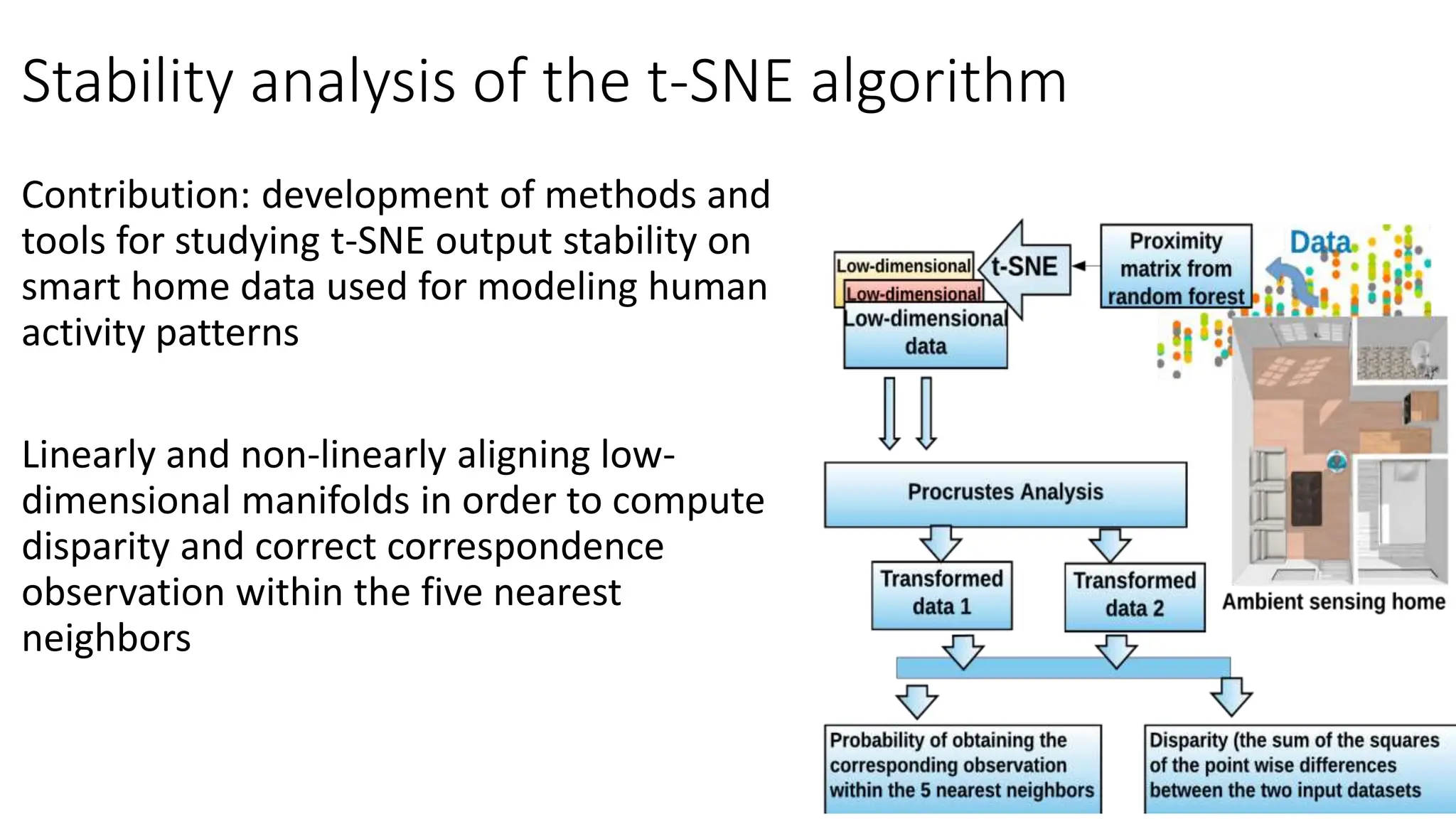

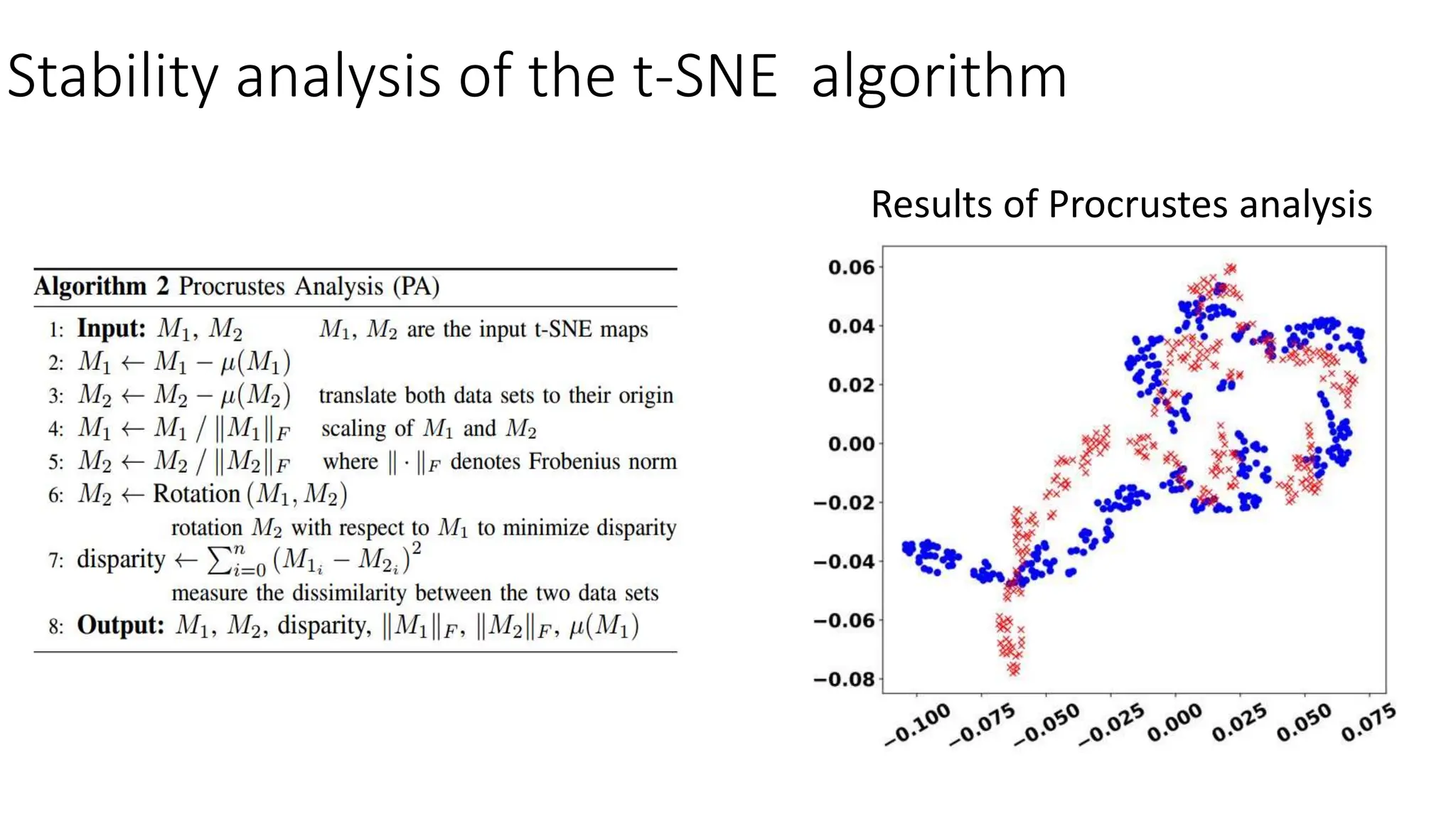

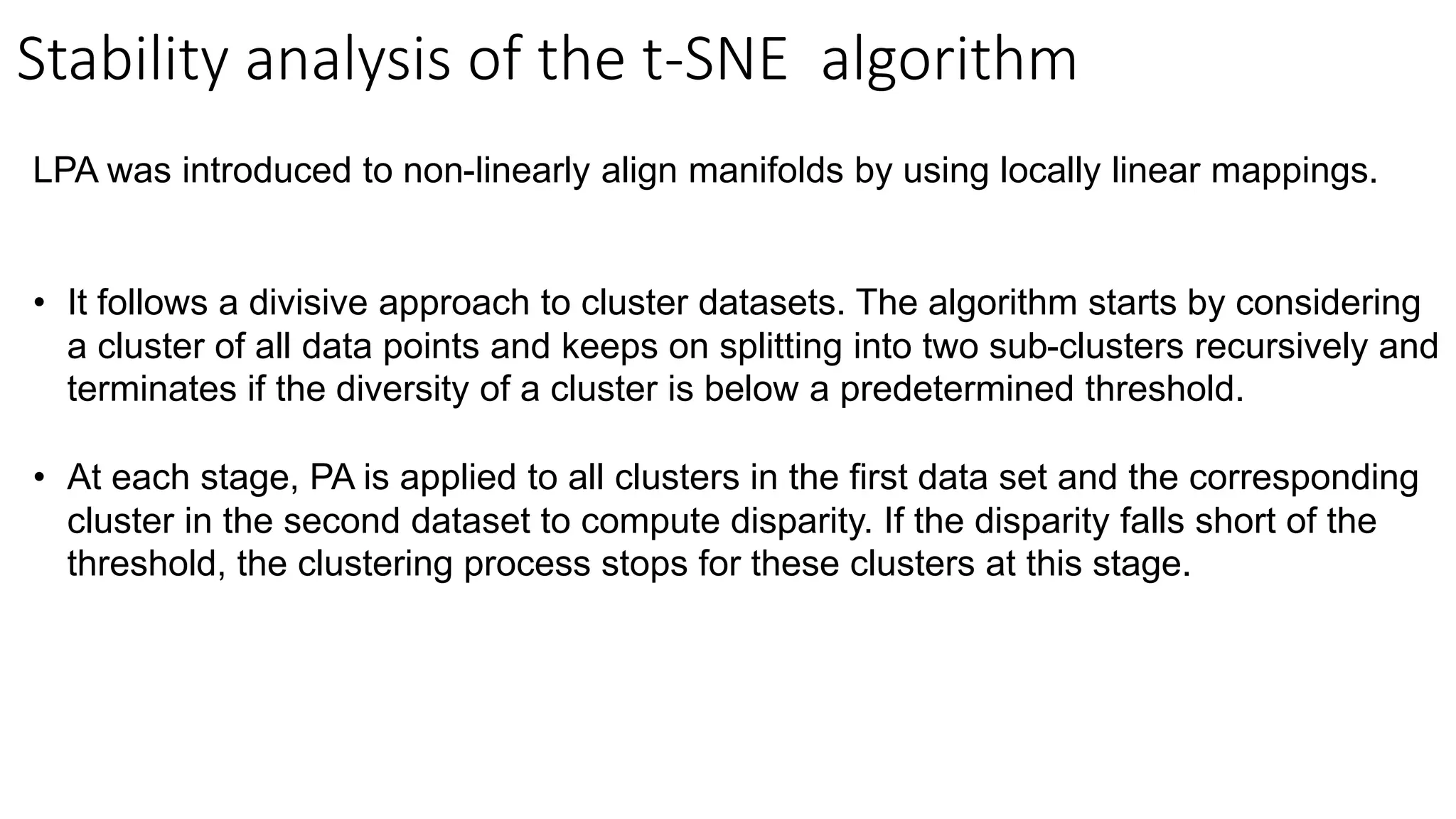

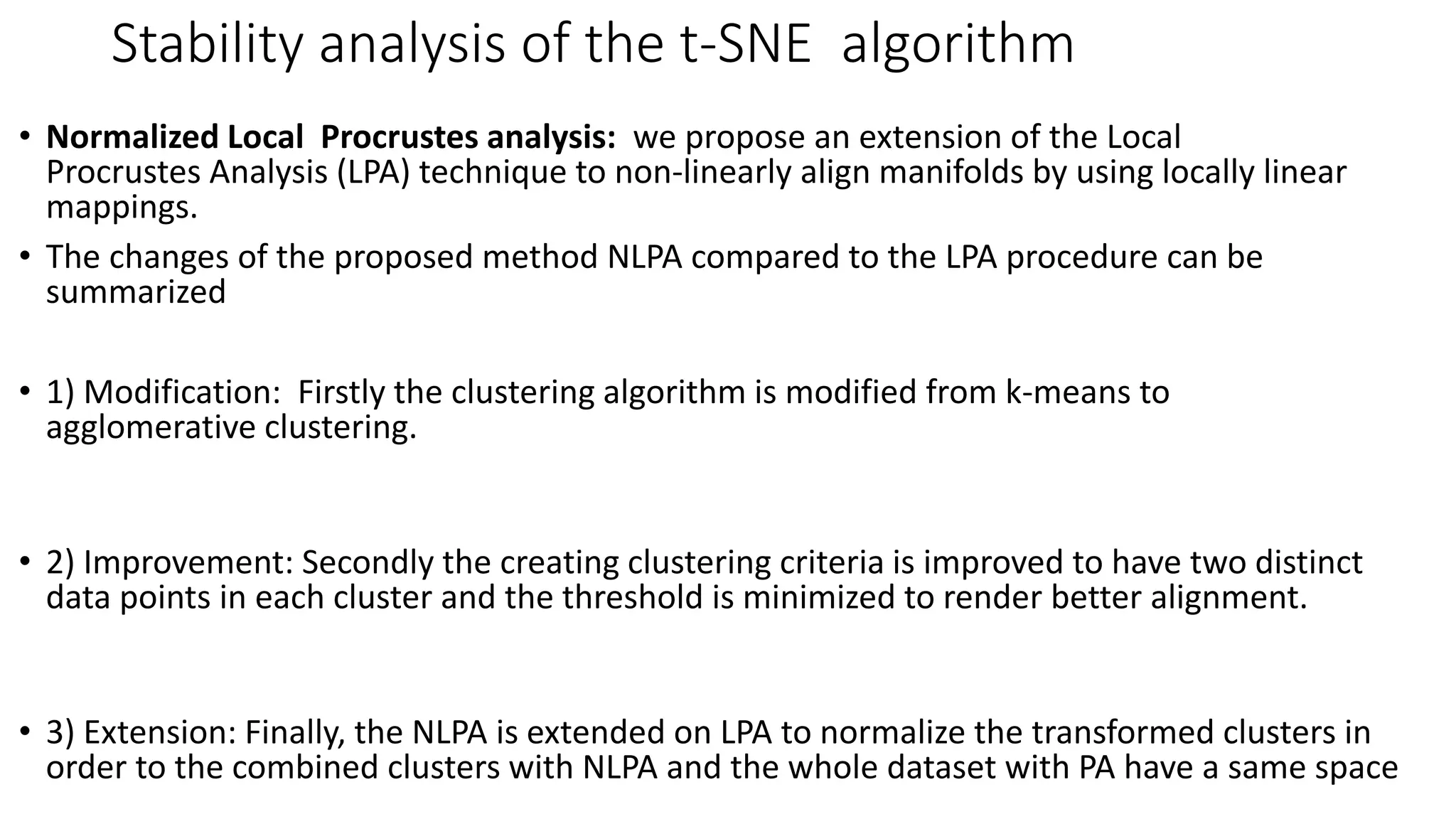

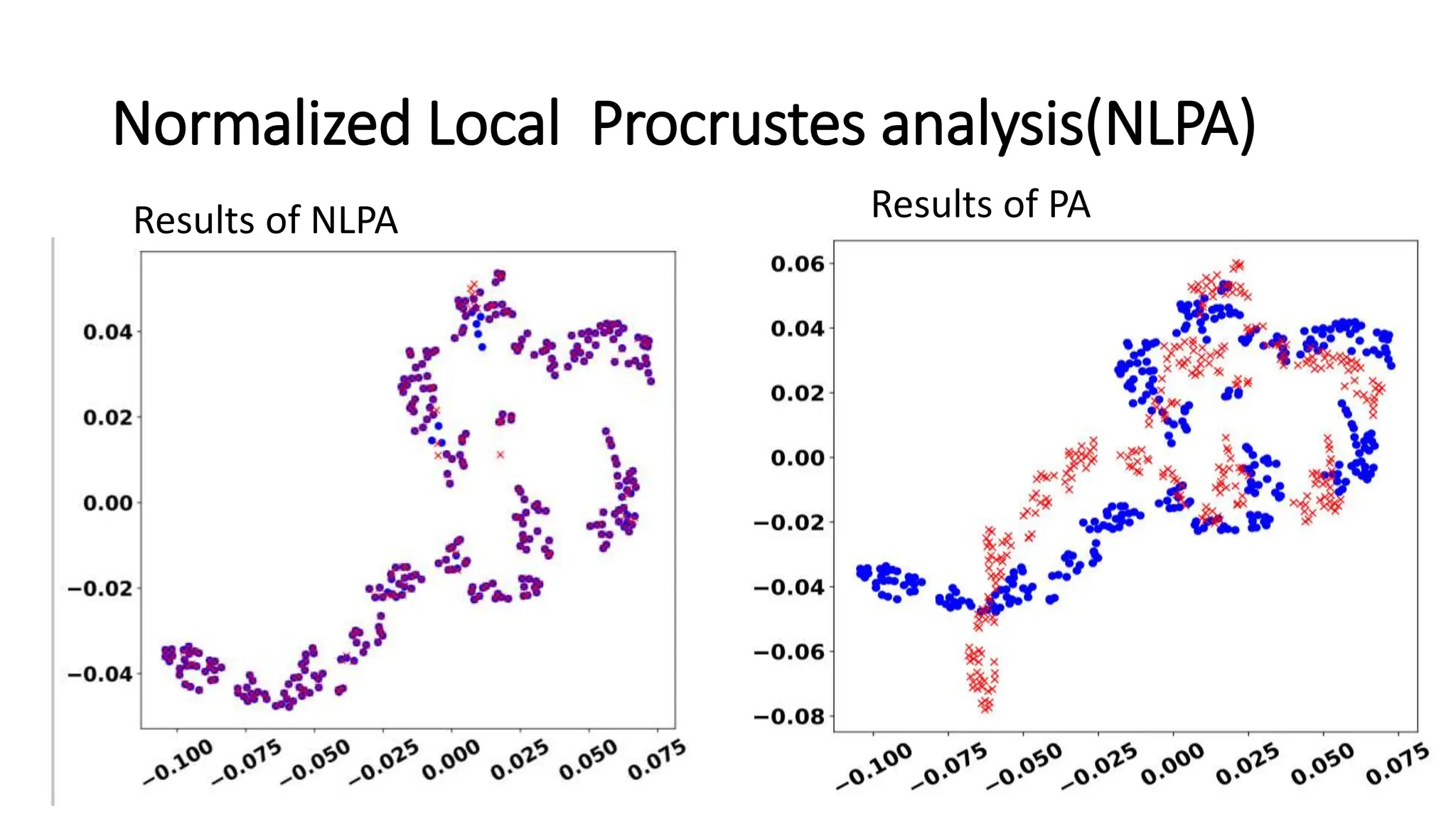

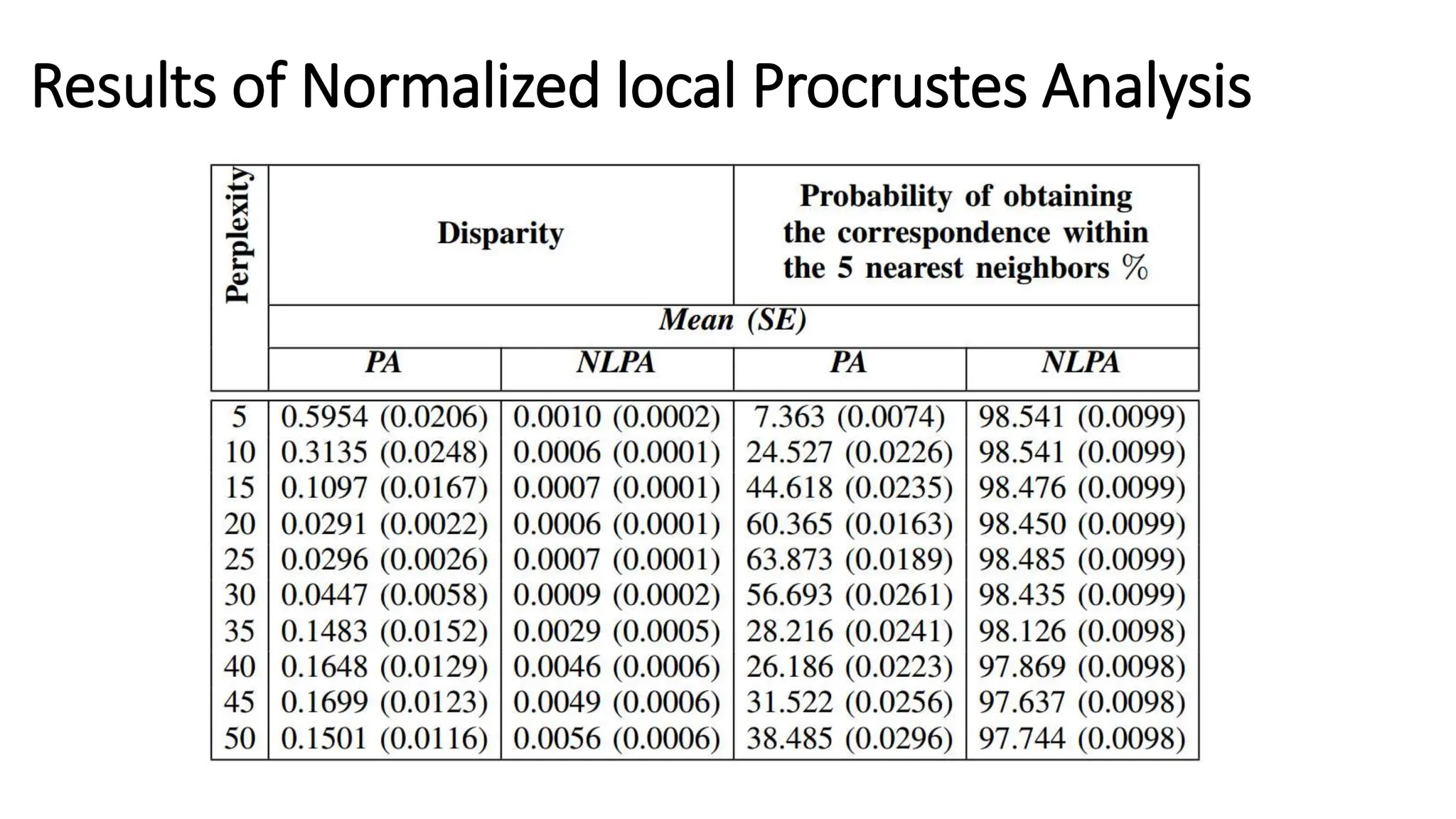

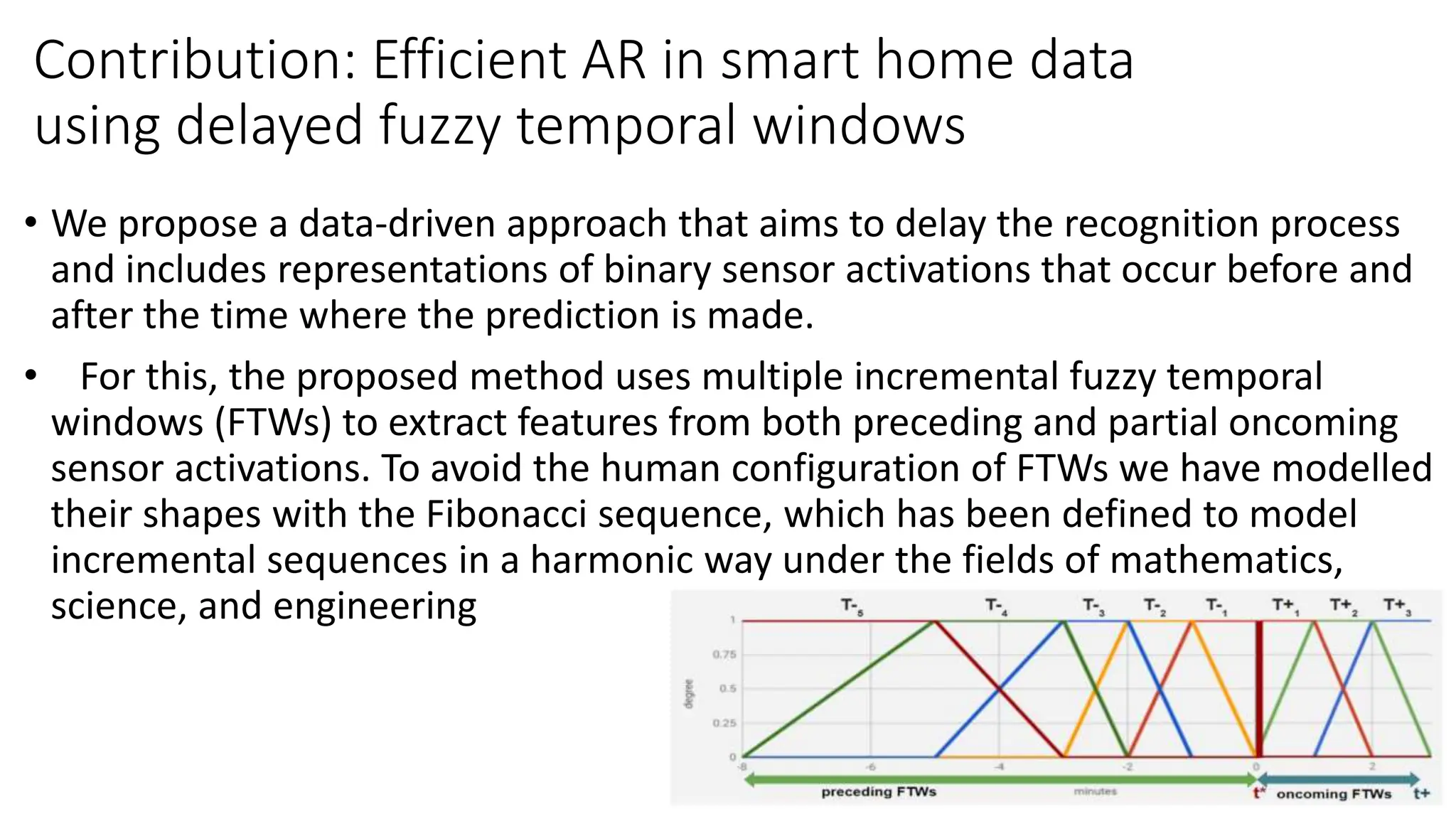

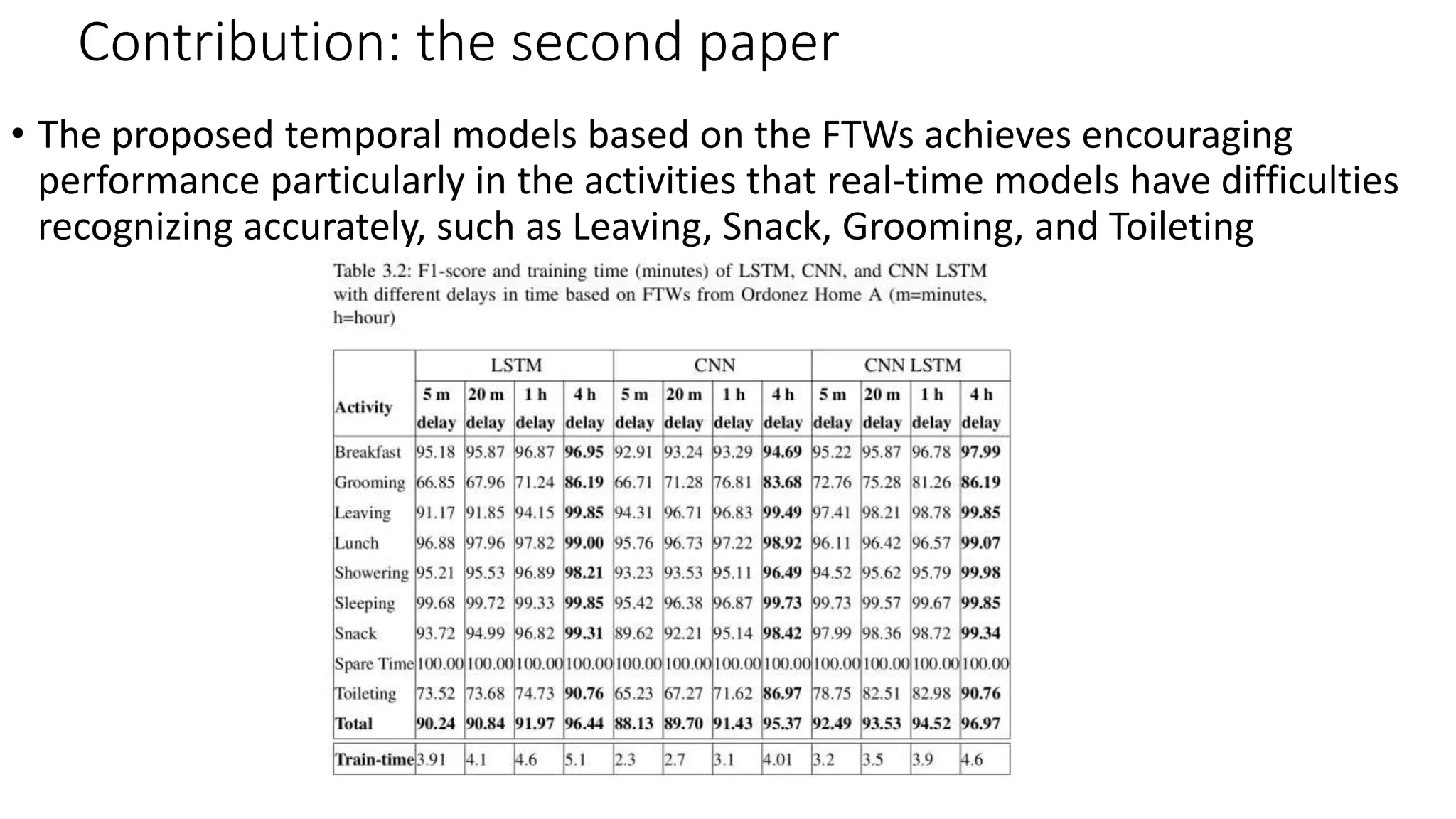

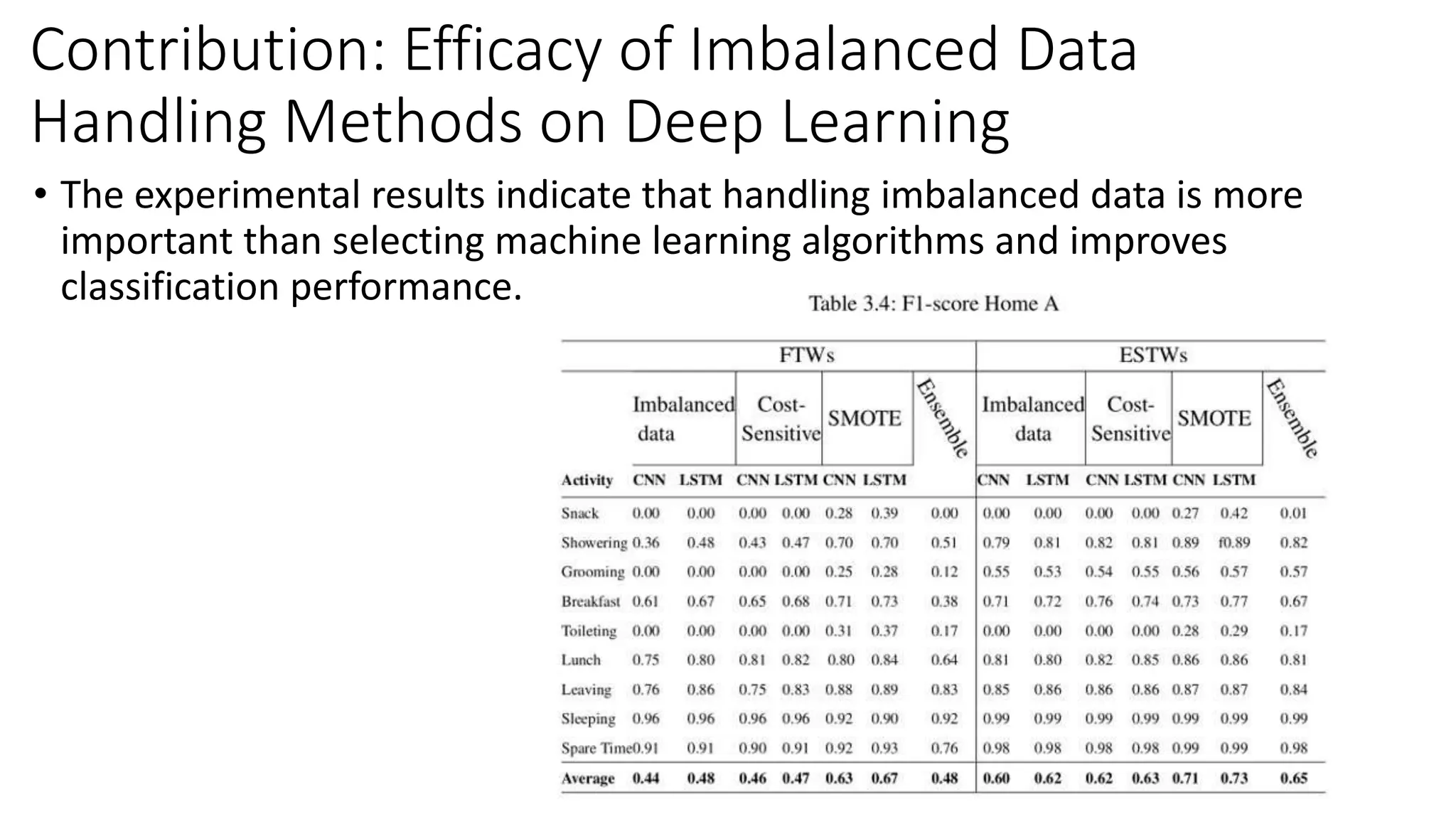

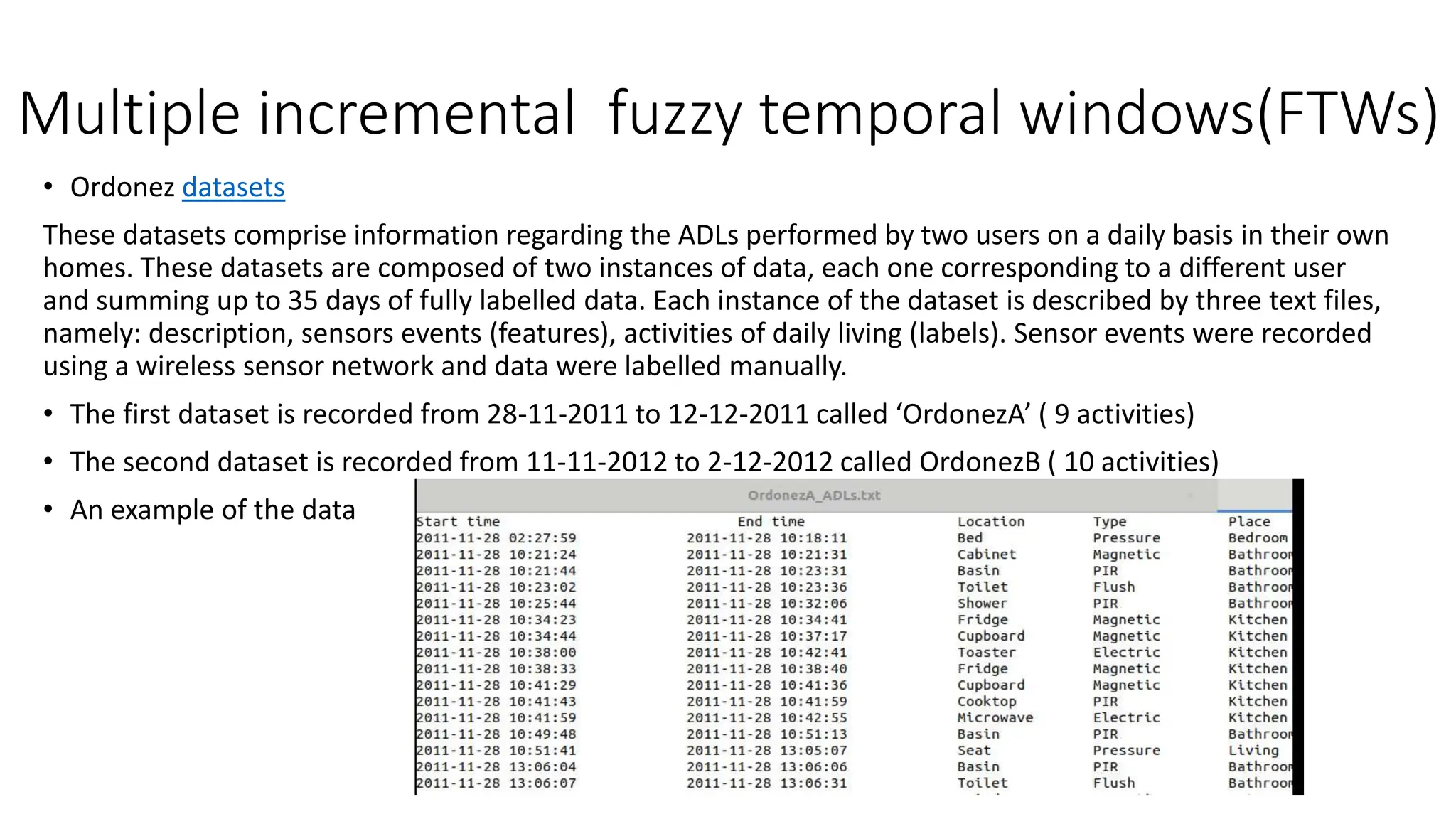

This thesis explores human activity recognition (HAR) in smart homes. It focuses on making activity recognition more stable and efficient through methods such as transfer learning and delayed fuzzy temporal windows (FTWs). It addresses key challenges, such as labeling sensor readings and the diversity of activities, and presents several machine learning techniques for improving HAR. The research aims to improve knowledge transfer between smart homes and the recognition of activities, particularly for vulnerable populations.

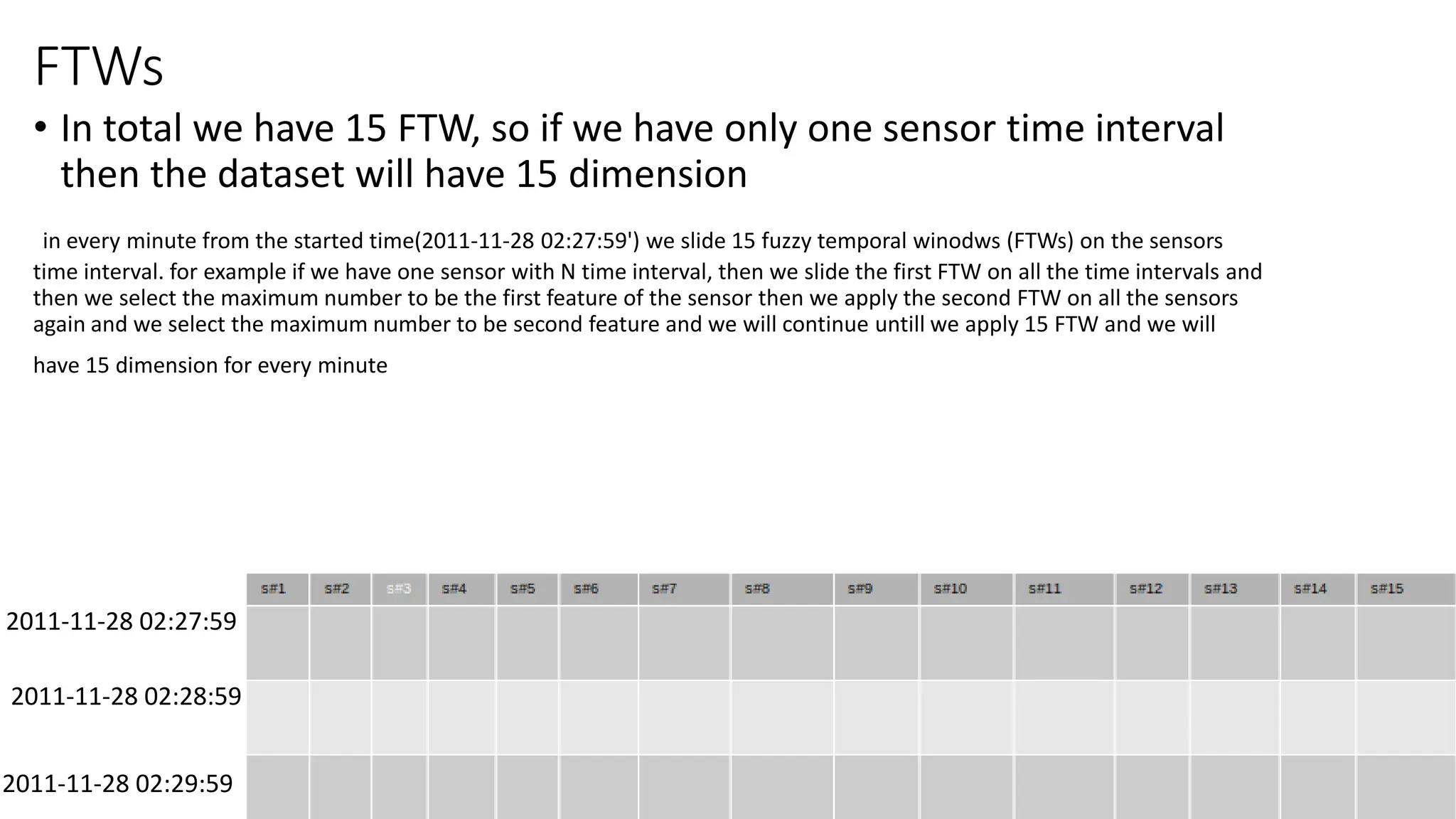

![FTWs

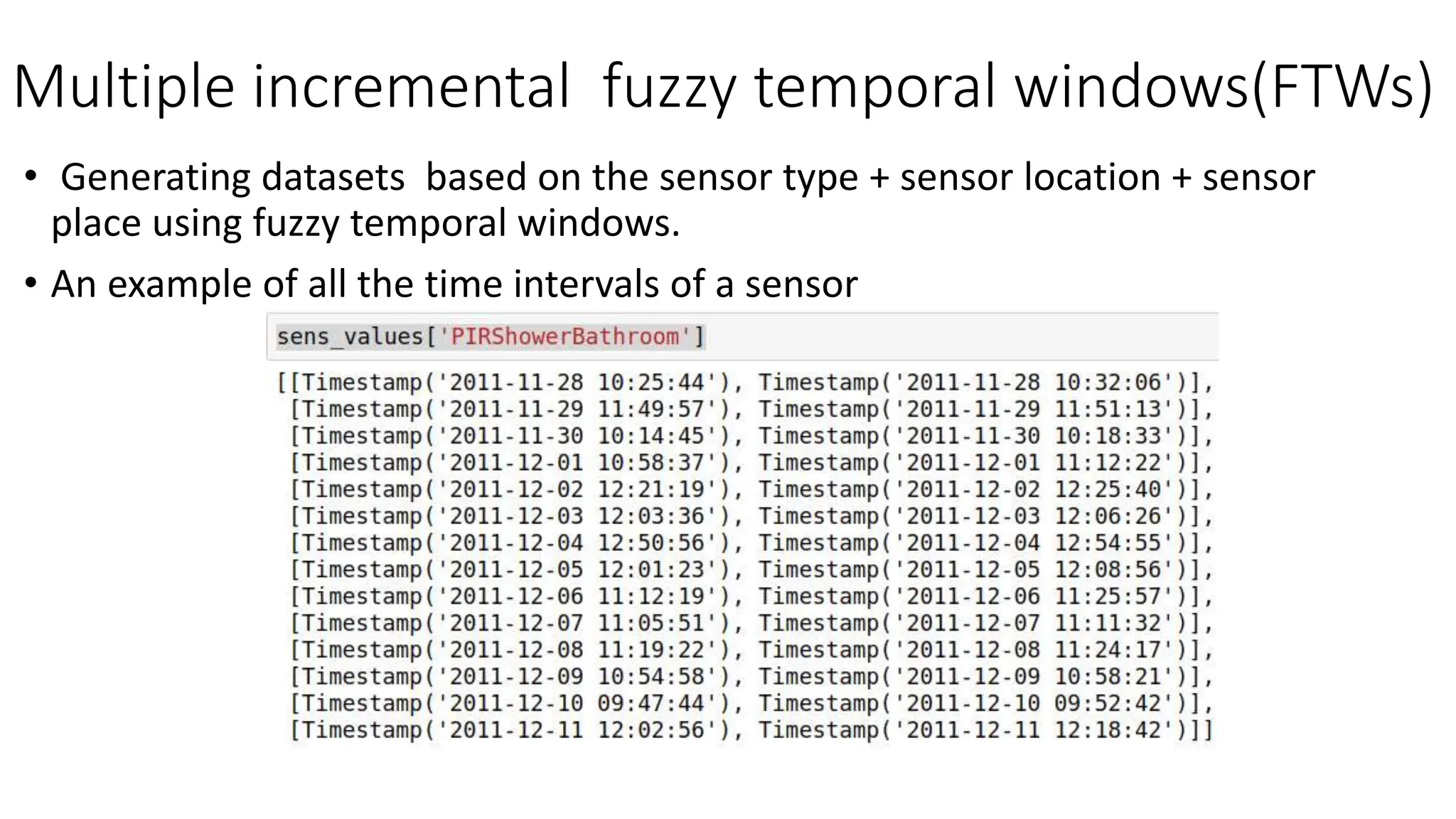

• Febon=[0, 0, 1,1,2, 3, 5, 8, 13, 21, 34, 55, 89, 144, 233, 377, 610, 987]

• curent_tme = the_start_time

• L1=curent_tme + 60 * Febon[i]

• U1=curent_tme + 60 *Febon[i+1]

• U2=curent_tme + 60 *Febon[i+2]

• L3=curent_tme + 60 * Febon[i+3]

• curent_tme +60](https://image.slidesharecdn.com/presentation5-231123170706-e90d935f/75/Presentation-Licentiate-degree-pptx-27-2048.jpg)
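The slide's formulas can be turned into a small runnable sketch. Several details are assumptions rather than statements from the slide. The four bounds are read as the corners of a trapezoidal window. Membership rises linearly from `L1` to `U1`, stays at 1 between `U1` and `U2`, and falls to 0 at `L3`. A sensor's degree in a window is taken as the maximum membership over its activation times. The helper names `ftw_bounds`, `trapezoid_membership` and `sensor_degree` are hypothetical.

```python
from typing import Iterable, Tuple

# Sequence exactly as listed on the slide (including the repeated leading 0).
FIBONACCI = [0, 0, 1, 1, 2, 3, 5, 8, 13, 21, 34, 55, 89, 144, 233, 377, 610, 987]

Window = Tuple[float, float, float, float]  # (L1, U1, U2, L3)


def ftw_bounds(start_time: float, i: int, step: float = 60.0) -> Window:
    """Bounds of the i-th window, following the slide's formulas (step = 60 s)."""
    return (
        start_time + step * FIBONACCI[i],
        start_time + step * FIBONACCI[i + 1],
        start_time + step * FIBONACCI[i + 2],
        start_time + step * FIBONACCI[i + 3],
    )


def trapezoid_membership(t: float, l1: float, u1: float, u2: float, l3: float) -> float:
    """Assumed trapezoidal membership degree of time t in the window."""
    if t < l1 or t > l3:
        return 0.0
    if u1 <= t <= u2:
        return 1.0  # plateau; also covers l1 == u1 without dividing by zero
    if t < u1:
        return (t - l1) / (u1 - l1)  # rising edge
    return (l3 - t) / (l3 - u2)  # falling edge


def sensor_degree(activation_times: Iterable[float], window: Window) -> float:
    """Hypothetical aggregation: strongest membership of any activation in the window."""
    return max((trapezoid_membership(t, *window) for t in activation_times), default=0.0)


if __name__ == "__main__":
    w = ftw_bounds(0, 3)  # (60.0, 120.0, 180.0, 300.0)
    print(w, sensor_degree([90, 250], w))
```

Using the maximum keeps the degree in [0, 1] and lets a single strong activation dominate. Other aggregations, such as summing and clipping, are equally plausible and the slide does not specify one.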