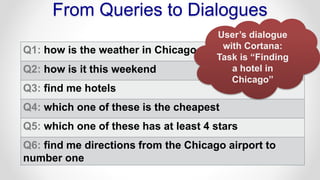

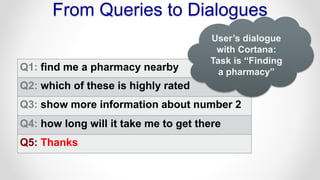

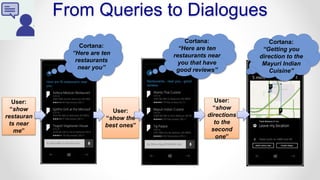

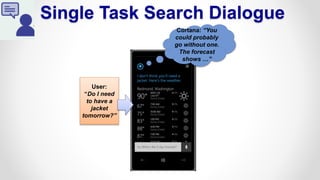

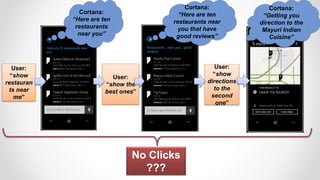

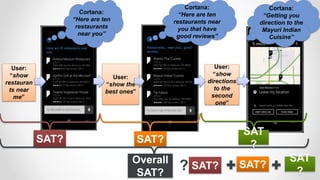

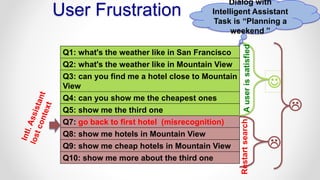

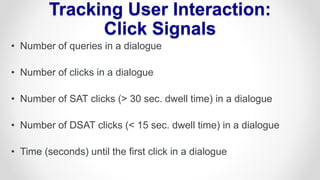

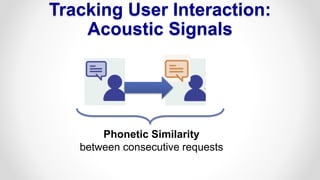

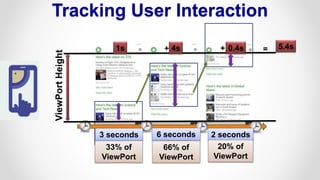

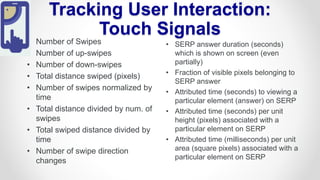

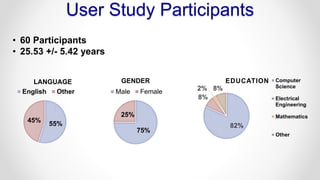

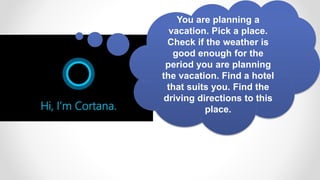

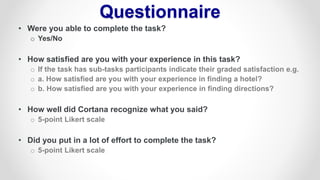

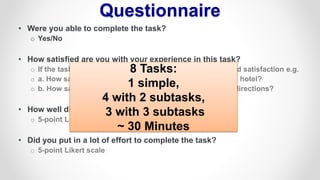

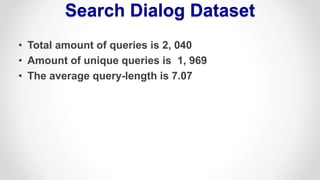

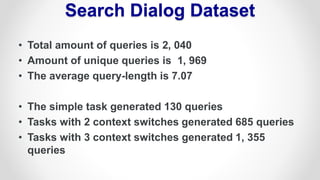

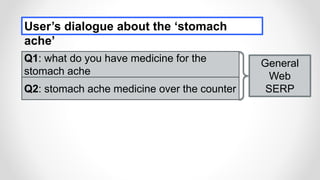

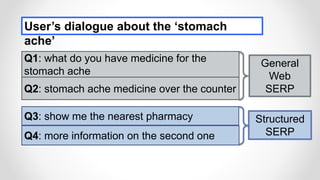

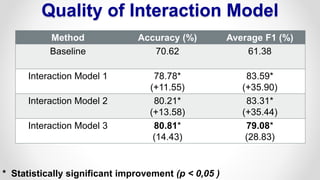

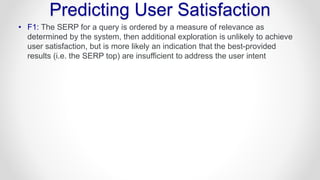

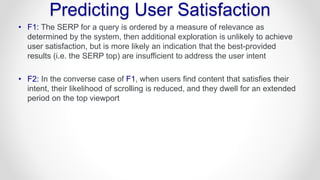

The document discusses how to predict user satisfaction with intelligent assistants from interaction signals such as clicks, touches, and voice commands during search dialogues. It emphasizes task complexity and indicators of user frustration as factors in that analysis. It also presents a model that uses features derived from these interactions to predict satisfaction more accurately.