poster


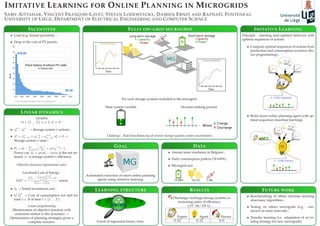

This document discusses using imitative learning to develop online planning agents for microgrids. It proposes learning optimal sequences of actions for storage systems from linear programming solutions and using these to build a smart planning agent via machine learning. The goal is to automate extraction of planning strategies that can balance storage systems in real-time under uncertainties. Preliminary results show the imitative learning agent outperforms a novice agent in minimizing costs.



IMITATIVE LEARNING FOR ONLINE PLANNING IN MICROGRIDS

Samy Aittahar, Vincent François-Lavet, Stefan Lodeweyckx, Damien Ernst and Raphaël Fonteneau
University of Liège, Department of Electrical Engineering and Computer Science

FULLY OFF-GRID MICROGRID

For each storage system included in the microgrid:
• State system variable;
• Decision making process.

Challenge: real-time balancing of several storage systems under uncertainties.

DATA

• Annual solar irradiance in Belgium;
• Daily consumption pattern (18 kWh);
• Microgrid size.

INCENTIVES

• Load (e.g. house) proximity;
• Drop in the cost of PV panels.

LINEAR DYNAMICS

Variables, $\forall t \in \{1, \dots, T\}$, $\sigma \in \Sigma$, $T \in \mathbb{N}$:

• $a^{\sigma,+}_t$, $a^{\sigma,-}_t$ → storage system $\sigma$ actions;
• $s^{\sigma}_t = s^{\sigma}_{t-1} + a^{\sigma,-}_{t-1} + a^{\sigma,+}_{t-1}$, with $s^{\sigma}_0 = 0$ → storage system $\sigma$ state;
• $F_t = d_t - \sum_{\sigma \in \Sigma} \left( \frac{a^{\sigma,+}_t}{\eta^{\sigma}} + \eta^{\sigma} a^{\sigma,-}_t \right)$ → power cut.

$d_t = \mathrm{prod}_t - \mathrm{cons}_t$ is the net demand and $\eta^{\sigma}$ is the efficiency of storage system $\sigma$.

Objective function (operational costs)

Levelized Cost of Energy:

$$\mathrm{LEC} = \frac{\sum_{t=1}^{T} \frac{-\sum_{\psi \in \Psi} k^{\psi}_t F^{\psi}_t}{(1+r)^{y}} + I_0}{\sum_{y=1}^{n} \frac{\epsilon_y}{(1+r)^{y}}}$$

where
• $I_0$ → initial investment cost;
• $k^{\psi}_t F^{\psi}_t$ → cost of consumption not met for load $\psi \in \Psi$ at time $t \in \{1, \dots, T\}$;
• $\epsilon_y$ → energy consumed in year $y$.

Linear programming

Minimization of the objective function with constraints related to the dynamics → optimization of planning strategies given a complete scenario.

GOAL

Automated extraction of smart online planning agents using imitative learning.

IMITATIVE LEARNING

Principle: learning near-optimal behavior from optimal sequences of actions.

• Compute optimal sequences of actions from production and consumption scenarios (linear programming);
• Build a smart online planning agent from the optimal sequences (machine learning).

FUTURE WORK

• Benchmarking of other machine learning structures/algorithms;
• Testing on other microgrids (e.g. connected to the main network);
• Transfer learning (i.e. adaptation of an existing strategy to new microgrids).
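The state update and power-cut expressions listed under LINEAR DYNAMICS can be rolled forward step by step. The sketch below does this for a single storage system. The function name `simulate`, its argument names and the toy numbers are illustrative only, and the sign convention (charging actions non-negative, discharging actions non-positive) is an assumption that the poster does not state explicitly.

```python
def simulate(net_demand, charge, discharge, efficiency):
    """Roll the linear dynamics forward for one storage system.

    net_demand[t] is d_t = prod_t - cons_t.
    charge[t] plays the role of a^{sigma,+}_t (assumed >= 0) and
    discharge[t] the role of a^{sigma,-}_t (assumed <= 0).
    """
    states = [0.0]   # s_0 = 0
    power_cut = []   # F_t for each time step
    for t, d_t in enumerate(net_demand):
        # F_t = d_t - (a+_t / eta + eta * a-_t)
        power_cut.append(d_t - (charge[t] / efficiency + efficiency * discharge[t]))
        # Next state = current state + a-_t + a+_t
        states.append(states[-1] + discharge[t] + charge[t])
    return states, power_cut


# Toy scenario: a surplus of 2 at t=0 is stored, then a deficit of 1 at t=1
# is only partly covered because of the storage efficiency.
states, power_cut = simulate(
    net_demand=[2.0, -1.0],
    charge=[1.0, 0.0],
    discharge=[0.0, -1.0],
    efficiency=0.5,
)
```

Under this convention a negative `power_cut` entry is demand that was not met, which is the quantity the objective function penalizes.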
RESULTS

Discharge/recharge storage systems in increasing order of efficiency.

LEC (€/kWh):
• Expert: 0.32;
• Agent: 0.42;
• Novice: 0.6.

LEARNING STRUCTURE

Forest of regression binary trees.
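The imitation step (fit a forest of regression trees to expert state/action pairs, then query it online) can be sketched in plain Python. This is a toy under several assumptions and not the authors' implementation: `expert_action` is a hand-written stand-in rule rather than a linear-programming solution, the trees are depth-one stumps for brevity, the forest is built by simple bootstrap aggregation, and every name is hypothetical.

```python
import random


def expert_action(net_demand, state):
    """Hypothetical stand-in expert (NOT an LP solution): store any surplus,
    otherwise discharge as much of the deficit as the stored energy allows."""
    if net_demand >= 0:
        return net_demand
    return -min(-net_demand, state)


def fit_stump(rows):
    """Fit a depth-one regression tree to (features, target) rows."""
    best = None
    for j in range(len(rows[0][0])):
        values = sorted({x[j] for x, _ in rows})
        for low, high in zip(values, values[1:]):
            threshold = (low + high) / 2
            left = [y for x, y in rows if x[j] <= threshold]
            right = [y for x, y in rows if x[j] > threshold]
            left_mean = sum(left) / len(left)
            right_mean = sum(right) / len(right)
            sse = (sum((y - left_mean) ** 2 for y in left)
                   + sum((y - right_mean) ** 2 for y in right))
            if best is None or sse < best[0]:
                best = (sse, j, threshold, left_mean, right_mean)
    if best is None:
        # No feature varies in this sample: always predict the mean target.
        mean = sum(y for _, y in rows) / len(rows)
        return (0, float("inf"), mean, mean)
    return best[1:]


def predict_stump(stump, x):
    j, threshold, left_mean, right_mean = stump
    return left_mean if x[j] <= threshold else right_mean


def fit_forest(rows, n_trees=25, seed=0):
    """Train each stump on a bootstrap resample of the demonstrations."""
    rng = random.Random(seed)
    return [fit_stump(rng.choices(rows, k=len(rows))) for _ in range(n_trees)]


def predict_forest(forest, x):
    """Average the predictions of all trees."""
    return sum(predict_stump(stump, x) for stump in forest) / len(forest)


# Expert demonstrations: features (d_t, s_t) -> action taken by the expert.
rows = [((d, s), expert_action(d, s))
        for d in (-2.0, -1.0, 1.0, 2.0)
        for s in (0.0, 1.0, 2.0, 3.0)]
forest = fit_forest(rows)

# Online use: the agent only sees the current net demand and storage state.
surplus_action = predict_forest(forest, (2.0, 1.0))
deficit_action = predict_forest(forest, (-2.0, 3.0))
```

The learned agent should propose a larger (charging) action for a surplus than for a deficit. In the setting the poster describes, the demonstrations would instead come from the optimal action sequences computed by linear programming.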