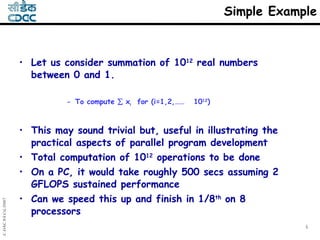

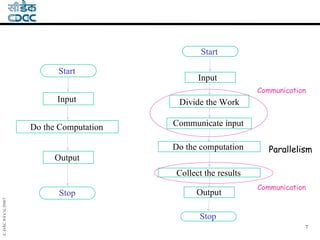

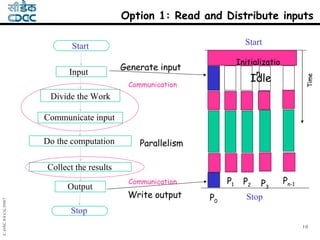

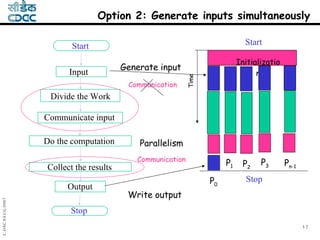

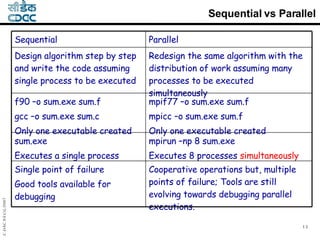

1) The document discusses parallelizing an application by dividing the work across multiple processors. It provides a simple example of summing 10^12 real numbers between 0 and 1 in parallel.

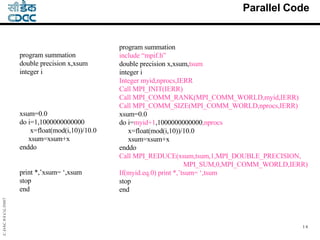

2) The example divides the work of computing the sum across 8 processors. Each processor generates its own portion of the input numbers and computes a partial sum; the 8 partial sums are then combined into the final result.
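The decomposition described above can be sketched in a few lines of Python. This is only an illustration, not the document's own code: the function names (`chunk_bounds`, `partial_sum`, `parallel_sum`), the per-worker seeding scheme, and the use of a thread pool as a stand-in for 8 processors are all assumptions. Because of Python's global interpreter lock the threads here will not give a real speedup; an actual run would use separate processes or MPI ranks, and a far smaller count than 10^12 is used so the sketch finishes quickly.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def chunk_bounds(n_total, n_workers, rank):
    """Return the half-open index range [start, stop) owned by worker `rank`.

    Any remainder is spread over the lowest-numbered workers so that
    chunk sizes differ by at most one.
    """
    base, extra = divmod(n_total, n_workers)
    start = rank * base + min(rank, extra)
    stop = start + base + (1 if rank < extra else 0)
    return start, stop


def partial_sum(n_total, n_workers, rank, seed=0):
    """Generate this worker's share of numbers in [0, 1) and sum them."""
    start, stop = chunk_bounds(n_total, n_workers, rank)
    # Hypothetical seeding scheme: one generator per worker, so no worker
    # needs to receive input data from another.
    rng = random.Random(seed + rank)
    return sum(rng.random() for _ in range(stop - start))


def parallel_sum(n_total, n_workers=8, seed=0):
    """Compute all partial sums concurrently, then combine them."""
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(
            pool.map(
                lambda rank: partial_sum(n_total, n_workers, rank, seed),
                range(n_workers),
            )
        )
    # The final combine step plays the role of a reduction across workers.
    return sum(partials), partials


if __name__ == "__main__":
    total, partials = parallel_sum(80_000)
    print(f"{len(partials)} partial sums, total = {total:.2f}")
```

Each worker only needs its rank and the problem size to know which slice it owns, which is why the input can be generated locally rather than distributed from a single processor.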

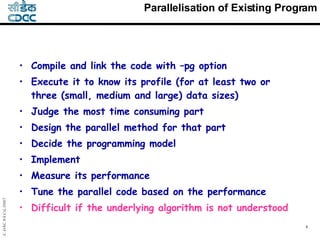

3) The key steps in parallelizing an existing application are: profiling it to find the most time-consuming part, designing a parallel method for that part, implementing the method with a programming model such as MPI, and then measuring and tuning performance.
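The first of these steps, profiling, might look like the following sketch using Python's standard `cProfile` and `pstats` modules. The toy application (`load_input`, `heavy_kernel`, `write_output`, `app`) and the helper `hottest_function` are hypothetical names invented for this illustration; the document does not say which profiler or language it uses.

```python
import cProfile
import pstats


def load_input(n):
    """Cheap setup phase of the toy application (hypothetical)."""
    return list(range(n))


def heavy_kernel(data):
    """Deliberately expensive loop: the part worth parallelizing."""
    total = 0.0
    for x in data:
        total += (x % 7) ** 0.5
    return total


def write_output(value):
    """Cheap output phase of the toy application (hypothetical)."""
    return f"{value:.3f}"


def app(n=200_000):
    data = load_input(n)
    result = heavy_kernel(data)
    return write_output(result)


def hottest_function(func, *args):
    """Profile one call of `func` and return the name of the function
    with the largest time spent in its own body."""
    profiler = cProfile.Profile()
    profiler.runcall(func, *args)
    stats = pstats.Stats(profiler)
    # stats.stats maps (file, line, name) to a tuple whose third field
    # is the time spent in the function itself, excluding callees.
    (_, _, name), _ = max(stats.stats.items(), key=lambda item: item[1][2])
    return name


if __name__ == "__main__":
    print("Most time-consuming function:", hottest_function(app))
```

Ranking by time spent inside each function, rather than cumulative time, keeps the top-level driver from always appearing as the hot spot and points at the loop that a parallel design would need to split.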