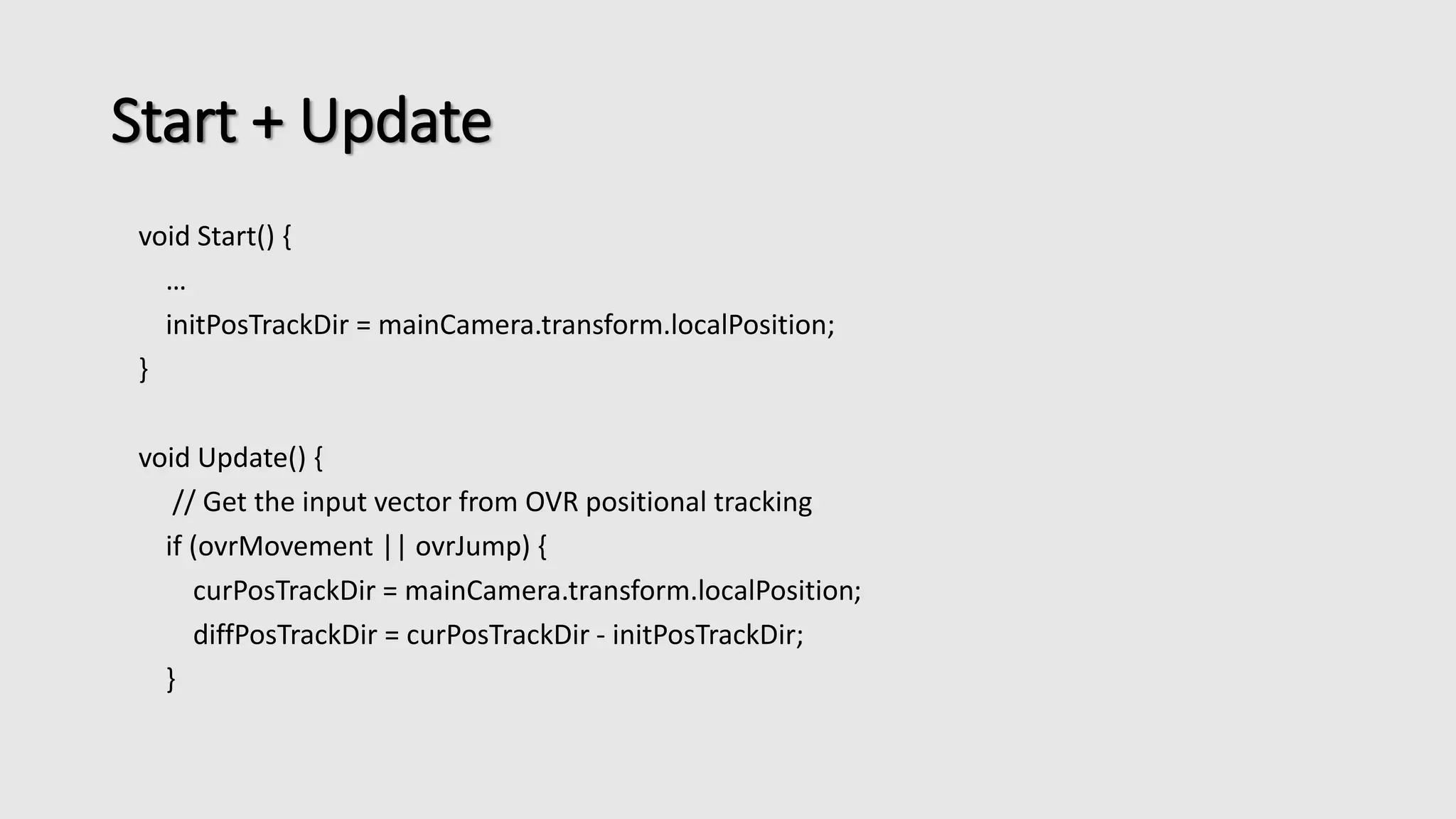

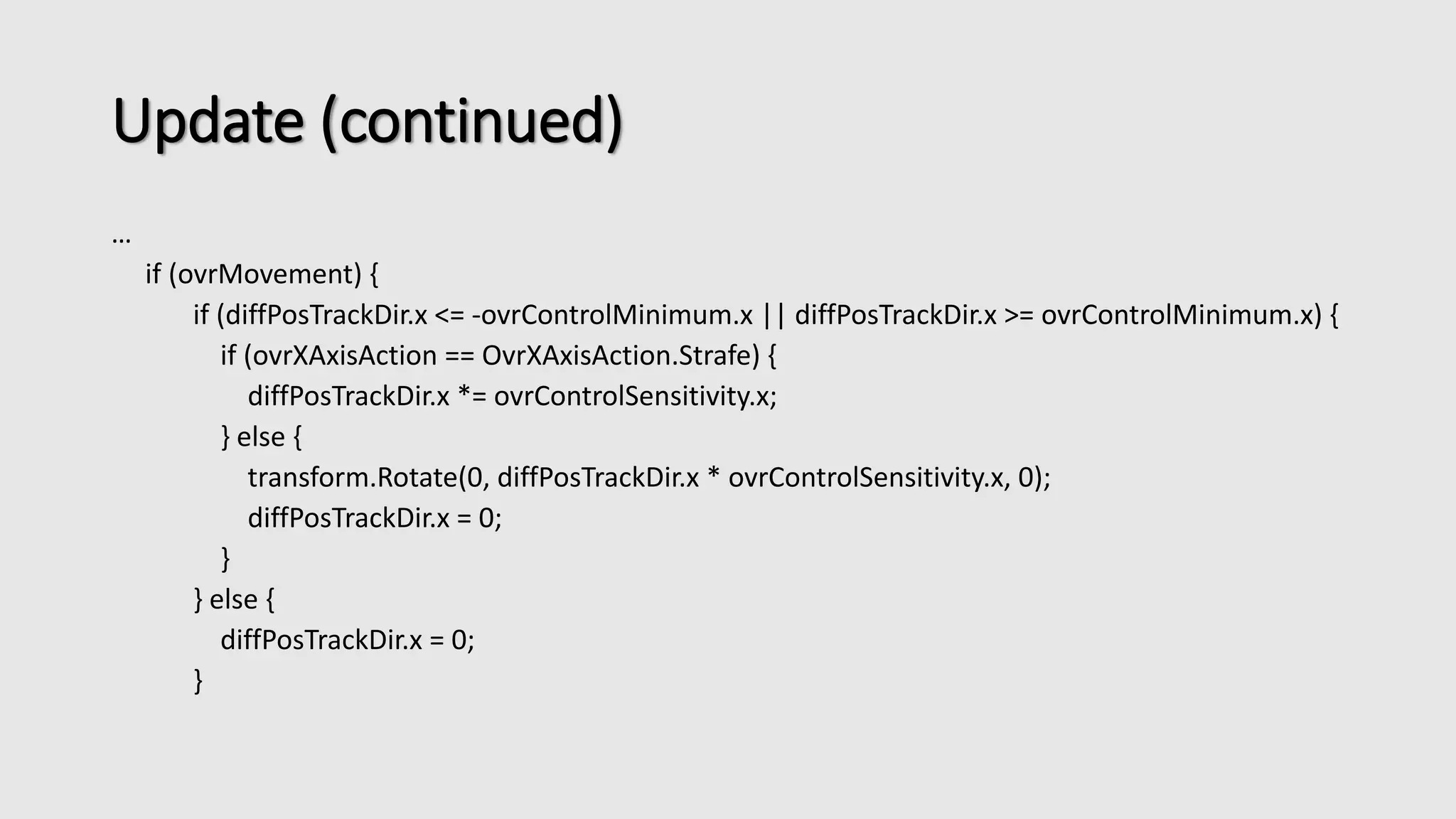

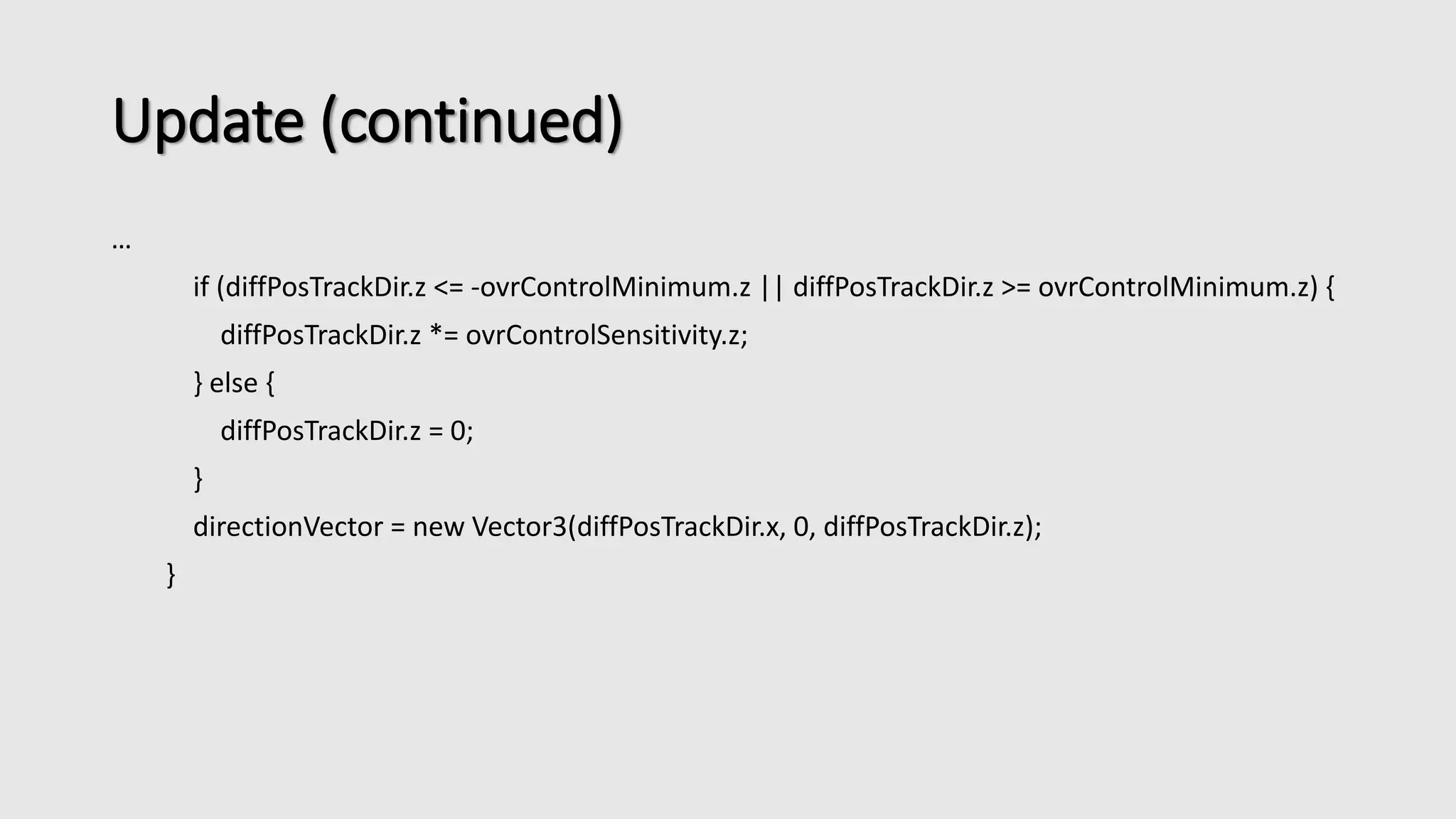

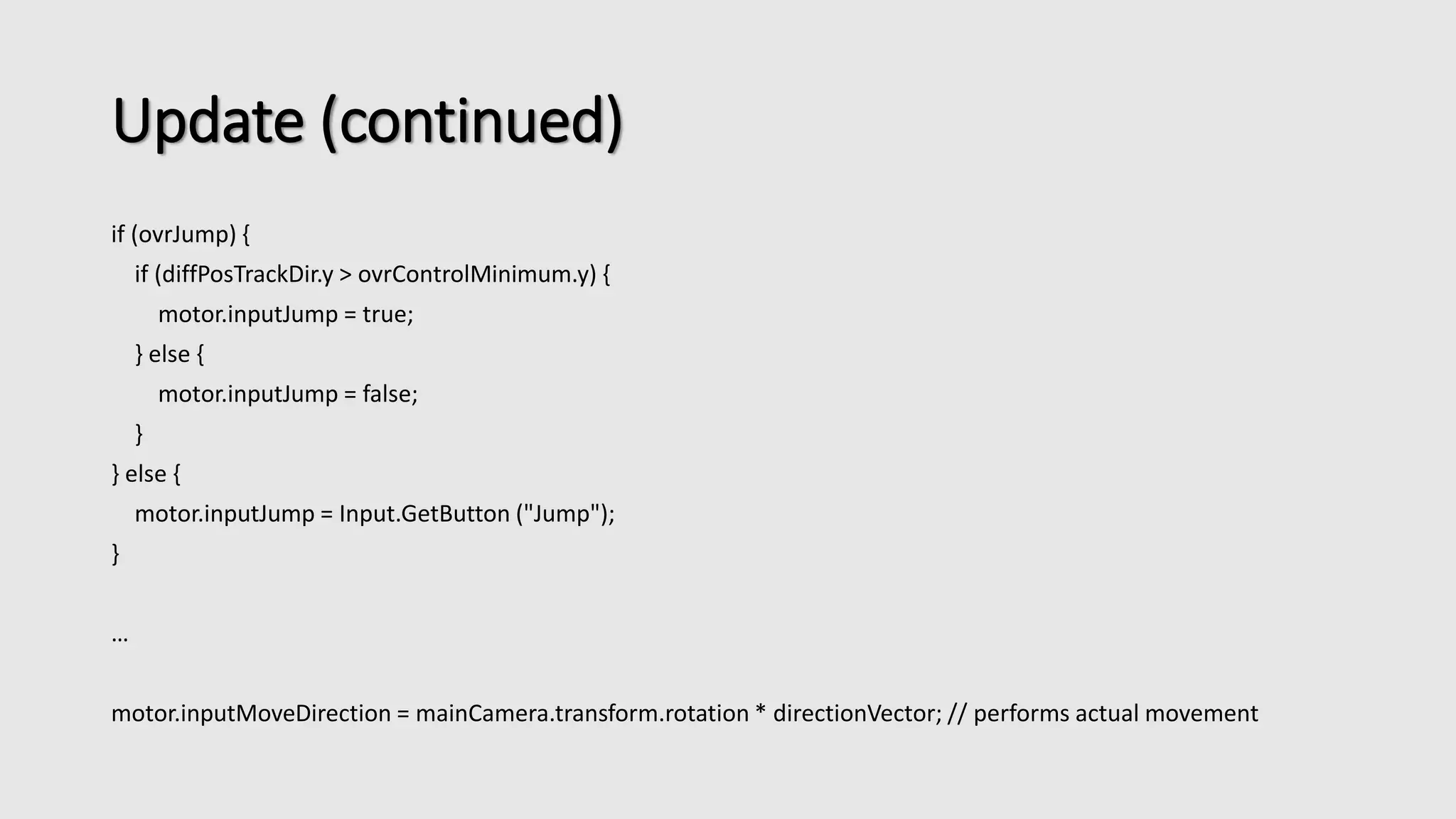

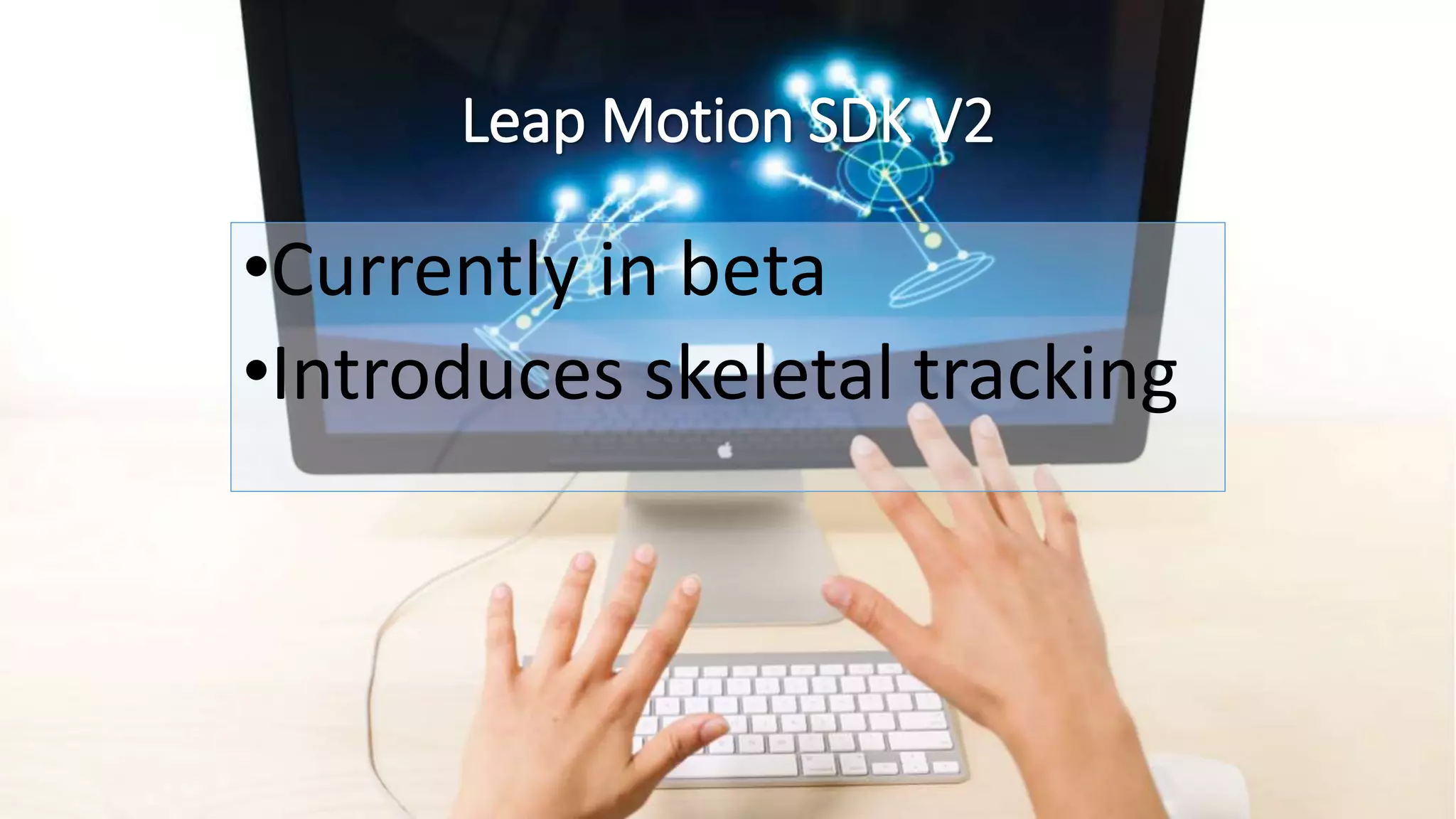

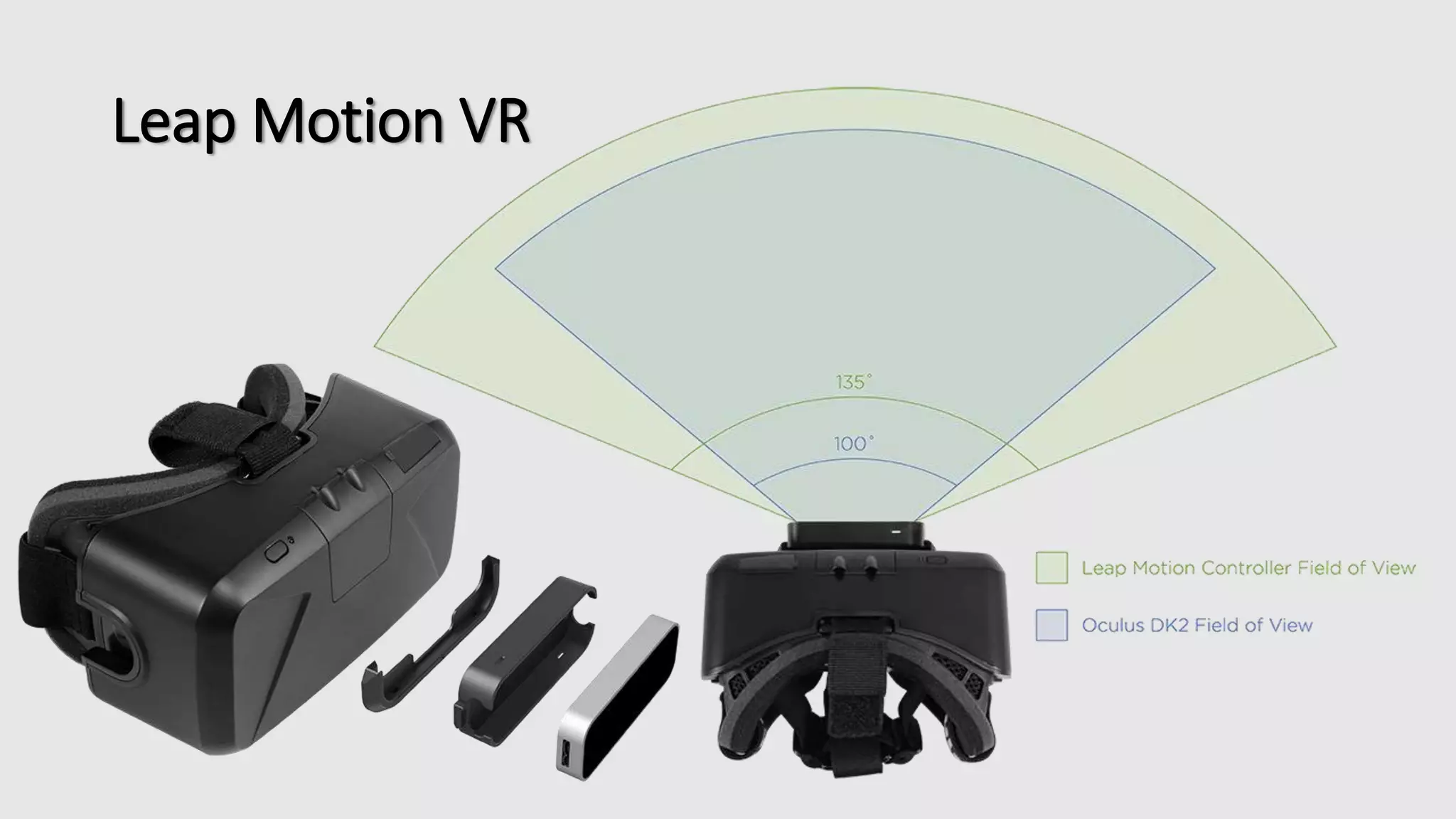

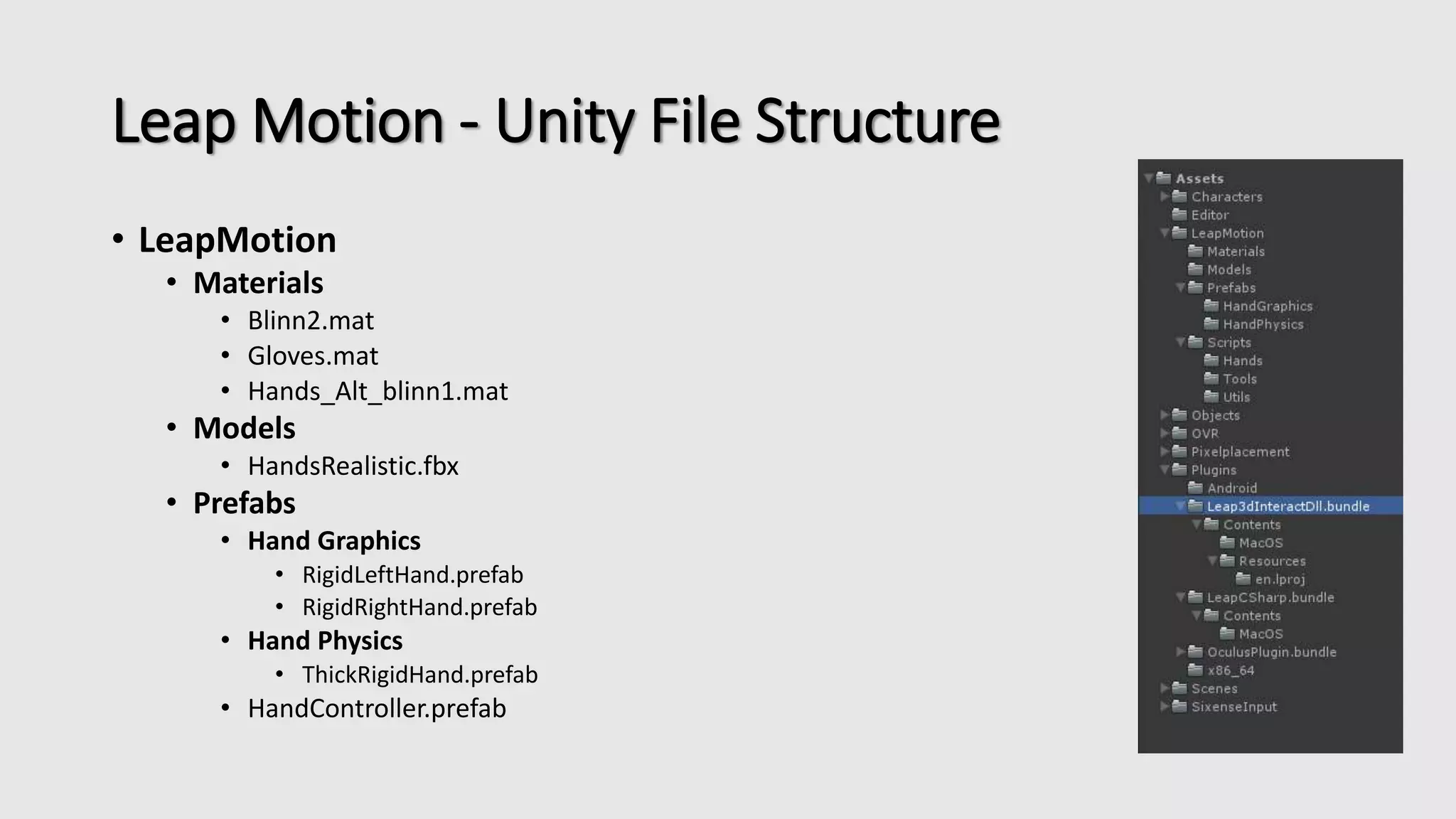

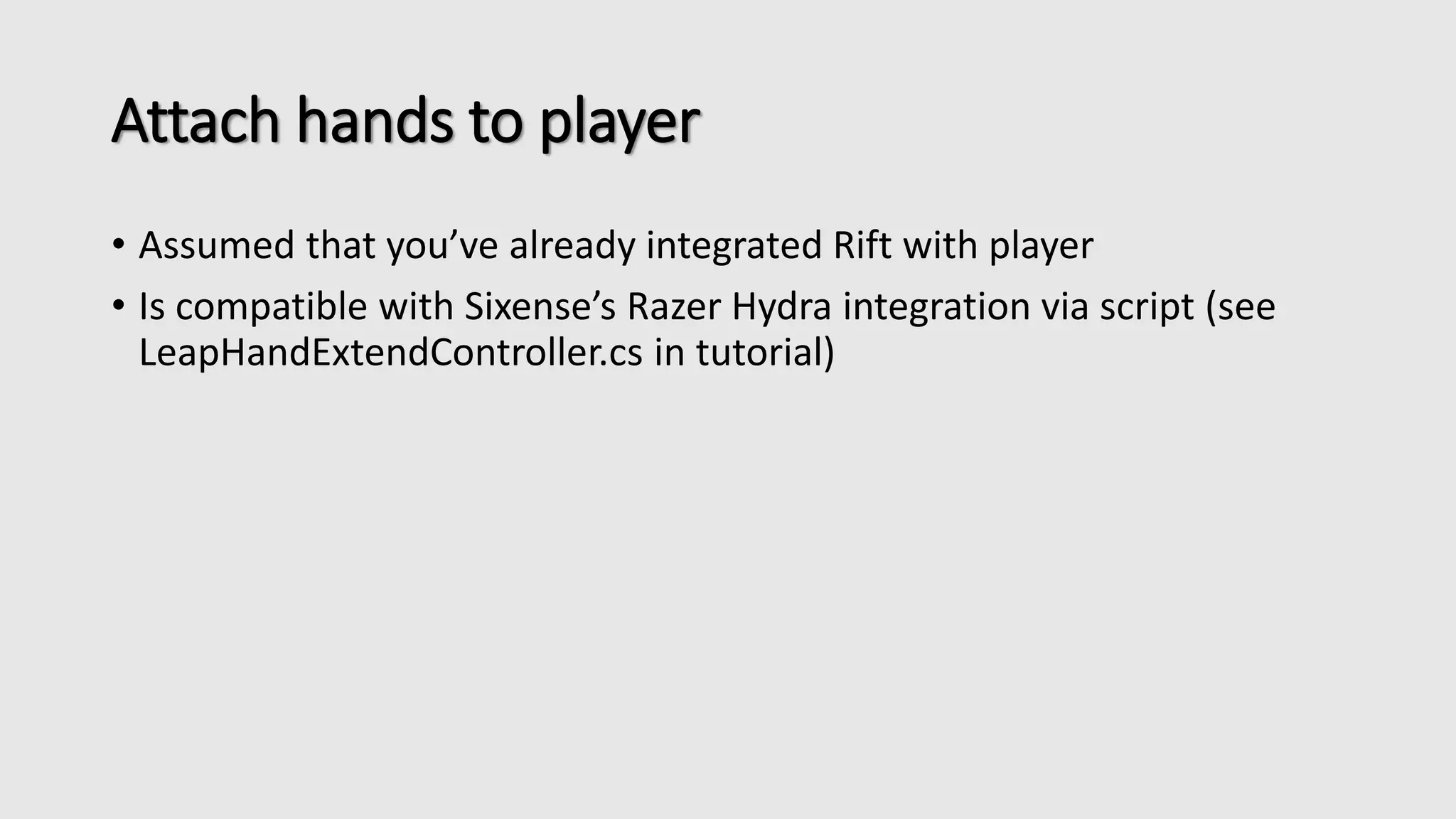

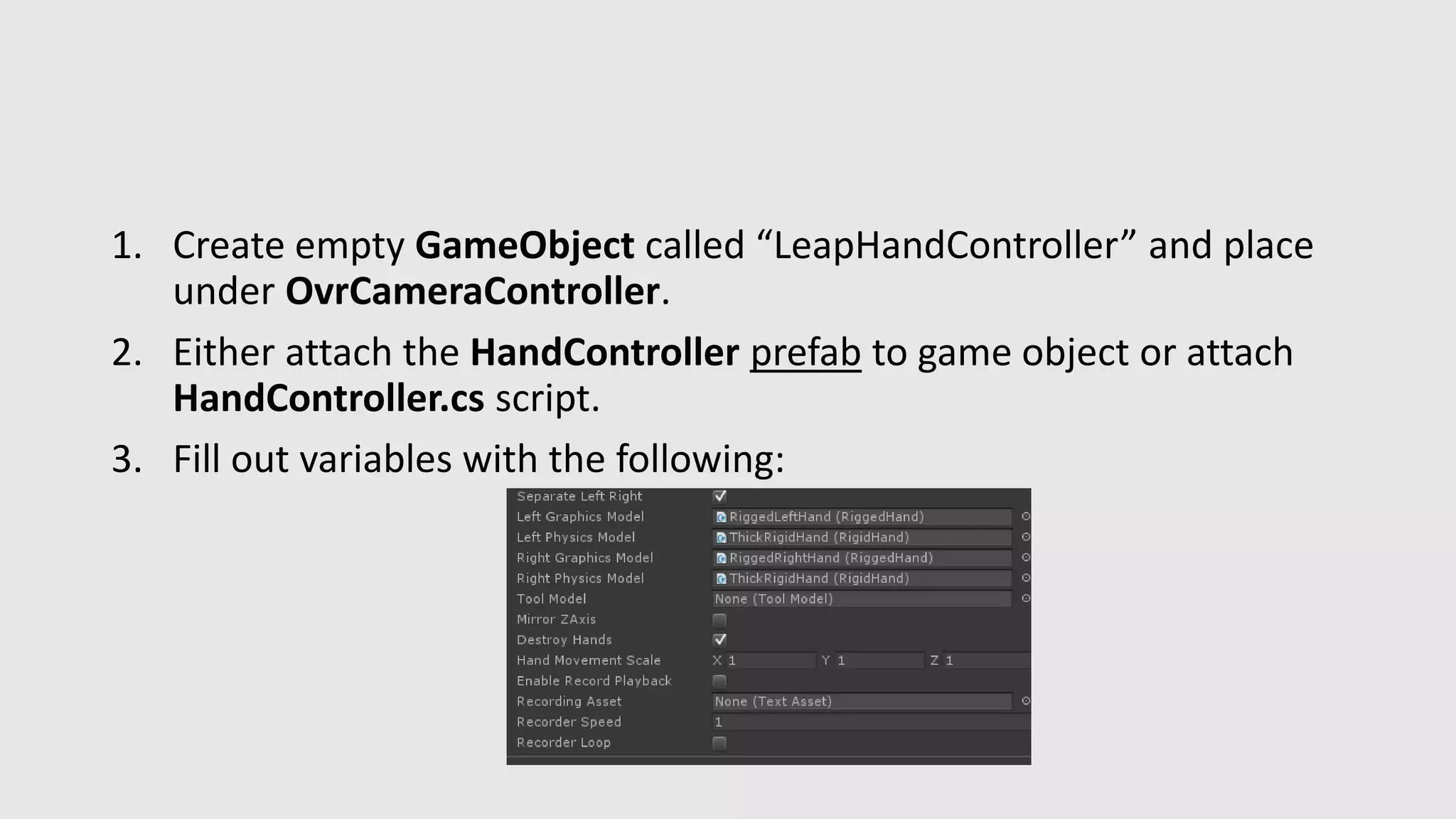

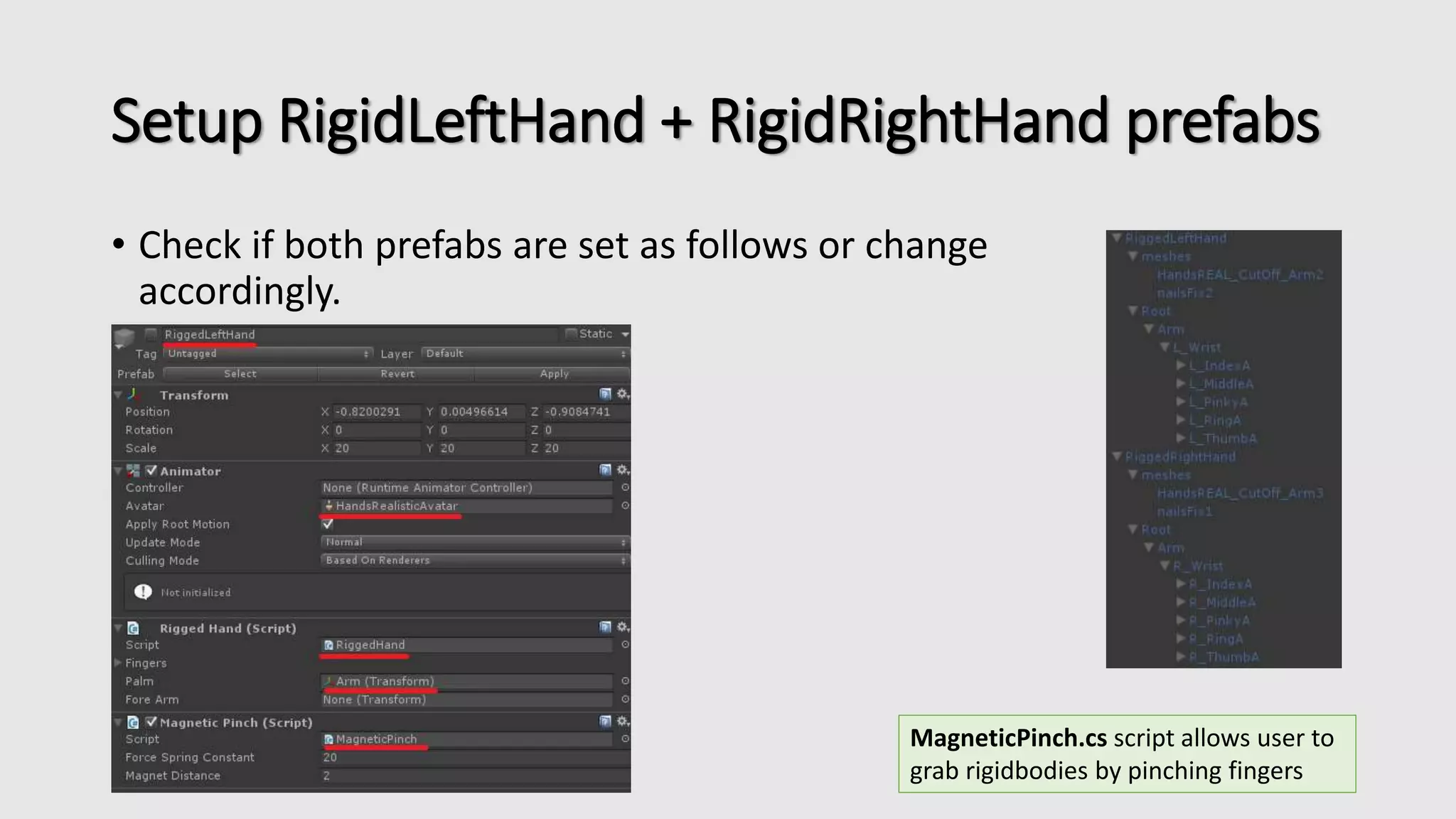

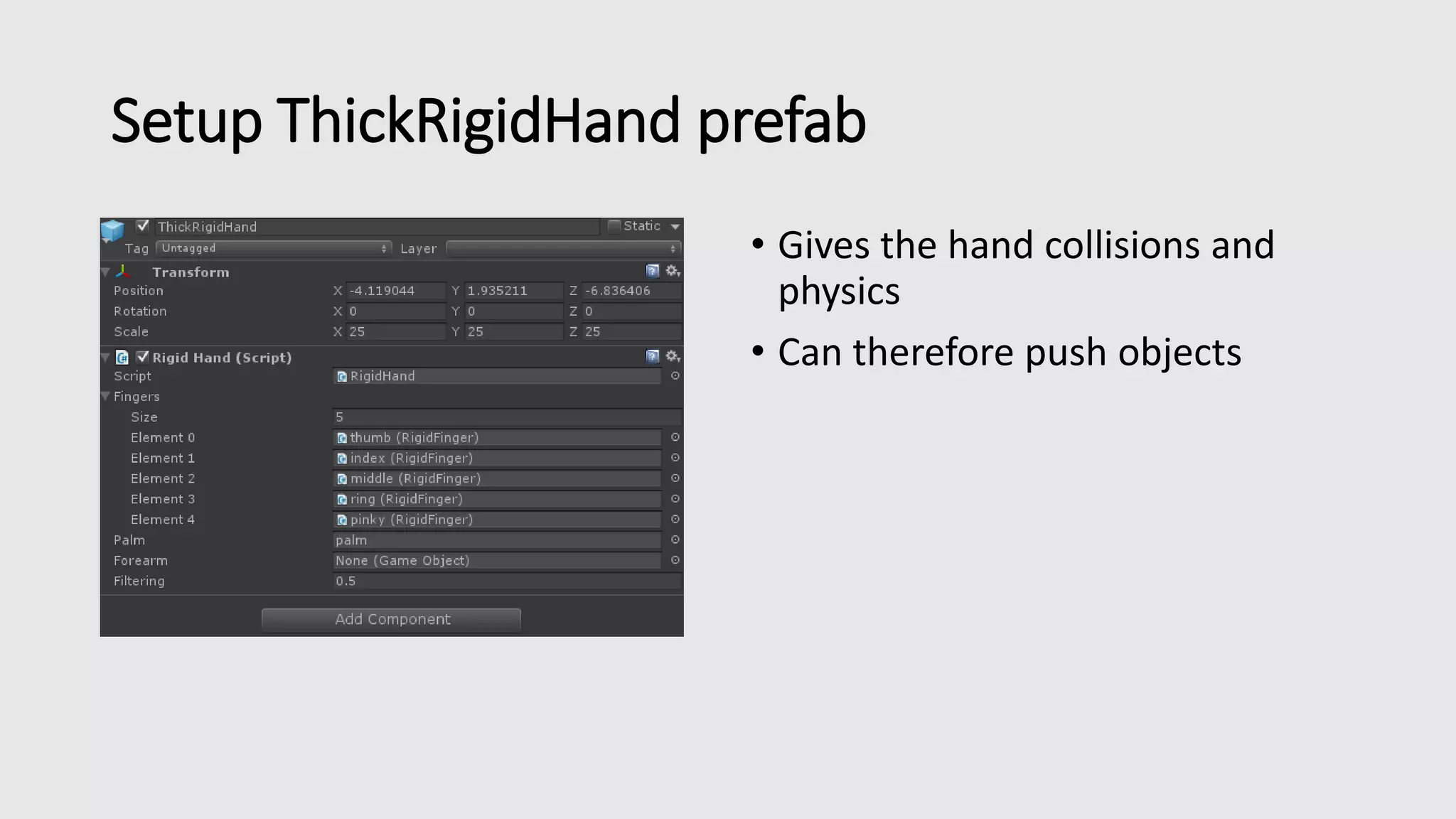

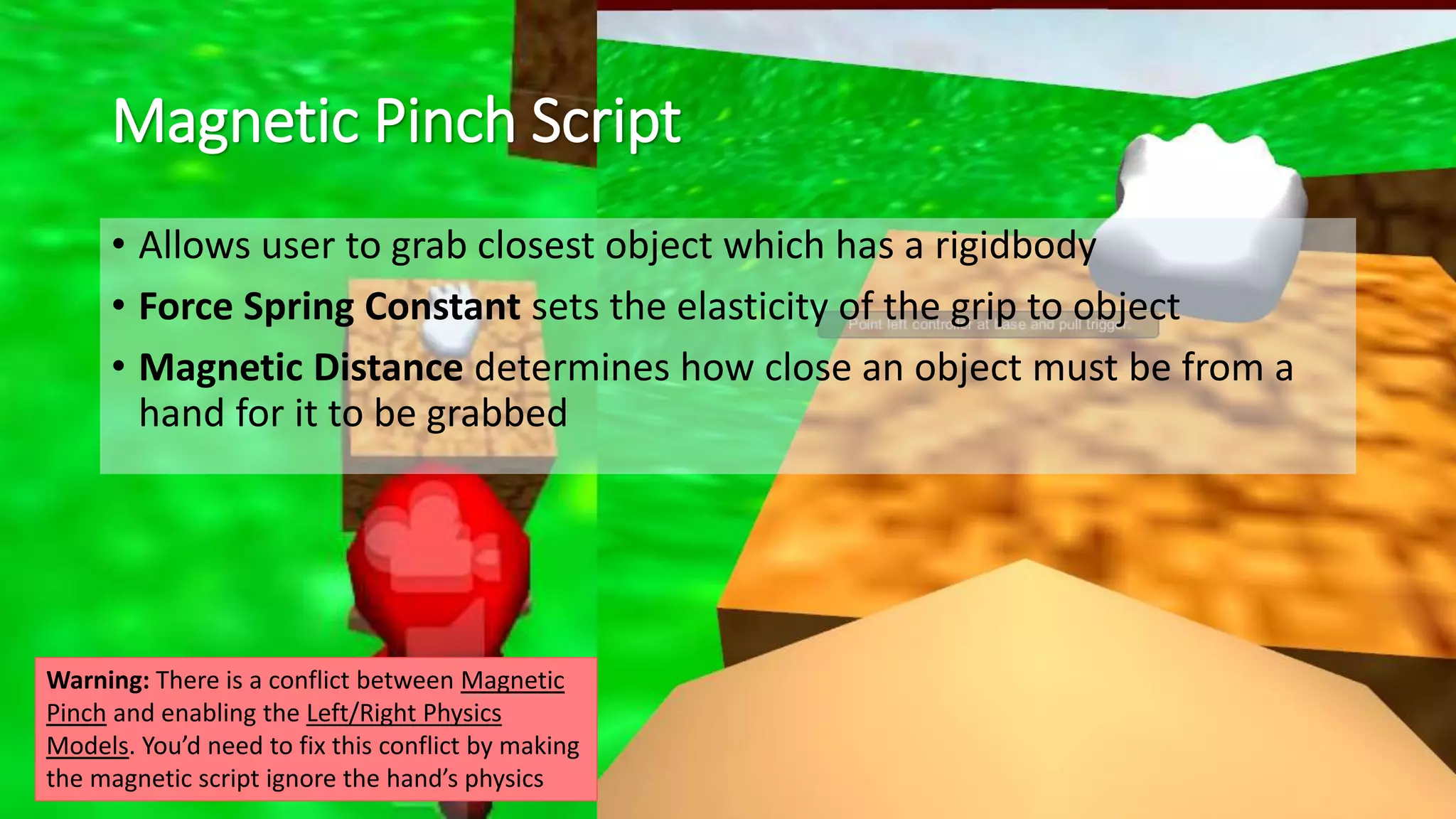

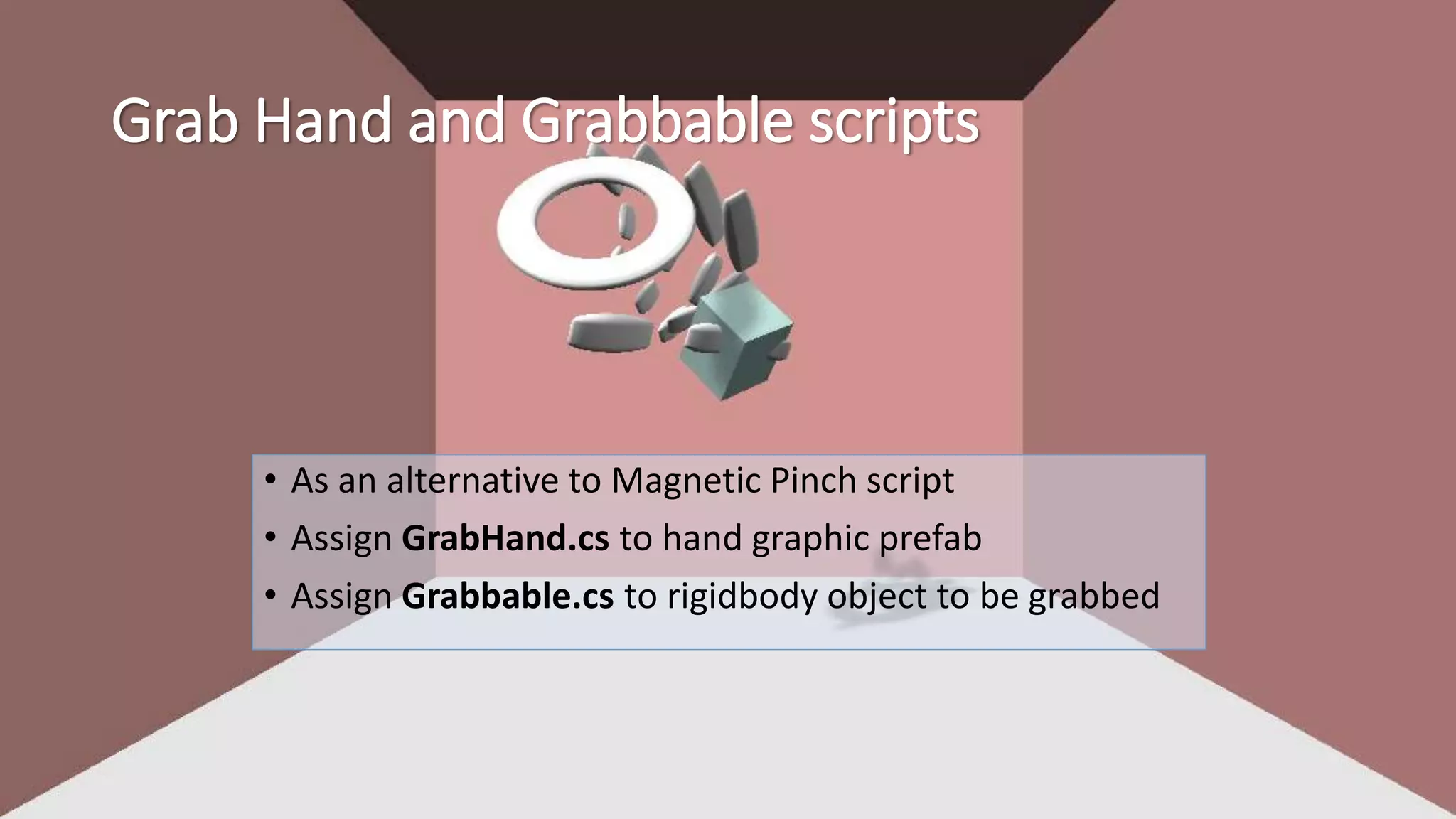

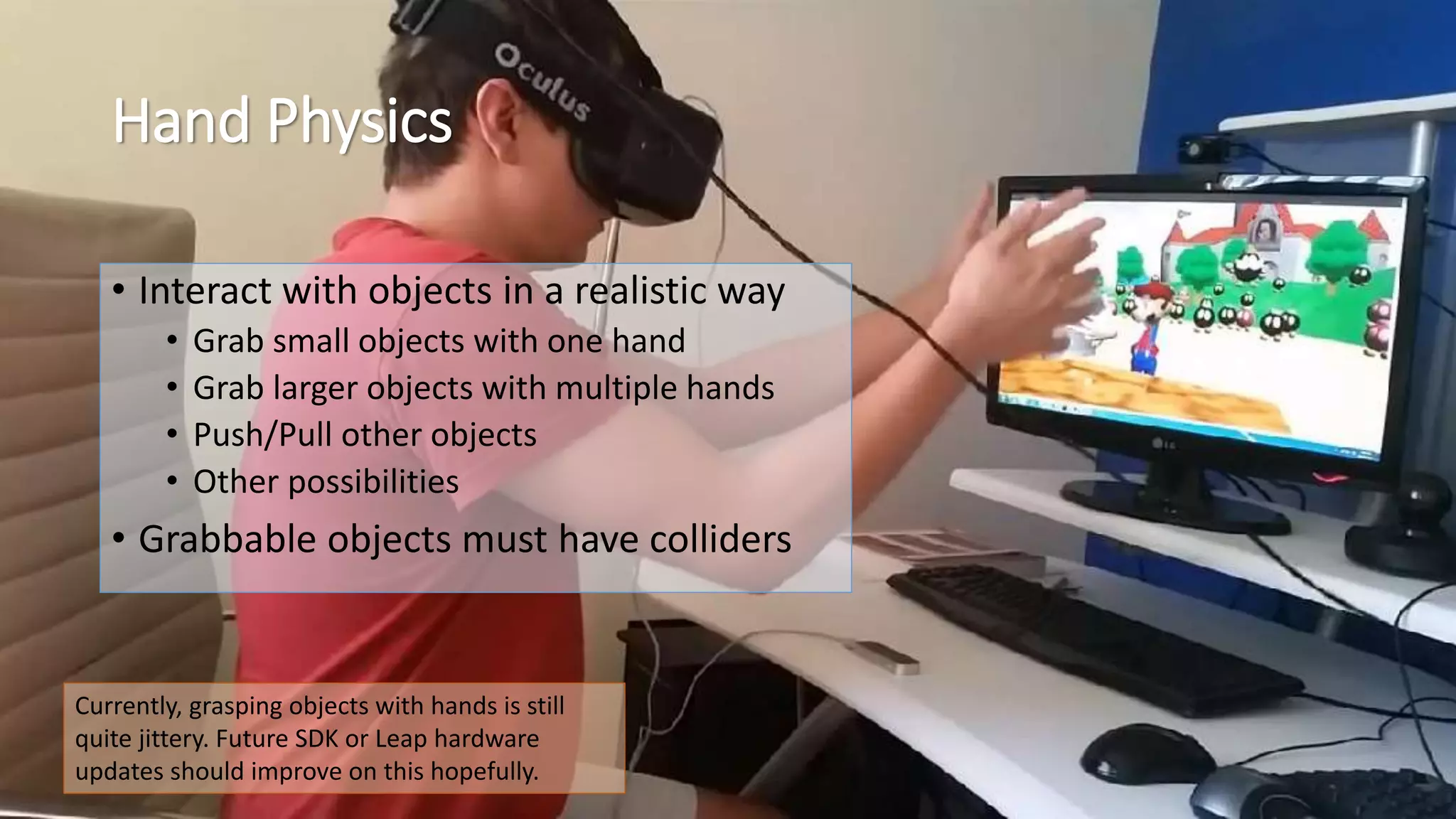

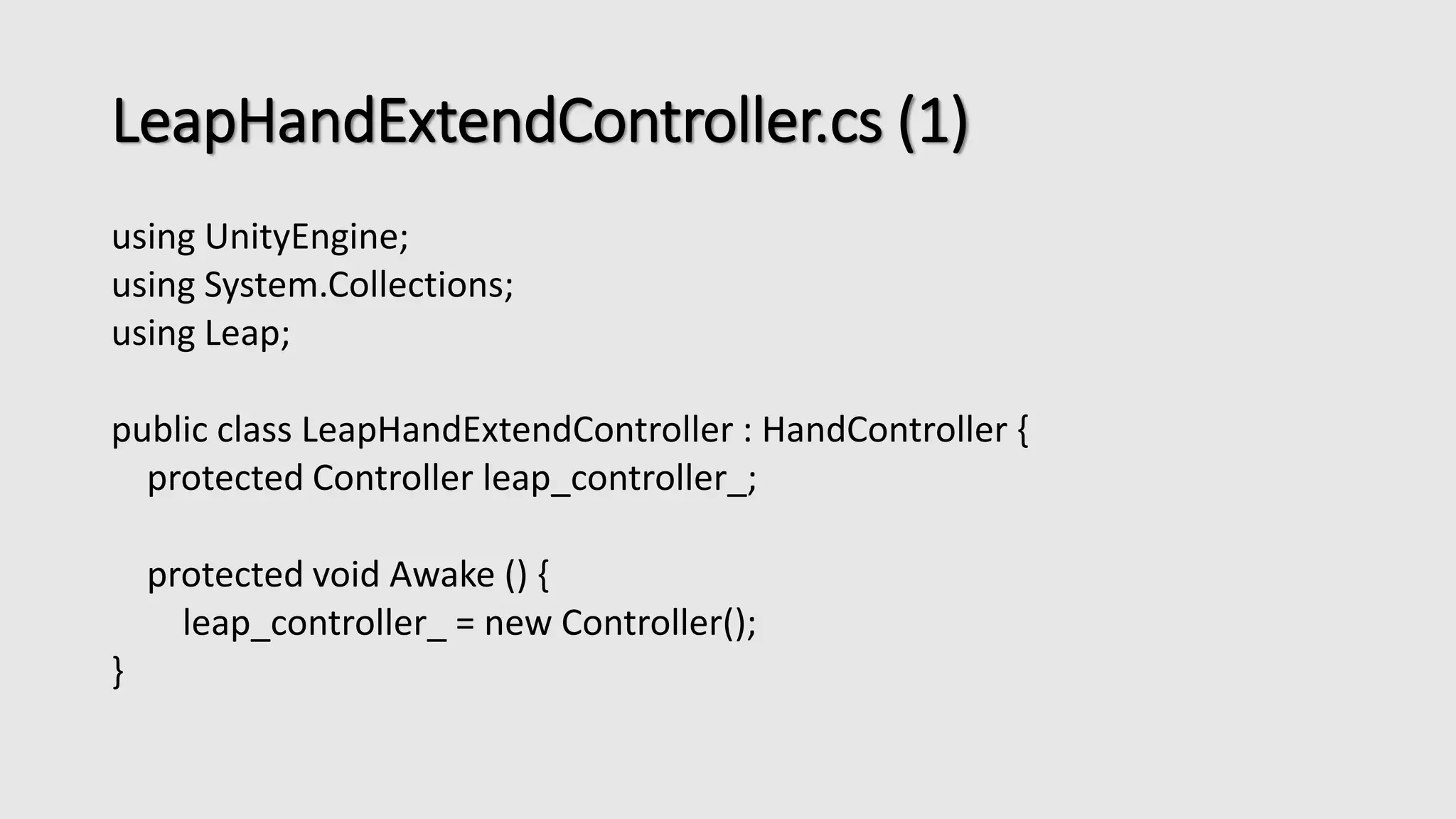

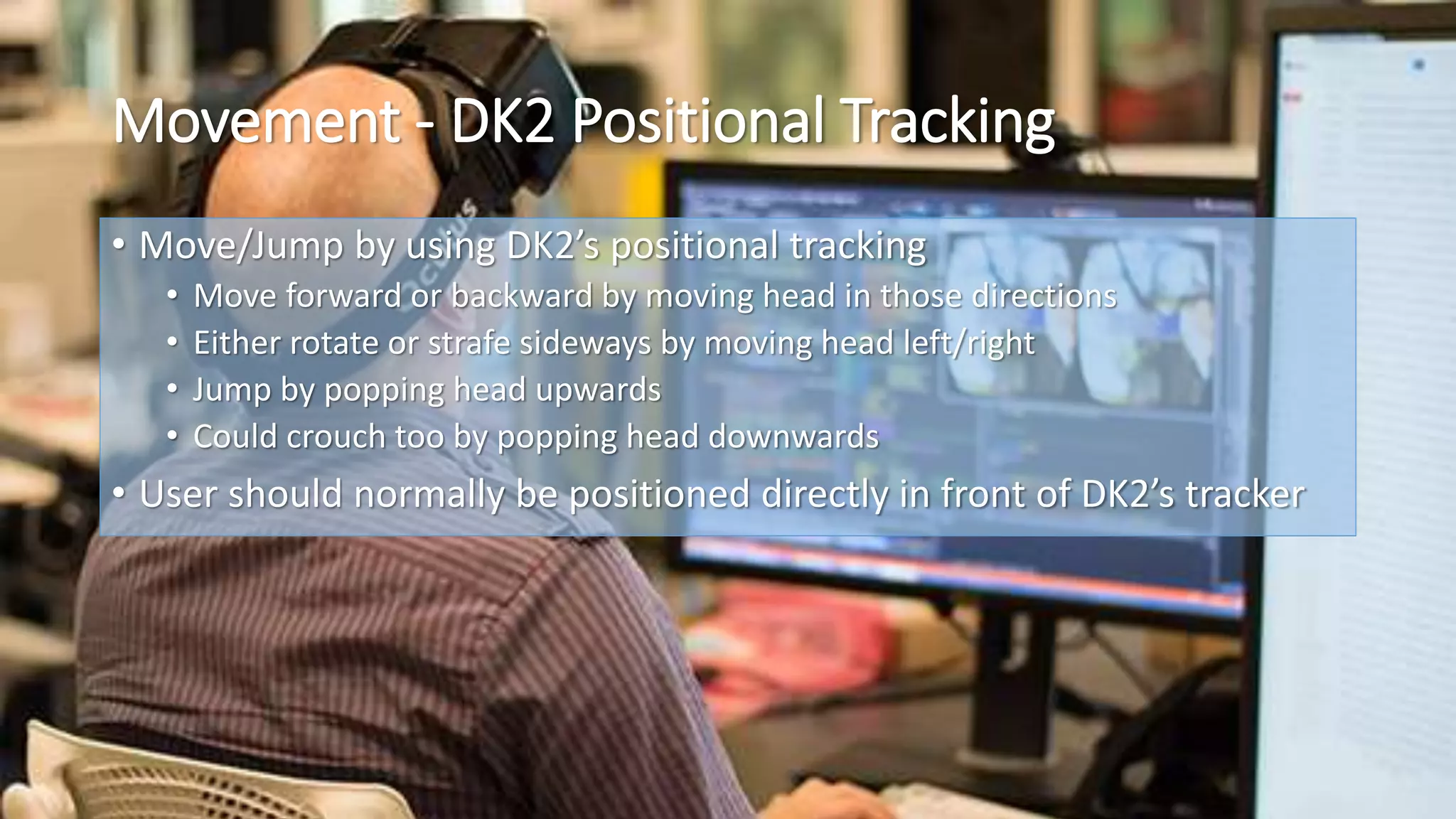

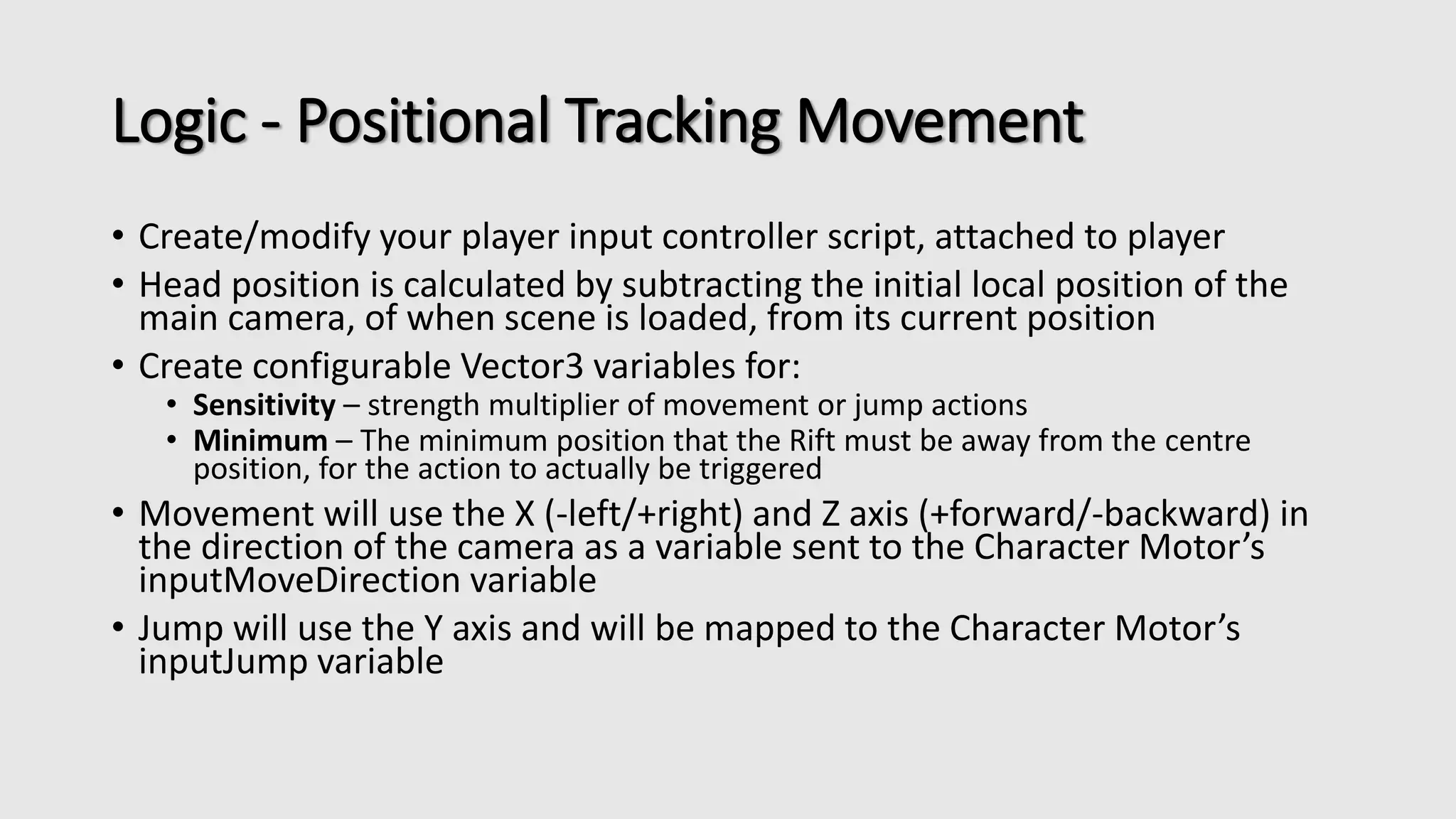

This document is a tutorial for integrating the Oculus Rift DK2 with Leap Motion in Unity, focusing on hand tracking and object interaction using the Leap Motion SDK. It details the setup of hand controllers, physics-based interactions, and movement mechanics via DK2 positional tracking. The tutorial also addresses potential conflicts with other hand tracking systems and provides code snippets for implementing these features.

![• Plugins • [Include all files]](https://image.slidesharecdn.com/oculusriftdk2leapmotiontutorial-140901021329-phpapp02/75/Oculus-Rift-DK2-Leap-Motion-Tutorial-10-2048.jpg)

![For right hand, choose the [..]Arm3 mesh. Each finger must have the following variables, based on the type of finger (e.g. index, pinky) and whether it’s left (starts with L) or right (starts with R):](https://image.slidesharecdn.com/oculusriftdk2leapmotiontutorial-140901021329-phpapp02/75/Oculus-Rift-DK2-Leap-Motion-Tutorial-14-2048.jpg)

![Code – Positional Track Movement](https://image.slidesharecdn.com/oculusriftdk2leapmotiontutorial-140901021329-phpapp02/75/Oculus-Rift-DK2-Leap-Motion-Tutorial-27-2048.jpg)

```csharp
using UnityEngine;

[RequireComponent(typeof(CharacterMotor))]
public class FPSInputController : MonoBehaviour {
    // …

    public bool ovrMovement = false; // Enable moving the player by moving the head on the X and Z axes
    public bool ovrJump = false; // Enable player jumps by moving the head upwards on the Y axis
    public Vector3 ovrControlSensitivity = new Vector3(1, 1, 1); // Multiplier of positional tracking move/jump actions
    public Vector3 ovrControlMinimum = new Vector3(0, 0, 0); // Min distance of head from centre to move/jump

    public enum OvrXAxisAction { Strafe = 0, Rotate = 1 }
    public OvrXAxisAction ovrXAxisAction = OvrXAxisAction.Rotate; // Whether x axis positional tracking performs strafing or rotation

    private GameObject mainCamera; // Camera where movement orientation is done and audio listener enabled
    private CharacterMotor motor;

    // OVR positional tracking, currently works via tilting head
    private Vector3 initPosTrackDir;
    private Vector3 curPosTrackDir;
    private Vector3 diffPosTrackDir;

    // …
}
```
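
The slide declares the tuning fields but does not show how they are combined. One plausible reading of the comments is that the head offset (`curPosTrackDir` minus `initPosTrackDir`) is ignored on any axis where it stays below `ovrControlMinimum`, and is otherwise scaled by `ovrControlSensitivity`. The sketch below illustrates that idea only. The class `PosTrackSketch` and the method `ApplyDeadZone` are hypothetical names, not part of the tutorial's script, and the code uses `System.Numerics.Vector3` so that it runs outside Unity (inside Unity, `UnityEngine.Vector3` with lowercase `x`, `y`, `z` would take its place).

```csharp
using System;
using System.Numerics;

// Hypothetical helper, not taken from the tutorial: a per-axis dead zone
// followed by a sensitivity multiplier, as the field comments suggest.
public static class PosTrackSketch
{
    // init:        head position captured at start (cf. initPosTrackDir)
    // cur:         current head position (cf. curPosTrackDir)
    // minimum:     per-axis dead zone (cf. ovrControlMinimum)
    // sensitivity: per-axis multiplier (cf. ovrControlSensitivity)
    public static Vector3 ApplyDeadZone(Vector3 init, Vector3 cur,
                                        Vector3 minimum, Vector3 sensitivity)
    {
        Vector3 diff = cur - init; // cf. diffPosTrackDir
        return new Vector3(
            Axis(diff.X, minimum.X, sensitivity.X),
            Axis(diff.Y, minimum.Y, sensitivity.Y),
            Axis(diff.Z, minimum.Z, sensitivity.Z));
    }

    // Returns 0 while the offset is inside the dead zone, else the scaled offset.
    private static float Axis(float offset, float minimum, float sensitivity)
    {
        return Math.Abs(offset) < minimum ? 0f : offset * sensitivity;
    }
}
```

With the default `ovrControlMinimum` of `(0, 0, 0)` the dead zone is disabled, so every offset passes straight through to the multiplier. Raising the minimum on the X and Z axes would keep small, unintentional head movements from moving the player.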