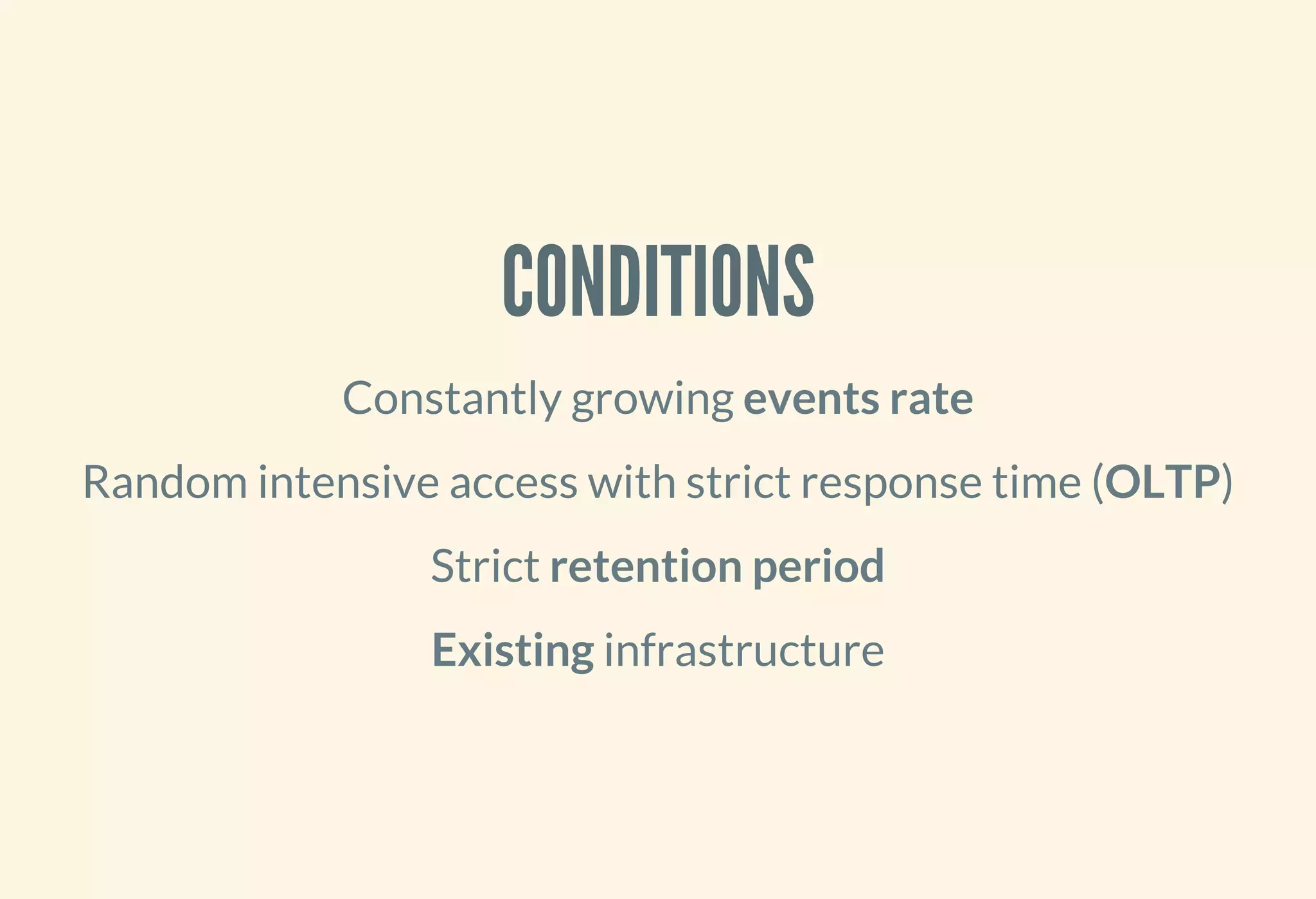

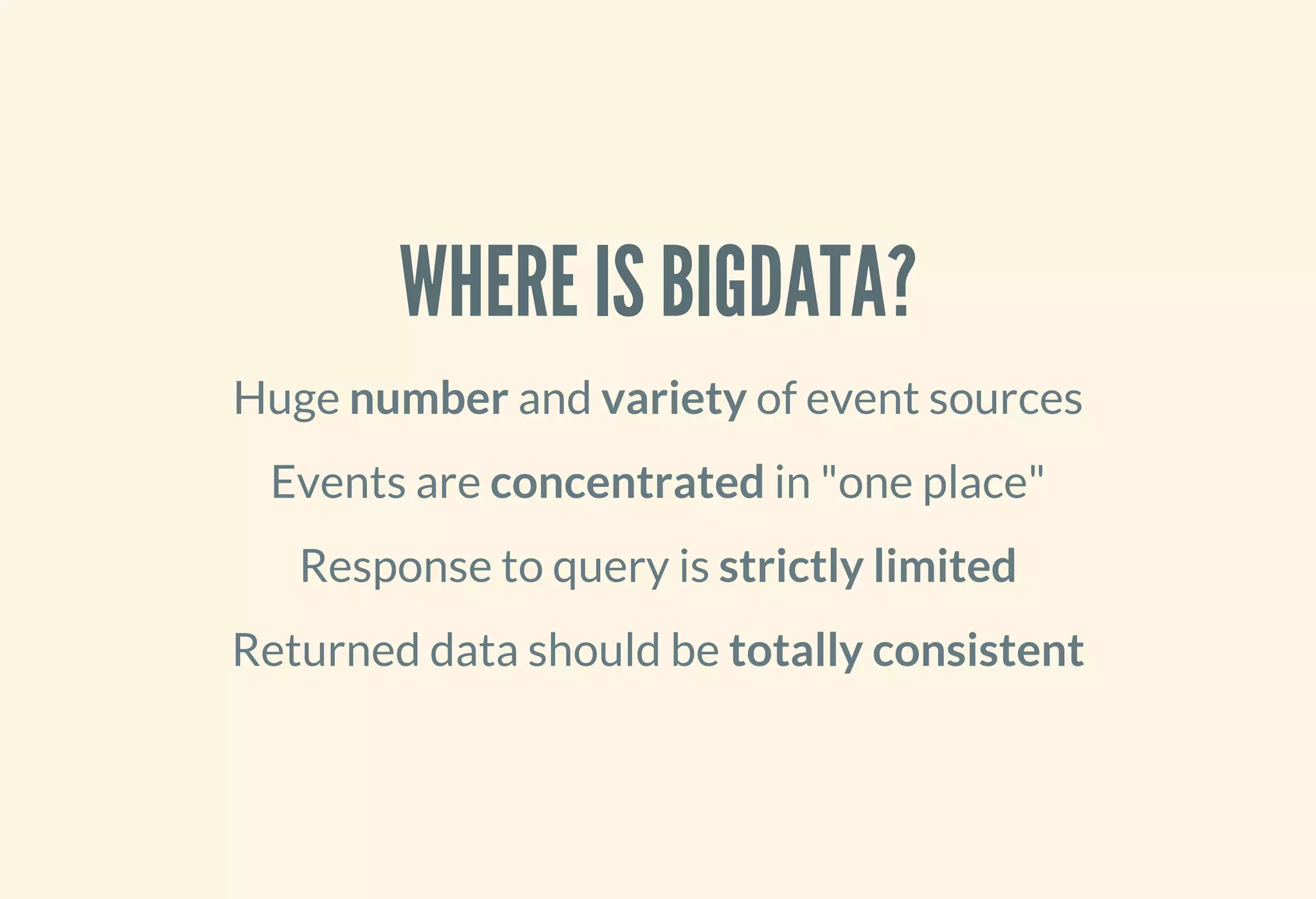

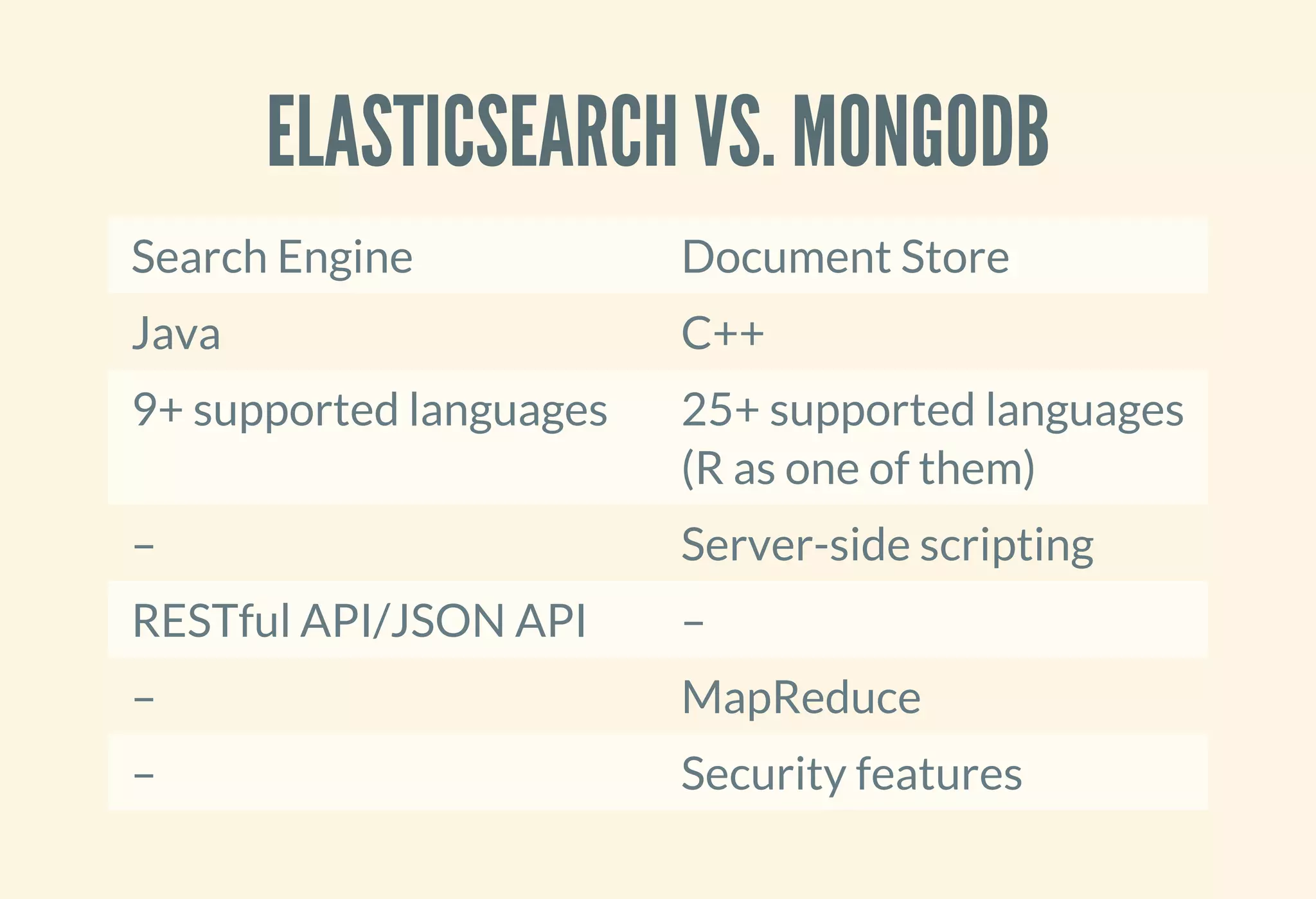

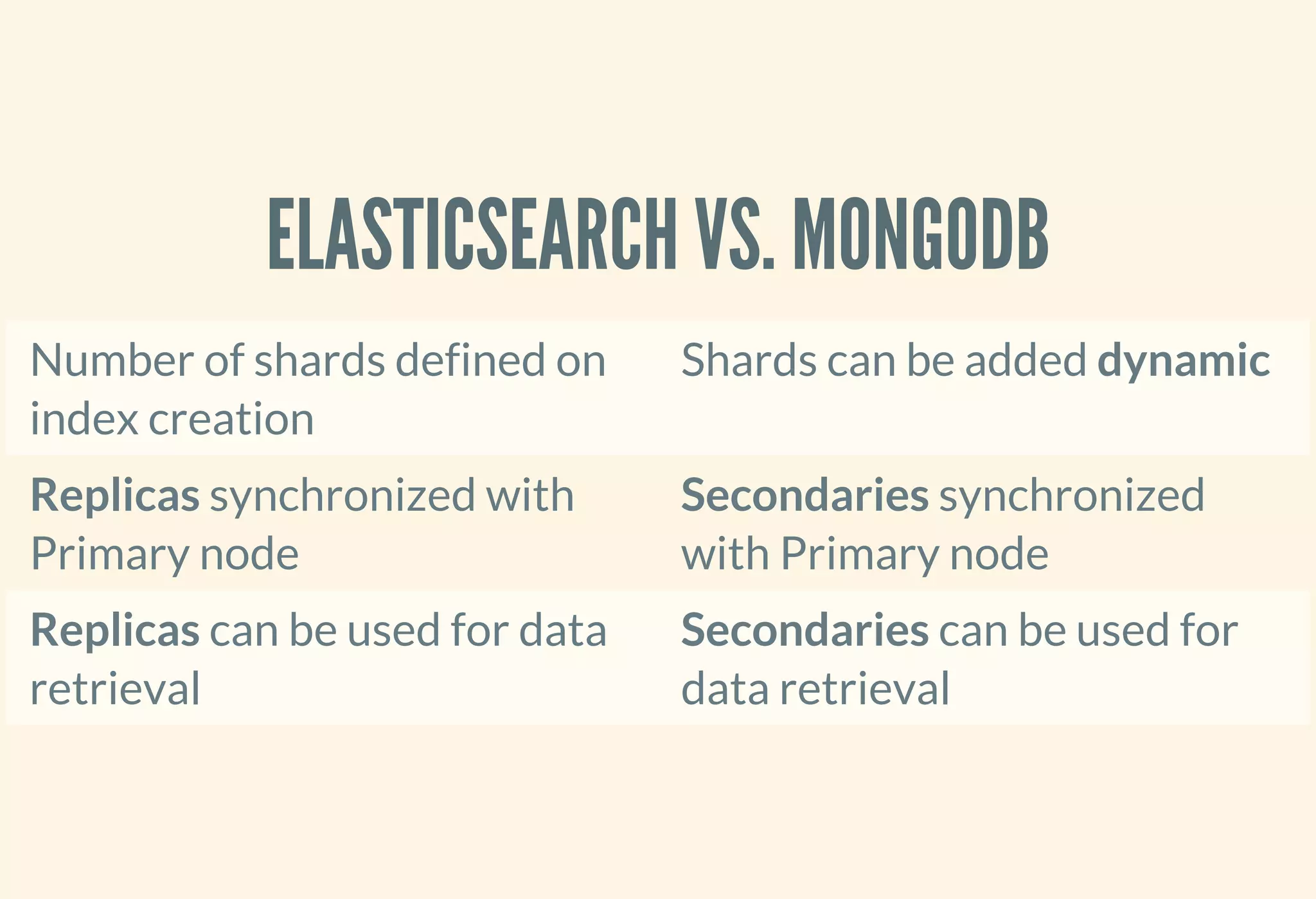

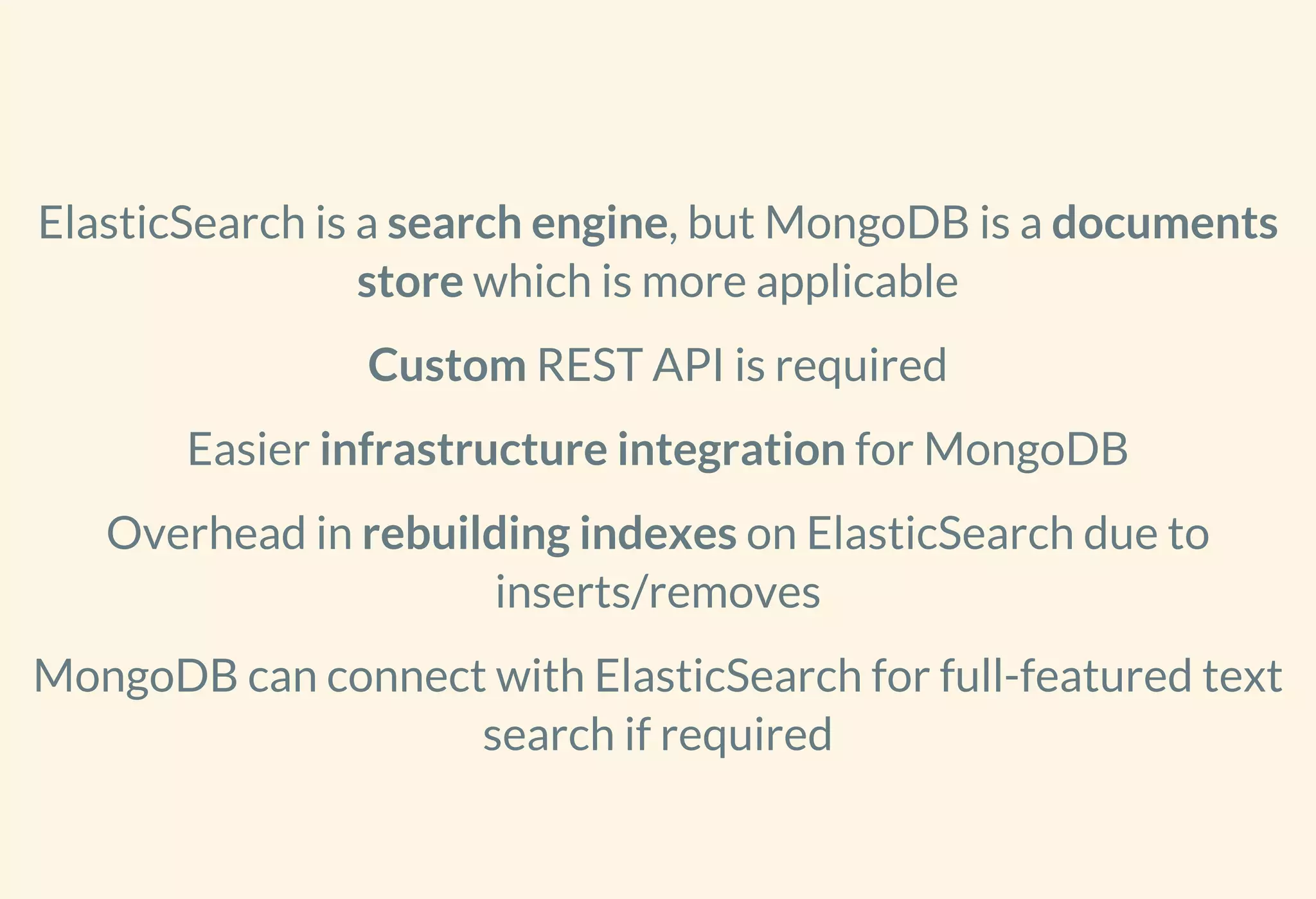

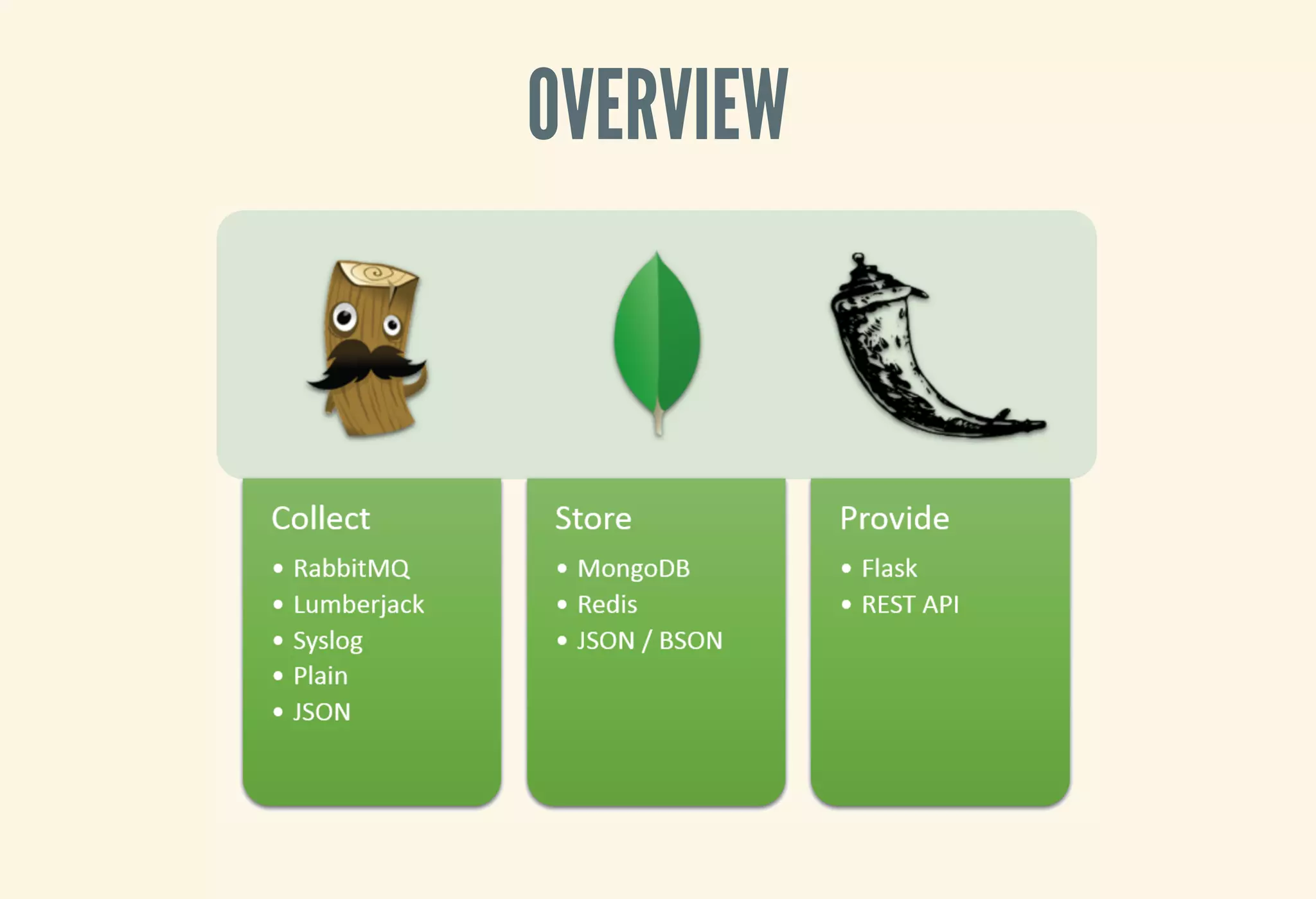

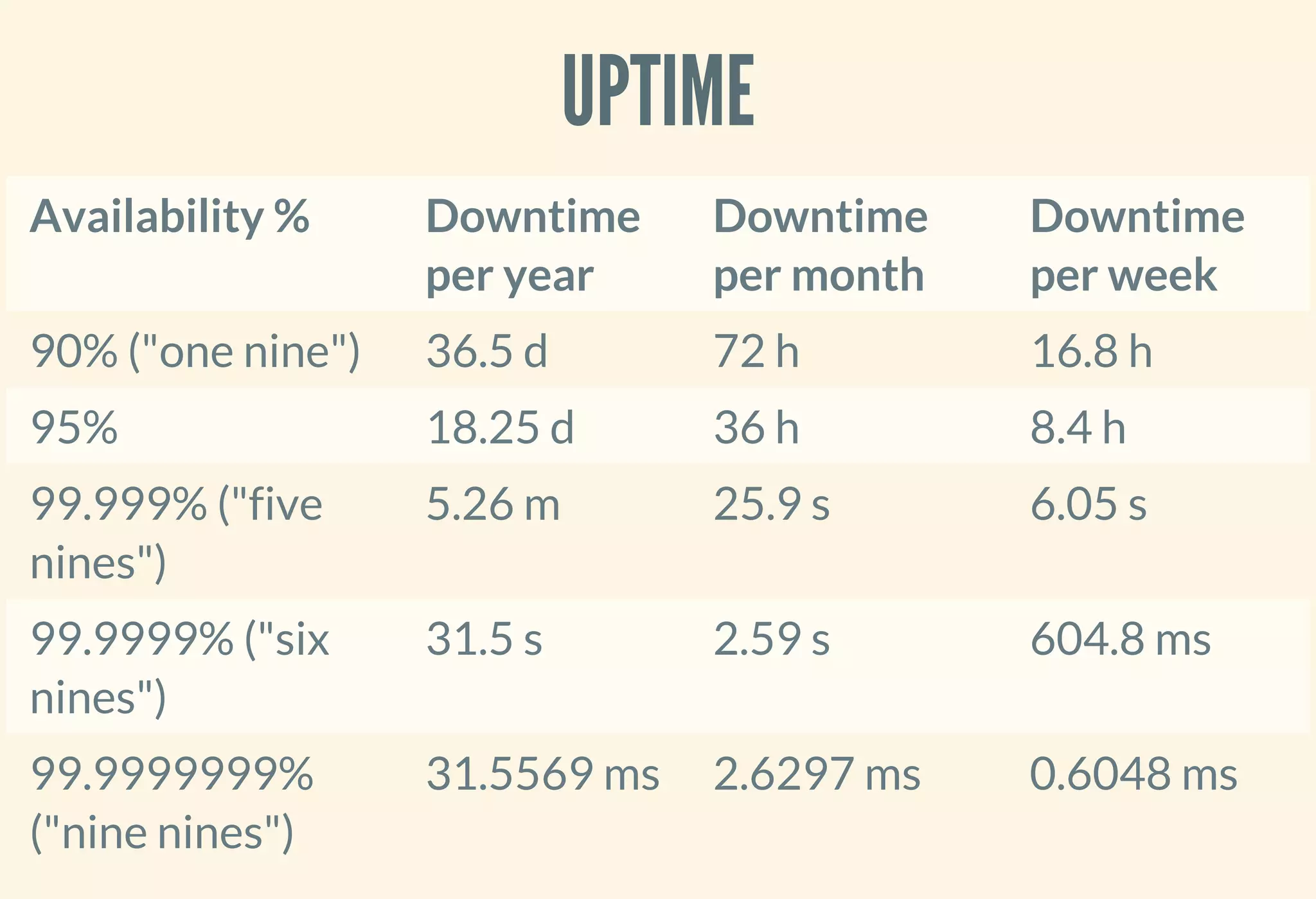

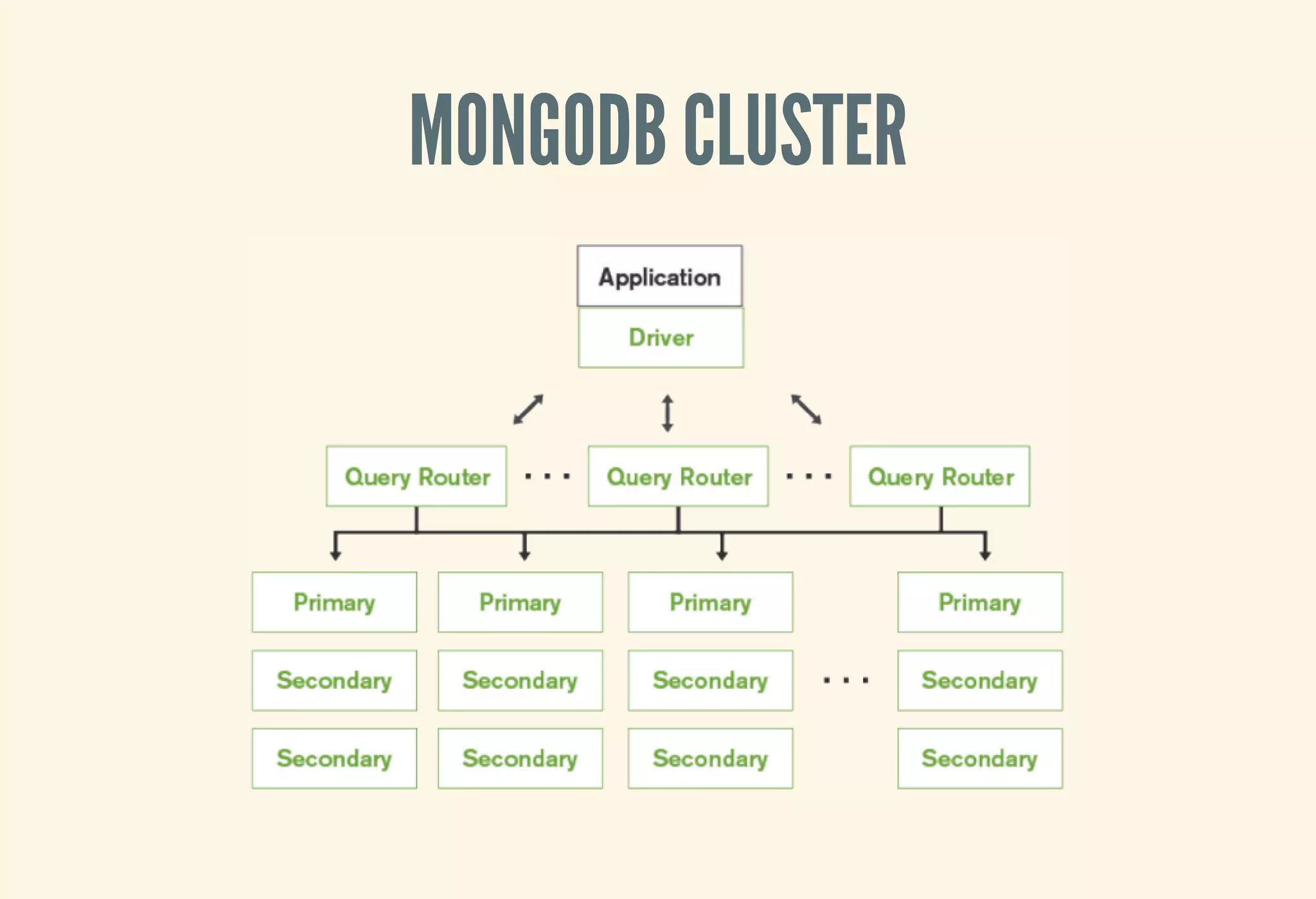

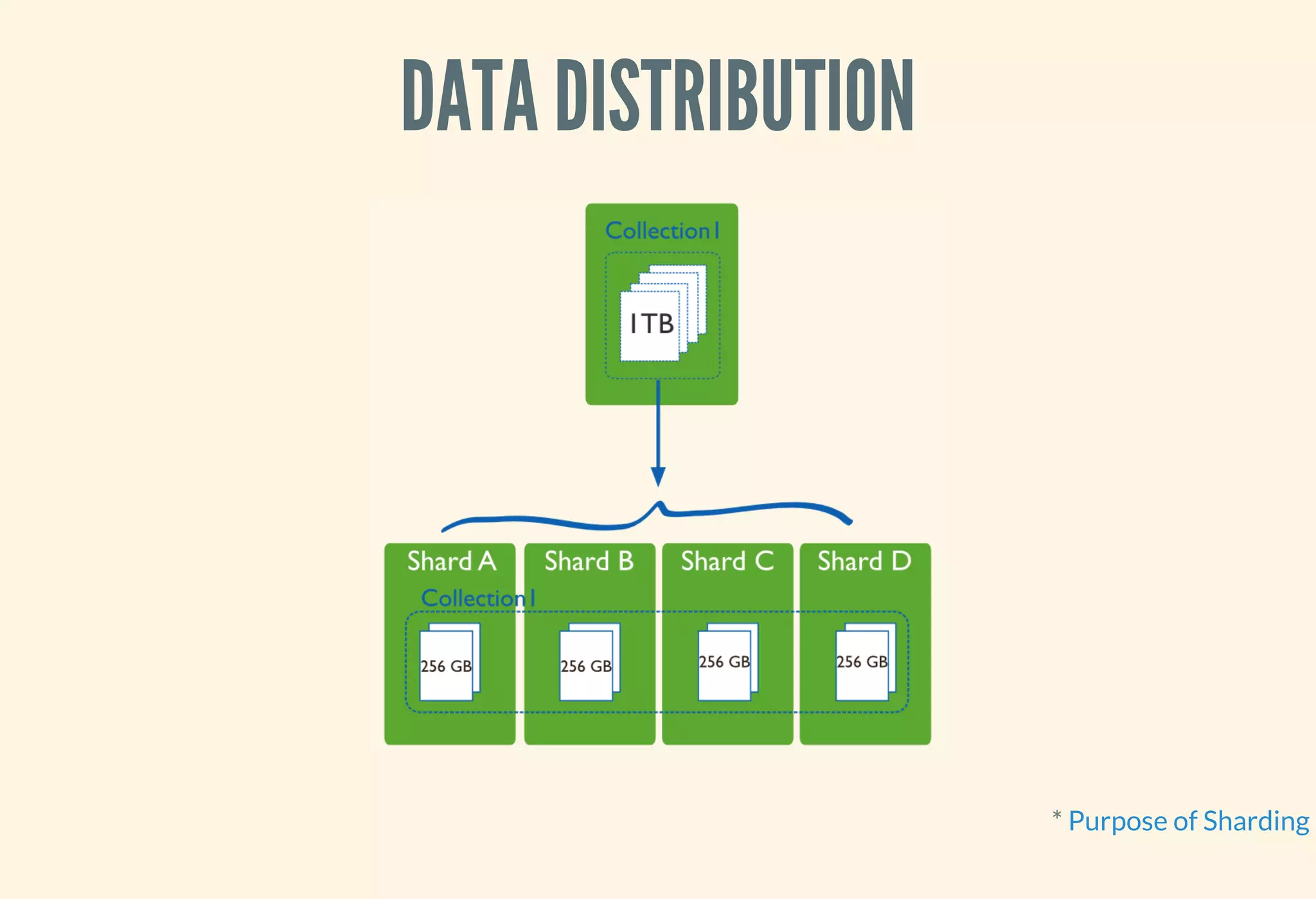

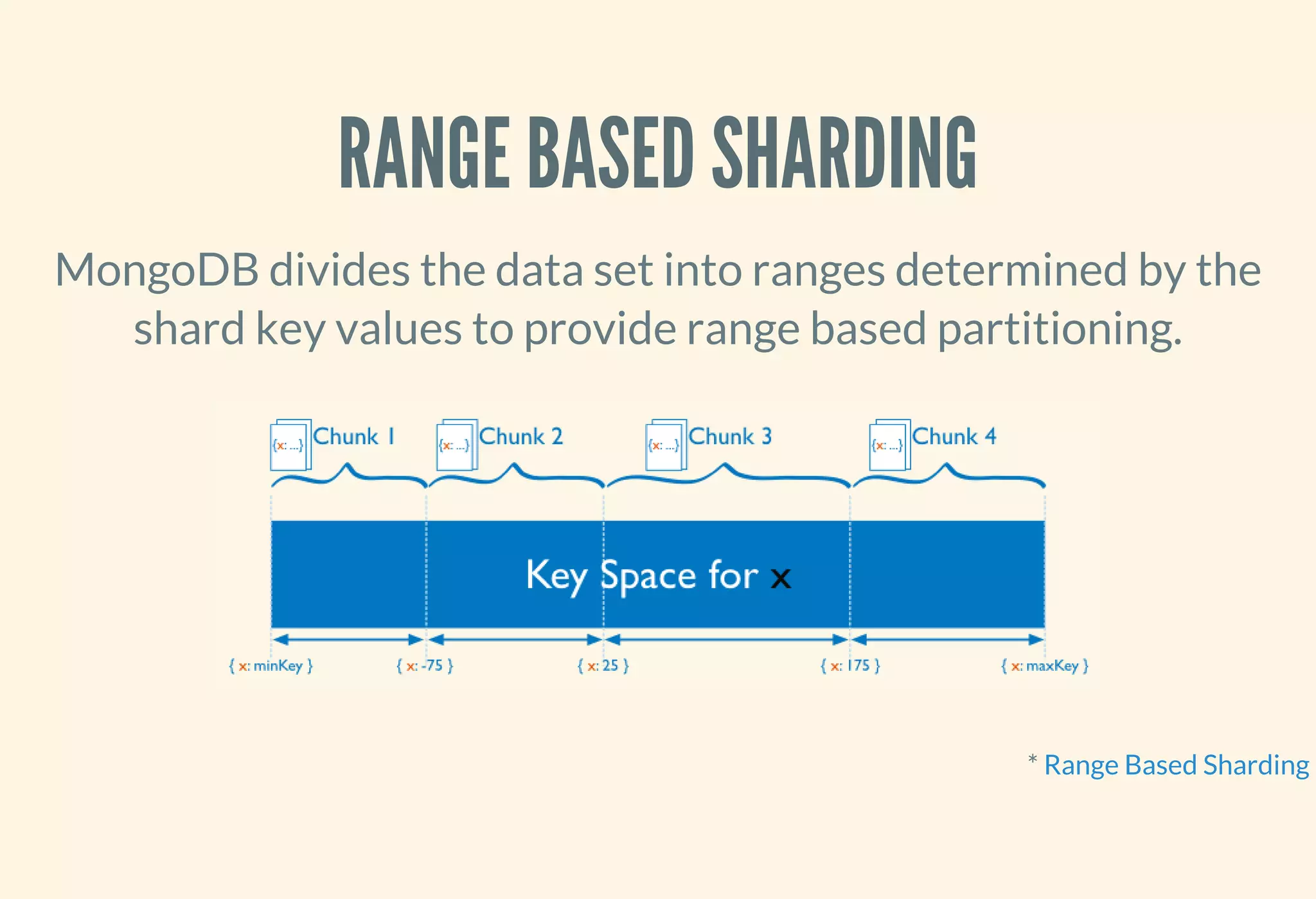

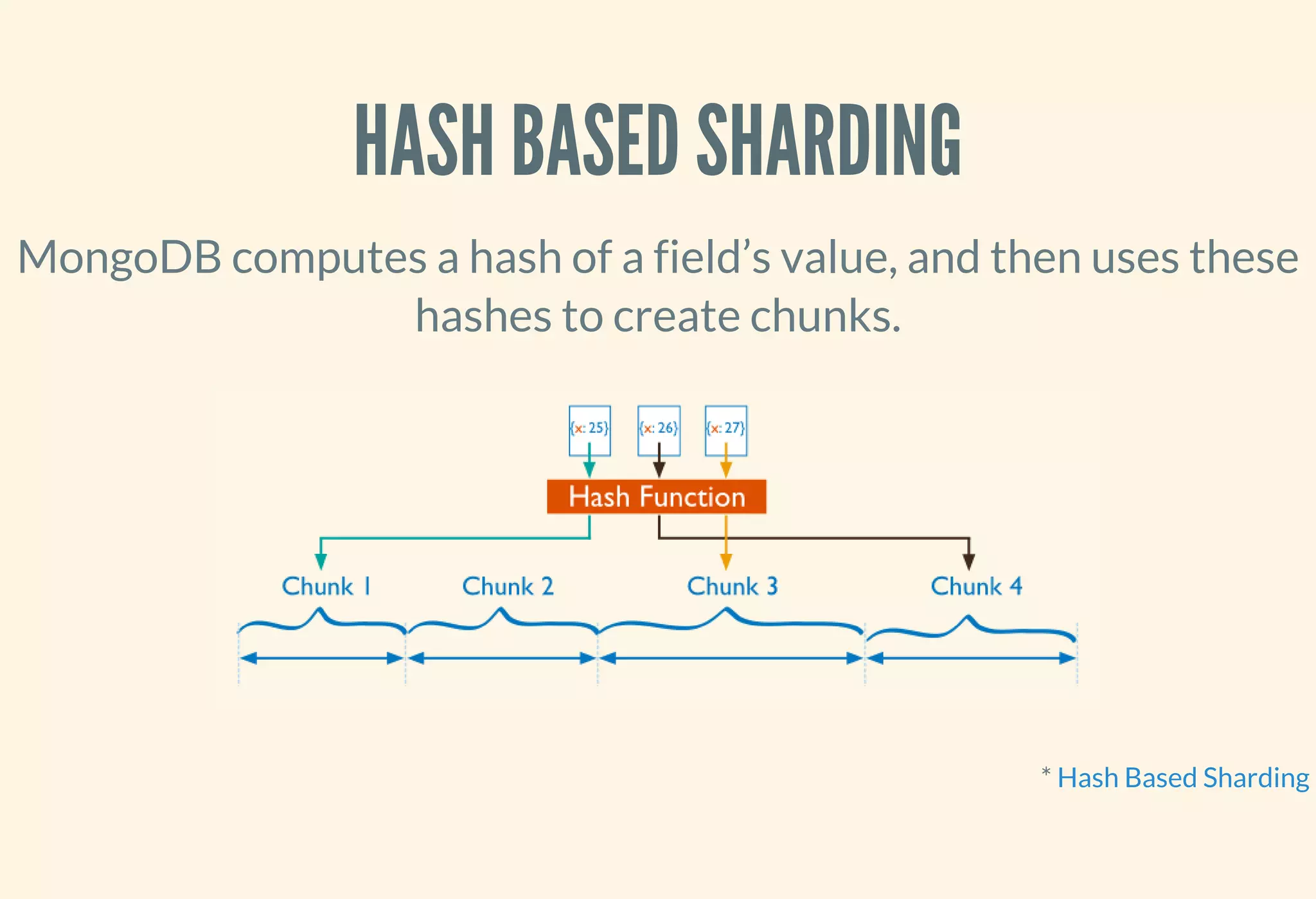

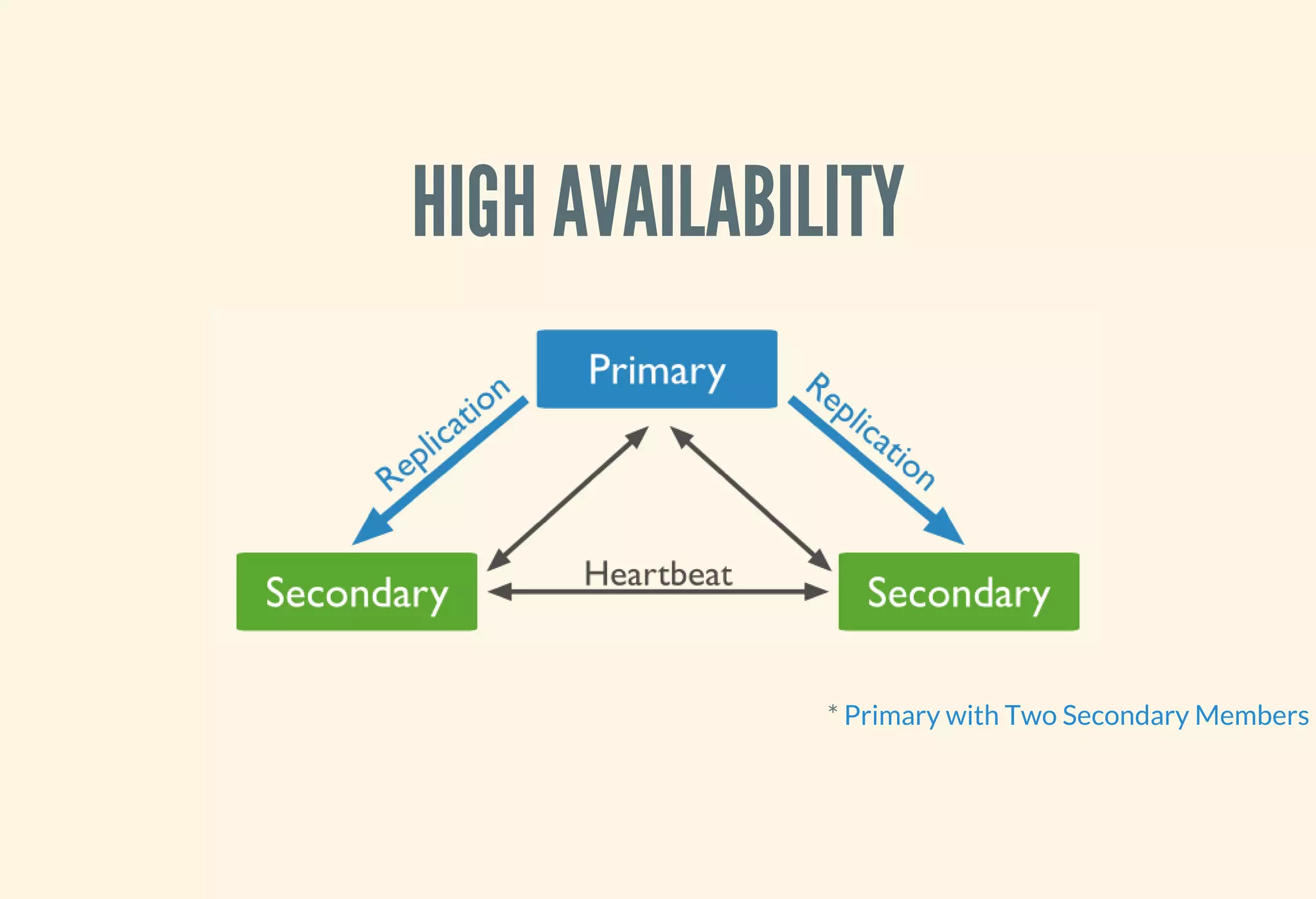

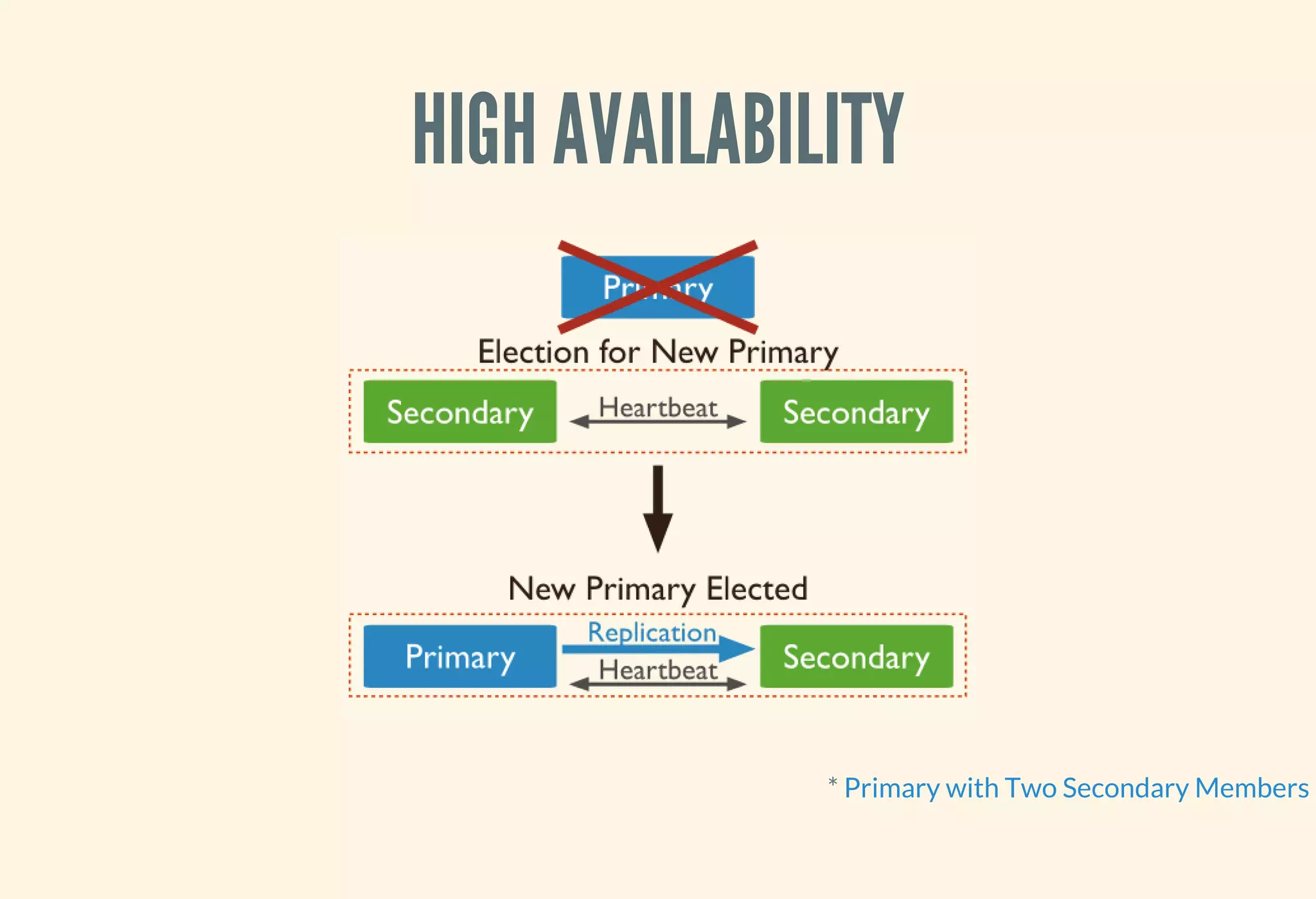

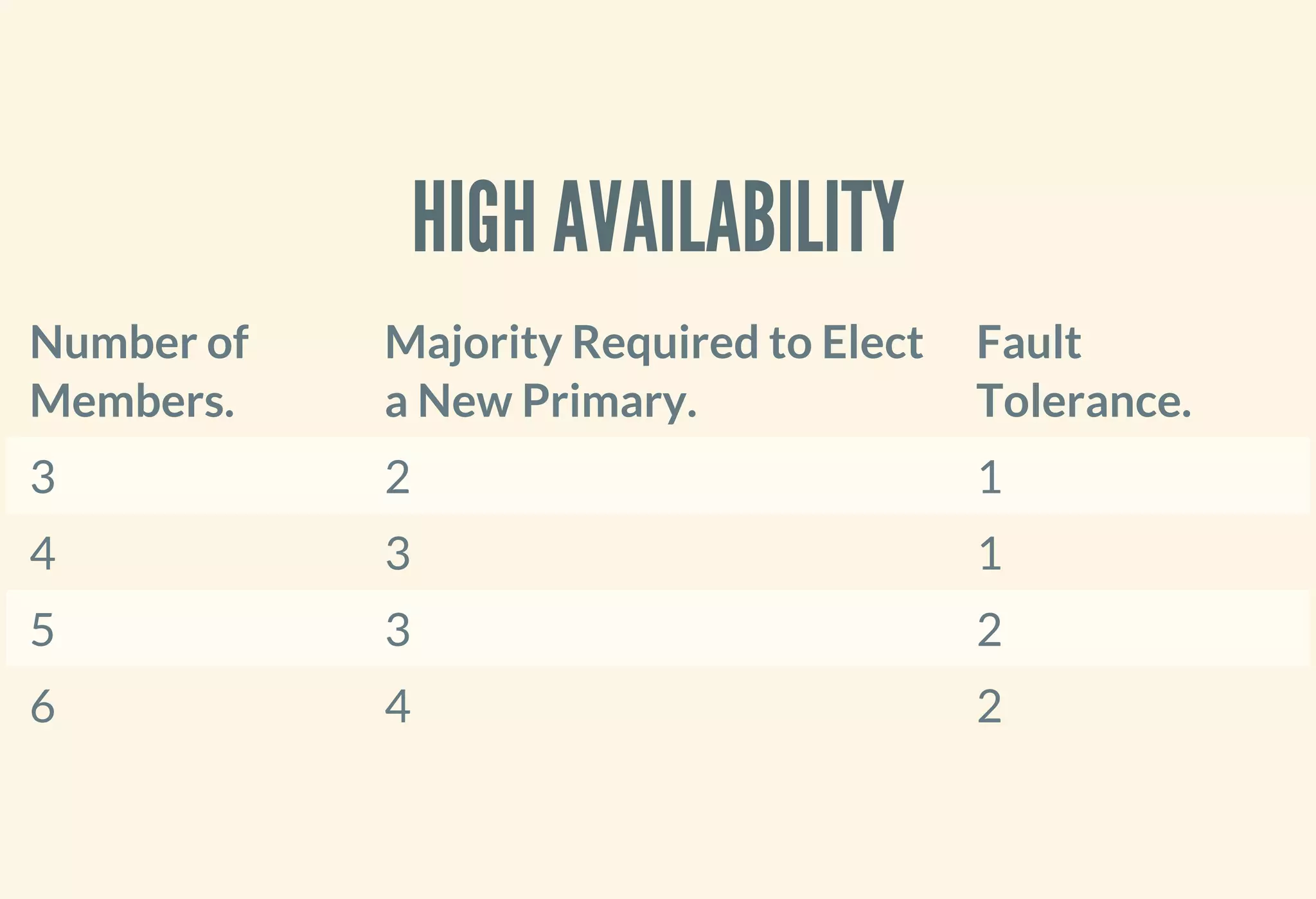

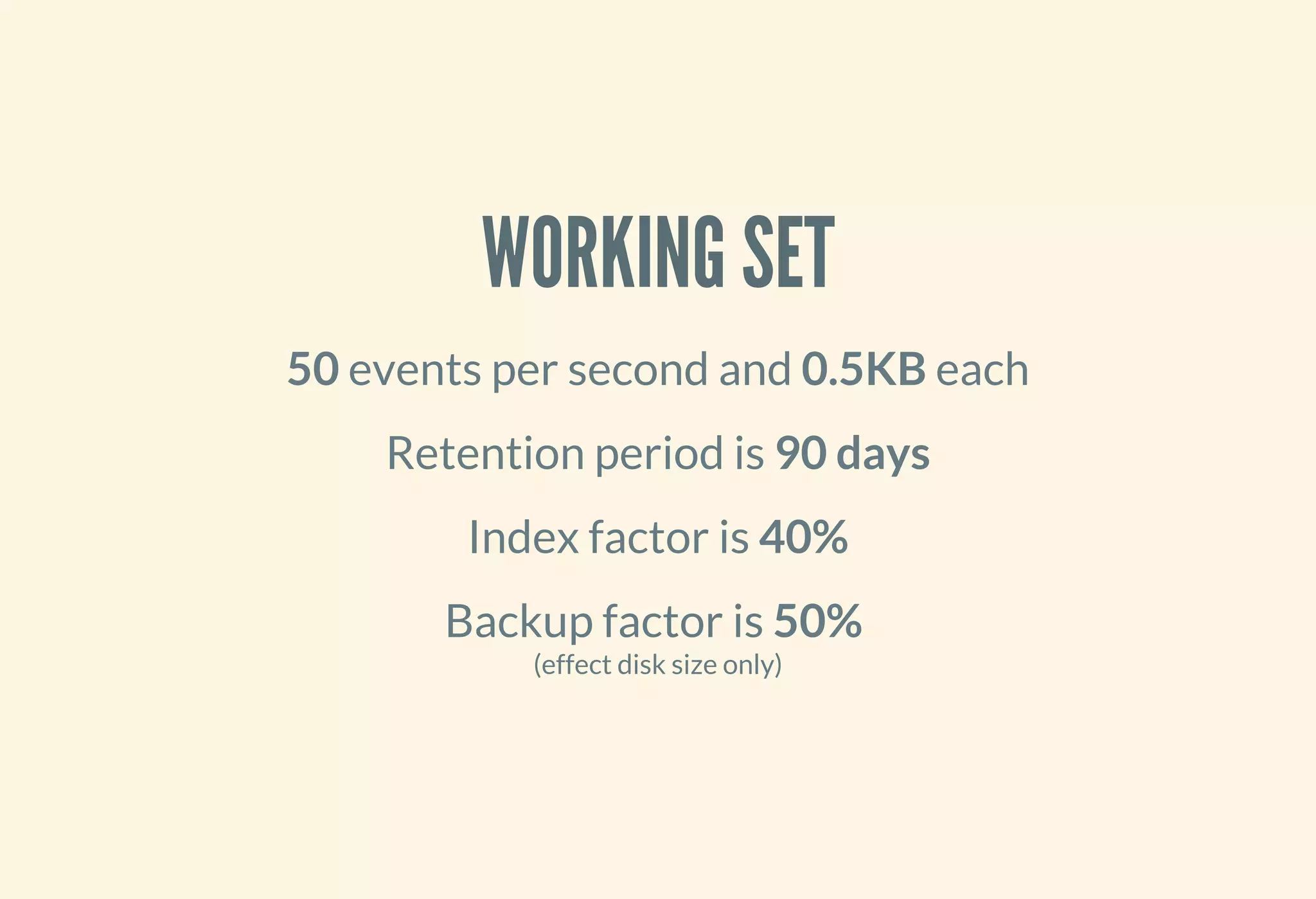

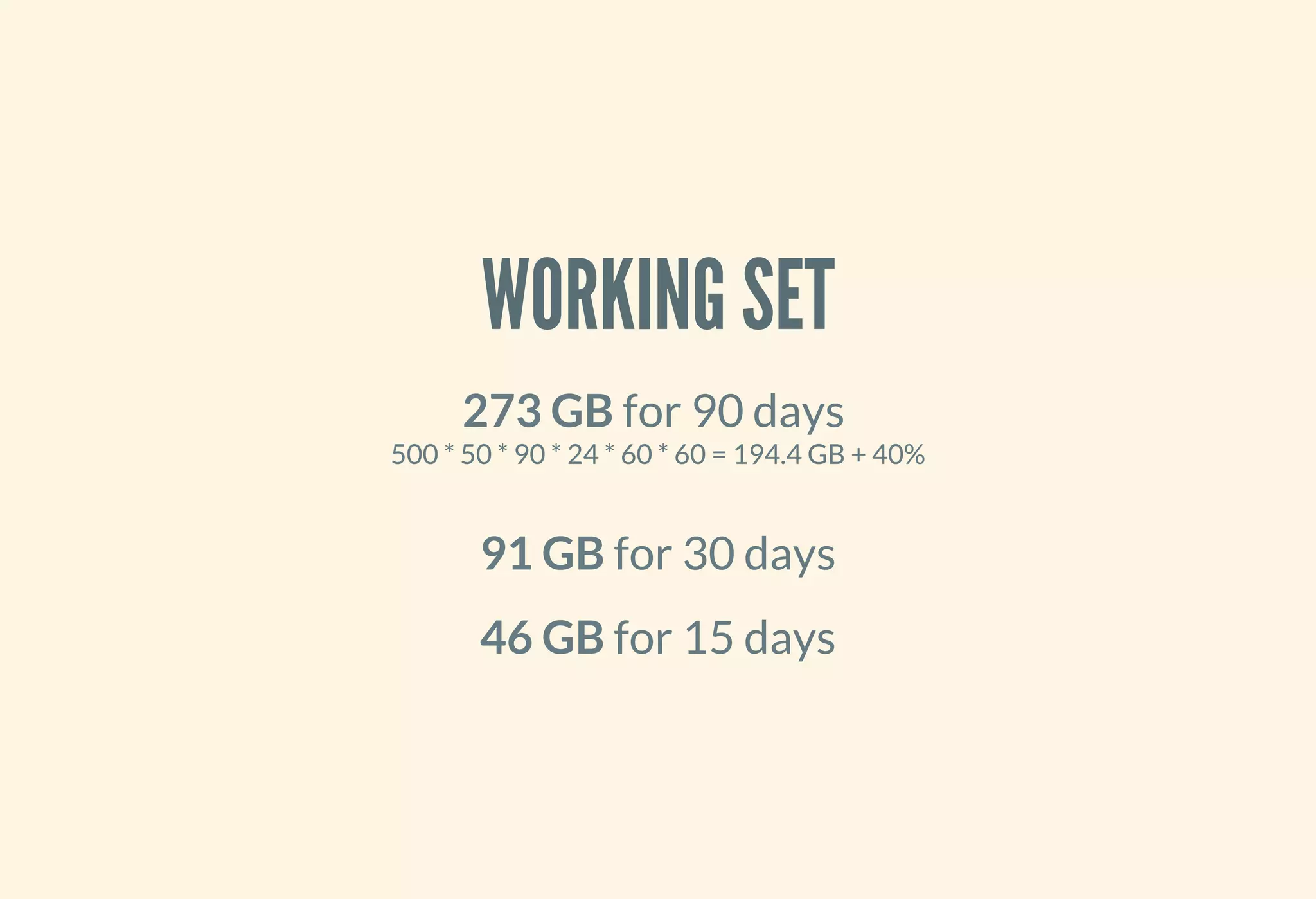

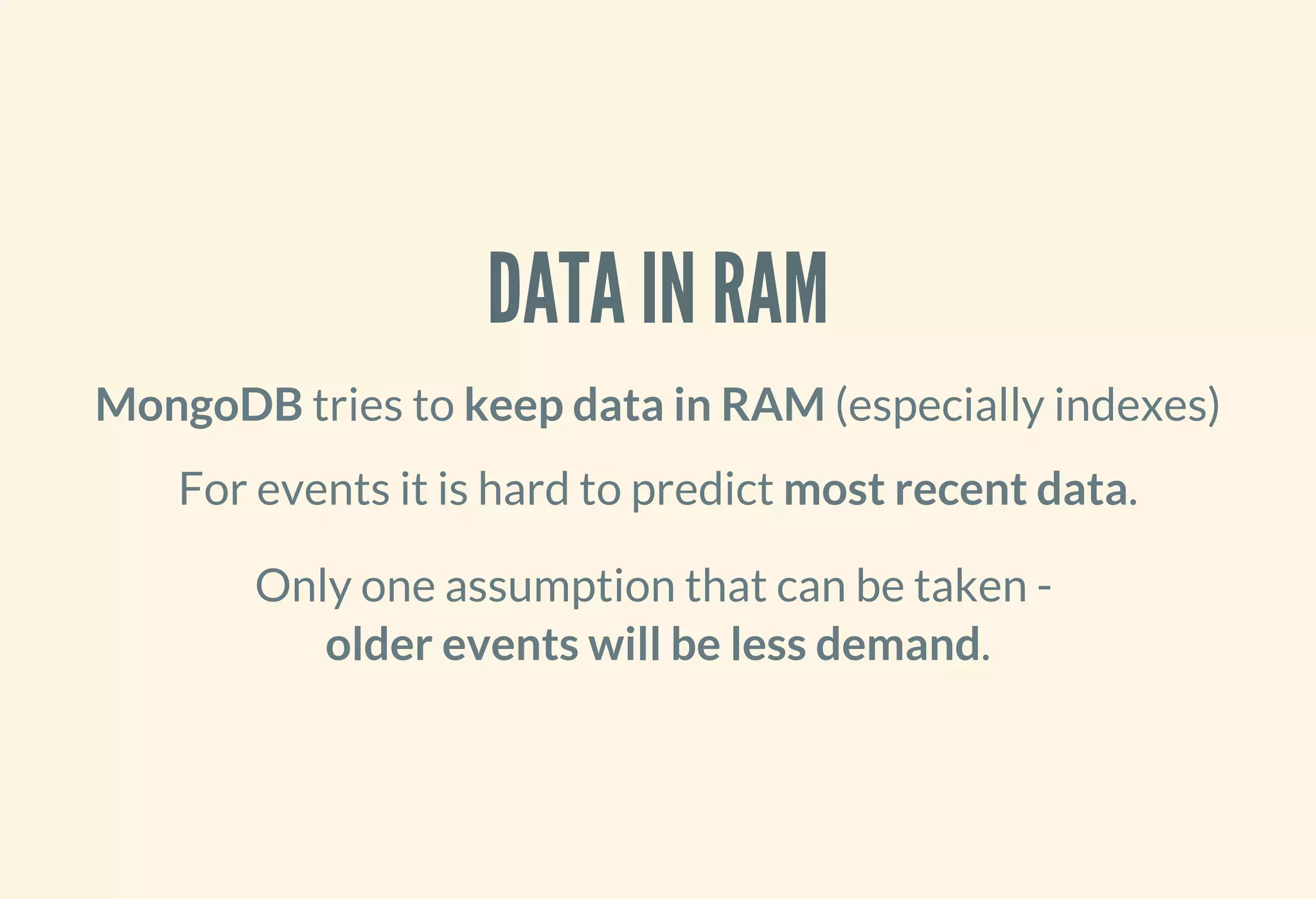

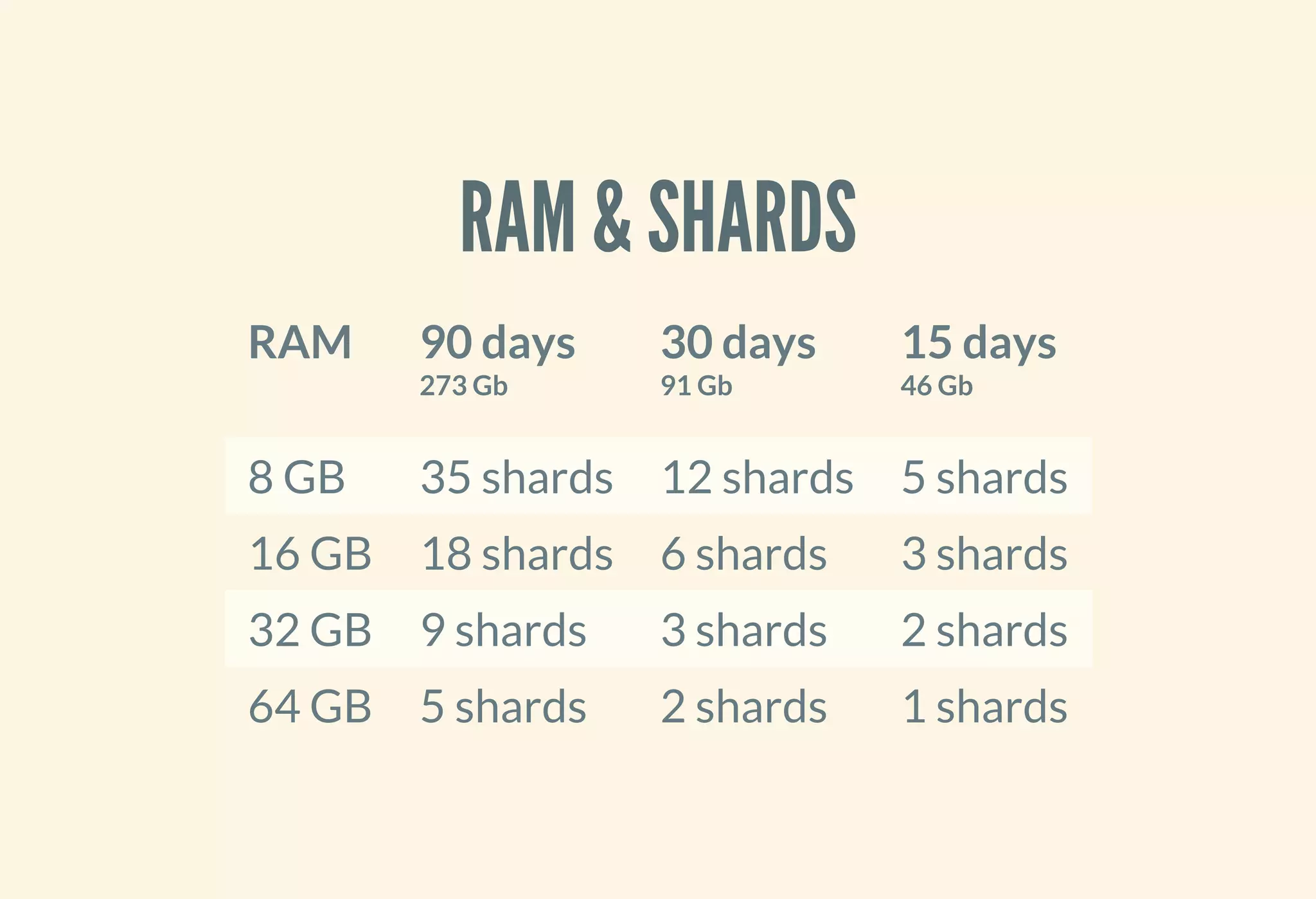

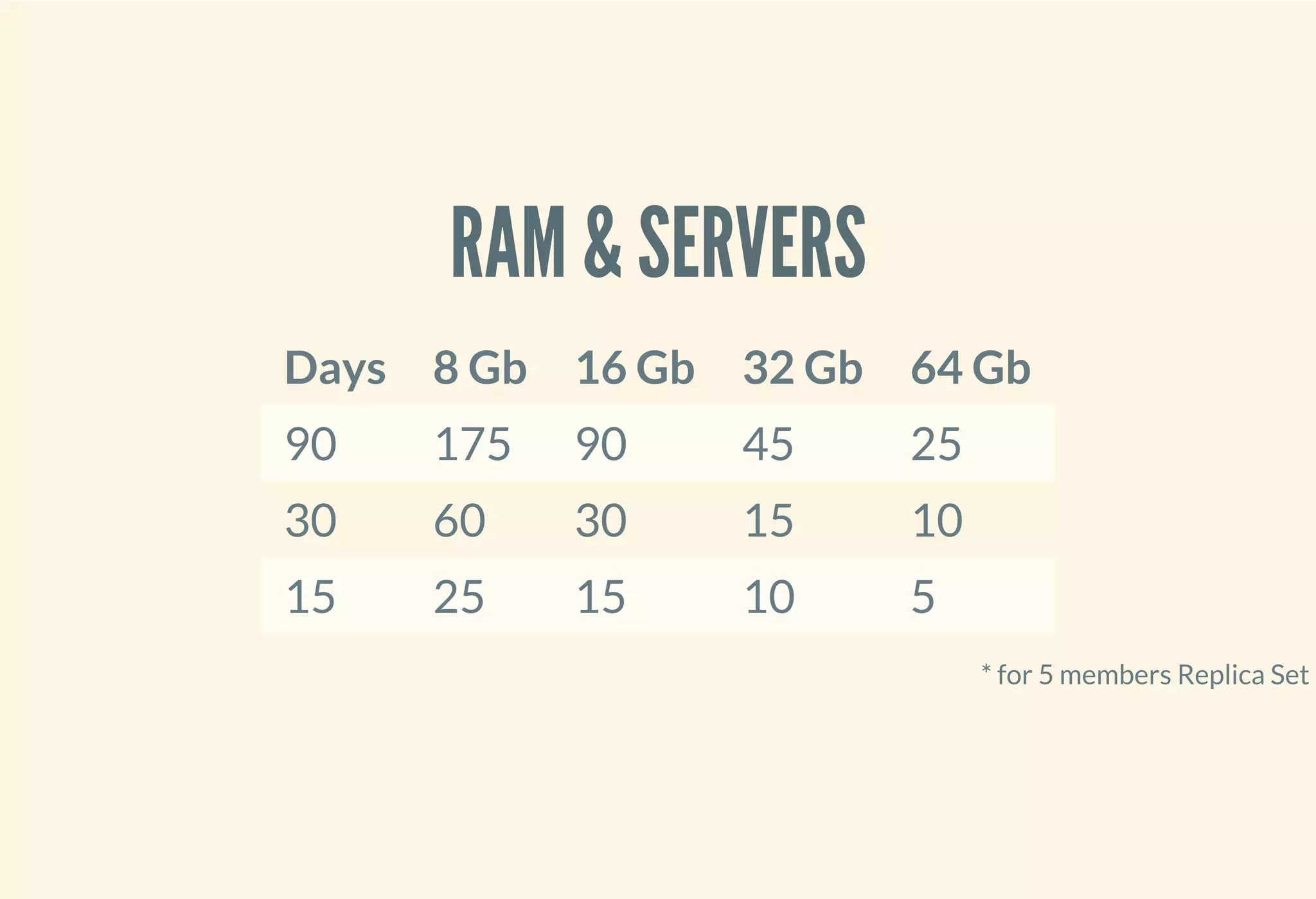

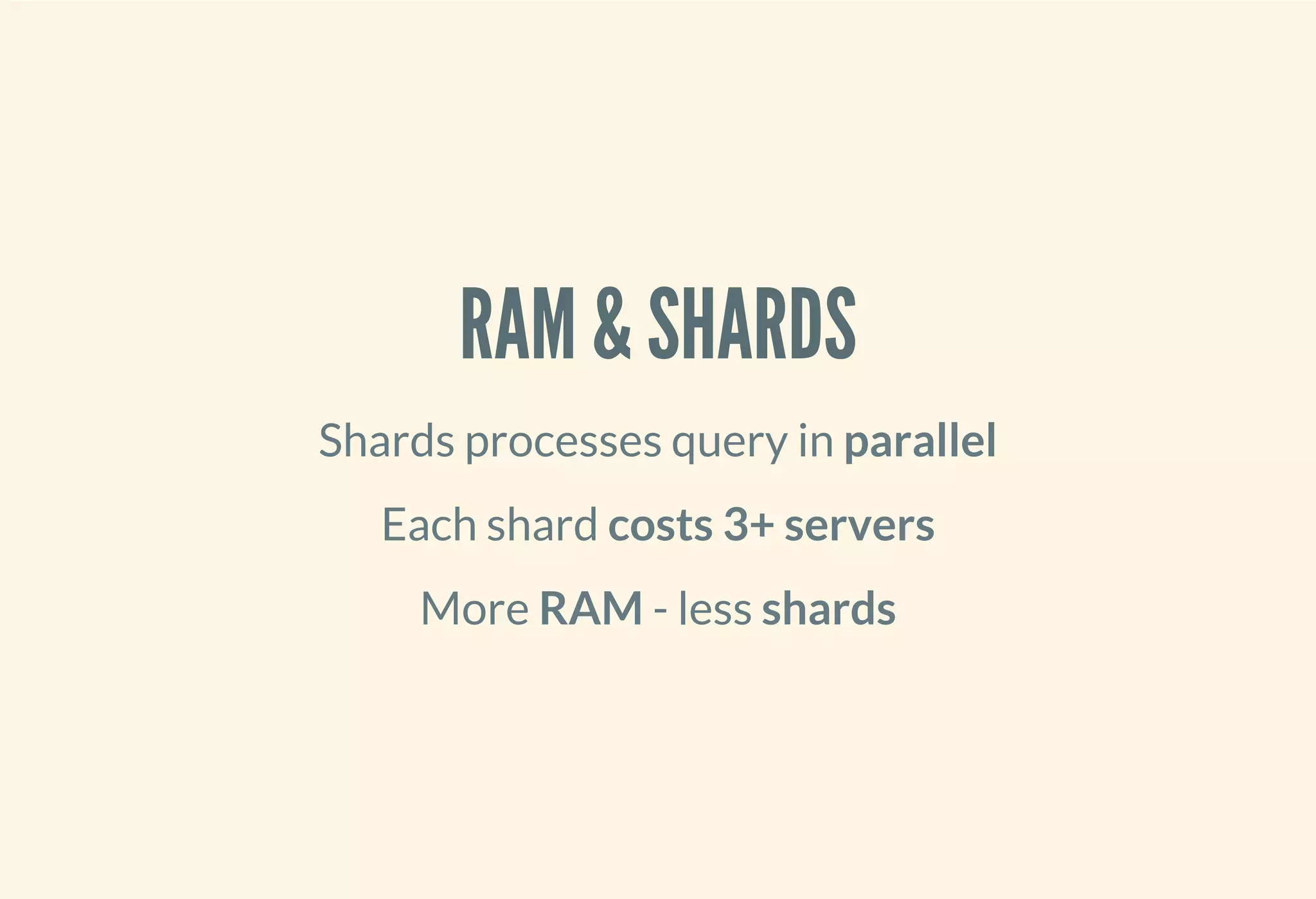

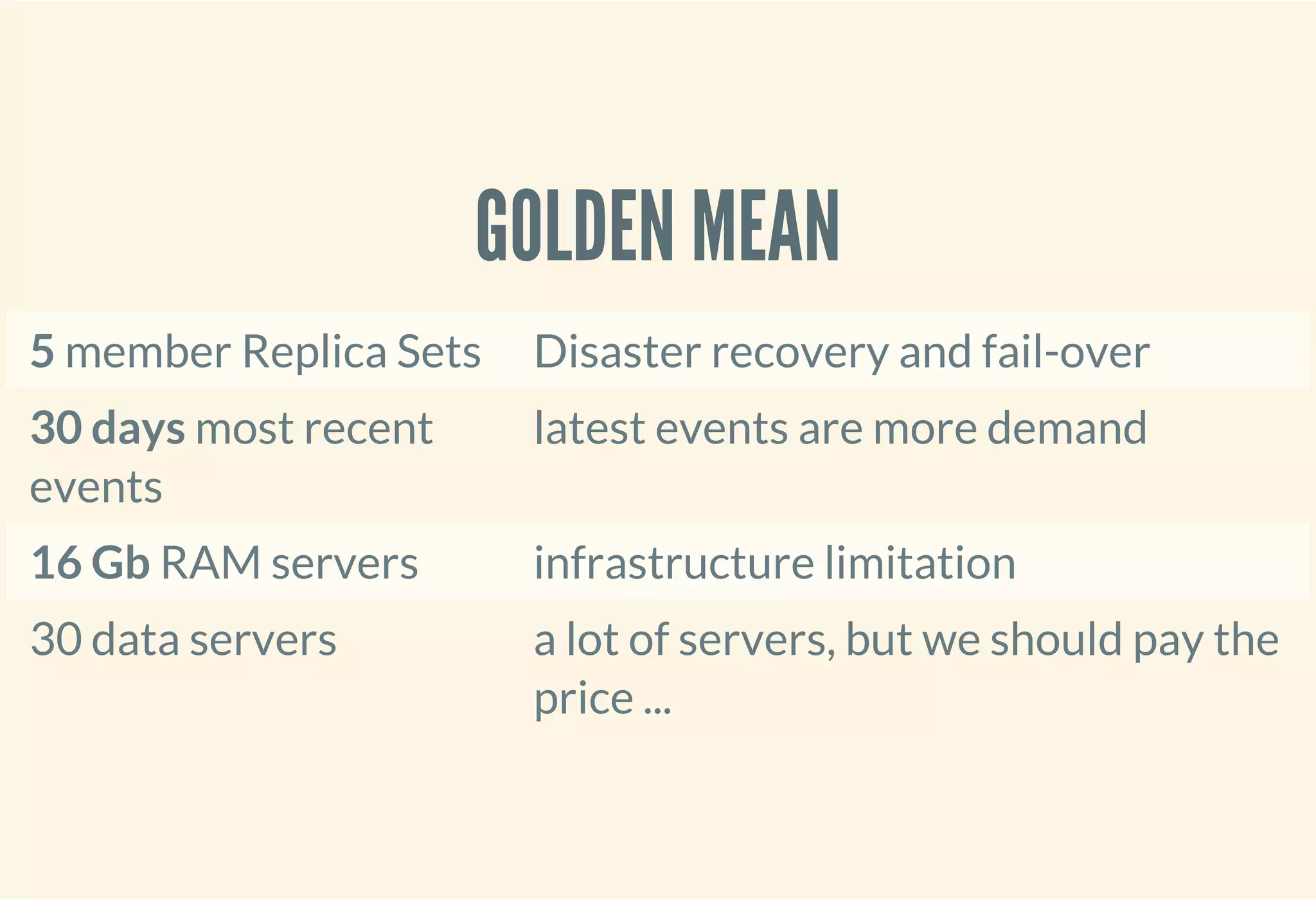

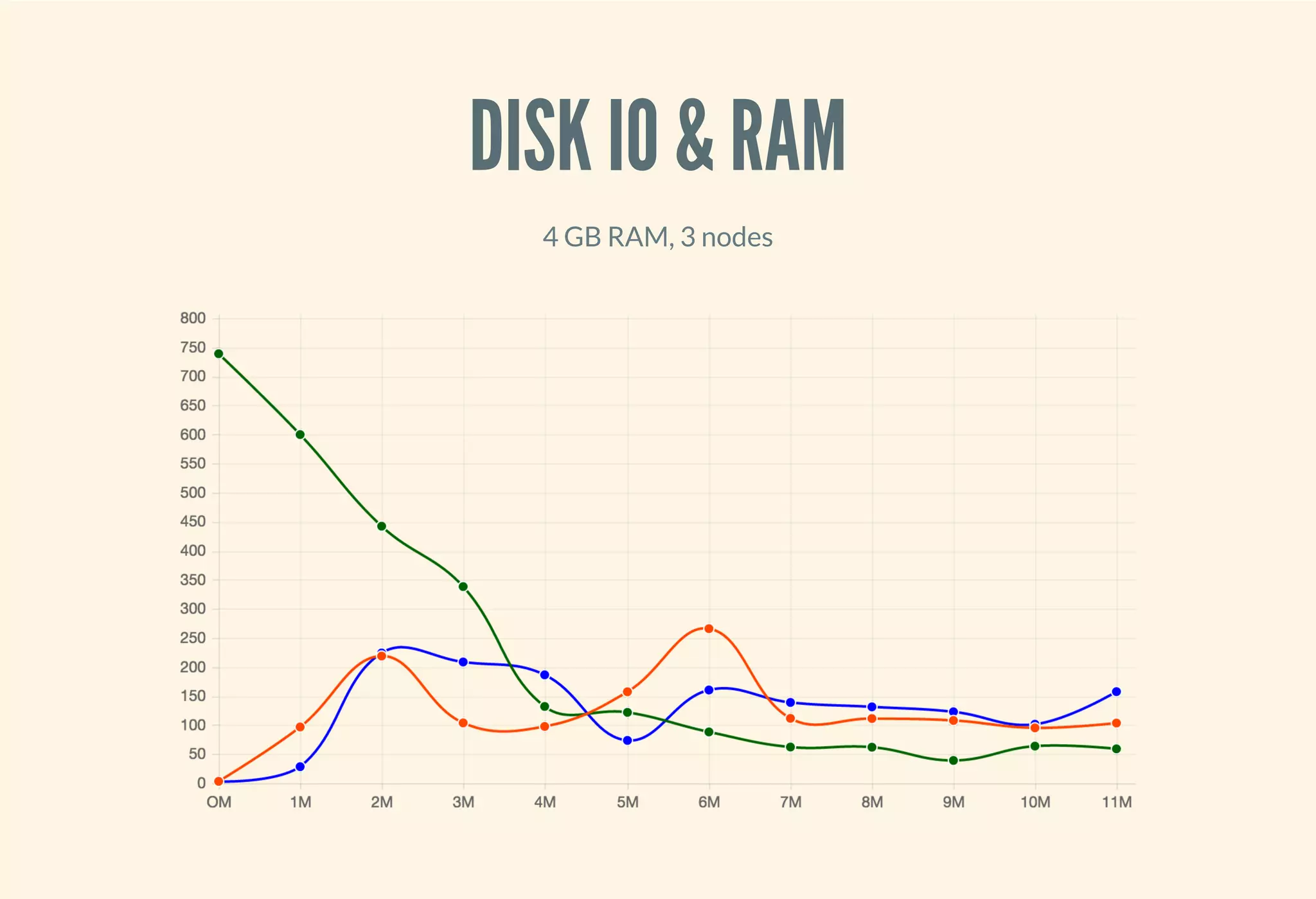

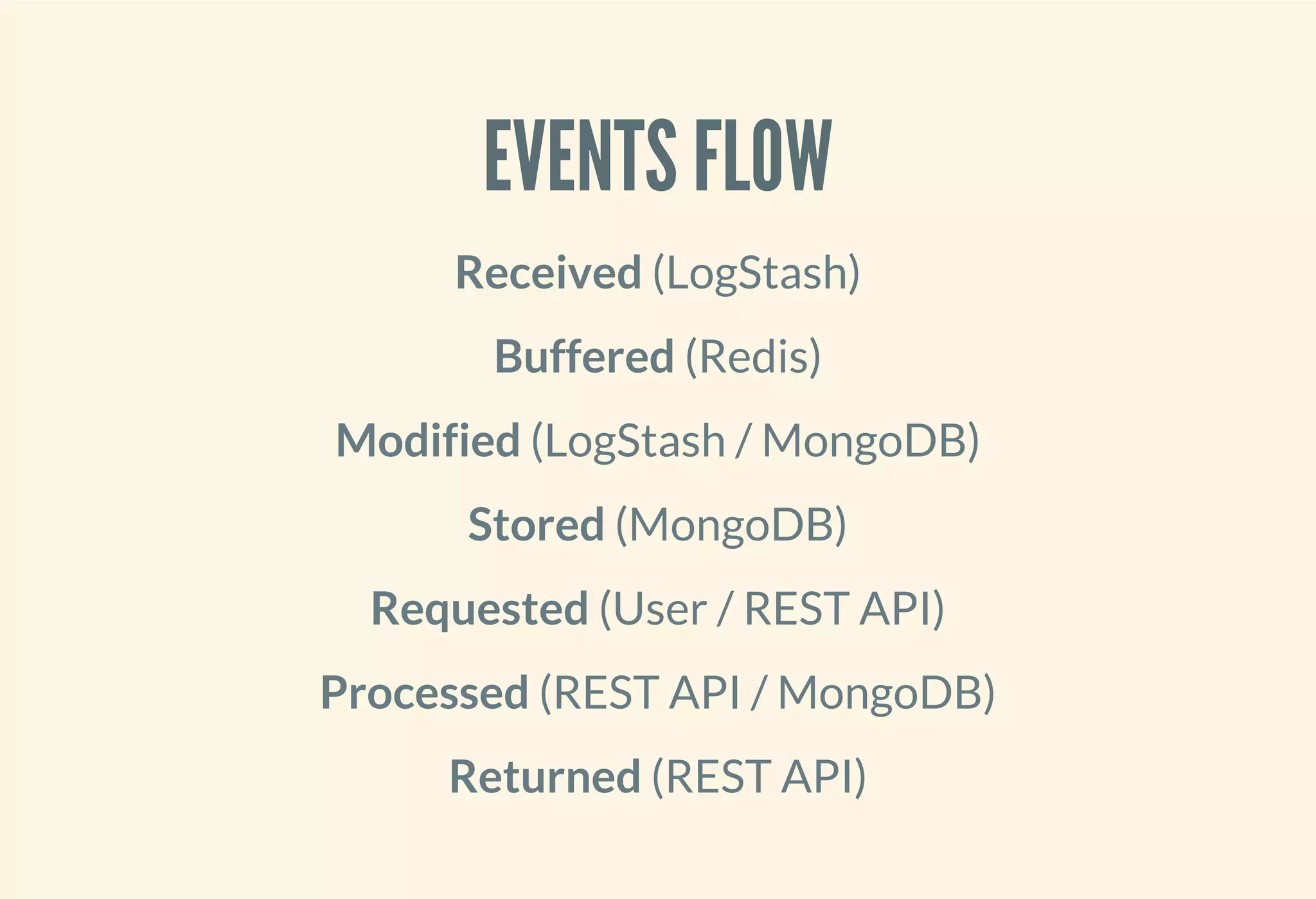

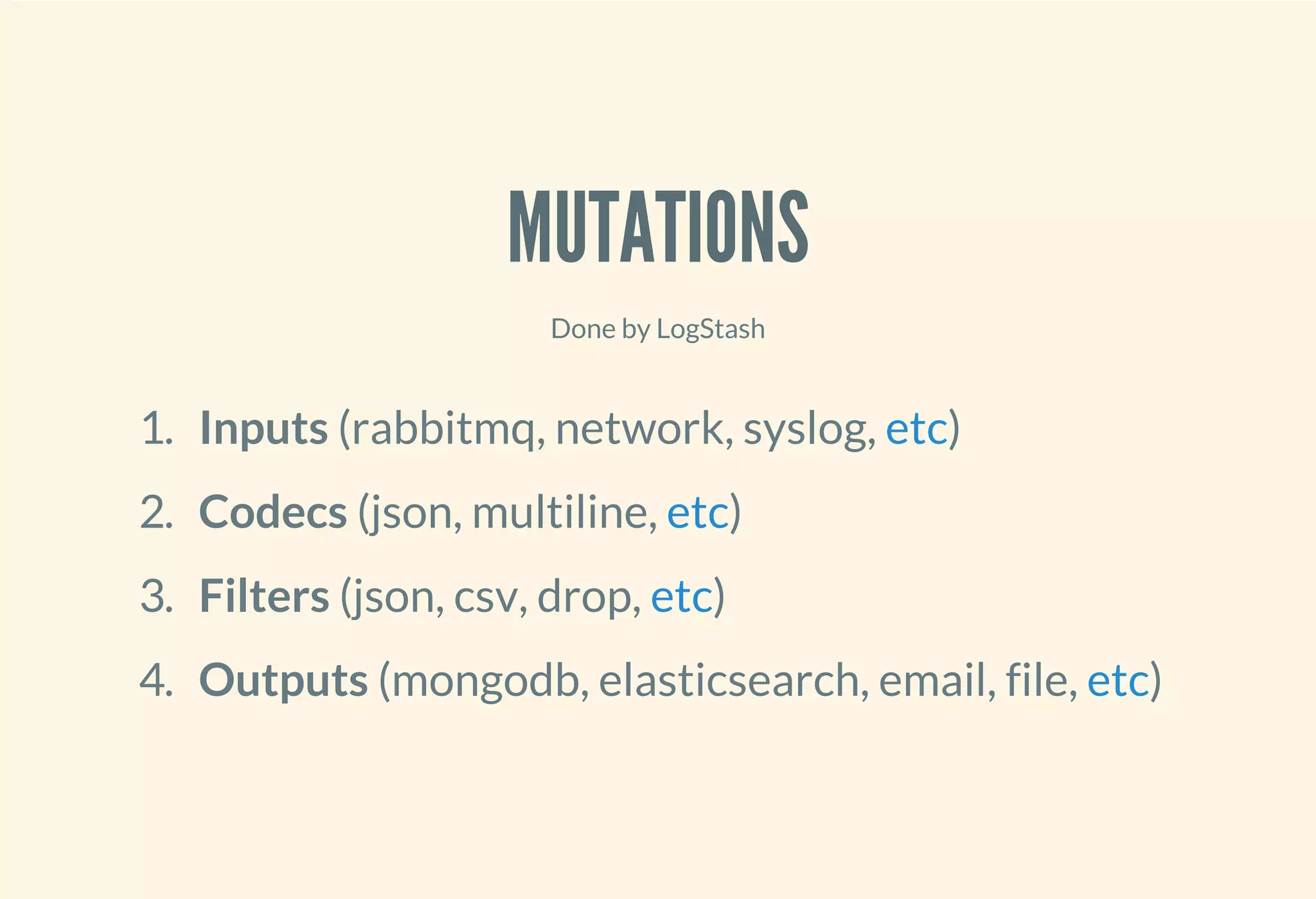

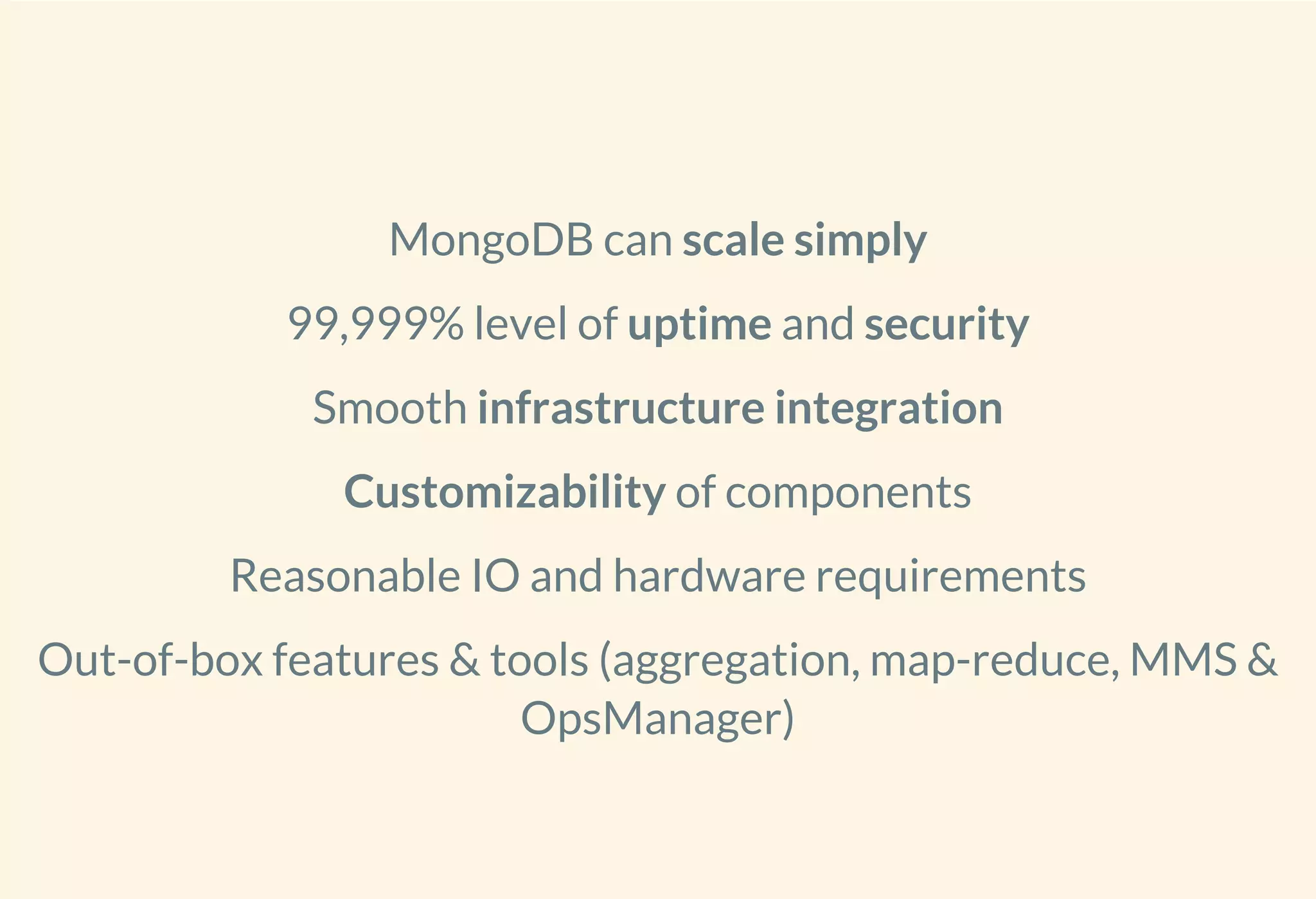

This document discusses using MongoDB to warehouse and aggregate events from different sources. It argues that MongoDB scales simply to handle large volumes of event data, provides 99.999% uptime, and integrates smoothly with other infrastructure components. It describes how MongoDB distributes data across multiple shards to improve performance, and how it can handle large event workloads over long retention periods cost-effectively on reasonable hardware. The document also compares MongoDB to Elasticsearch and gives an overview of how event data flows through the system, from ingestion to storage to retrieval.
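The flow summarized above (ingest an event, store it on one of several shards, then aggregate across shards on retrieval) can be illustrated with a small in-memory sketch. This is a toy model only: it does not use MongoDB or reproduce its actual hashing, chunking, or balancing, and the names `ToyCluster`, `shard_for`, and the `source` field are hypothetical choices made for illustration.

```python
import hashlib
from collections import Counter

NUM_SHARDS = 4  # hypothetical cluster size, chosen for illustration


def shard_for(shard_key: str, num_shards: int = NUM_SHARDS) -> int:
    """Map a shard-key value to a shard index using a stable hash."""
    digest = hashlib.sha256(shard_key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


class ToyCluster:
    """In-memory stand-in for a sharded event store (not real MongoDB)."""

    def __init__(self, num_shards: int = NUM_SHARDS):
        # Each shard is just a list of event dictionaries.
        self.shards = [[] for _ in range(num_shards)]

    def ingest(self, event: dict) -> int:
        """Store the event on the shard chosen by its 'source' field."""
        idx = shard_for(event["source"], len(self.shards))
        self.shards[idx].append(event)
        return idx

    def count_by_source(self) -> Counter:
        """Scatter-gather: count per shard, then merge the partial counts."""
        total = Counter()
        for shard in self.shards:
            total.update(Counter(e["source"] for e in shard))
        return total


if __name__ == "__main__":
    cluster = ToyCluster()
    for ev in [
        {"source": "web", "type": "login"},
        {"source": "web", "type": "click"},
        {"source": "syslog", "type": "error"},
    ]:
        cluster.ingest(ev)
    print(cluster.count_by_source())
```

Because the hash is deterministic, every event from the same source lands on the same shard, so a per-source count can be computed on each shard independently and merged afterwards. In a real deployment a key with so few distinct values would distribute data poorly; a higher-cardinality key would normally be preferred.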