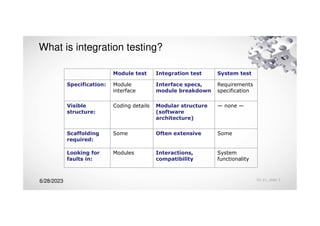

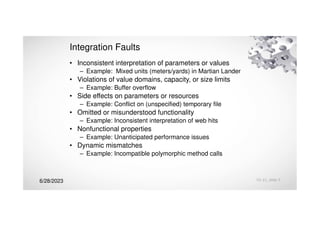

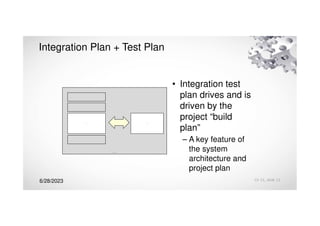

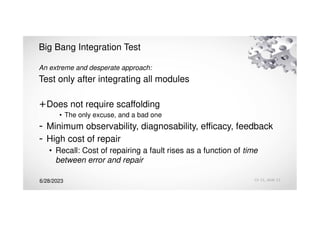

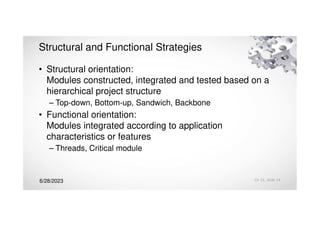

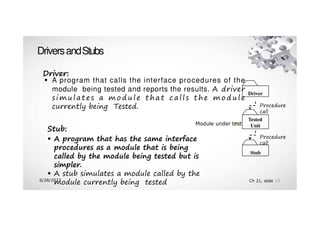

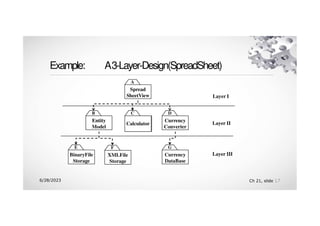

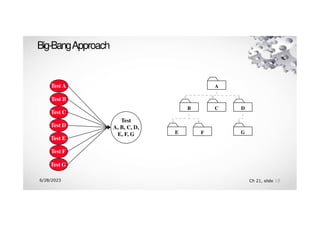

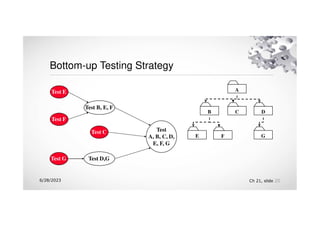

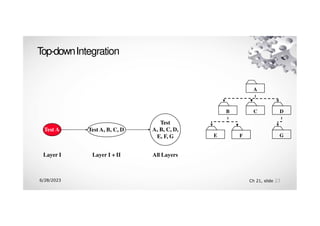

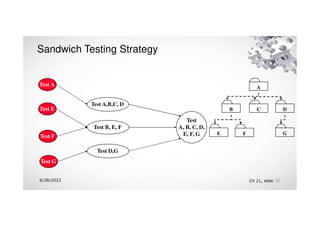

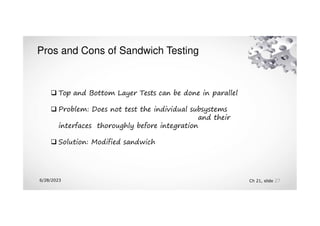

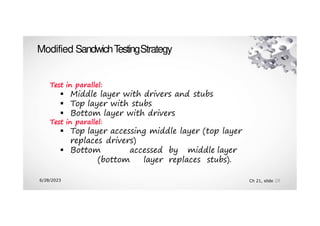

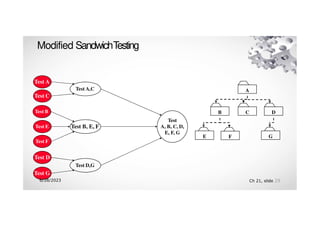

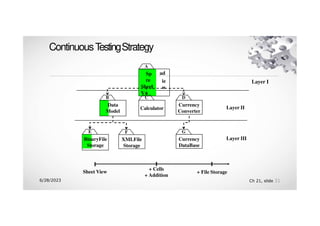

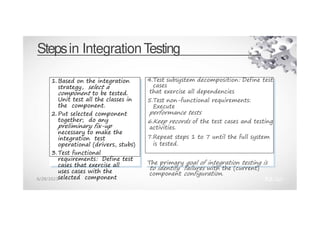

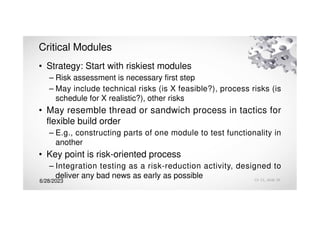

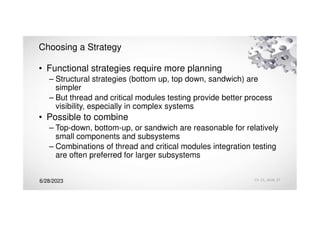

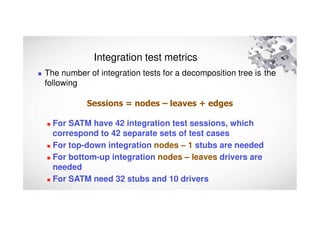

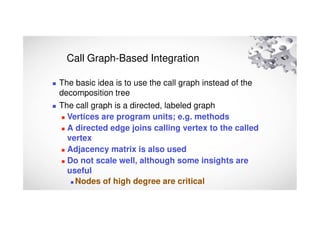

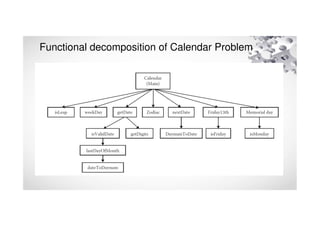

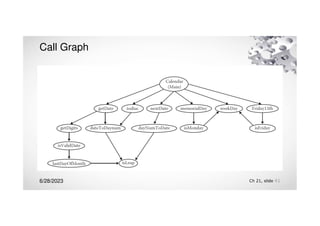

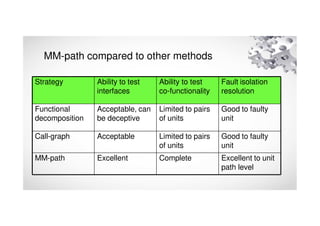

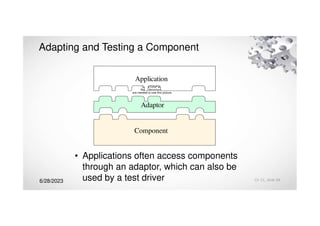

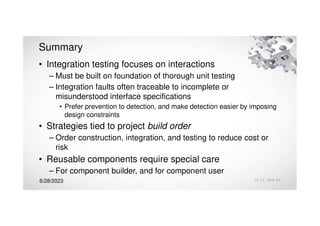

The document discusses integration testing strategies and the challenges of testing component-based systems. It describes several integration approaches: the incremental strategies (top-down, bottom-up, and sandwich) and the non-incremental big-bang approach. Component-based integration poses special challenges because components depend on one another. Thread-based integration and critical-module prioritization are recommended to increase visibility and reduce risk. Metrics such as the number of integration test sessions can quantify the effort required by different decomposition strategies.
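To make the session-count metric concrete, the following minimal sketch derives a bottom-up integration order from a call graph and compares session counts across strategies. The component names and the `CALLS` graph are hypothetical. The counting convention (one session for big-bang, one per call edge for pairwise integration, and nodes minus leaves plus edges for incremental integration) is an assumption chosen for illustration, not something the slides confirm.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical call graph: each component maps to the components it calls.
CALLS = {
    "App": ["Orders", "Billing"],
    "Orders": ["Inventory", "Db"],
    "Billing": ["Db"],
    "Inventory": [],
    "Db": [],
}


def bottom_up_order(calls):
    """Return an order in which every callee is integrated before its callers."""
    # TopologicalSorter expects node -> predecessors; here the callees of a
    # component are treated as its predecessors, so they are emitted first.
    return list(TopologicalSorter(calls).static_order())


def session_counts(calls):
    """Count integration test sessions under the assumed convention."""
    nodes = len(calls)
    leaves = sum(1 for callees in calls.values() if not callees)
    edges = sum(len(callees) for callees in calls.values())
    return {
        "big_bang": 1,                          # everything integrated at once
        "pairwise": edges,                      # one session per caller/callee pair
        "incremental": nodes - leaves + edges,  # assumed top-down/bottom-up count
    }


if __name__ == "__main__":
    print("bottom-up order:", bottom_up_order(CALLS))
    print("session counts:", session_counts(CALLS))
```

Under this convention, big-bang integration looks cheapest by session count alone, which is why the metric is best read alongside the risk and visibility concerns noted above rather than on its own.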