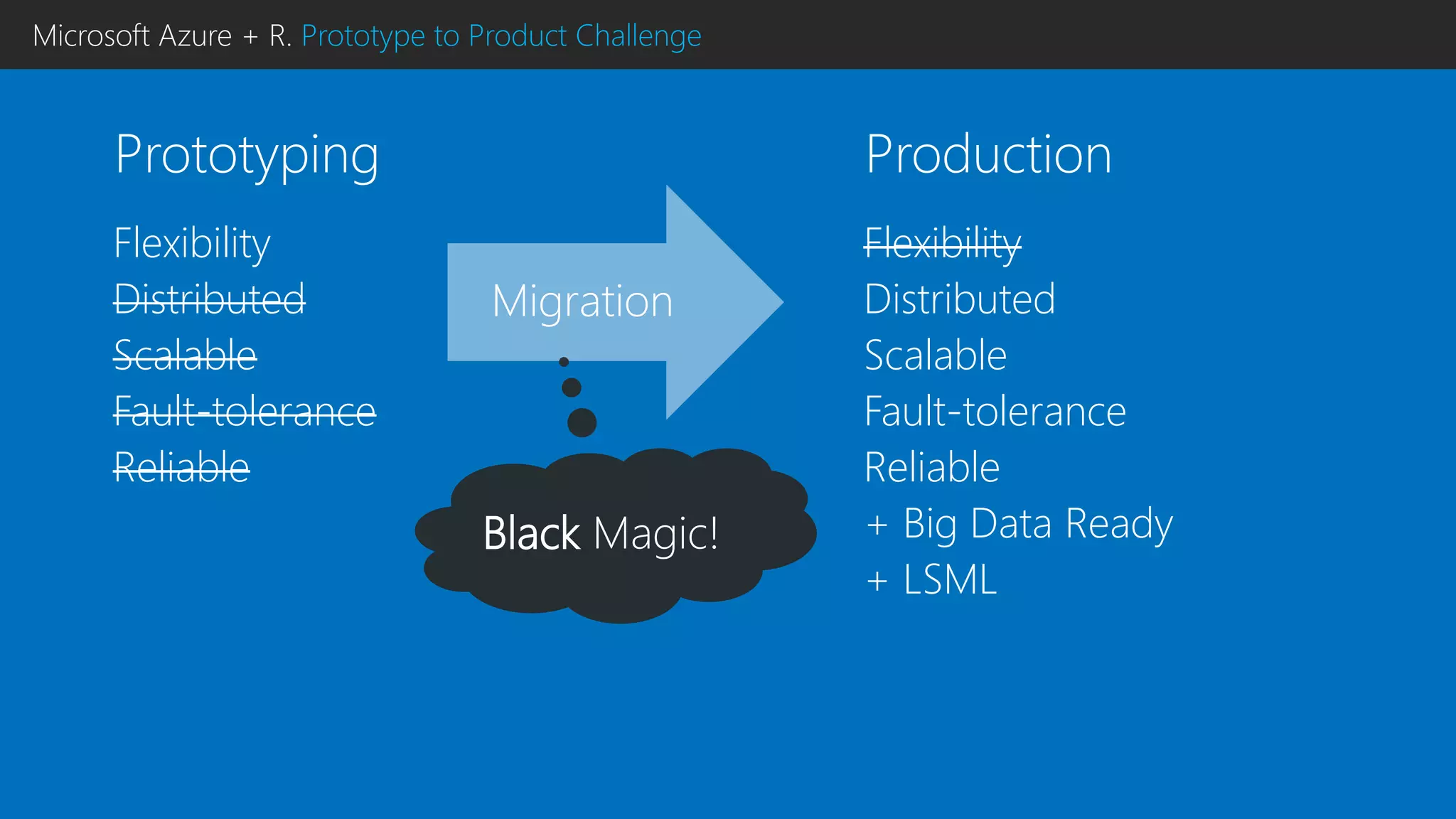

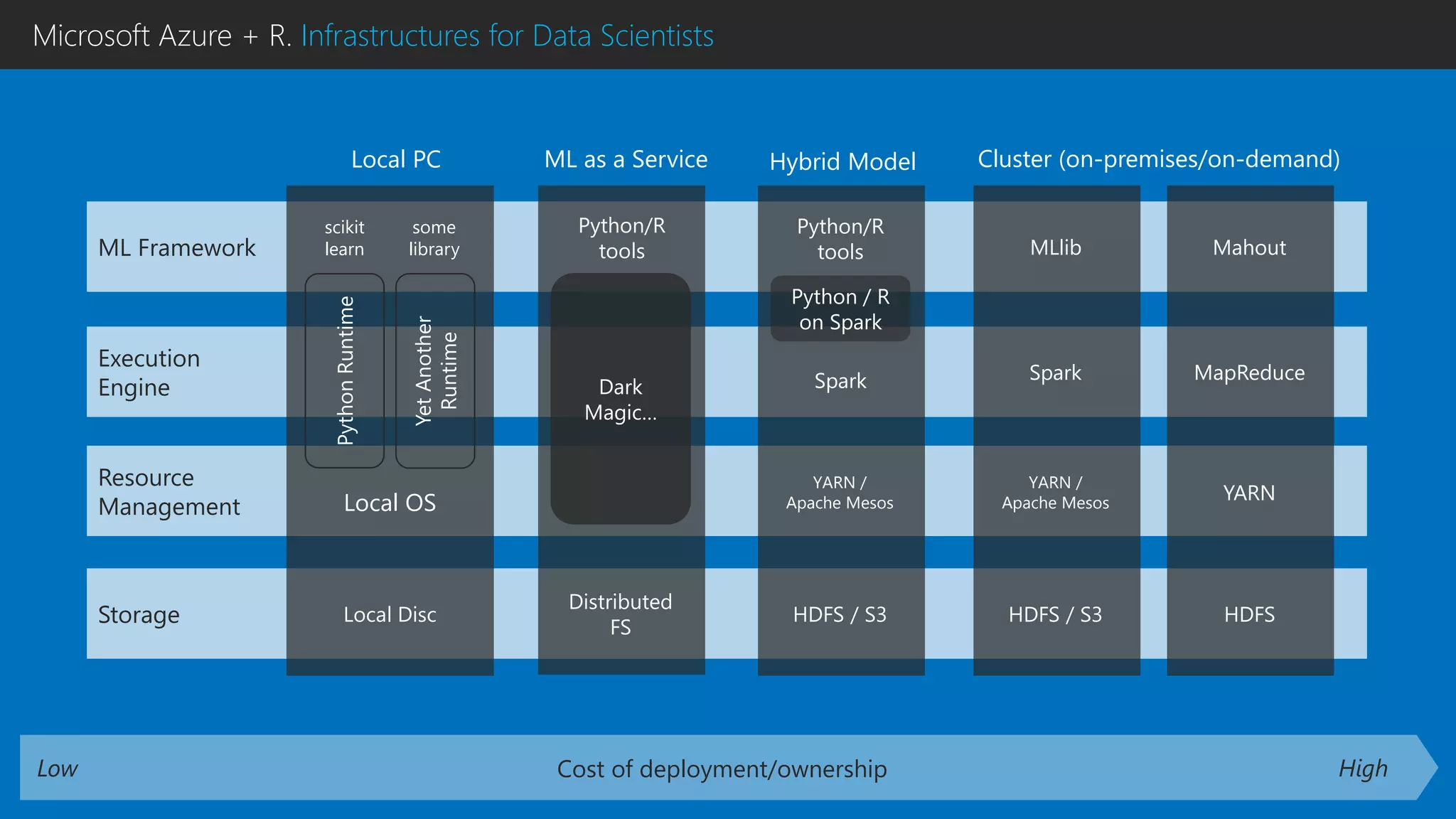

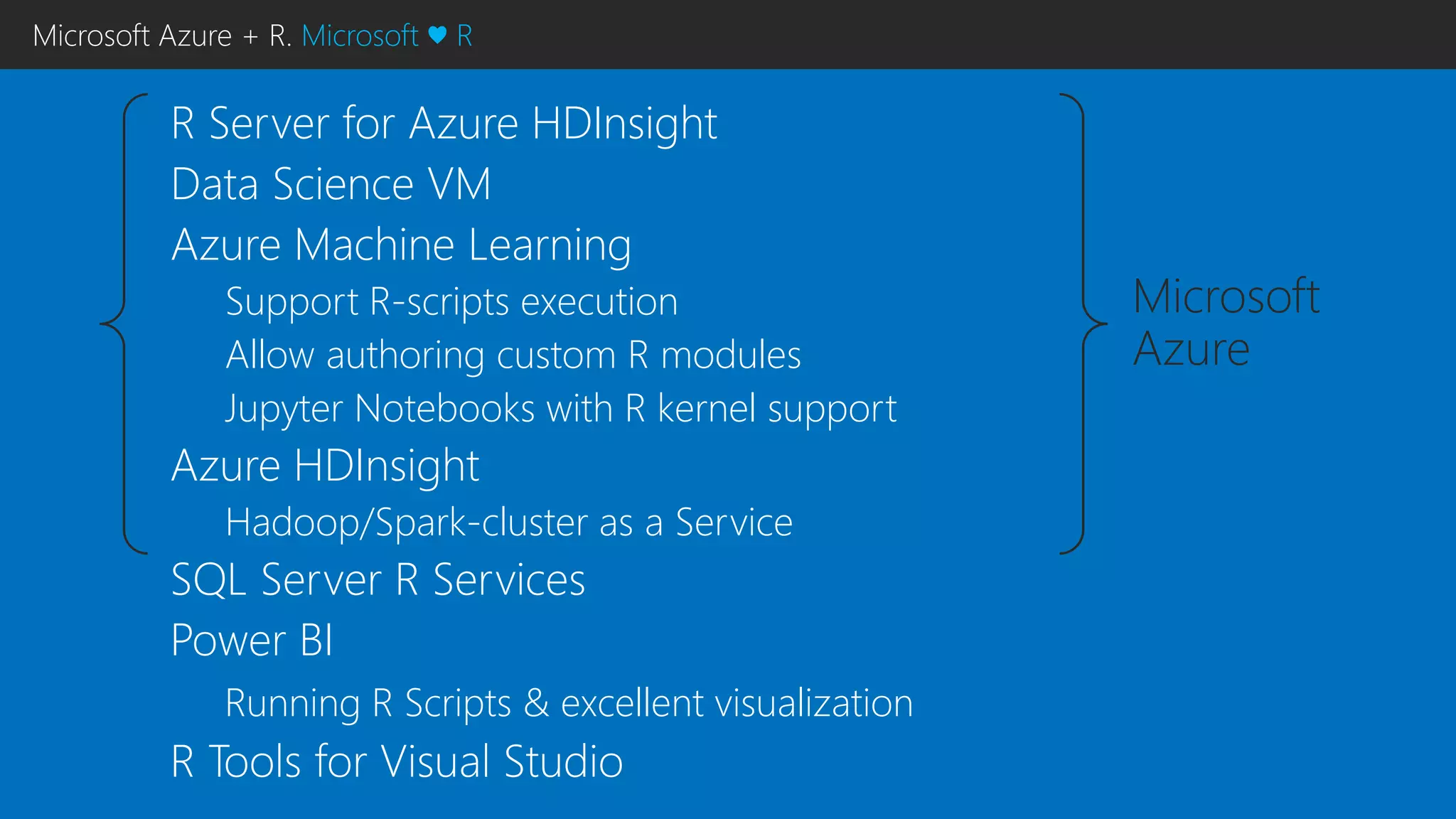

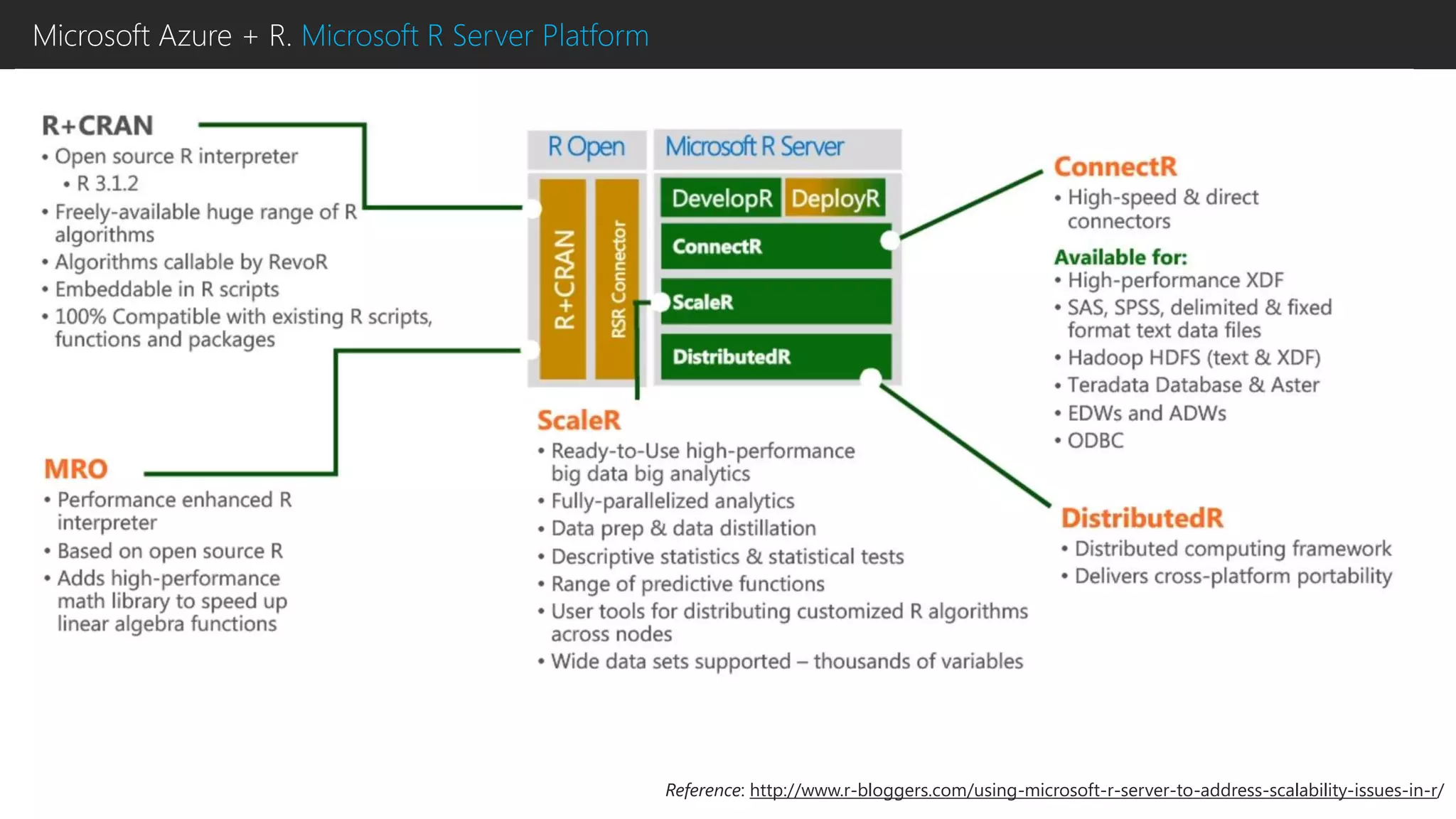

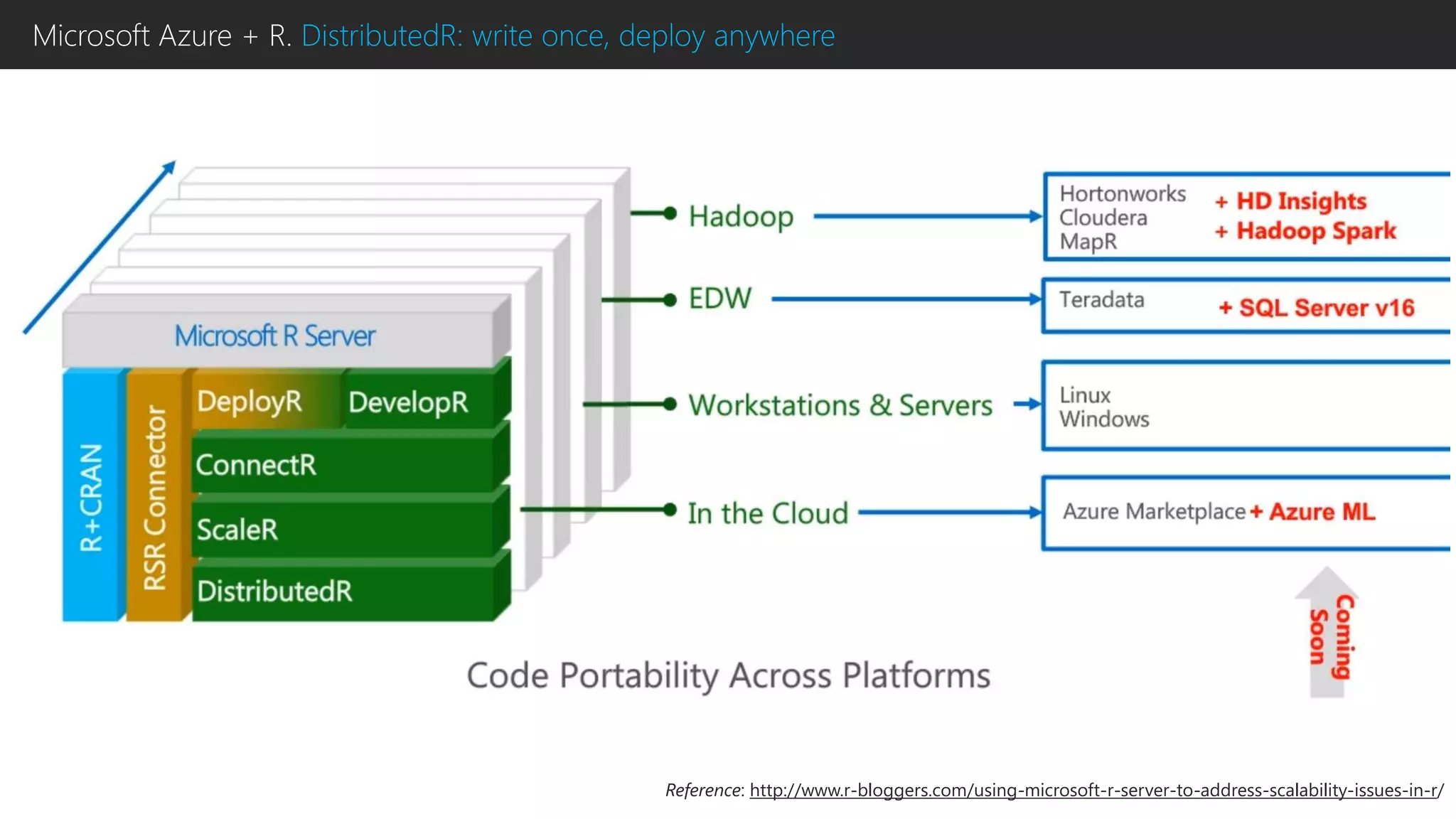

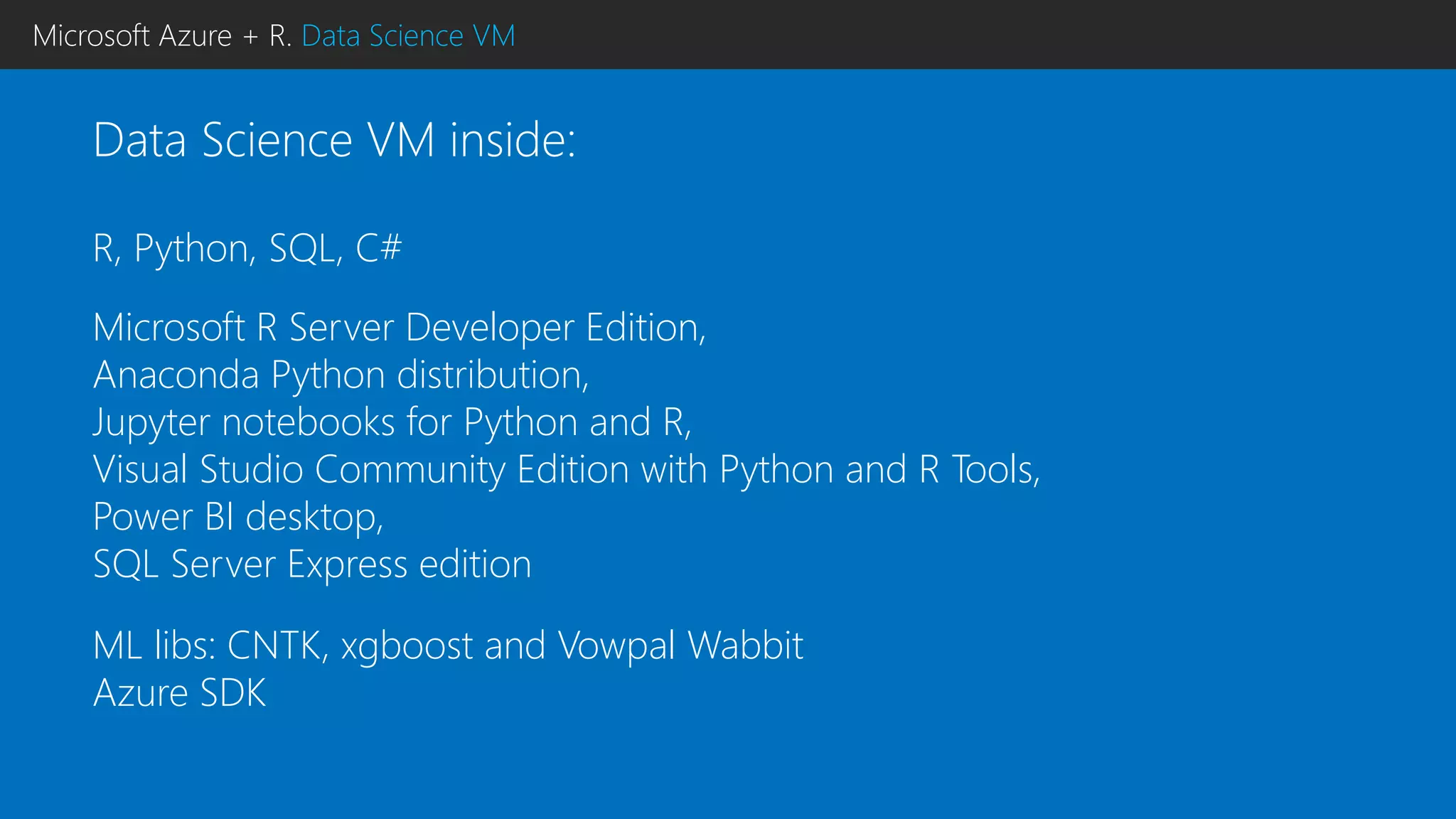

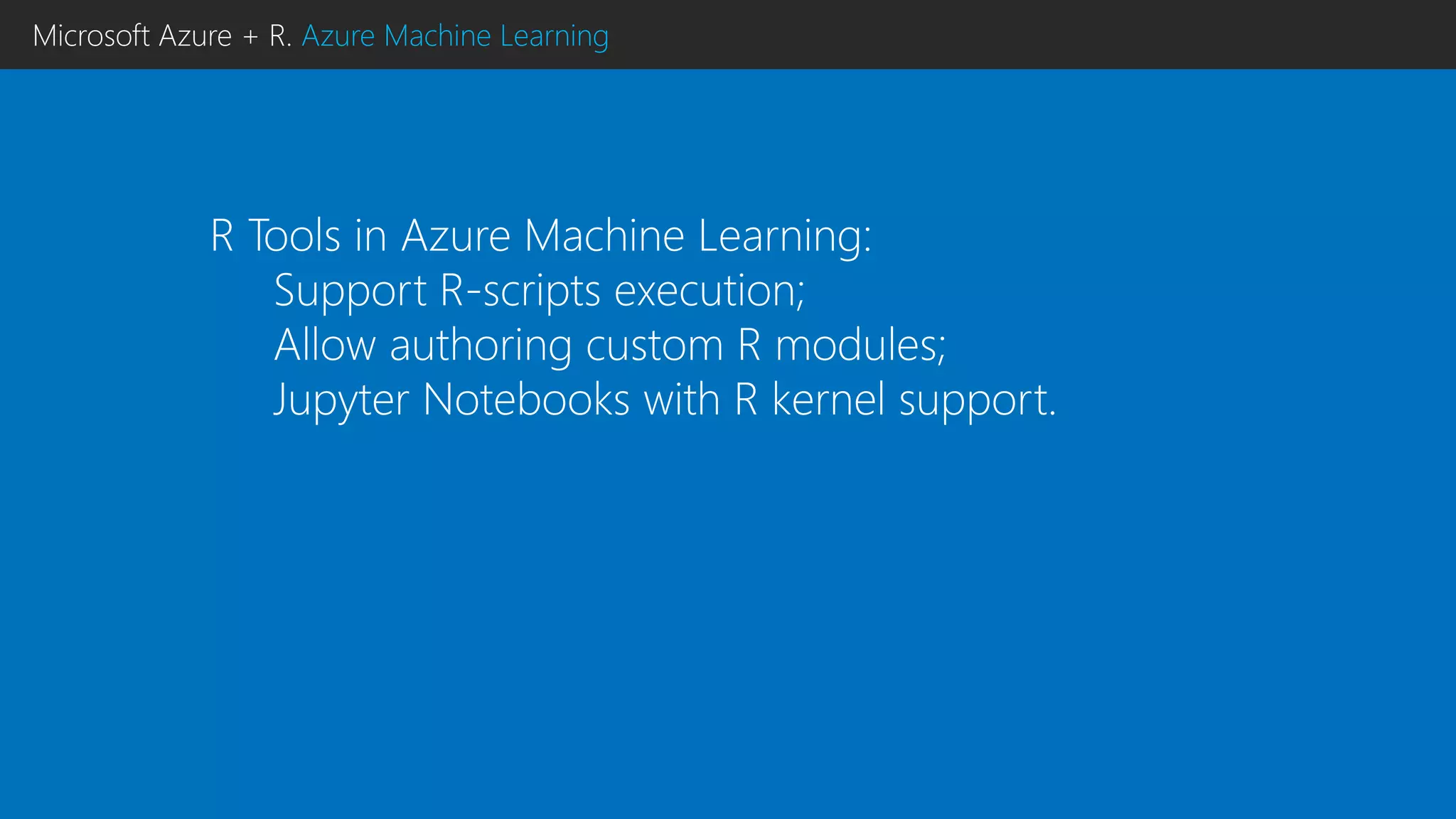

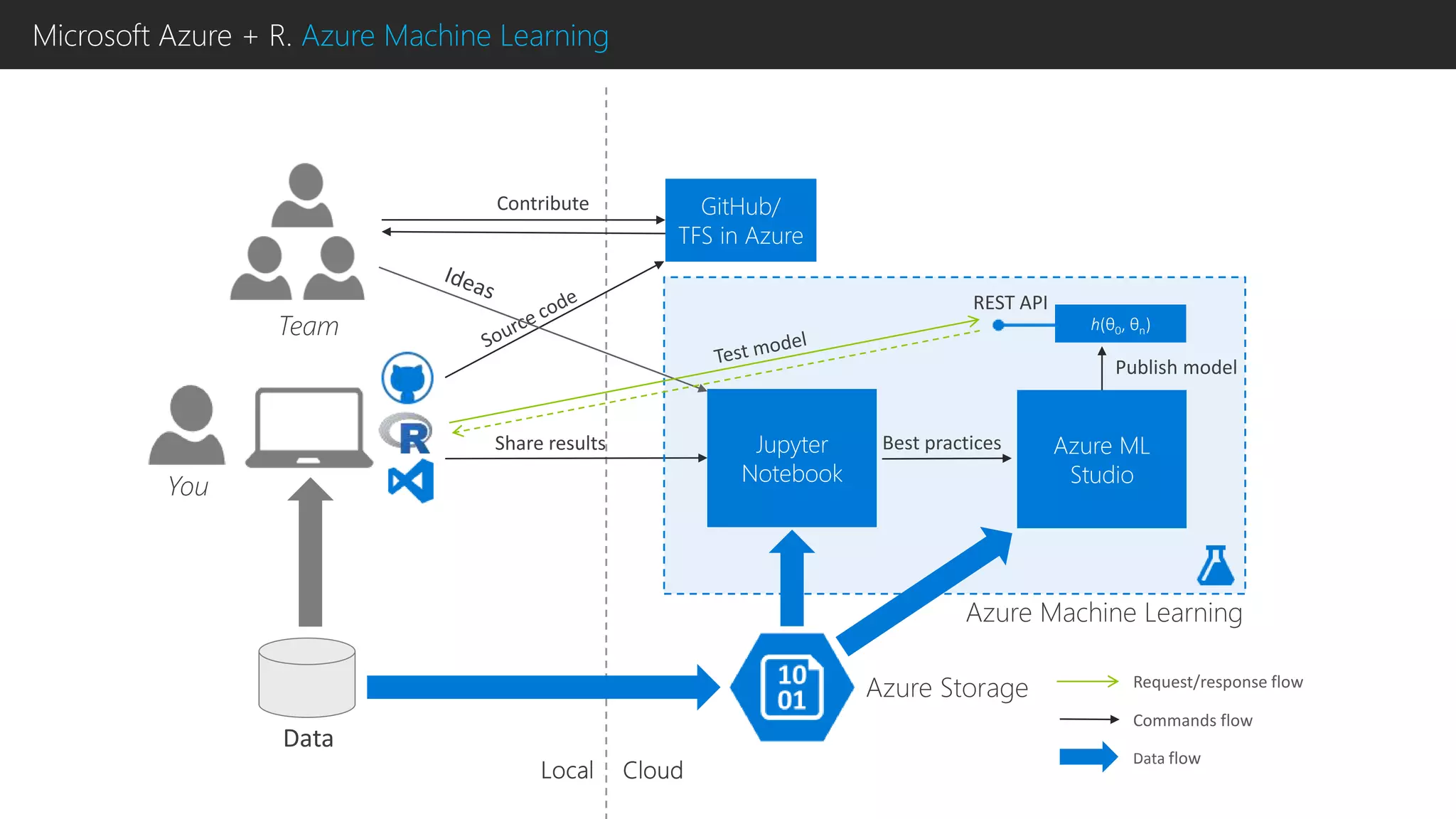

This document discusses using R with Microsoft Azure. It begins by outlining how Azure's scalability, reliability, and fault tolerance help when moving models from prototyping to production. It then highlights several Azure services that support R: HDInsight clusters, the Data Science Virtual Machine (DSVM), Azure Machine Learning, and SQL Server R Services. References are also provided for learning more about using R with Azure Machine Learning and with DistributedR.