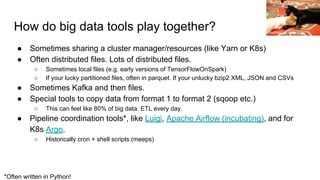

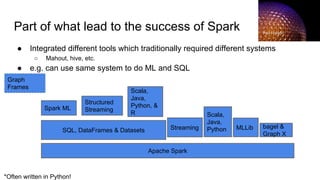

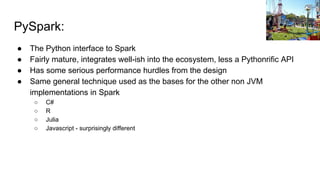

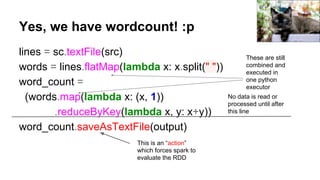

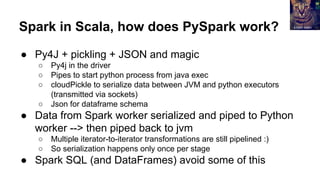

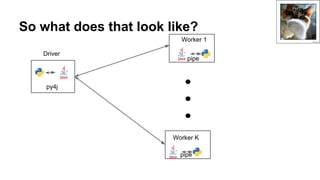

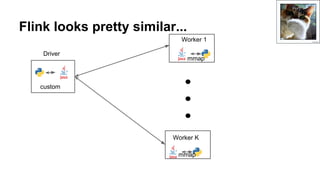

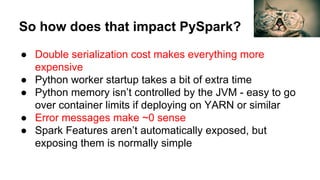

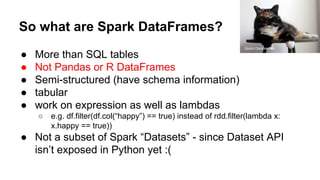

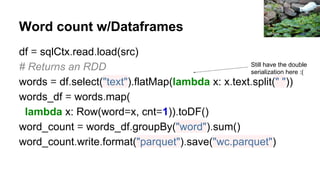

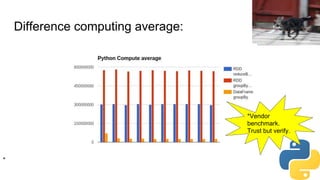

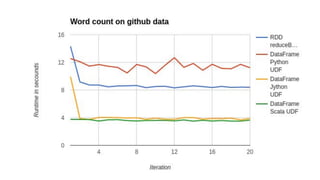

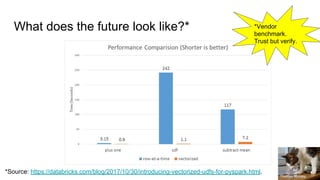

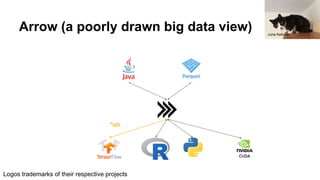

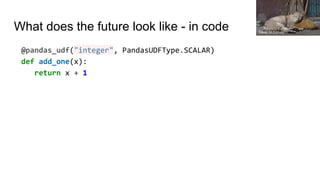

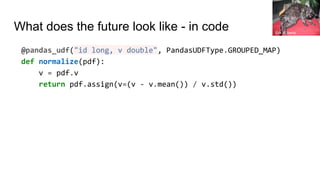

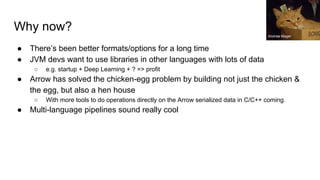

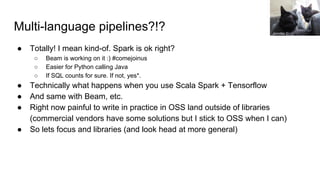

The document details a presentation by Holden Karau about integrating big data tools using Python and various technologies like Apache Arrow, Spark, Beam, and Dask. It discusses the challenges and current state of PySpark, including integration hurdles with non-JVM tools, serialization issues, and the future potential of multi-language pipelines. The importance of efficient data processing and the need for improved collaboration within the big data ecosystem are emphasized throughout the talk.

![So what goes in startup.py?

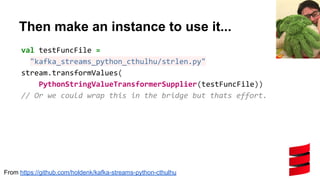

class PythonRegistrationProvider(object):

class Java:

package = "com.sparklingpandas.sparklingml.util.python"

className = "PythonRegisterationProvider"

implements = [package + "." + className]

zenera](https://image.slidesharecdn.com/makingthebigdataecosystemworktogetherwithpythonapachearrowsparkbeamanddask2-180428155945/85/Making-the-big-data-ecosystem-work-together-with-Python-Apache-Arrow-Apache-Spark-Apache-Beam-and-Dask-42-320.jpg)
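The nested `Java` class on this slide follows the Py4J convention for Python objects that implement a Java interface: Py4J reads the `implements` list of fully qualified interface names. As a minimal sketch that needs no JVM, the snippet below only evaluates that list. The empty class body is a simplification for illustration; a real provider would also define the interface's methods.

```python
class PythonRegistrationProvider(object):
    """Stripped-down stand-in for the class on the slide (methods omitted)."""

    class Java:
        package = "com.sparklingpandas.sparklingml.util.python"
        className = "PythonRegisterationProvider"
        # Py4J consults this list to decide which Java interfaces to proxy.
        implements = [package + "." + className]


interfaces = PythonRegistrationProvider.Java.implements
print(interfaces)
```

Building the name from `package` and `className` keeps the two parts readable, but the resulting string still has to match the JVM-side interface name exactly, spelling included.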

![So what goes in startup.py?

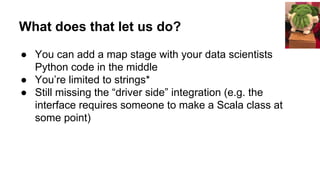

def registerFunction(self, ssc, jsession, function_name,

params):

setup_spark_context_if_needed()

if function_name in functions_info:

function_info = functions_info[function_name]

evaledParams = ast.literal_eval(params)

func = function_info.func(*evaledParams)

udf = UserDefinedFunction(func, ret_type,

make_registration_name())

return udf._judf

else:

print("Could not find function")

Jennifer C.](https://image.slidesharecdn.com/makingthebigdataecosystemworktogetherwithpythonapachearrowsparkbeamanddask2-180428155945/85/Making-the-big-data-ecosystem-work-together-with-Python-Apache-Arrow-Apache-Spark-Apache-Beam-and-Dask-43-320.jpg)
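The lookup-and-parse step in `registerFunction` can be tried without Spark. The following is a minimal sketch under stated assumptions: `FunctionInfo`, `make_multiplier`, and `build_function` are hypothetical names invented for illustration, and the registry holds a single made-up entry. Only the use of `ast.literal_eval` on a string of parameters mirrors the slide.

```python
import ast
from collections import namedtuple

# Hypothetical registry entry: `func` is a factory that takes the parsed
# parameters and returns the plain Python function to wrap as a UDF.
FunctionInfo = namedtuple("FunctionInfo", ["func"])


def make_multiplier(factor):
    """Illustrative factory, not part of the original talk."""
    def multiply(x):
        return x * factor
    return multiply


functions_info = {"multiply": FunctionInfo(func=make_multiplier)}


def build_function(function_name, params):
    """Look up a factory by name and apply string-encoded parameters."""
    if function_name not in functions_info:
        return None
    # literal_eval accepts only Python literals, so a parameter string
    # handed over from the JVM cannot run arbitrary code here.
    evaled_params = ast.literal_eval(params)
    return functions_info[function_name].func(*evaled_params)


triple = build_function("multiply", "[3]")
print(triple(14))
```

Passing parameters as a literal string keeps the JVM-to-Python call signature simple, at the cost of restricting parameters to values that have a Python literal form.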

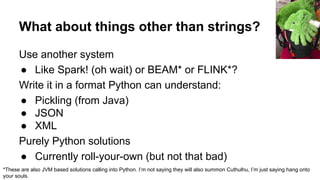

dataIn.readFully(result)

// Assume UTF8, what could go wrong? :p

new String(result)

}

From https://github.com/holdenk/kafka-streams-python-cthulhu](https://image.slidesharecdn.com/makingthebigdataecosystemworktogetherwithpythonapachearrowsparkbeamanddask2-180428155945/85/Making-the-big-data-ecosystem-work-together-with-Python-Apache-Arrow-Apache-Spark-Apache-Beam-and-Dask-60-320.jpg)
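The JVM fragment decodes the received bytes with `new String(result)`, which uses the platform default charset rather than a named one. A minimal sketch of the Python end of such an exchange, with the encoding made explicit on both write and read, might look like the following. The 4-byte big-endian length prefix and the names `write_message` and `read_message` are assumptions for illustration; the excerpt does not show how `result` is sized.

```python
import io
import struct


def write_message(stream, text):
    """Write a 4-byte big-endian length followed by UTF-8 bytes."""
    payload = text.encode("utf-8")
    stream.write(struct.pack(">i", len(payload)))
    stream.write(payload)


def read_message(stream):
    """Read one length-prefixed message and decode it as UTF-8."""
    (length,) = struct.unpack(">i", stream.read(4))
    payload = stream.read(length)
    if len(payload) != length:
        raise EOFError("stream ended before the full message arrived")
    return payload.decode("utf-8")


# Round-trip a string with non-ASCII characters through an in-memory stream.
buffer = io.BytesIO()
write_message(buffer, "naïve café")
buffer.seek(0)
round_tripped = read_message(buffer)
print(round_tripped)
```

The length counts encoded bytes, not characters, which matters as soon as the text contains anything outside ASCII.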