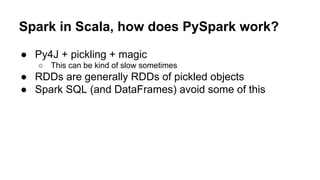

This document is an introduction to Apache Spark with a focus on using PySpark for distributed computing, aimed at attendees of a 2016 PyLadies workshop. It covers the basics of Spark, including its architecture, RDDs, transformations, and actions, as well as performance considerations and how to use PySpark for machine learning tasks. It also shares resources, such as notebooks and references for further learning, alongside a promotional mention of an upcoming book on Spark performance.

![SparkContext: entry to the world

● Can be used to create RDDs from many input sources

○ Native collections, local & remote FS

○ Any Hadoop Data Source

● Also create counters & accumulators

● Automatically created in the shells (called sc)

● Specify master & app name when creating

○ Master can be local[*], spark://, yarn, etc.

○ App name should be human readable and make sense

● etc.

Petful](https://image.slidesharecdn.com/gettingstartedwithapachesparkinpythononml-pyladiestoronto2016-160920200544/85/Getting-started-with-Apache-Spark-in-Python-PyLadies-Toronto-2016-11-320.jpg)

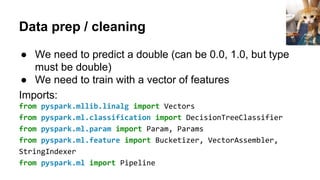

![Data prep / cleaning continued

from pyspark.ml import Pipeline

from pyspark.ml.feature import StringIndexer, VectorAssembler

# Combines a list of double input features into a vector

assembler = VectorAssembler(inputCols=["age", "education-num"], outputCol="features")

# String indexer converts a set of strings into doubles

indexer = StringIndexer(inputCol="category").setOutputCol("category-index")

# Can be used to combine pipeline components together

pipeline = Pipeline().setStages([assembler, indexer])

Huang Yun Chung](https://image.slidesharecdn.com/gettingstartedwithapachesparkinpythononml-pyladiestoronto2016-160920200544/85/Getting-started-with-Apache-Spark-in-Python-PyLadies-Toronto-2016-30-320.jpg)

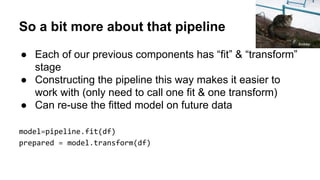

![Let's train a model on our prepared data:

from pyspark.ml.classification import DecisionTreeClassifier

# Specify model

dt = DecisionTreeClassifier(labelCol="category-index", featuresCol="features")

# Fit it

dt_model = dt.fit(prepared)

# Or as part of the pipeline

pipeline_and_model = Pipeline().setStages([assembler, indexer, dt])

pipeline_model = pipeline_and_model.fit(df)](https://image.slidesharecdn.com/gettingstartedwithapachesparkinpythononml-pyladiestoronto2016-160920200544/85/Getting-started-with-Apache-Spark-in-Python-PyLadies-Toronto-2016-33-320.jpg)