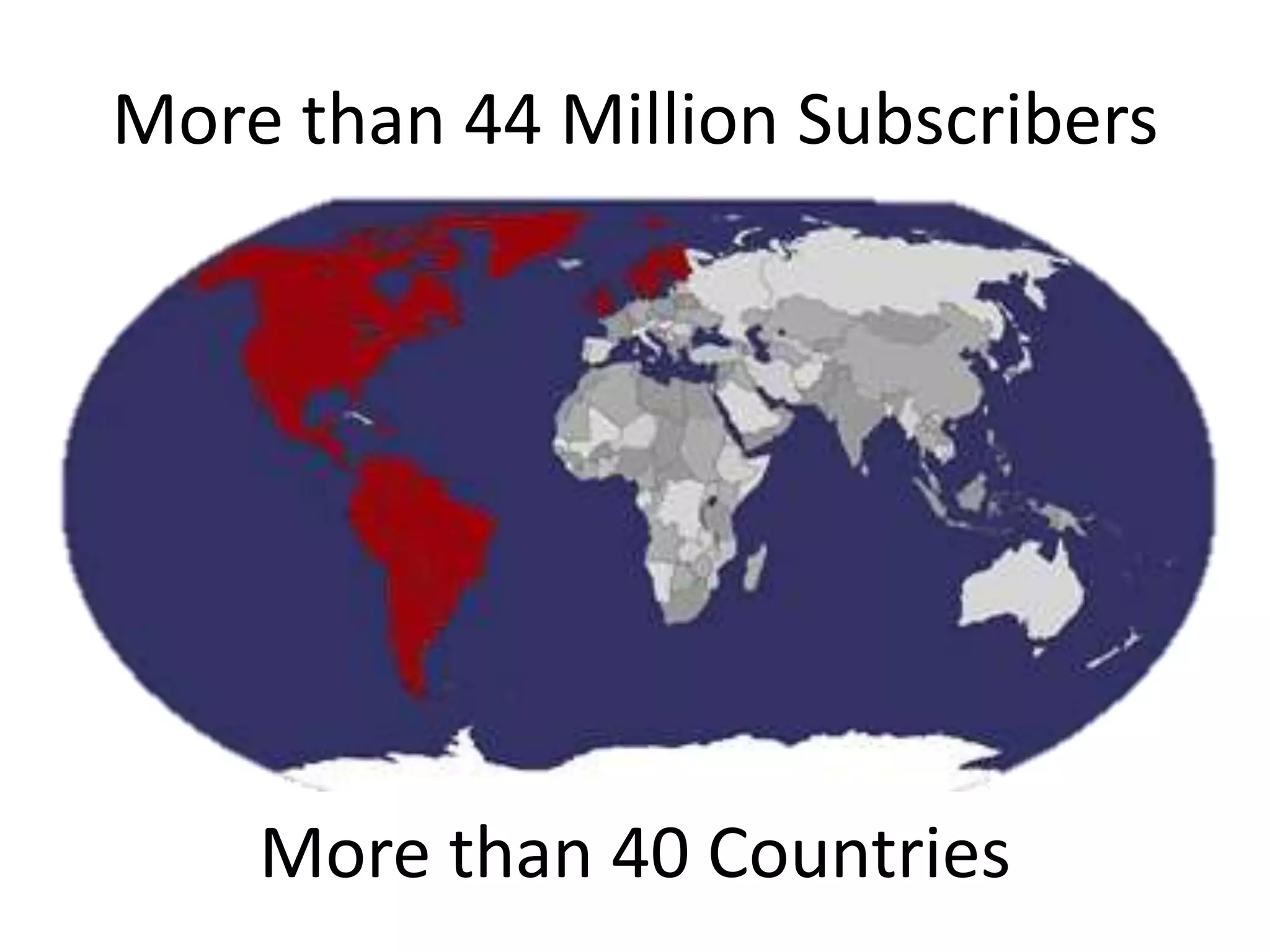

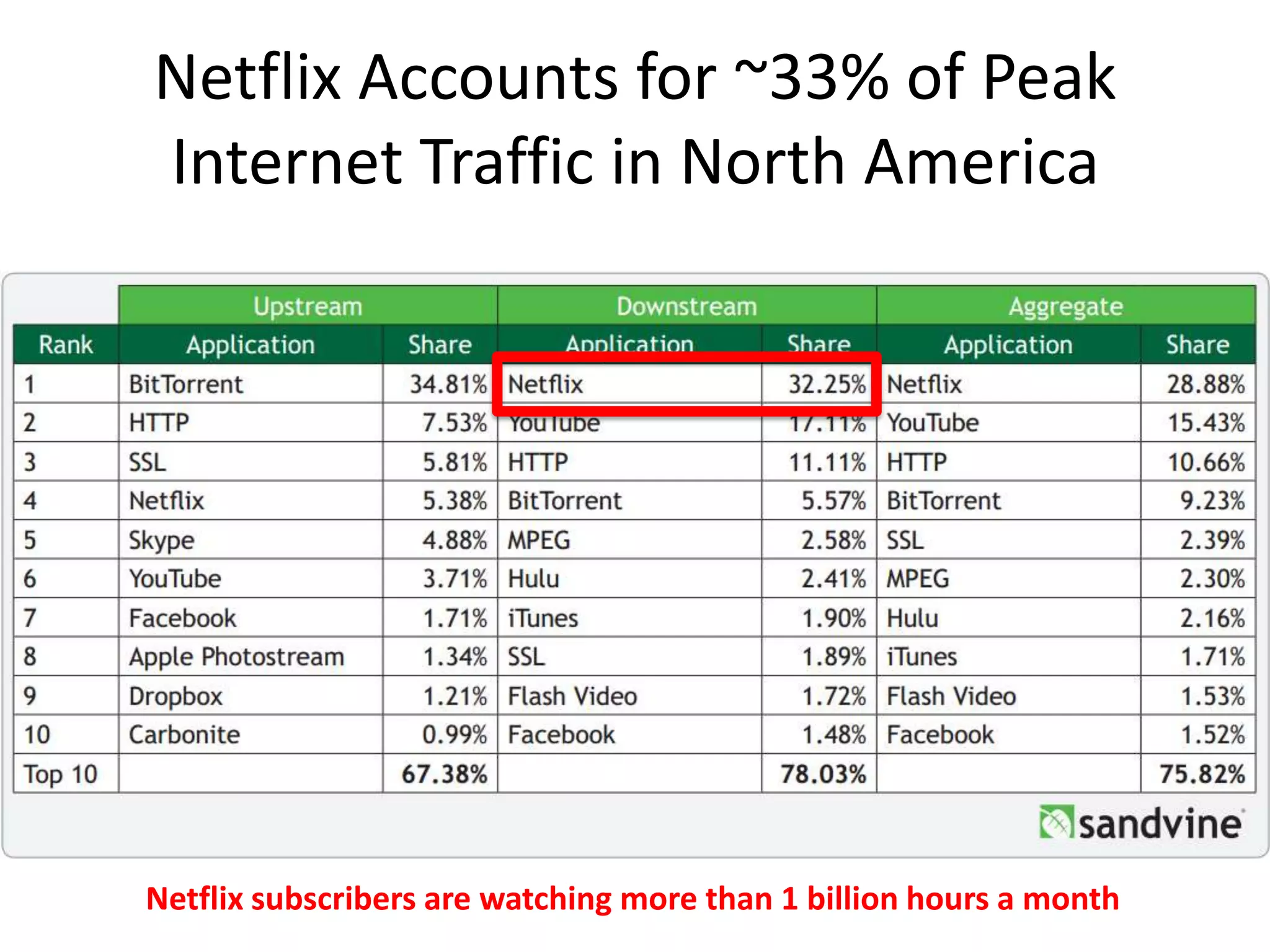

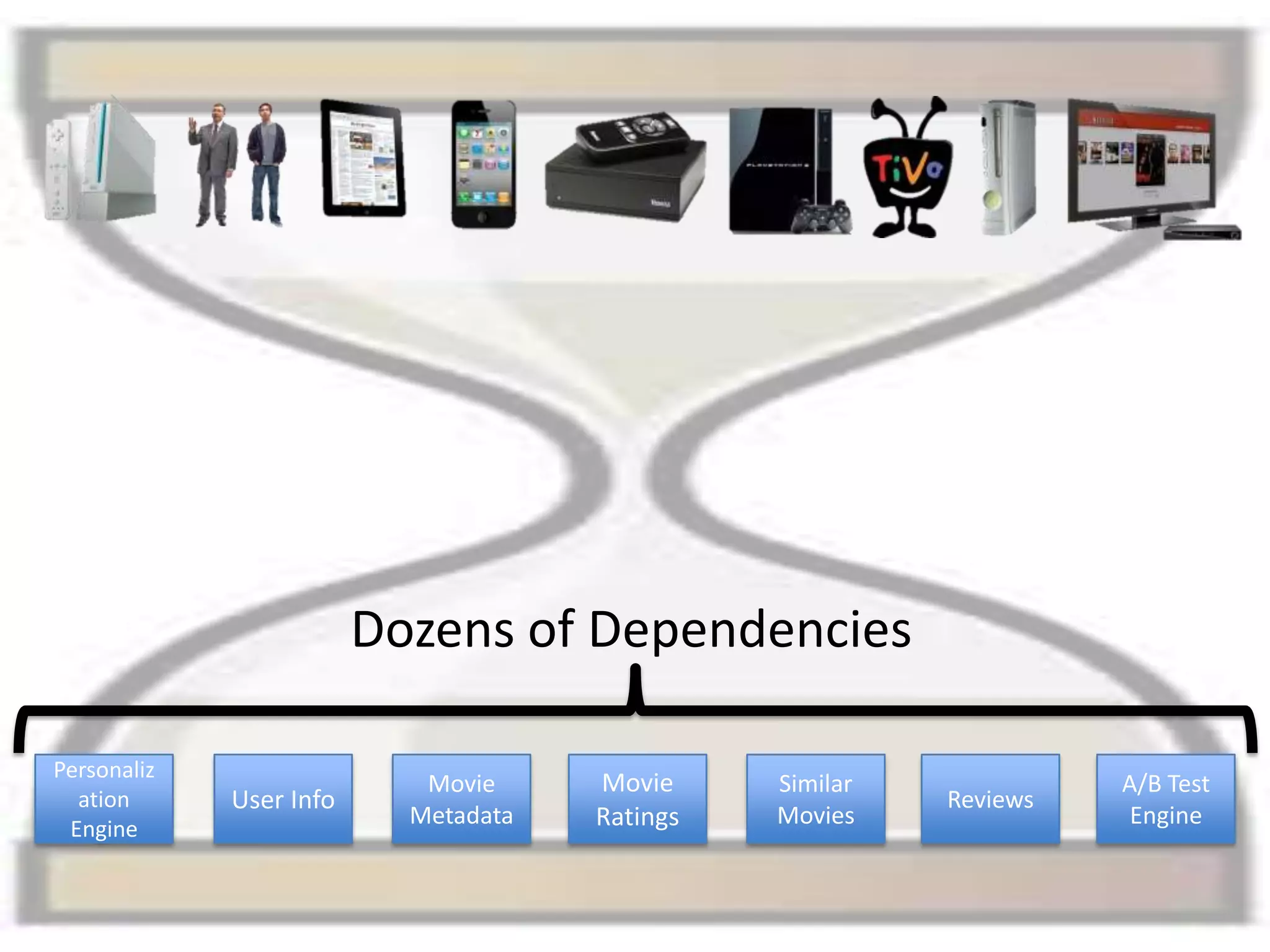

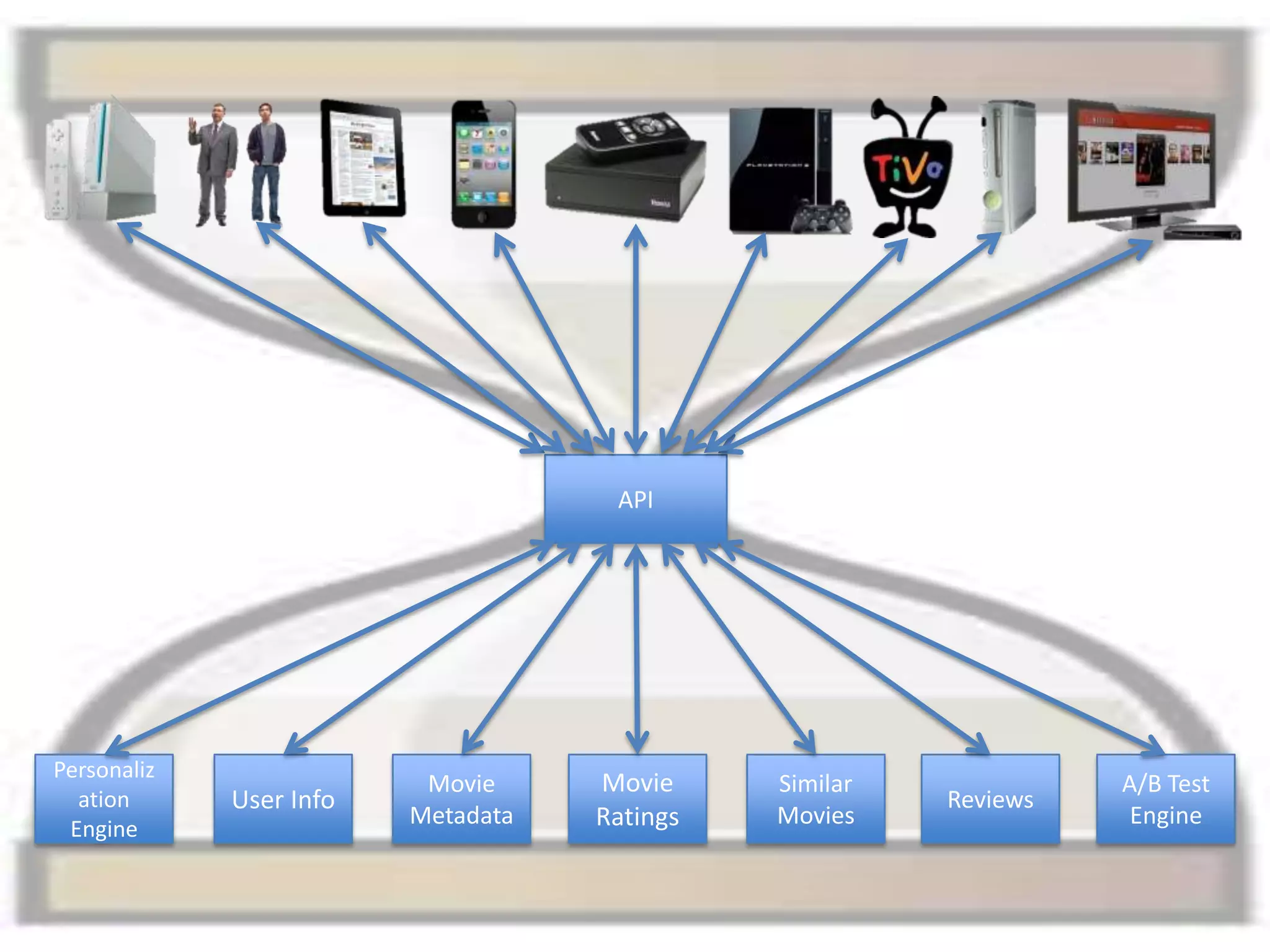

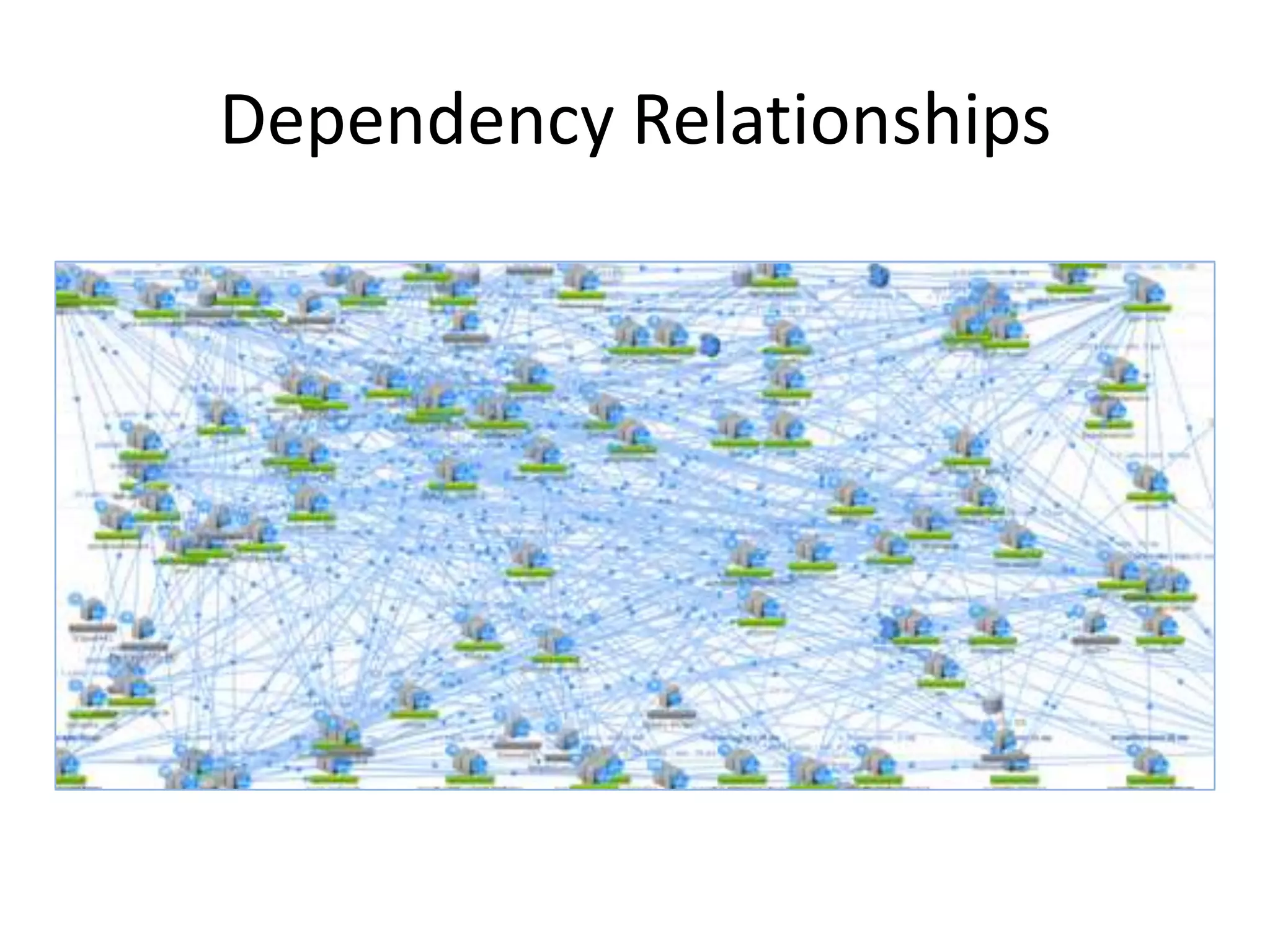

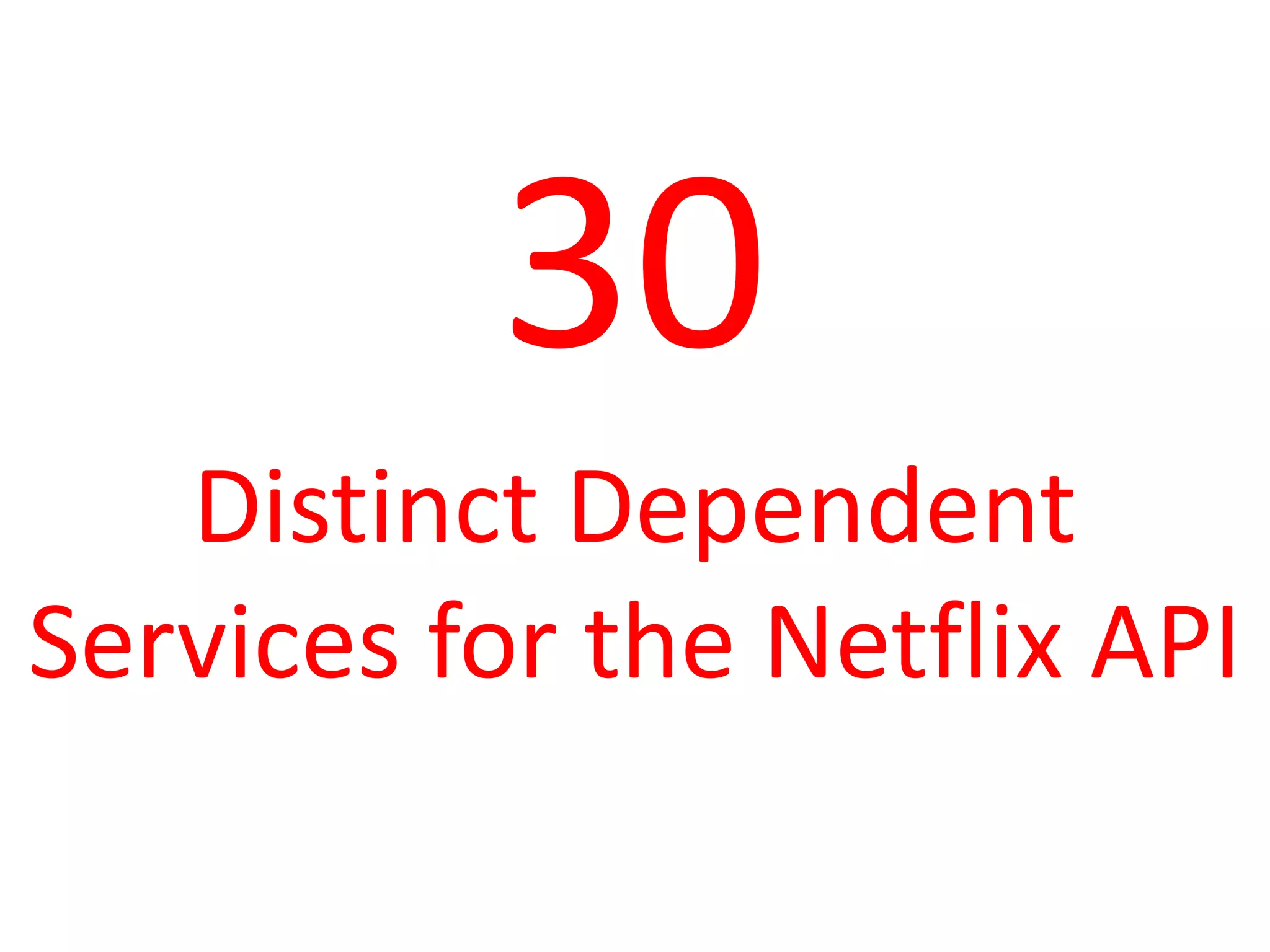

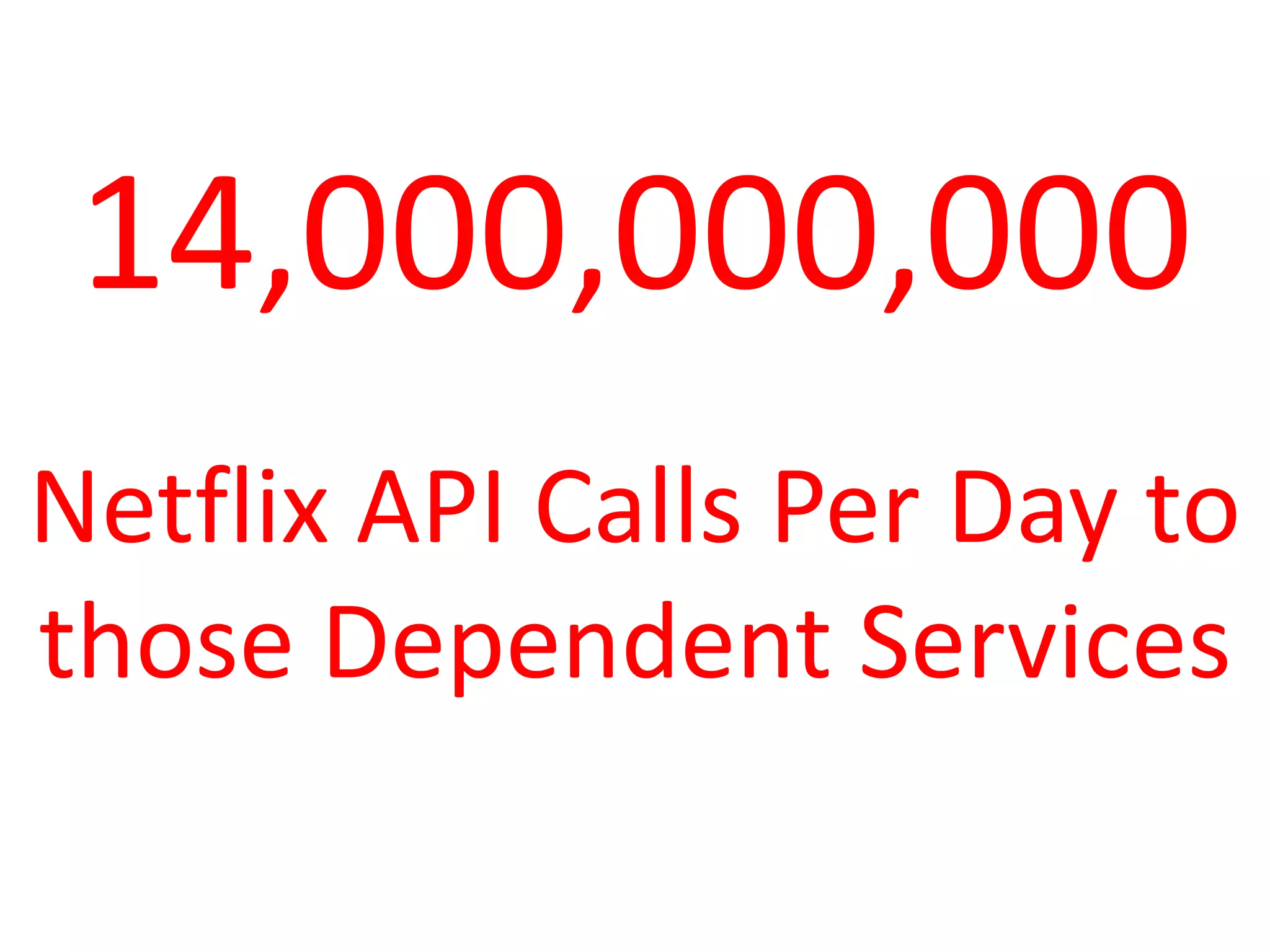

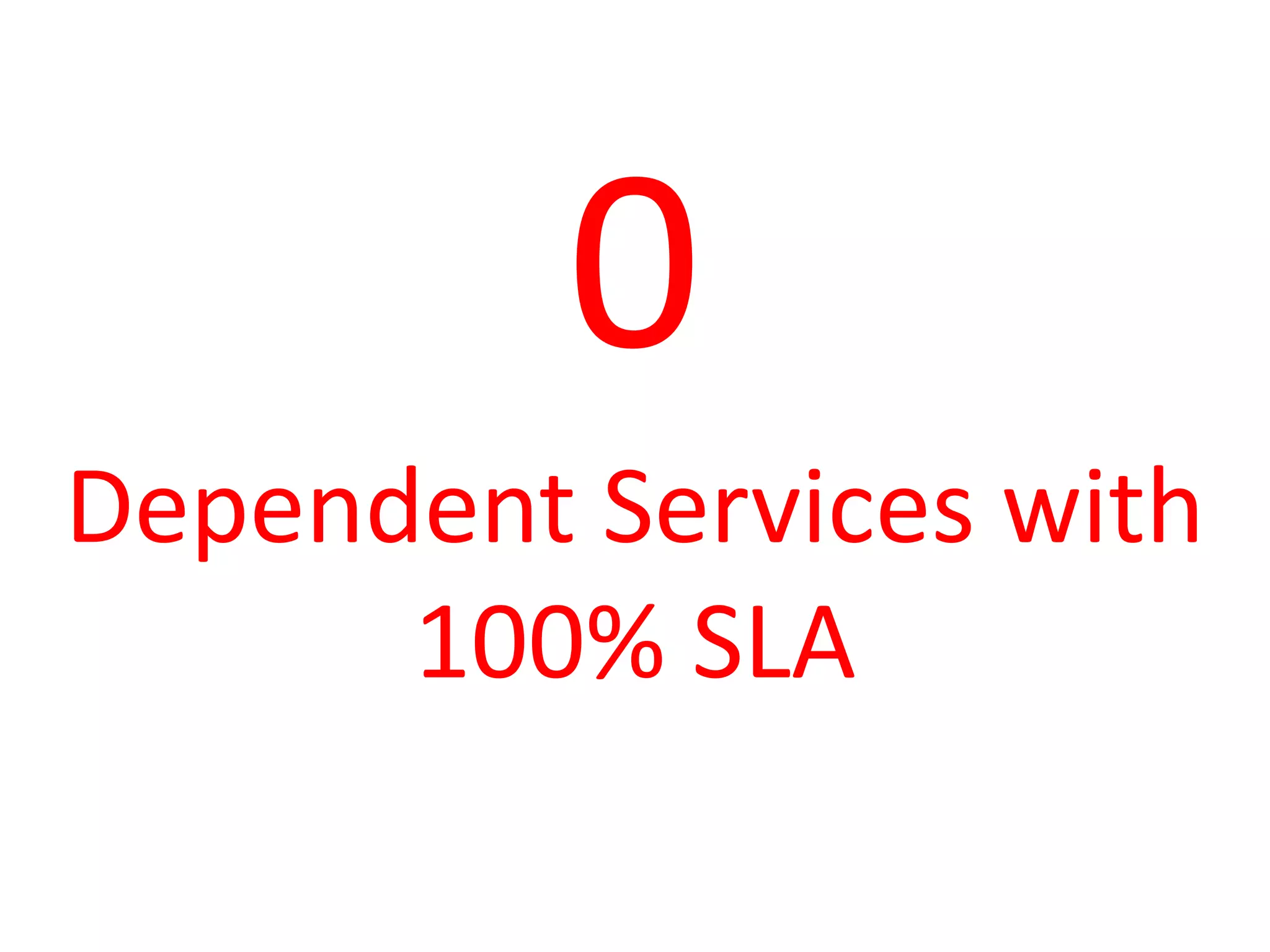

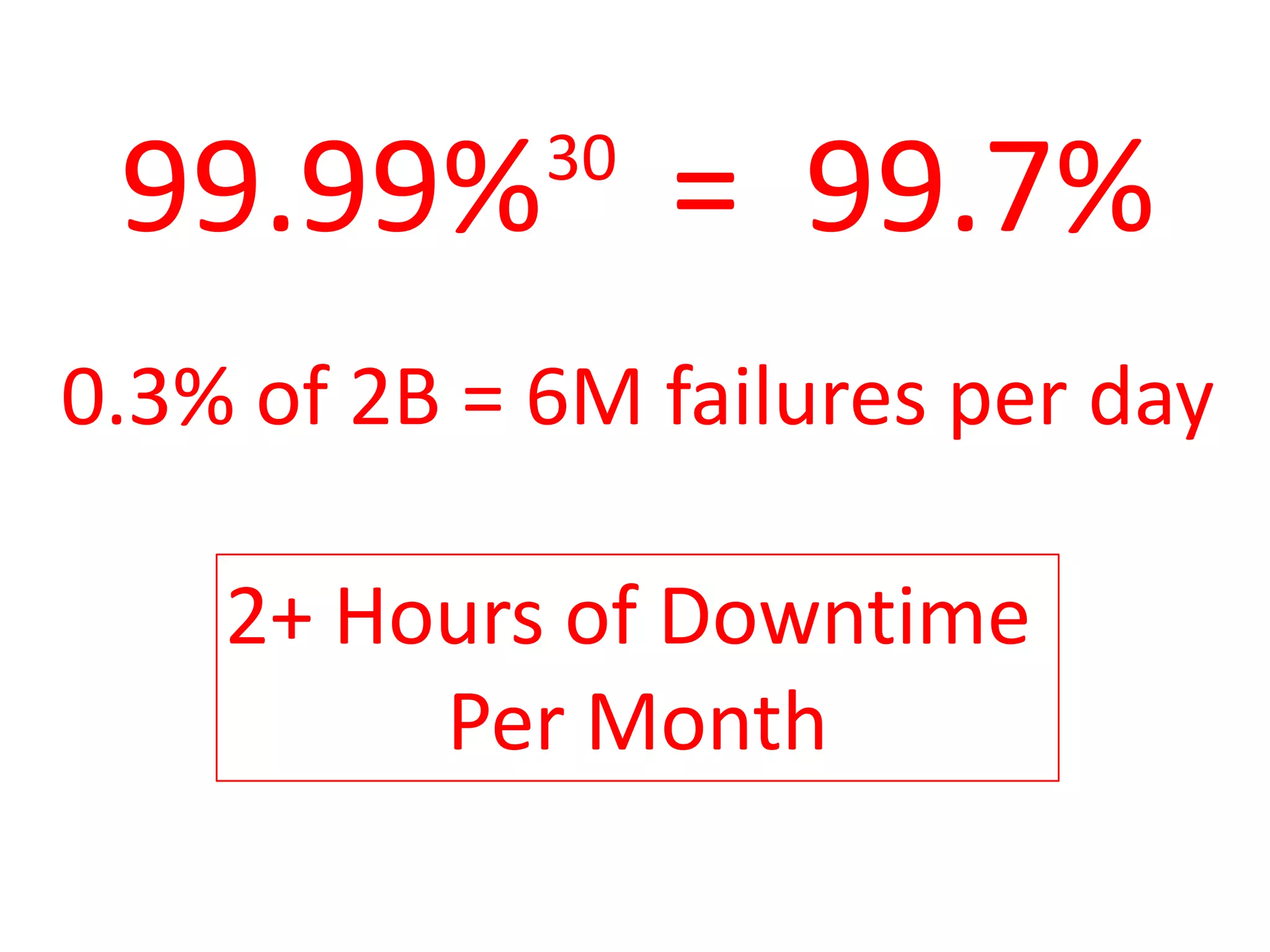

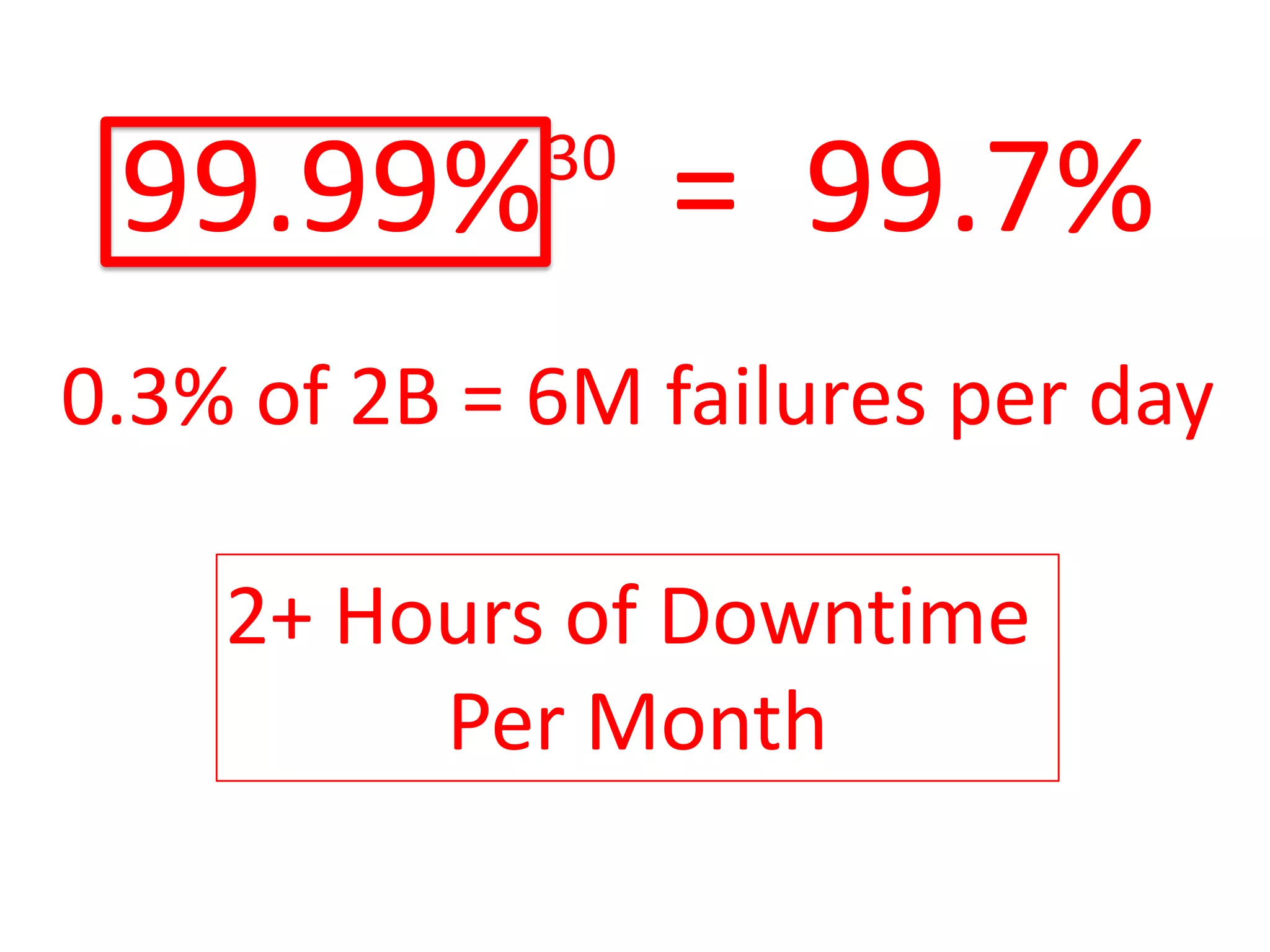

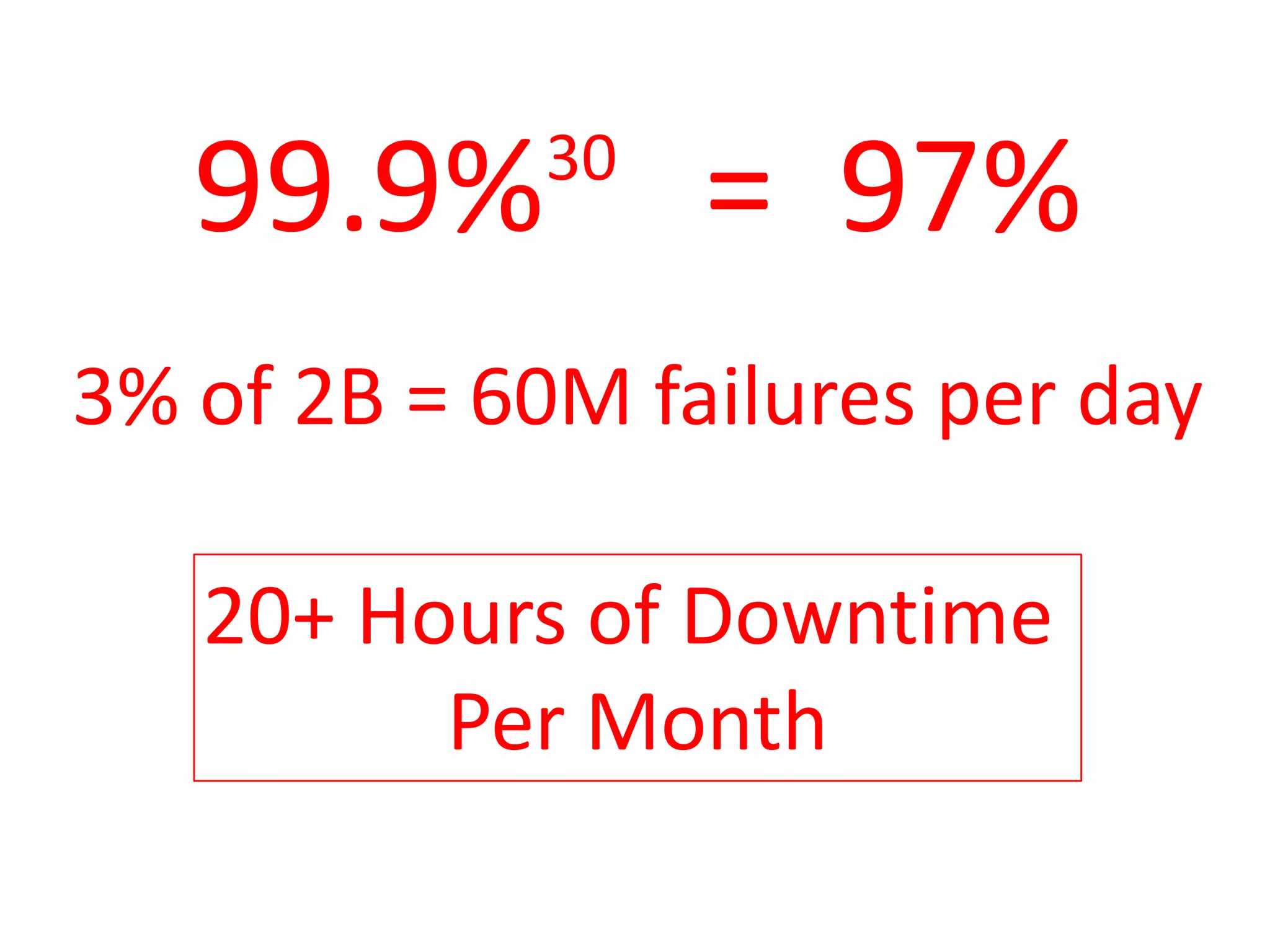

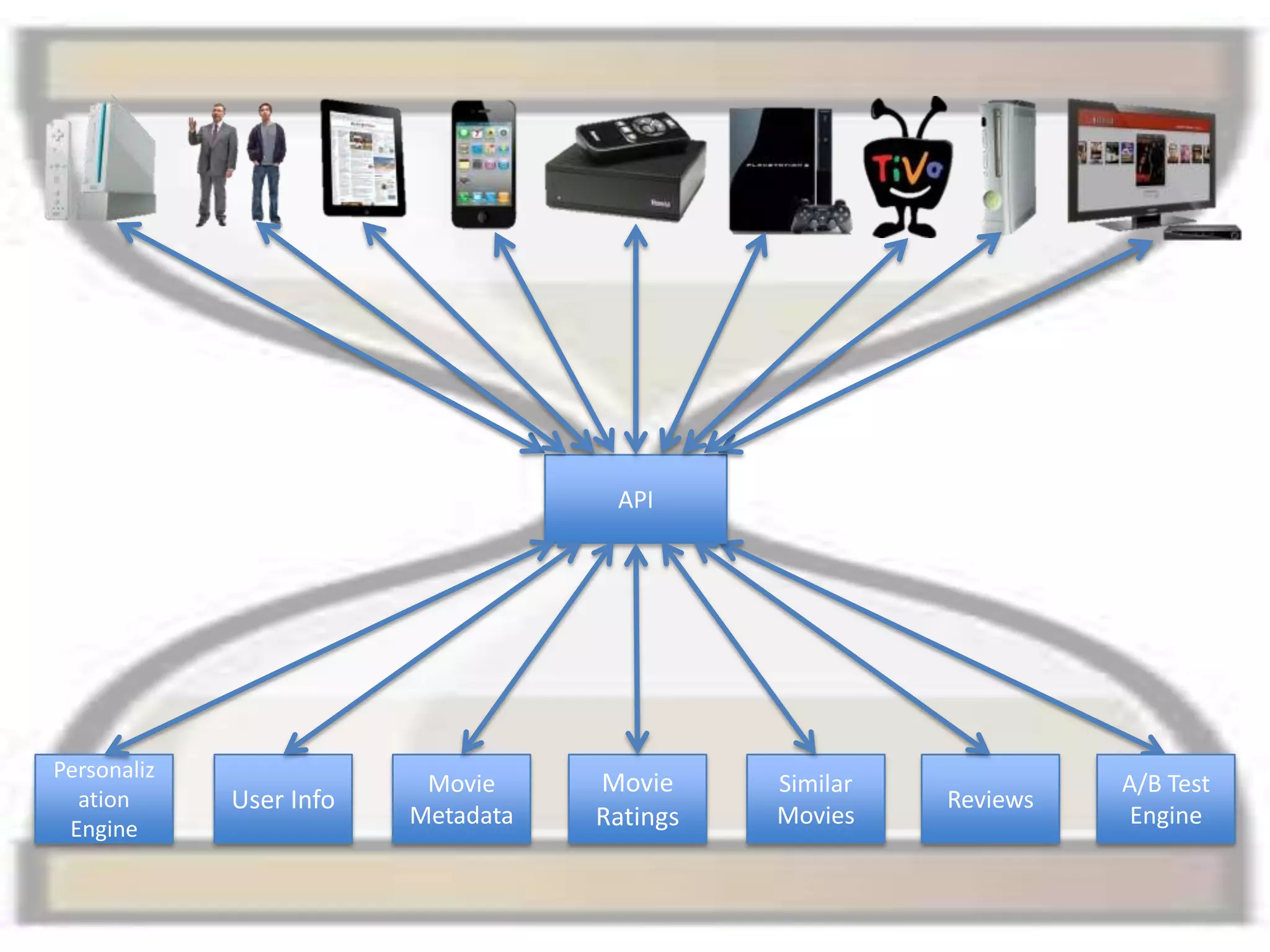

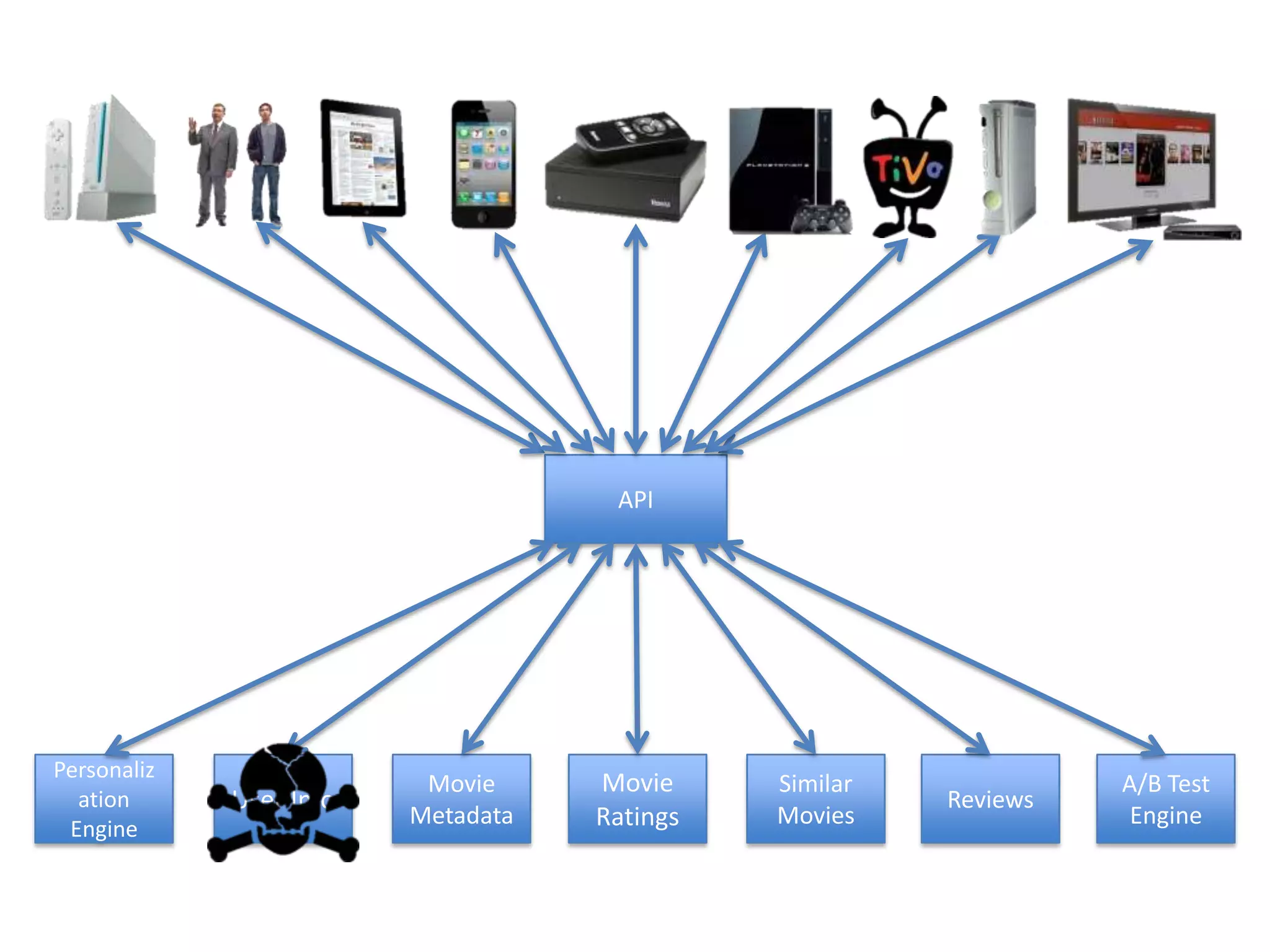

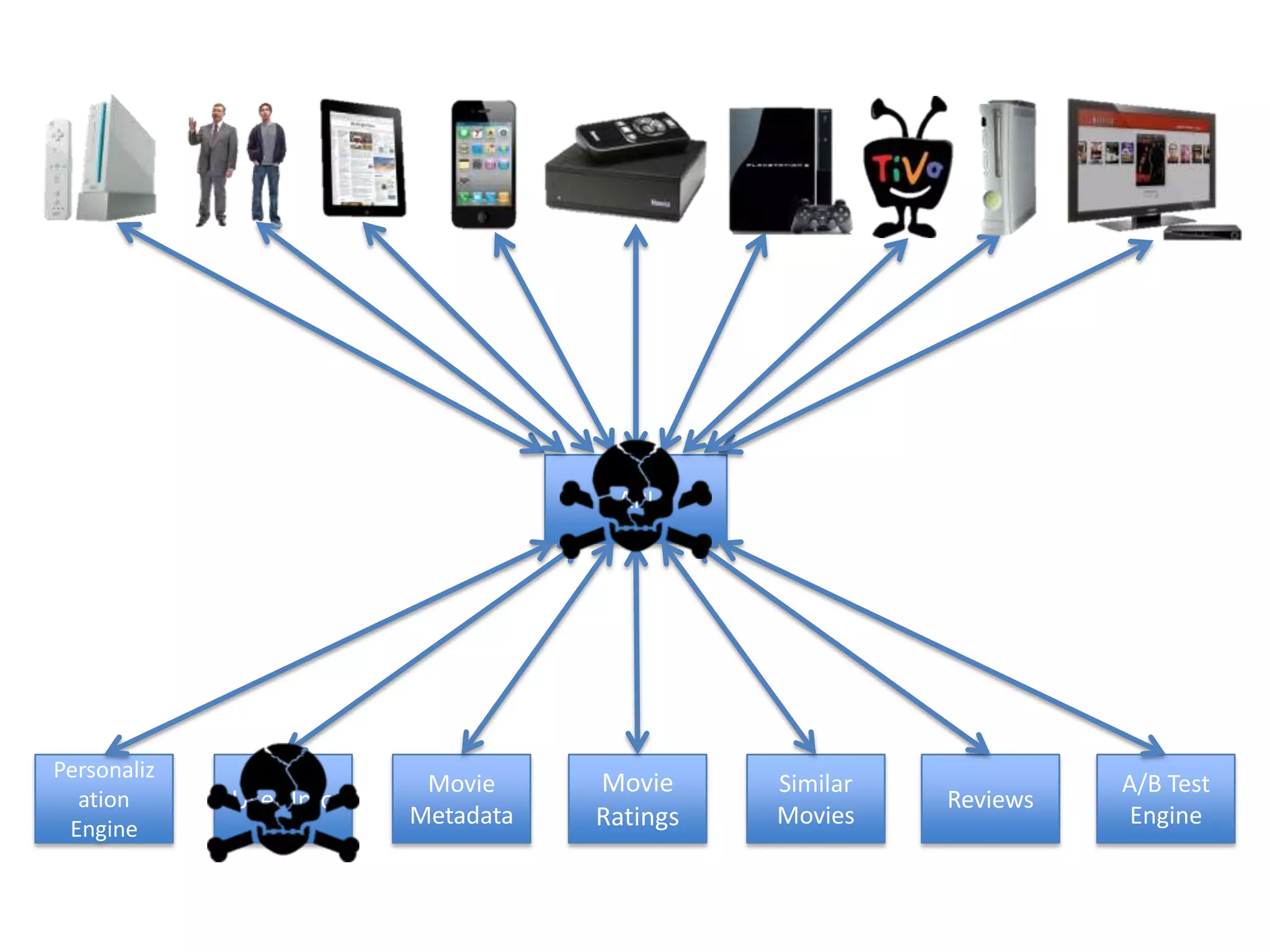

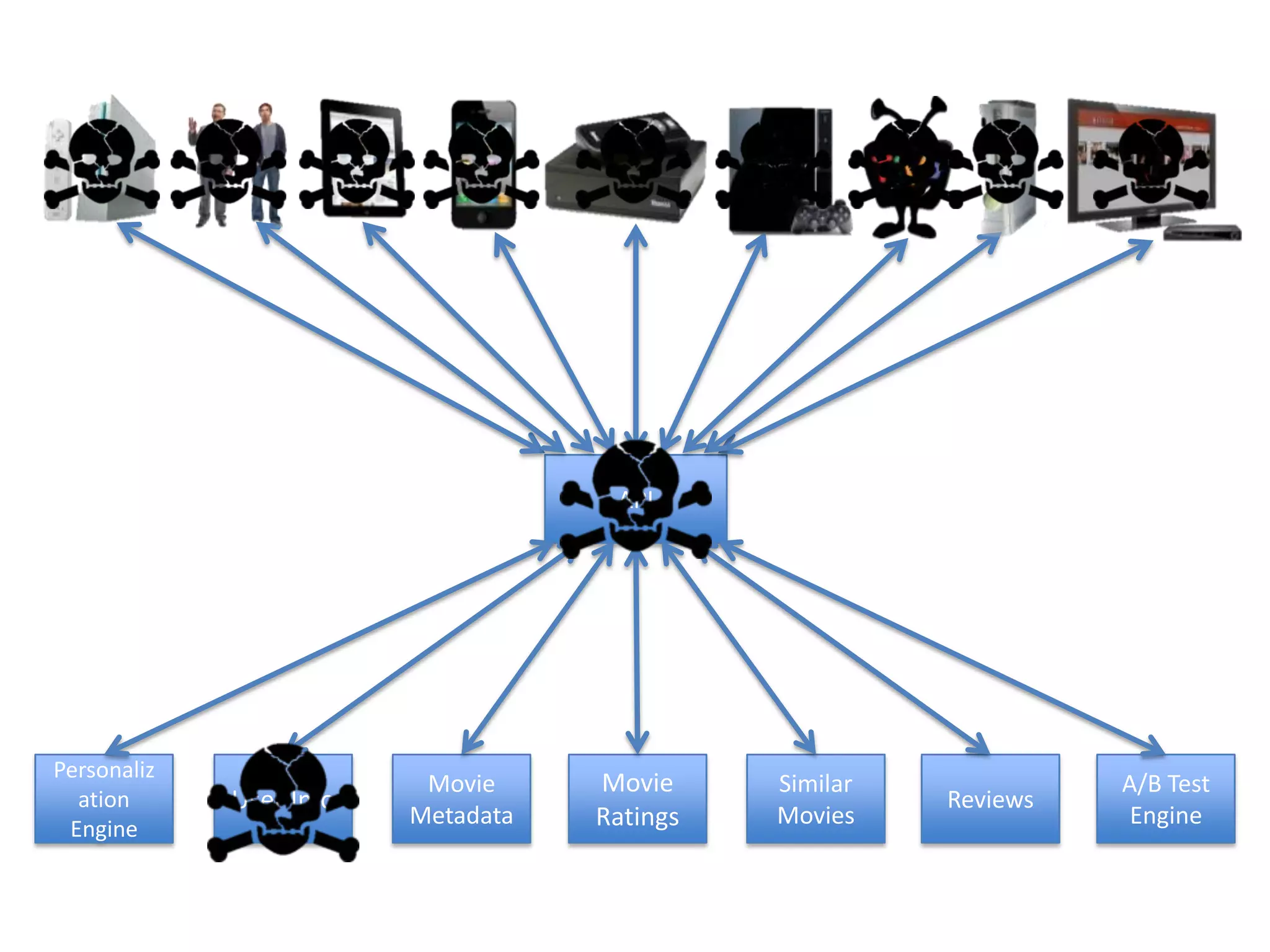

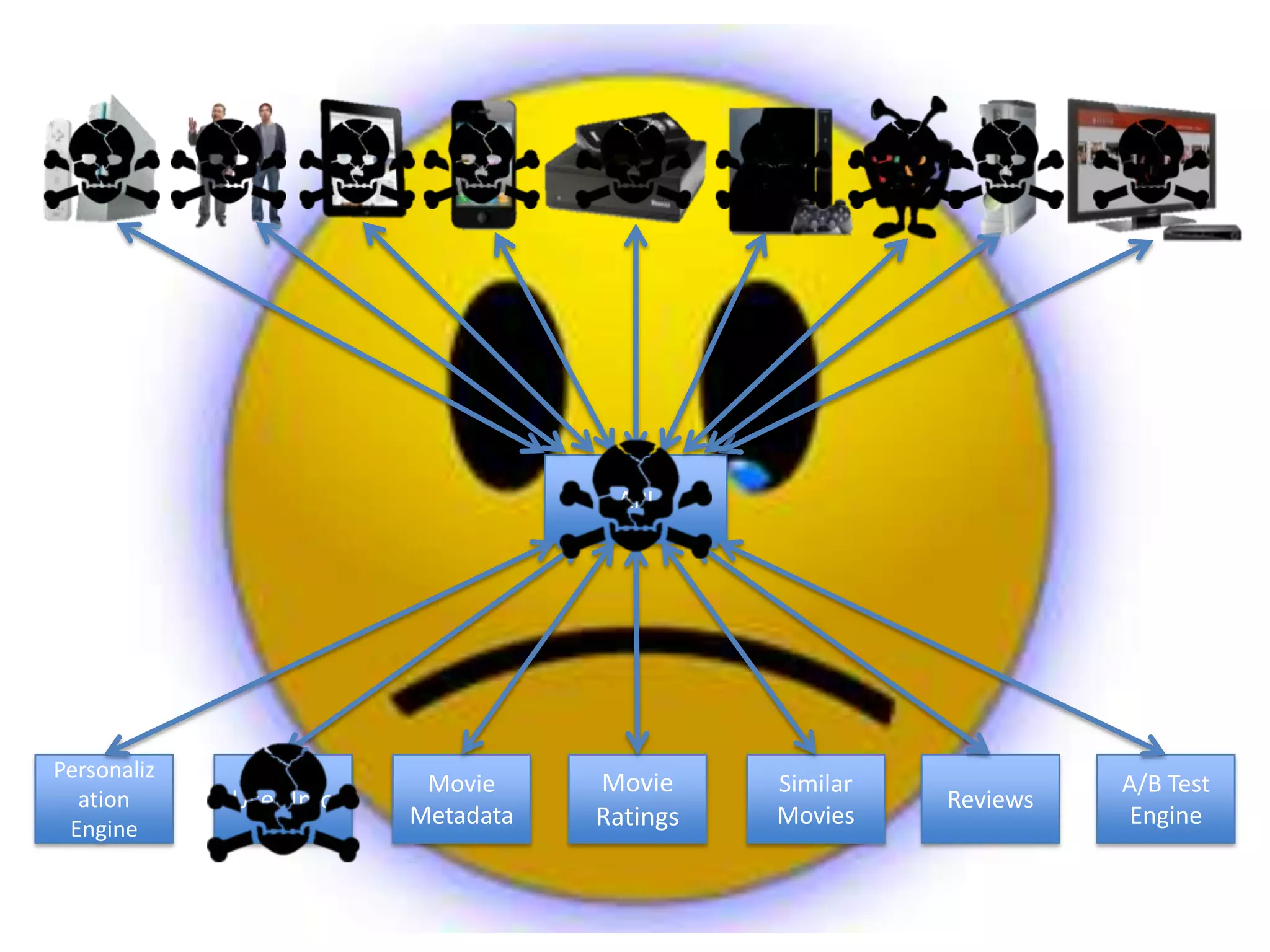

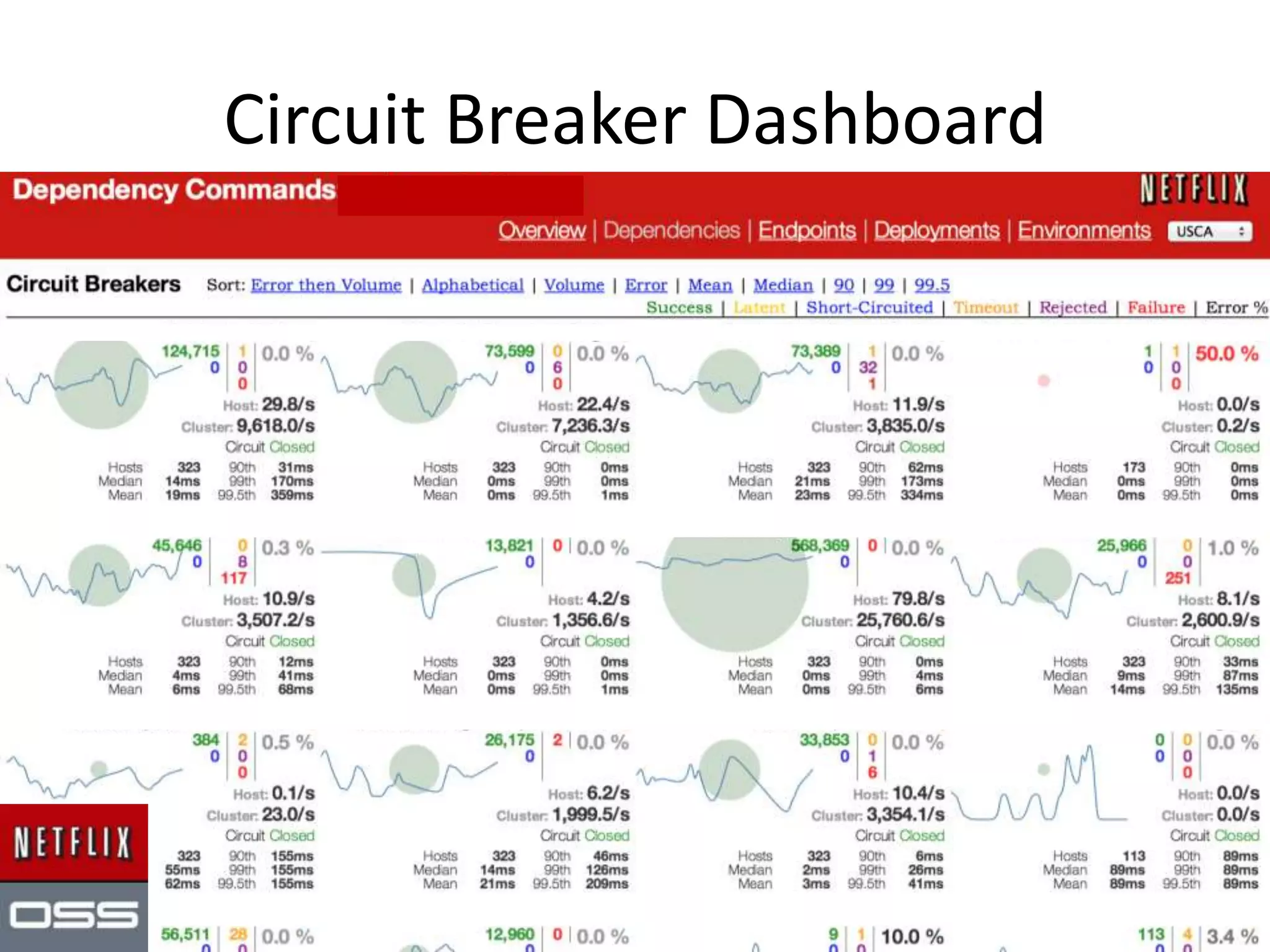

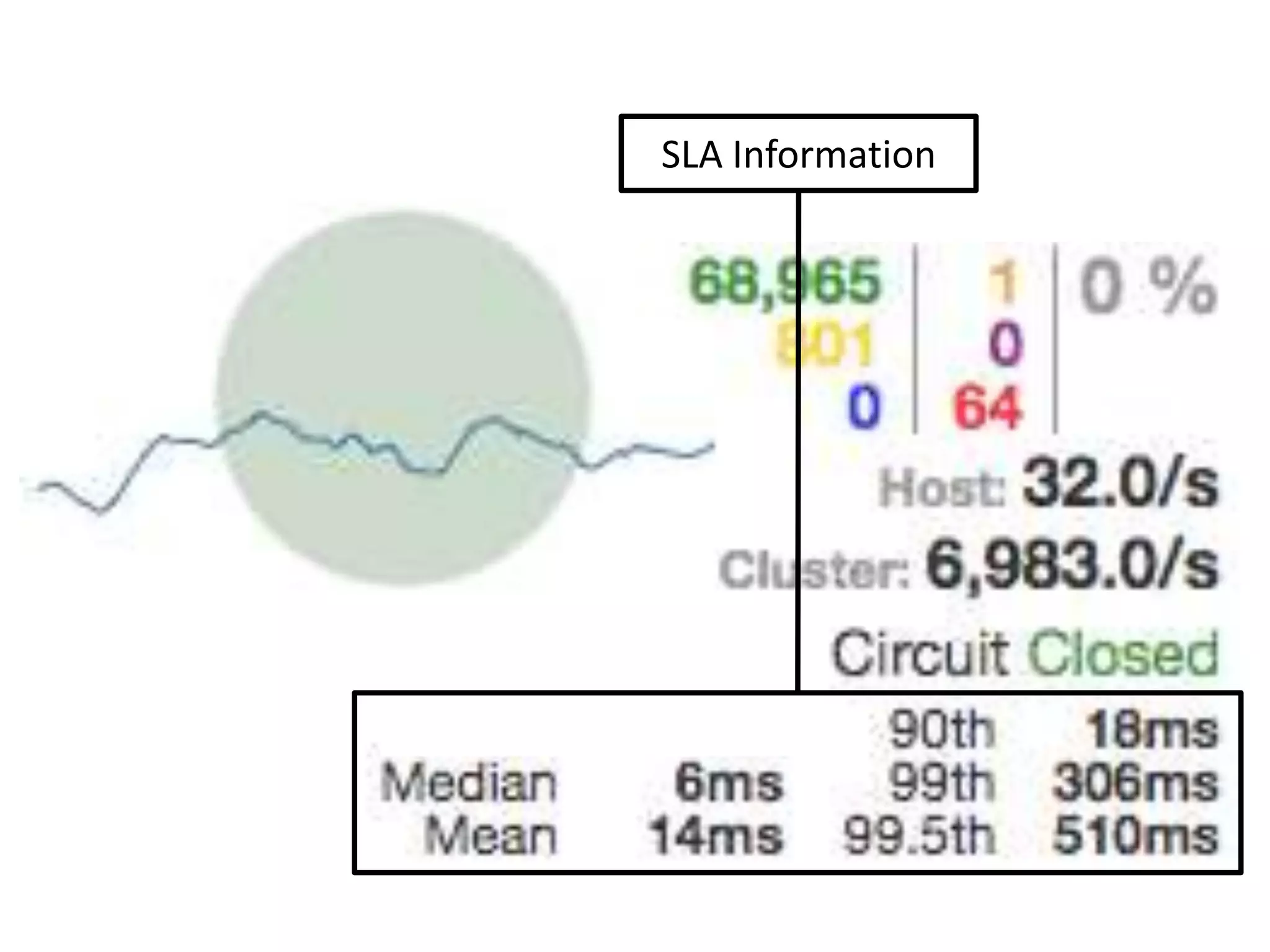

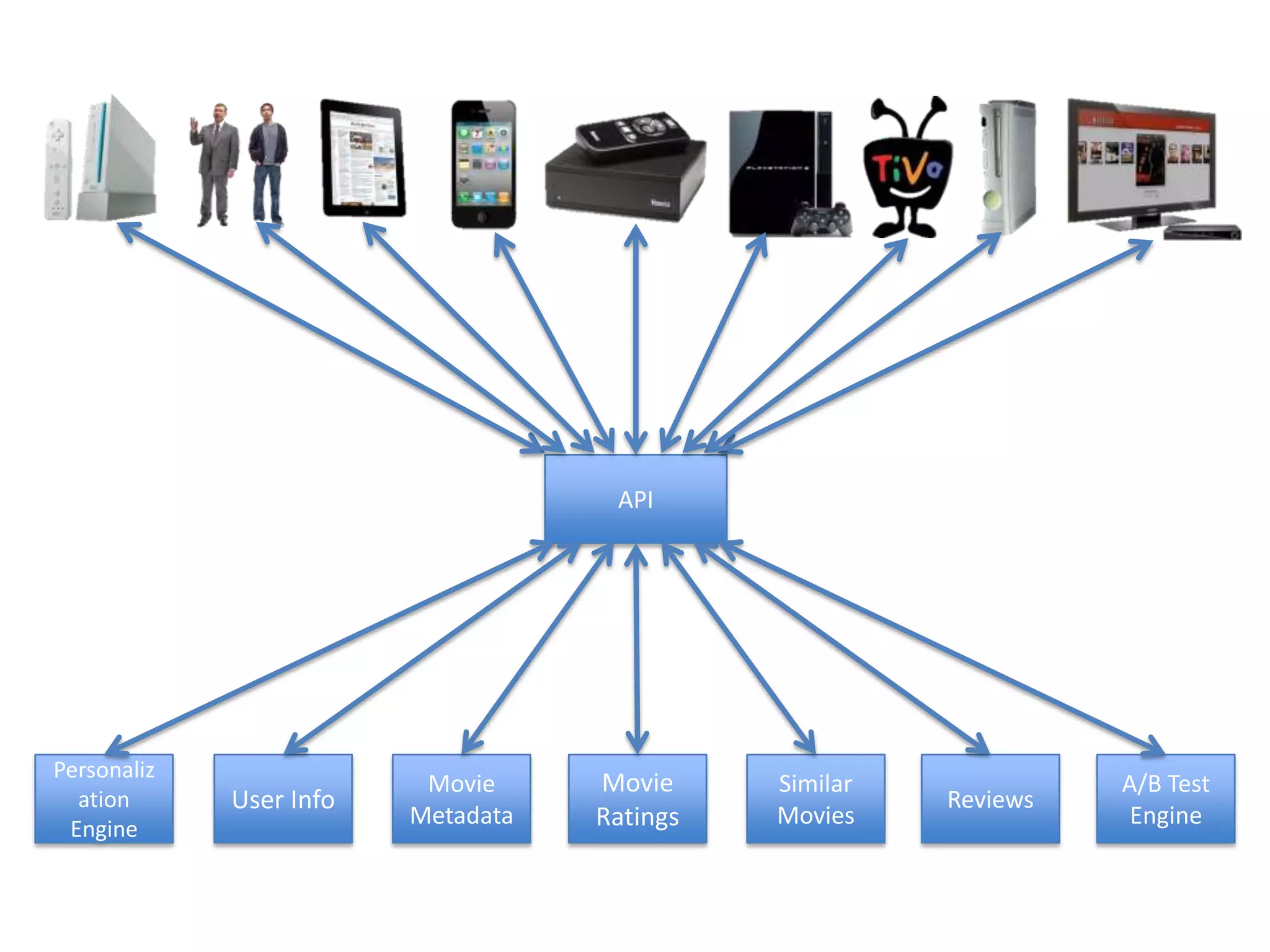

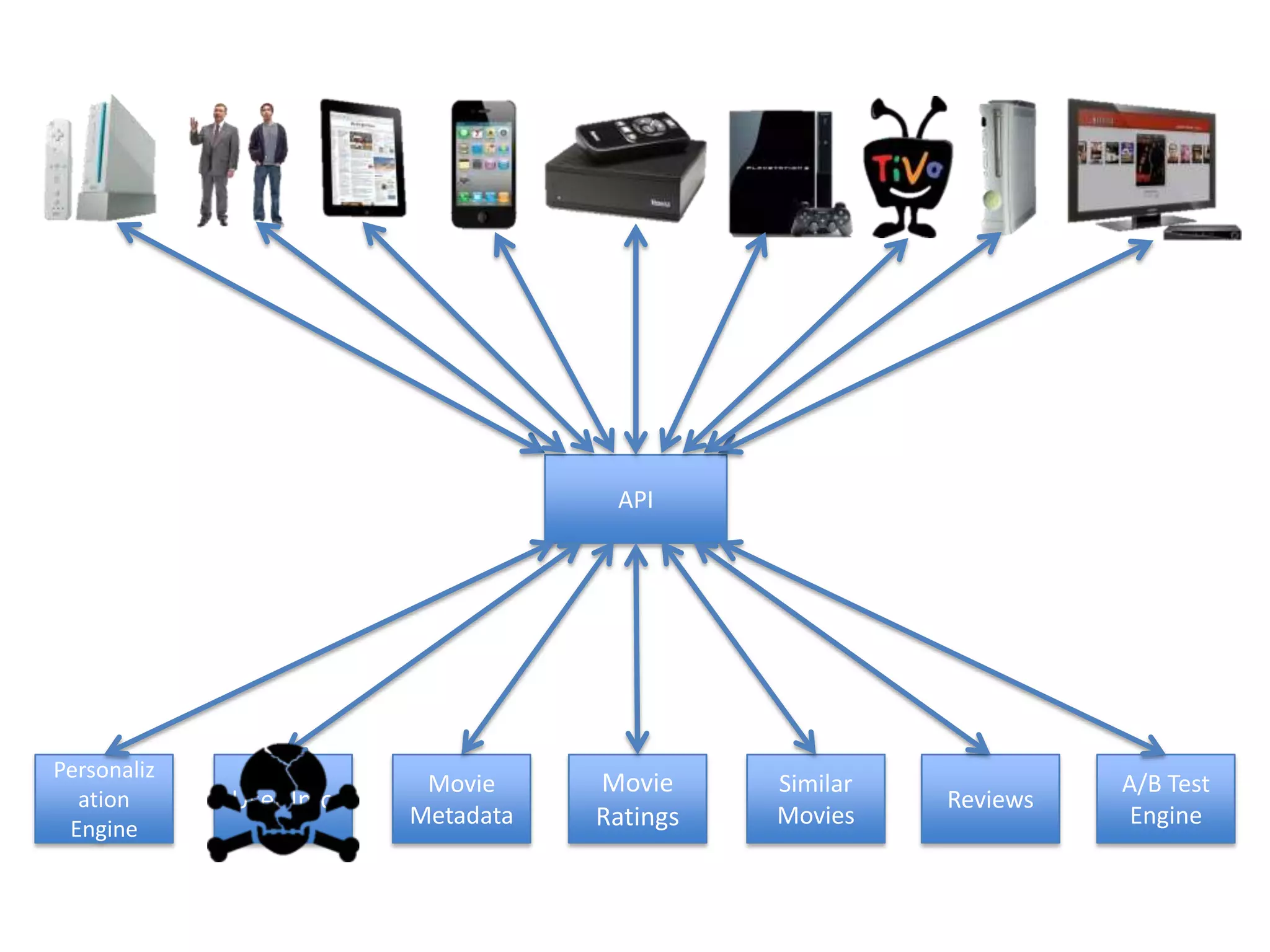

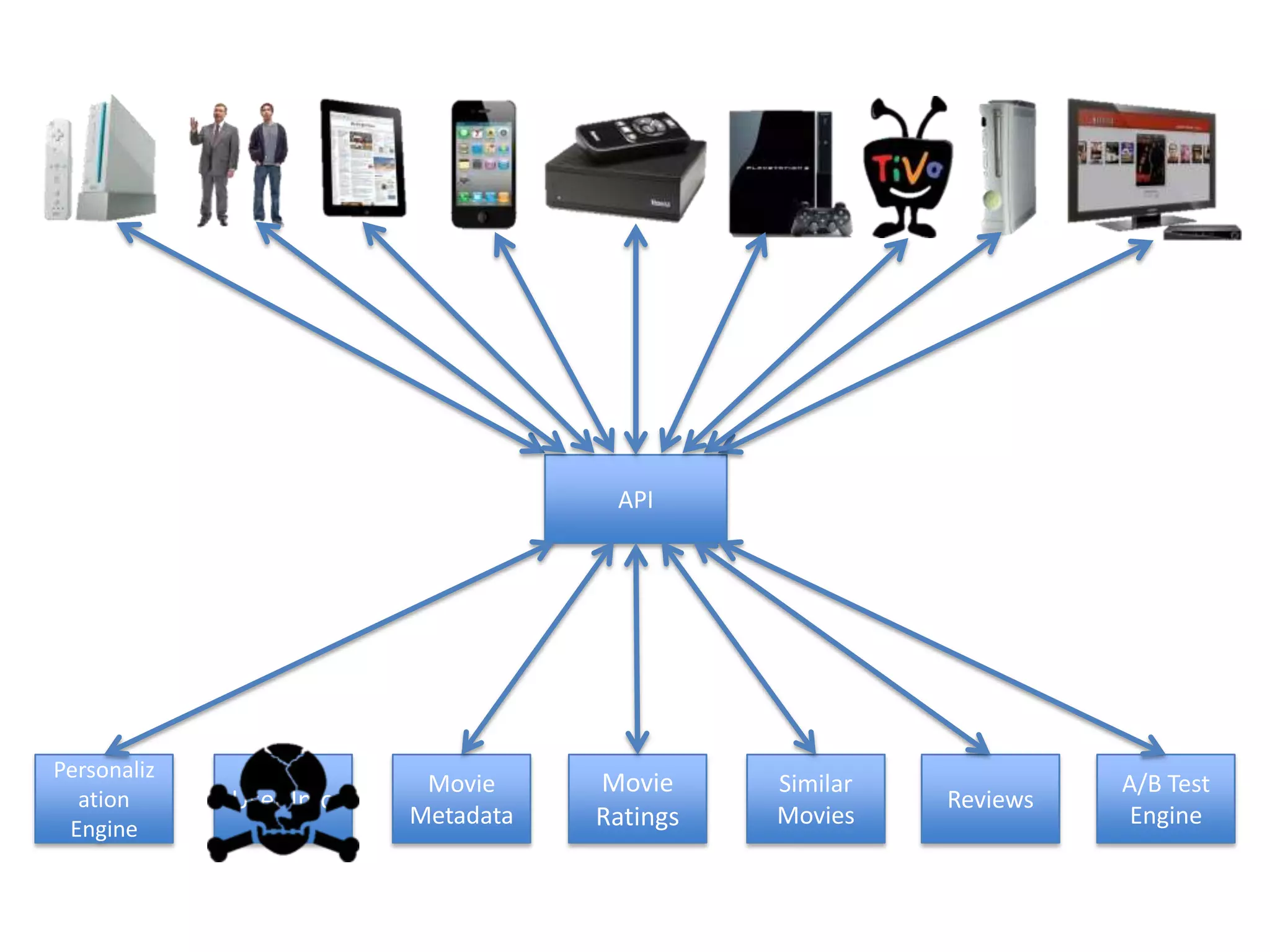

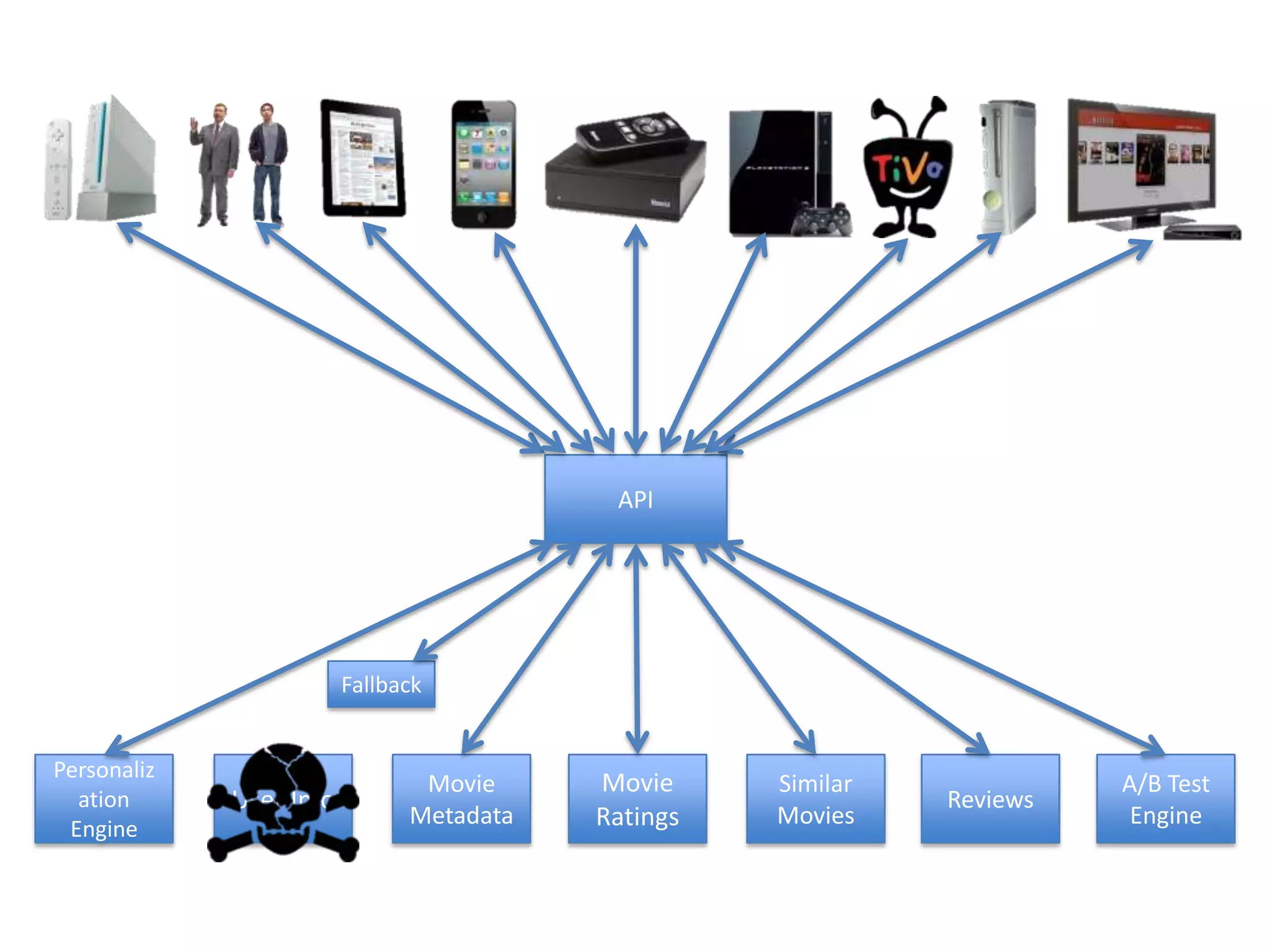

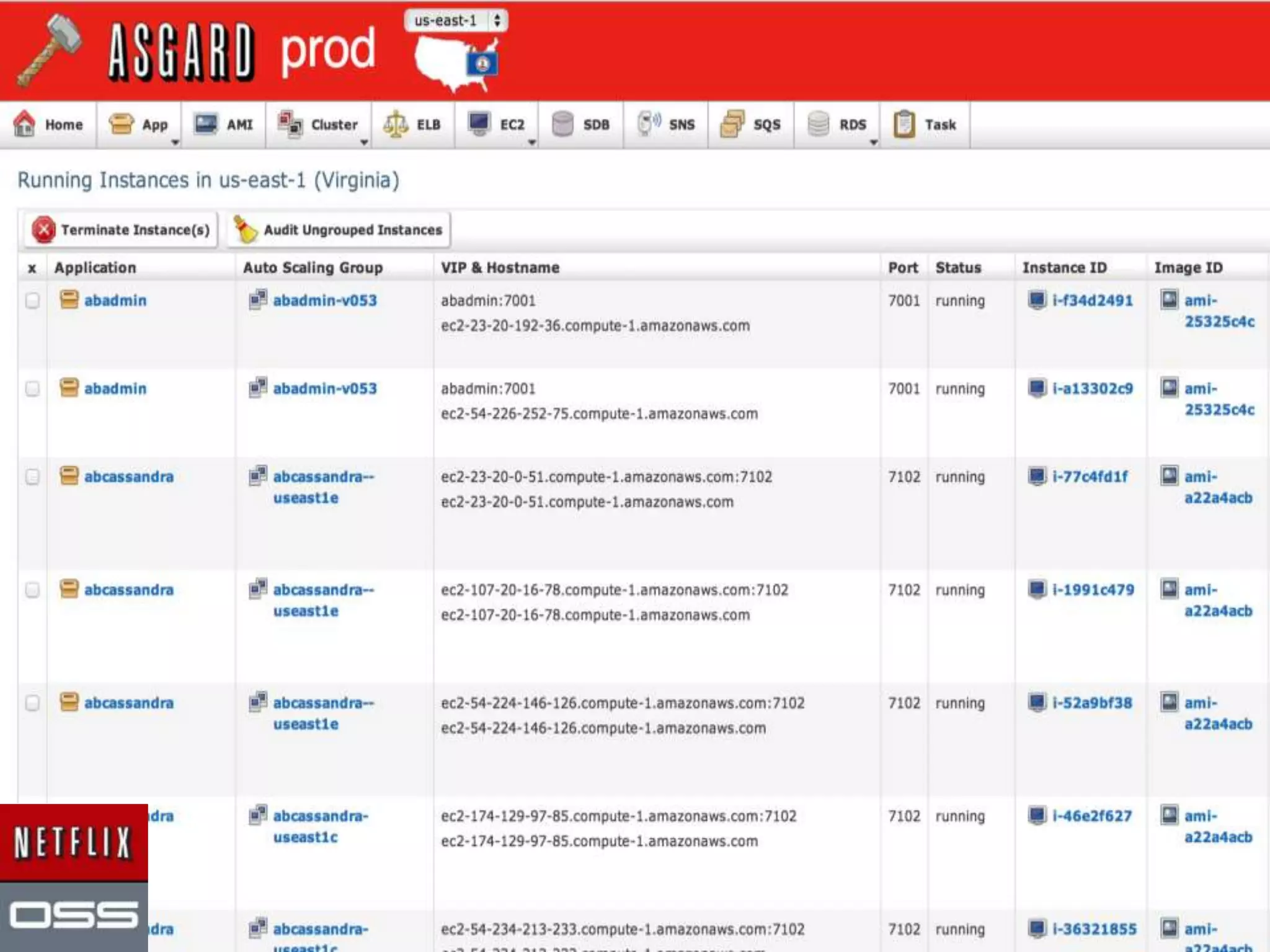

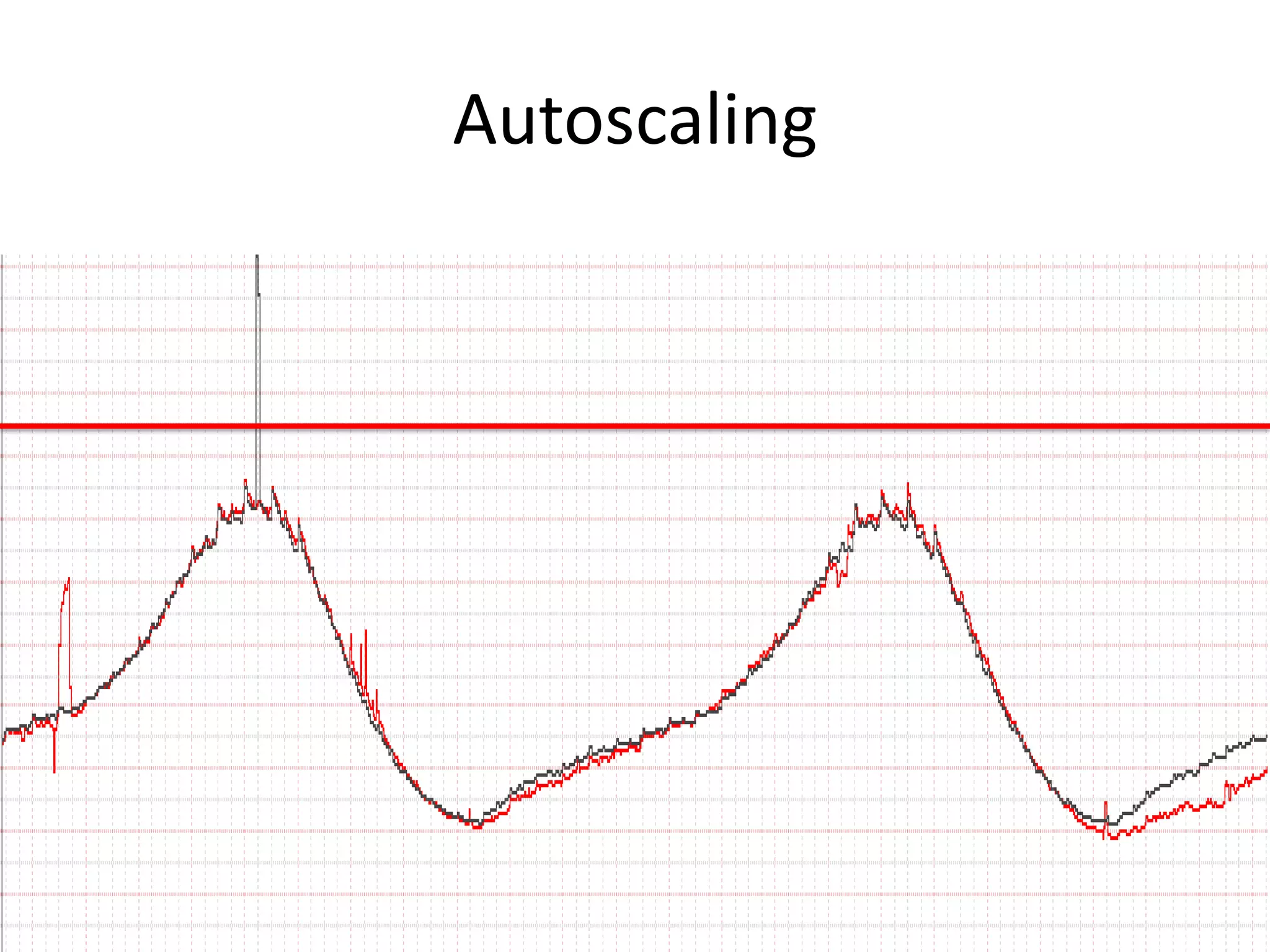

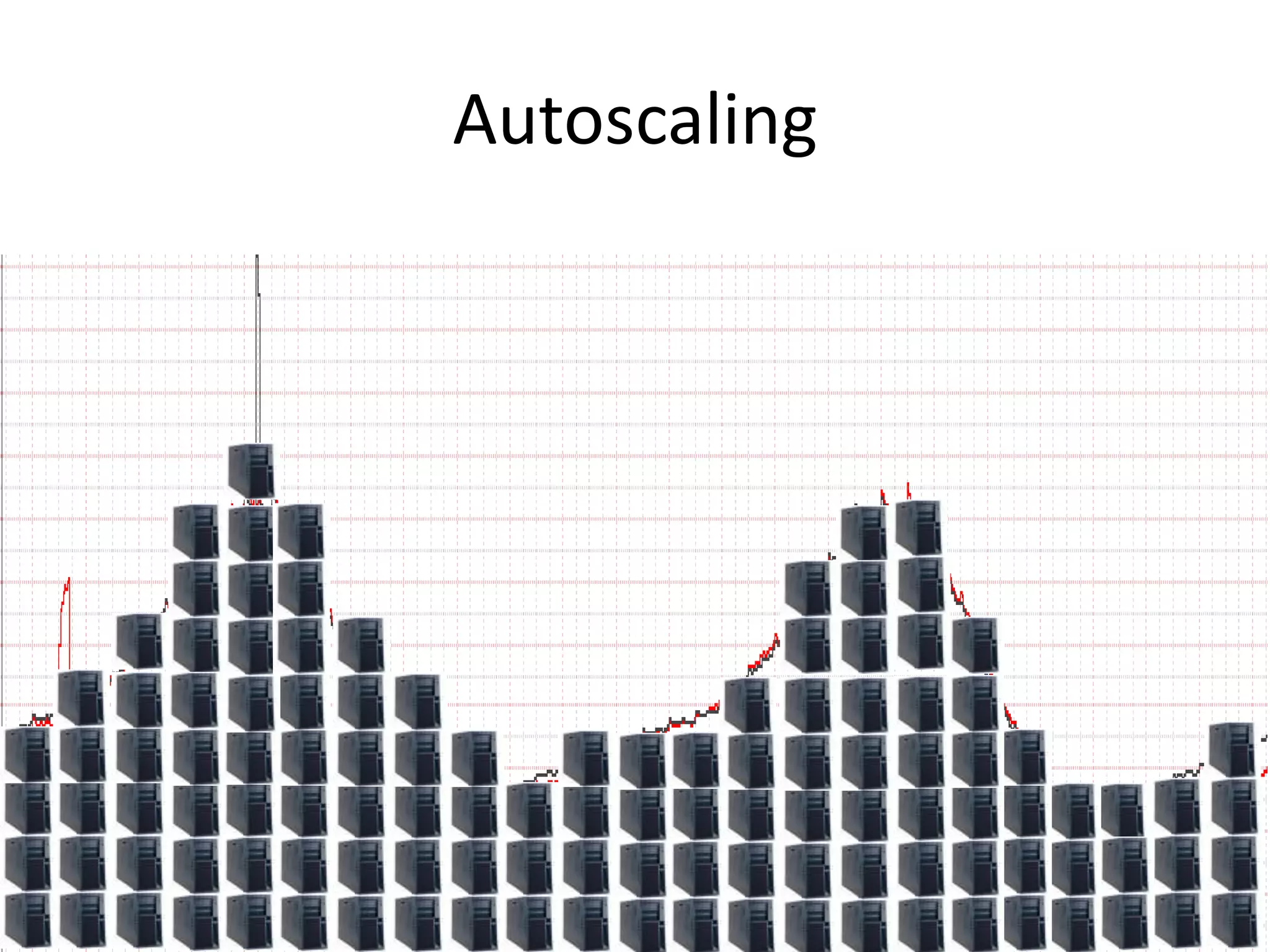

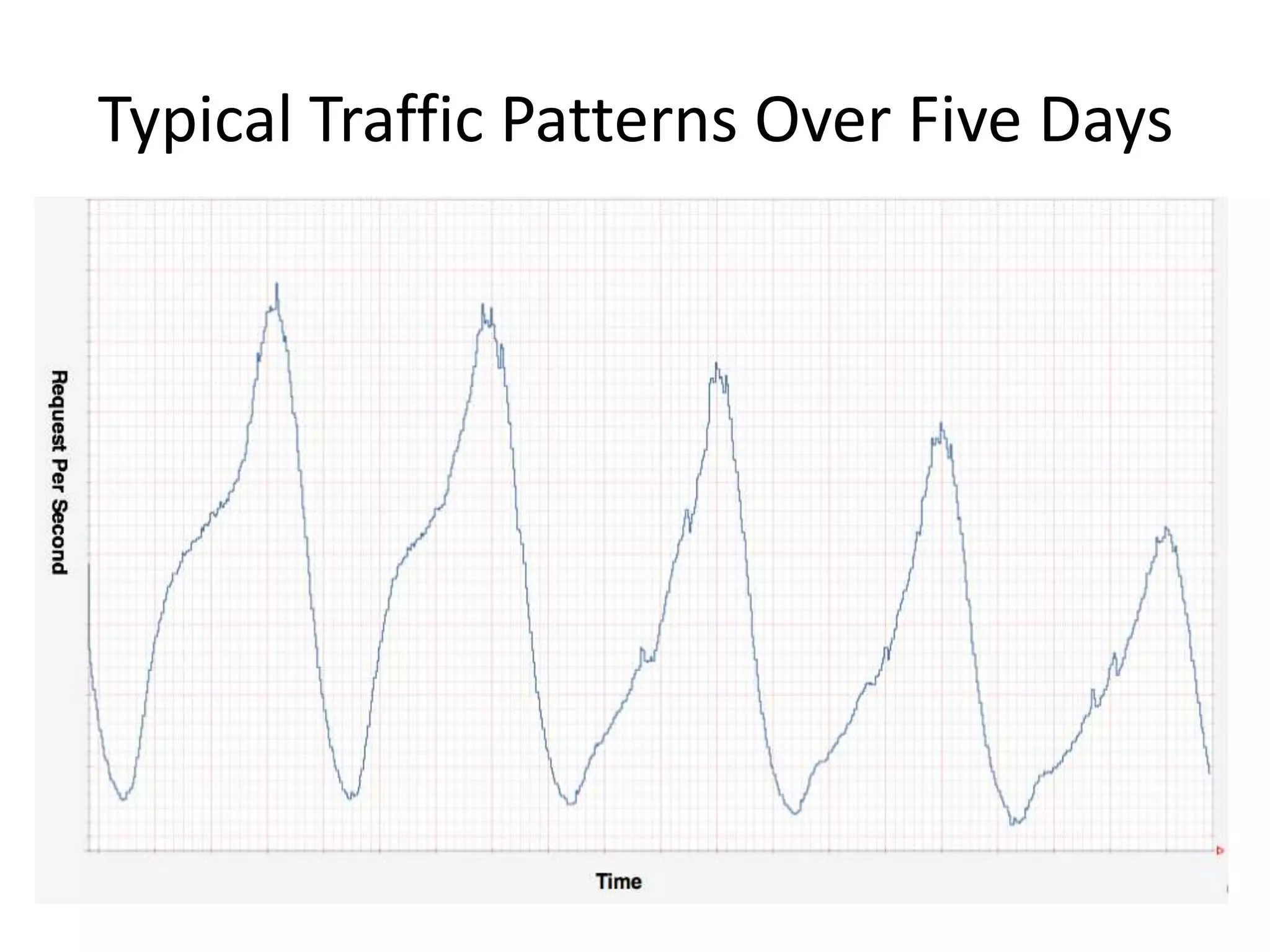

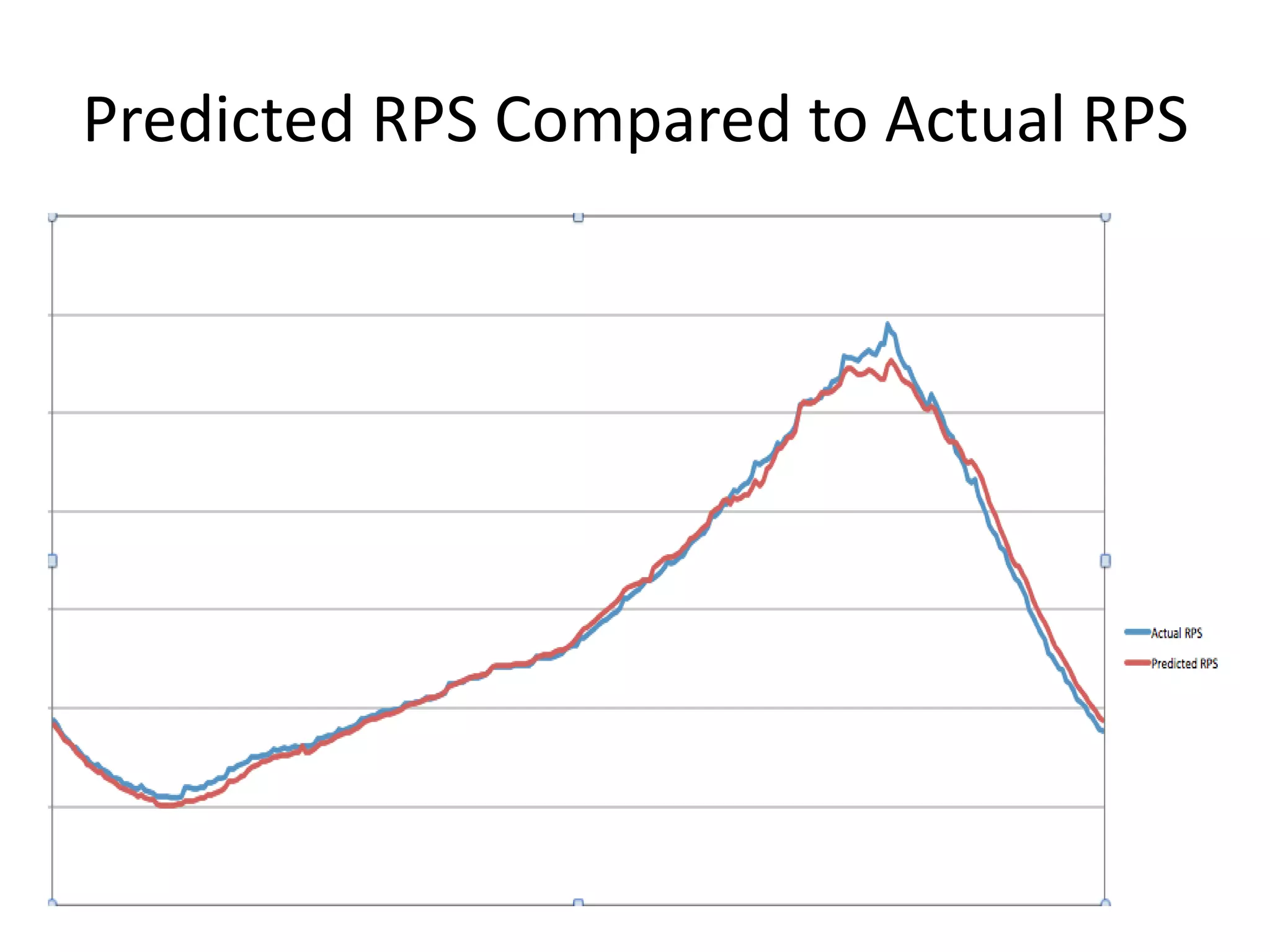

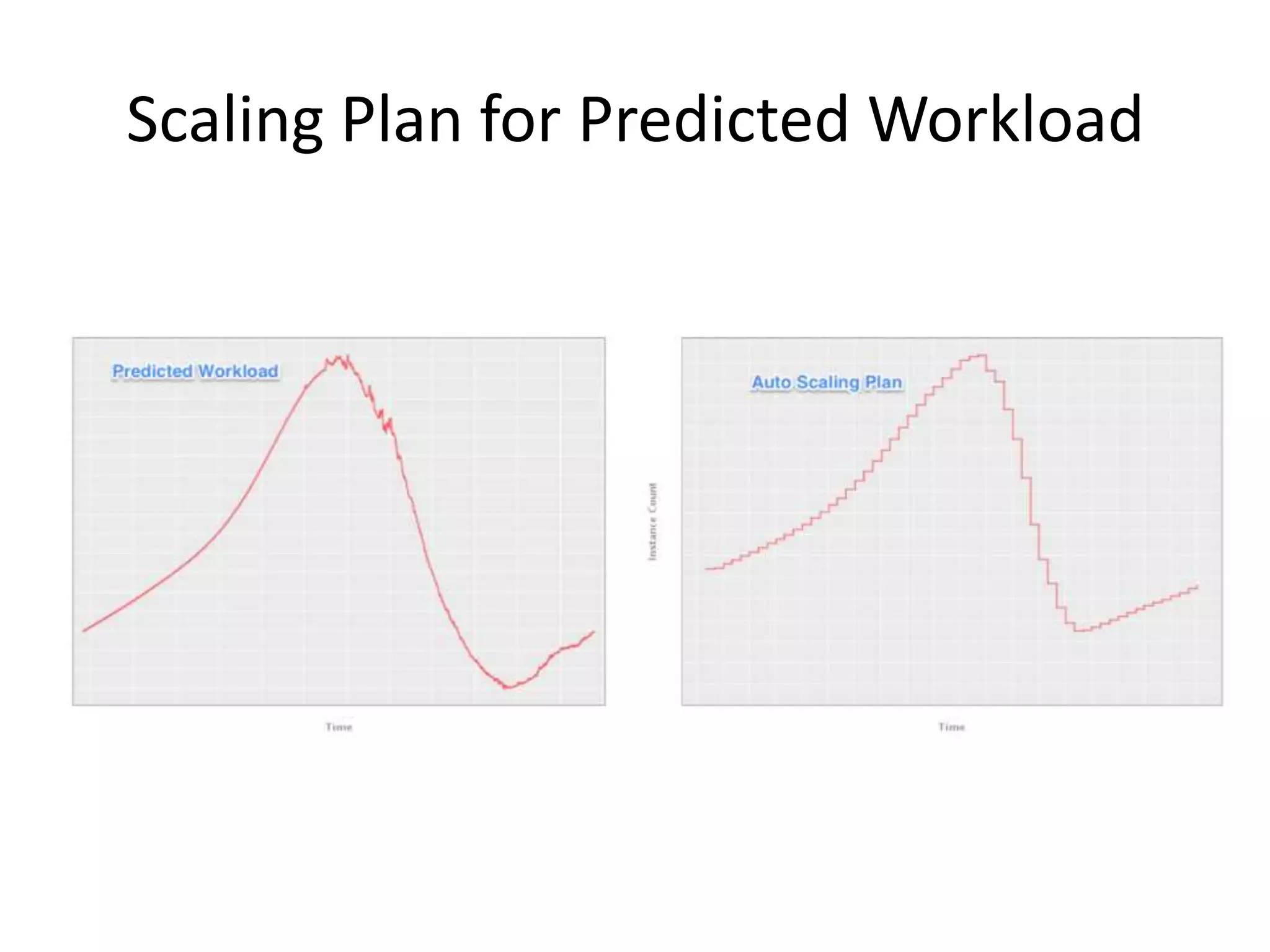

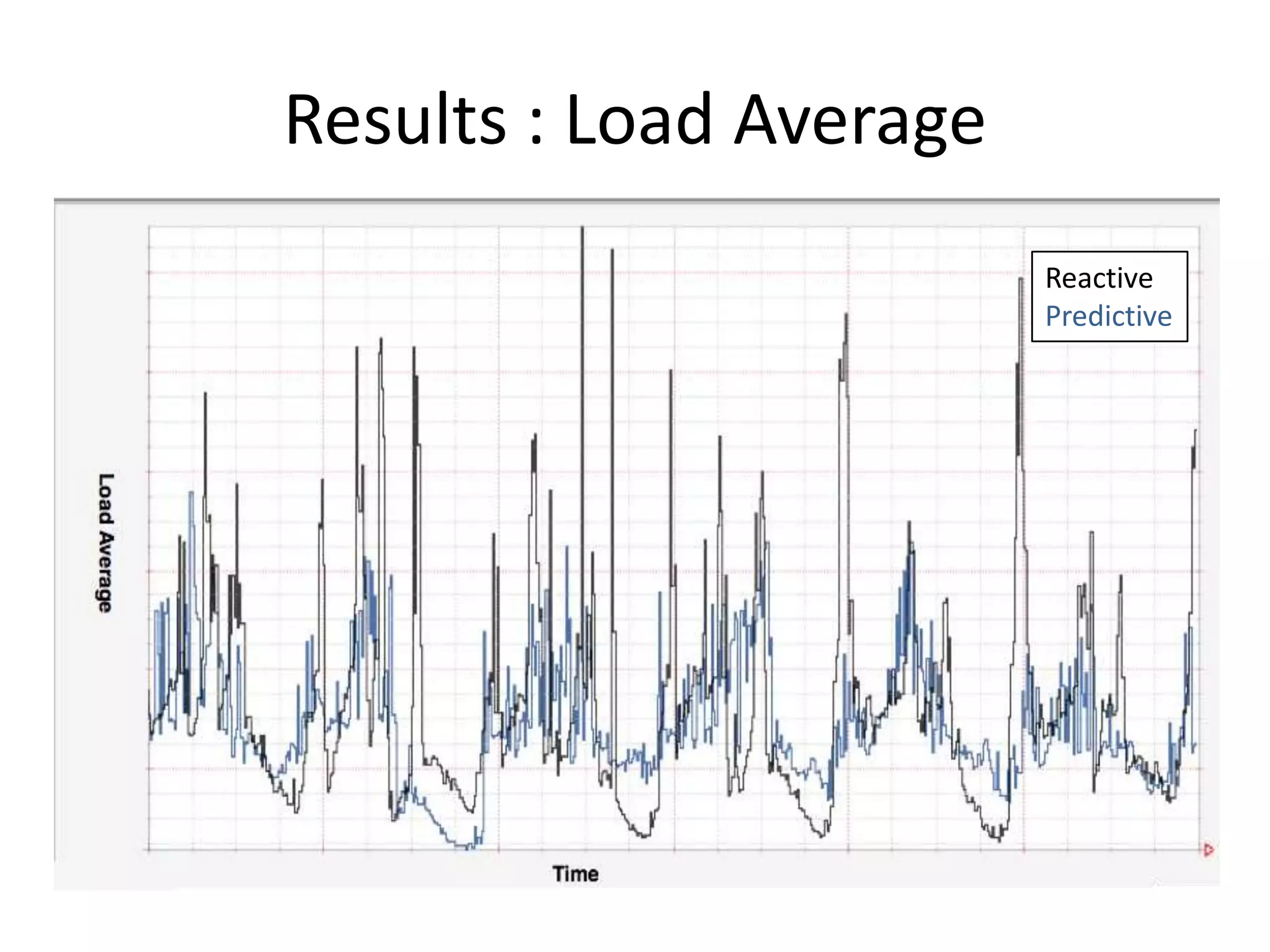

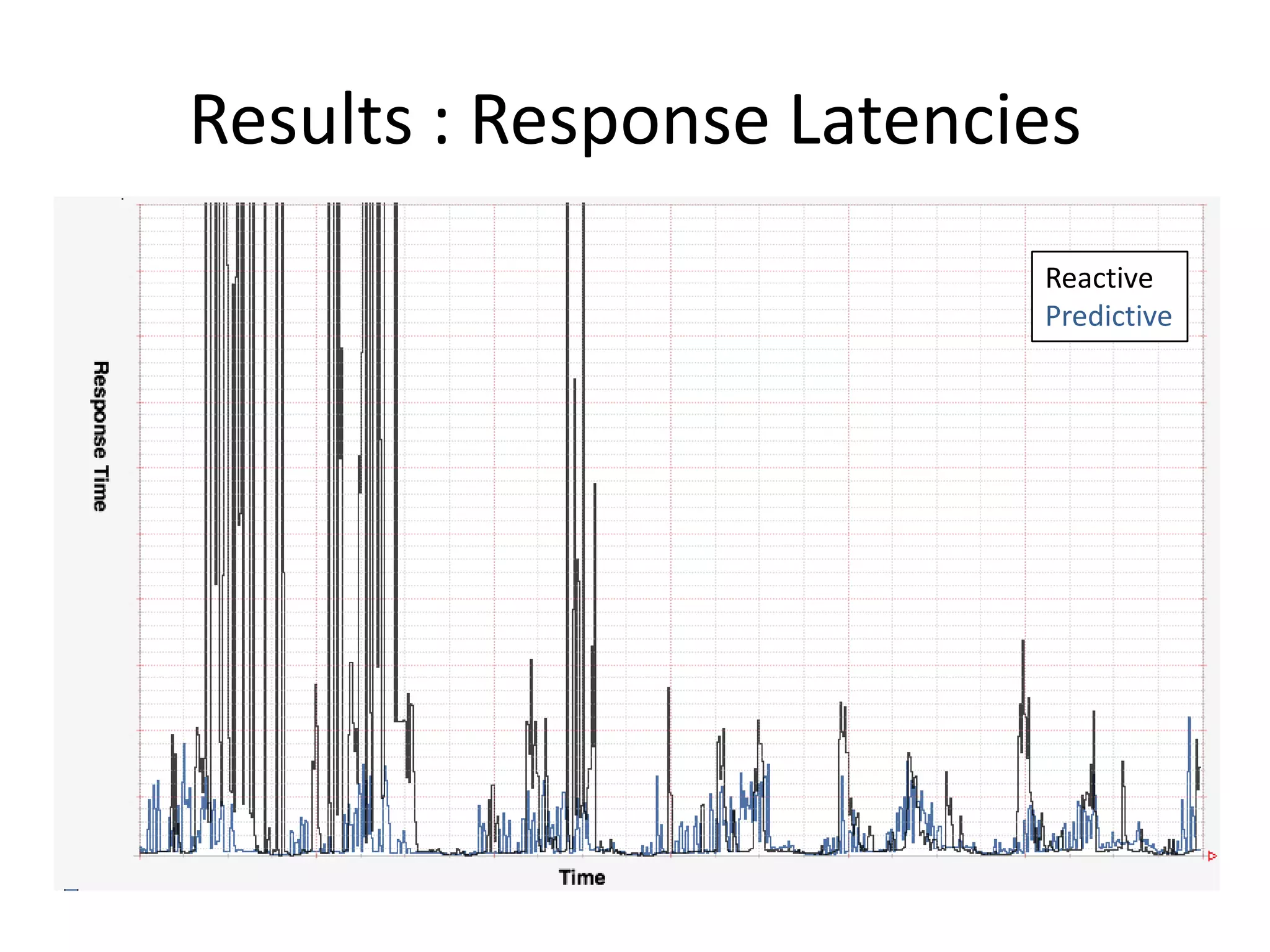

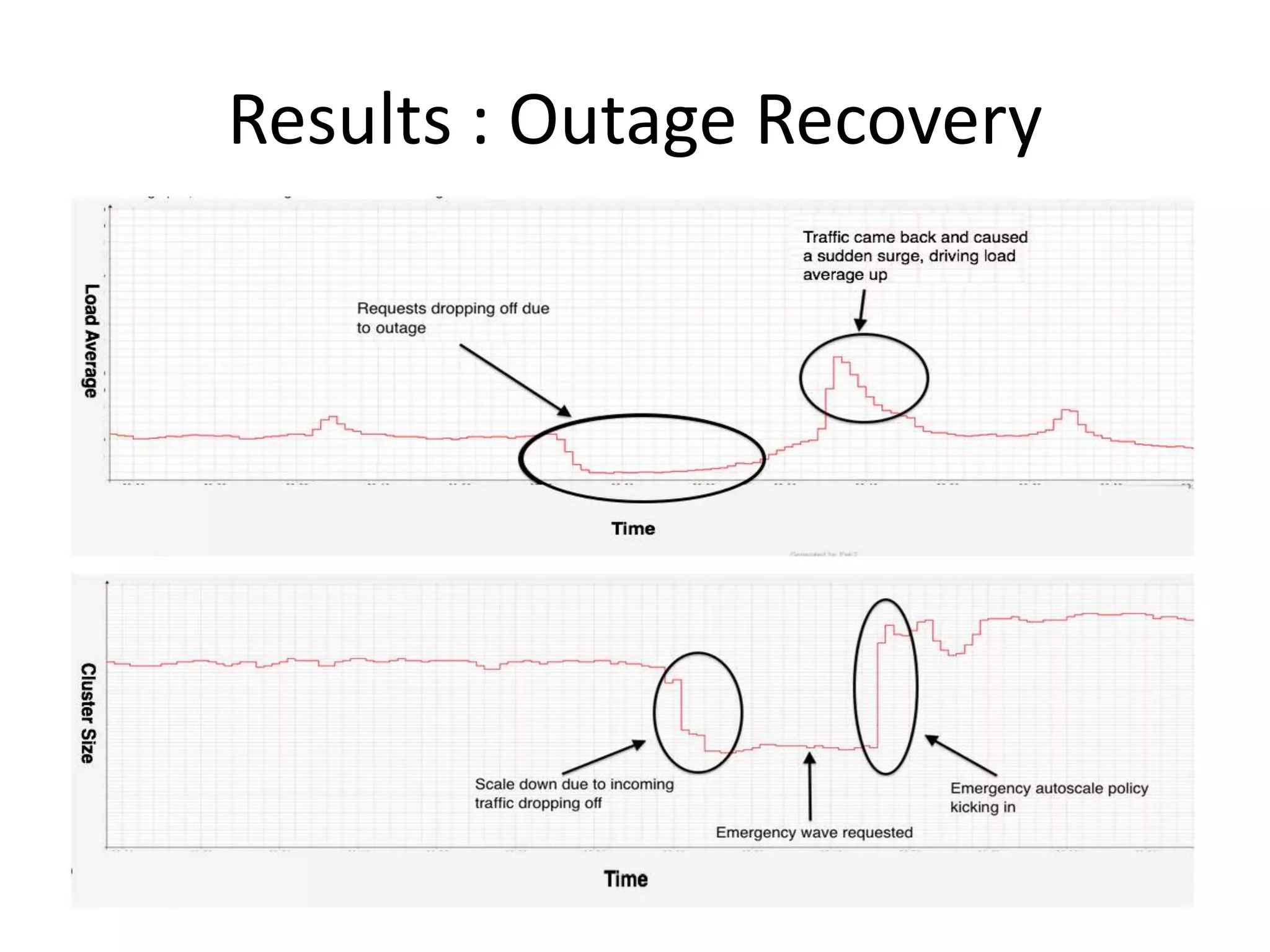

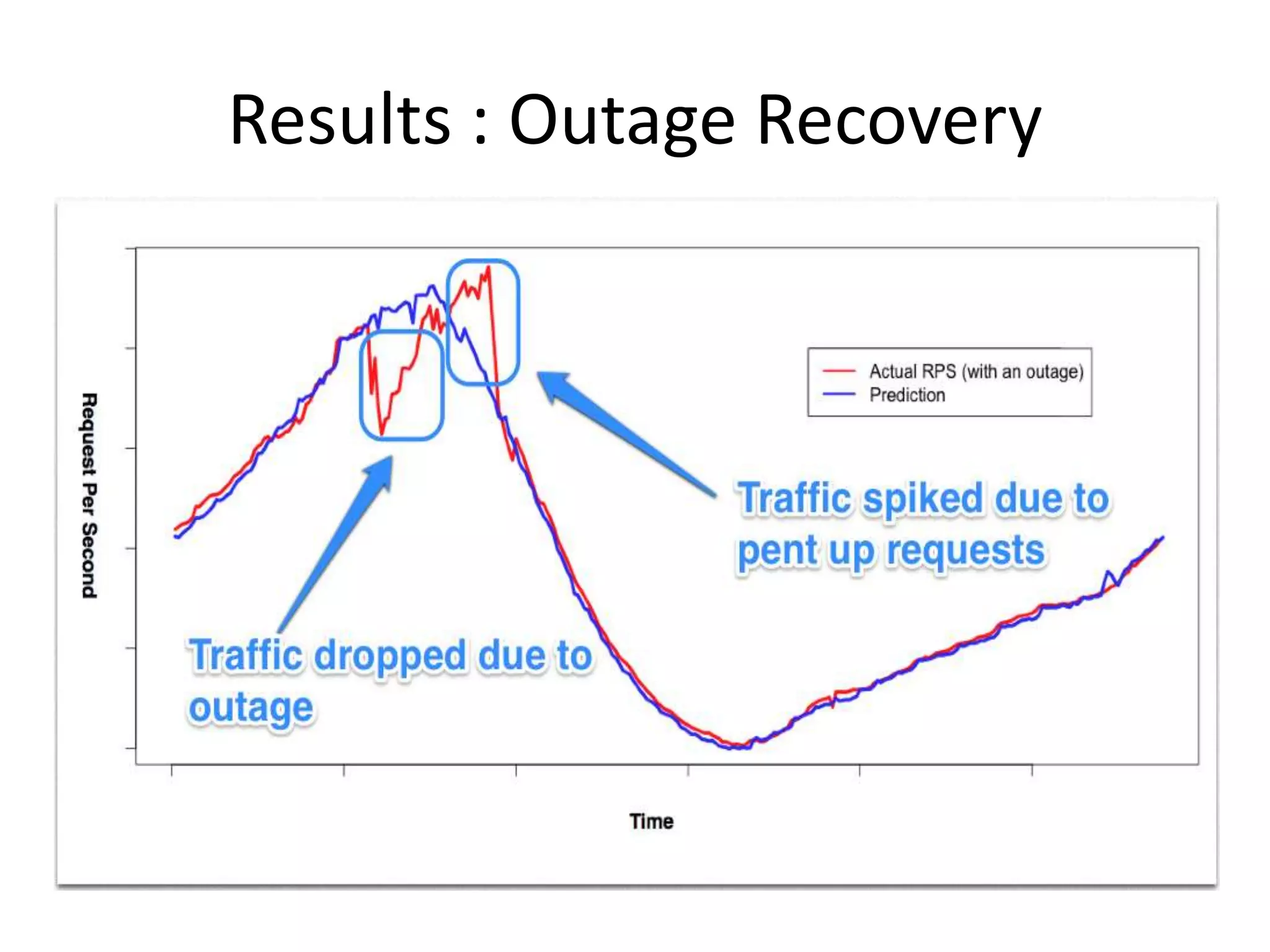

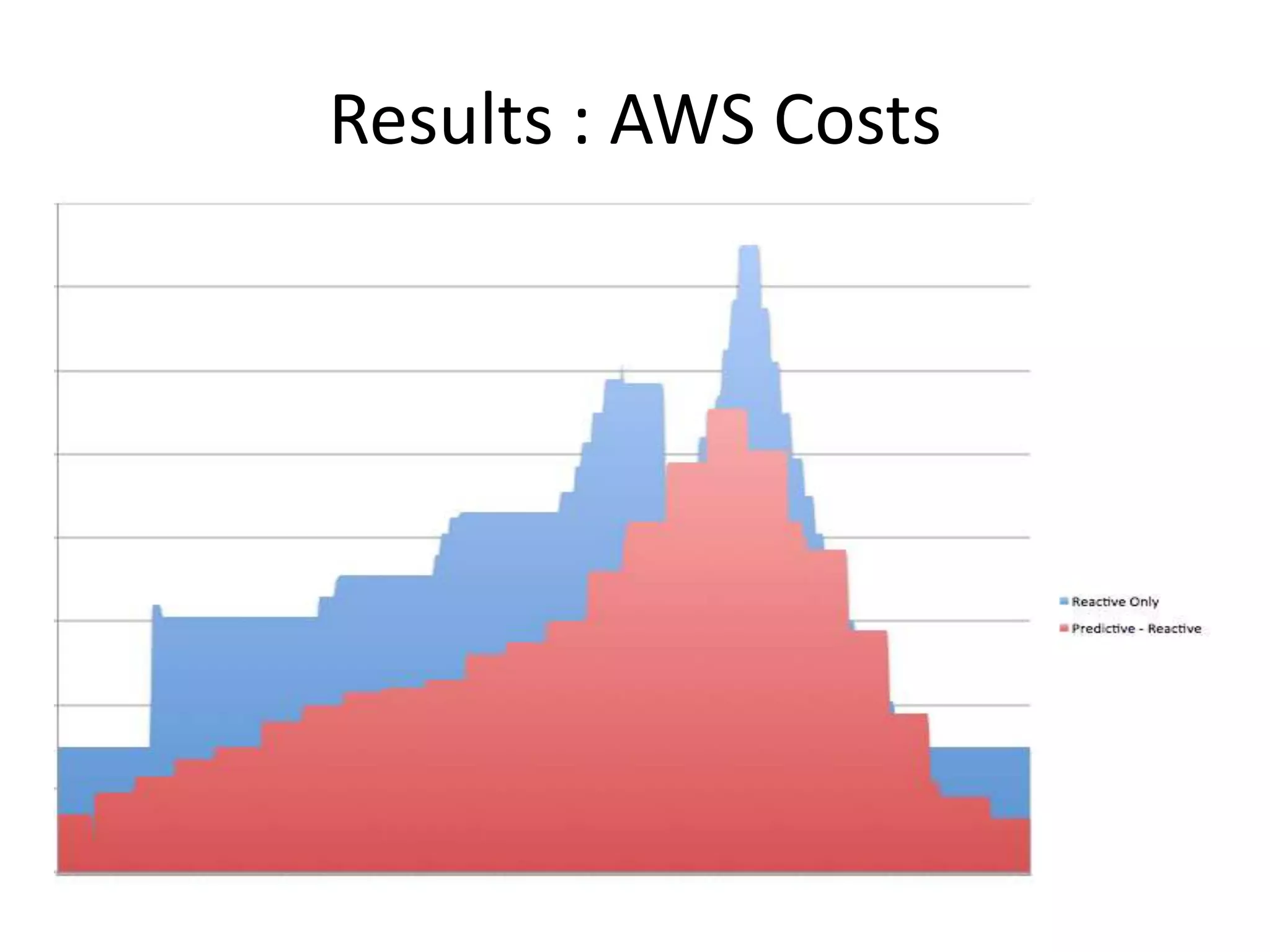

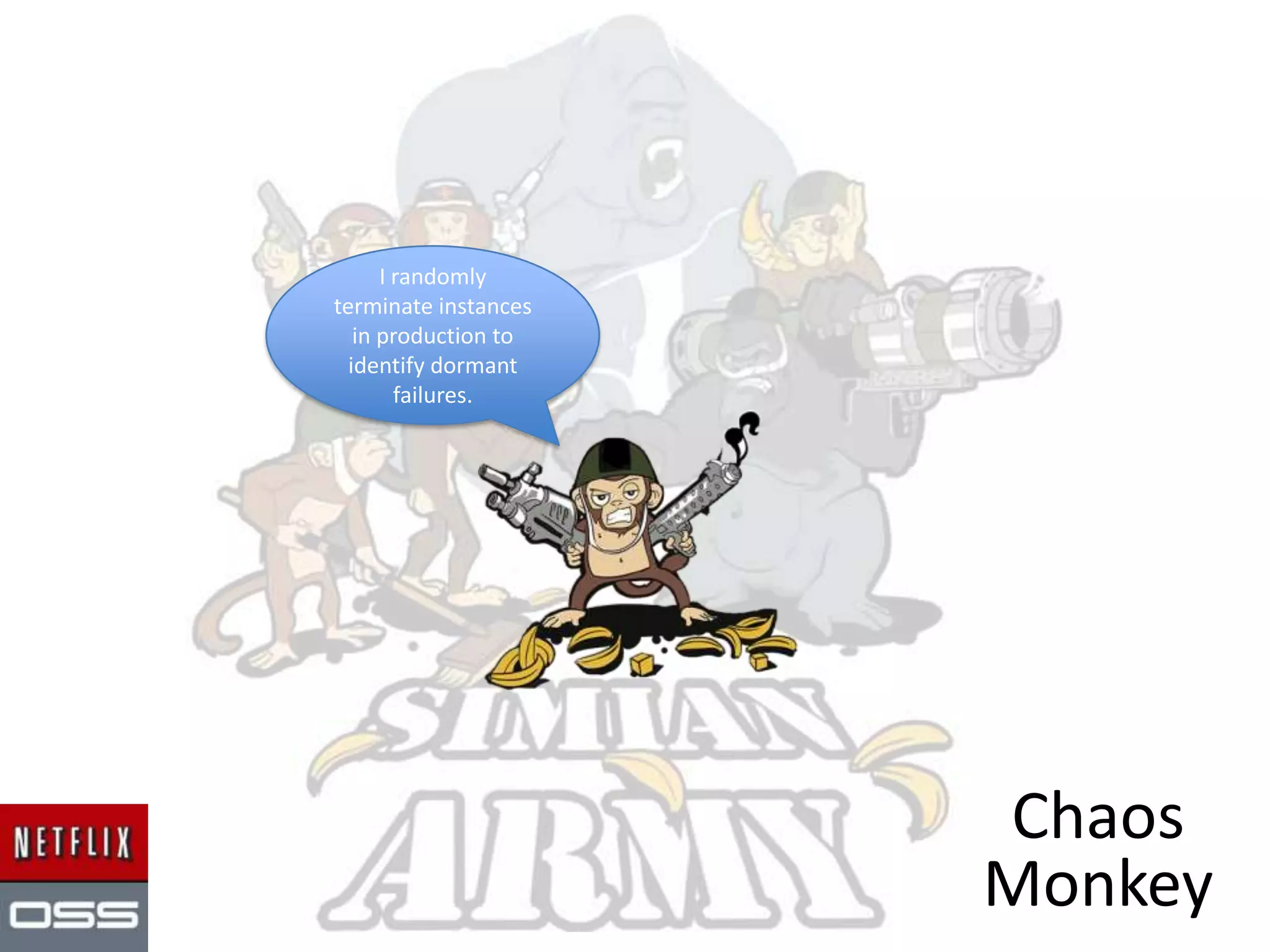

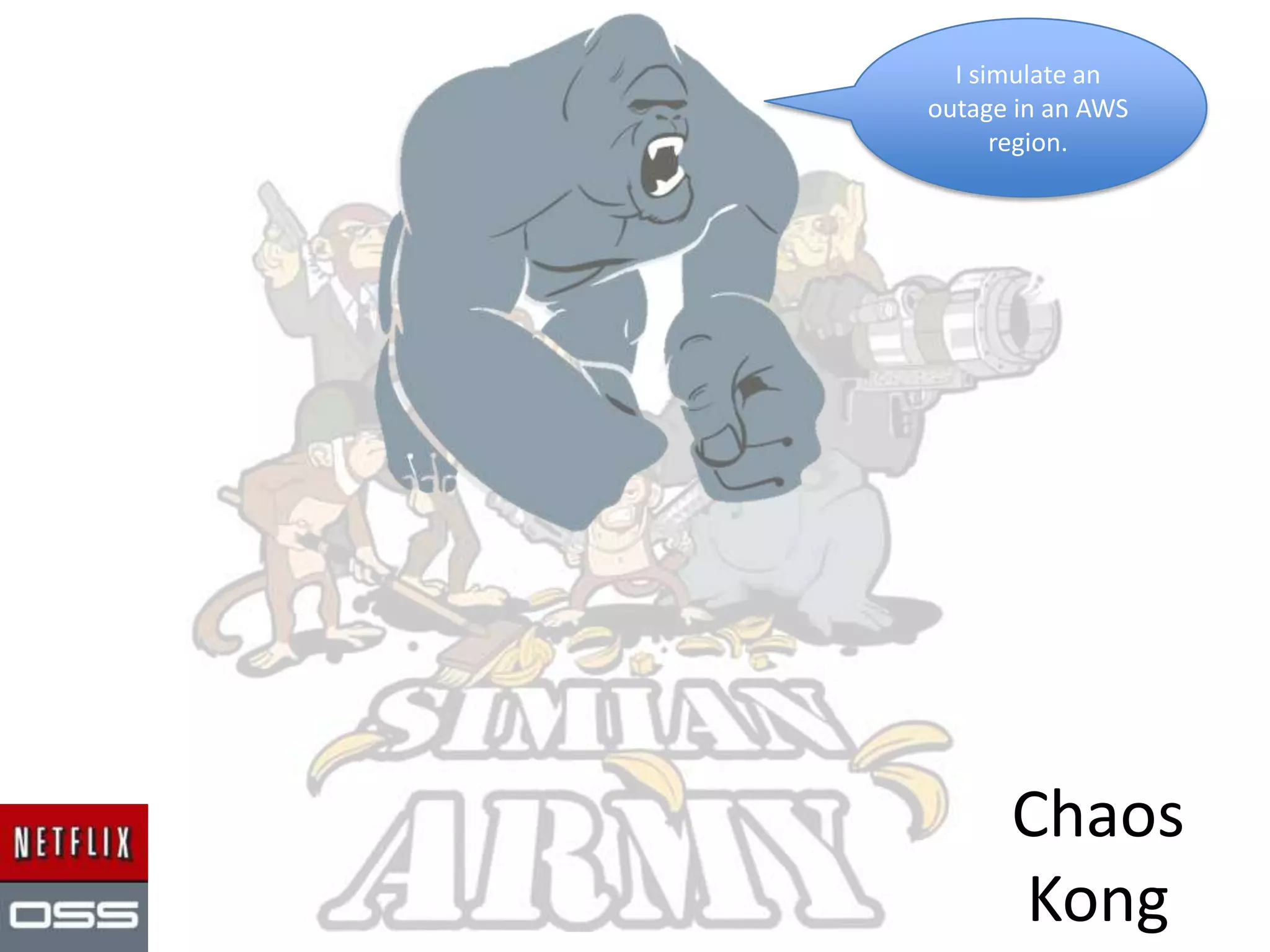

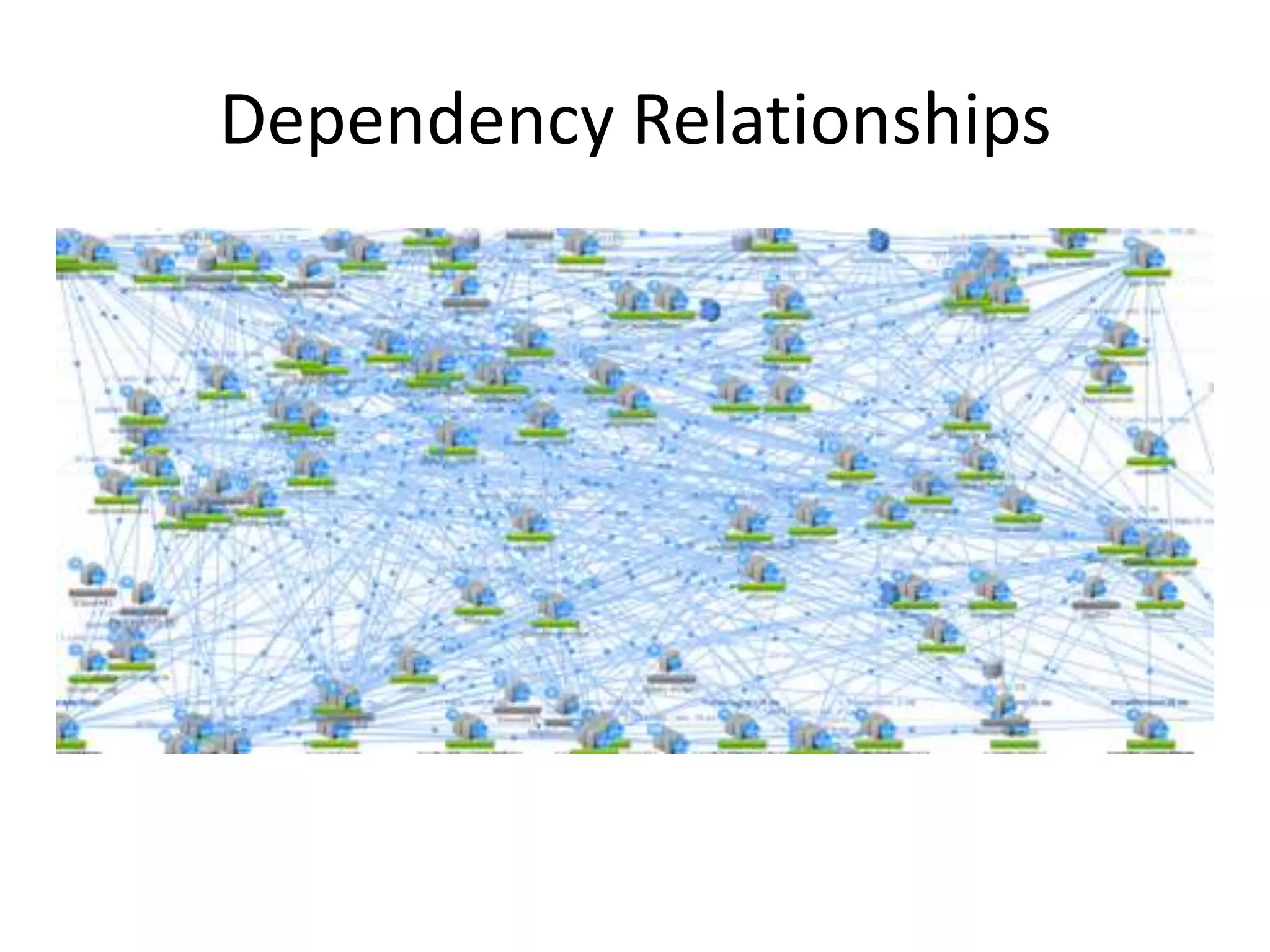

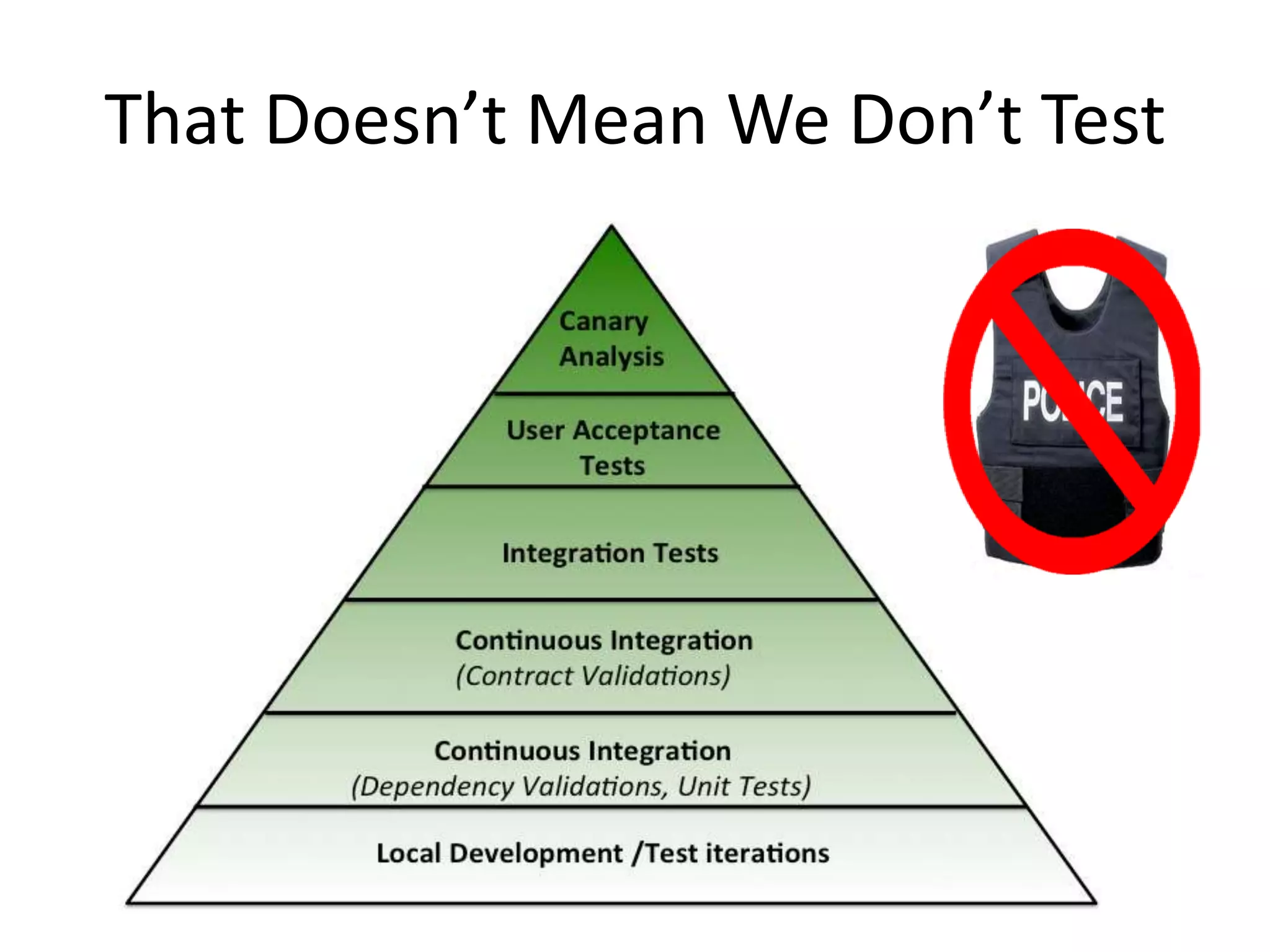

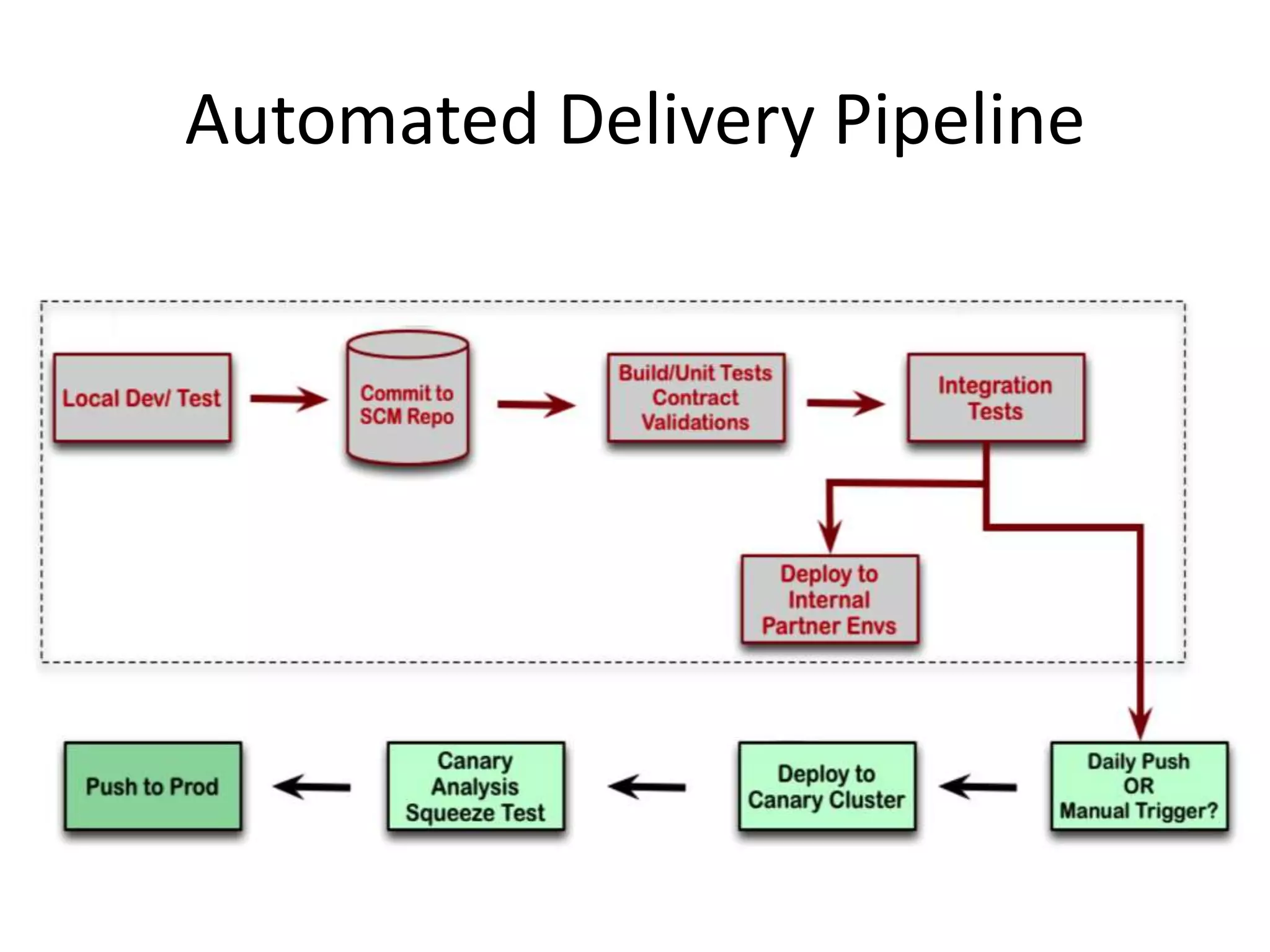

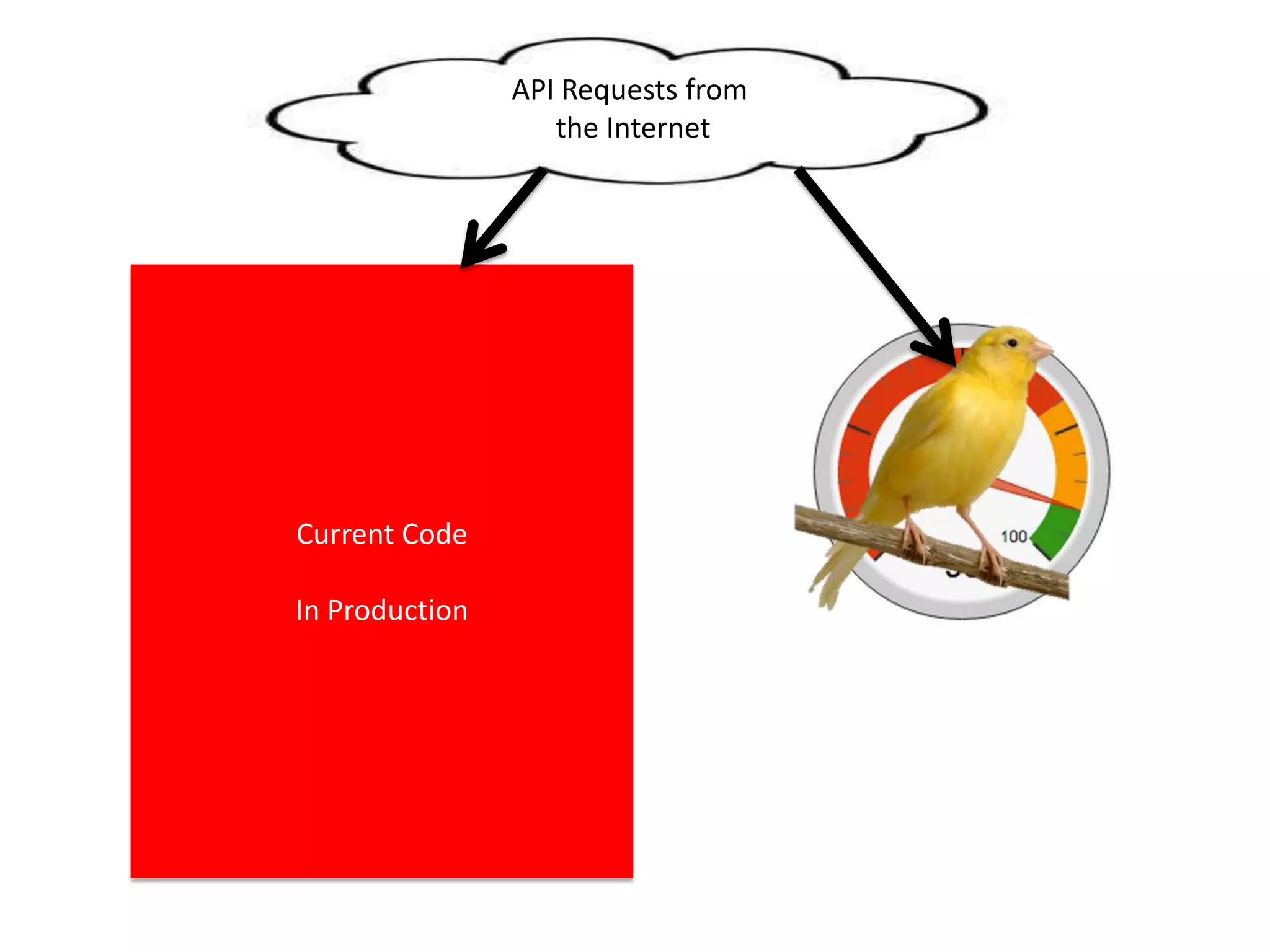

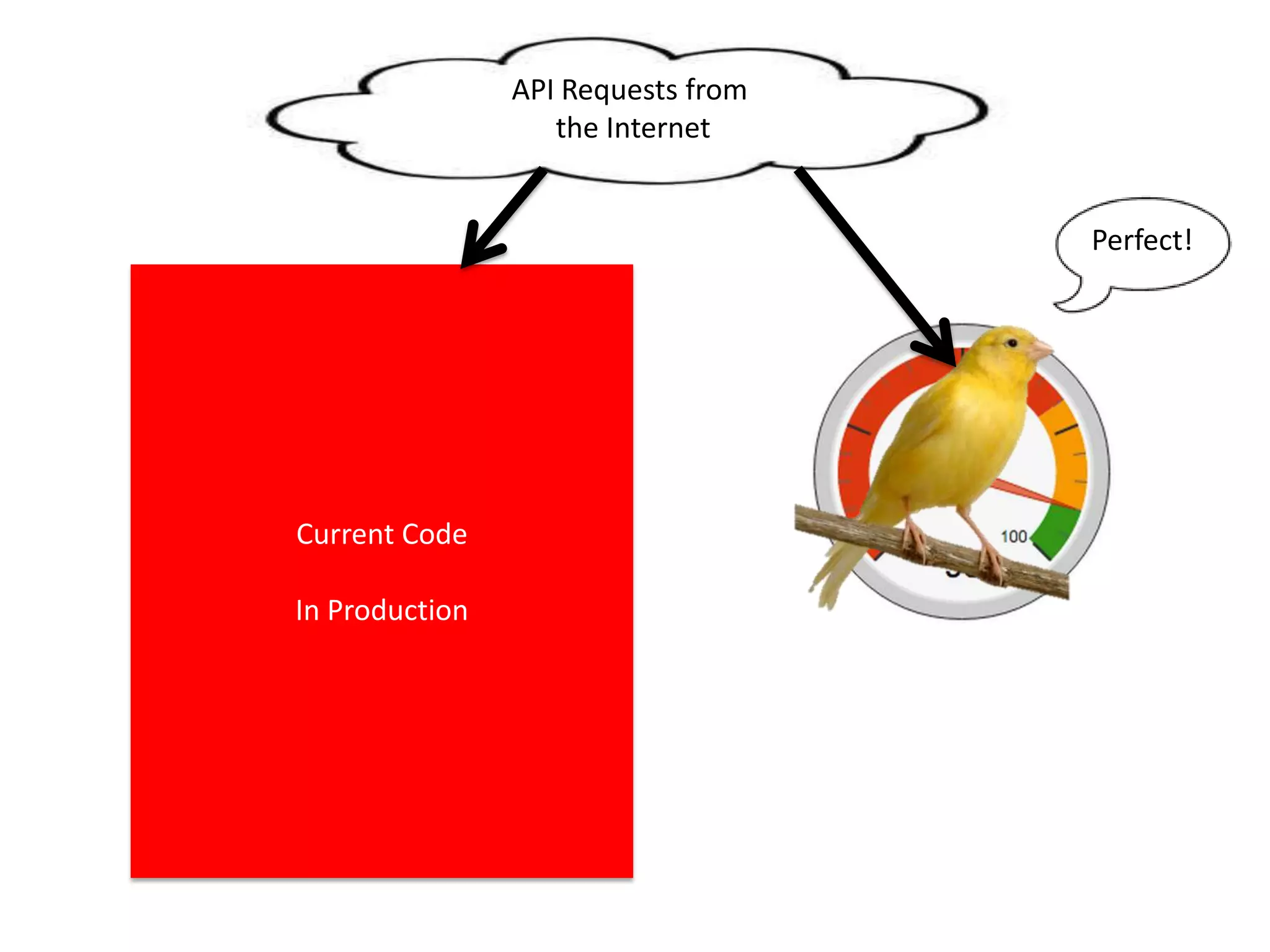

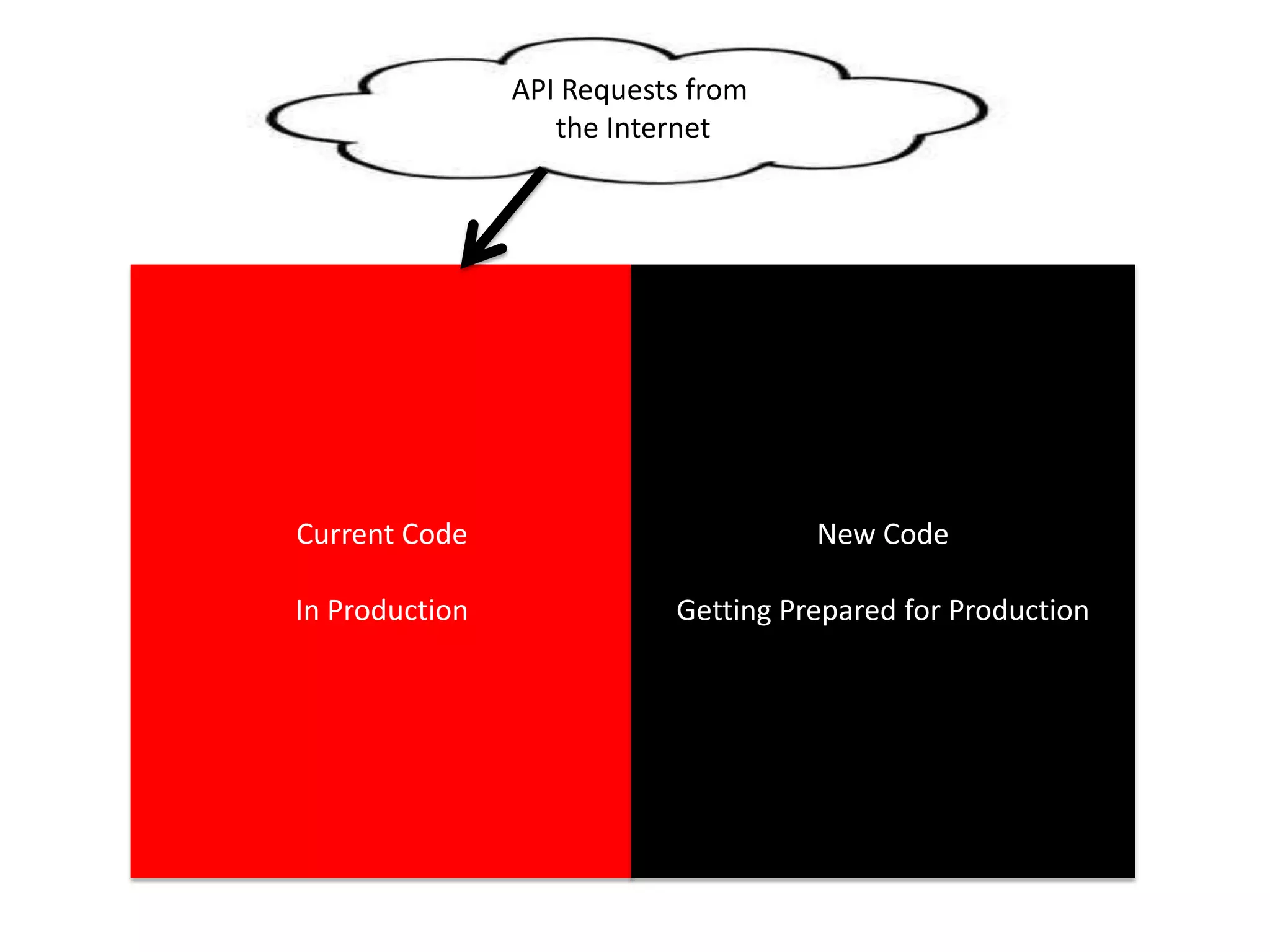

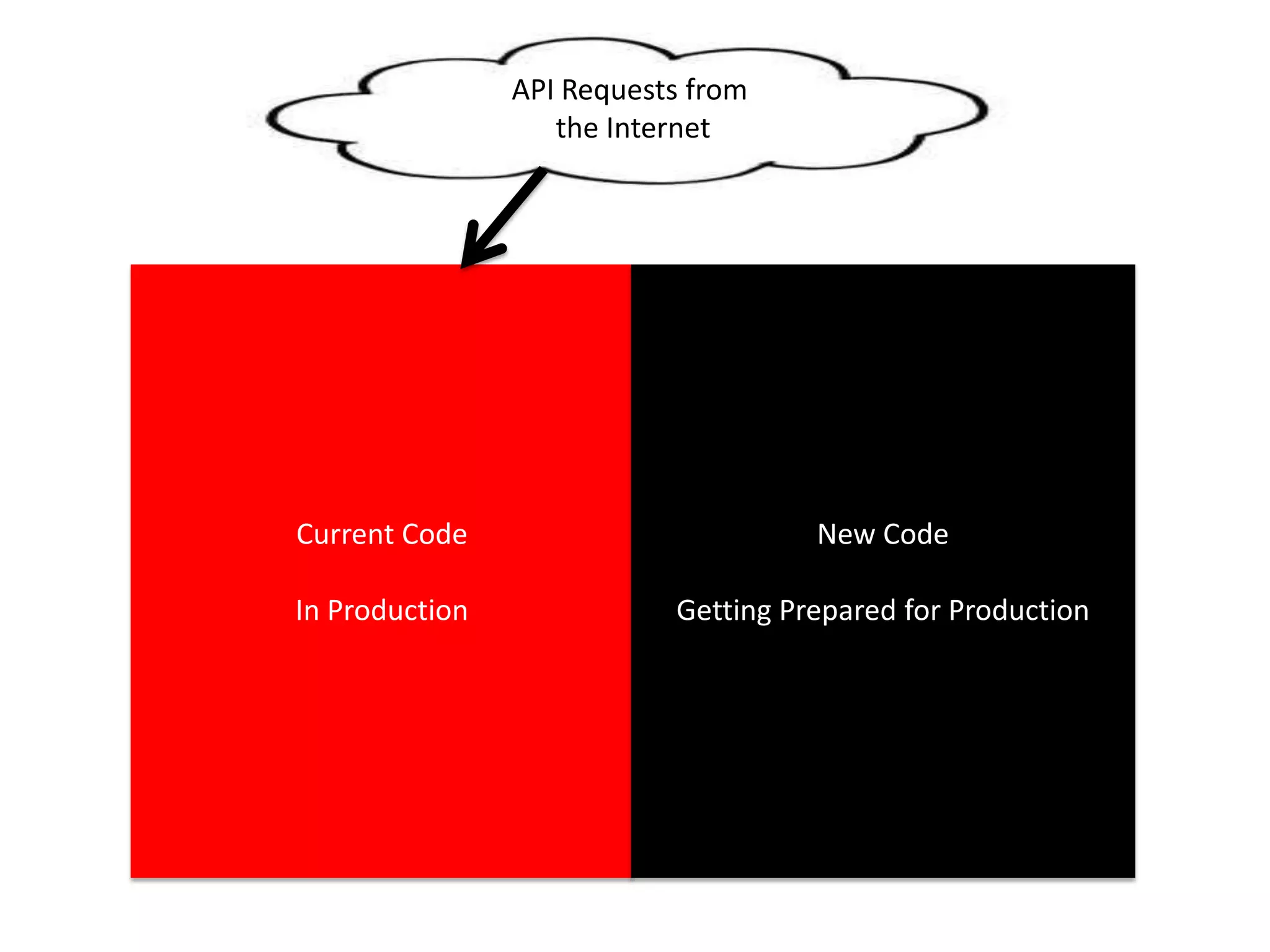

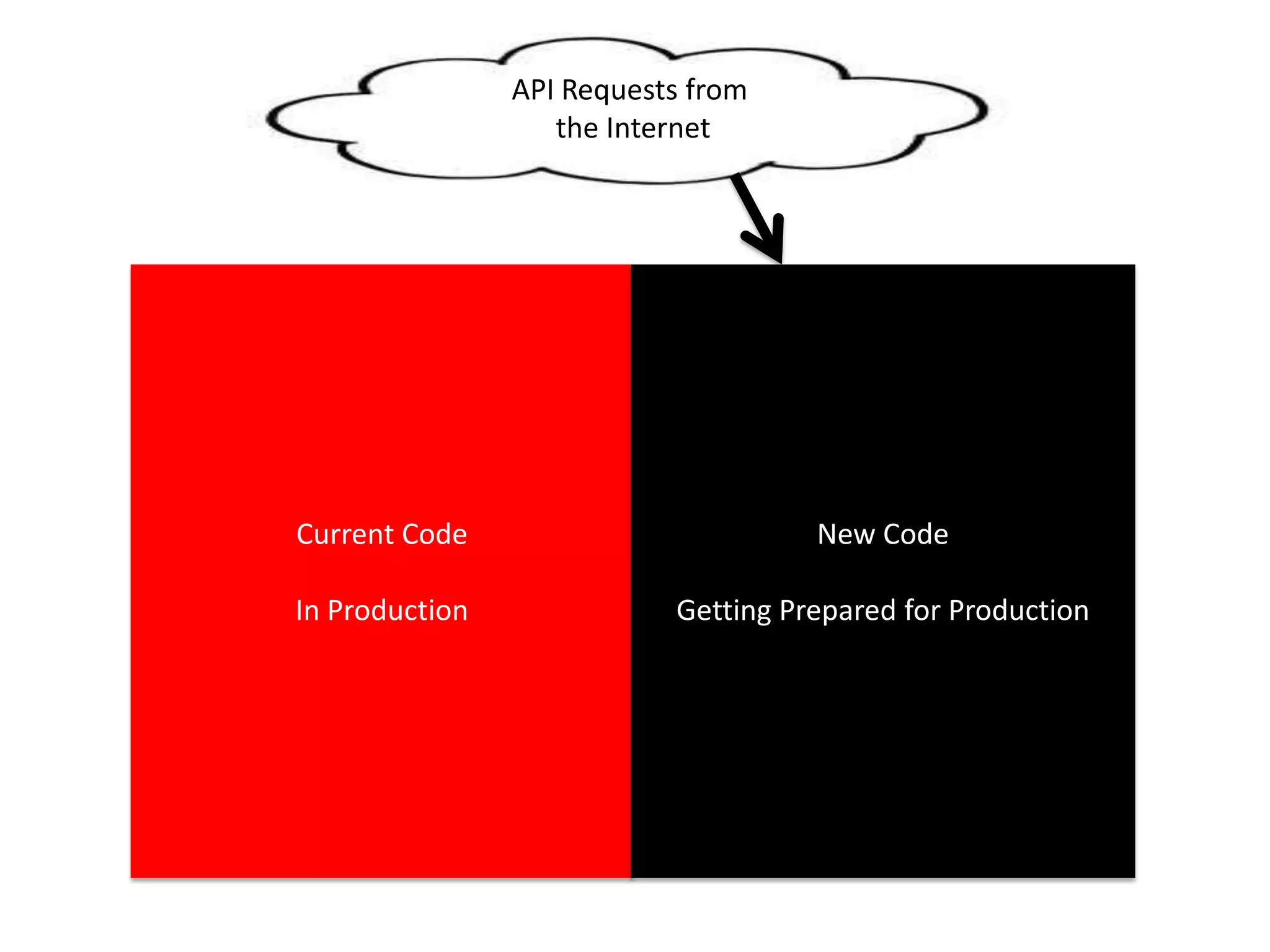

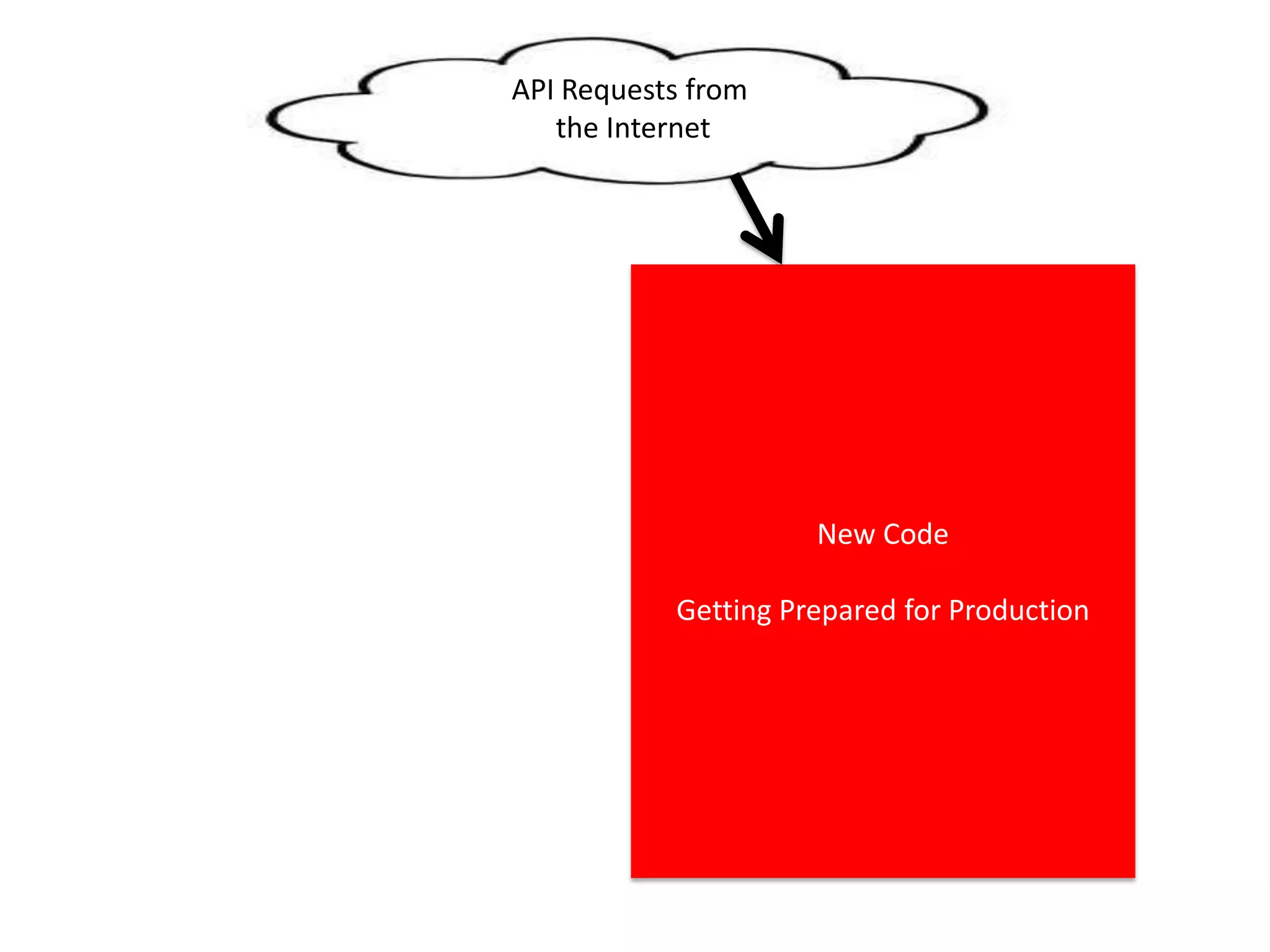

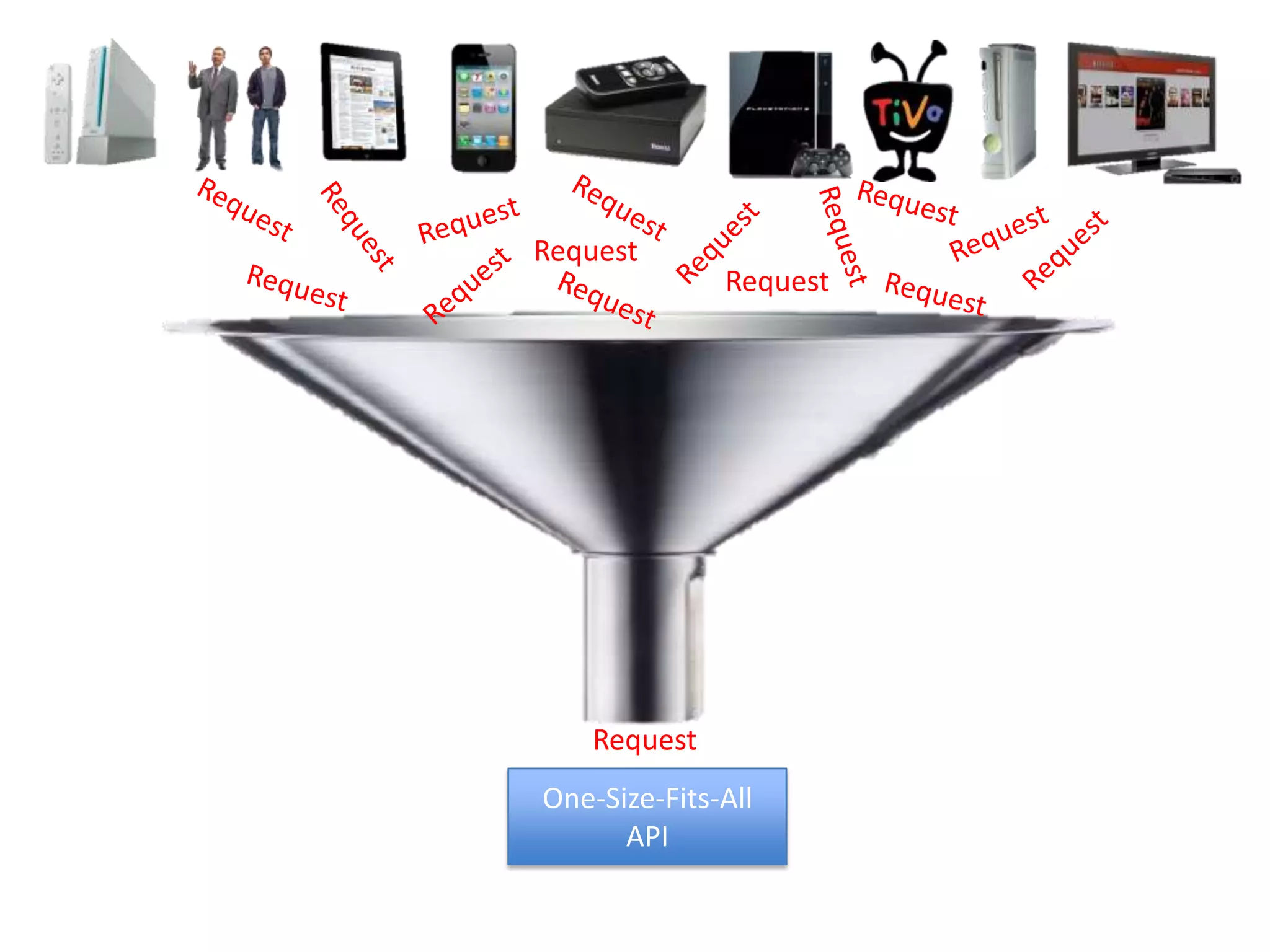

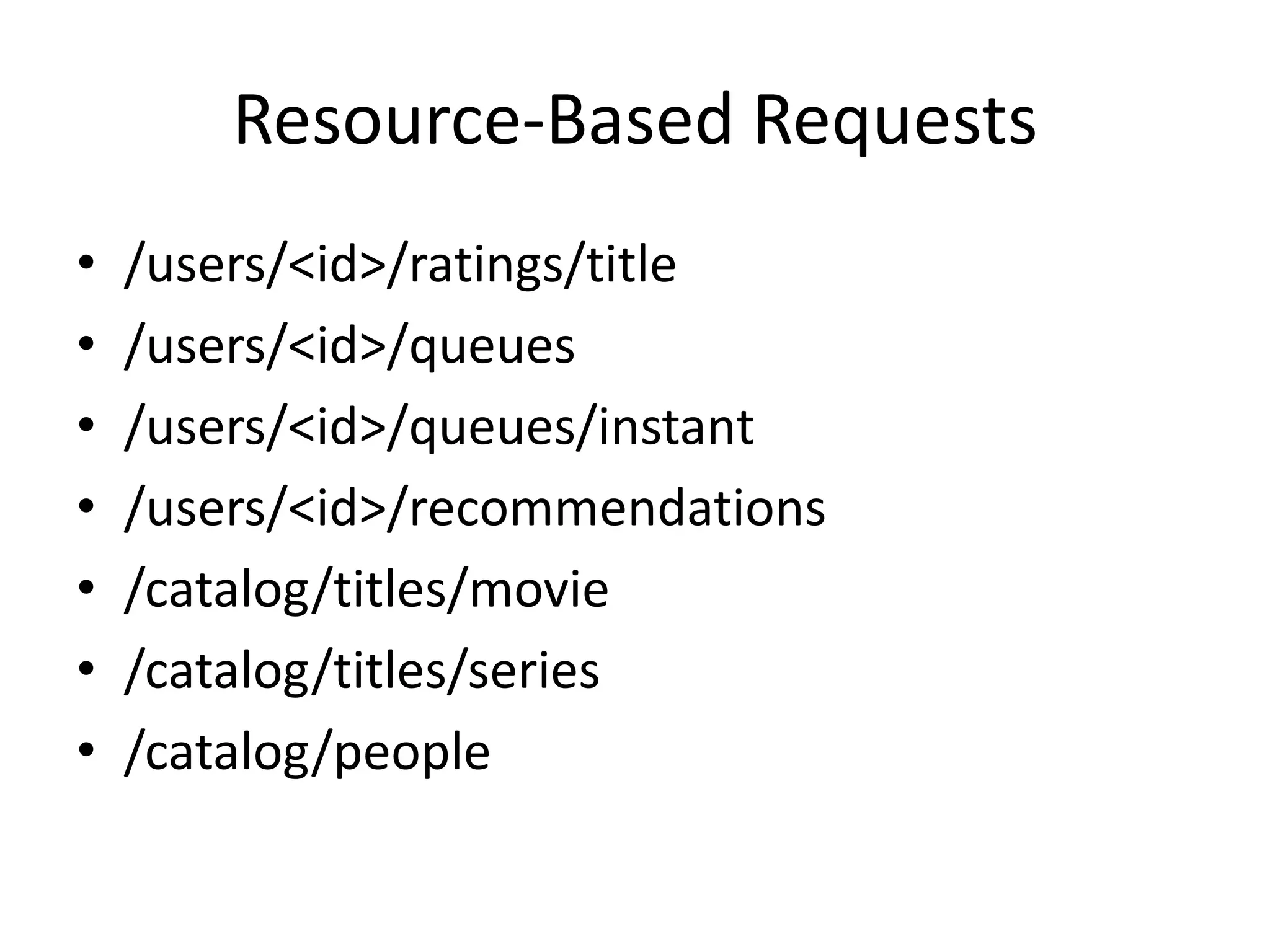

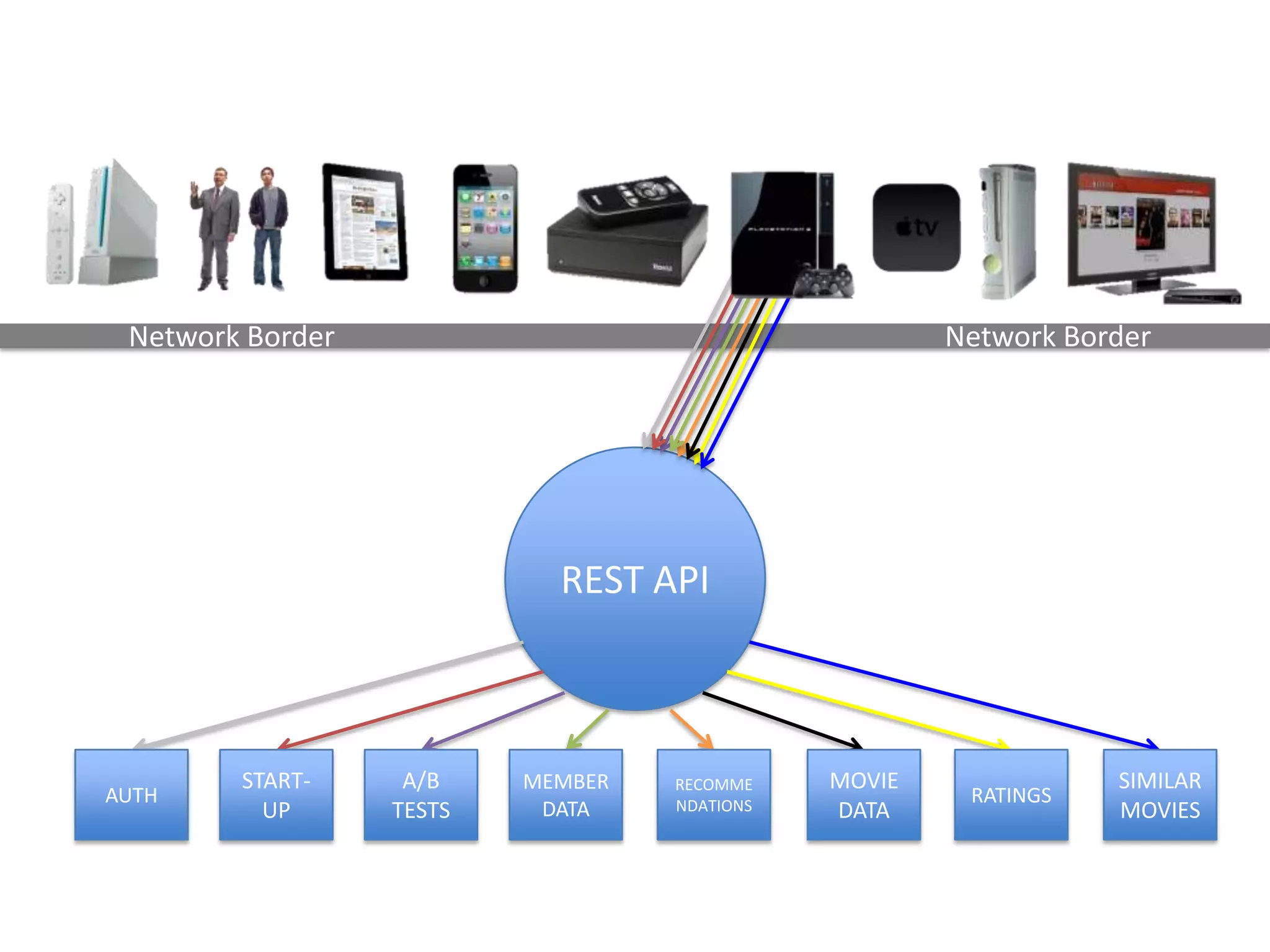

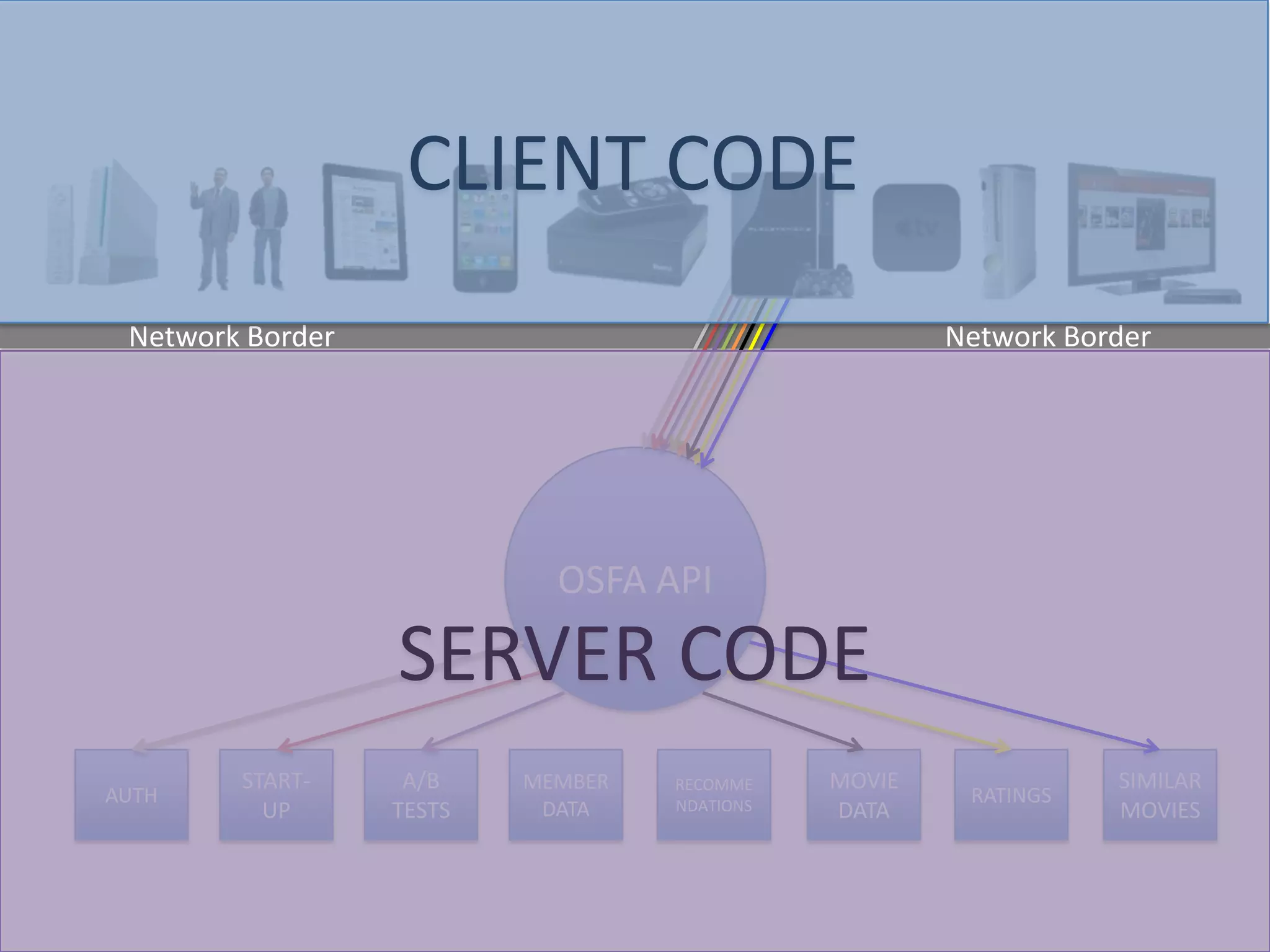

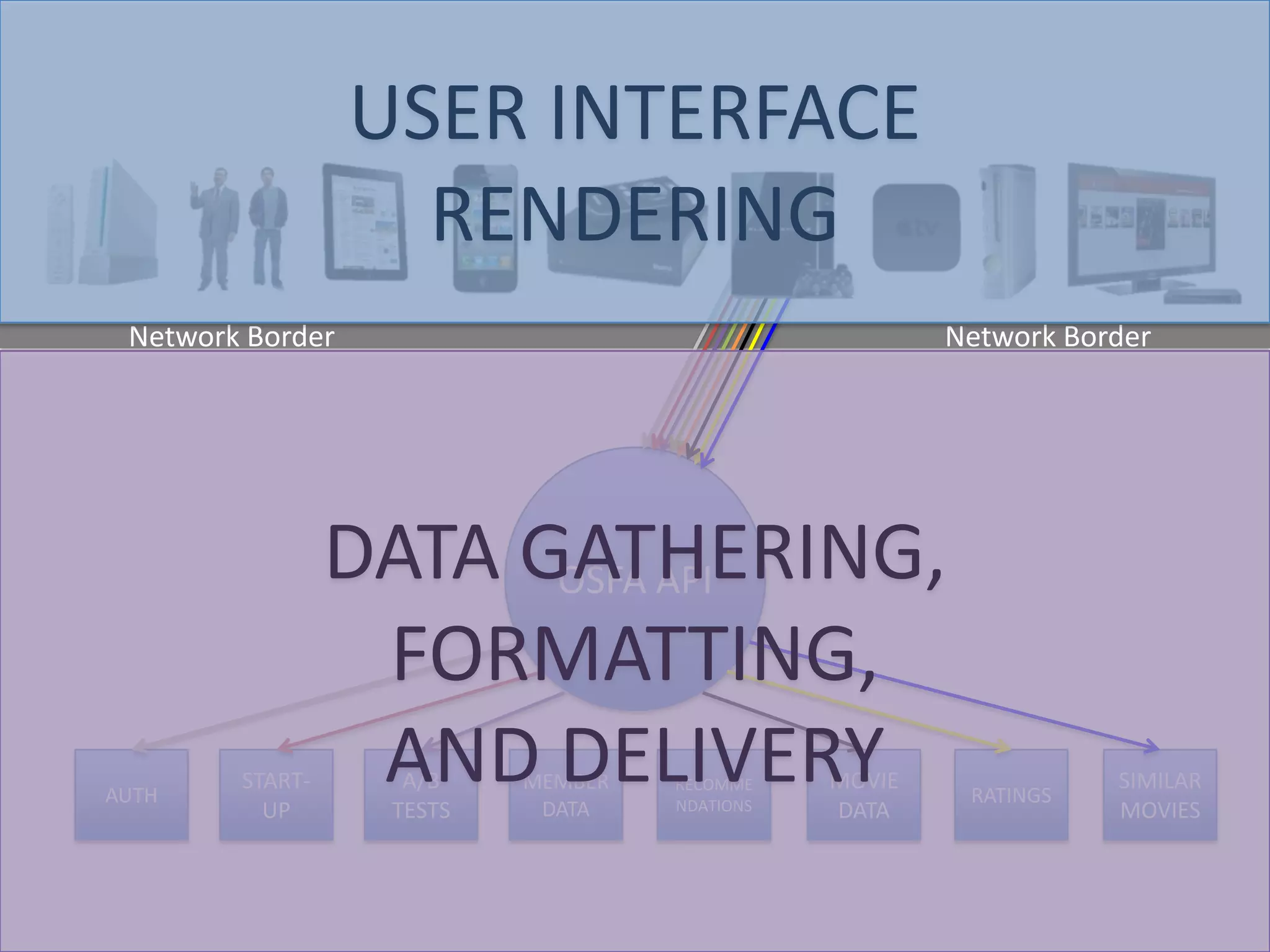

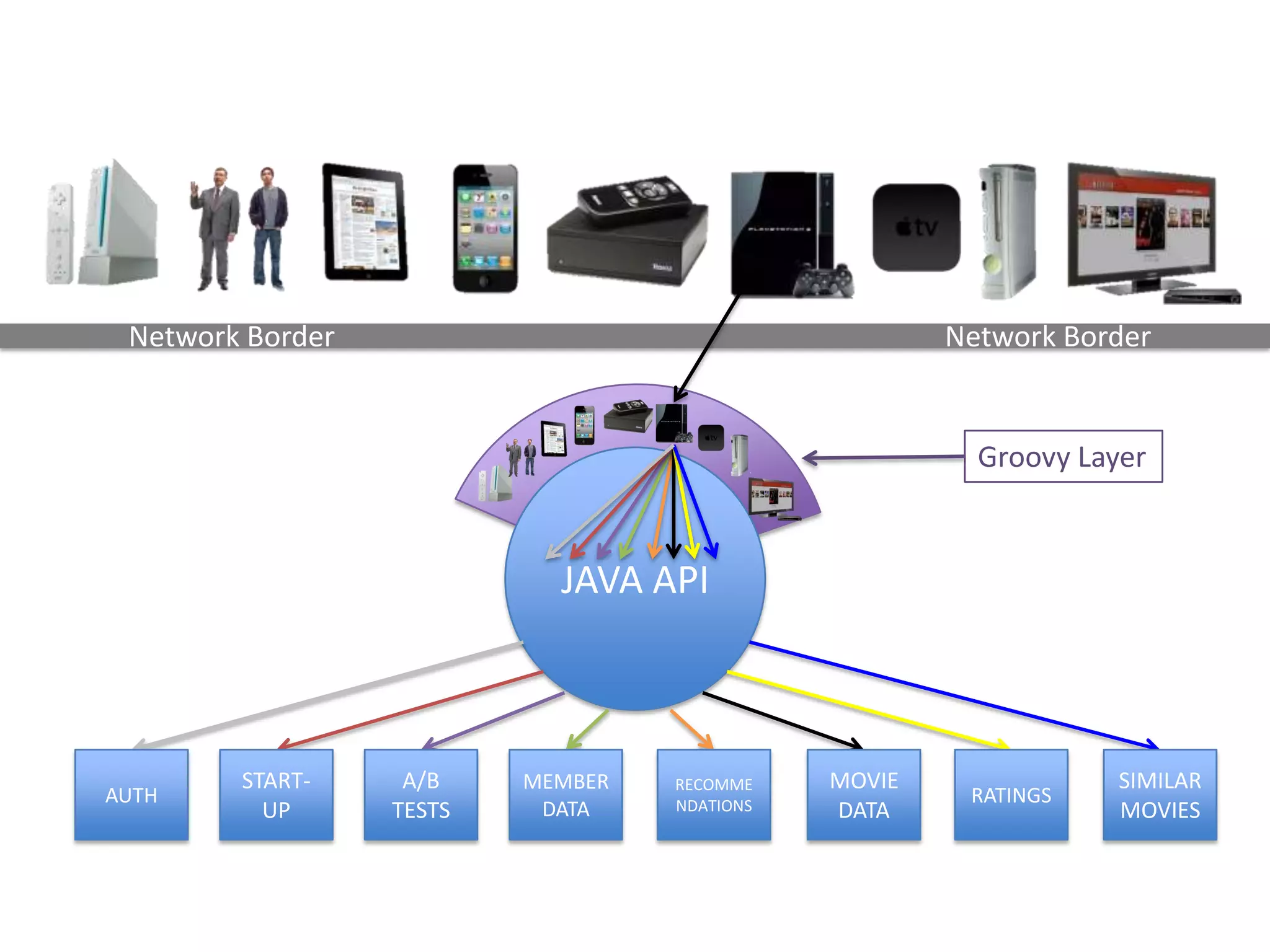

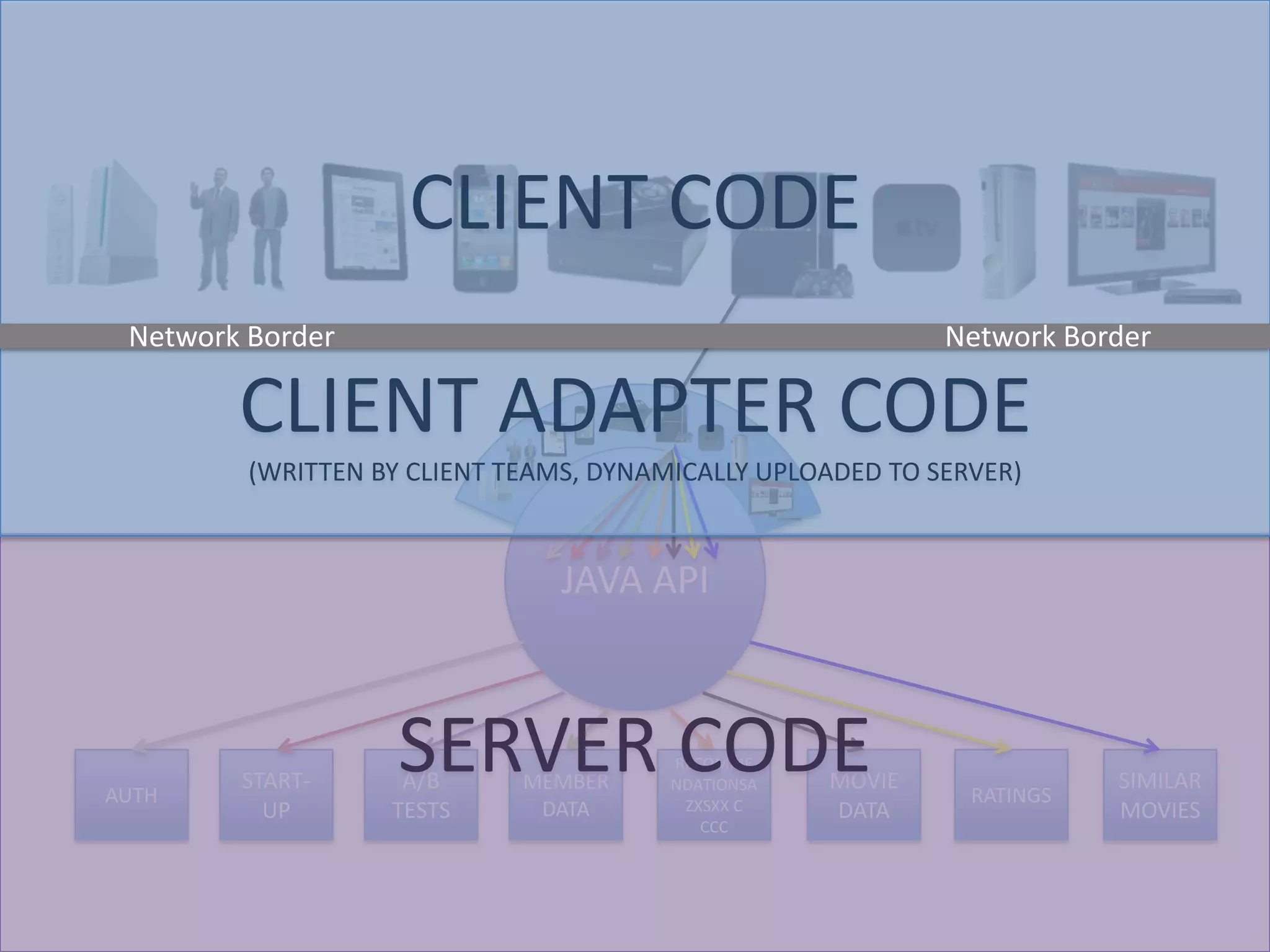

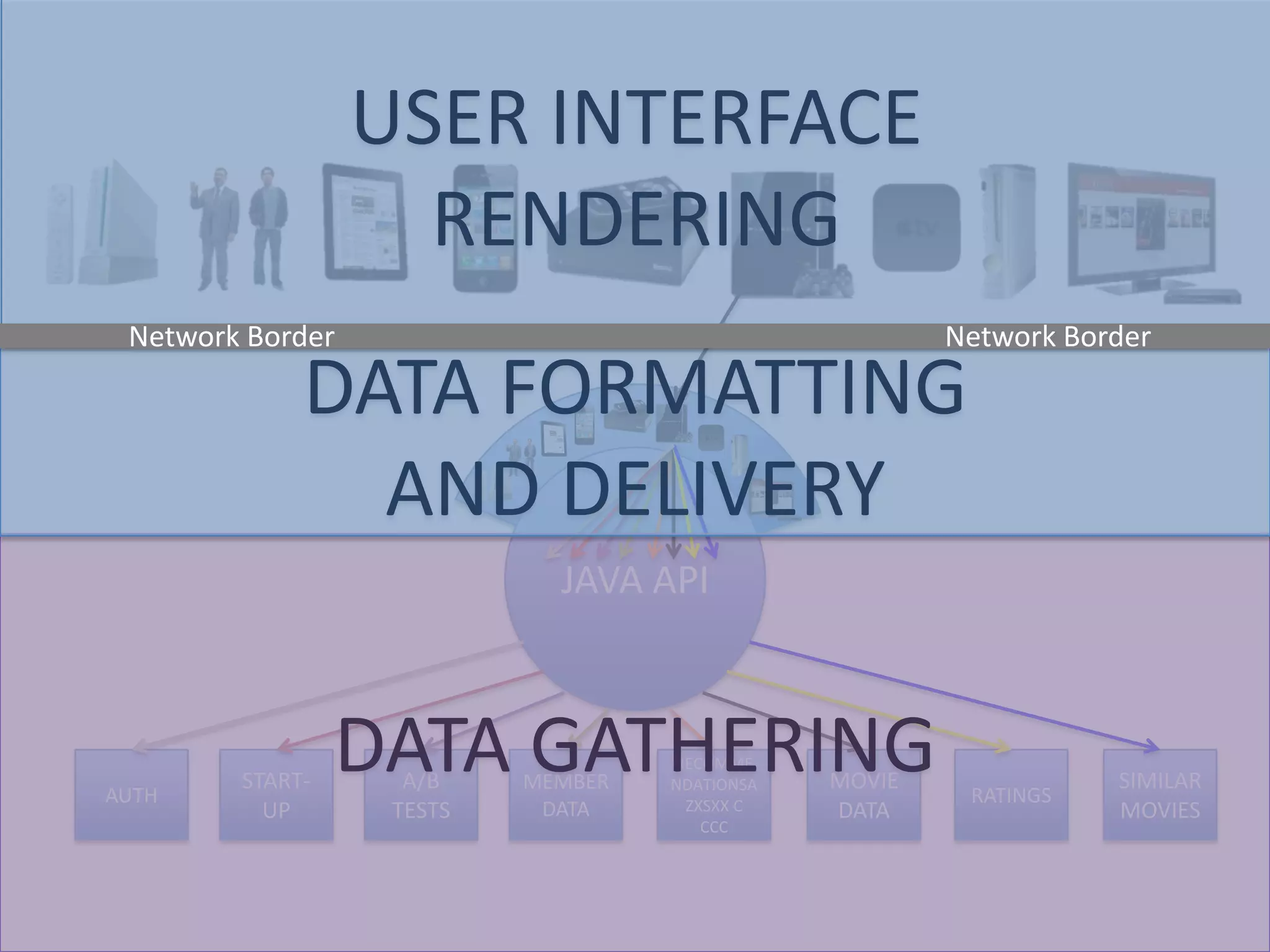

Daniel Jacobson's presentation describes the architecture and operational strategies behind Netflix's global streaming service, which serves more than 44 million subscribers in over 40 countries. It covers the challenges of keeping the API resilient and scaling the system, including predictive auto-scaling with tools like 'Scryer' and chaos testing to ensure reliability. It also emphasizes the importance of data brokering and personalized content delivery across more than 1,000 device types.
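
To make the idea of predictive auto-scaling more concrete, here is a minimal sketch in Python. It is not Scryer's algorithm, which the summary does not describe. It assumes a simple seasonal forecast (averaging traffic observed at the same point in earlier cycles) and a fixed per-instance capacity. The function names `forecast_next` and `instances_needed`, and the parameters `period`, `cycles`, `rps_per_instance`, and `headroom`, are all hypothetical.

```python
import math
from statistics import mean


def forecast_next(history, period, cycles=3):
    """Predict the next sample as the mean of the samples seen at the
    same phase in up to `cycles` previous periods (assumed model)."""
    n = len(history)  # index of the sample we want to predict
    samples = [
        history[n - k * period]
        for k in range(1, cycles + 1)
        if n - k * period >= 0
    ]
    if not samples:
        raise ValueError("need at least one full period of history")
    return mean(samples)


def instances_needed(predicted_rps, rps_per_instance, headroom=0.25, min_instances=1):
    """Convert a predicted request rate into an instance count,
    adding a safety margin and never going below `min_instances`."""
    required = math.ceil(predicted_rps * (1 + headroom) / rps_per_instance)
    return max(min_instances, required)


if __name__ == "__main__":
    # Three made-up "days" of four samples each (requests per second).
    history = [100, 200, 300, 400,
               120, 220, 320, 420,
               140, 240, 340, 440]
    predicted = forecast_next(history, period=4)
    print(predicted, instances_needed(predicted, rps_per_instance=50))
```

The point of scaling on a forecast rather than on current load is that capacity can be provisioned before a predictable traffic peak arrives, instead of reacting after it has started. A production system would need a more robust model and a reactive fallback for unpredicted spikes.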