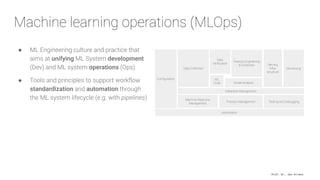

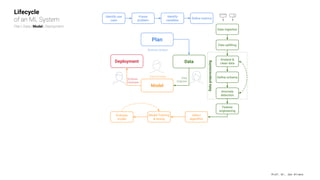

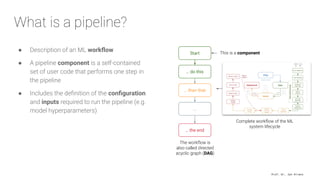

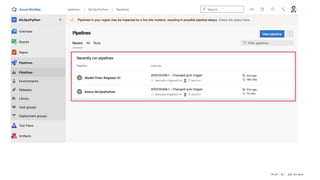

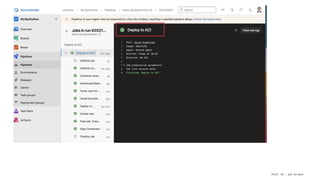

This document gives an overview of machine learning operations (MLOps) and the use of pipelines across the ML lifecycle, emphasizing tools such as TensorFlow Extended (TFX) and Kubeflow for automating and orchestrating ML processes. It highlights common challenges in scaling AI projects and outlines a structured ML workflow, from data ingestion to model deployment. The key takeaway is that standardization and automation are needed to move AI initiatives from proof of concept to production.
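To make the "data ingestion to model deployment" workflow concrete, here is a minimal sketch of a staged pipeline in plain Python. It does not use TFX or Kubeflow APIs. Every name in it (`Pipeline`, `ingest`, `validate`, `train`, `evaluate`, `deploy`, `build_pipeline`) is hypothetical, and the toy data, the linear model, and the error threshold are assumptions chosen only for illustration. The point is the shape: each stage is a standardized step that an orchestrator runs in a fixed order, with deployment gated on an evaluation result.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple

Context = Dict[str, Any]
Step = Callable[[Context], Context]


@dataclass
class Pipeline:
    """Hypothetical orchestrator: runs named steps in order over a shared context."""
    steps: List[Tuple[str, Step]] = field(default_factory=list)

    def add(self, name: str, step: Step) -> "Pipeline":
        self.steps.append((name, step))
        return self

    def run(self) -> Context:
        ctx: Context = {}
        completed: List[str] = []
        for name, step in self.steps:
            ctx = step(ctx)          # a failing step raises and stops the run
            completed.append(name)
        ctx["completed"] = completed
        return ctx


def ingest(ctx: Context) -> Context:
    # Assumption: toy data generated in memory instead of read from storage.
    ctx["data"] = [(float(x), 2.0 * x + 1.0) for x in range(10)]
    return ctx


def validate(ctx: Context) -> Context:
    # Reject empty datasets before any training is attempted.
    if not ctx["data"]:
        raise ValueError("no training data ingested")
    return ctx


def train(ctx: Context) -> Context:
    # Fit y = slope * x + intercept by ordinary least squares.
    xs = [x for x, _ in ctx["data"]]
    ys = [y for _, y in ctx["data"]]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in ctx["data"])
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    ctx["model"] = {"slope": slope, "intercept": mean_y - slope * mean_x}
    return ctx


def evaluate(ctx: Context) -> Context:
    # Mean squared error on the ingested data (no holdout split in this sketch).
    m = ctx["model"]
    errors = [(m["slope"] * x + m["intercept"] - y) ** 2 for x, y in ctx["data"]]
    ctx["mse"] = sum(errors) / len(errors)
    return ctx


def deploy(ctx: Context) -> Context:
    # "Deployment" is only a flag here; the threshold is an arbitrary assumption.
    ctx["deployed"] = ctx["mse"] <= 1e-6
    return ctx


def build_pipeline() -> Pipeline:
    return (
        Pipeline()
        .add("ingest", ingest)
        .add("validate", validate)
        .add("train", train)
        .add("evaluate", evaluate)
        .add("deploy", deploy)
    )


if __name__ == "__main__":
    result = build_pipeline().run()
    print(result["completed"], result["deployed"])
```

In a real setup, an orchestration tool would take the place of the `Pipeline` class, and each step would read and write persisted artifacts rather than an in-memory dictionary.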