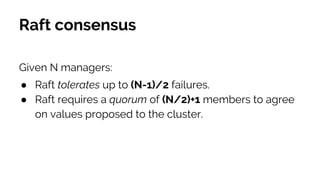

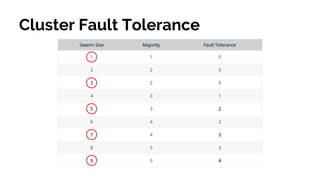

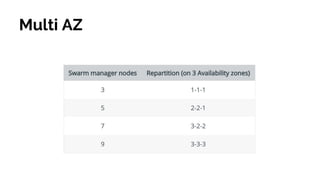

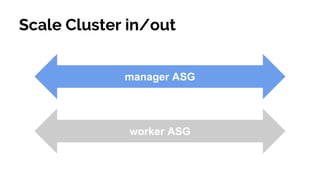

This presentation by Simone Soldateschi covers Docker Swarm and container orchestration engines, including cluster management, security, and service management. It outlines Docker Swarm's features and advantages, such as auto-scaling, service discovery, and fault tolerance, and includes a demo of setting up a development swarm cluster. The key lessons are that Docker Swarm is a good fit for new projects and that prototyping should come before automation.

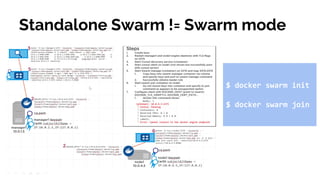

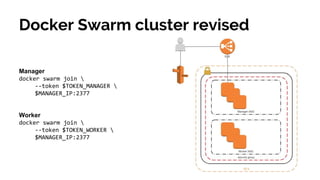

![Stand up basic cluster. Manager initialises cluster: docker swarm init --advertise-addr $MANAGER_IP. Manager or worker joins: docker swarm join --token $TOKEN_[MANAGER|WORKER] $MANAGER_IP:2377](https://image.slidesharecdn.com/soldateschi-20180413codemotionrome2018dockerswarm1-180709092326/85/Load-balancing-high-available-web-app-with-Docker-Swarm-cluster-Simone-Soldateschi-Codemotion-Rome-2018-13-320.jpg)
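The cluster bootstrap on this slide can be sketched as a small script. This is a minimal sketch, not the presenter's demo code: the function names, the `DRY_RUN` switch, and the address `192.0.2.10` are assumptions added for illustration. With `DRY_RUN=1` (the default here) the commands are only printed, so the script can be read and tried without a Docker host.

```shell
#!/bin/sh
# Sketch: stand up a basic swarm. Helper names and DRY_RUN are hypothetical.
DRY_RUN="${DRY_RUN:-1}"                 # 1 = print commands, 0 = execute them
MANAGER_IP="${MANAGER_IP:-192.0.2.10}"  # placeholder address, replace with yours

# Print the command in dry-run mode, otherwise execute it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# On the first manager: initialise the cluster.
swarm_init() {
  run docker swarm init --advertise-addr "$MANAGER_IP"
}

# On a manager: print the join token for a role ("worker" or "manager").
swarm_join_token() {
  run docker swarm join-token -q "$1"
}

# On each new node: join the cluster with the token obtained above.
swarm_join() {
  run docker swarm join --token "$1" "$MANAGER_IP:2377"
}
```

On a real cluster, one would run `swarm_init` on the first manager with `DRY_RUN=0`, capture a token with `swarm_join_token worker`, and pass it to `swarm_join` on each worker node.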

![Docker service. $ docker service create --name web -p 80:80 nginx (overall progress: 1 out of 1 tasks running; verify: Service converged). $ docker service scale web=3 (web scaled to 3; overall progress: 3 out of 3 tasks running; verify: Service converged). $ docker service rm web](https://image.slidesharecdn.com/soldateschi-20180413codemotionrome2018dockerswarm1-180709092326/85/Load-balancing-high-available-web-app-with-Docker-Swarm-cluster-Simone-Soldateschi-Codemotion-Rome-2018-29-320.jpg)
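The "Service converged" message on this slide means the number of running tasks matches the desired replica count. When scaling with `--detach`, a script may need to check this itself. The following is a rough sketch under that assumption: `replicas_converged` and `wait_for_service` are hypothetical helper names, and the polling interval and attempt count are arbitrary.

```shell
#!/bin/sh
# Sketch: detect convergence from the "running/desired" string that
# `docker service ls --format '{{.Replicas}}'` prints, e.g. "3/3".

# Returns 0 when running == desired; non-zero for "1/3" or malformed input.
replicas_converged() {
  counts="${1%% *}"          # drop any annotation after the first space
  running="${counts%%/*}"    # text before the slash
  desired="${counts##*/}"    # text after the slash
  [ -n "$running" ] && [ "$running" = "$desired" ] && [ "$counts" != "$running" ]
}

# Poll until the named service converges or the attempts run out.
# Note: the name filter matches by prefix, so only the first hit is used.
wait_for_service() {
  name="$1"
  attempts="${2:-30}"
  while [ "$attempts" -gt 0 ]; do
    state="$(docker service ls --filter "name=$name" --format '{{.Replicas}}' | head -n 1)"
    replicas_converged "$state" && return 0
    attempts=$((attempts - 1))
    sleep 2
  done
  return 1
}
```

A possible use on a manager node is `docker service scale --detach web=3 && wait_for_service web`. Without `--detach`, `docker service scale` already waits and prints the progress shown on the slide.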

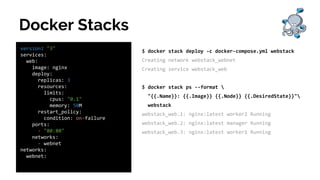

![Docker Stacks. $ docker service scale webstack_web=6 (webstack_web scaled to 6; overall progress: 6 out of 6 tasks running; verify: Service converged)](https://image.slidesharecdn.com/soldateschi-20180413codemotionrome2018dockerswarm1-180709092326/85/Load-balancing-high-available-web-app-with-Docker-Swarm-cluster-Simone-Soldateschi-Codemotion-Rome-2018-32-320.jpg)
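The service name `webstack_web` on this slide follows Swarm's convention of prefixing each service with its stack name. The slide does not show the stack file itself, so the compose content below is an assumption: a minimal sketch of a stack named `webstack` with a single `web` service running `nginx`. The helper names are hypothetical.

```shell
#!/bin/sh
# Sketch: a stack whose service would be named "webstack_web".
# The compose content is assumed; the original stack file is not shown.

# Write a minimal stack file to the given path.
write_stack_file() {
  cat > "$1" <<'EOF'
version: "3.7"
services:
  web:
    image: nginx
    ports:
      - "80:80"
    deploy:
      replicas: 3
EOF
}

# Swarm names stack services <stack>_<service>.
stack_service_name() {
  printf '%s_%s\n' "$1" "$2"
}

# Usage on a swarm manager:
#   write_stack_file webstack.yml
#   docker stack deploy -c webstack.yml webstack
#   docker service scale "$(stack_service_name webstack web)=6"
#   docker stack rm webstack
```

Keeping the replica count in the stack file and redeploying is an alternative to `docker service scale`, since a later `docker stack deploy` resets the service to whatever the file declares.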