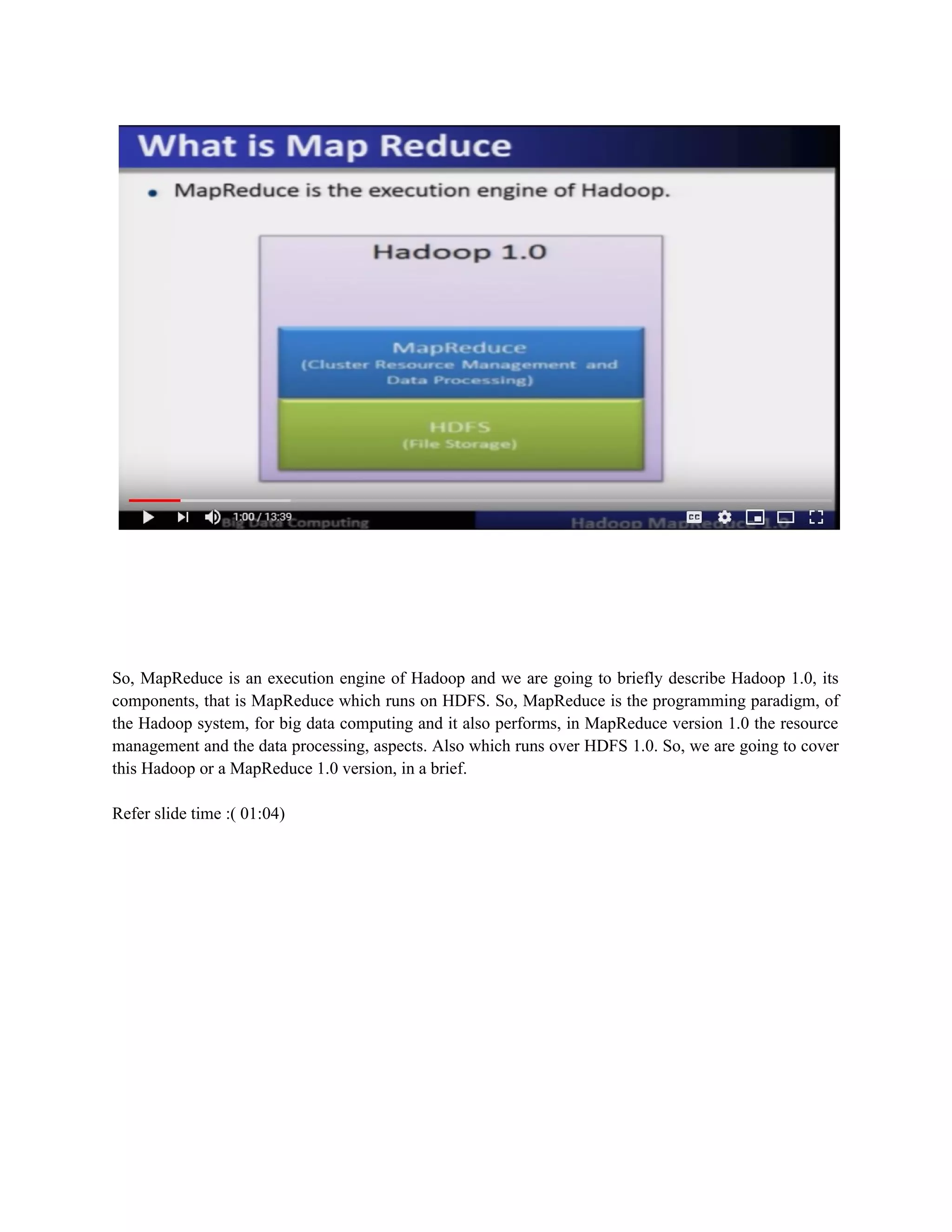

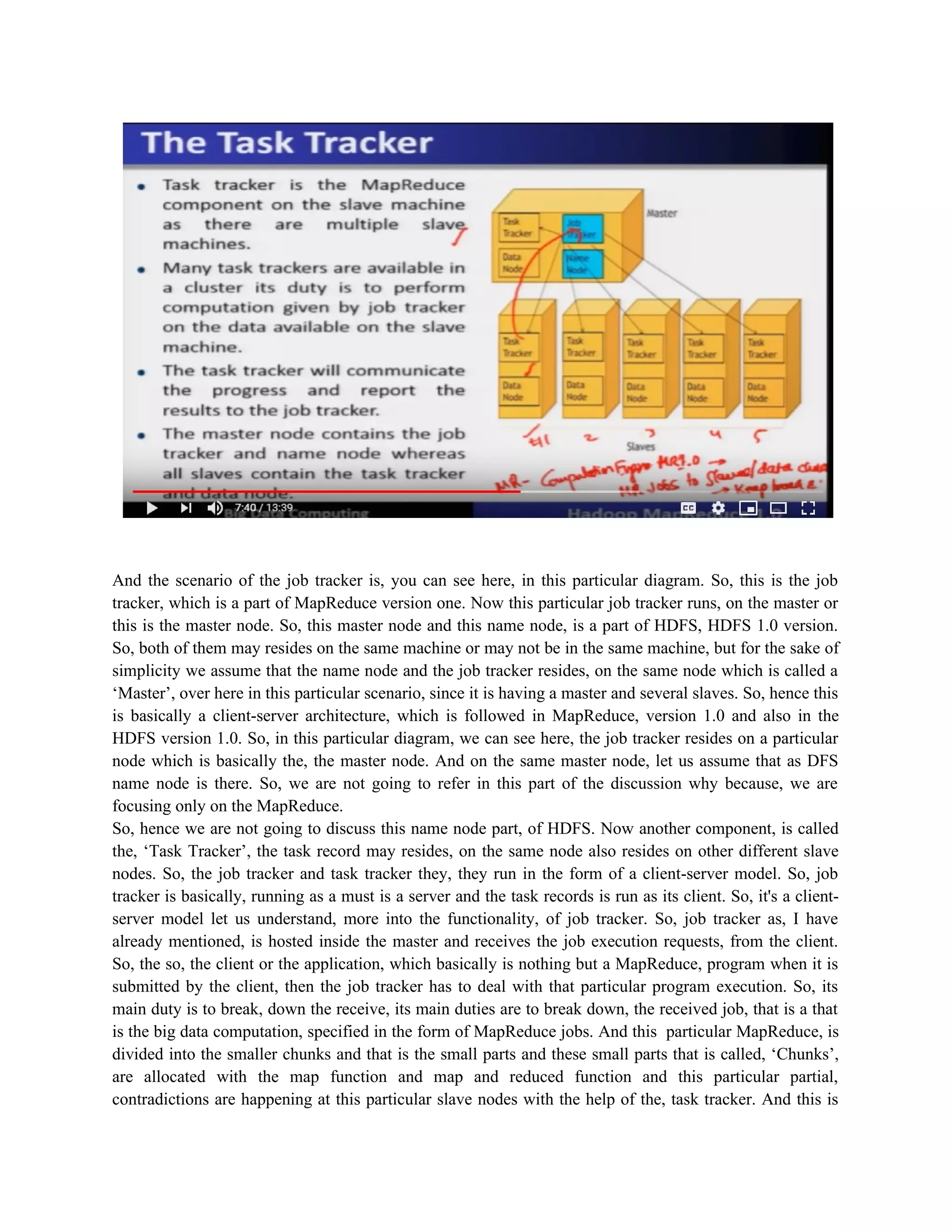

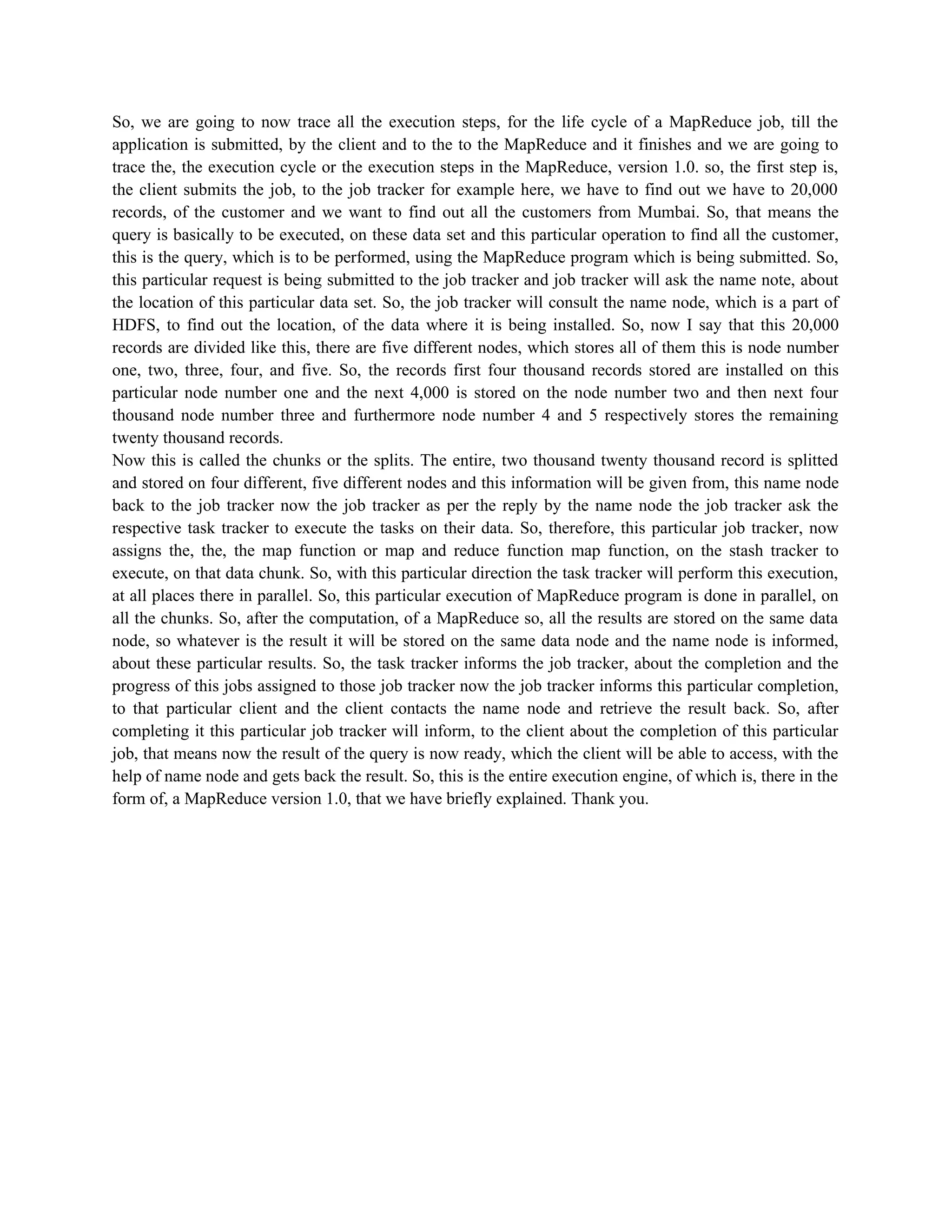

Hadoop MapReduce 1.0 is the original execution engine for Hadoop that performs resource management and data processing. It has two major components: the JobTracker, which runs on the master node, and TaskTrackers, which run on slave nodes. The JobTracker receives jobs from clients, divides them into tasks, and assigns tasks to TaskTrackers. TaskTrackers perform computations on the data located on their nodes and report results back to the JobTracker. When a job is submitted, the JobTracker consults the NameNode to determine data locations, assigns map and reduce tasks to TaskTrackers, and monitors job completion before reporting results back to the client.