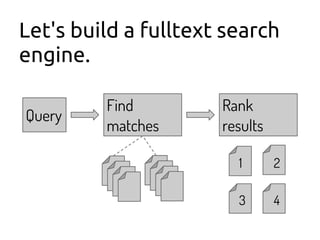

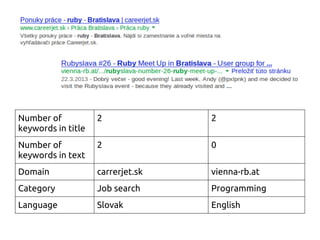

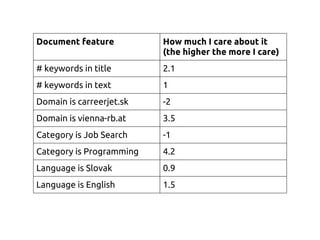

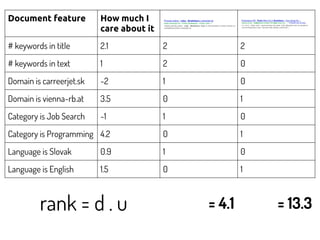

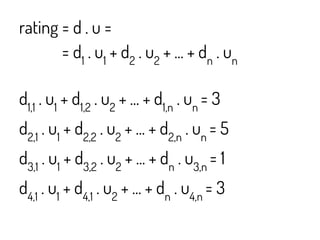

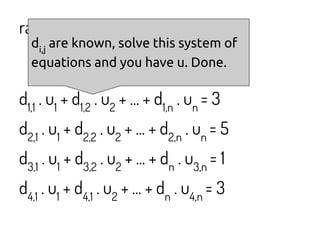

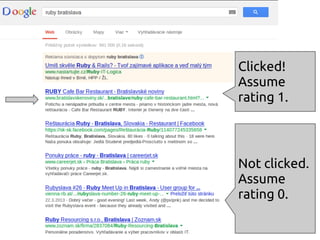

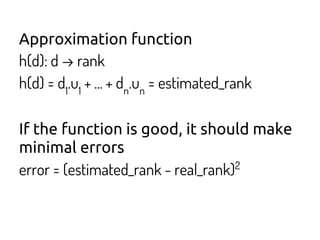

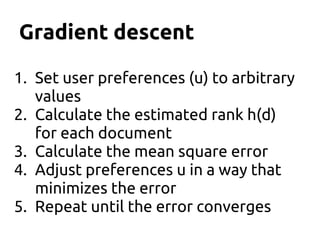

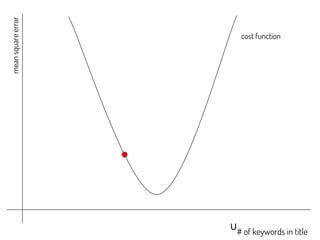

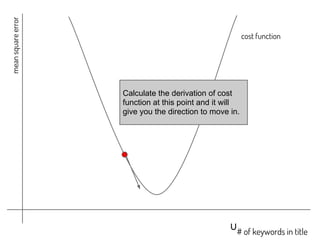

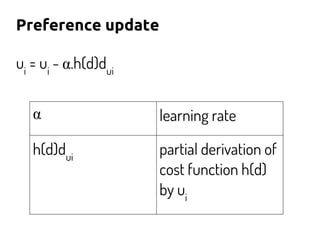

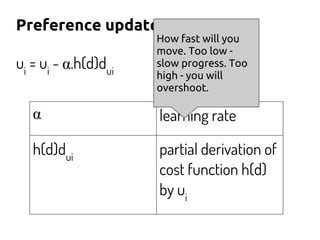

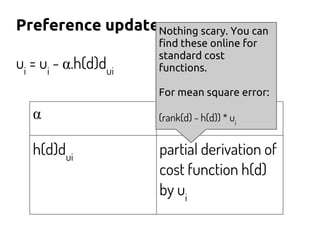

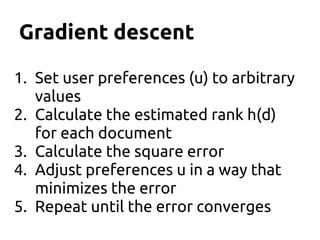

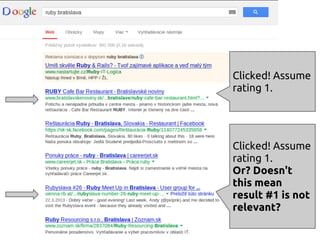

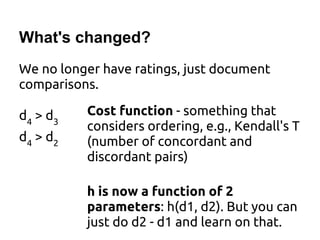

The document discusses using machine learning, specifically gradient descent, to learn user preferences for ranking search results from clicks. A preference-based ranking function, parameterized by user preference weights, produces estimated rankings, and gradient descent adjusts those weights to minimize the error between the estimated rankings and the user's actual clicks. On each iteration, the weights move in the direction that reduces the cost function. The cost is initially mean squared error; the document later proposes a cost based on pairwise document comparisons, because clicks alone provide only implicit feedback about which documents the user preferred.
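The following is a minimal Python sketch of the two cost functions described above. It rests on several assumptions that the source does not state. Each document is a feature vector, and the preference-based ranking function is linear (score = w · x). Clicks are 0/1 labels. Every clicked document is treated as preferred over every non-clicked document in the same result list. Each iteration applies the standard update w ← w − lr · ∇J(w). For the pairwise cost, the sketch uses a logistic (RankNet-style) loss as one concrete choice, and the document's own pairwise formulation may differ. All function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

# Assumed setup: each document is a feature vector, and the ranking function
# scores it as a dot product with the user's preference weights.
Vector = List[float]


def dot(w: Sequence[float], x: Sequence[float]) -> float:
    return sum(wi * xi for wi, xi in zip(w, x))


def rank(w: Sequence[float], docs: Sequence[Vector]) -> List[int]:
    """Document indices ordered by descending score under weights w."""
    return sorted(range(len(docs)), key=lambda d: dot(w, docs[d]), reverse=True)


def sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def mse_loss(w, docs, clicks) -> float:
    """Mean squared error between scores and 0/1 click labels."""
    return sum((dot(w, x) - y) ** 2 for x, y in zip(docs, clicks)) / len(docs)


def mse_grad(w, docs, clicks) -> Vector:
    grad = [0.0] * len(w)
    for x, y in zip(docs, clicks):
        err = dot(w, x) - y
        for k, xk in enumerate(x):
            grad[k] += 2.0 * err * xk / len(docs)
    return grad


def click_pairs(clicks) -> List[Tuple[int, int]]:
    """Assumed preference pairs (i, j): doc i was clicked, doc j was not."""
    return [(i, j) for i, ci in enumerate(clicks) if ci
            for j, cj in enumerate(clicks) if not cj]


def pairwise_loss(w, docs, clicks) -> float:
    """Mean logistic loss log(1 + exp(-(s_i - s_j))) over preference pairs."""
    pairs = click_pairs(clicks)
    total = 0.0
    for i, j in pairs:
        m = dot(w, docs[i]) - dot(w, docs[j])
        total += math.log1p(math.exp(-m)) if m >= 0 else -m + math.log1p(math.exp(m))
    return total / len(pairs) if pairs else 0.0


def pairwise_grad(w, docs, clicks) -> Vector:
    pairs = click_pairs(clicks)
    grad = [0.0] * len(w)
    for i, j in pairs:
        diff = [a - b for a, b in zip(docs[i], docs[j])]
        # d/dw log(1 + exp(-w.diff)) = -sigmoid(-w.diff) * diff
        coef = -sigmoid(-dot(w, diff)) / len(pairs)
        for k, dk in enumerate(diff):
            grad[k] += coef * dk
    return grad


def gradient_descent(grad_fn, w, docs, clicks, lr=0.5, iters=200) -> Vector:
    """Repeatedly step the weights against the gradient of the chosen cost."""
    w = list(w)
    for _ in range(iters):
        g = grad_fn(w, docs, clicks)
        w = [wk - lr * gk for wk, gk in zip(w, g)]
    return w


if __name__ == "__main__":
    docs = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.2]]  # toy feature vectors
    clicks = [0, 1, 0]                            # user clicked only doc 1
    w_mse = gradient_descent(mse_grad, [0.0, 0.0], docs, clicks)
    w_pair = gradient_descent(pairwise_grad, [0.0, 0.0], docs, clicks)
    print("MSE weights:", w_mse, "ranking:", rank(w_mse, docs))
    print("pairwise weights:", w_pair, "ranking:", rank(w_pair, docs))
```

In this toy example, both costs move the clicked document to the top of the ranking. The pairwise version uses only the relative order implied by the clicks and never asks a score to hit an absolute target such as 1 or 0. That is one reason a pairwise cost may fit implicit click feedback better than mean squared error.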