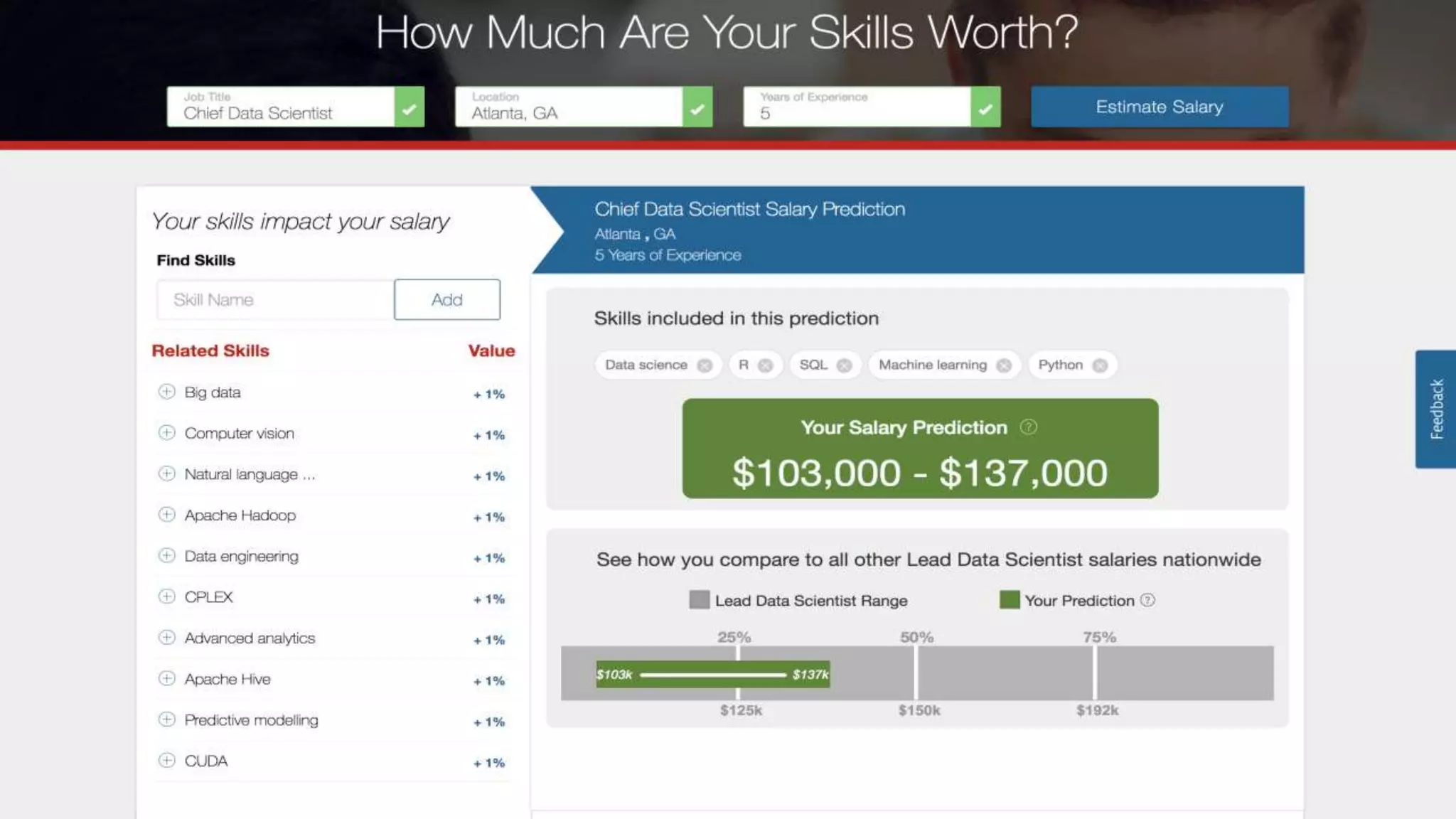

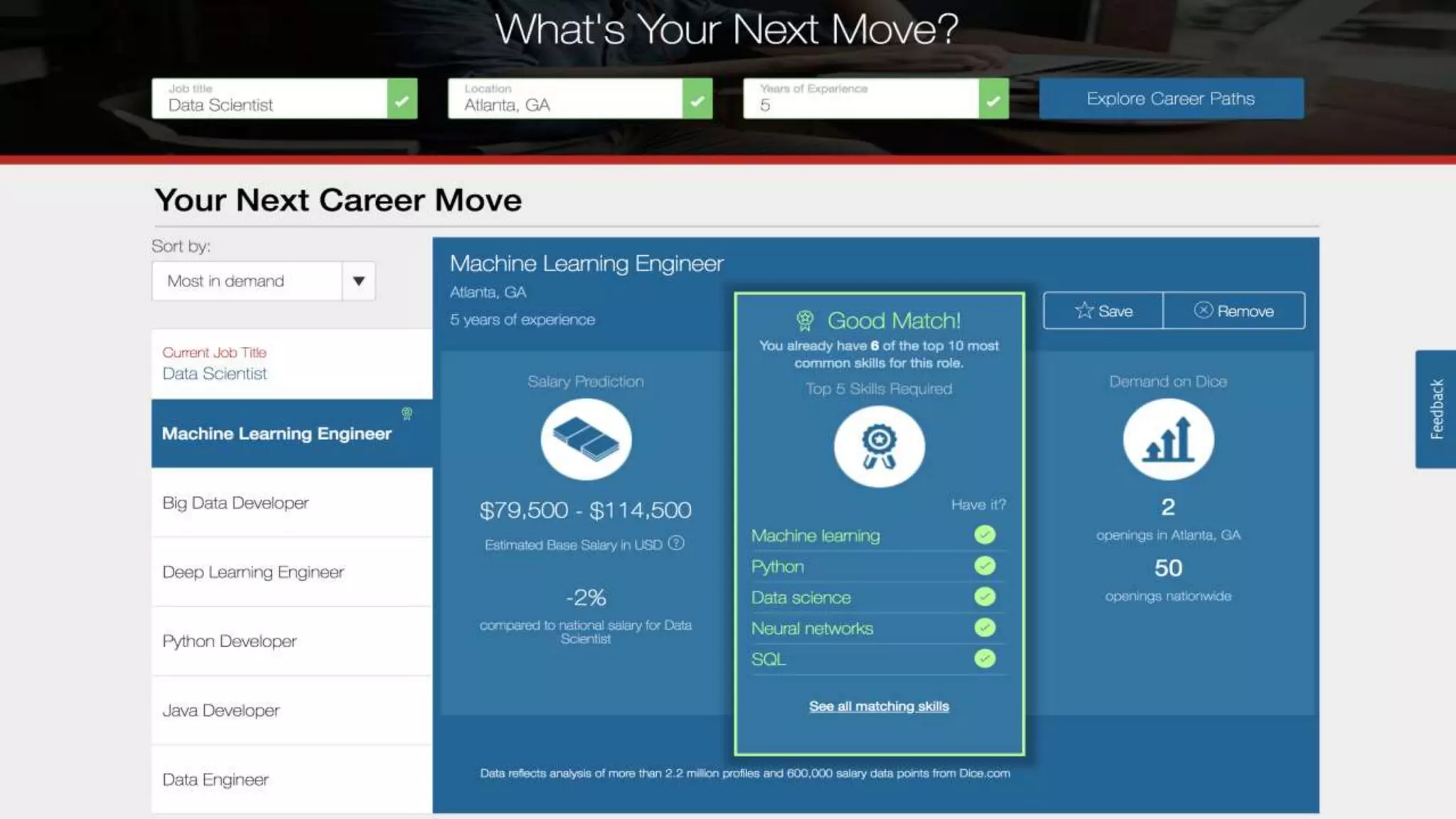

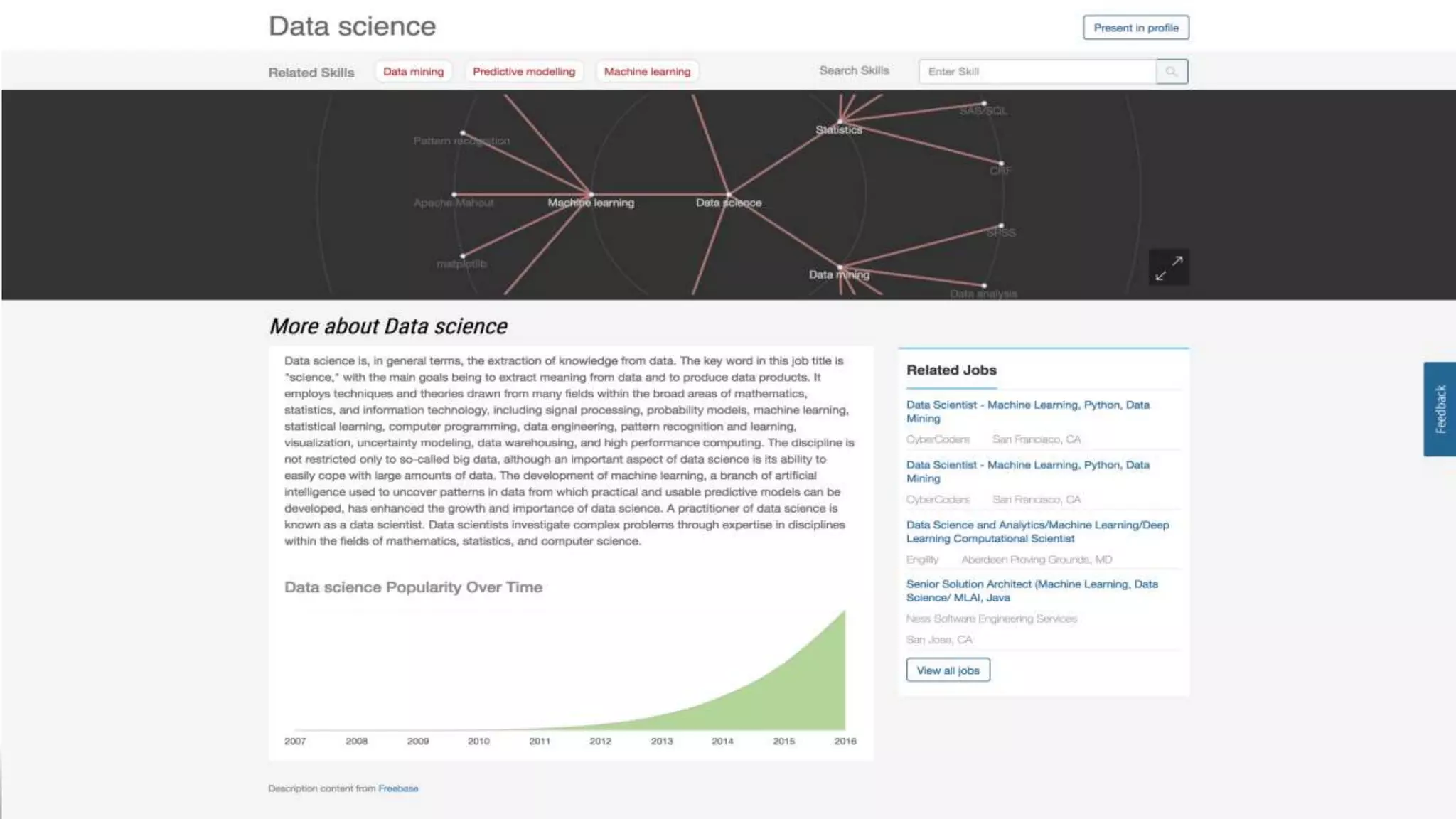

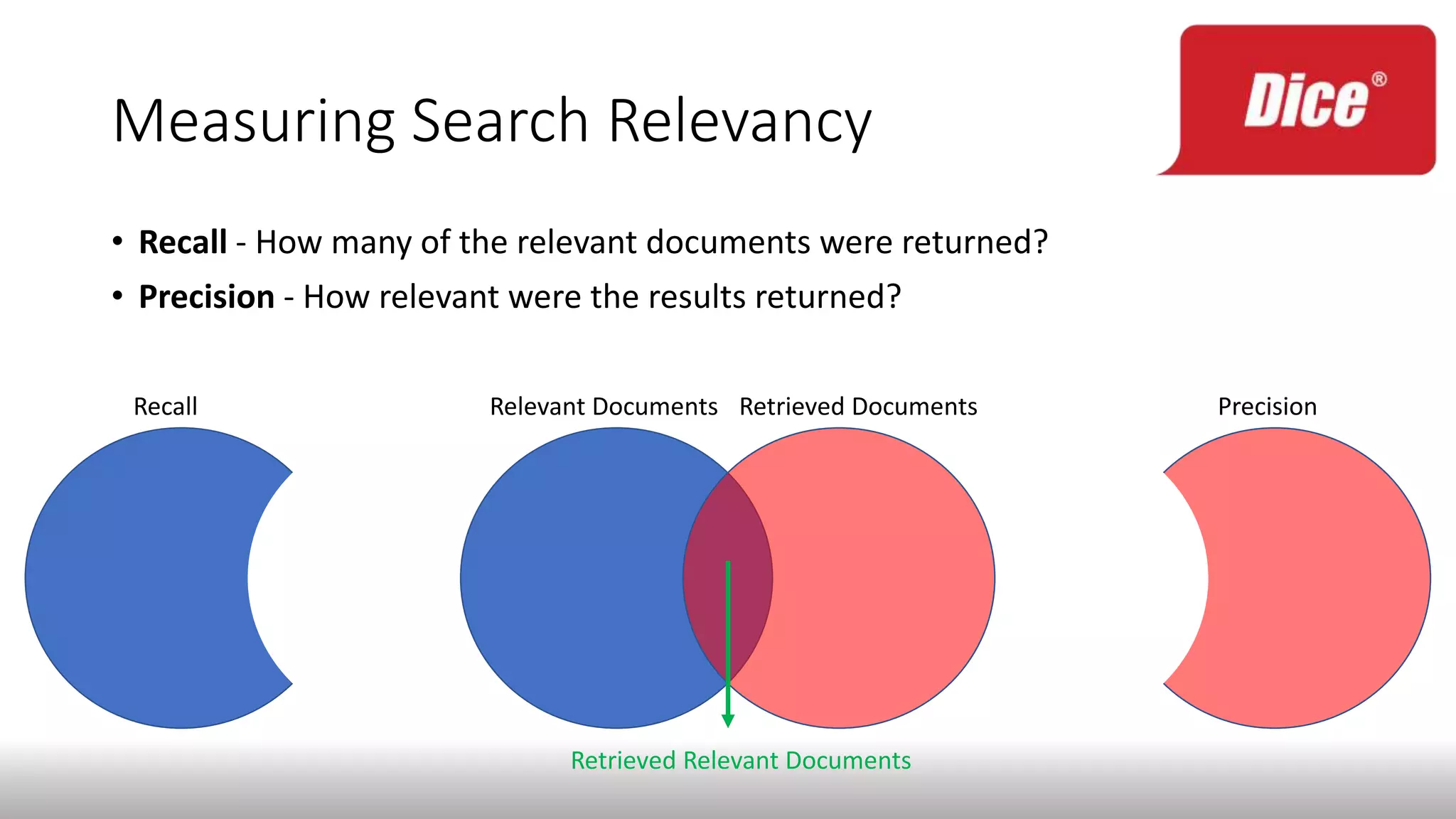

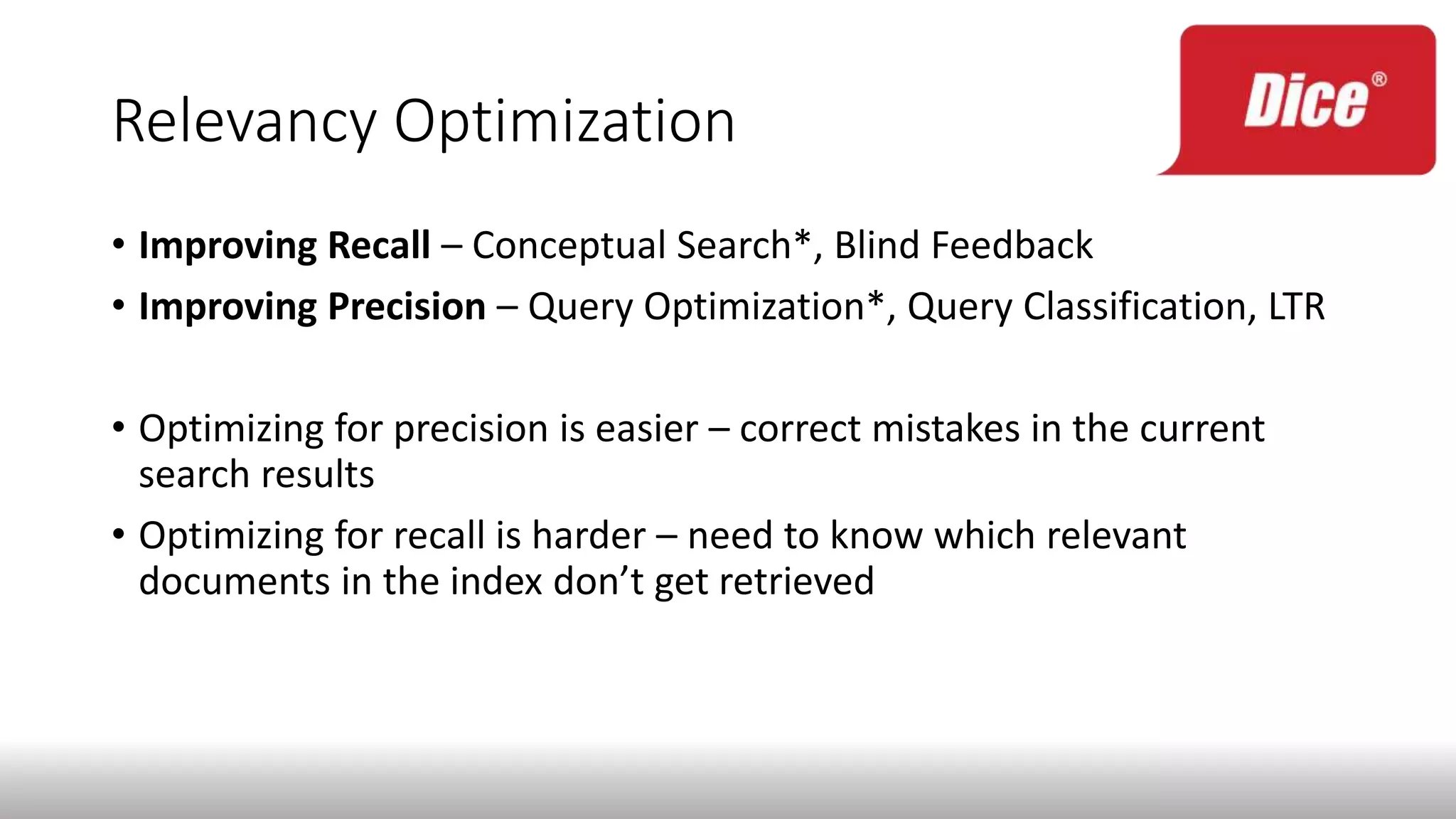

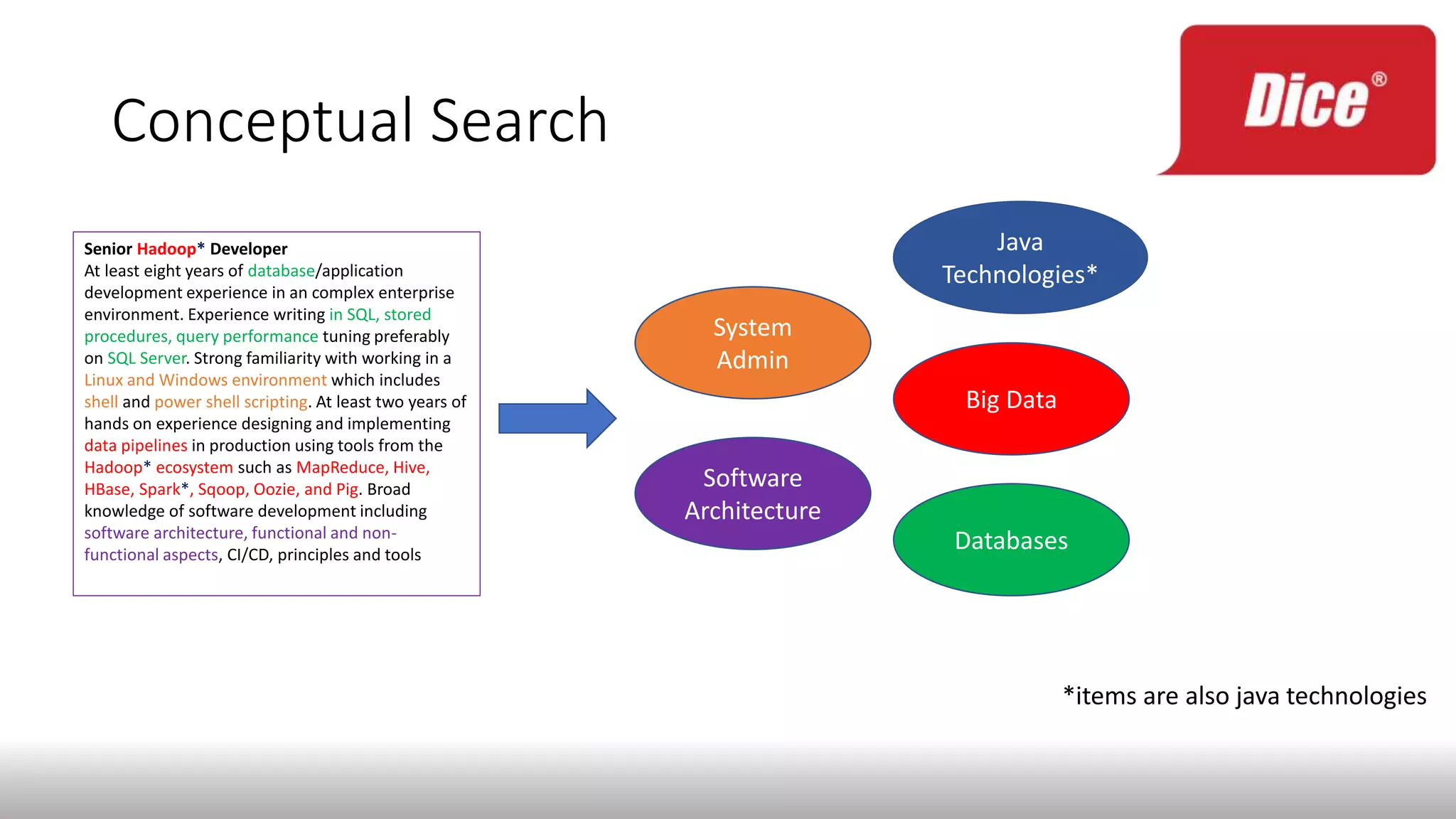

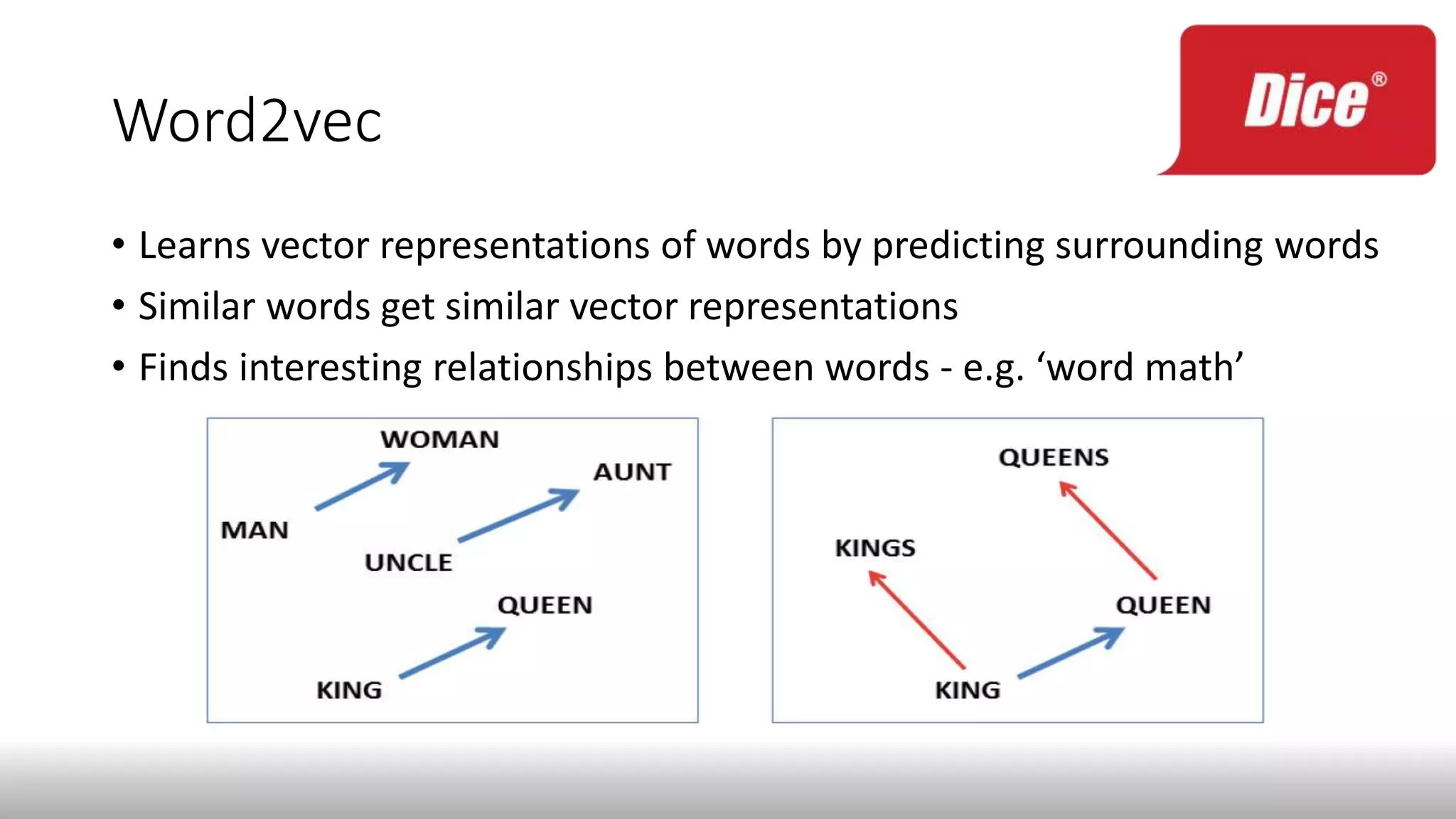

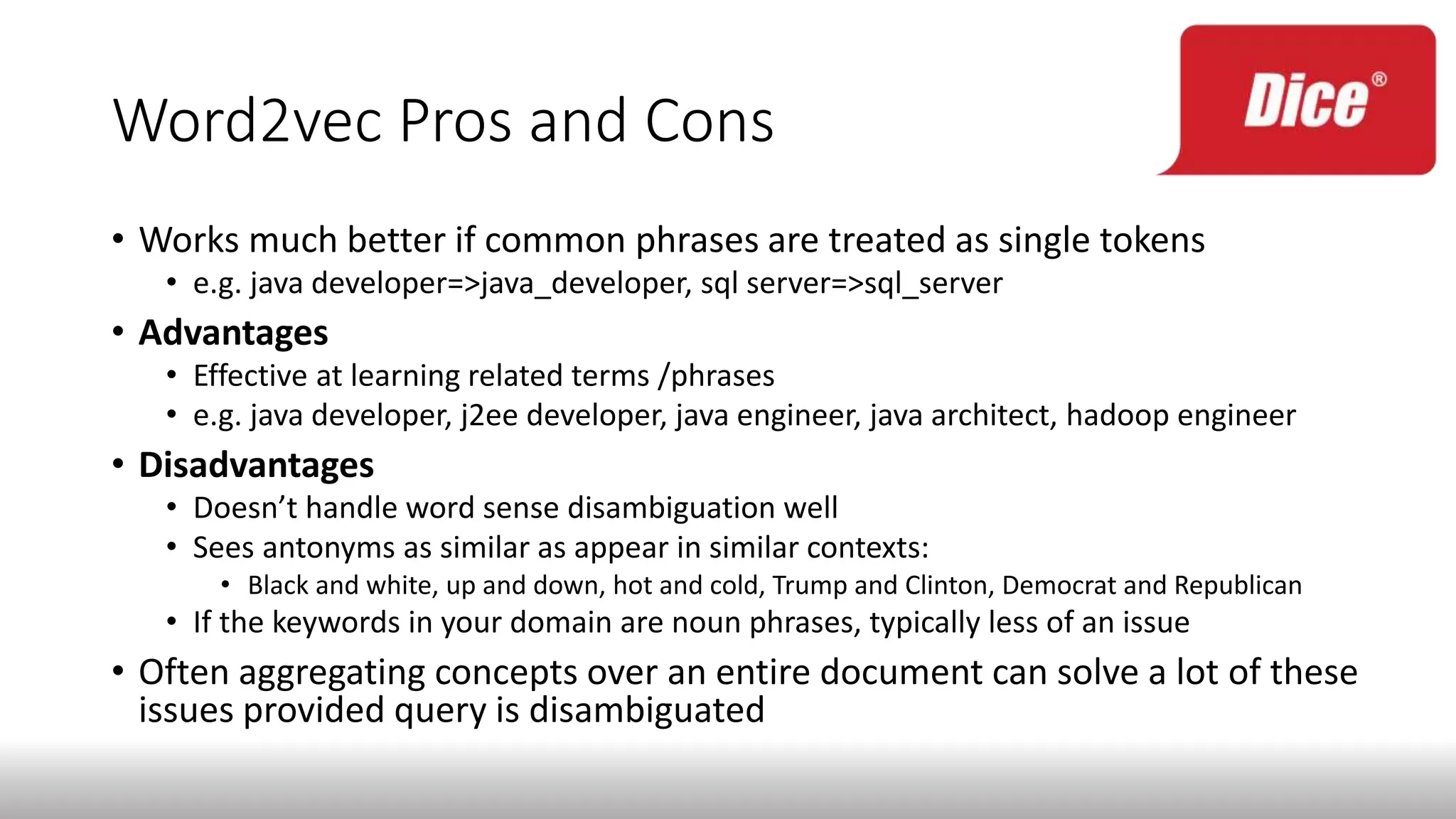

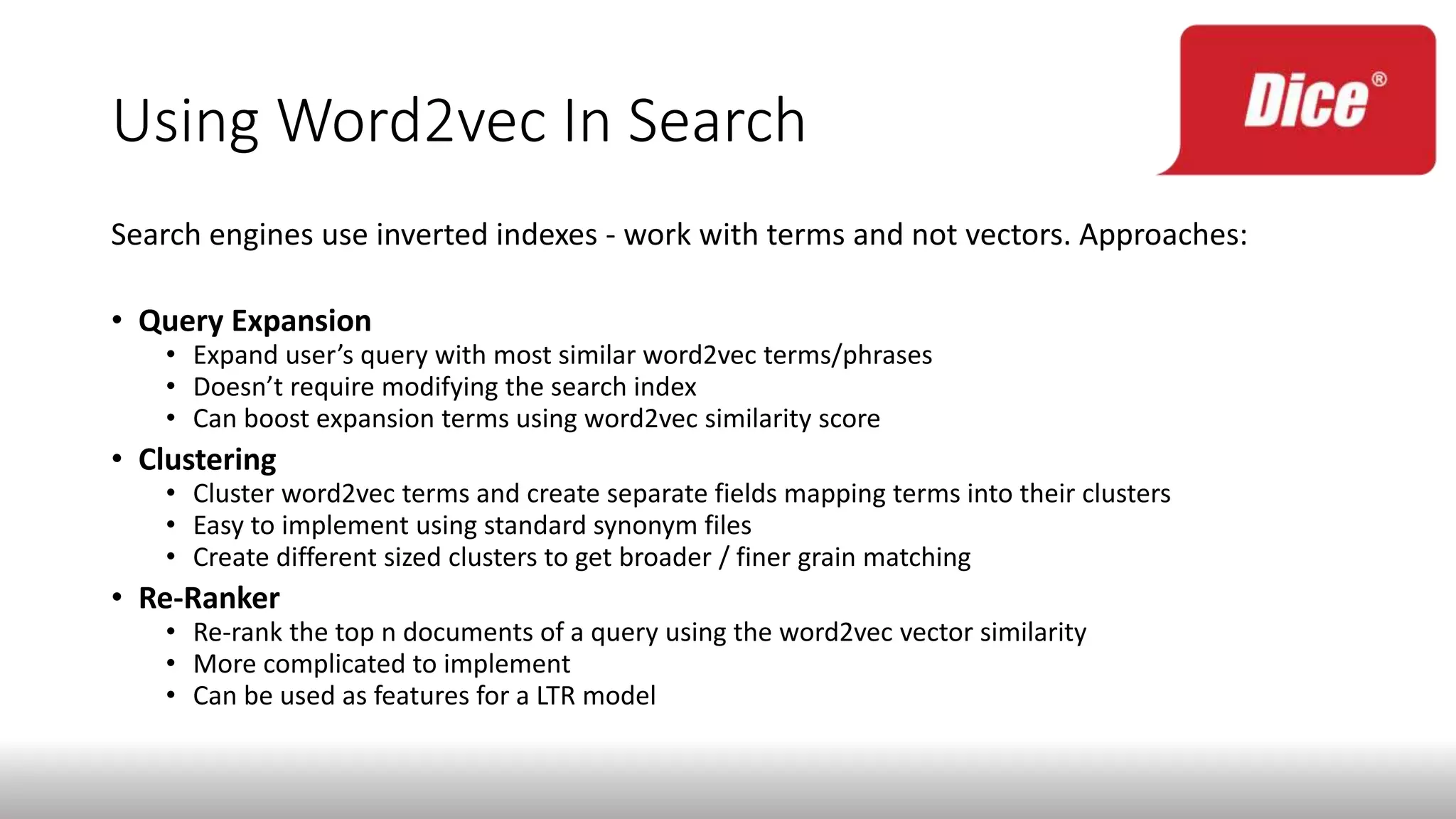

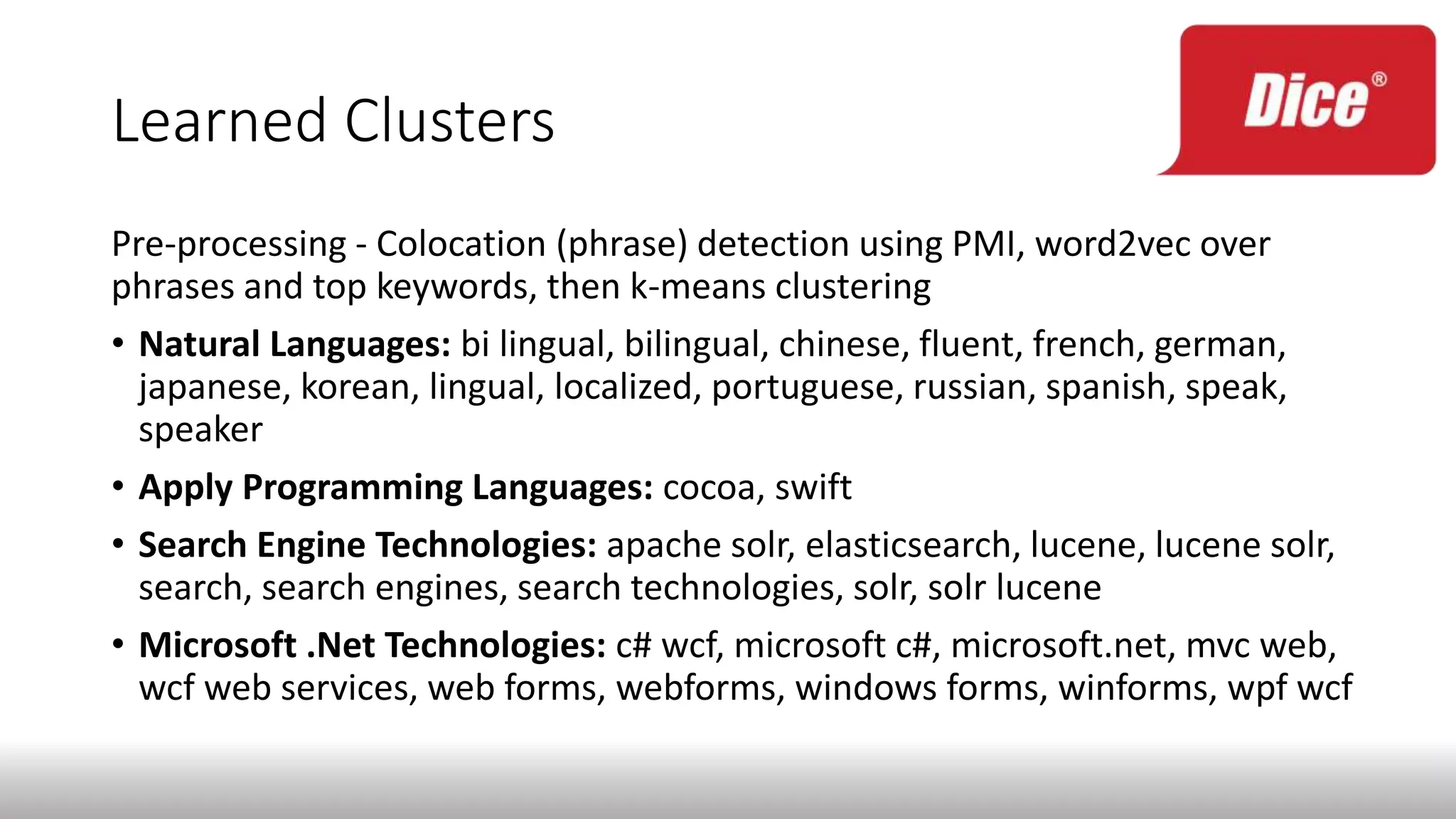

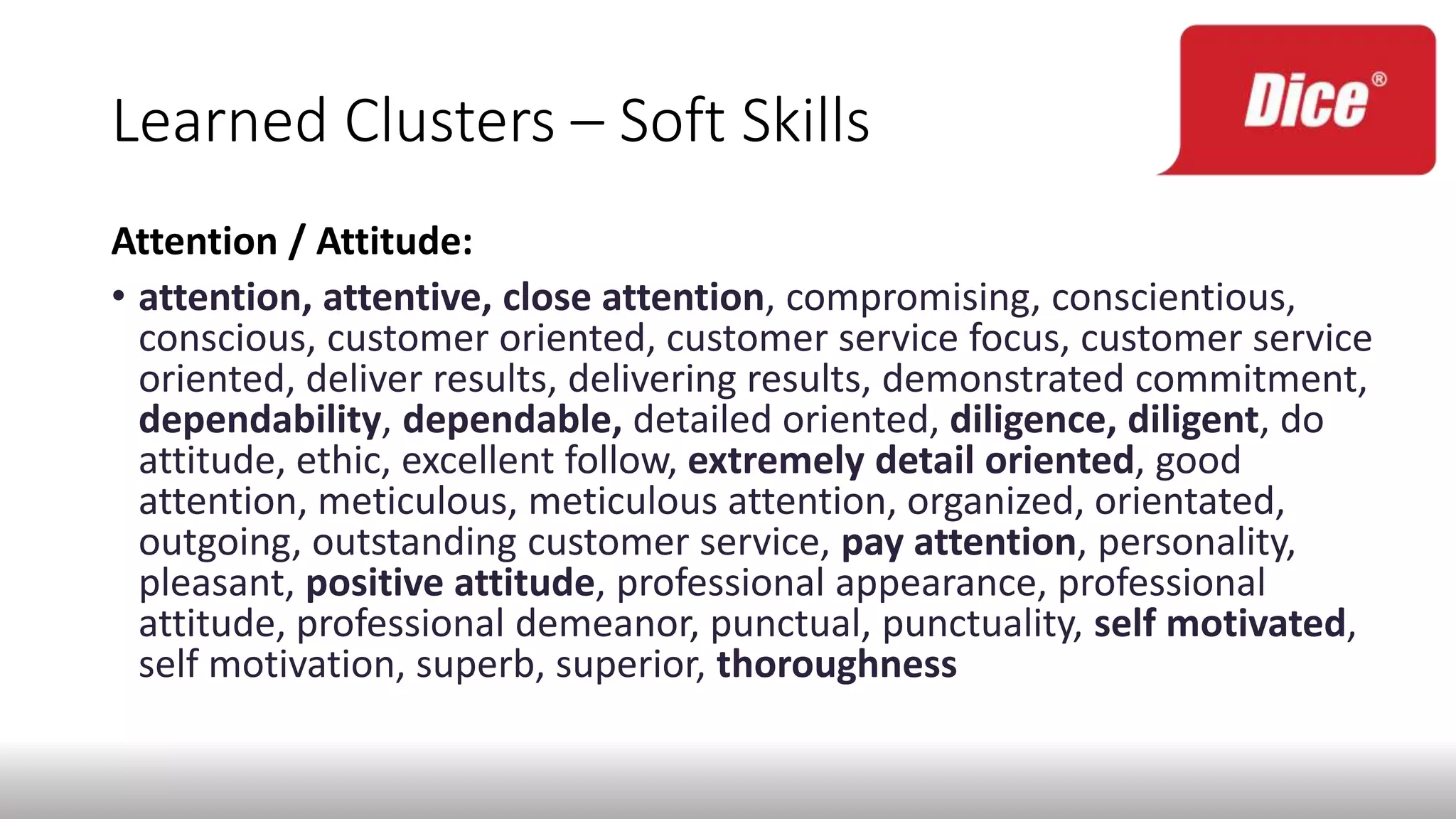

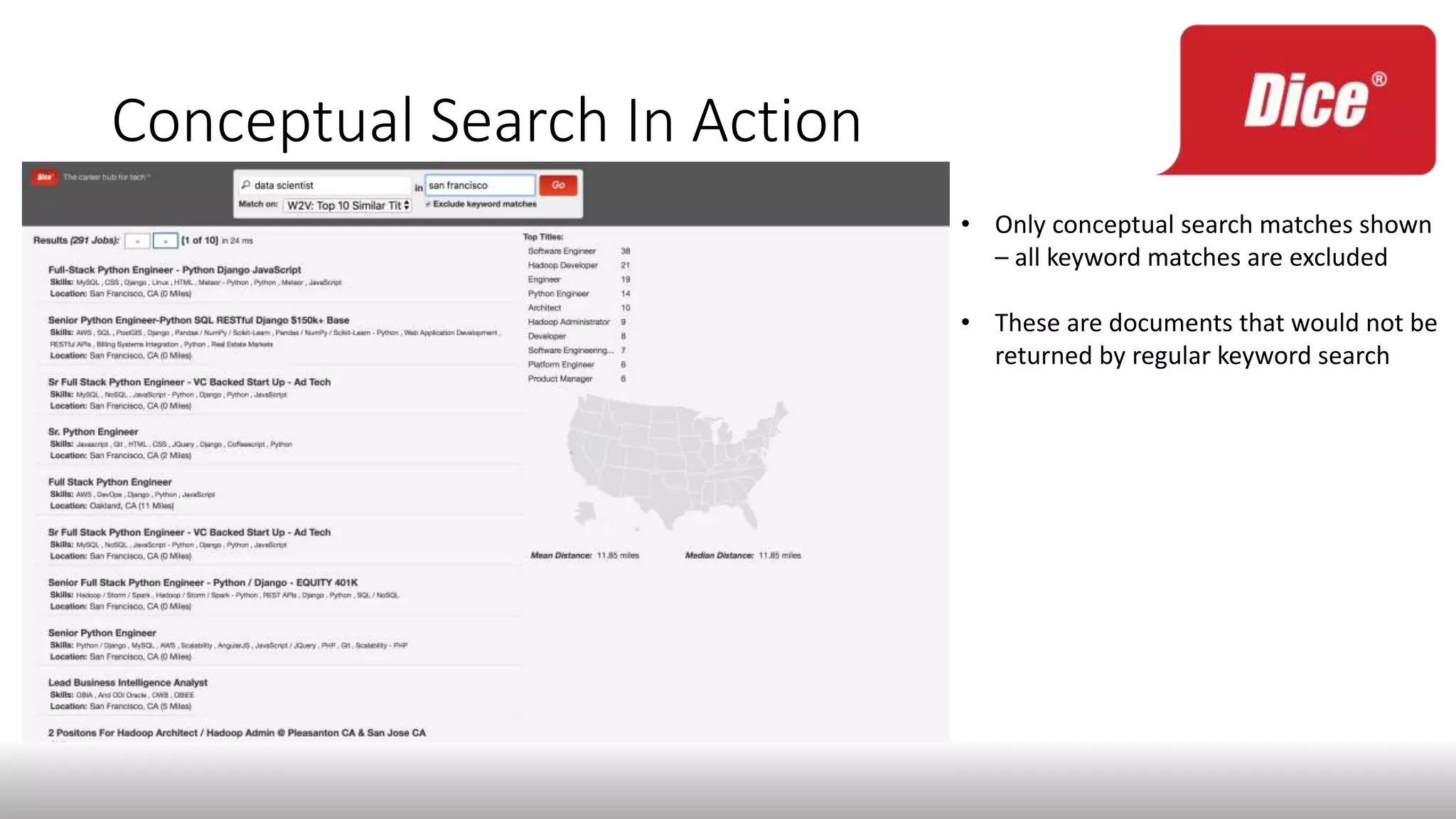

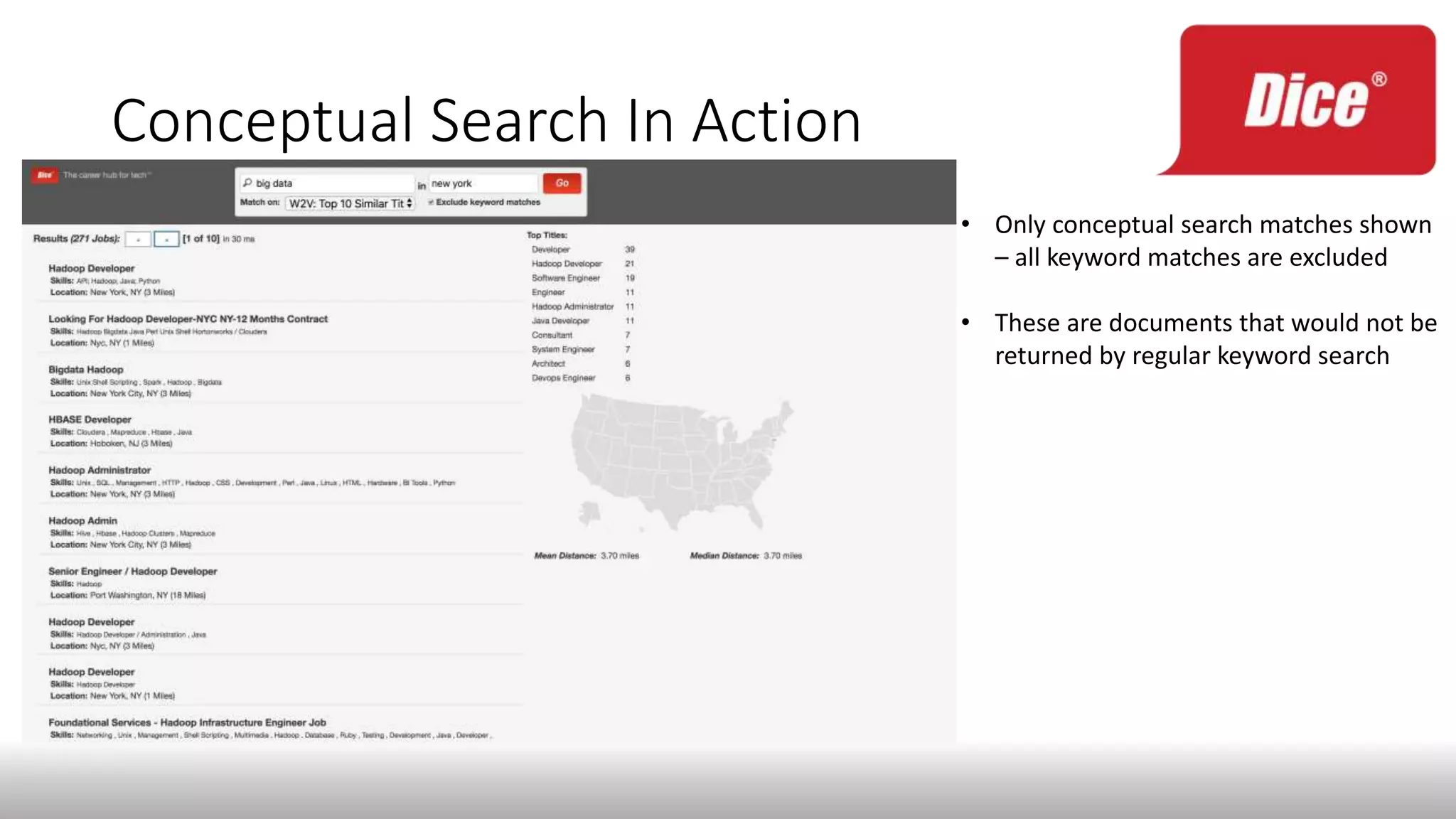

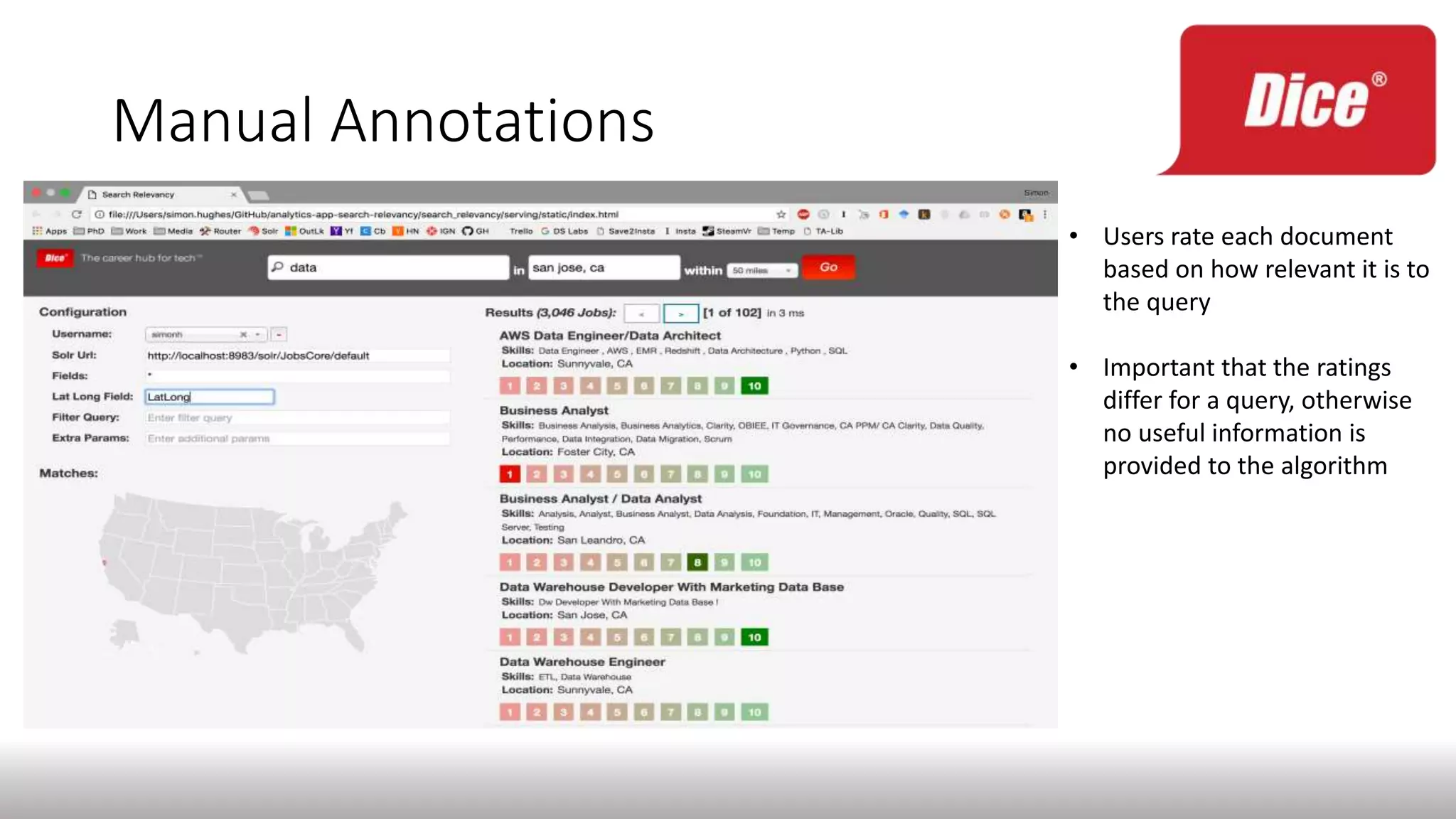

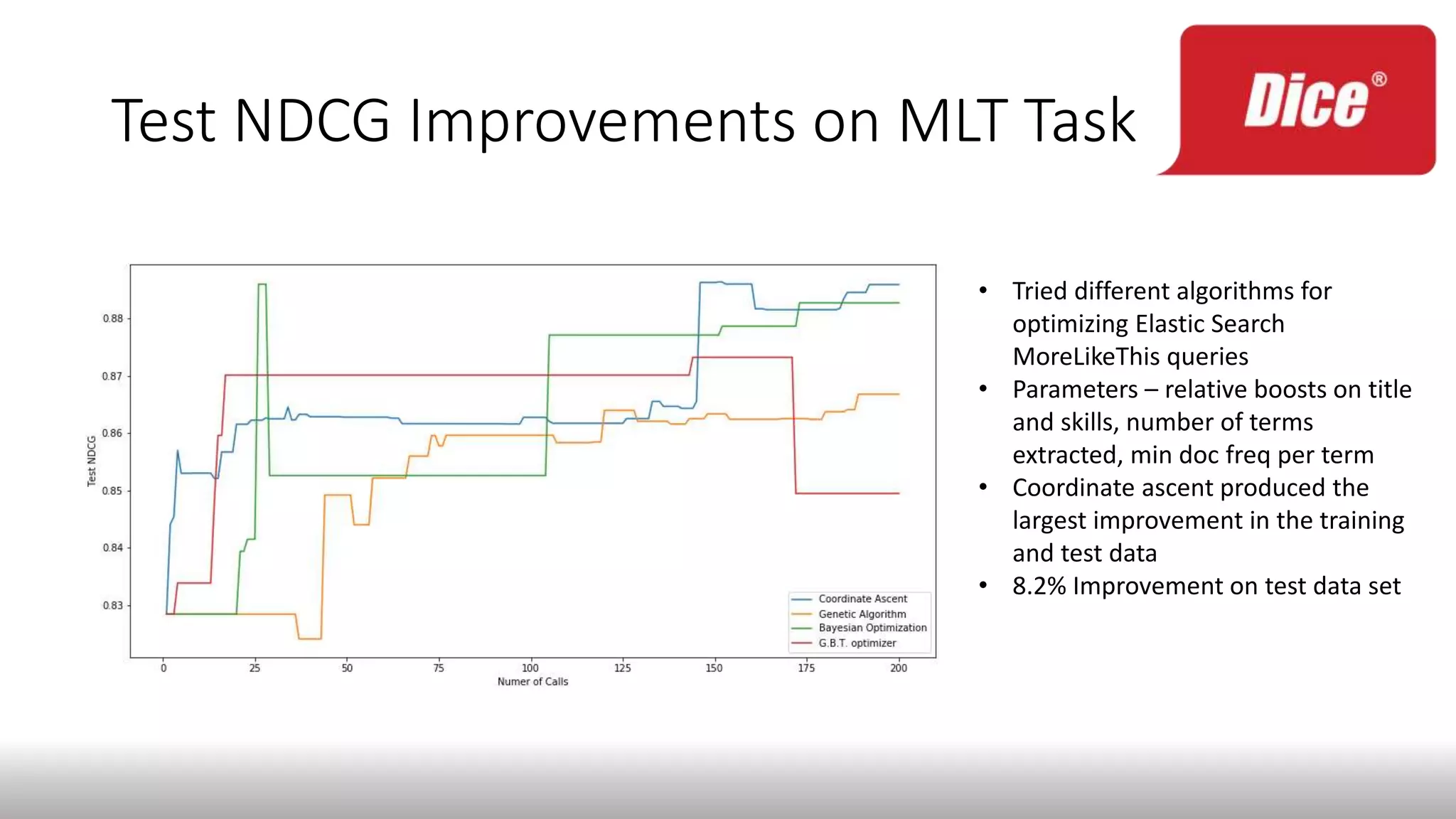

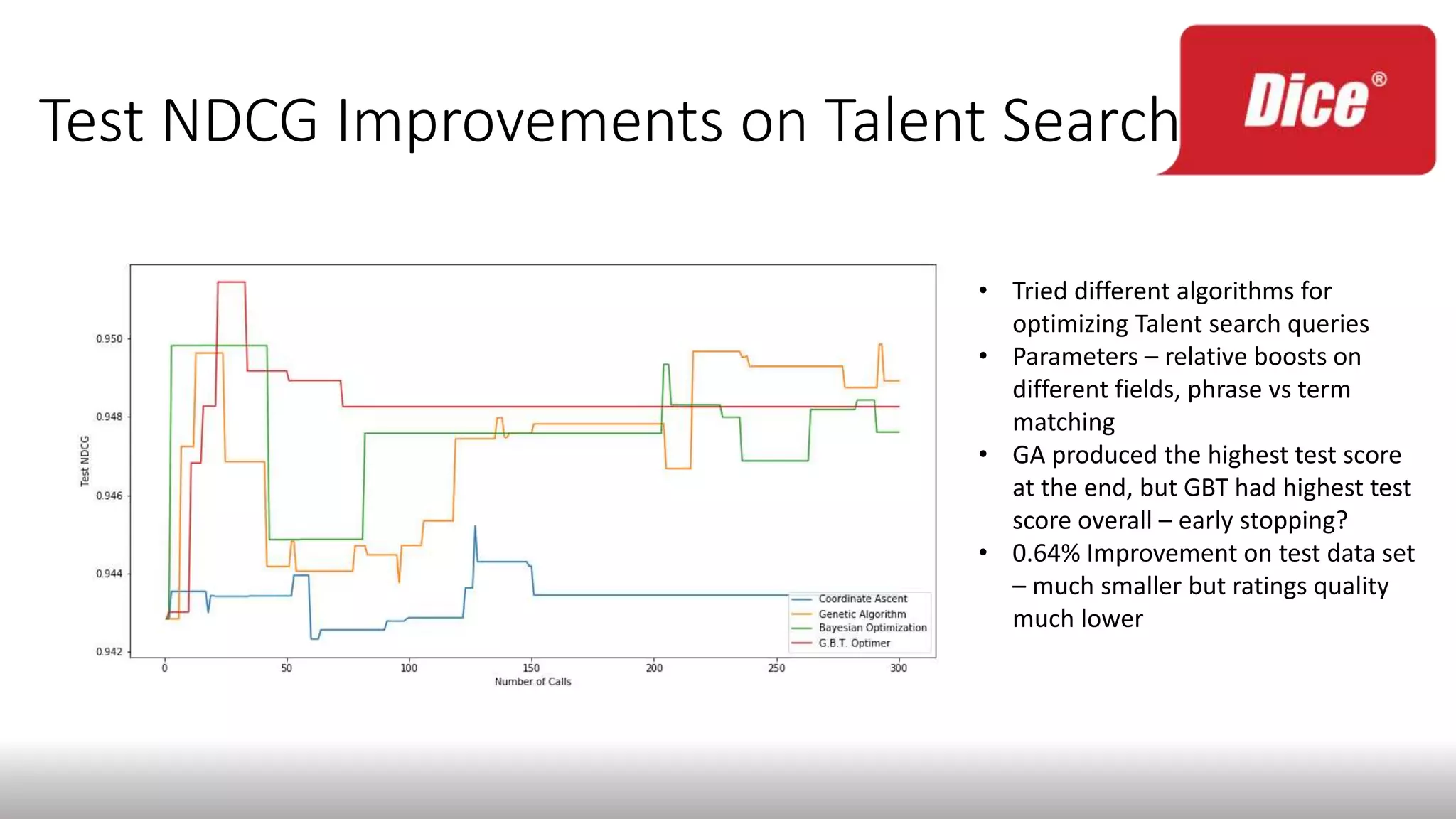

The document discusses how machine learning techniques can enhance enterprise search, focusing on improving search relevancy by optimizing recall and precision. It introduces conceptual search methods, such as those built on word2vec, to address polysemy (one word with several meanings) and synonymy (several words with one meaning), and outlines optimization strategies, including black-box algorithms for tuning relevancy. It also highlights the importance of fine-tuning search engine settings to improve the user experience and introduces metrics for evaluating search performance.
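
To make the two relevancy measures named in the summary concrete, here is a minimal sketch of how precision and recall could be computed for a single query. The function name `precision_recall` and the document IDs are hypothetical and are not taken from the slides. The sketch uses the standard set-based definitions: precision is the share of retrieved documents that are relevant, and recall is the share of relevant documents that were retrieved.

```python
def precision_recall(retrieved, relevant):
    """Return (precision, recall) for one query.

    retrieved: document IDs returned by the search engine (hypothetical IDs)
    relevant:  document IDs judged relevant for the query
    """
    retrieved_set = set(retrieved)
    relevant_set = set(relevant)
    hits = retrieved_set & relevant_set  # relevant documents that were returned

    # Guard against division by zero when either set is empty.
    precision = len(hits) / len(retrieved_set) if retrieved_set else 0.0
    recall = len(hits) / len(relevant_set) if relevant_set else 0.0
    return precision, recall


# Illustrative data only: 4 documents returned, 3 judged relevant, 2 in common.
precision, recall = precision_recall(
    ["d1", "d2", "d3", "d4"],
    ["d2", "d4", "d7"],
)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

In this toy case the engine returns four documents, two of which are relevant, so precision is 2/4; it finds two of the three relevant documents, so recall is 2/3. Returning more documents typically raises recall at the cost of precision, which is the trade-off that relevancy tuning has to balance.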