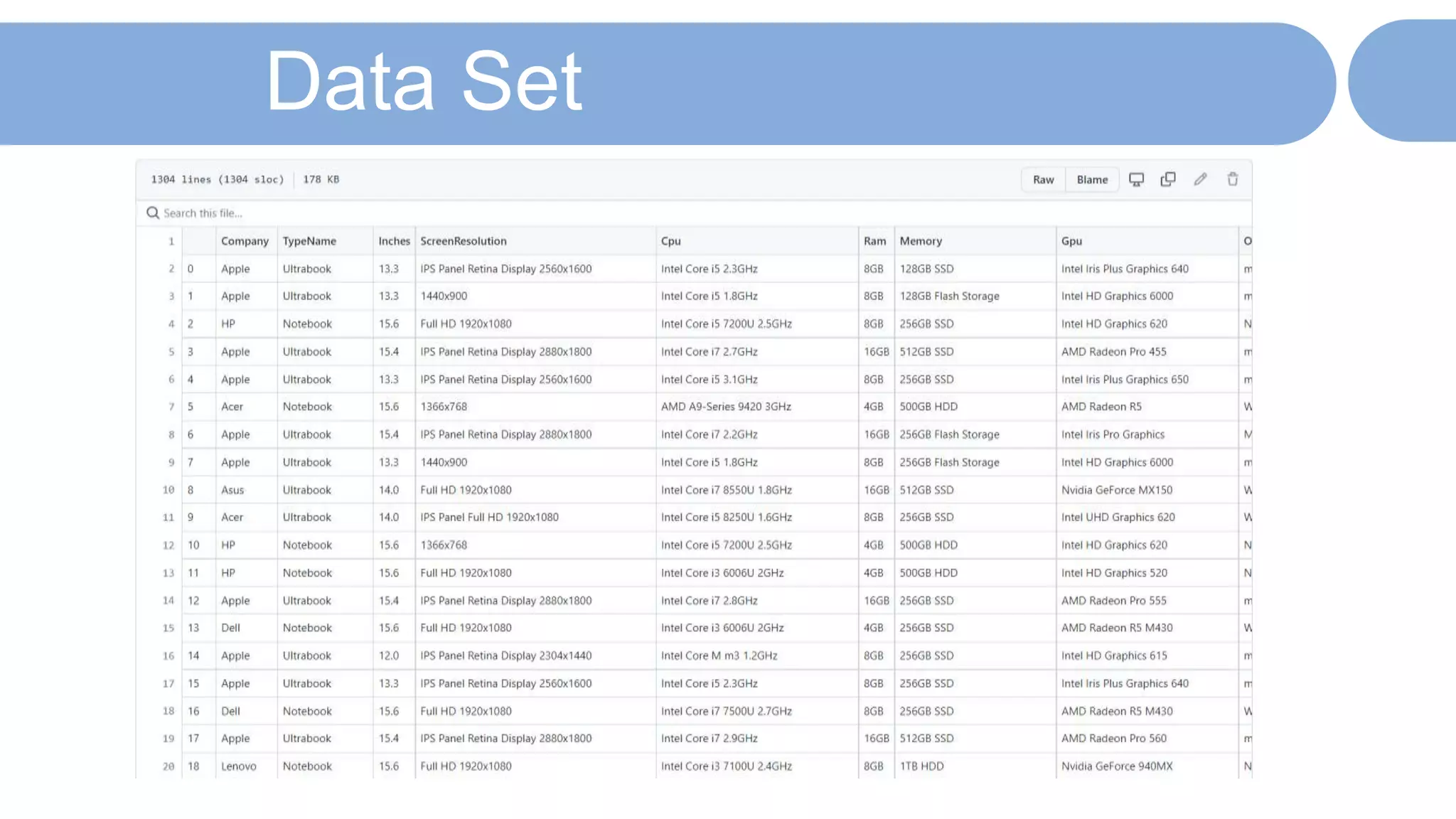

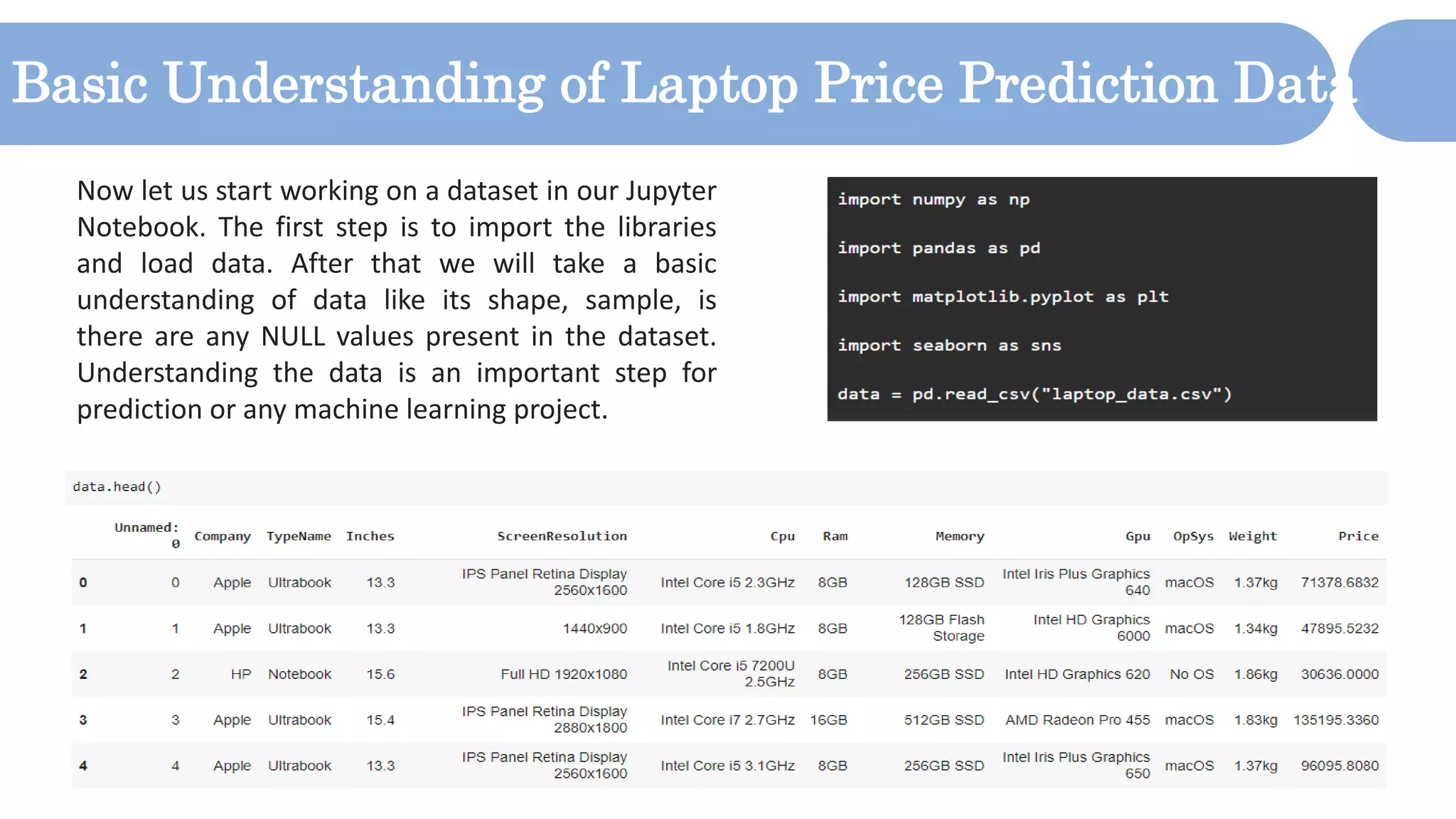

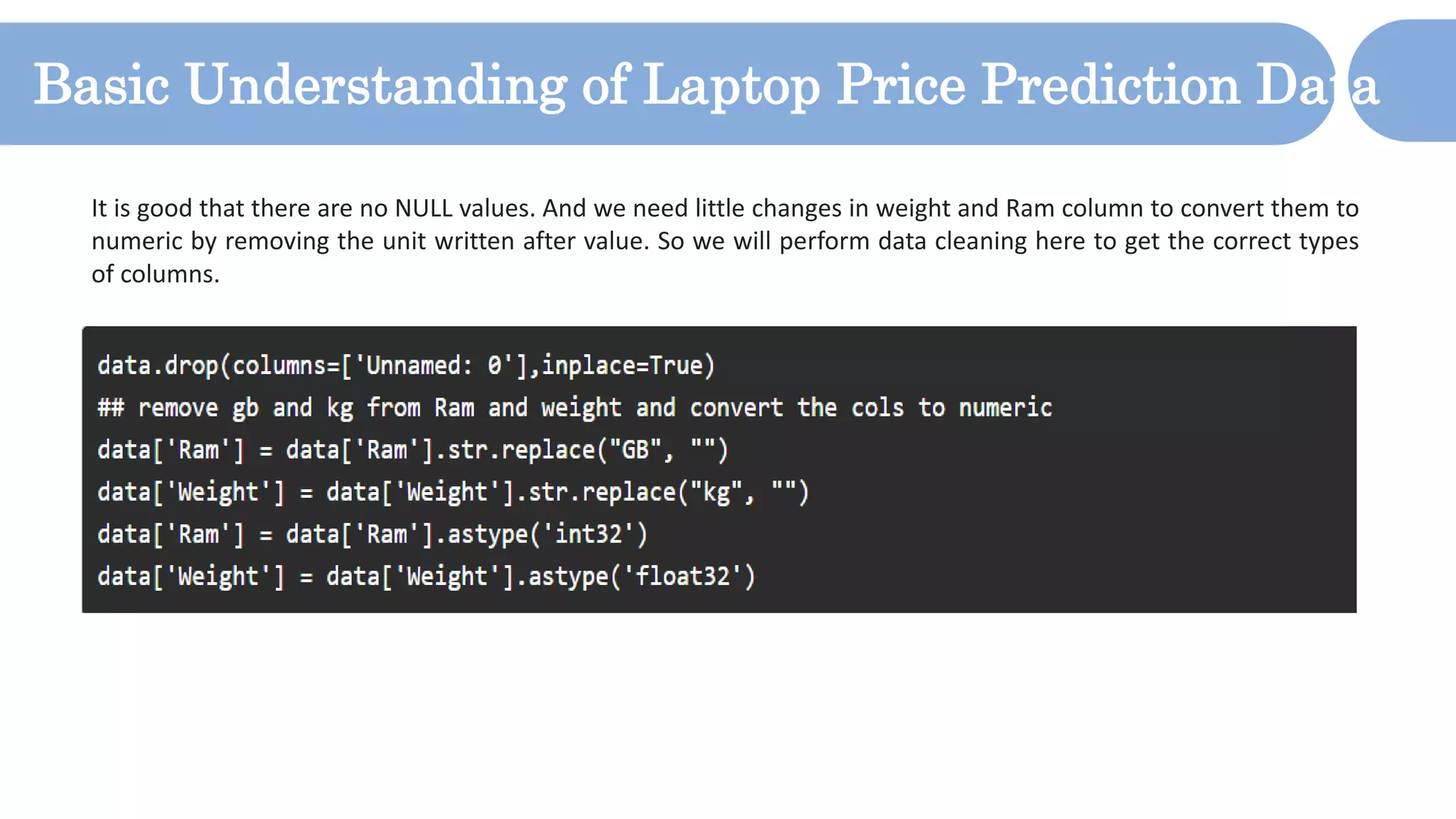

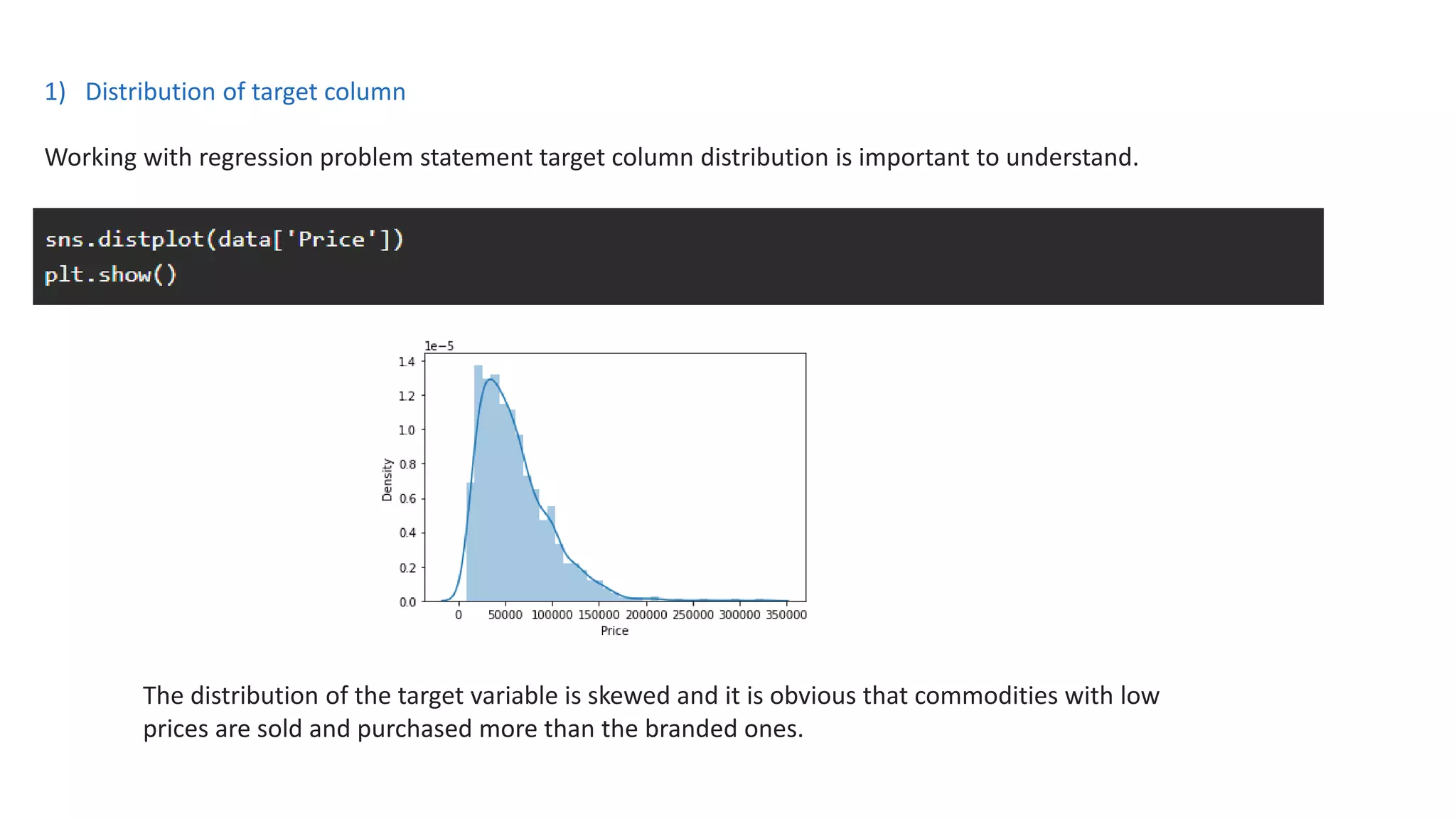

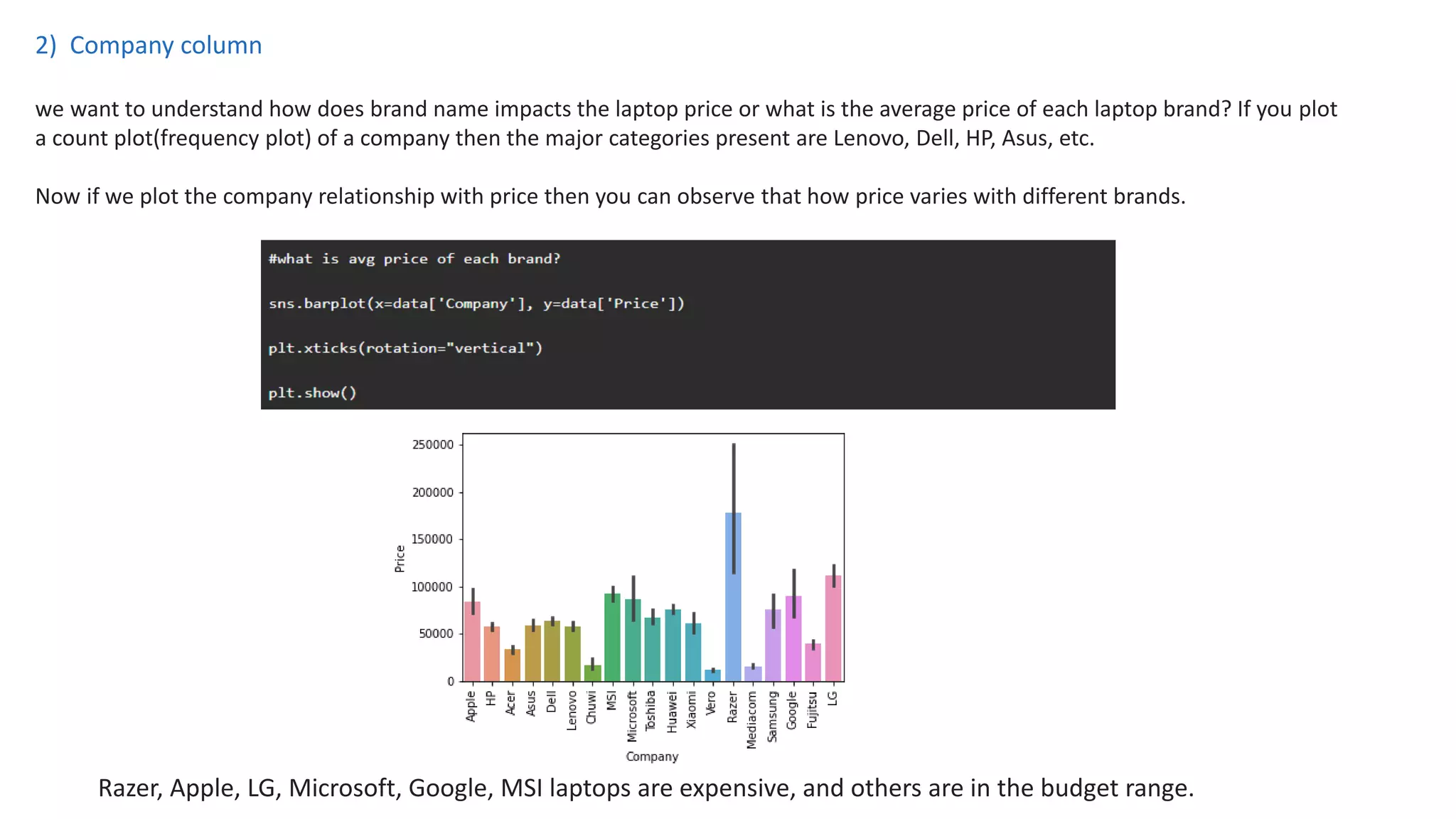

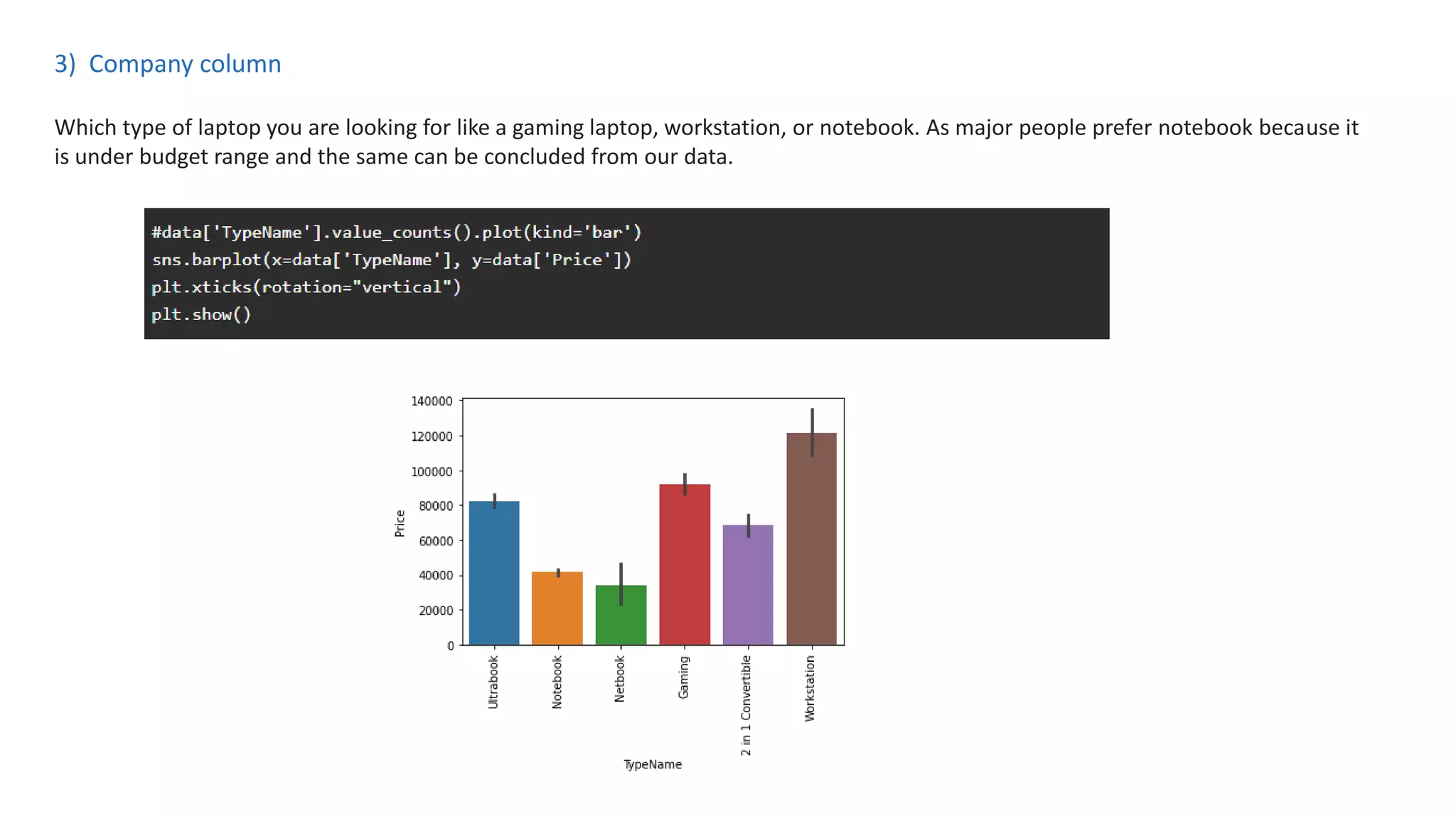

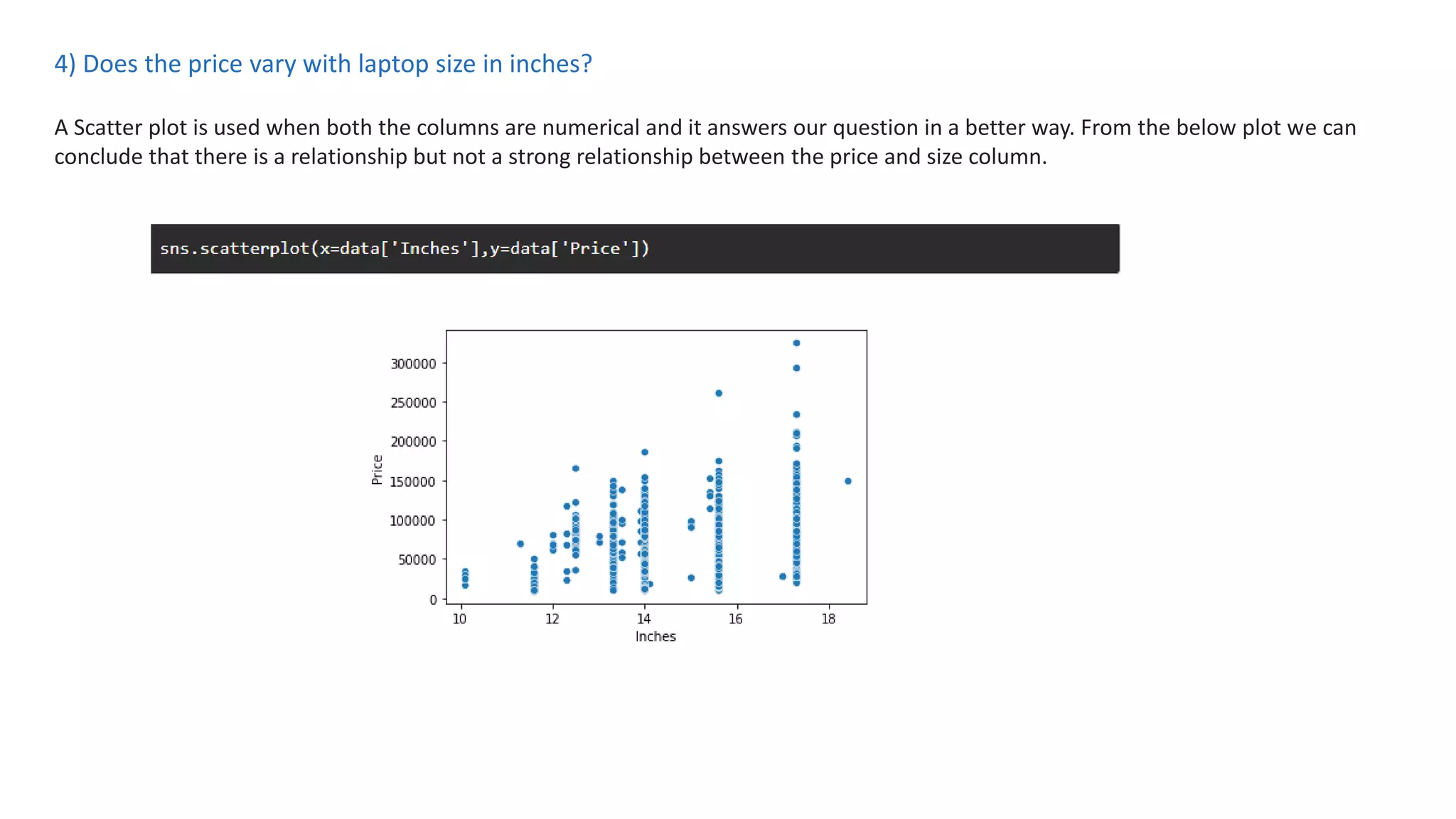

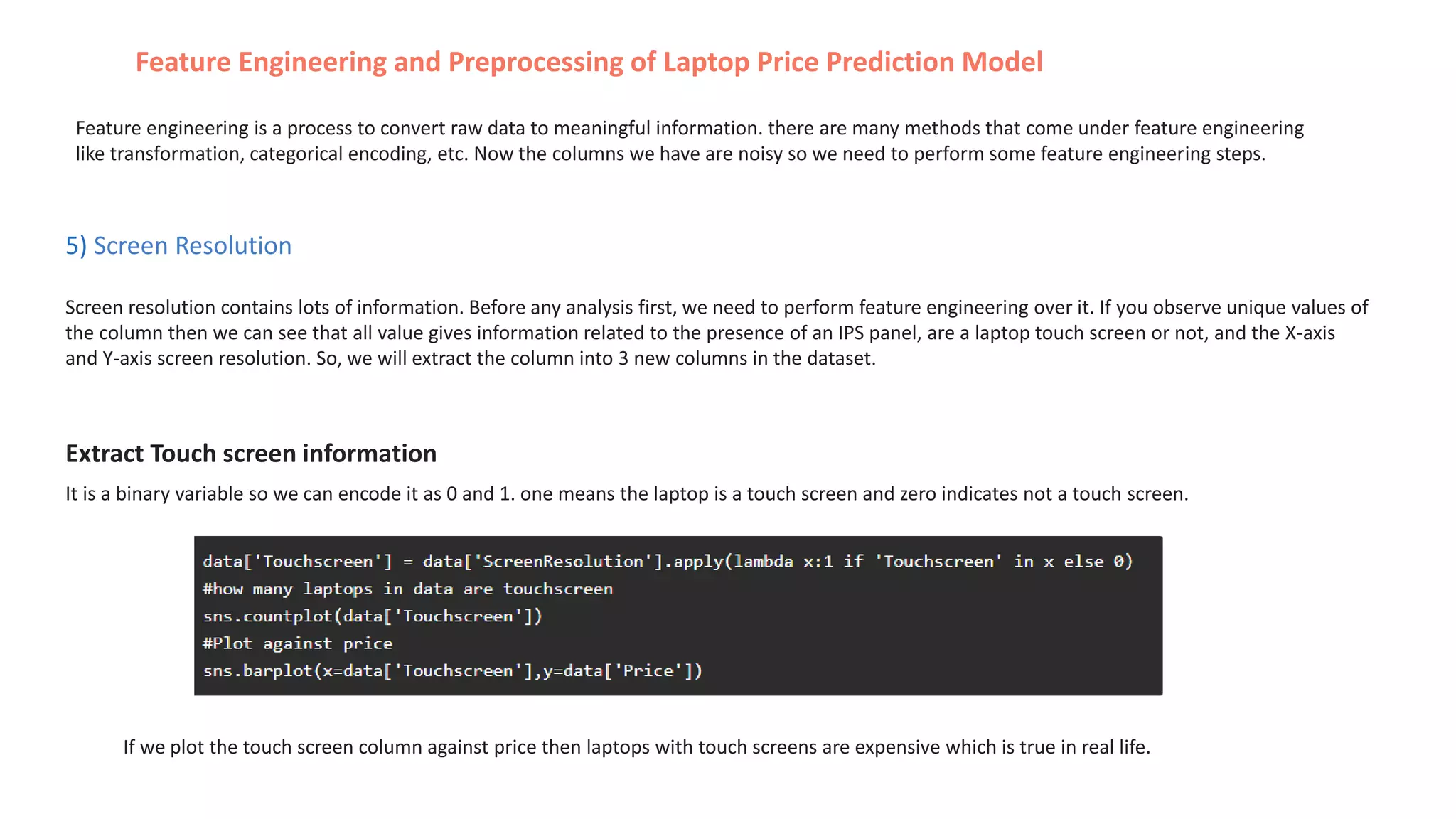

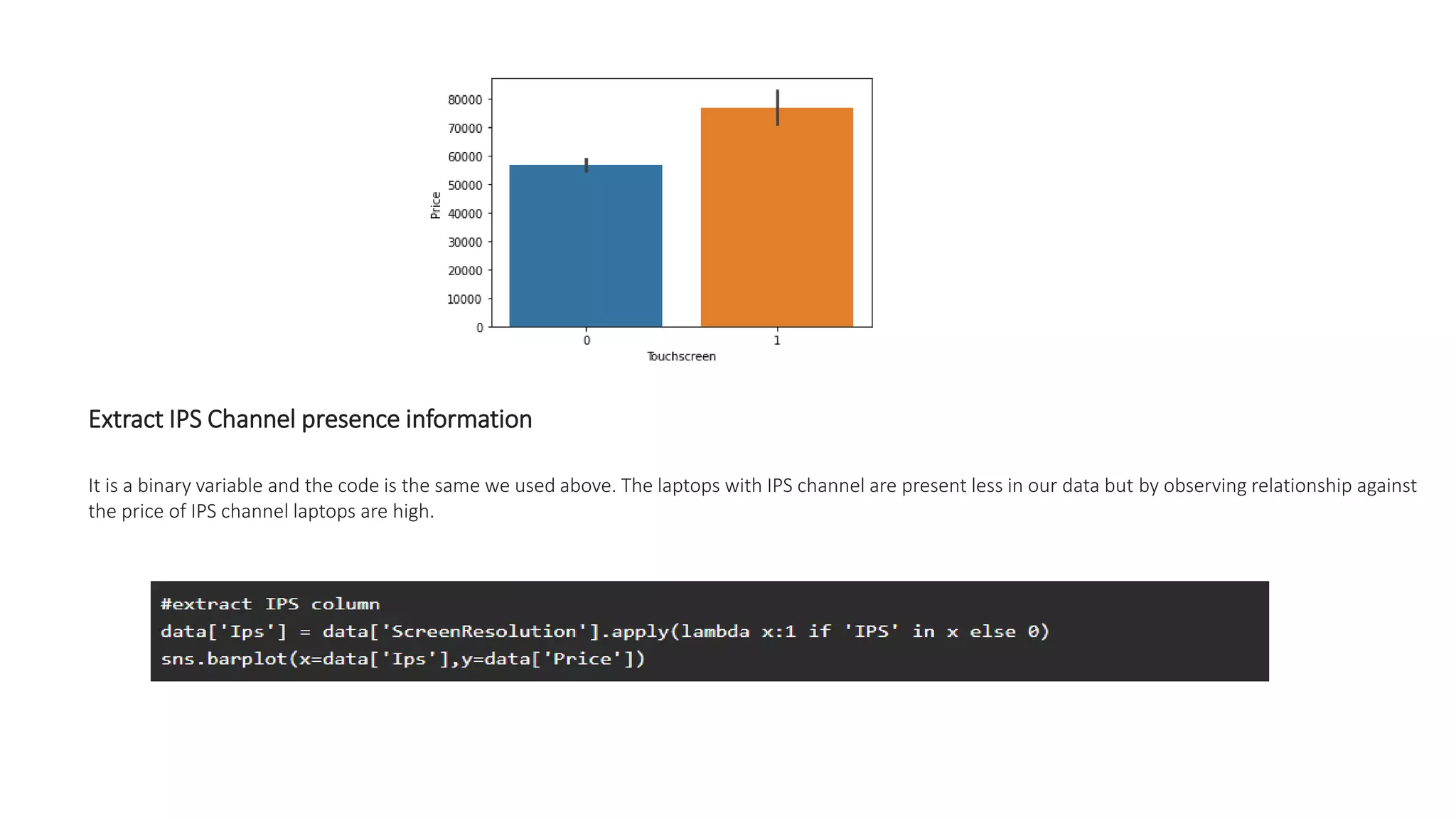

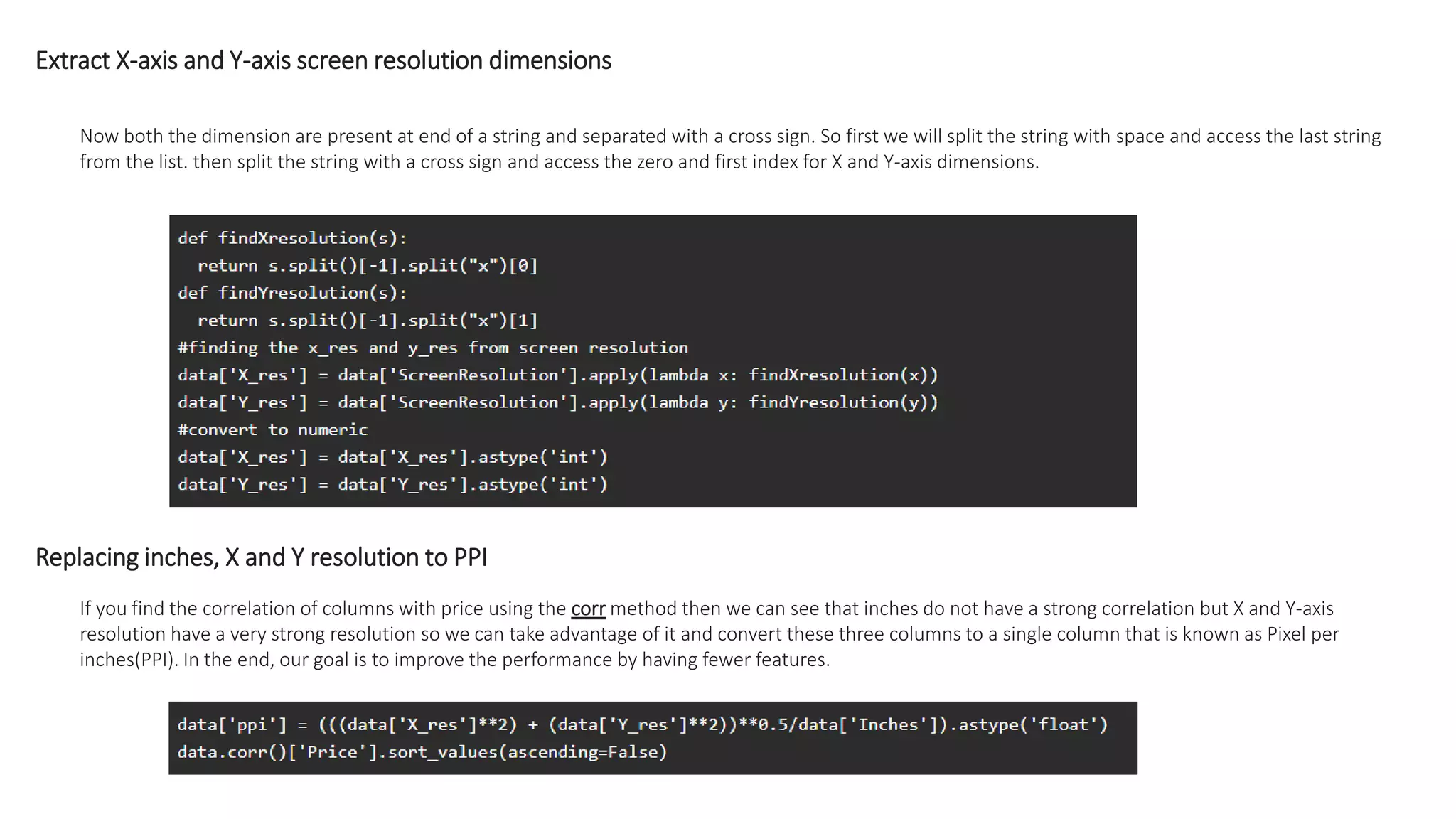

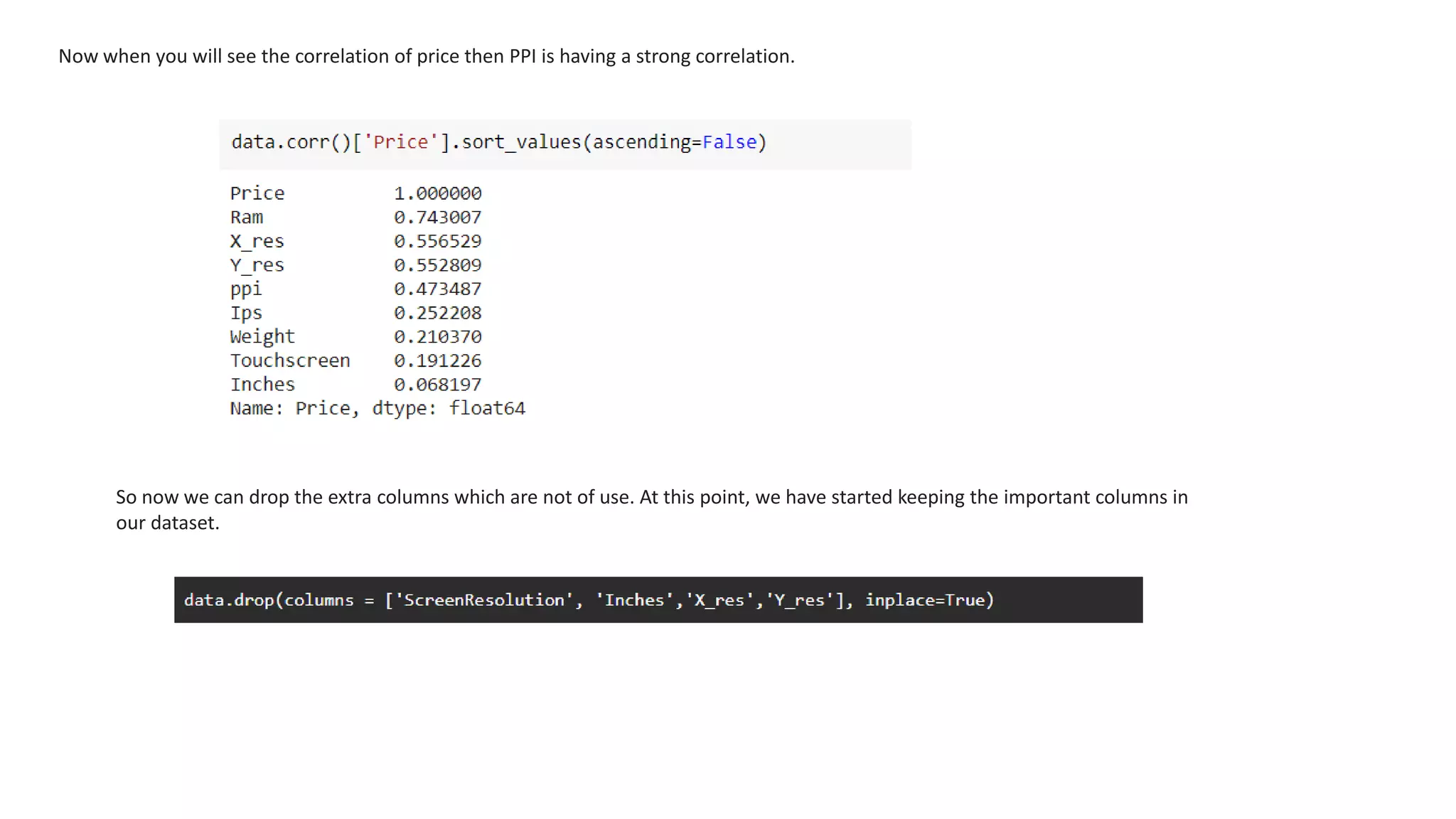

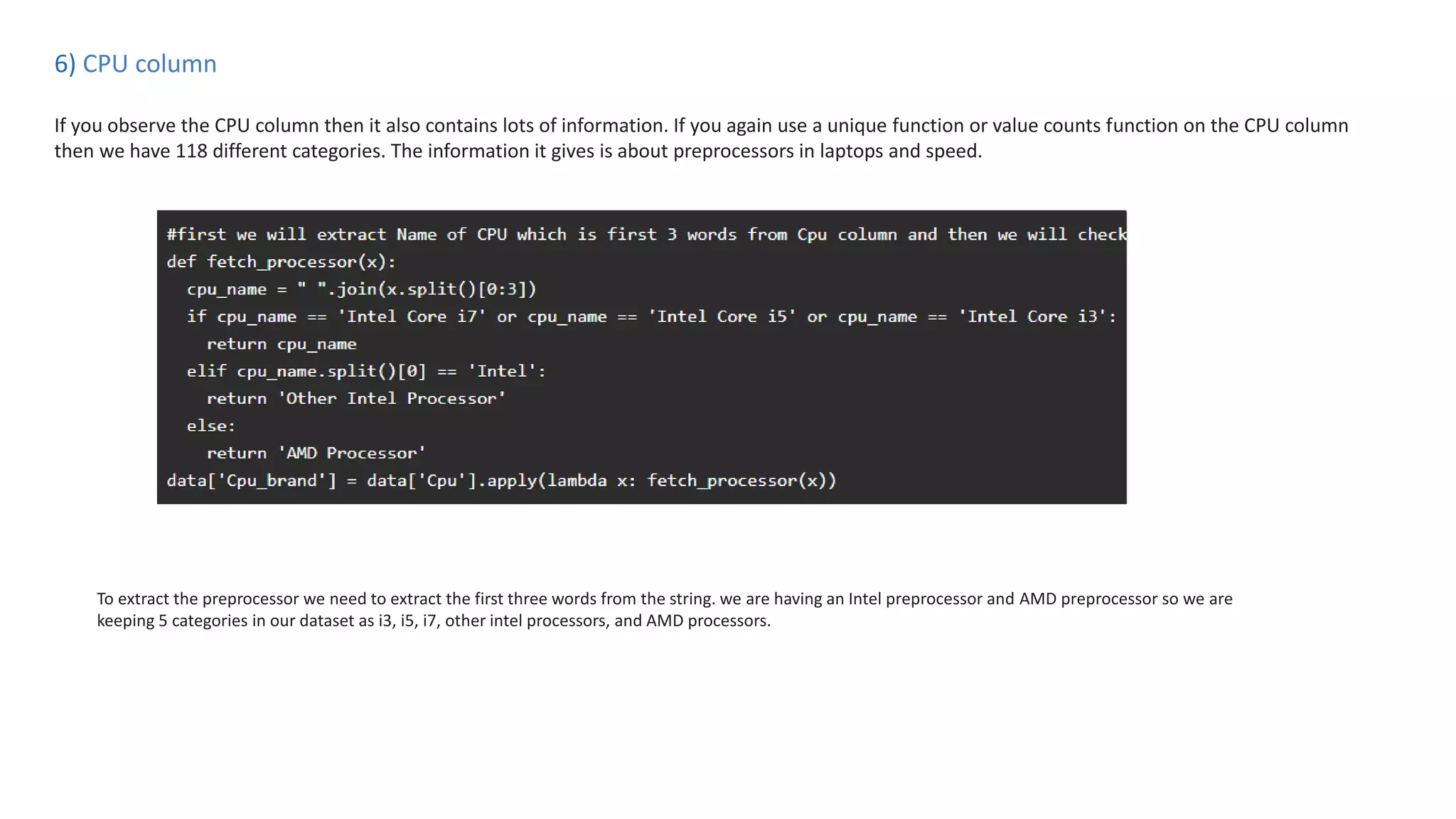

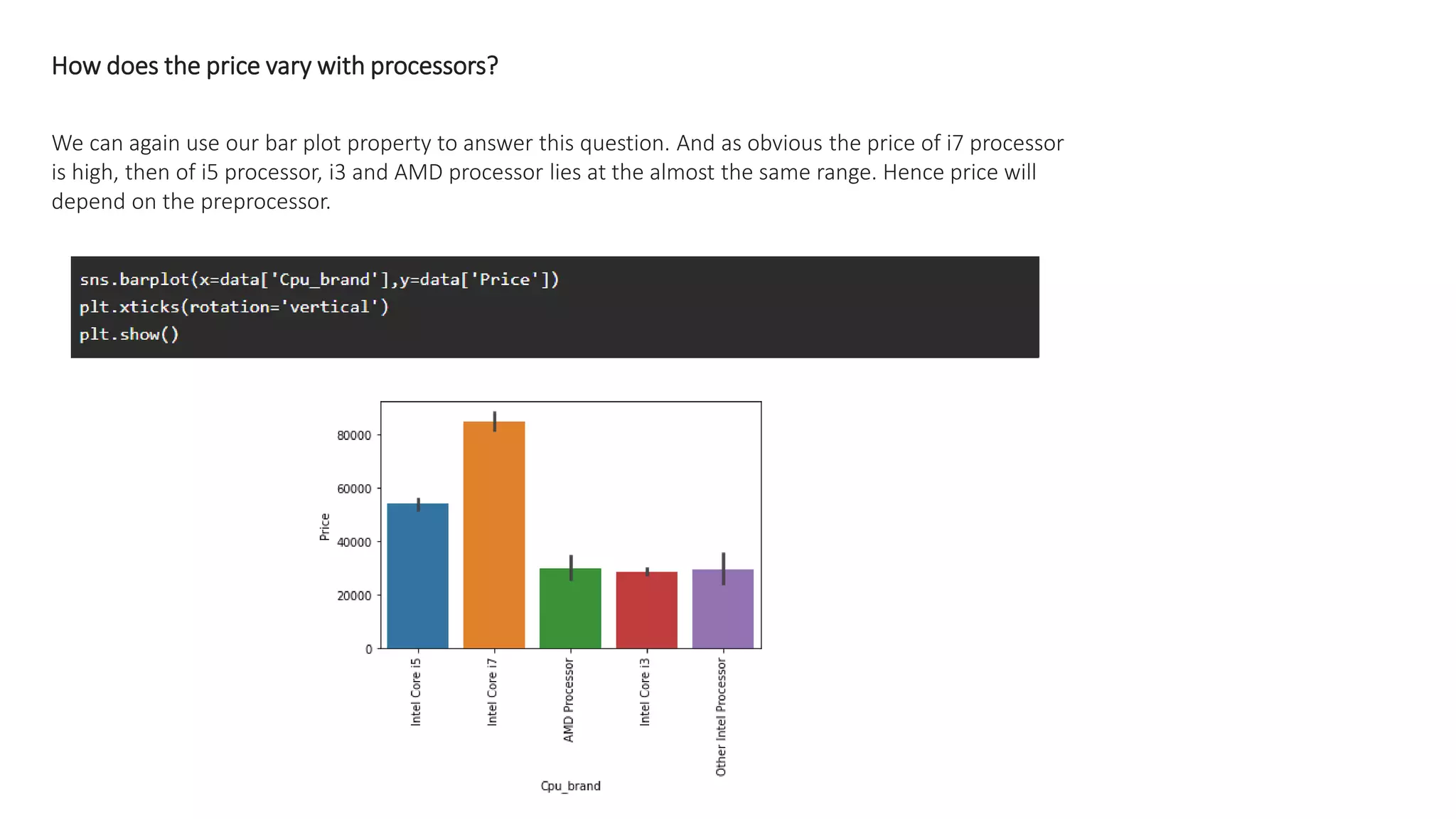

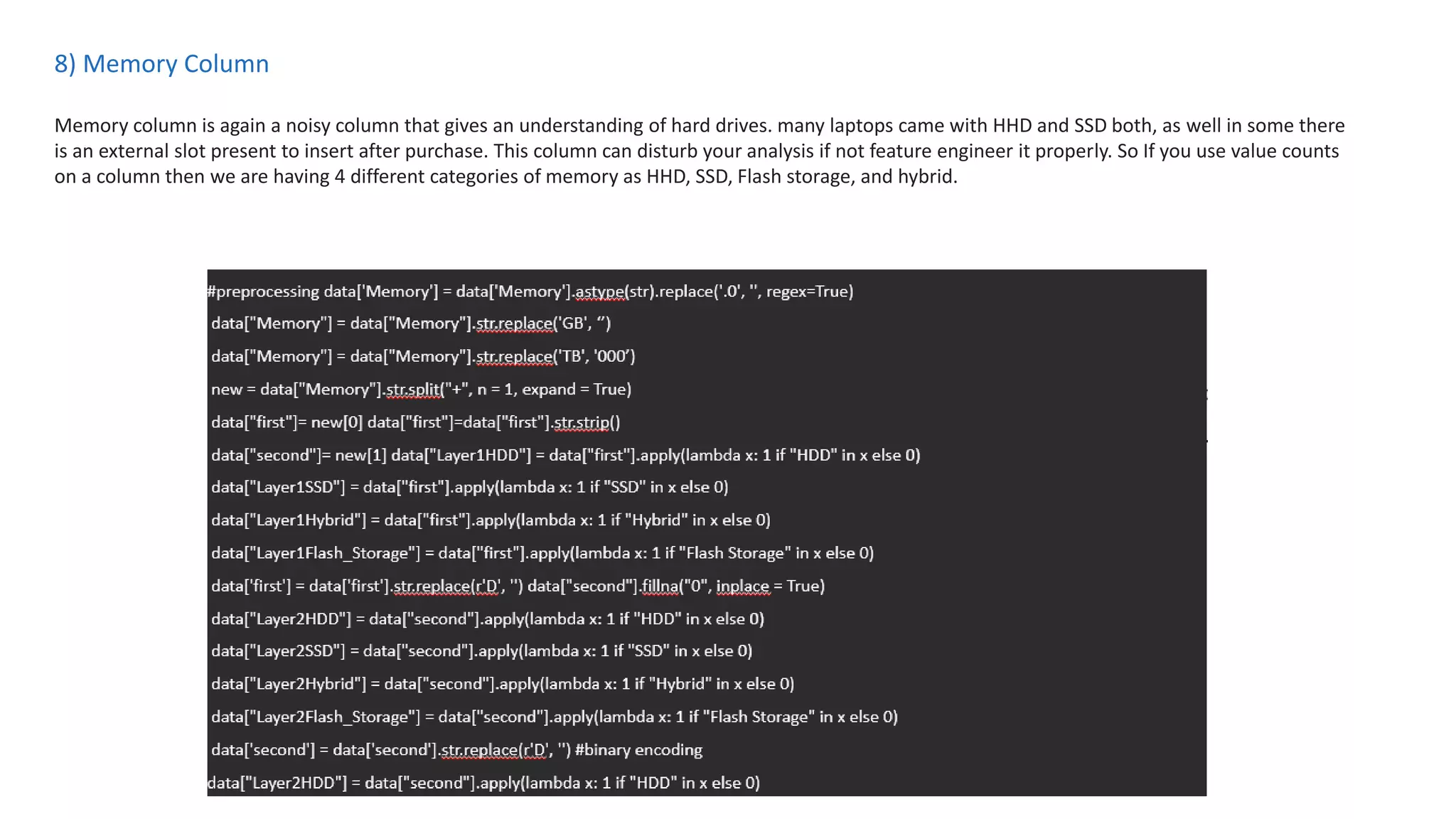

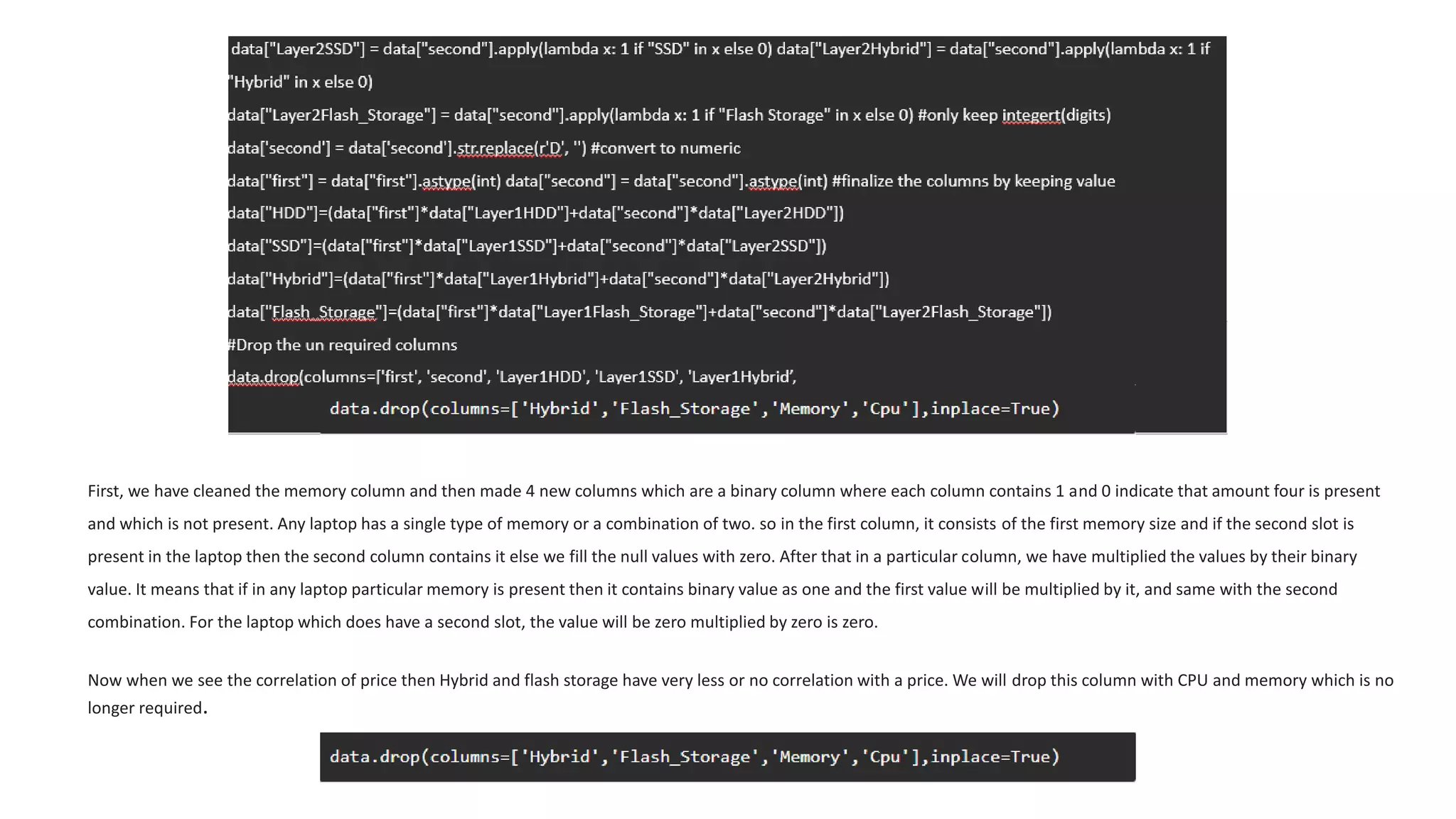

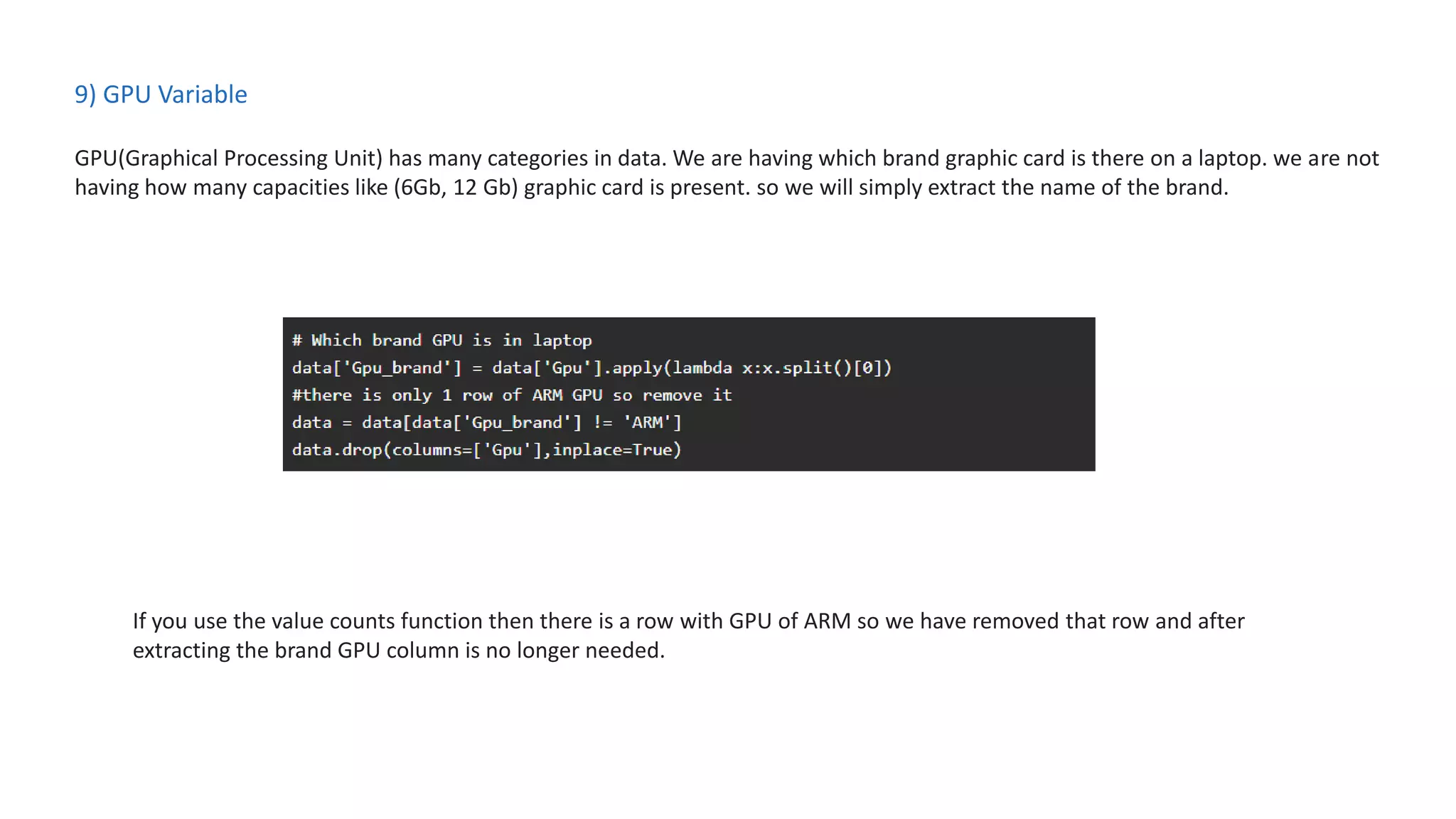

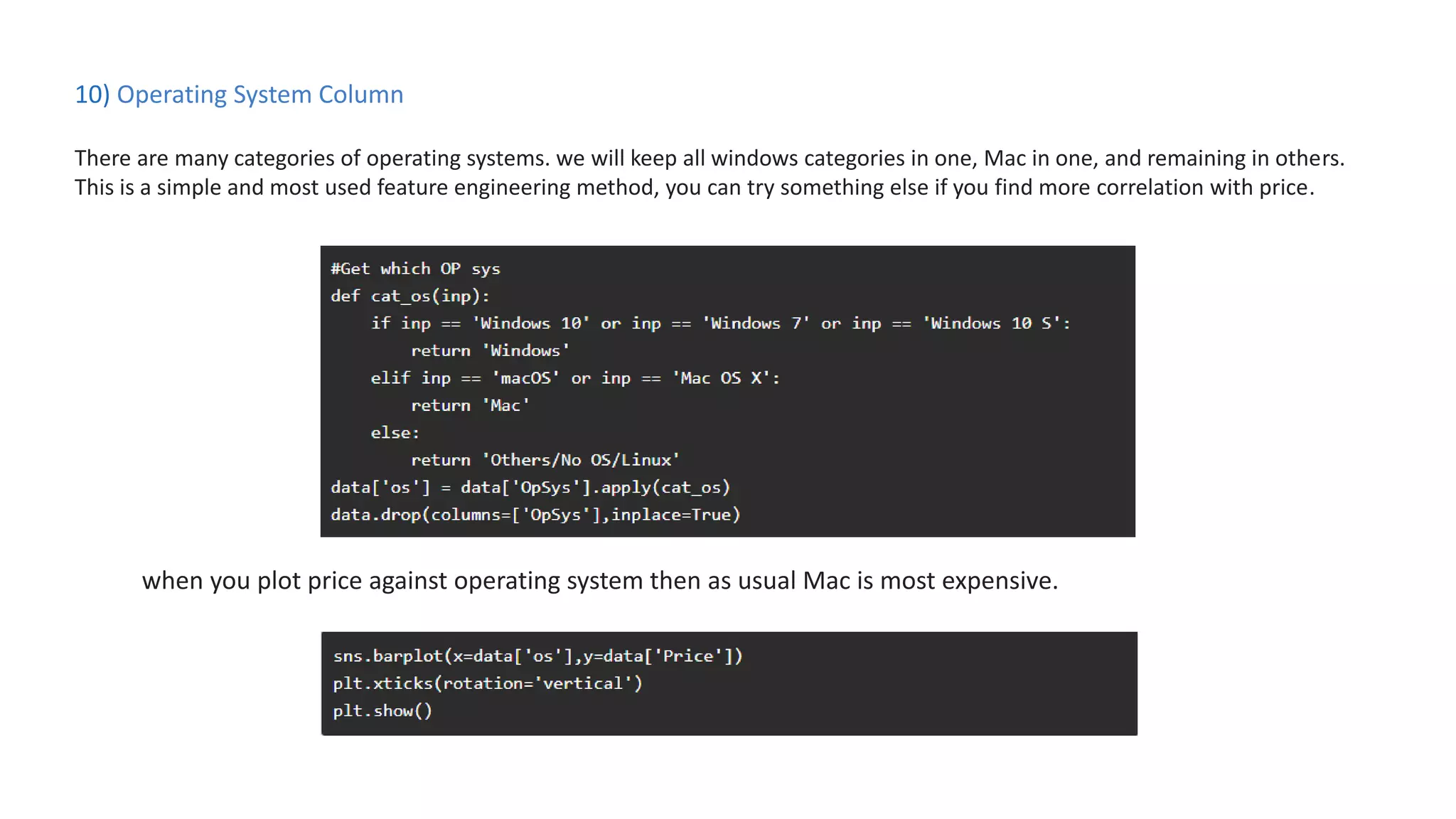

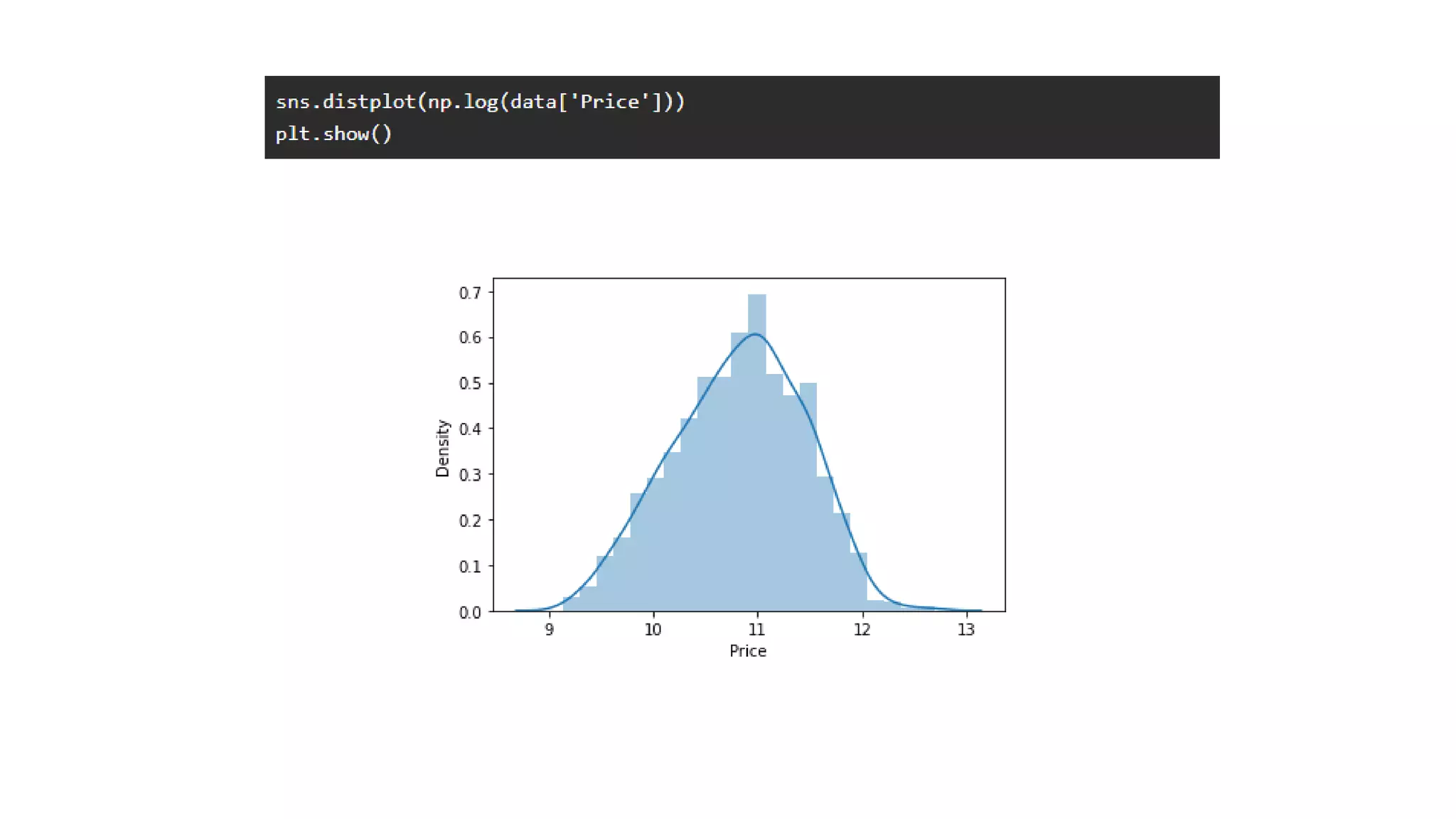

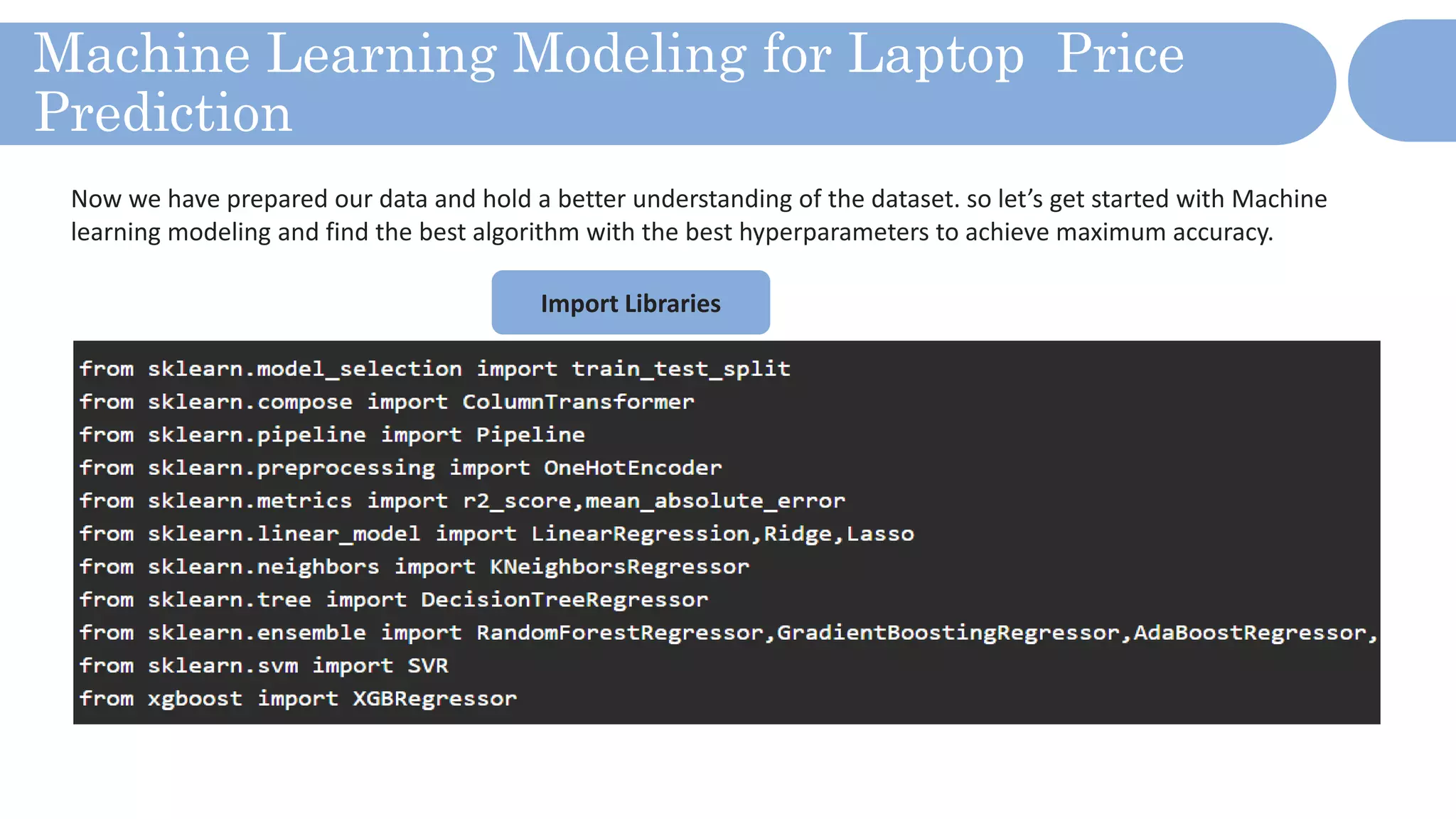

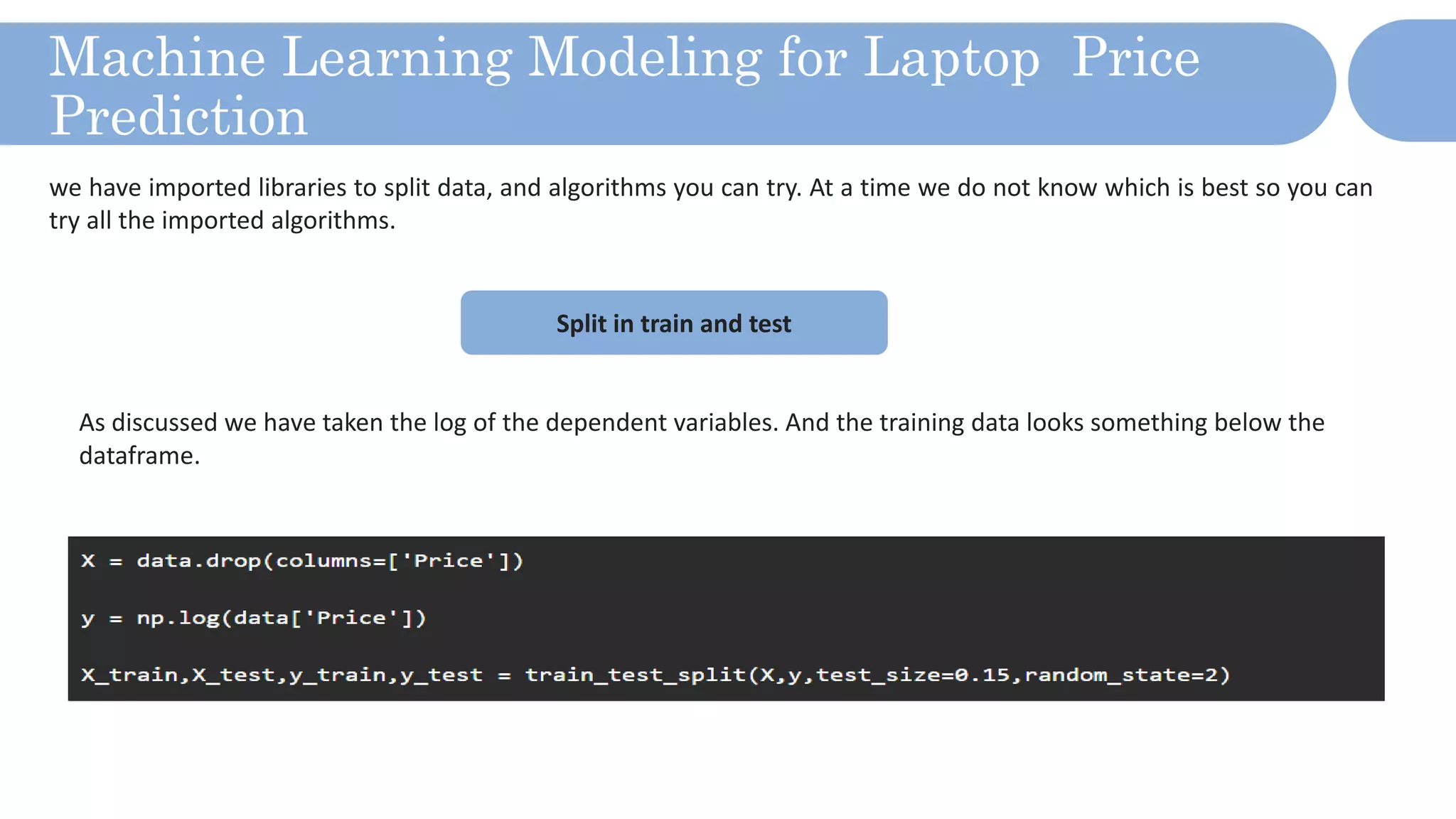

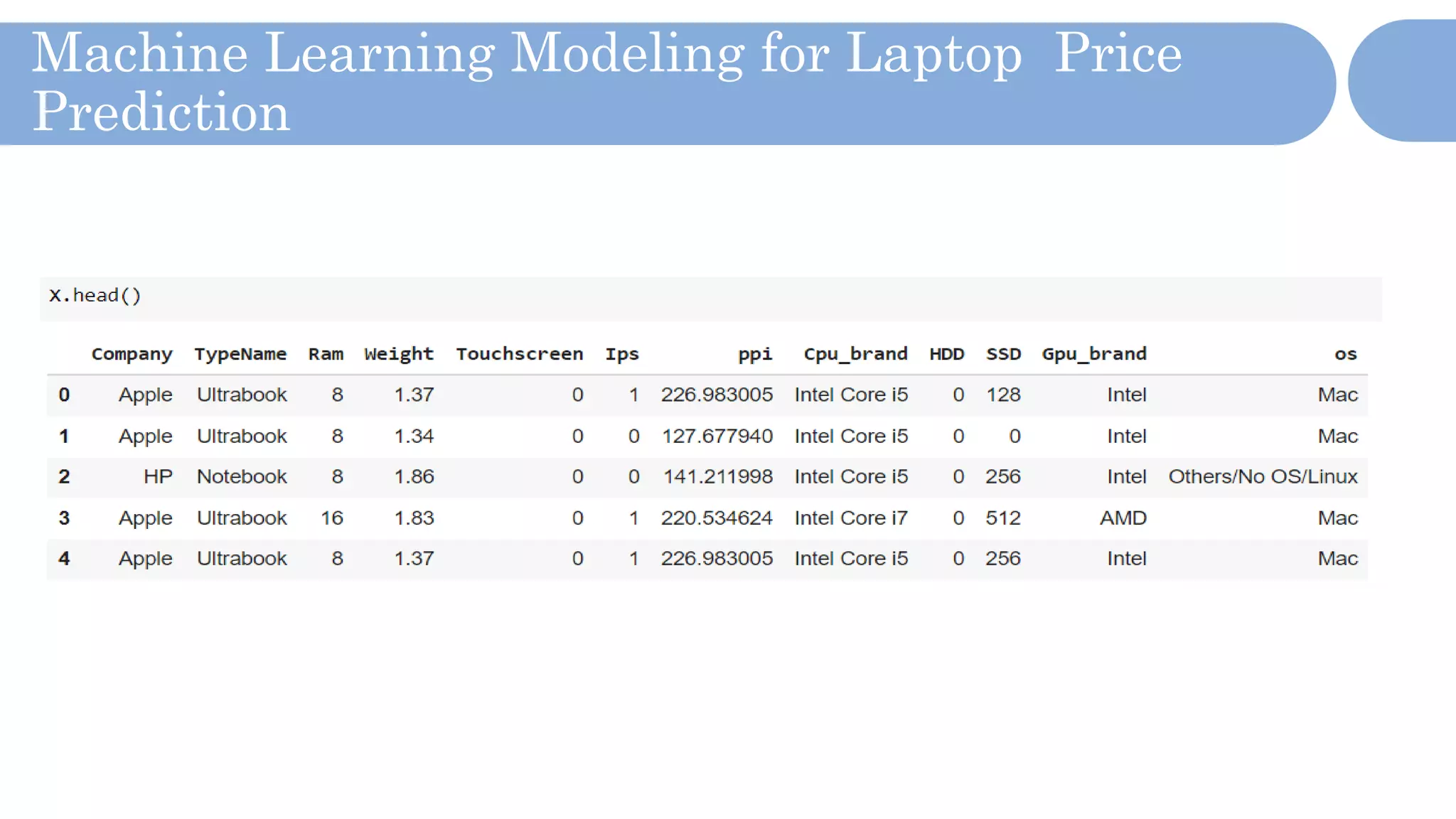

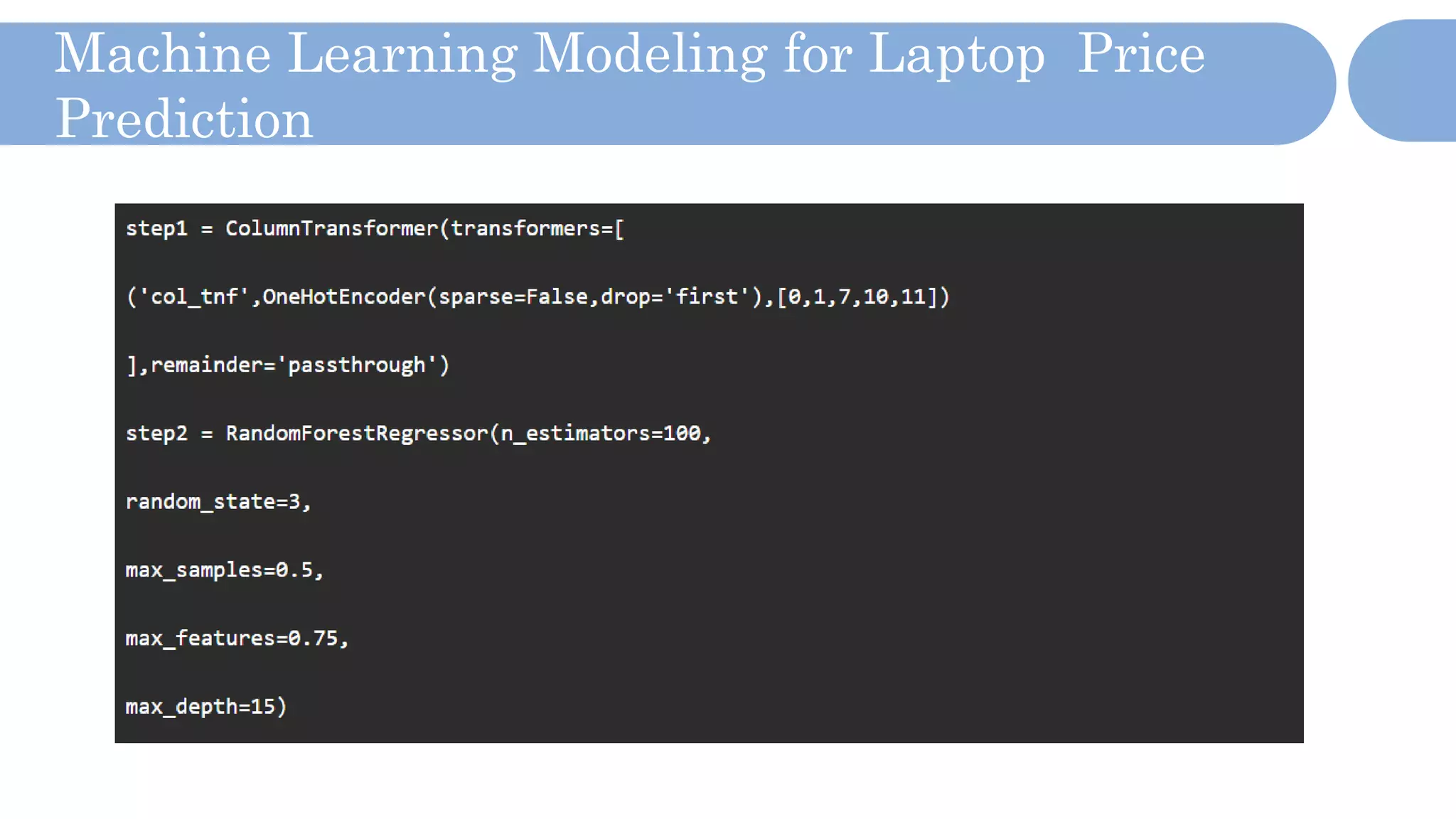

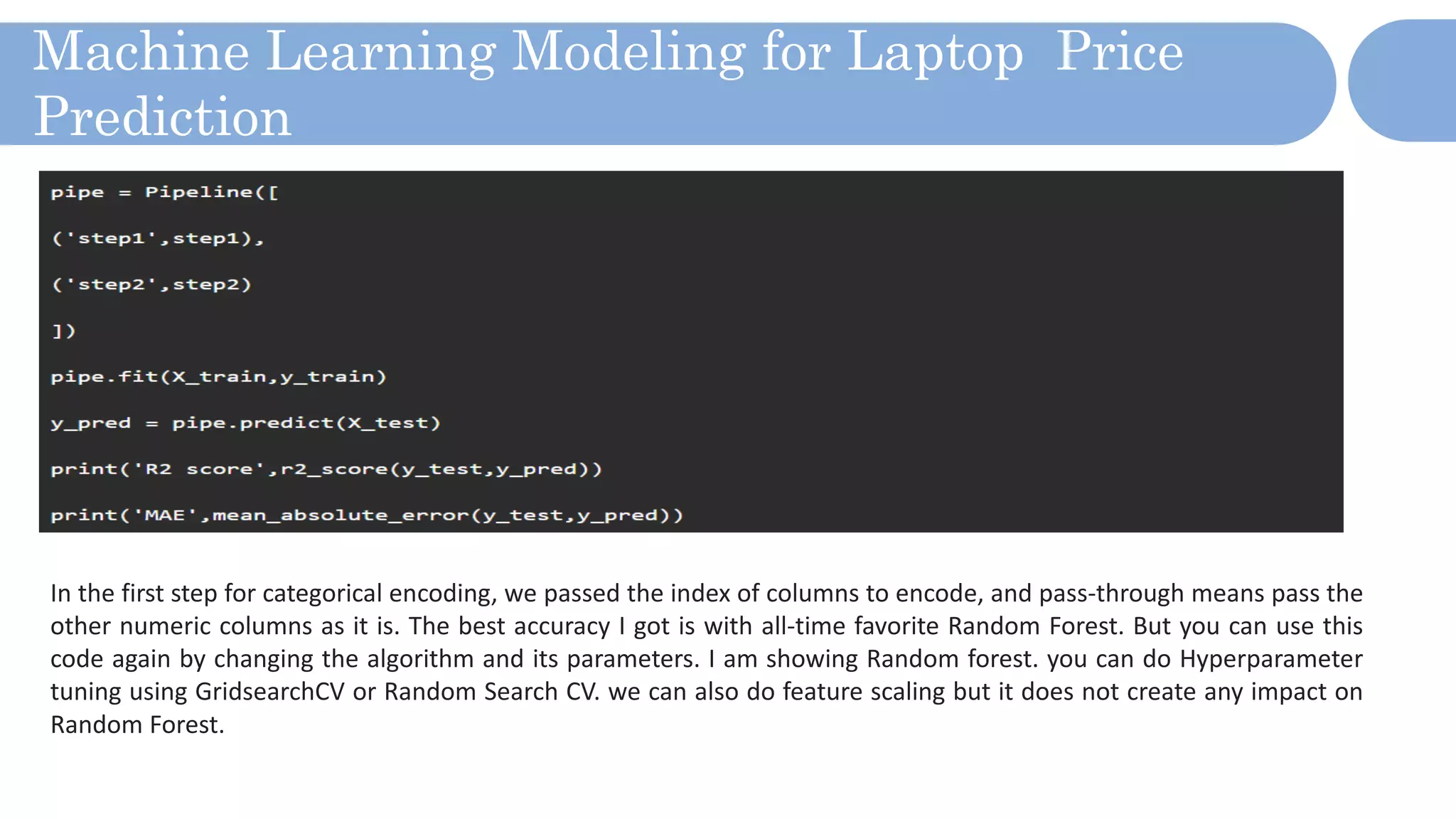

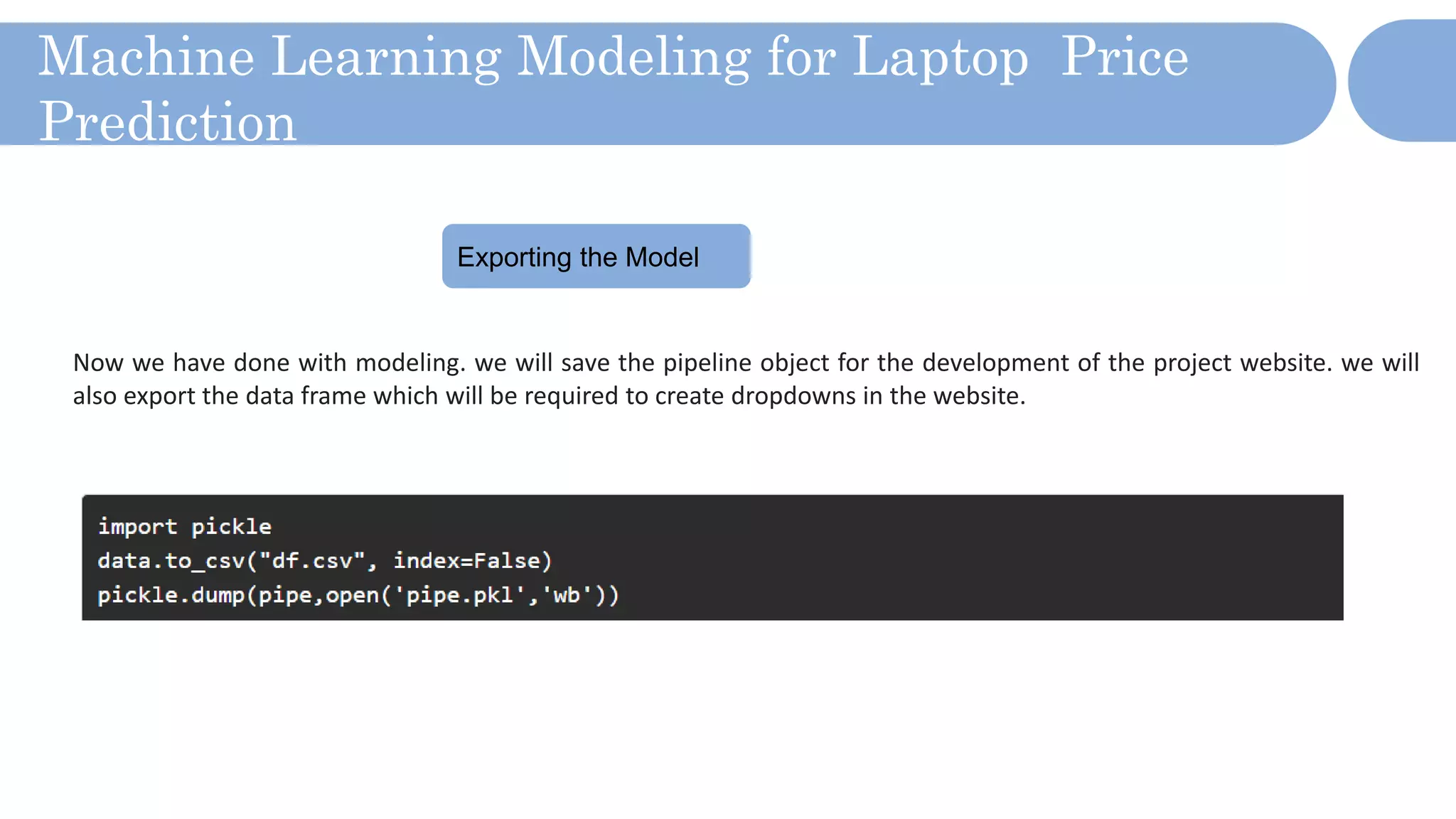

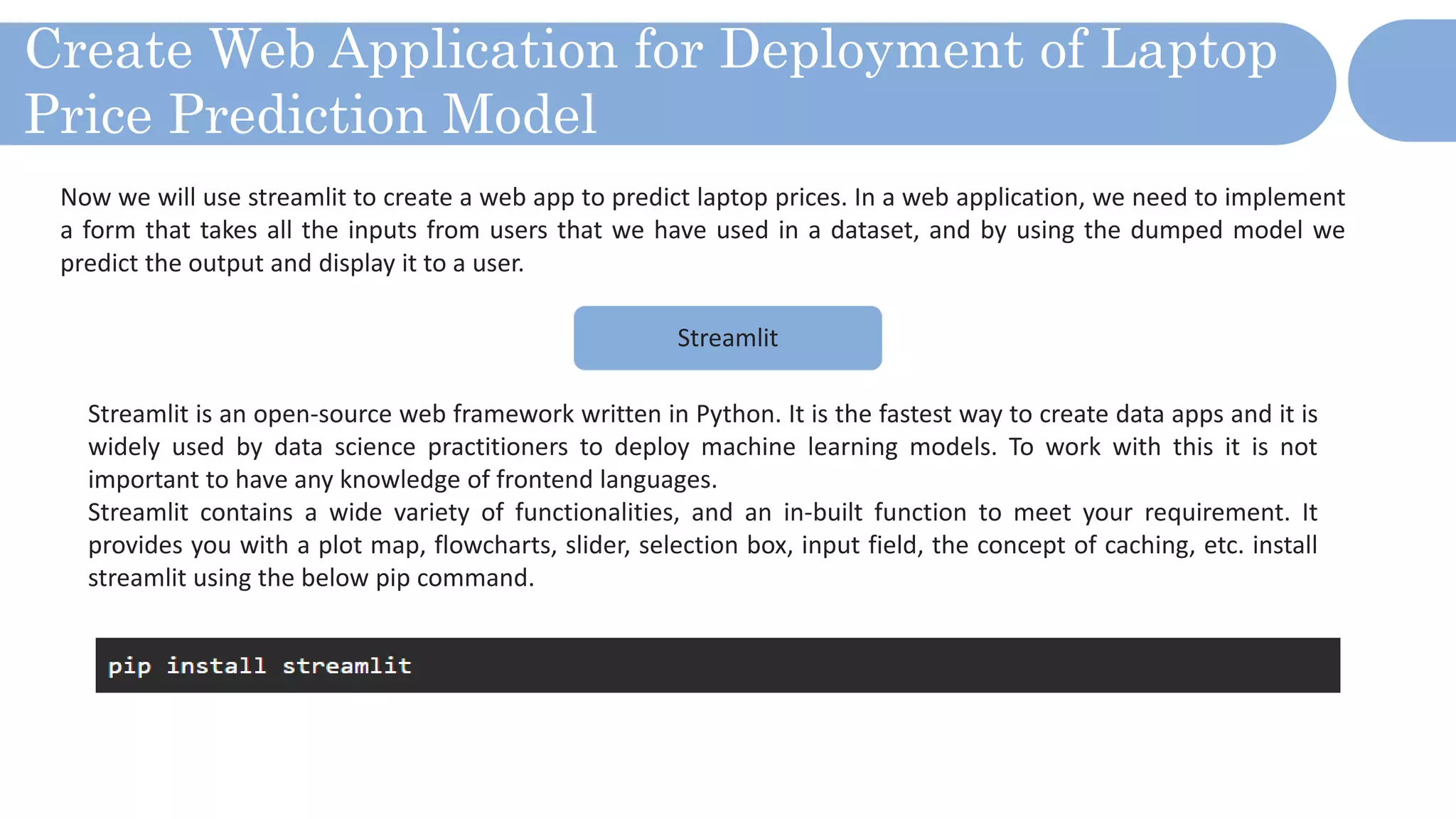

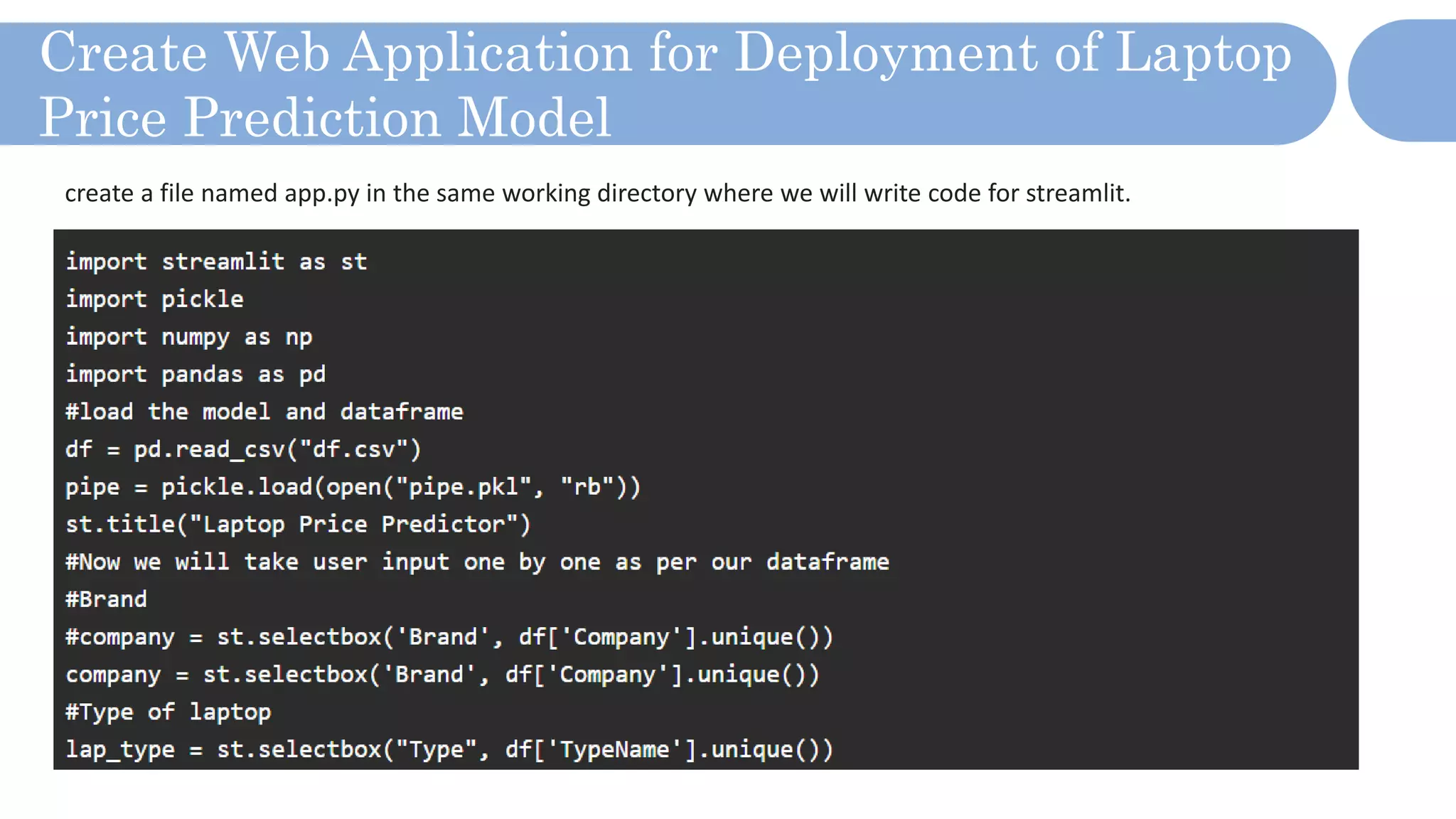

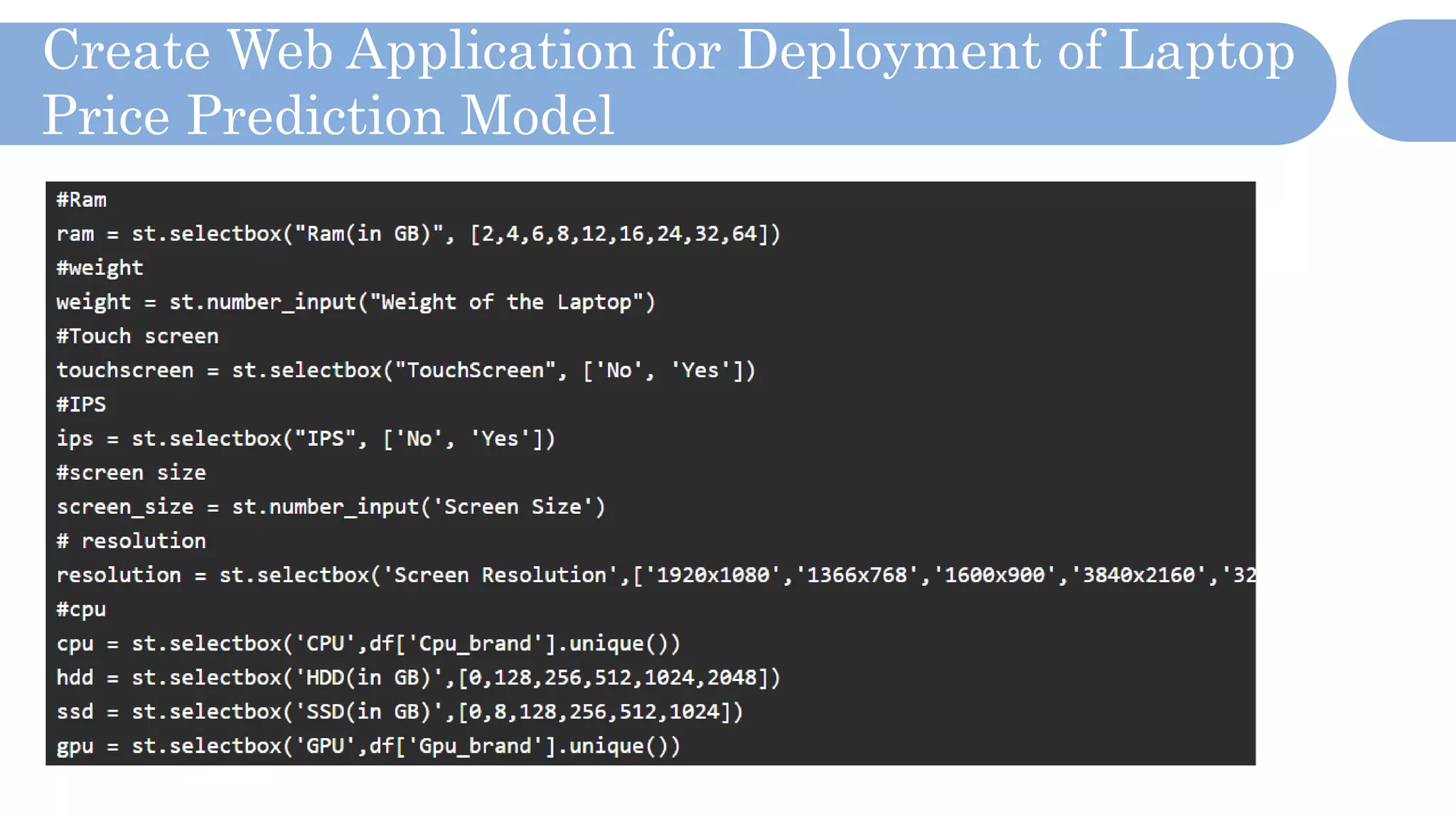

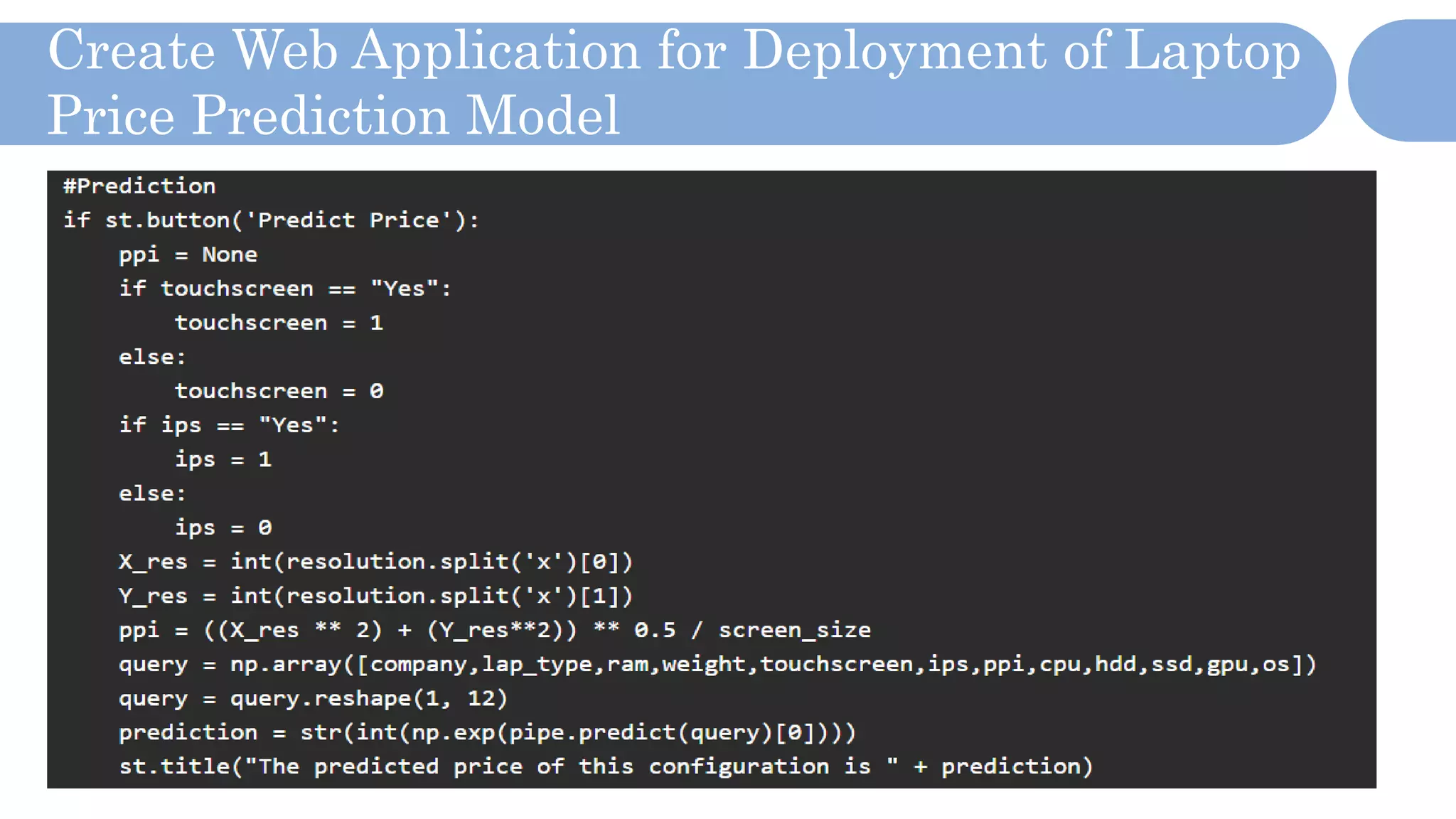

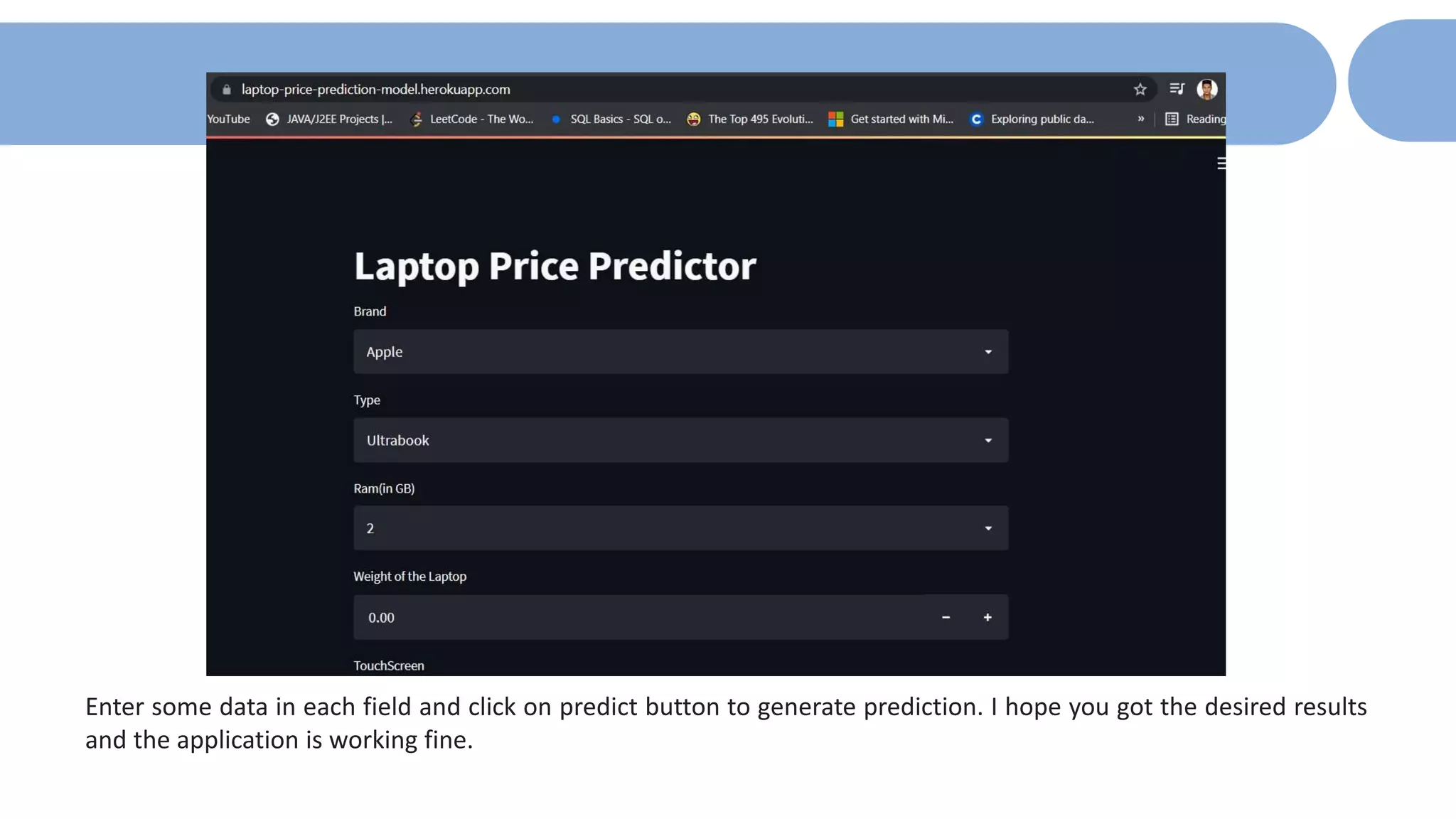

This document describes a group project on laptop price prediction, covering the problem statement and the development process using machine learning techniques. It includes sections on data cleaning, exploratory data analysis, feature engineering, machine learning modeling, and deployment as a web application built with Streamlit. The objective is a model that predicts a laptop's price from a user-specified configuration while coping with a noisy dataset.