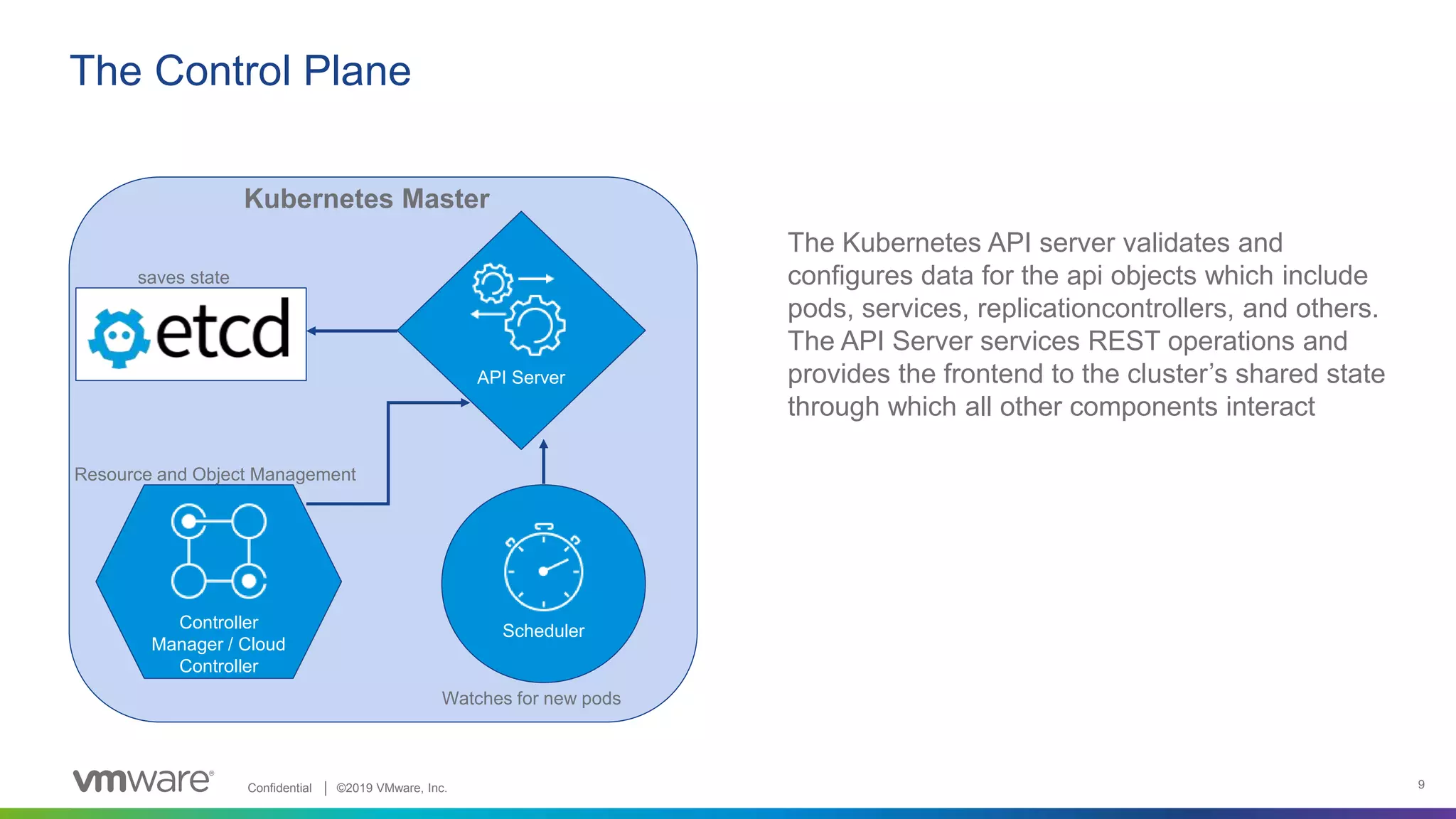

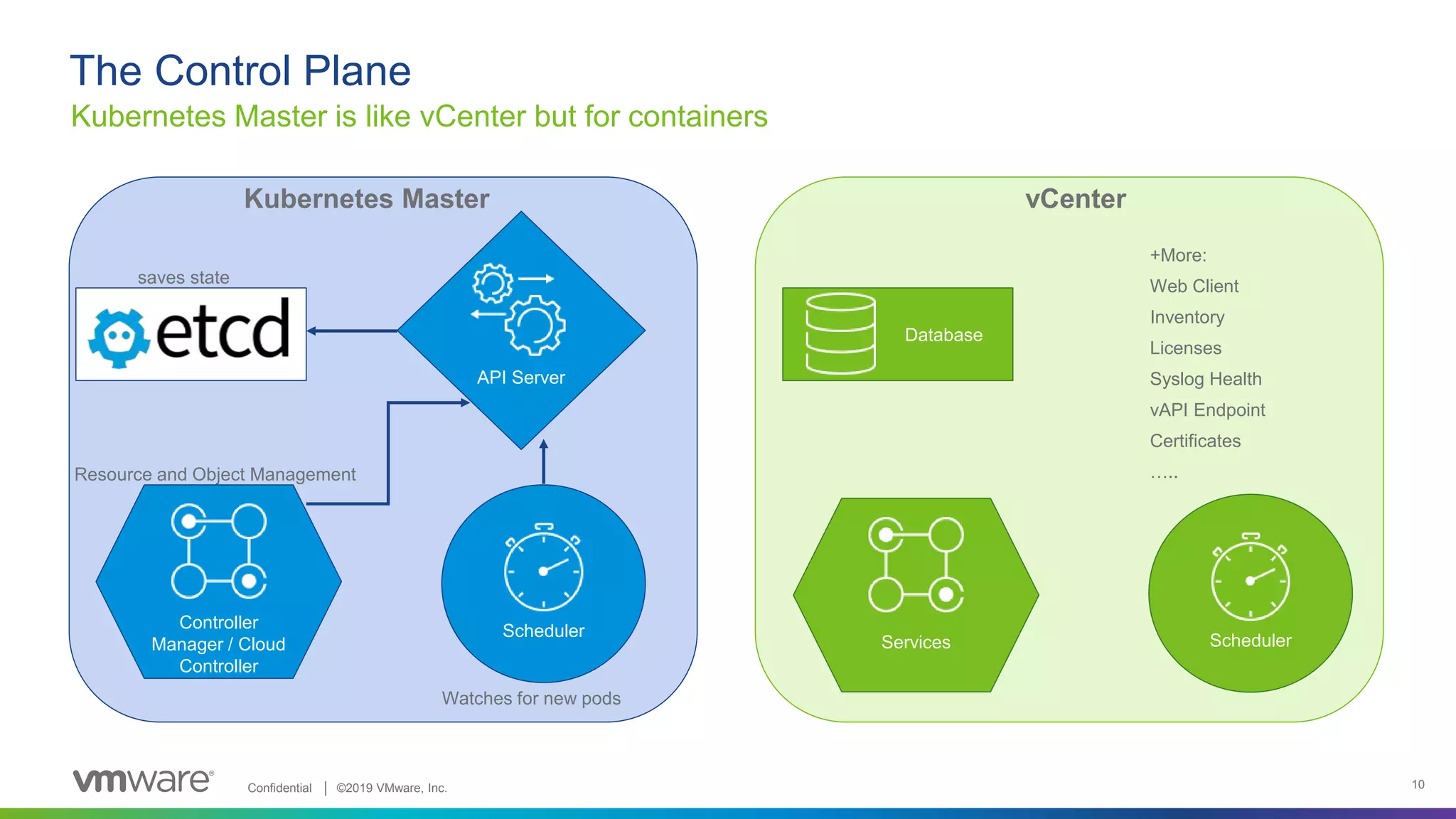

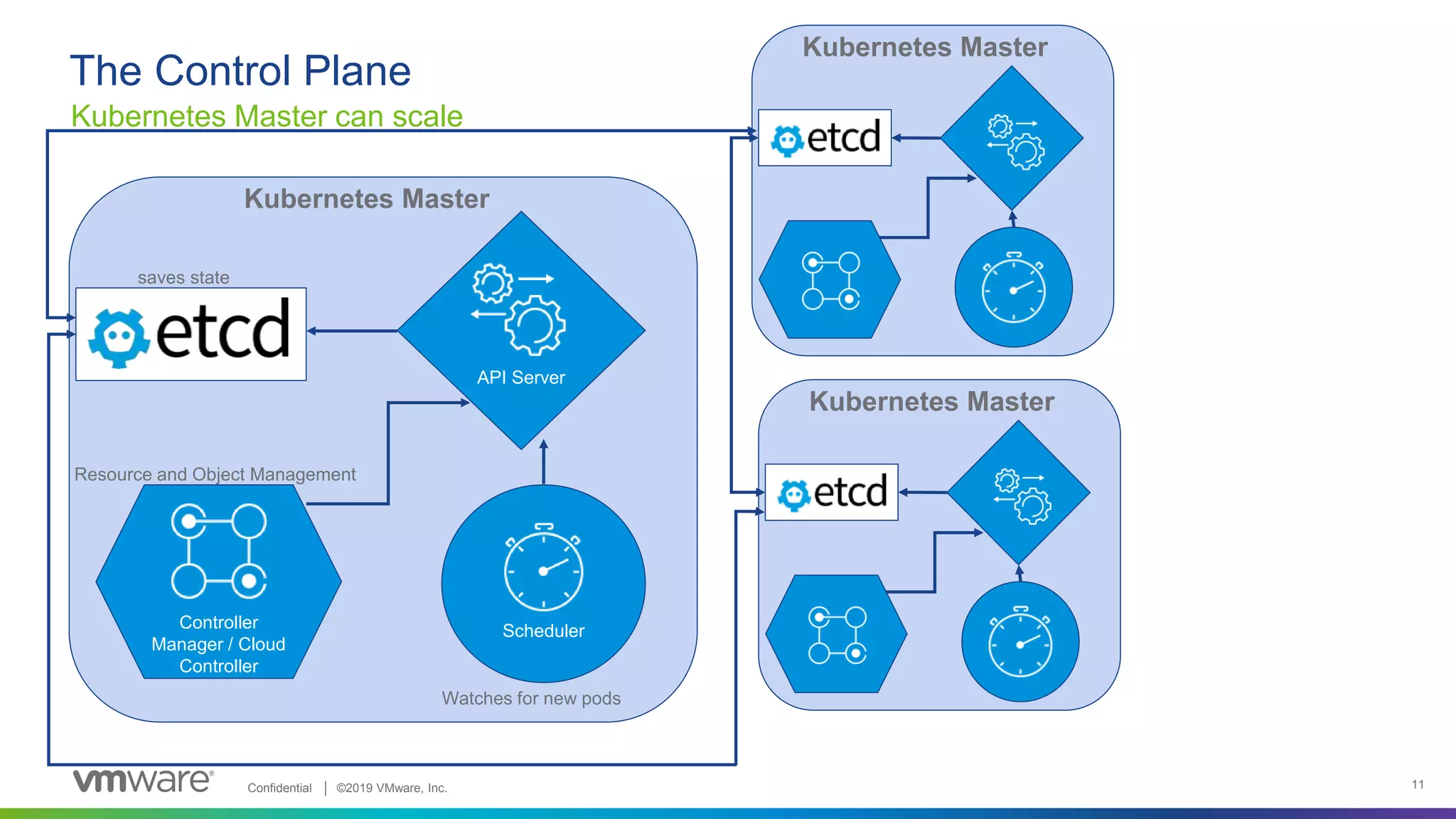

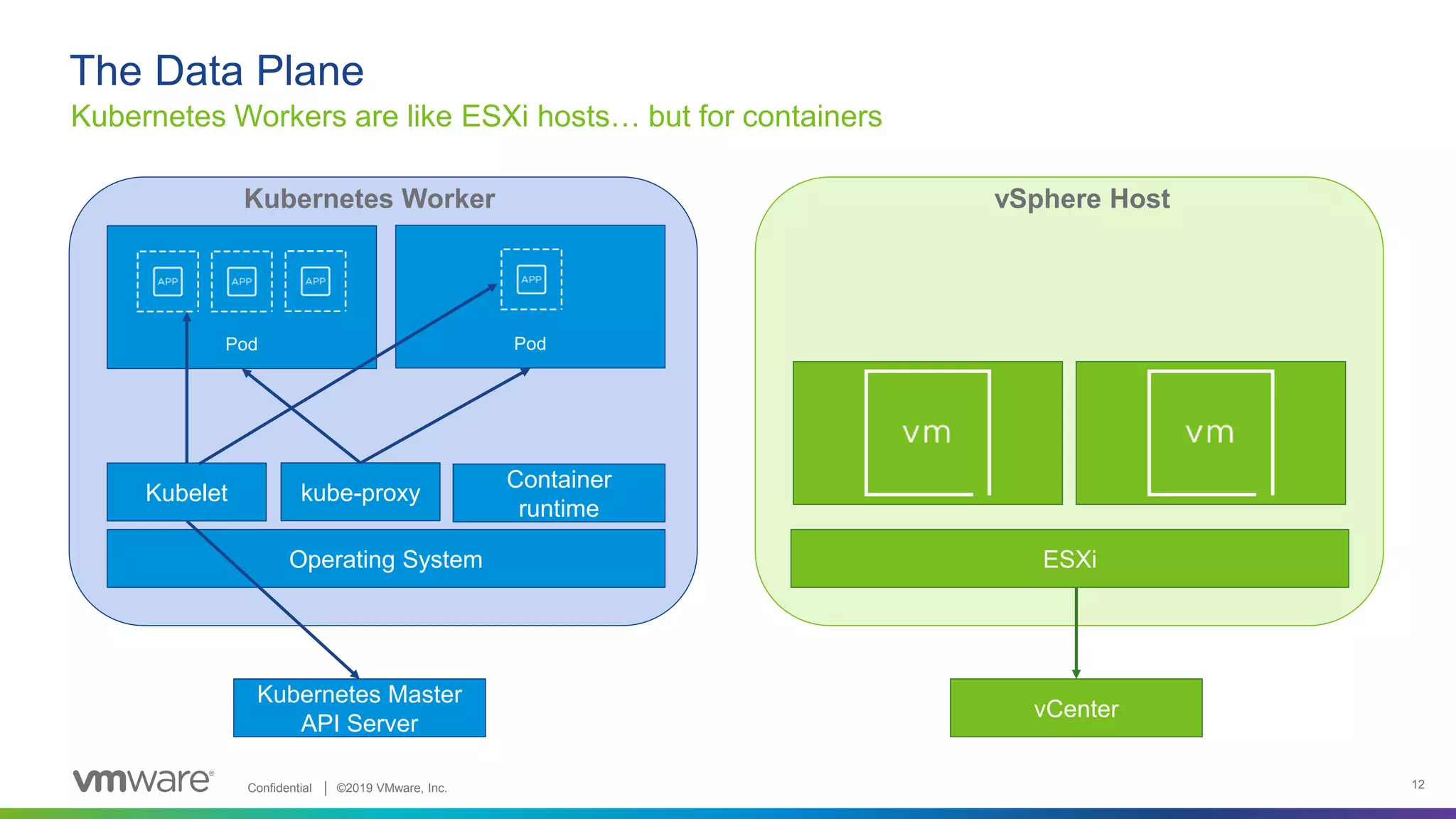

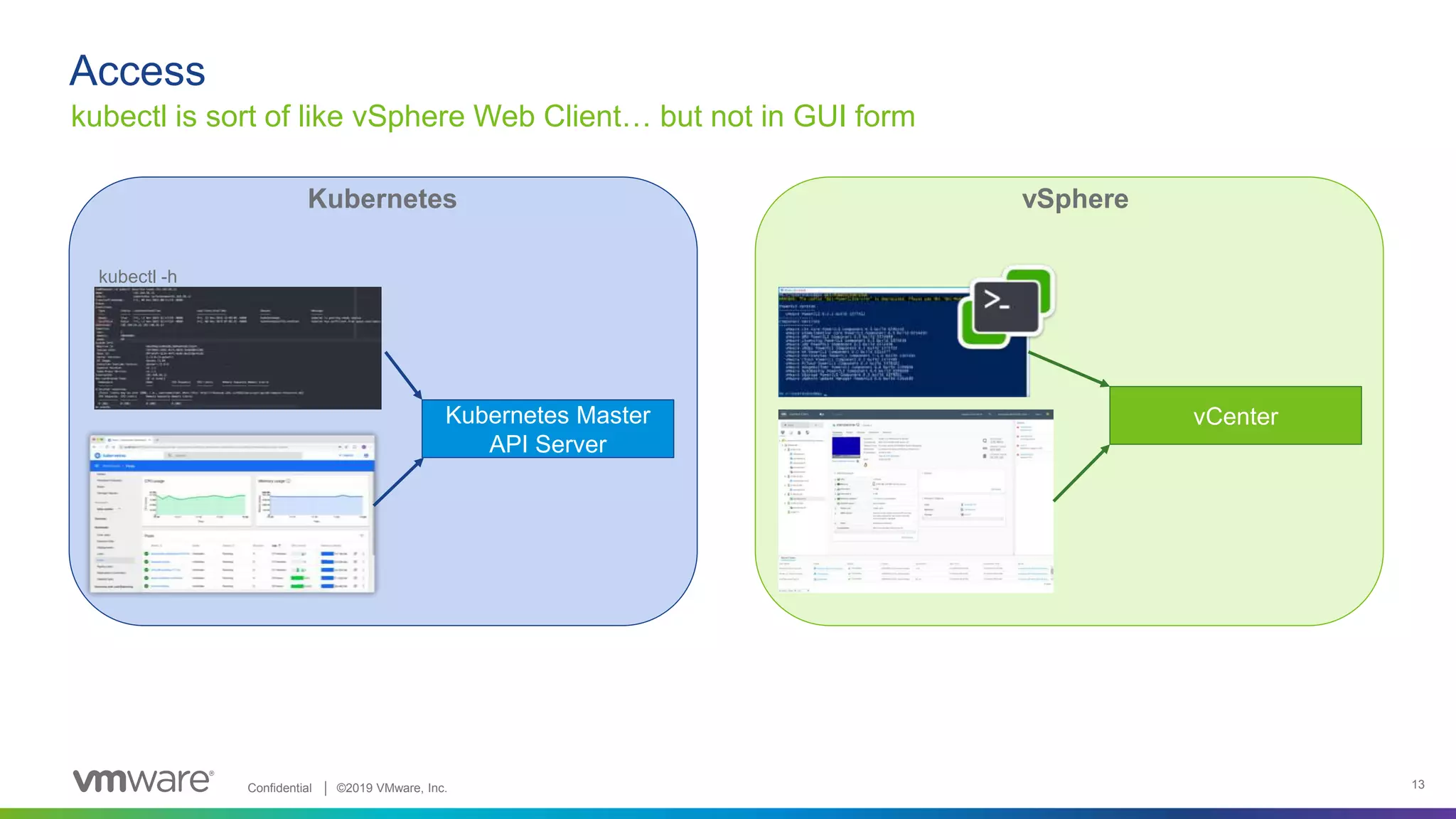

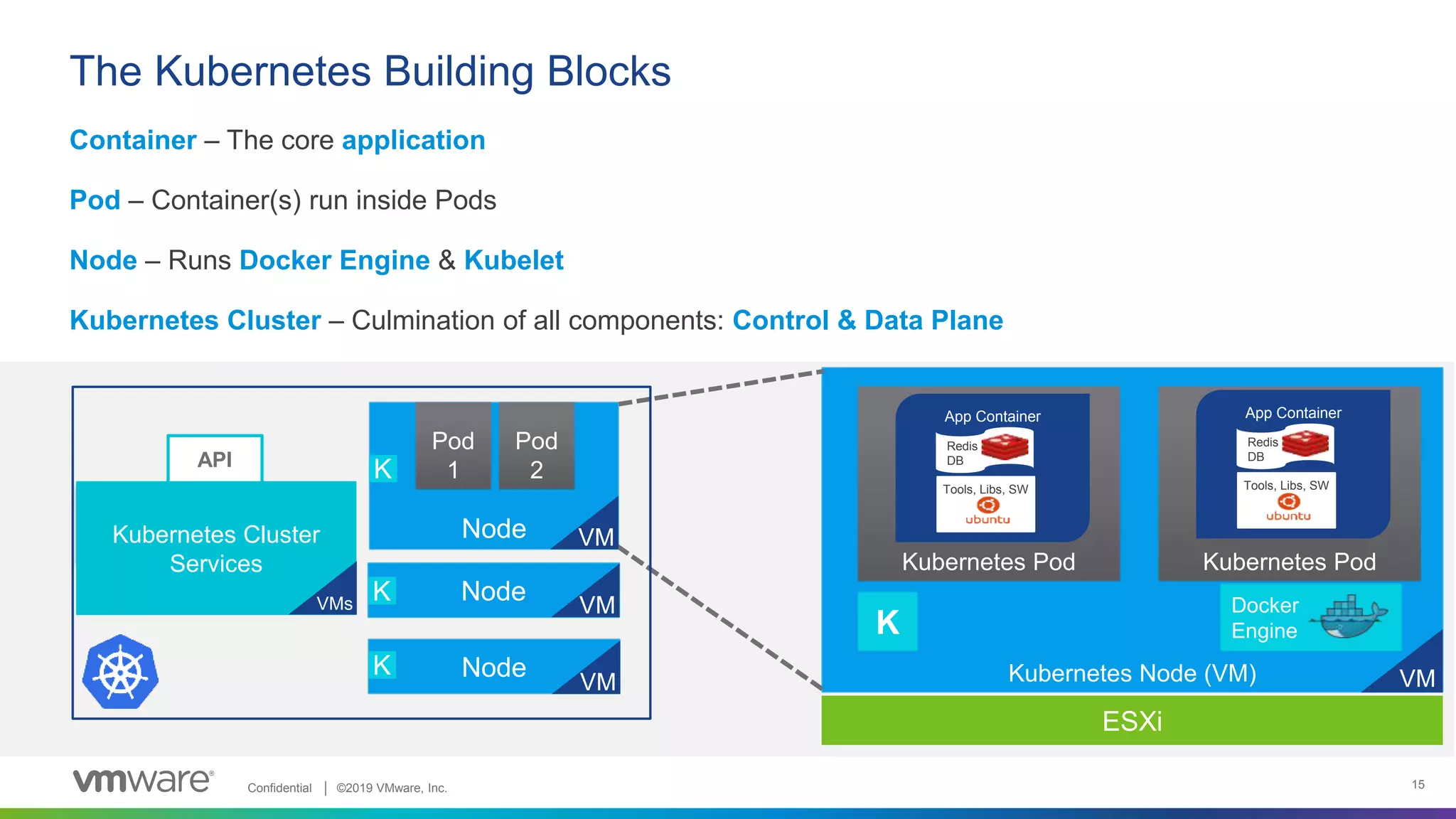

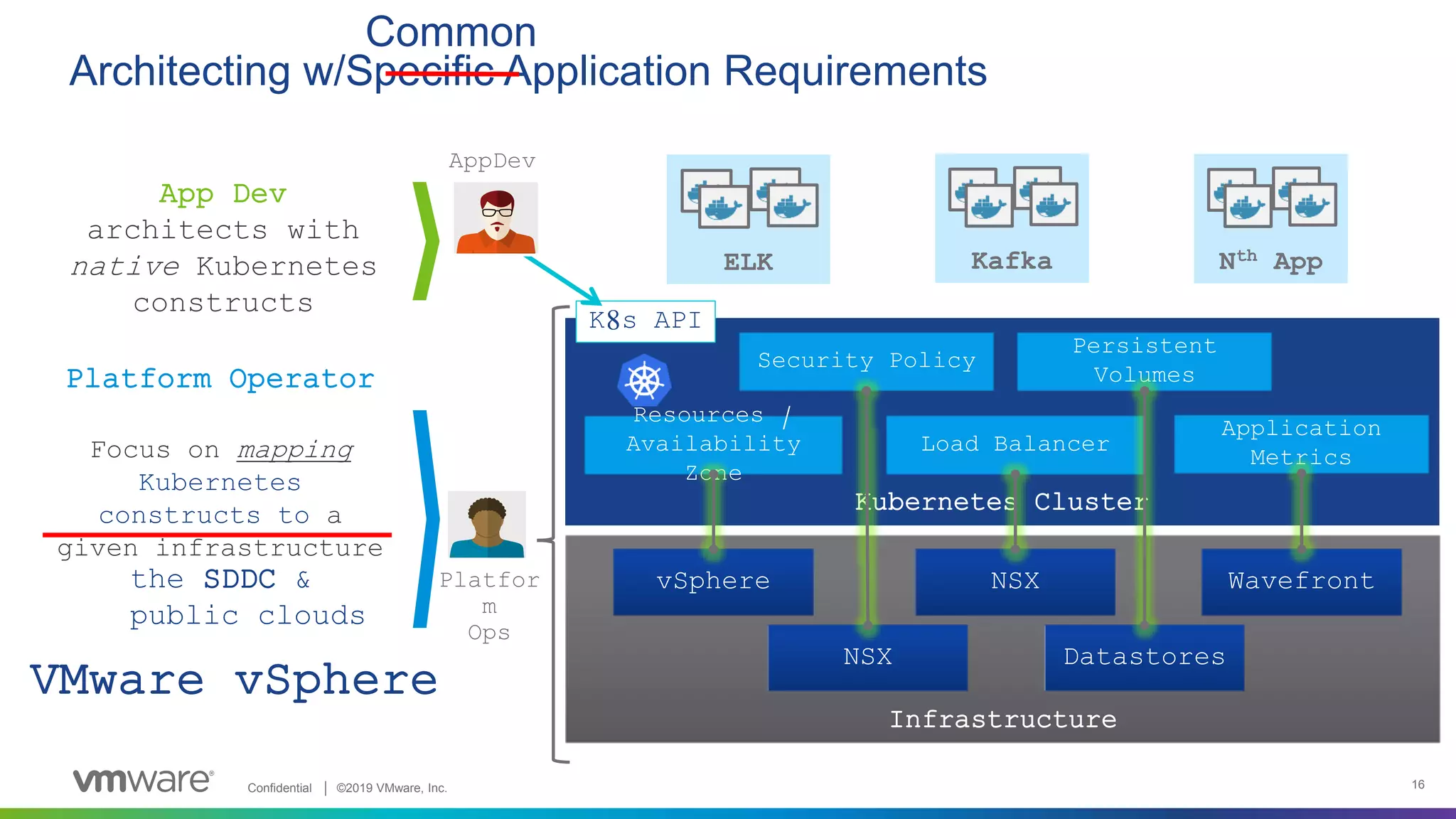

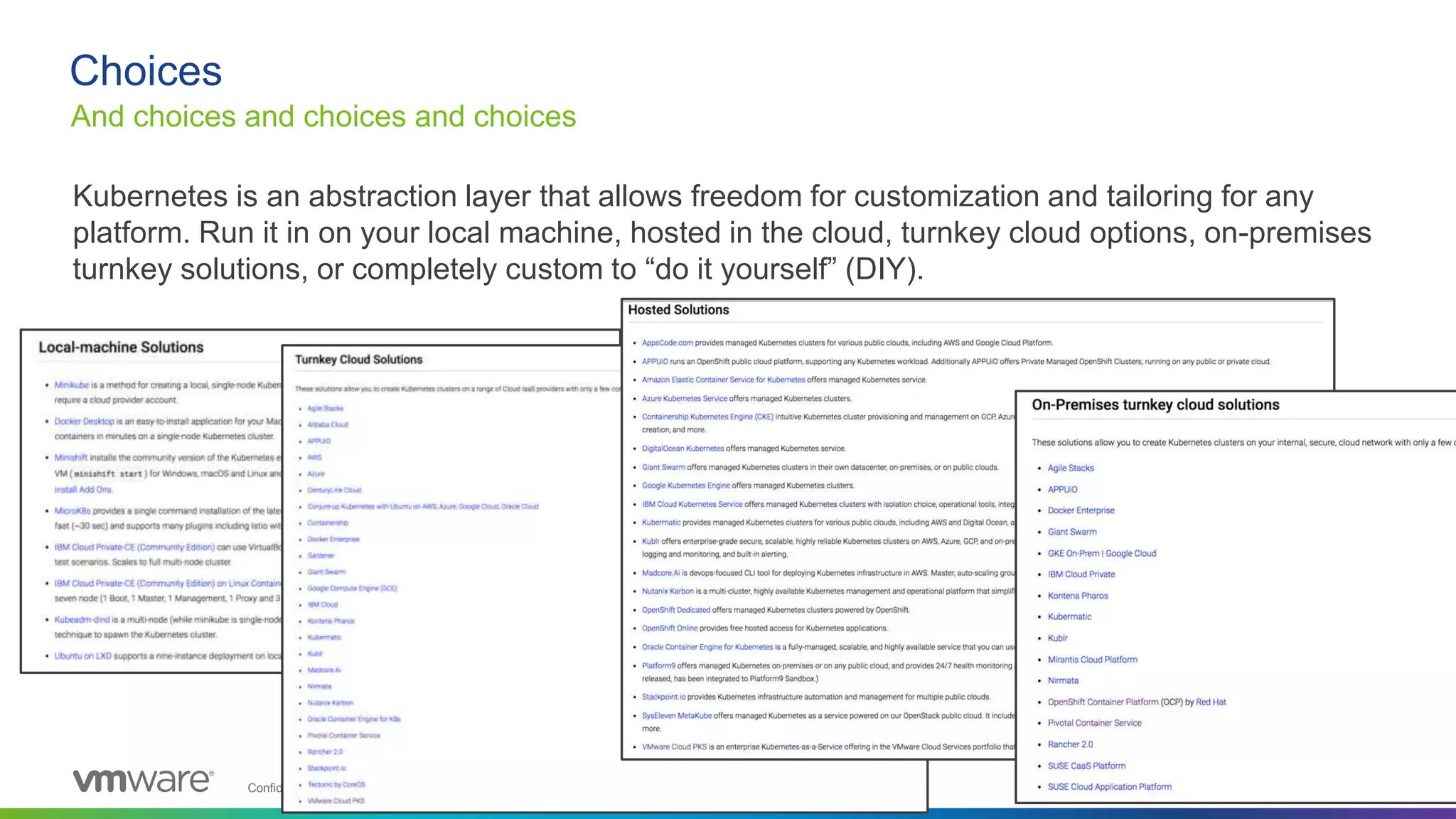

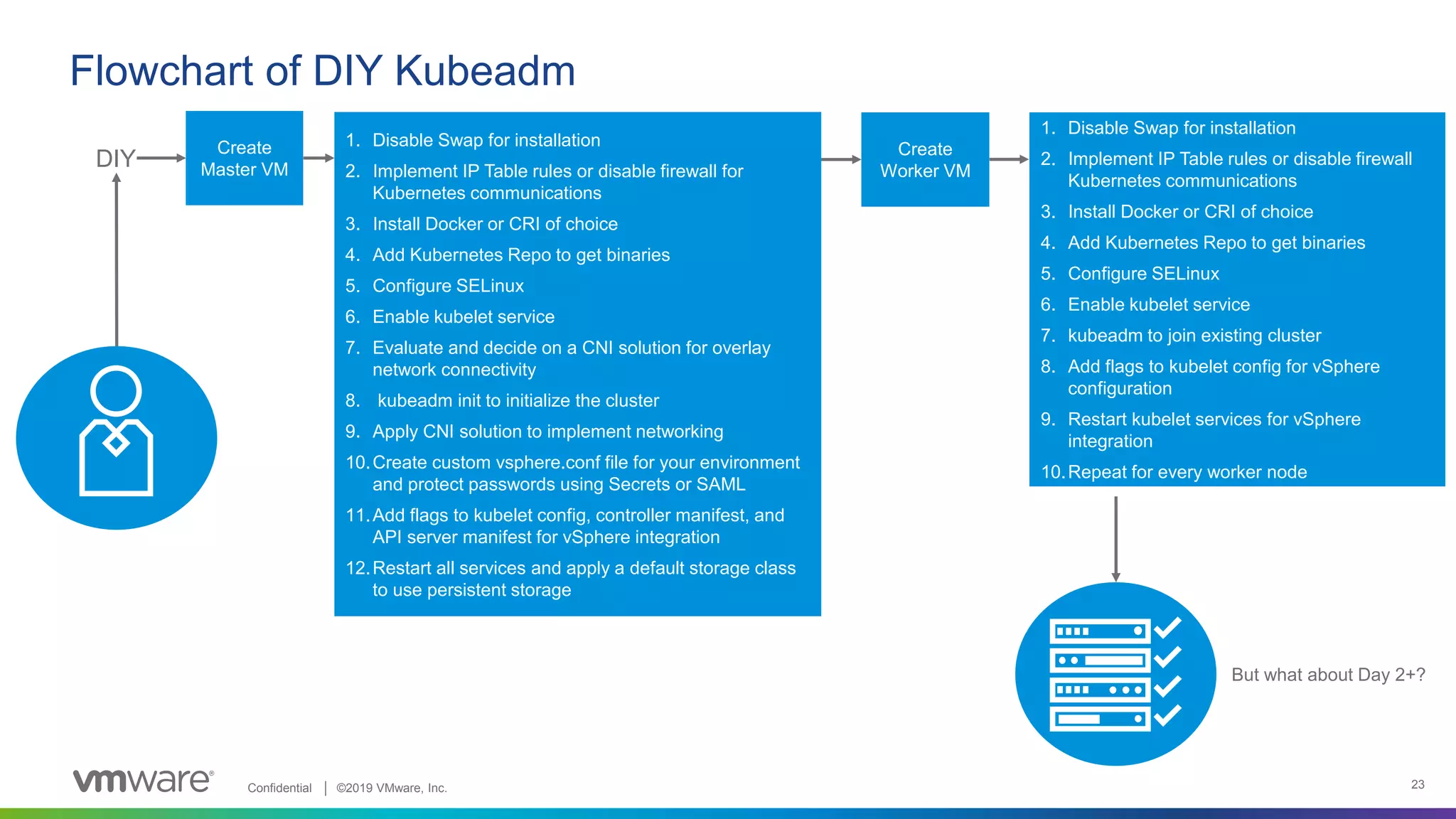

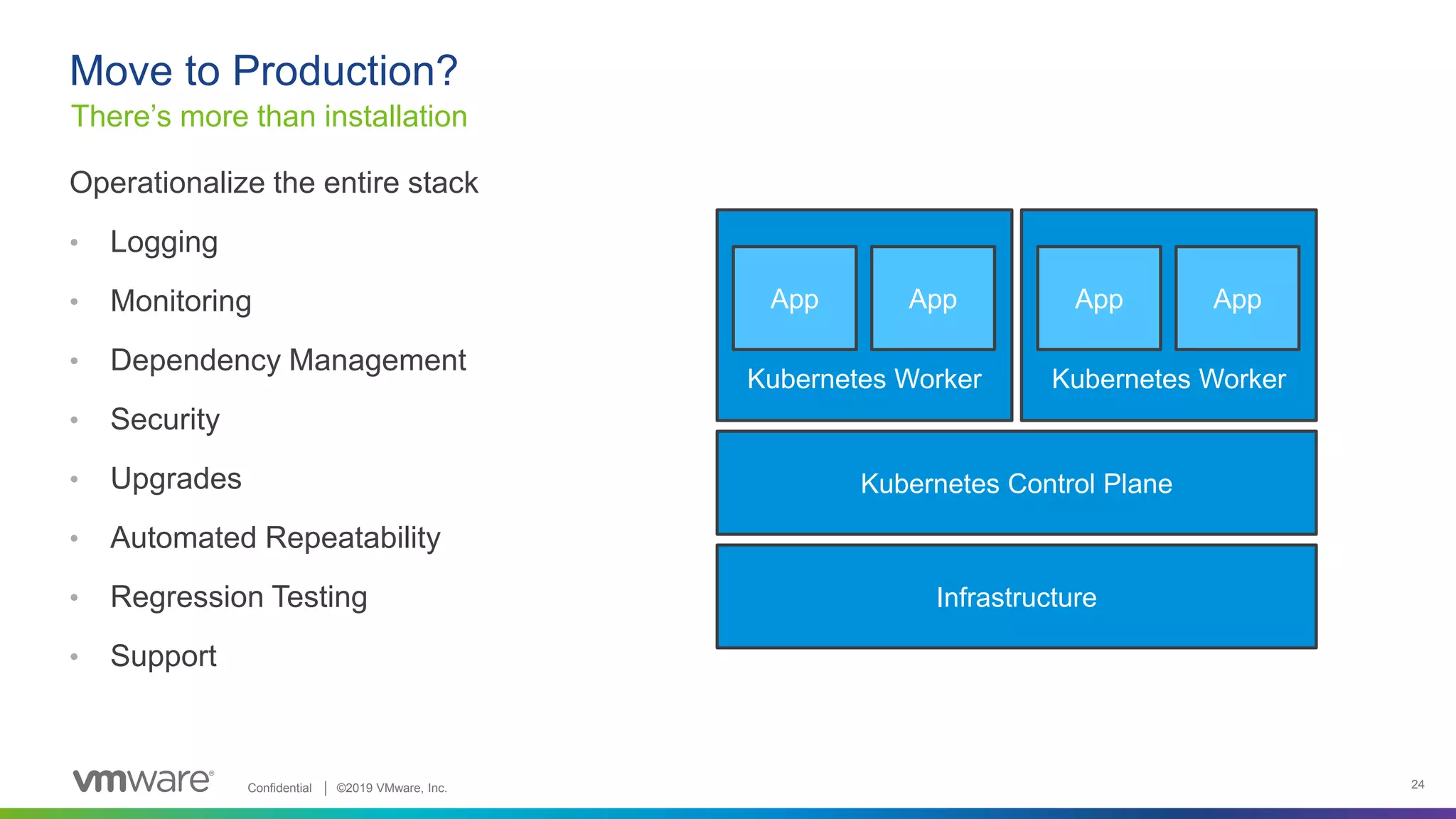

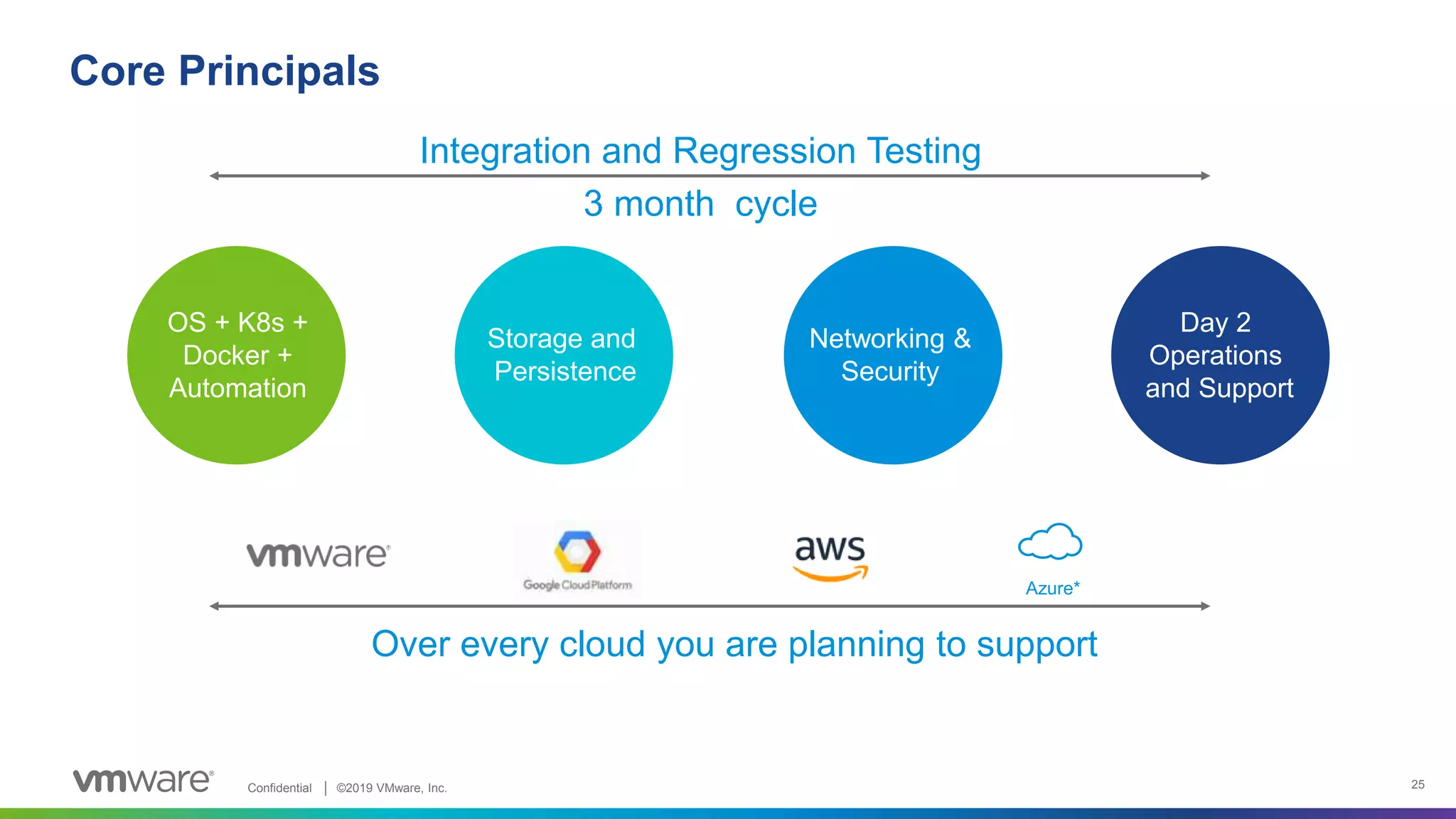

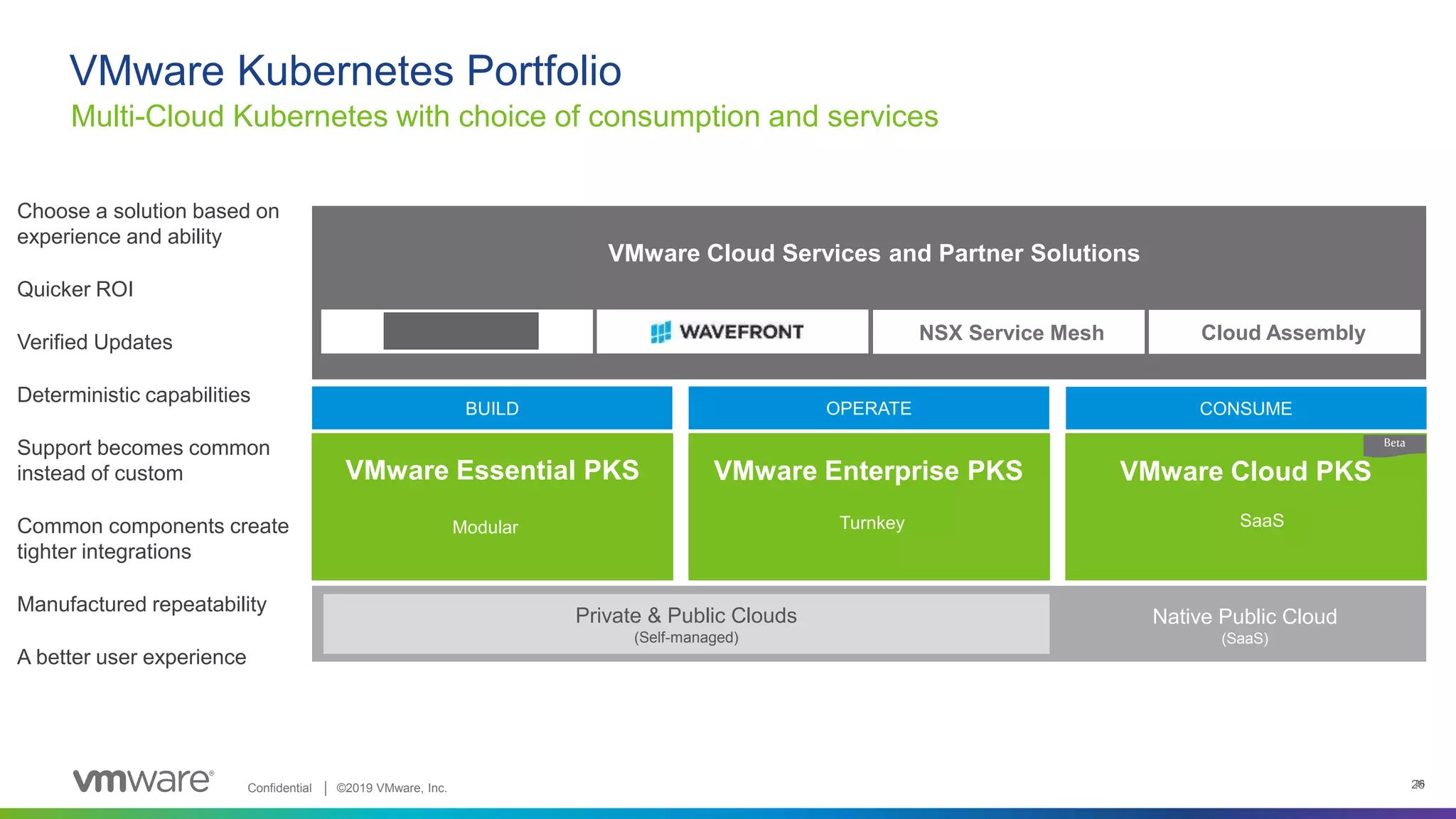

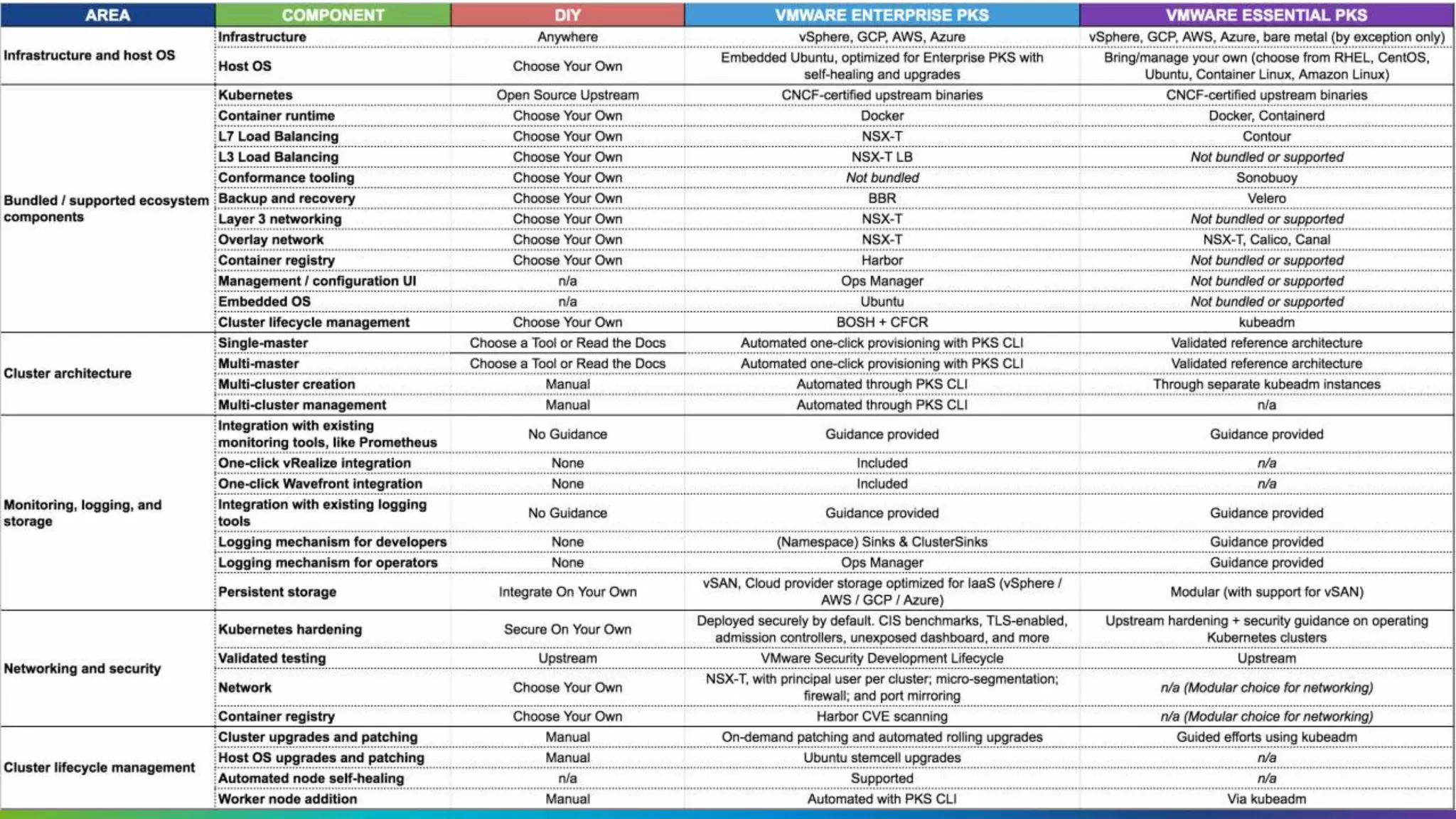

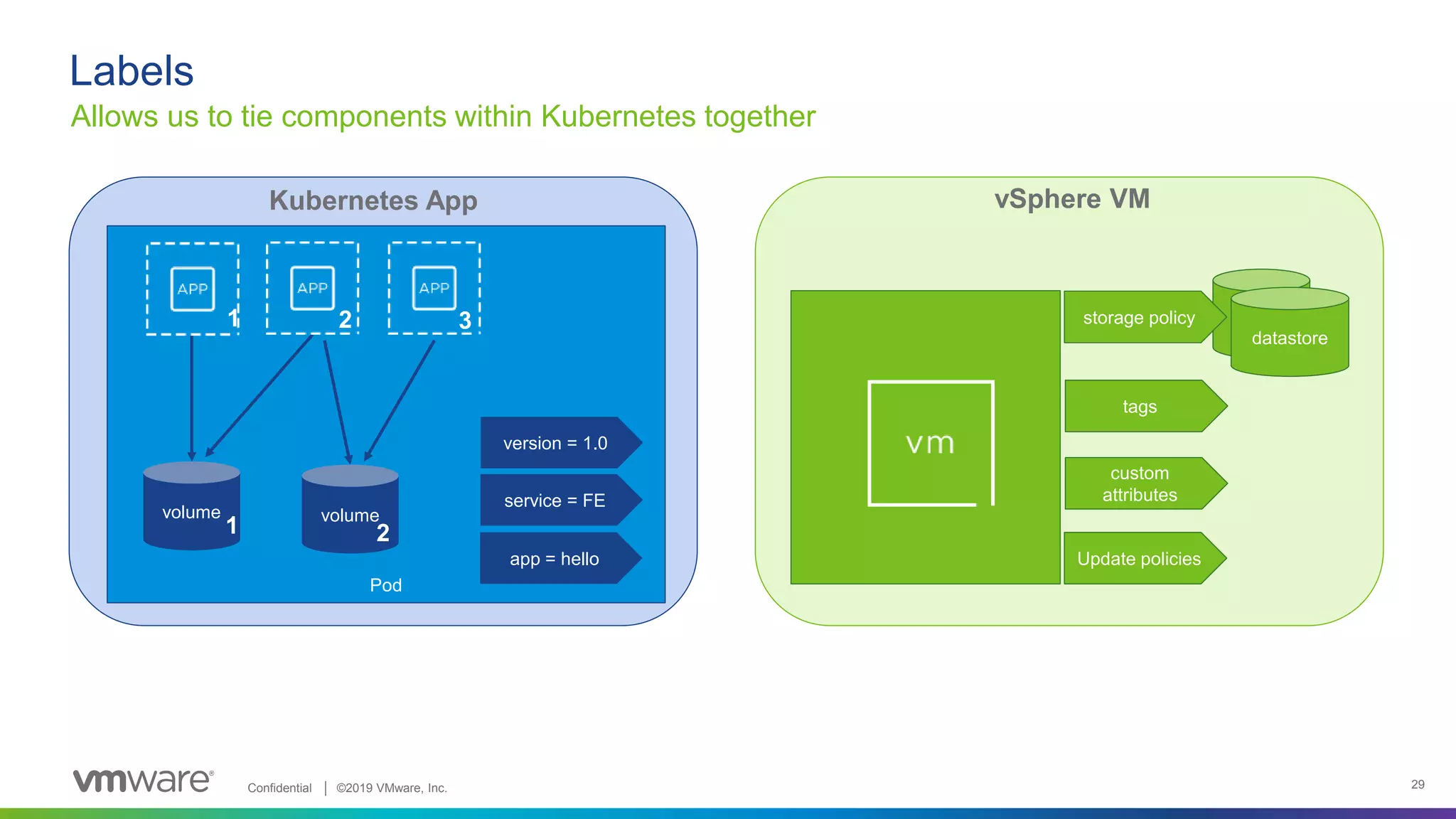

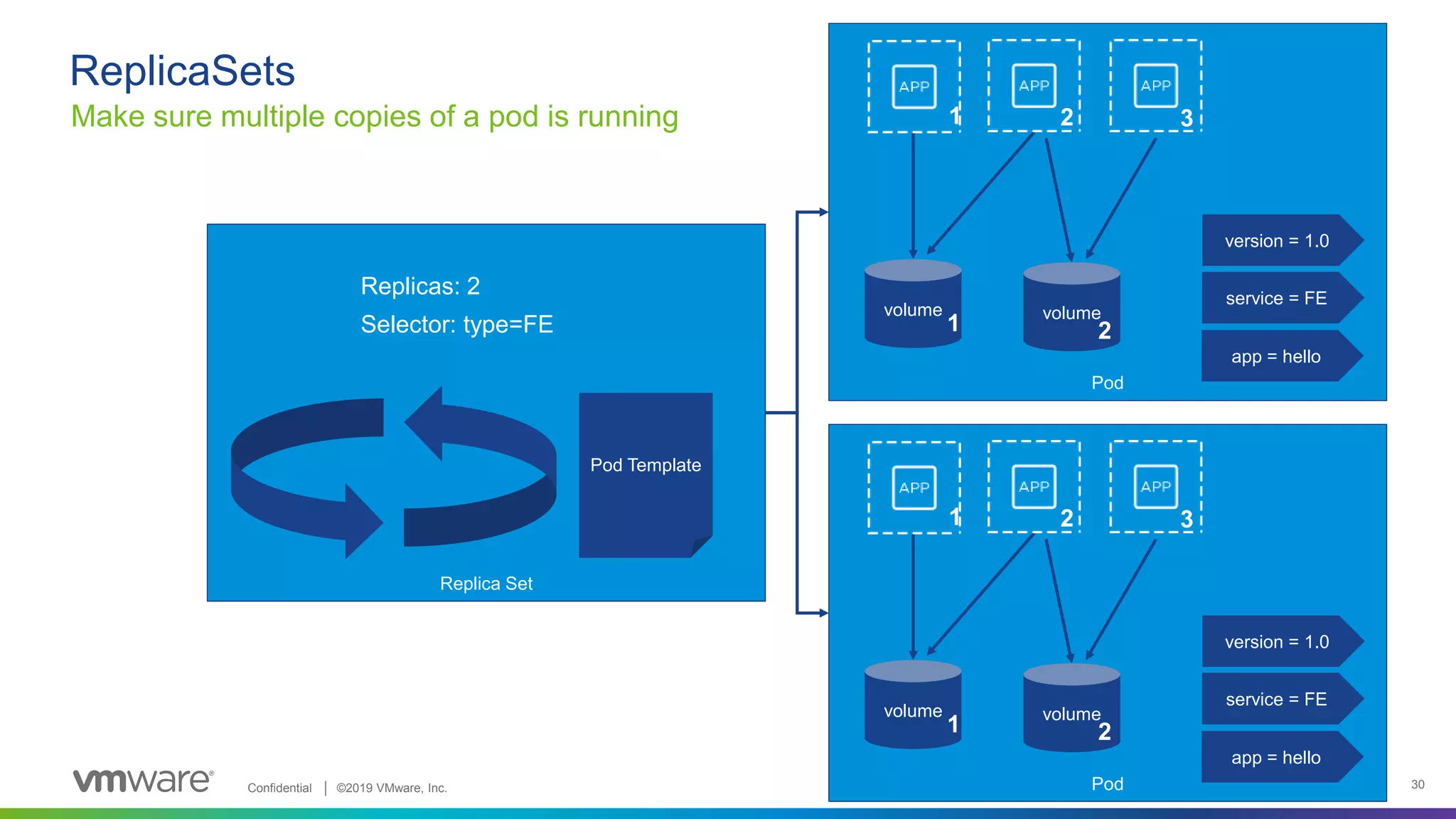

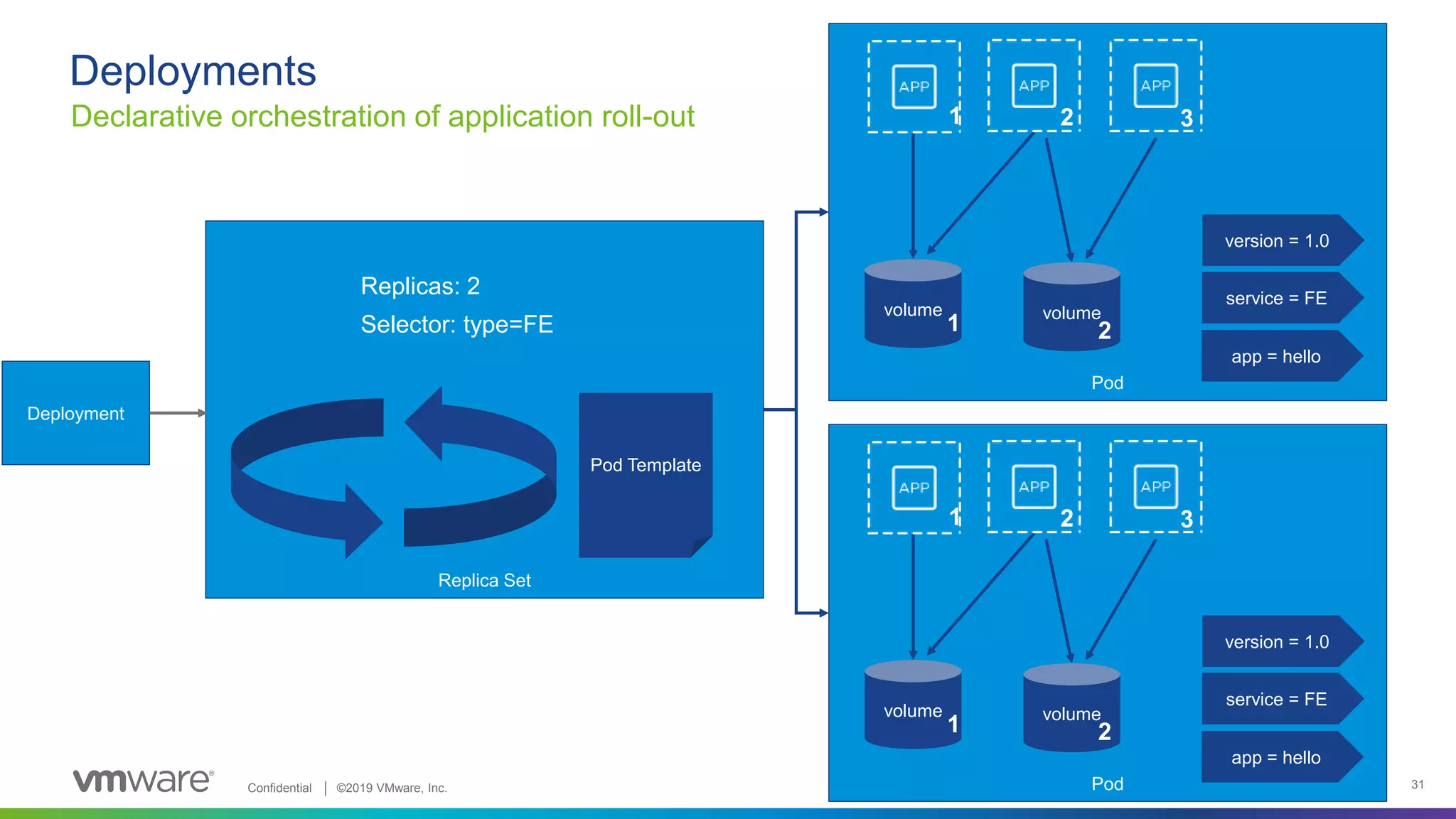

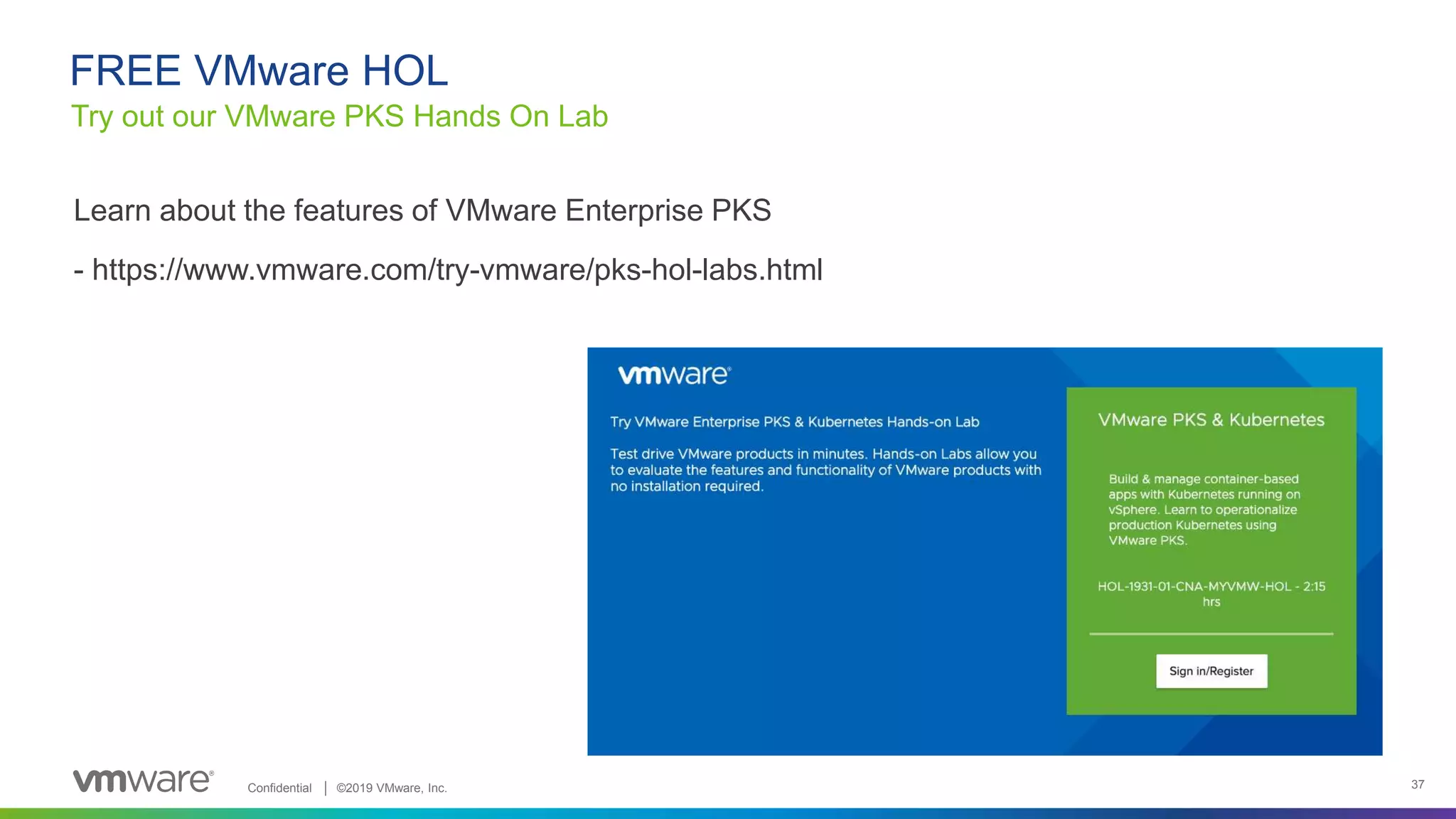

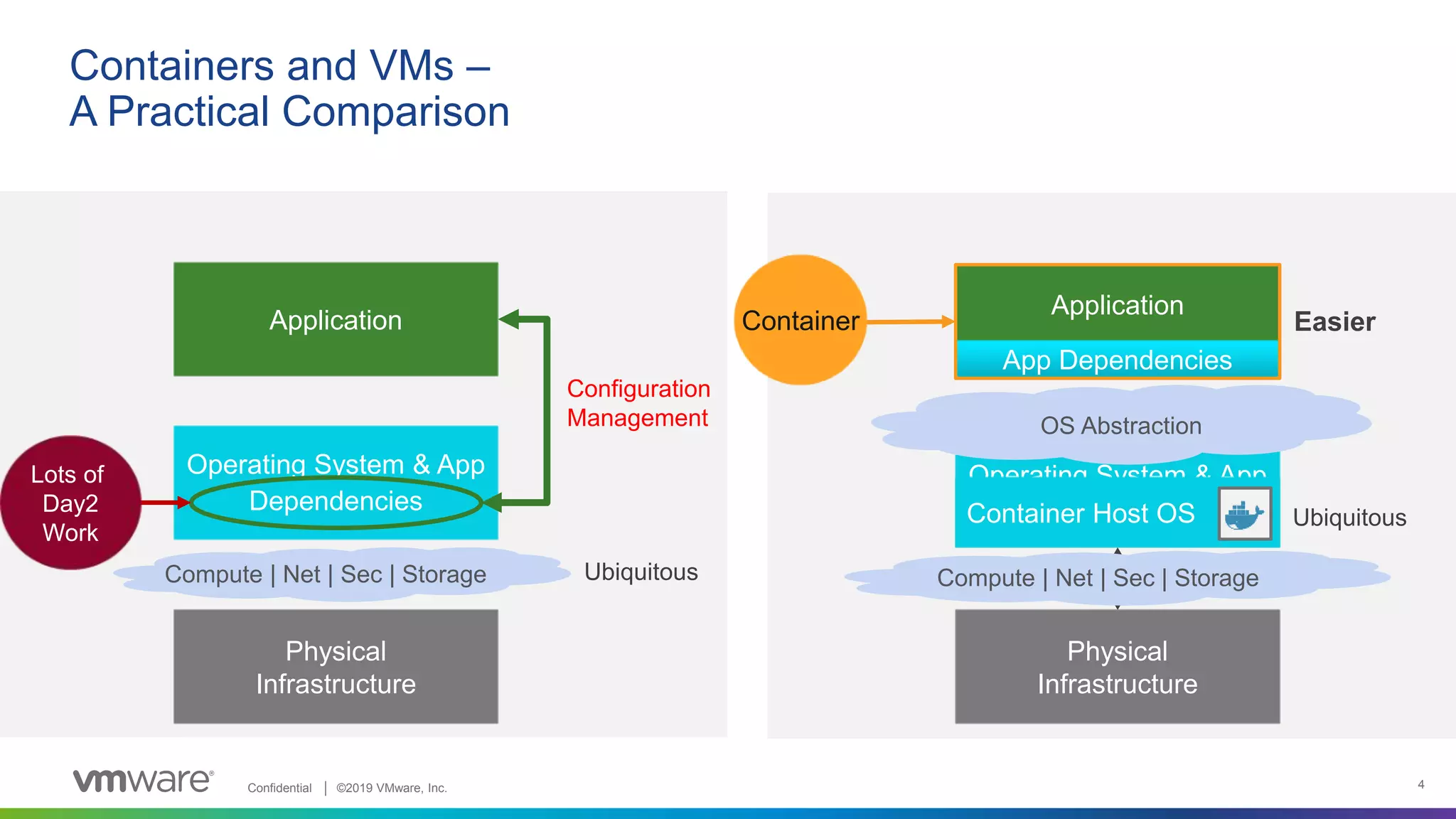

This document gives an overview of Kubernetes and compares it with VMware technologies. It opens with an analogy: containers are to operating systems what virtual machines are to server hardware. It then describes how Kubernetes orchestrates containers across multiple nodes once an application has been split into smaller services. The rest covers key Kubernetes concepts (pods, ReplicaSets, deployments, and services), maps them to VMware concepts such as vCenter and vSphere hosts, and discusses considerations for installing Kubernetes and operating it at scale.

![Slide 5: Anatomy of Building and Running a Container (Redis DB)](https://image.slidesharecdn.com/kubernetesfortheviadmin-190613173608/75/Kubernetes-for-the-VI-Admin-5-2048.jpg)

Slide 5: Anatomy of Building and Running a Container (Redis DB). Confidential │ ©2019 VMware, Inc.

- Dockerfile: `FROM: Ubuntu 14.04`, `RUN apt-get redis`, `EXPOSE 6379`, `CMD [“/user/sbin/redis..]`
- `#docker build`: packages the Redis DB together with its tools, libs, and software into a container image.
- `#docker push`: uploads the image to the Container Registry (the repo for container images).
- `#docker run redis_img1`: the Docker Engine, on a minimal Linux "container host" VM, starts the running container from the image.
- Packaging the app with its dependencies = portability and consistency.
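
The Dockerfile on the slide is shorthand rather than valid syntax. A buildable approximation might look like the sketch below. The `redis-server` package name and the bare `redis-server` command are assumptions, since the slide truncates the real path, and Ubuntu 14.04 is end-of-life, so its package mirrors may not remain reachable.

```dockerfile
# Sketch only: a buildable approximation of the slide's Dockerfile.
# Assumptions (not on the slide): the redis-server package name and
# the redis-server command resolved via PATH.
FROM ubuntu:14.04

# Refresh the package index, then install Redis from the distro repository.
RUN apt-get update && apt-get install -y redis-server

# Document the port Redis listens on by default.
EXPOSE 6379

# Start Redis as the container's main process, without a config file.
CMD ["redis-server"]
```

With this file in the current directory, `docker build -t redis_img1 .` builds the image and `docker run -d -p 6379:6379 redis_img1` starts a container from it. Before `docker push`, the image also needs a tag that names the target registry and repository.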