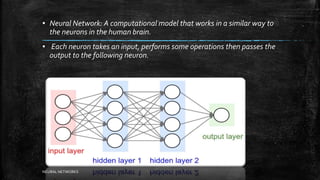

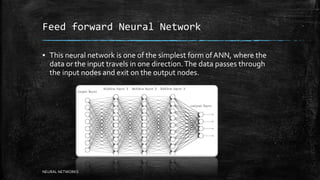

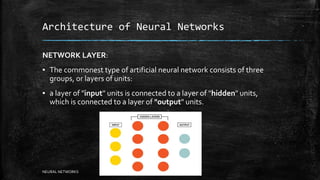

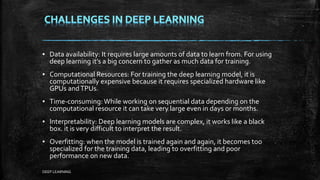

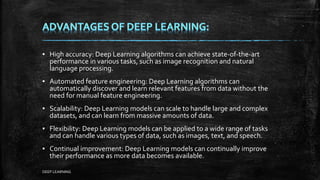

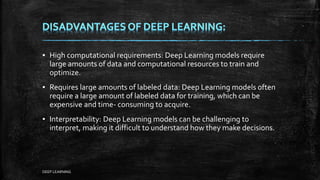

Neural networks are computational models, loosely inspired by the human brain, that are used for machine learning. They consist of interconnected nodes that process information, and a learning algorithm adjusts the connections between nodes based on data. Typical applications include pattern recognition and classification. The first neural networks were developed in the 1940s and 1950s; modern networks stack many layers of nodes, an approach called deep learning, which has led to state-of-the-art performance in computer vision, natural language processing, and other domains. Deep learning requires large amounts of data and computational power, but it can automatically discover relevant features from the data.
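
To make the idea of a node and a learning algorithm concrete, here is a minimal sketch of a single artificial node (a perceptron, in the style of the 1950s models) learning the logical AND function. It is an illustration only, not a deep network; the names `step`, `train_perceptron`, `predict`, and `AND_SAMPLES`, along with the integer learning rate and epoch count, are assumptions chosen for this example.

```python
def step(x):
    """Threshold activation: the node fires (1) when its input is non-negative."""
    return 1 if x >= 0 else 0


def predict(weights, bias, inputs):
    """Weighted sum of the inputs plus a bias, passed through the activation."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return step(total)


def train_perceptron(samples, epochs=20, lr=1):
    """Perceptron learning rule: nudge the weights whenever a prediction is wrong."""
    weights = [0, 0]
    bias = 0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            # When error is 0 the weights stay the same; otherwise move toward the target.
            weights = [w + lr * error * x for w, x in zip(weights, inputs)]
            bias += lr * error
    return weights, bias


# Training data for logical AND: (inputs, expected output).
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(AND_SAMPLES)
predictions = [predict(weights, bias, inputs) for inputs, _ in AND_SAMPLES]
print(predictions)  # → [0, 0, 0, 1]
```

A single node like this can only learn patterns that a straight line can separate. Deep networks combine many such nodes in layers, usually with smooth activations and gradient-based training, which is what lets them discover more complex features.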