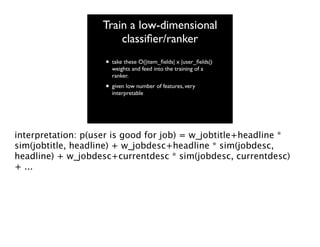

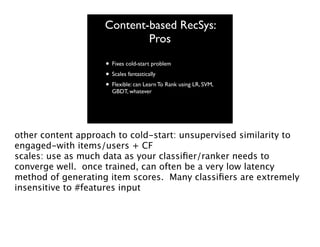

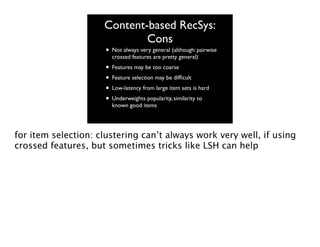

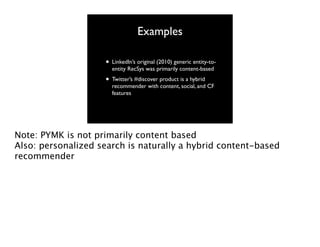

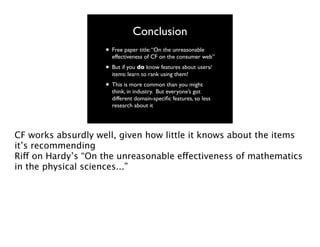

The document discusses content-based recommender systems, emphasizing collaborative filtering and user/item feature modeling. It covers the advantages and disadvantages of different recommendation approaches, including practical examples from LinkedIn and Twitter. The conclusion highlights the effectiveness of collaborative filtering while noting the importance of leveraging specific user and item features in industry applications.

![Aside: “users” don’t

have to be users

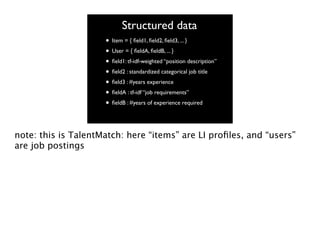

• At LinkedIn, the Recommender Systems

team built a general-purpose entity-toentity RecSys: Product [user, item]

•

•

•

•

TalentMatch [job posting, user-profile]

GroupsYouMayLike [user, group]

{Jobs for your group} [group, job-posting]

AdsYouMayBeInterestedIn [user, ad]

Anmol Bhasin, Monica Rogati (now VP of Data at Jawbone), and

myself built...

So how do recommender systems work?

next page: “an artists depiction of collaborative filtering”](https://image.slidesharecdn.com/jakemannixmlconf2013-131119173715-phpapp01/85/Jake-Mannix-MLconf-2013-4-320.jpg)