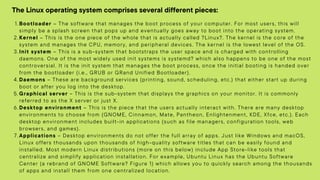

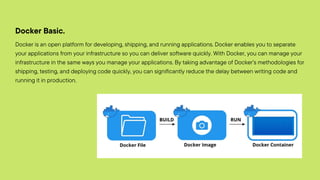

Unix, together with Unix-like systems such as Linux, is a family of operating systems that support multitasking and multiple users. A Unix system consists of a kernel, a shell, and programs. Unix is widely used on servers and desktops, and is embedded in other operating systems.

Docker is a tool for packaging applications into containers that can run on any host with a compatible container runtime, so the same application can be deployed easily and consistently from development to production. Docker uses a client-server architecture: the Docker client sends requests to the Docker daemon, which manages containers and images.
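The client-server split can be illustrated with a small toy model. This is only a sketch under stated assumptions, not Docker's actual API: the names `ToyDaemon`, `ToyClient`, the `handle` method, and the dictionary request format are all hypothetical and exist only to show which side owns the state. The daemon keeps every image and container, and the client does nothing but send requests and read responses.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class ToyDaemon:
    """Hypothetical stand-in for the Docker daemon: it owns all state."""

    images: set = field(default_factory=set)
    containers: dict = field(default_factory=dict)
    _ids: count = field(default_factory=lambda: count(1))

    def handle(self, request: dict) -> dict:
        """Dispatch one client request and return a response dictionary."""
        action = request["action"]
        if action == "pull":
            # Record the image as locally available.
            self.images.add(request["image"])
            return {"ok": True, "image": request["image"]}
        if action == "run":
            image = request["image"]
            # Simplification: the real `docker run` pulls a missing image,
            # while this toy model reports an error instead.
            if image not in self.images:
                return {"ok": False, "error": f"image not found: {image}"}
            container_id = f"c{next(self._ids)}"
            self.containers[container_id] = {"image": image, "status": "running"}
            return {"ok": True, "id": container_id}
        if action == "ps":
            # Return a copy so callers cannot mutate daemon state directly.
            return {"ok": True, "containers": dict(self.containers)}
        return {"ok": False, "error": f"unknown action: {action}"}


class ToyClient:
    """Hypothetical stand-in for the Docker client: stateless, request-only."""

    def __init__(self, daemon: ToyDaemon) -> None:
        self.daemon = daemon

    def pull(self, image: str) -> dict:
        return self.daemon.handle({"action": "pull", "image": image})

    def run(self, image: str) -> dict:
        return self.daemon.handle({"action": "run", "image": image})

    def ps(self) -> dict:
        return self.daemon.handle({"action": "ps"})


if __name__ == "__main__":
    client = ToyClient(ToyDaemon())
    print(client.pull("nginx"))
    print(client.run("nginx"))
    print(client.ps())
```

In a real installation the client and daemon are separate processes and the requests travel over a socket rather than a direct method call; the toy model collapses that transport so the division of responsibilities stays visible.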