The document provides an exploration of the GridGain Nebula, focusing on test automation challenges encountered while using its cloud-native control center/dashboard. It outlines the architecture of the test framework, initial login tests, and additional test scenarios, along with observations on element location and testing strategies. Key findings highlight the importance of consistent element identification for effective test automation in this environment.

![18

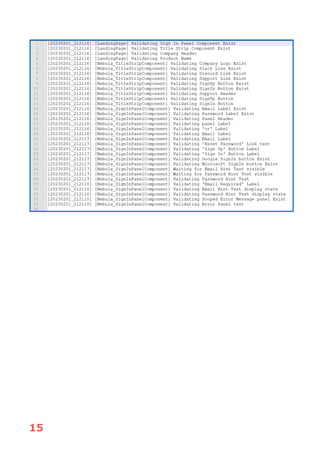

NEBULA_MAT_UI_25000.5, NEBULA_MAT_UI_25000.6

In the first of these tests, NEBULA_MAT_UI_25000.5, the target assertion is that

the Profile page, accessed via the User Menu on the far right of the Dashboard

title strip, is as expected.

In this test, we pass data to the step (Line 77) where we actually do the

assertions regarding the data on the Profile page. The expected value of the

“phone” element is specified by means of the mnemonic “[blank]”. In the step

definition, code behind, we need to define a method carrying a special attribute,

that is recognised at runtime which specifies this mnemonic will be used to

signal an empty string in Data Tables. This special method is shown below:

For certain Country/Region selections, GDPR-related input is requested on the

Profile page. The Country/Region for the defined login credentials is specified in

the table by the data item in column “country-region”. The two possible views

of the Profile panel are shown below:](https://image.slidesharecdn.com/intothenebulaarticle-240119150126-786c8ada/85/IntoTheNebulaArticle-pdf-20-320.jpg)
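Returning to the “[blank]” mnemonic: the framework-specific attribute and method are not reproduced in this text, so the following is only a minimal, framework-neutral sketch of the idea in plain Python. It assumes a Data Table arrives as a list of row dictionaries; the function names `replace_blank` and `mismatches`, and the sample row values, are hypothetical and are not taken from the test framework described here.

```python
# Mnemonic written in the feature file's Data Table to stand for an empty string.
BLANK = "[blank]"


def replace_blank(rows, mnemonic=BLANK):
    """Return a copy of the rows in which any cell equal to the mnemonic becomes ''."""
    return [
        {key: ("" if value == mnemonic else value) for key, value in row.items()}
        for row in rows
    ]


def mismatches(expected_row, actual_row):
    """Return the keys whose expected value differs from the value actually read."""
    return [key for key, value in expected_row.items() if actual_row.get(key) != value]


# Hypothetical Data Table row, as it might be passed to the assertion step.
table = [{"phone": "[blank]", "country-region": "United Kingdom"}]
expected = replace_blank(table)[0]

# Hypothetical values read back from the Profile page.
actual = {"phone": "", "country-region": "United Kingdom"}

print(mismatches(expected, actual))
```

The conversion happens once, before any assertion runs, so the assertion code compares plain strings and never needs to know about the mnemonic. In a BDD framework the same substitution is normally declared through the framework's own table-conversion hook rather than called by hand.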