The document discusses the use of the Testim tool for test automation in user acceptance testing (UAT) settings, highlighting its features and benefits compared to historical record-playback (r-p) approaches. It details the author's positive experience in developing tests for a banking web application, emphasizing that Testim enhances validation processes for non-technical users. The author recommends further training for business actors and suggests improvements to the tool's interface for better usability.


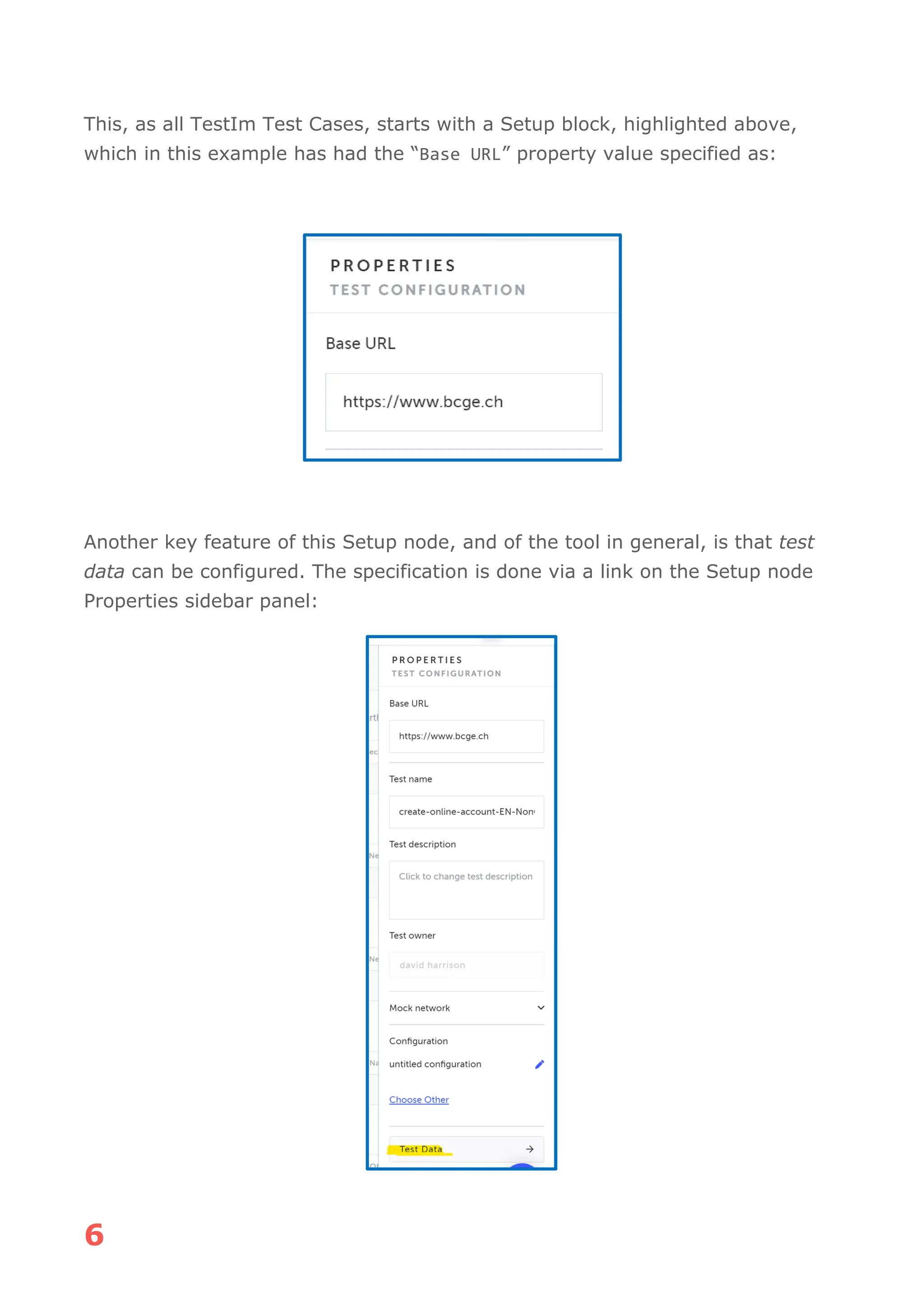

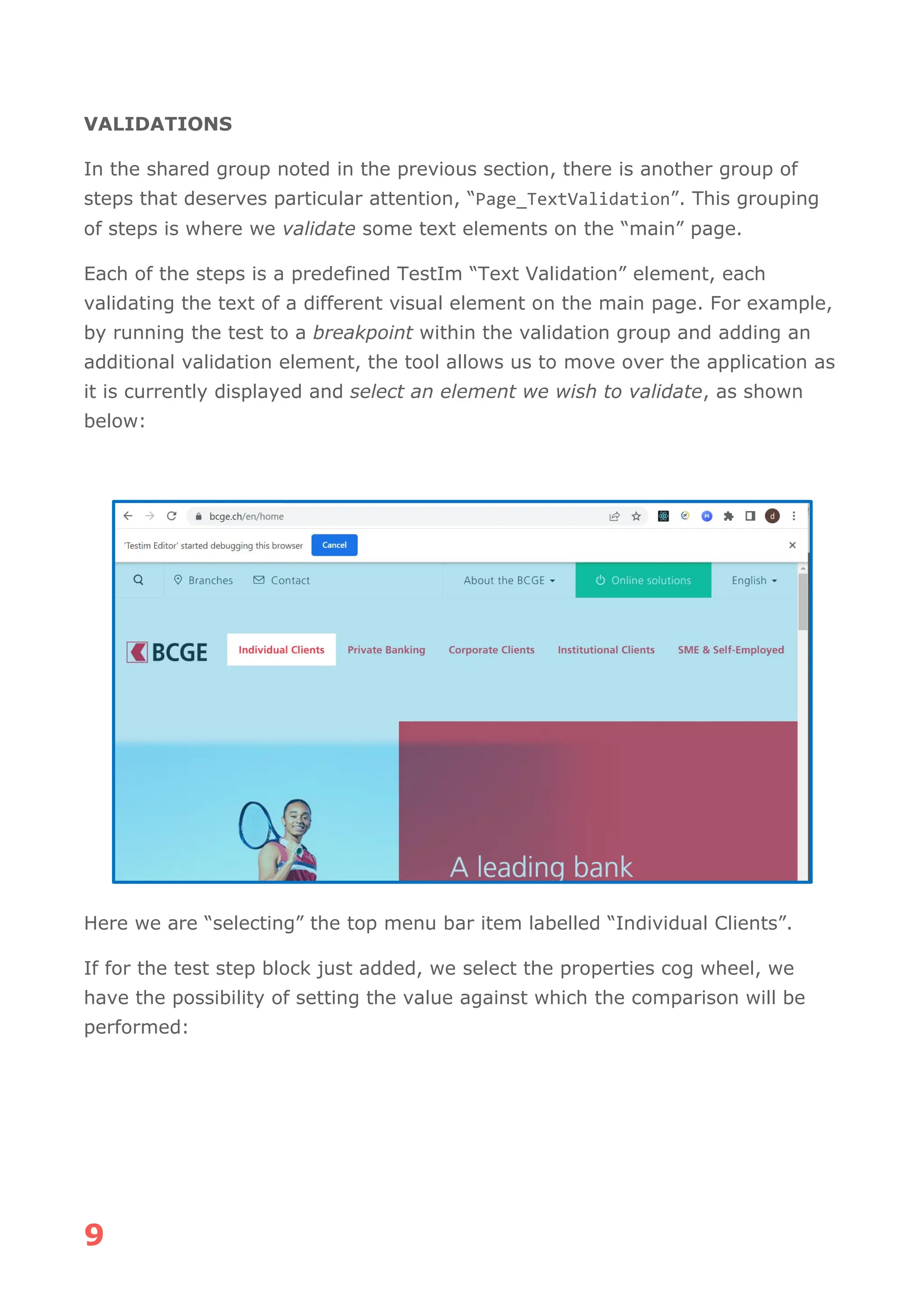

Quite a few aspects need to be validated here to ensure that the basic login process offered by the panel works as designed.

Each of the TextBoxes has associated Hint Text, which we need to ensure is displayed appropriately, as well as associated Error Text that is displayed if an entered value is detected as invalid. In addition, there are several links that need to be checked to ensure they lead to the correct pages. It is worth noting that the business actor has gone to some trouble to ensure that the page operates in this way, and we as test automators need to ensure that the features comply with the business expectations.

In addition, we might see the correct login process as a trivial base case that nevertheless needs to be tested.

If we were approaching the testing of this Login process using BDD/Java/Selenide, the Scenario might look something like this:

    @CONSOLE_REACT_UI_2000.4 @CONSOLE @SMOKE
    Scenario Outline: Check Login Panel operation
      Given [2000.4] I click the Password TextBox
      Then [2000.4] The Email Hint Text is displayed
      When [2000.4] I click the Email TextBox
      Then [2000.4] The Password Hint Text is displayed
      When [2000.4] I enter "<invalid-email>" in Email TextBox
      Then [2000.4] The Email Error Text "<email-error-text>" is displayed
      When [2000.4] I enter "<valid-email>" in Email TextBox
      Then [2000.4] The Email Error Text is not displayed
      When [2000.4] I click the Email TextBox
      Then [2000.4] The Password Hint Text is displayed
      When [2000.4] I click the Password Recovery Link
      Then [2000.4] The Password Recovery Page is displayed
      When [2000.4] I click the browser back button
      Then The Login page is displayed
      When [2000.4] I click the Sign Up Link
      Then [2000.4] The Sign Up Page is displayed
      When [2000.4] I click the browser back button
      Then The Login page is displayed
      When [2000.4] I click the Terms of Use Link
      Then [2000.4] The Terms of Use Page is displayed
      When [2000.4] I Click the close link
      Then The Login page is displayed
      When [2000.4] I click the Privacy Policy Link
      Then [2000.4] The Privacy Policy Page is displayed
      When [2000.4] I Click the close link
      Then The Login page is displayed
      When [2000.4] I click the Cookie Policy Link
      Then [2000.4] The Cookie Policy Page is displayed
      When [2000.4] I Click the close link
      Then The Login page is displayed

      Examples:
        | invalid-email      | email-error-text    | valid-email        |
        | jonsmith-yahoo.com | Enter a valid email | jonsmith@yahoo.com |
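
For readers less familiar with Scenario Outlines, the following is a minimal Java sketch of how the `<...>` placeholders in the steps above are filled from a row of the Examples table. A BDD runner such as Cucumber would perform this substitution itself, so this is an illustration only and not part of any real test harness. The names `OutlineExpansion`, `expandStep` and `exampleRow` are hypothetical and chosen purely for this example.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Illustrative only: mimics how a Scenario Outline step is expanded
// with the values from one row of its Examples table.
class OutlineExpansion {

    // Replace every <name> placeholder in a step with the matching cell value.
    public static String expandStep(String step, Map<String, String> row) {
        String result = step;
        for (Map.Entry<String, String> cell : row.entrySet()) {
            result = result.replace("<" + cell.getKey() + ">", cell.getValue());
        }
        return result;
    }

    // The single Examples row from the Scenario Outline above.
    public static Map<String, String> exampleRow() {
        Map<String, String> row = new LinkedHashMap<>();
        row.put("invalid-email", "jonsmith-yahoo.com");
        row.put("email-error-text", "Enter a valid email");
        row.put("valid-email", "jonsmith@yahoo.com");
        return row;
    }

    public static void main(String[] args) {
        Map<String, String> row = exampleRow();
        // prints: When [2000.4] I enter "jonsmith-yahoo.com" in Email TextBox
        System.out.println(expandStep(
                "When [2000.4] I enter \"<invalid-email>\" in Email TextBox", row));
        // prints: Then [2000.4] The Email Error Text "Enter a valid email" is displayed
        System.out.println(expandStep(
                "Then [2000.4] The Email Error Text \"<email-error-text>\" is displayed", row));
    }
}
```

Adding further rows to the Examples table would run the whole scenario once per row, so additional invalid email formats could be covered without writing any new steps.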