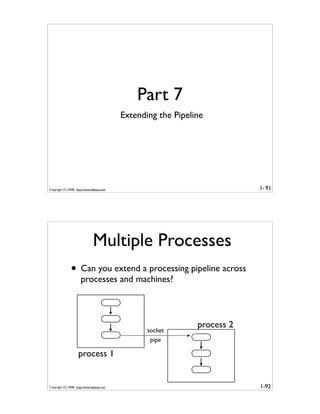

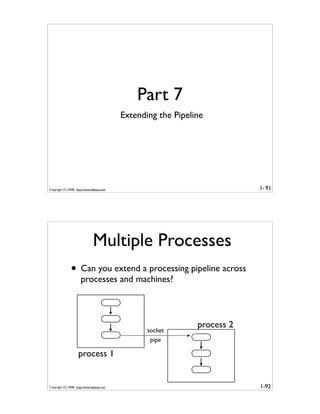

This document is the introduction to a tutorial on generator tricks for systems programmers, presented by David Beazley. It gives biographical information about the presenter, outlines the tutorial's goals and structure, and introduces key concepts around iterators and generators in Python. The tutorial covers practical uses of generators in files, file systems, parsing, networking, threads, and other systems programming tasks. It aims to present compelling examples of generators in use and to get attendees thinking about how to apply them.
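To make the iterator and generator concepts mentioned above concrete, here is a minimal sketch of a generator pipeline over file-like input. It is not taken from the slides: the function names `grep_lines` and `take_field` and the sample log data are hypothetical, chosen only for illustration.

```python
import io


def grep_lines(pattern, lines):
    """Yield only the lines that contain the substring `pattern`."""
    for line in lines:
        if pattern in line:
            yield line


def take_field(lines, index):
    """Yield the whitespace-separated field at position `index` of each line."""
    for line in lines:
        yield line.split()[index]


# Hypothetical sample data standing in for a real log file.
log = io.StringIO("GET /a 200\nGET /b 404\nPOST /c 200\n")

# Each stage is lazy: nothing is read until the final consumer iterates.
get_requests = grep_lines("GET", log)
paths = take_field(get_requests, 1)

result = list(paths)
print(result)
```

Because a file object is itself an iterator over lines, the same two functions would presumably work unchanged on the result of `open(...)`, processing one line at a time without loading the whole file into memory.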