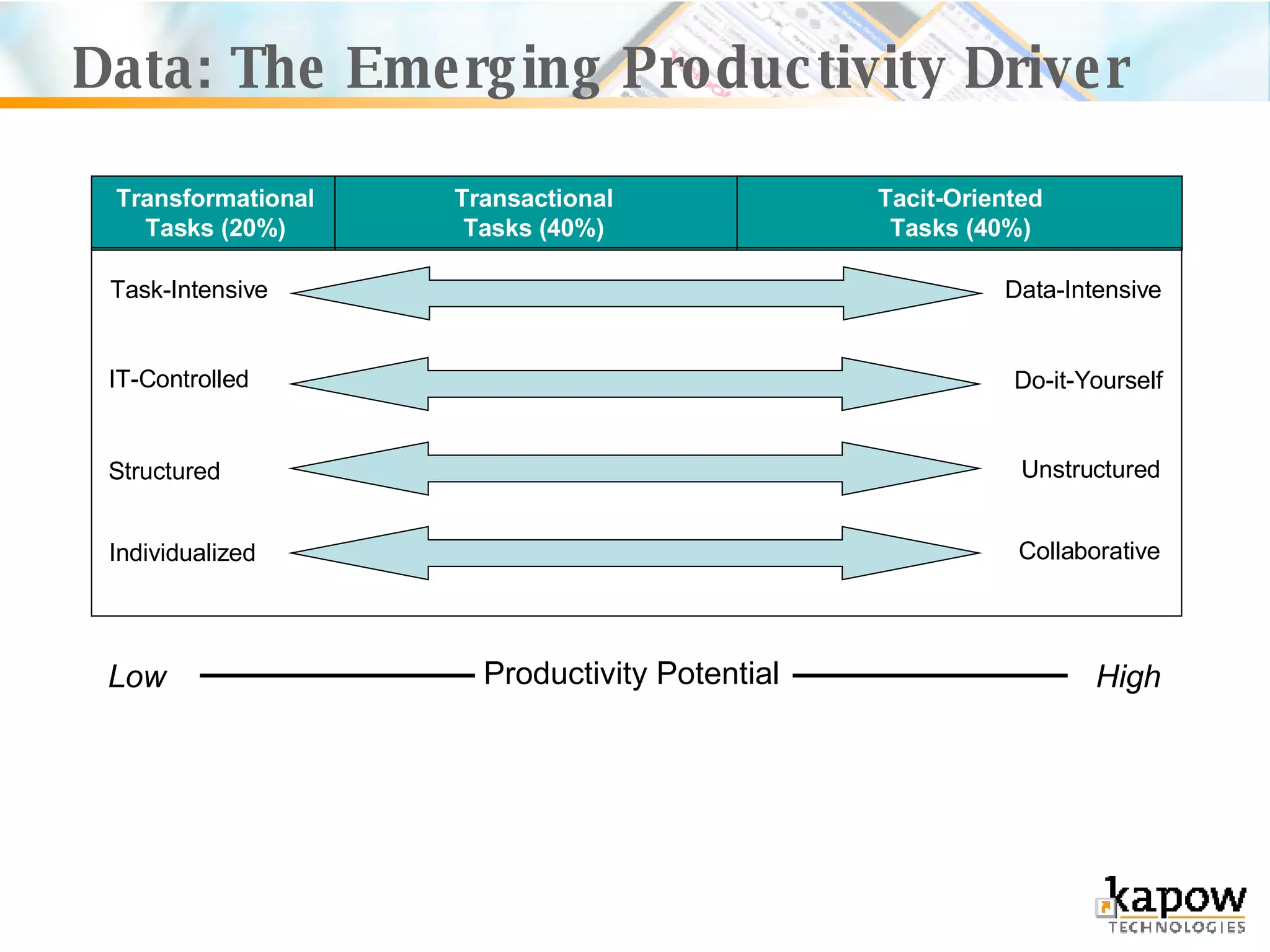

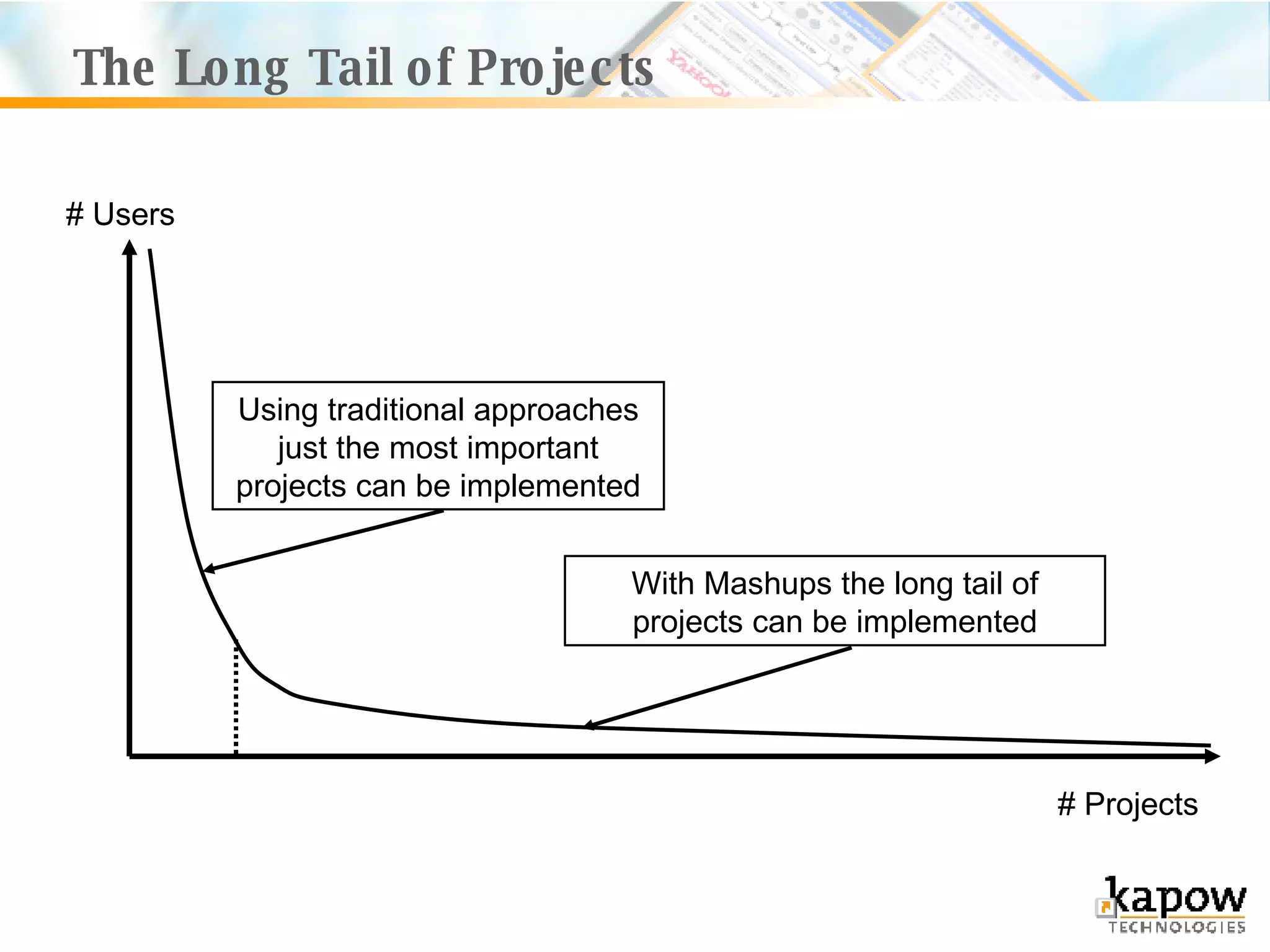

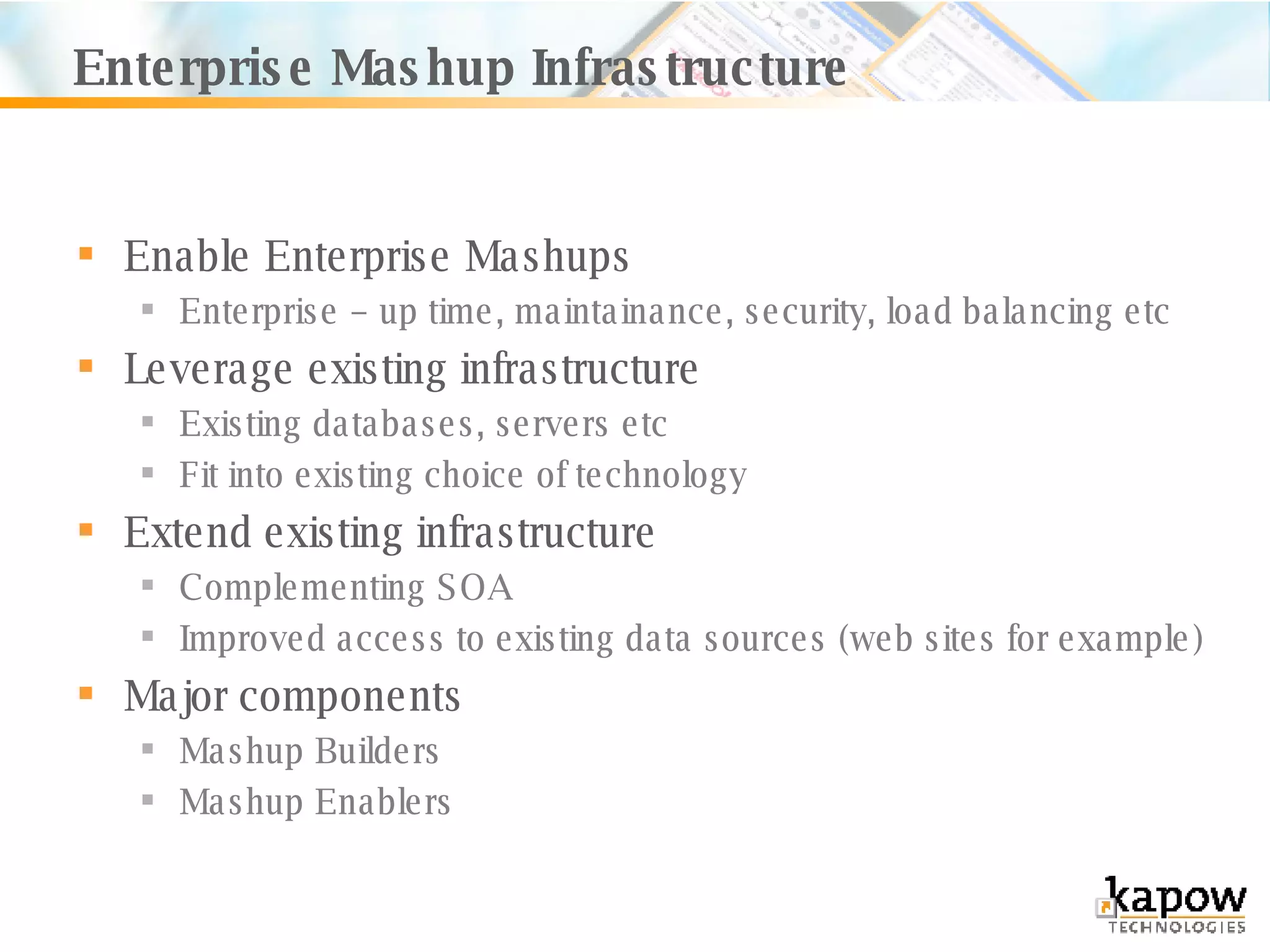

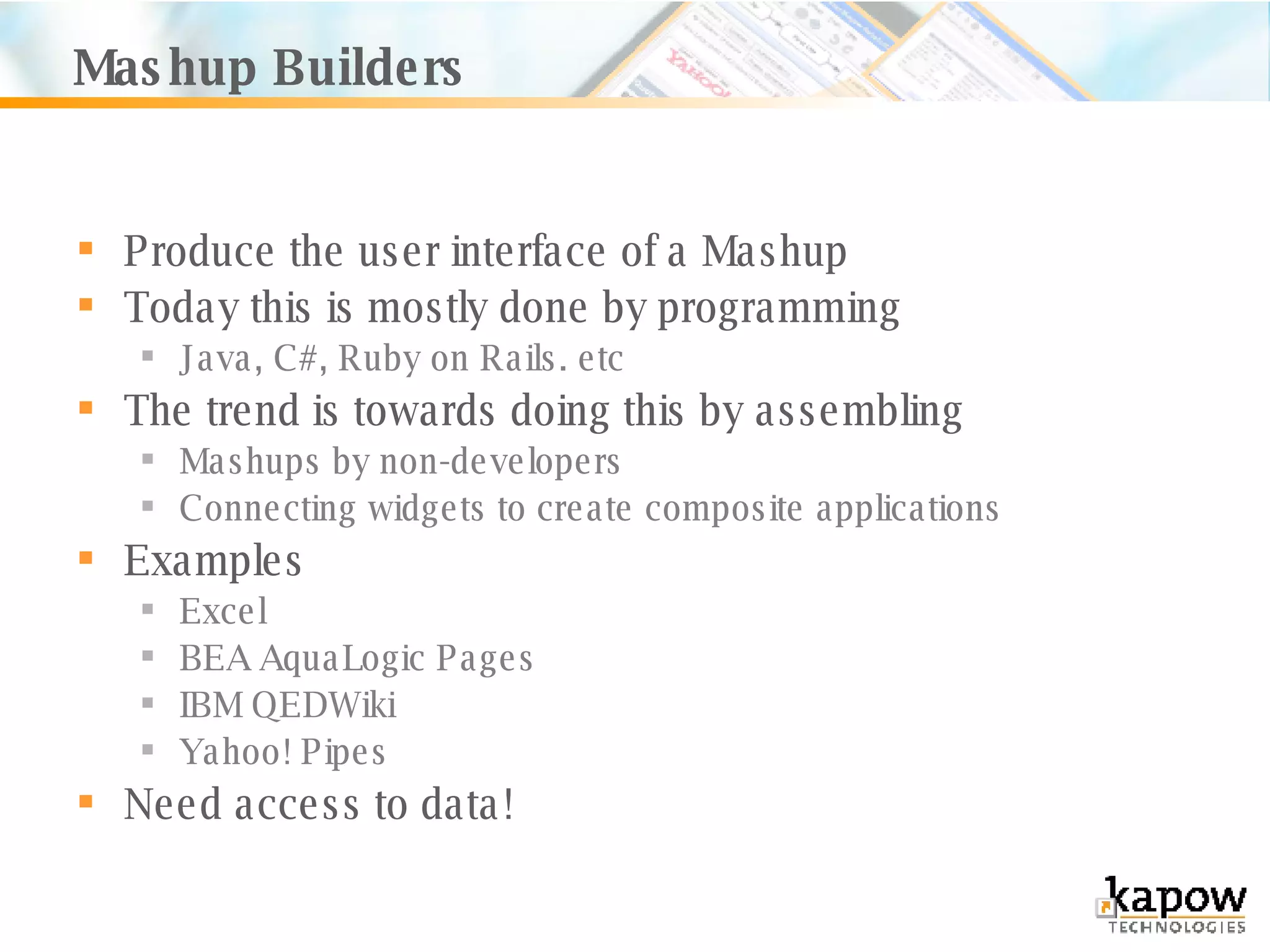

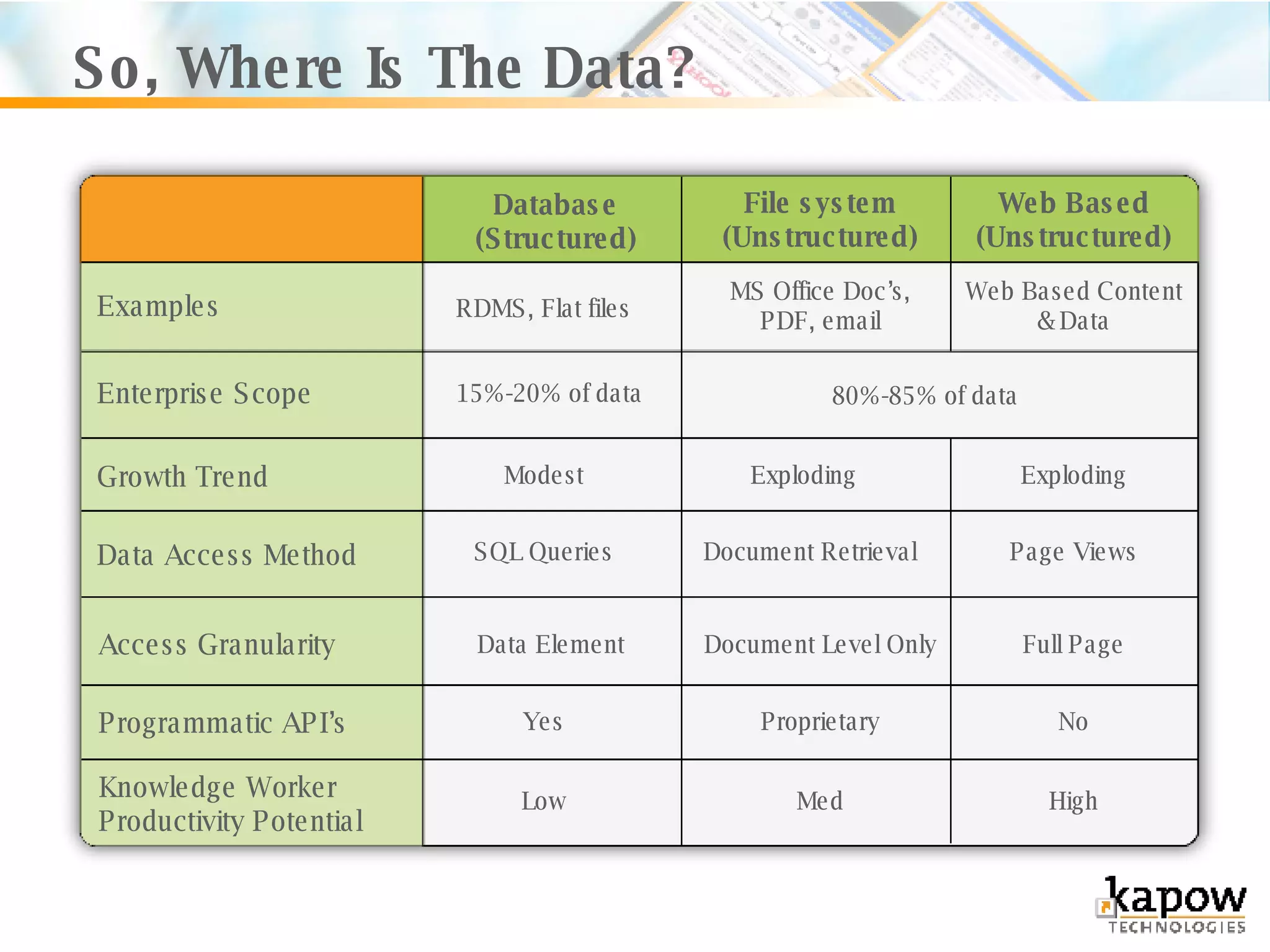

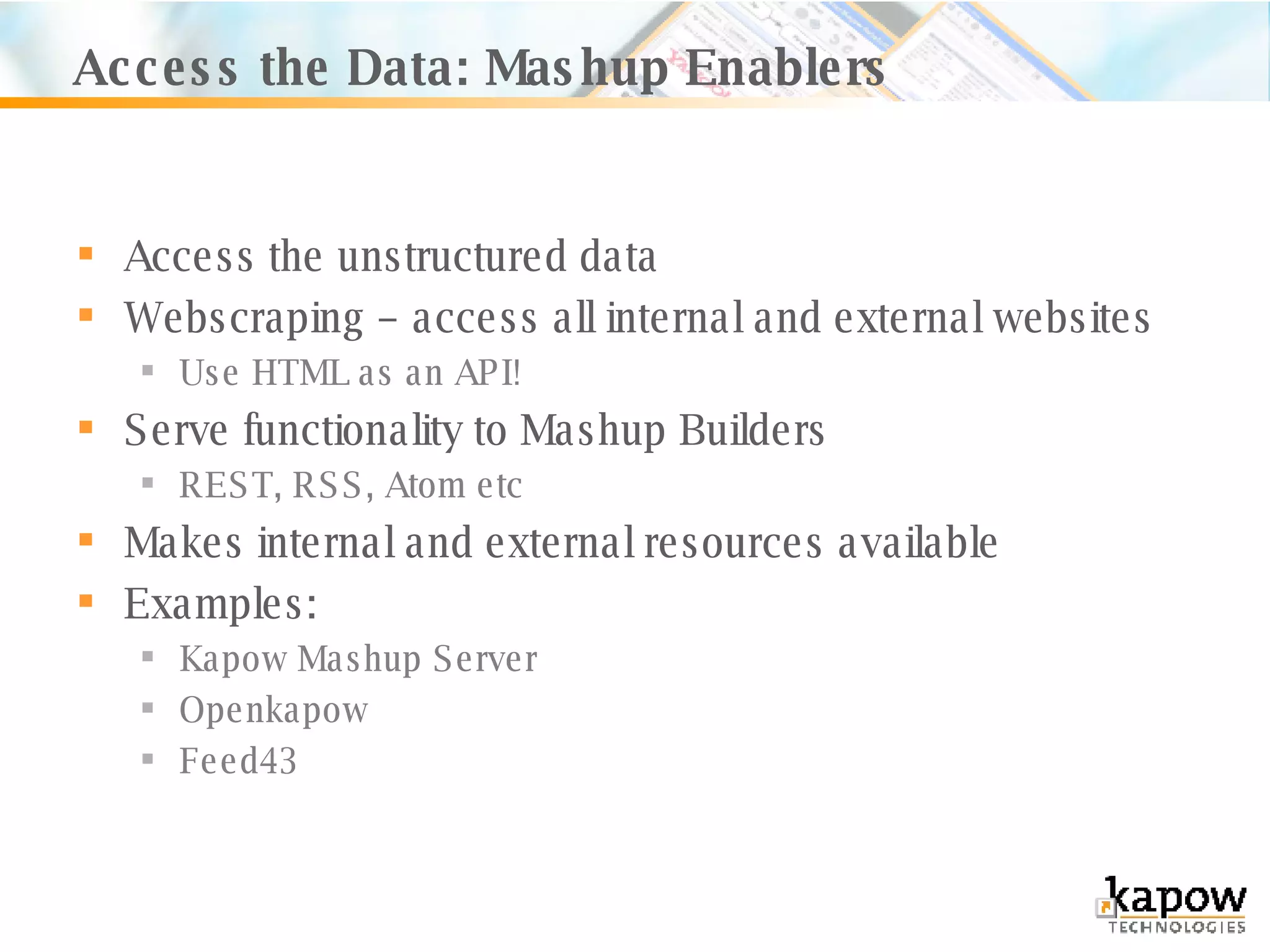

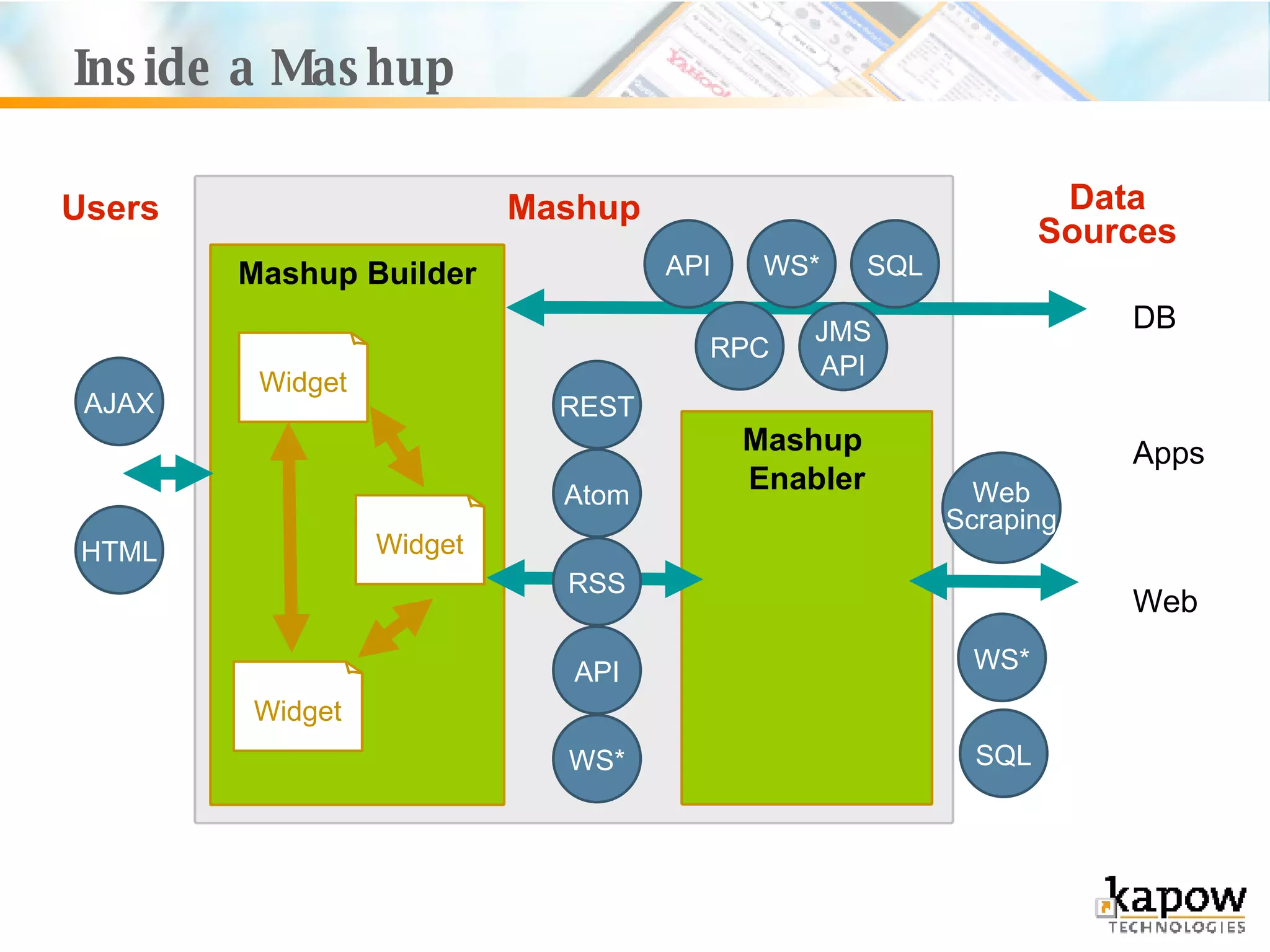

The document provides an overview of enterprise mashup infrastructure, explaining the business value of mashups and their role in improving data integration and productivity within organizations. It discusses applications of mashups, example technologies, and mashup builders that let users create custom applications without extensive IT support. The document emphasizes lightweight access to corporate data, which makes problem-solving more efficient by enabling opportunistic applications and letting business users build their own solutions.