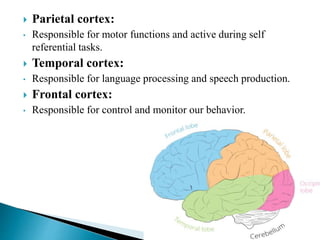

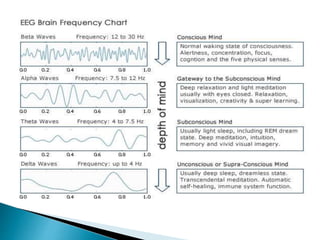

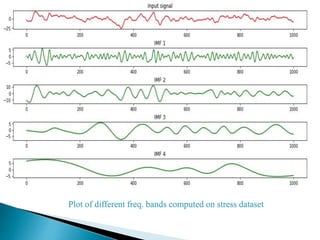

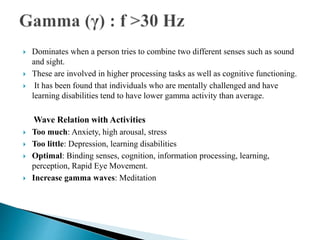

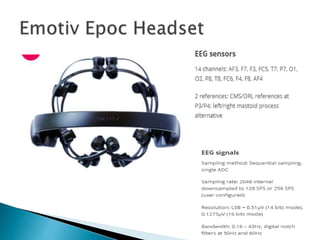

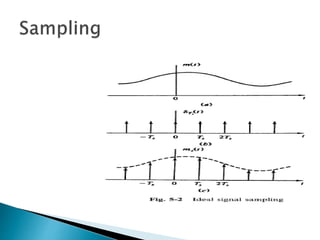

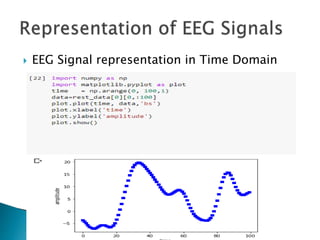

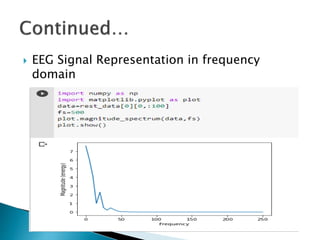

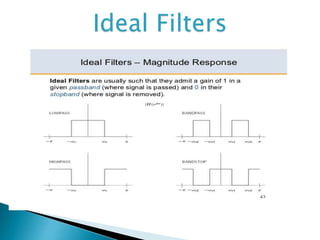

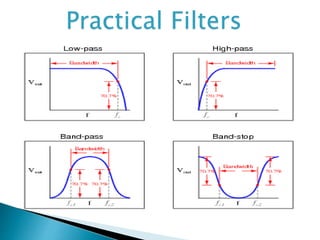

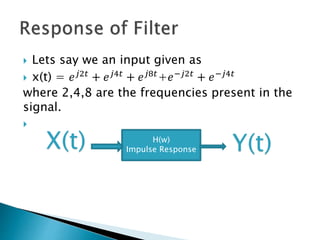

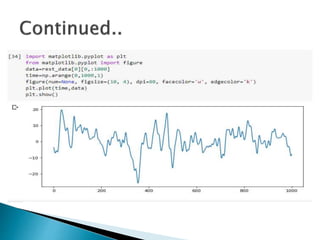

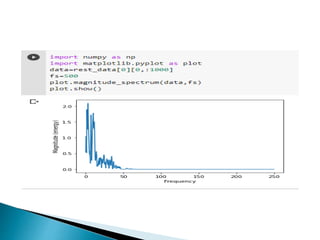

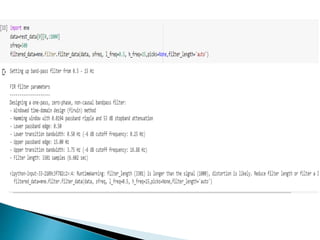

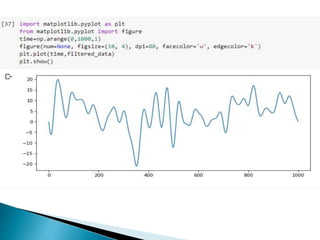

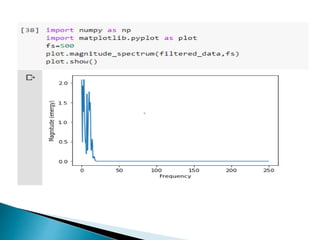

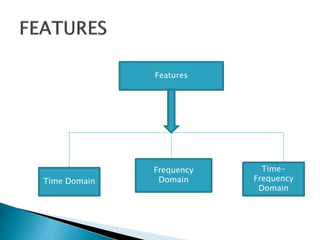

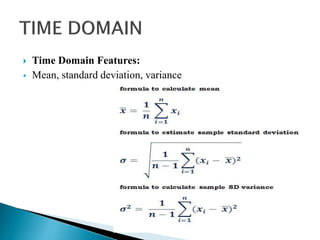

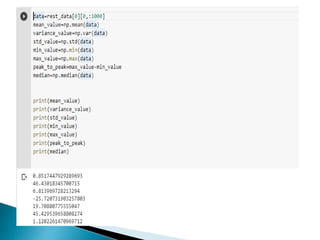

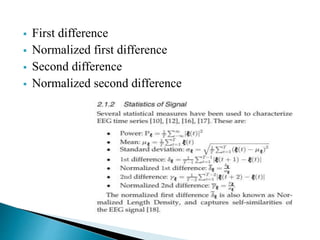

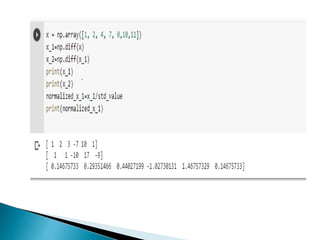

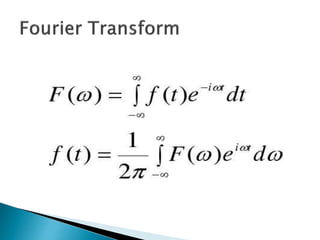

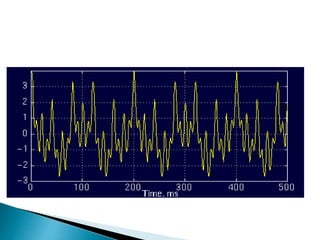

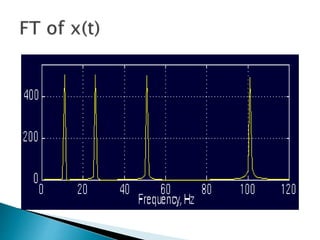

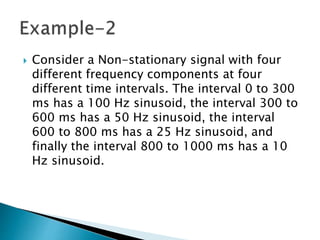

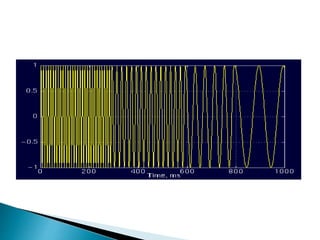

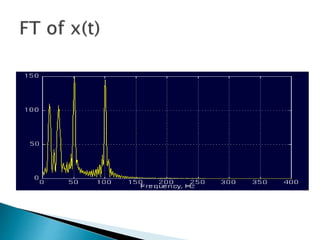

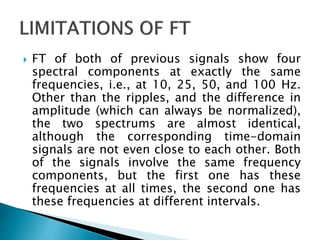

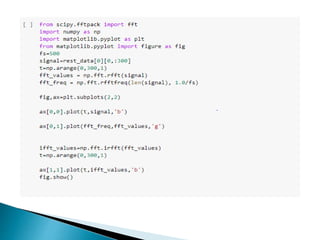

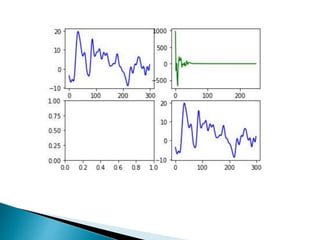

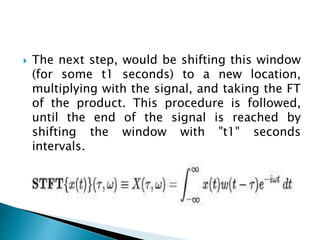

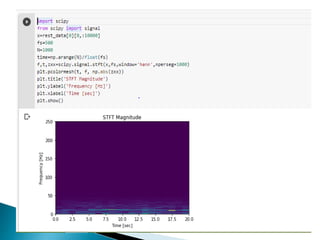

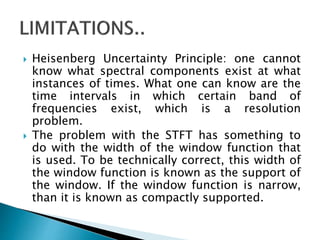

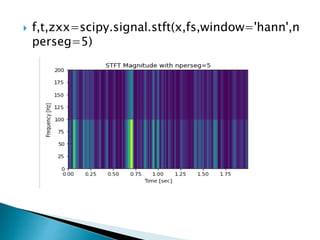

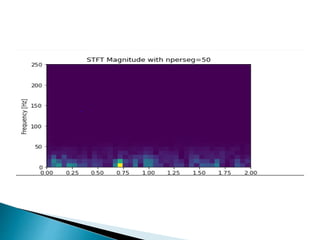

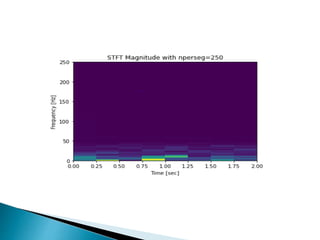

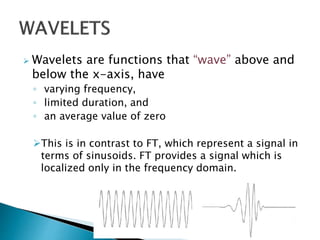

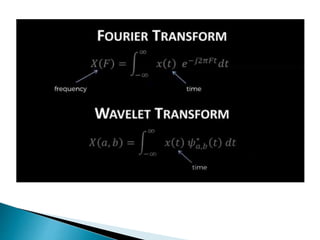

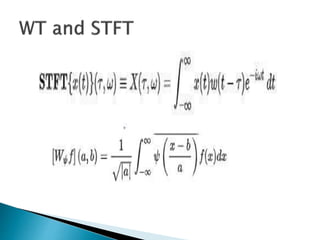

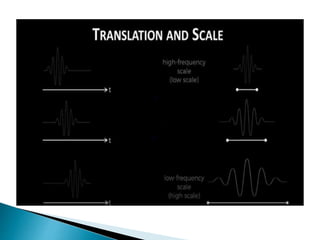

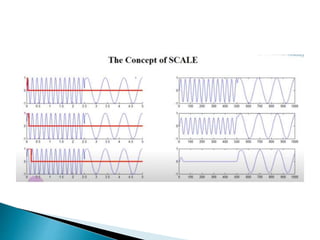

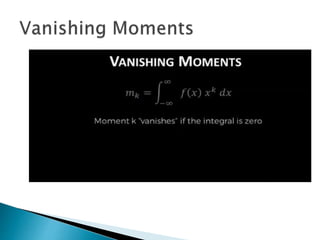

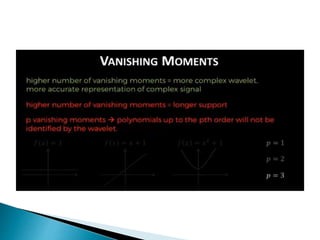

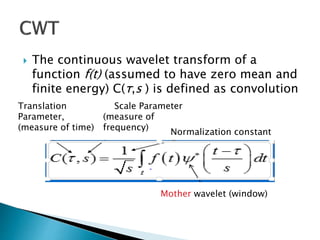

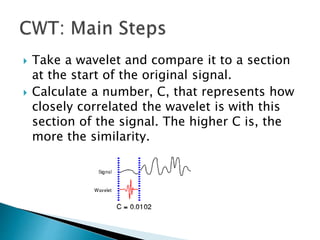

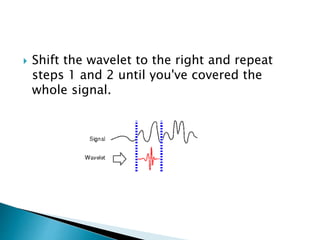

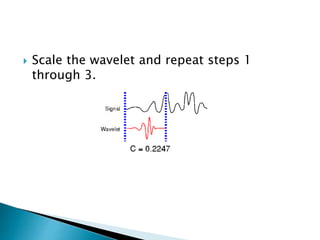

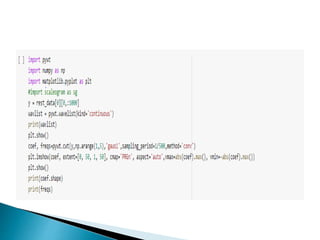

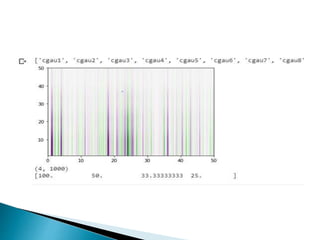

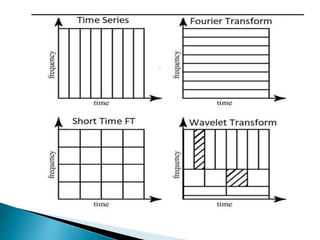

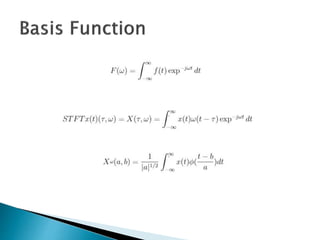

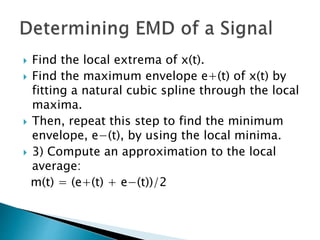

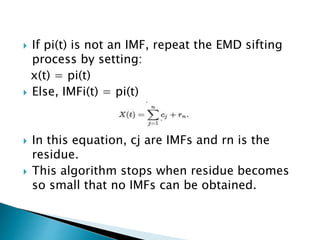

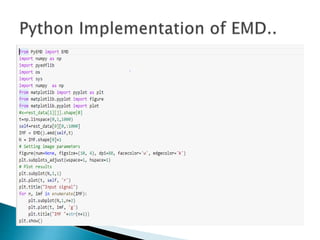

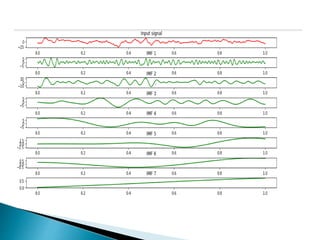

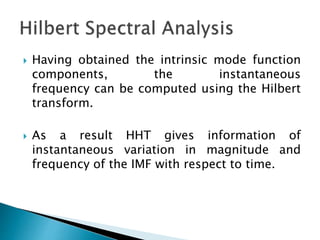

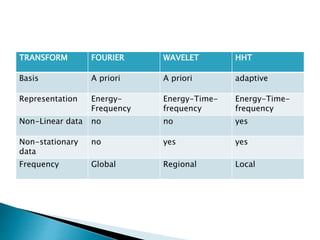

This document gives an overview of the electroencephalogram (EEG), a non-invasive technique that detects brain activity and abnormalities through electrodes placed on the scalp. It covers the cortical functions that EEG monitors, the characteristics of EEG signals, and their representation in the time and frequency domains. It then surveys four signal processing techniques: the Fourier Transform, the Short-Time Fourier Transform, the Wavelet Transform, and the Hilbert-Huang Transform. For each, it notes the advantages and limitations that arise when analyzing non-stationary biological signals.
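To illustrate why non-stationarity matters when choosing among these techniques, here is a minimal sketch that compares a single Fourier Transform of a whole recording with a windowed (short-time) analysis. It is not taken from the slides. The signal is synthetic rather than real EEG, and the sampling rate, the two frequencies, the window length, and the helper names `dominant_freq` and `short_time_dominant` are all assumptions chosen for illustration.

```python
import numpy as np

fs = 256                       # assumed sampling rate in Hz
t = np.arange(0, 4, 1 / fs)    # 4 seconds of samples

# Synthetic non-stationary signal (not real EEG):
# a 10 Hz rhythm for the first 2 s, then a 20 Hz rhythm.
signal = np.where(t < 2,
                  np.sin(2 * np.pi * 10 * t),
                  np.sin(2 * np.pi * 20 * t))


def dominant_freq(x, fs):
    """Return the frequency (Hz) of the largest spectral peak of x."""
    windowed = x * np.hanning(len(x))          # taper to reduce leakage
    spectrum = np.abs(np.fft.rfft(windowed))
    freqs = np.fft.rfftfreq(len(x), 1 / fs)
    return float(freqs[np.argmax(spectrum)])


def short_time_dominant(x, fs, win_sec=1.0):
    """Dominant frequency in consecutive non-overlapping windows."""
    win = int(win_sec * fs)
    return [dominant_freq(x[i:i + win], fs)
            for i in range(0, len(x) - win + 1, win)]


# Fourier Transform of the whole recording: both rhythms appear,
# but nothing in the magnitude spectrum says when each occurred.
whole_spectrum = np.abs(np.fft.rfft(signal))
freqs = np.fft.rfftfreq(len(signal), 1 / fs)
top_two = sorted(float(f) for f in freqs[np.argsort(whole_spectrum)[-2:]])

# Short-time analysis: one dominant frequency per 1 s window,
# which recovers the order in which the rhythms occurred.
per_window = short_time_dominant(signal, fs)

print("Whole-signal peaks (Hz):", top_two)
print("Per-window dominant frequency (Hz):", per_window)
```

The whole-signal spectrum reports that both rhythms are present, while the per-window result also shows that the change happens halfway through. The fixed window length is the trade-off in this sketch: a shorter window locates the change more precisely in time but resolves frequency more coarsely.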