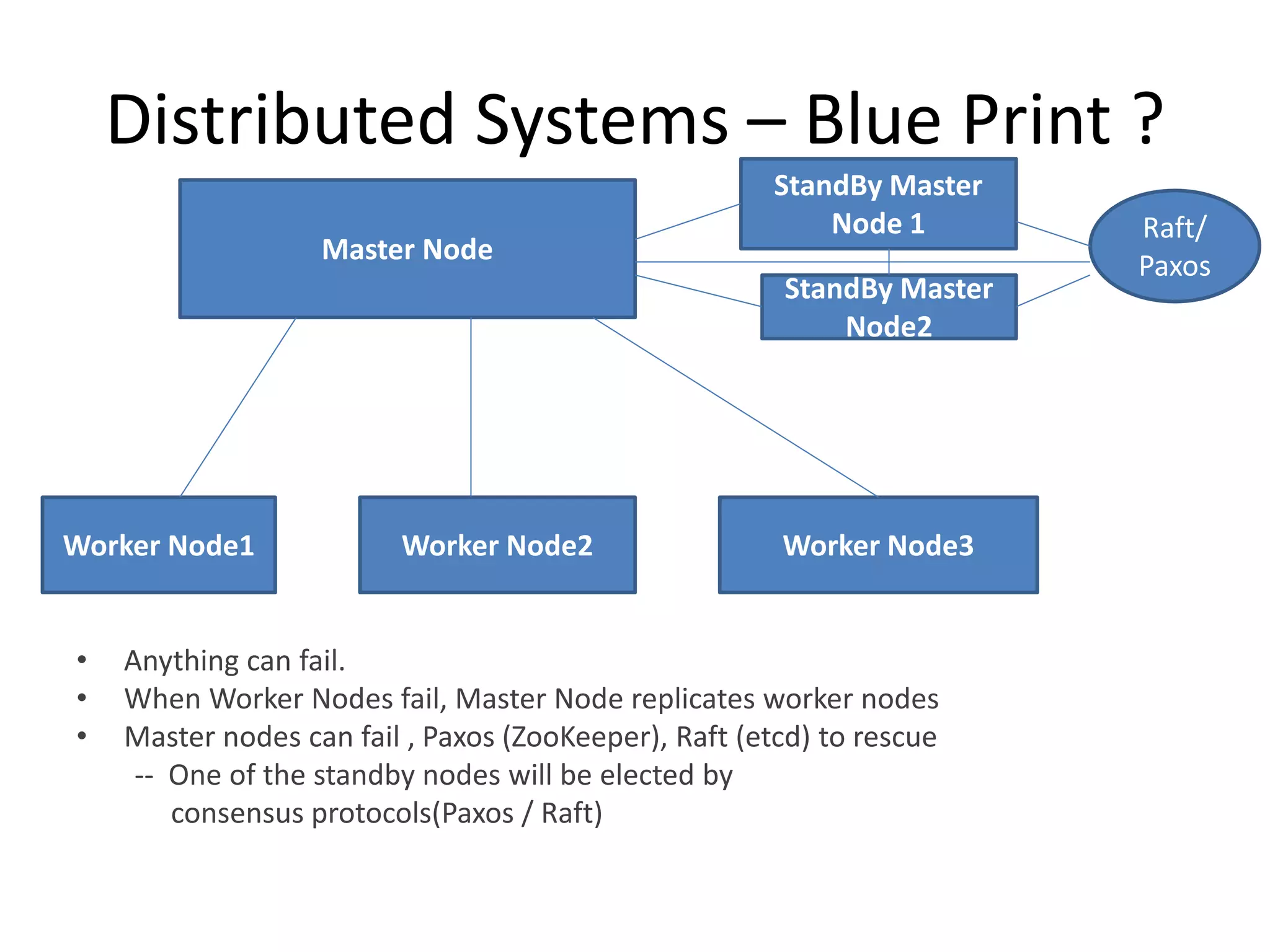

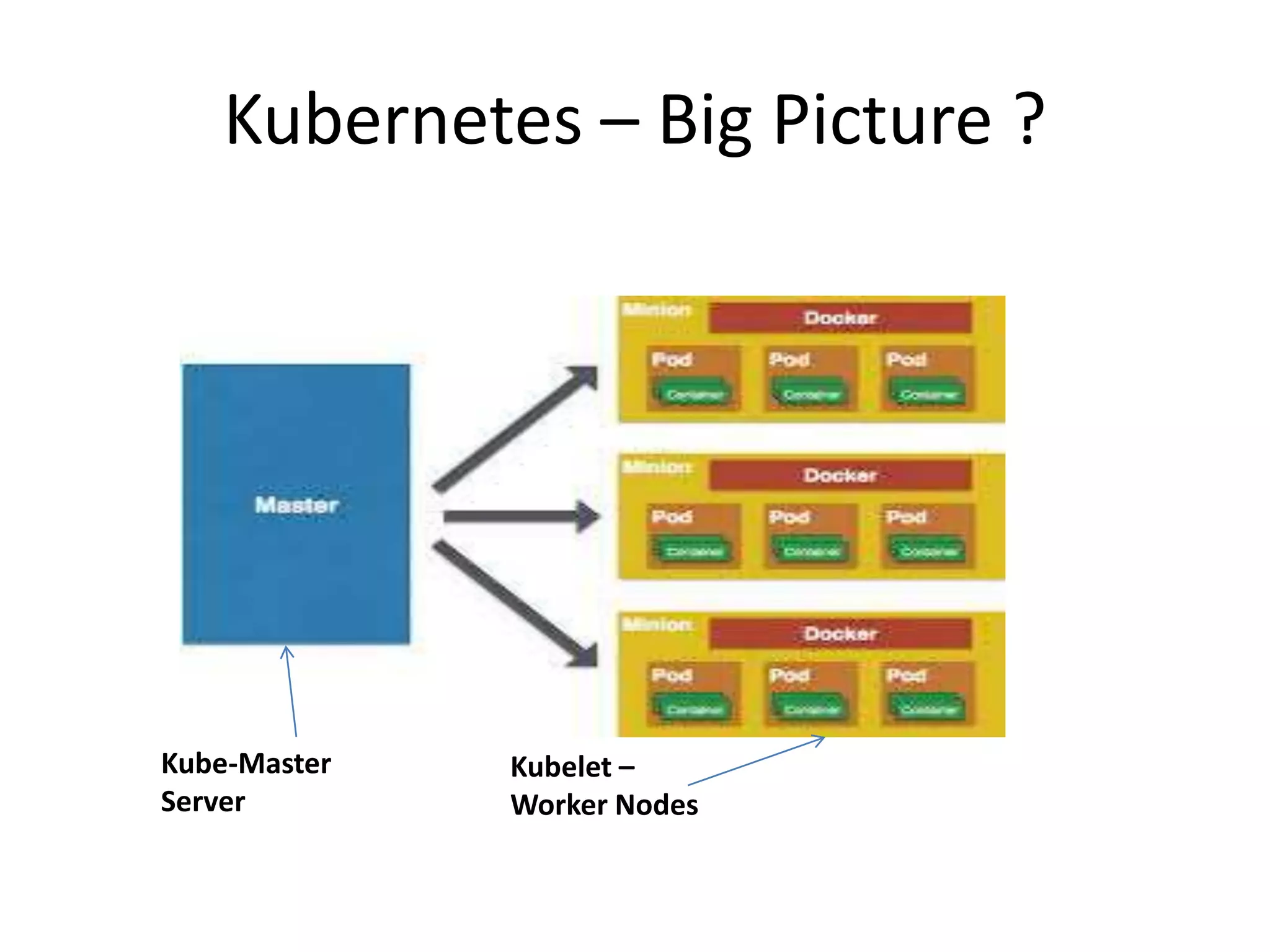

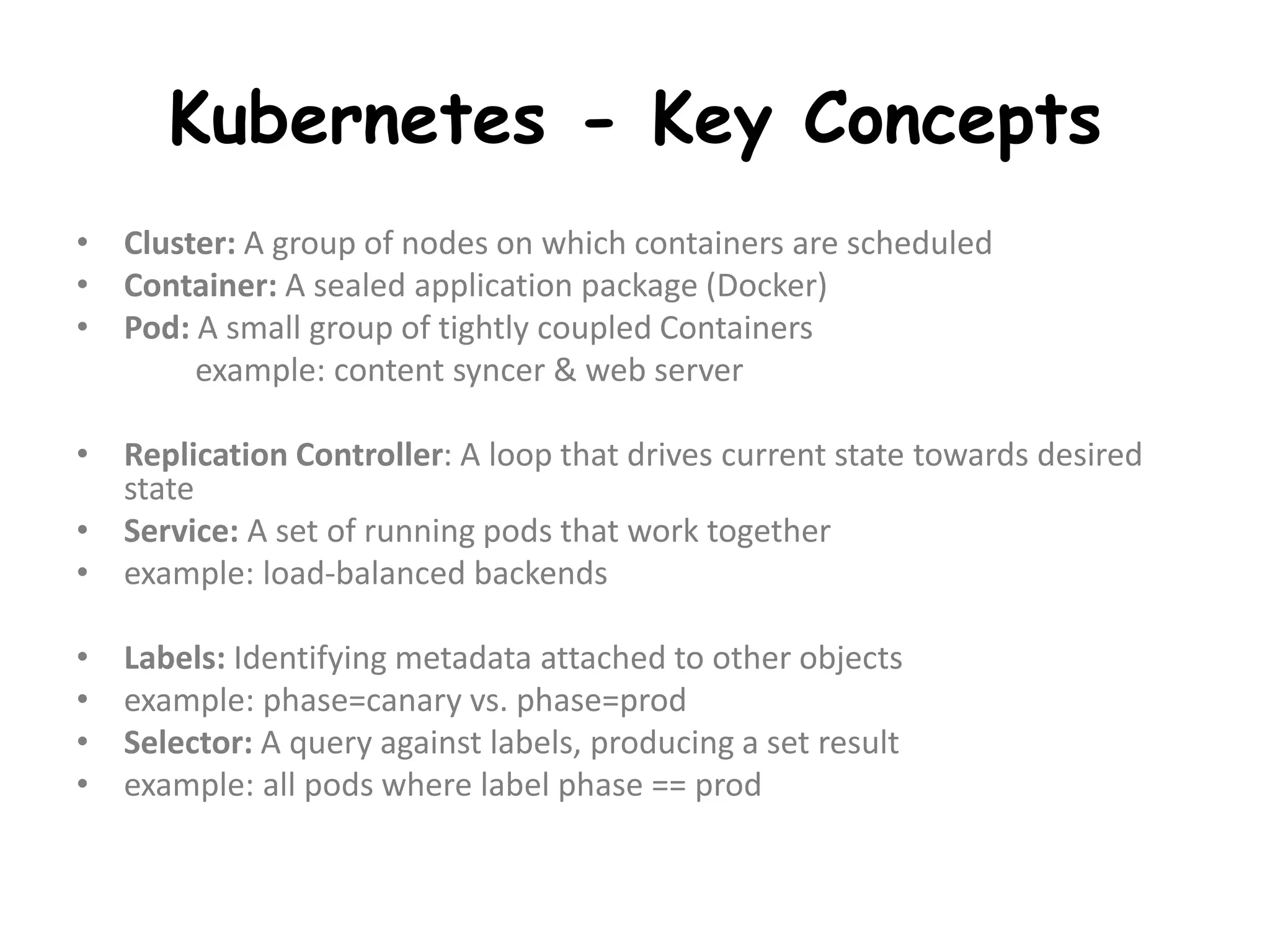

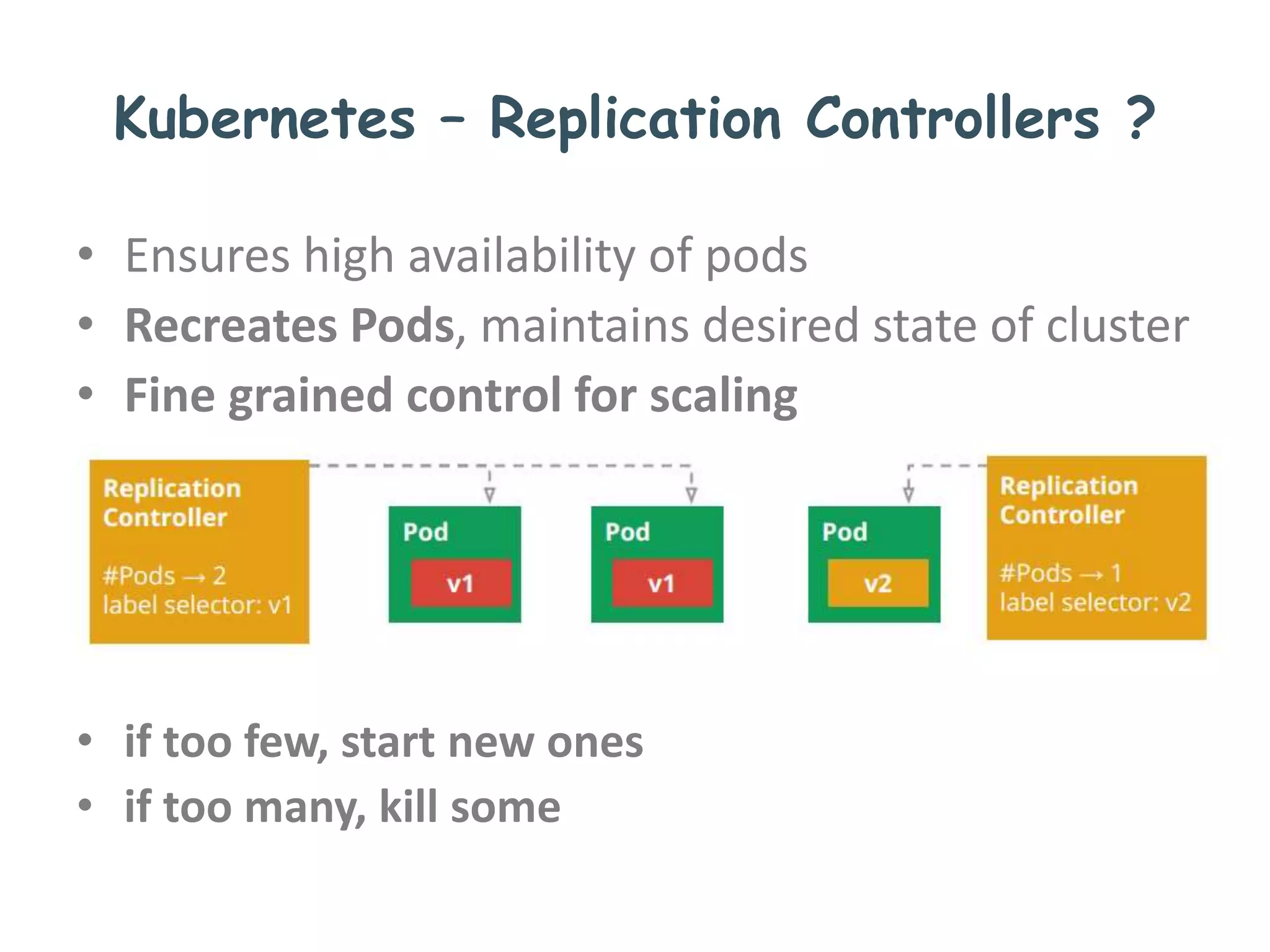

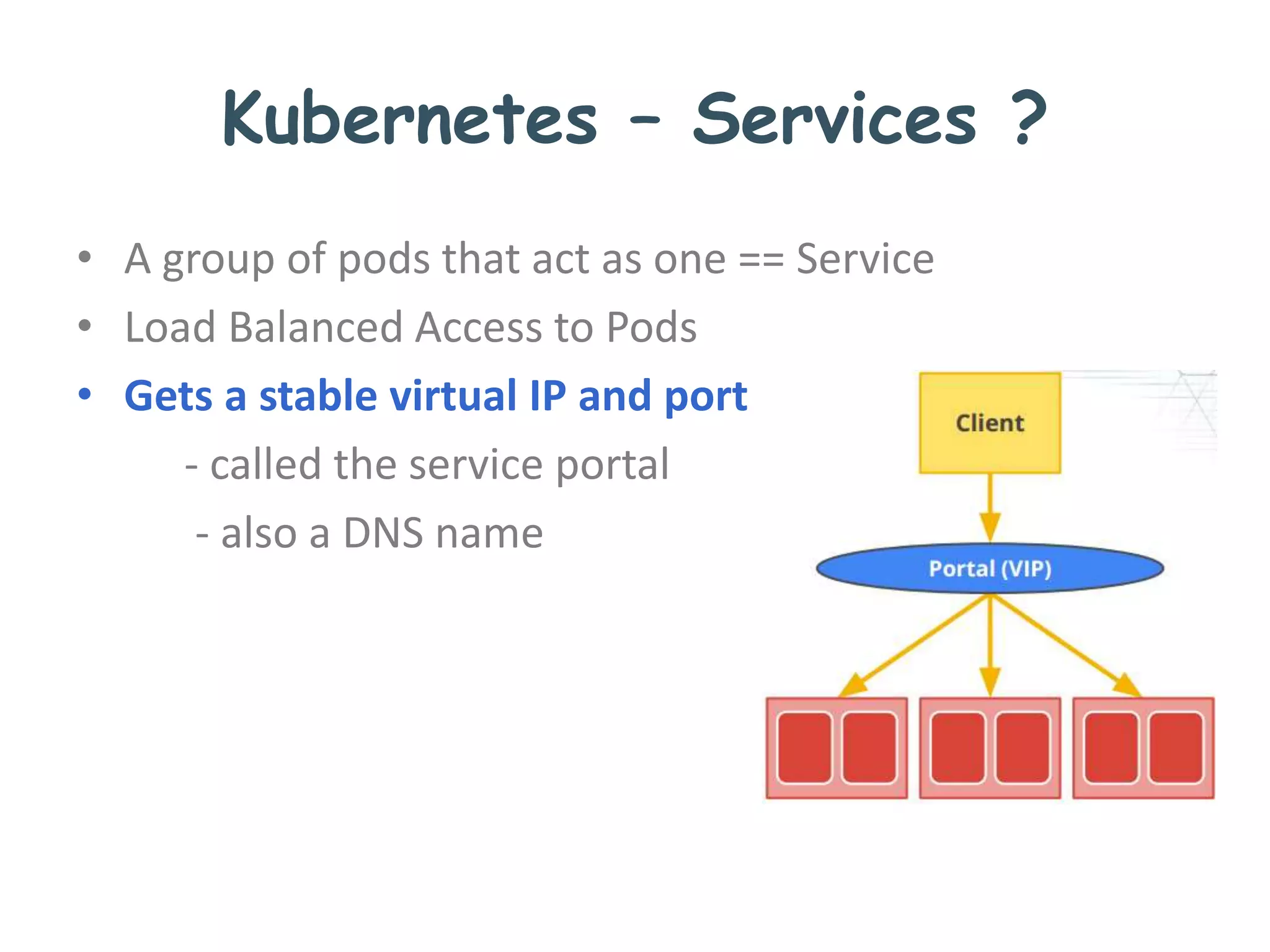

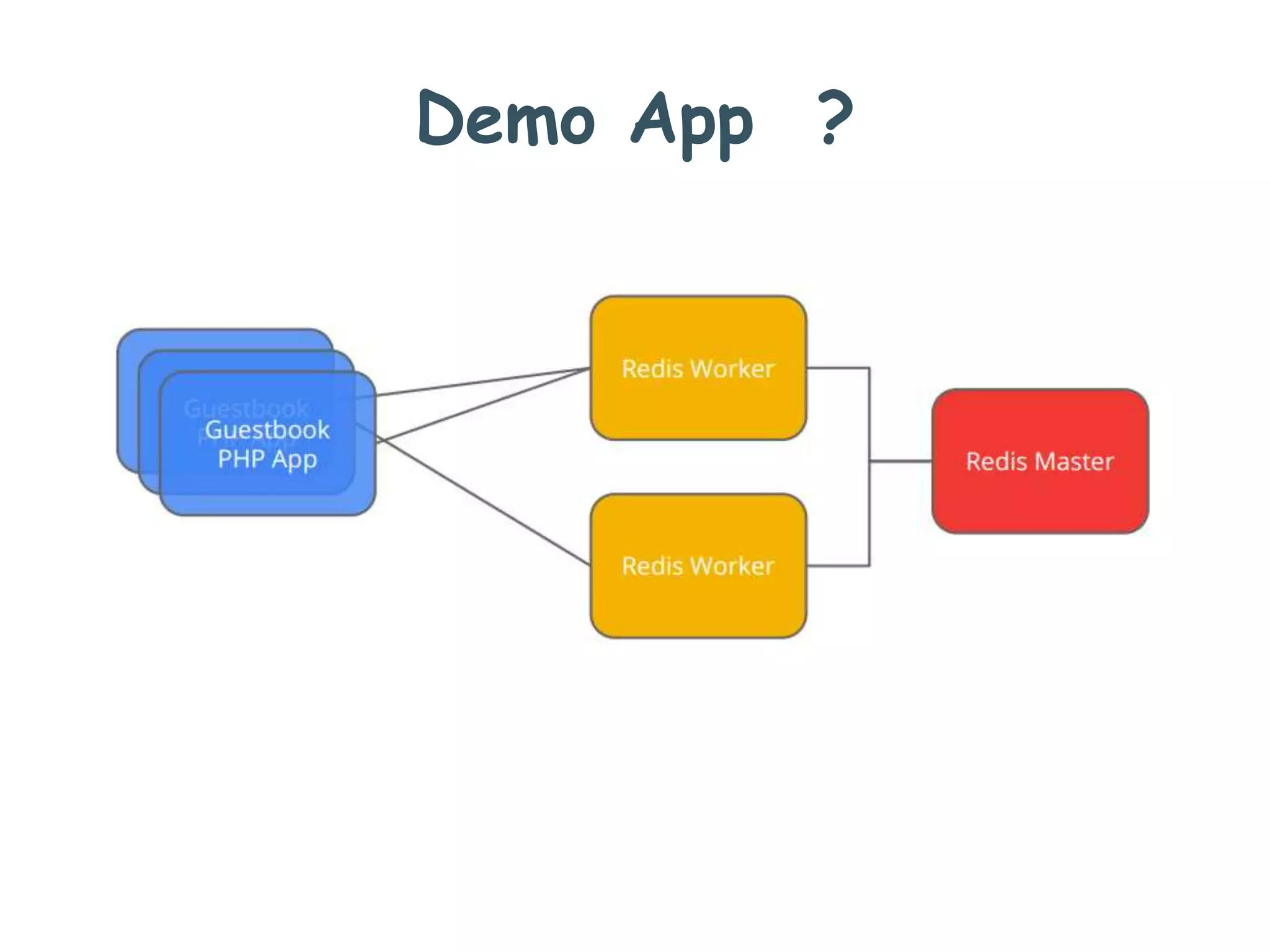

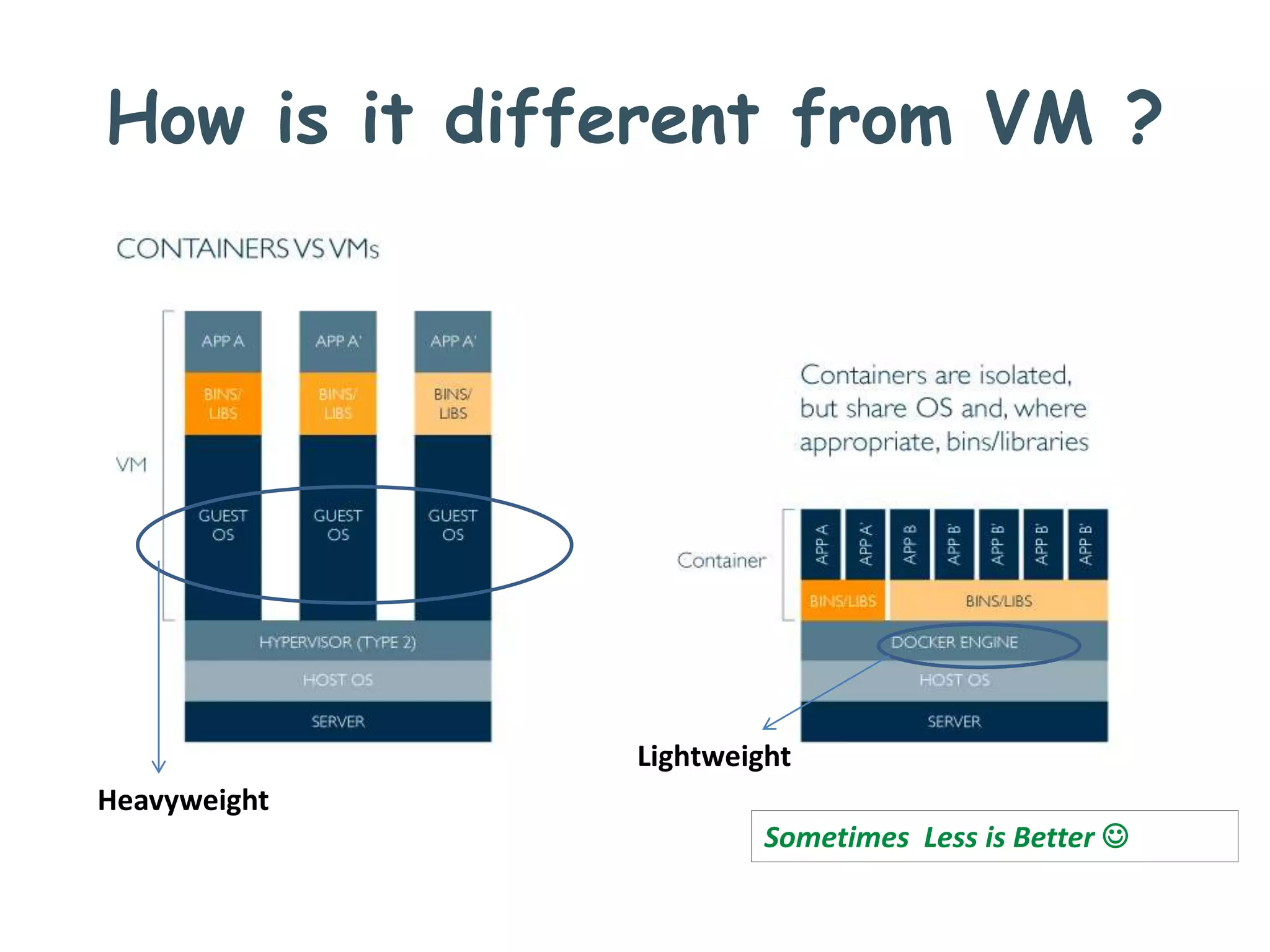

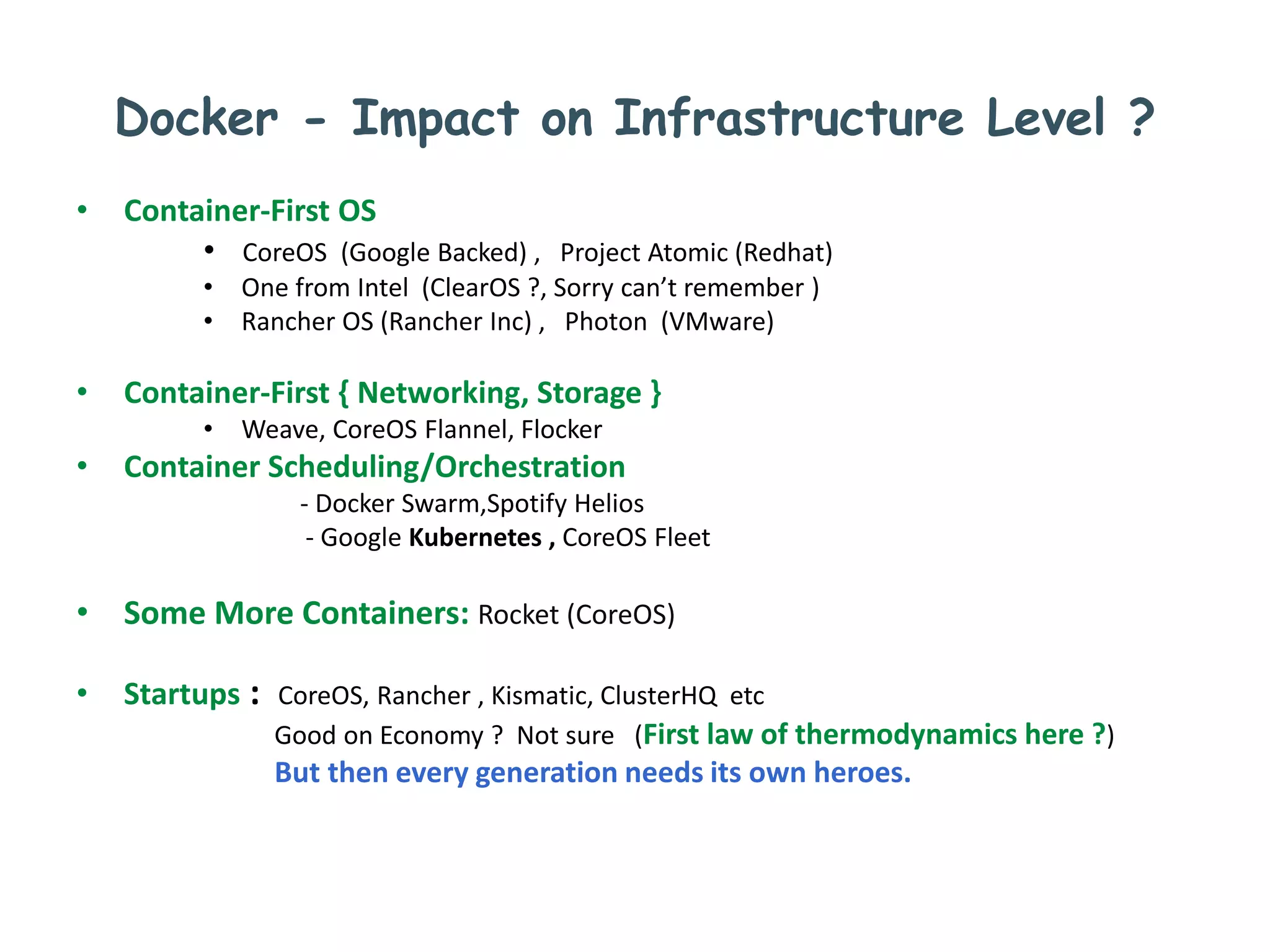

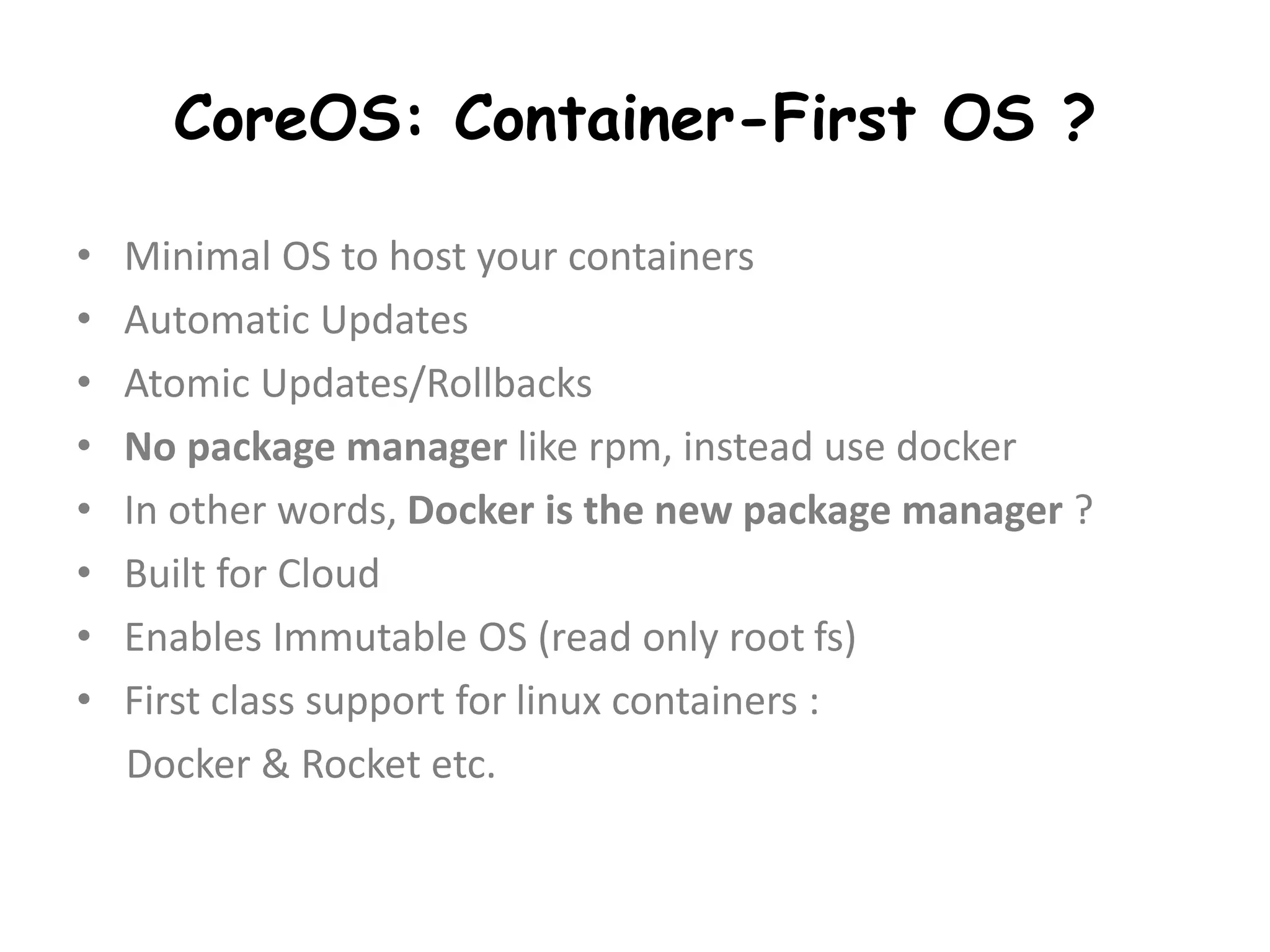

This document is an informal summary of a presentation on Docker and related container technologies given by Santosh Koti on May 29, 2015. It covers how Docker standardizes application packaging and runtimes, how microservices decompose applications, and the challenges of distributed systems, such as failures. It also introduces Kubernetes, an open source container cluster manager from Google, as a way to manage containers at scale across multiple clouds.

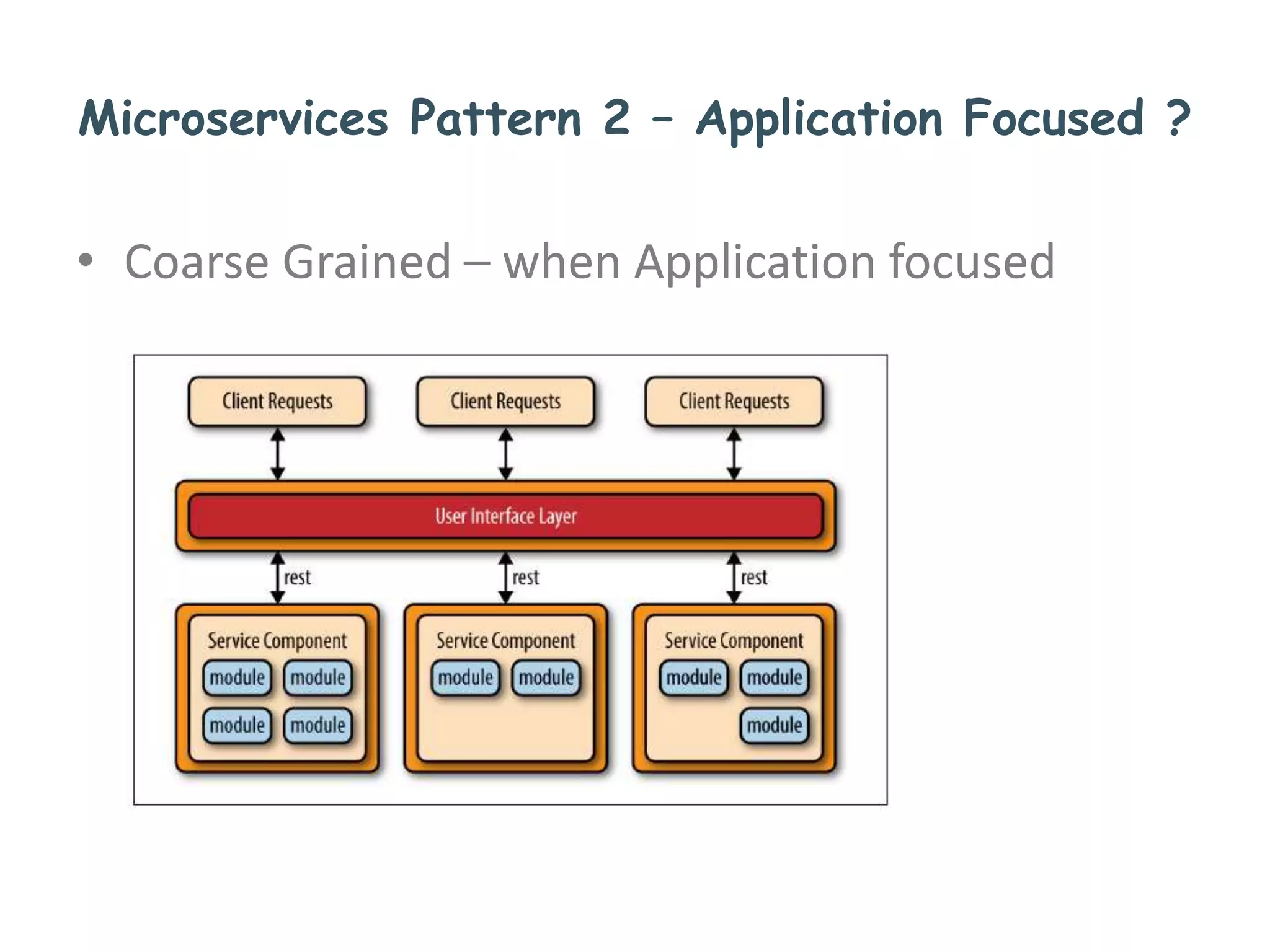

![Docker - Impact on Application Level?](https://image.slidesharecdn.com/10f8b59b-1850-4356-a8f3-d0e3e127945e-151123013414-lva1-app6892/75/Docker-N-Beyond-20-2048.jpg)

• Fosters microservices

• So applications are structurally decomposed/distributed

• Embraces fundamentally distributed systems

• Emergence of lean stacks across languages (JavaScript: Node.js, Java: Spring Boot, Scala: Akka HTTP, Python: Flask, Go: Goji, etc.)

• Better developer health [NOCC?]

• As a result, better software is shipped?

• So can we recall the taglines of KF/LG: Good Times / Life is Good?
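
To make the "lean stack" idea from the slide concrete, here is a minimal sketch of a single-purpose service of the kind a container would typically package. It is an illustration only, not code from the presentation: it uses Python's standard library rather than any of the frameworks named on the slide, and the names `HealthHandler`, `start_service`, `check_health`, and the `/health` route are hypothetical.

```python
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.request import urlopen


class HealthHandler(BaseHTTPRequestHandler):
    """Hypothetical single-purpose handler: the whole service is one endpoint."""

    def do_GET(self):
        if self.path == "/health":
            body = json.dumps({"status": "ok"}).encode("utf-8")
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404, "Not Found")

    def log_message(self, format, *args):
        # Silence per-request logging to keep the sketch quiet.
        pass


def start_service(port=0):
    """Start the service on a background thread; port 0 lets the OS pick a free port."""
    server = HTTPServer(("127.0.0.1", port), HealthHandler)
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    return server


def check_health(server):
    """Call the service over HTTP, the way another service would."""
    host, port = server.server_address
    with urlopen(f"http://{host}:{port}/health") as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    service = start_service()
    print(check_health(service))
    service.shutdown()
    service.server_close()
```

The point of the sketch is the shape rather than the details: the service has one responsibility, no framework-specific runtime, and is reached only over the network, which is what makes it easy to package into a container and run alongside other small services.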