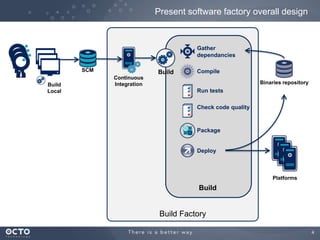

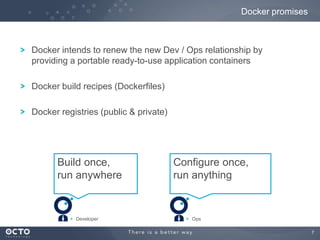

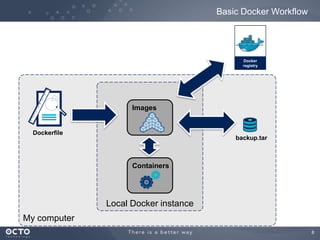

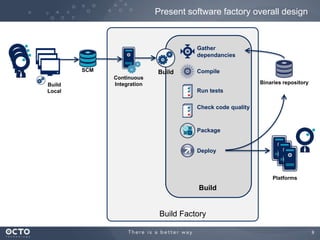

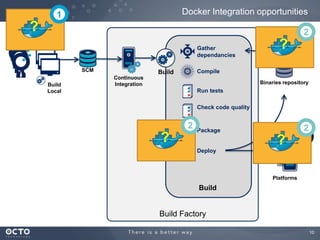

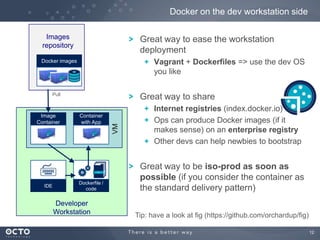

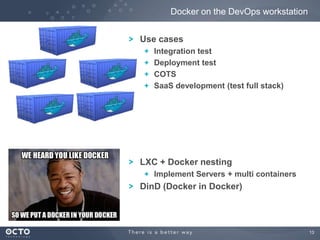

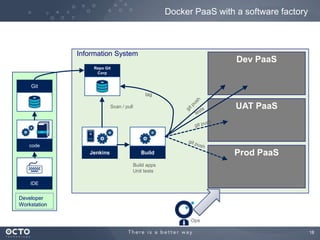

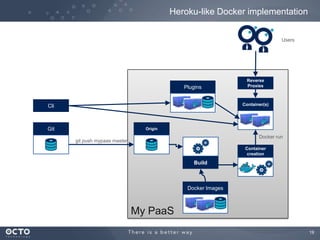

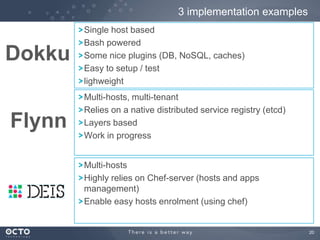

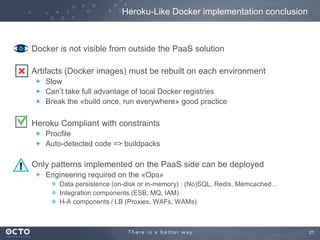

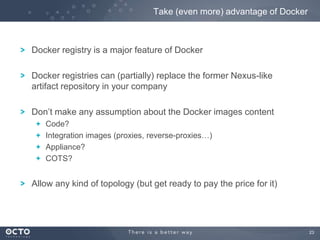

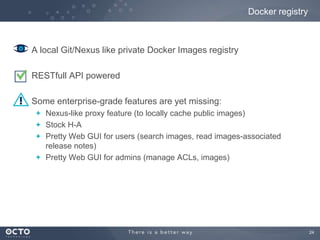

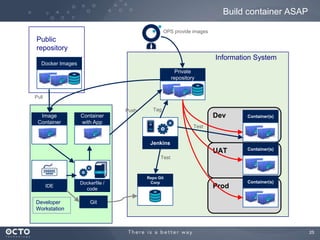

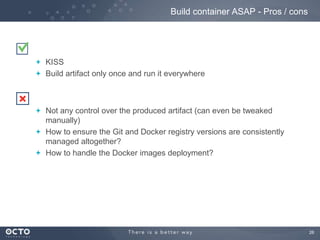

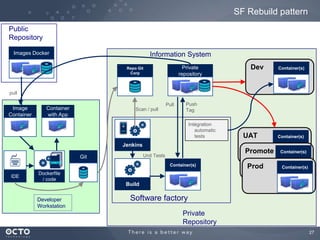

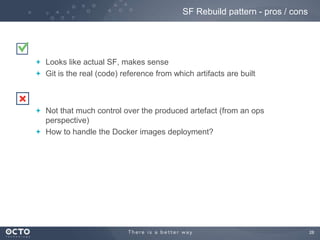

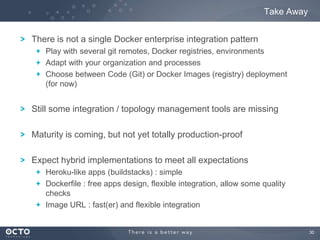

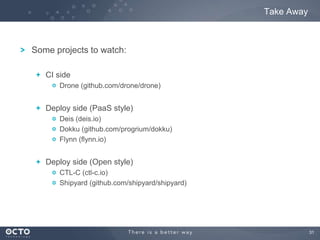

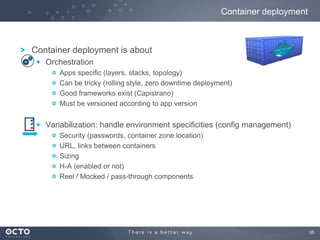

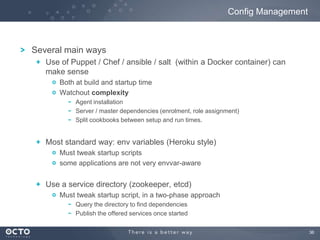

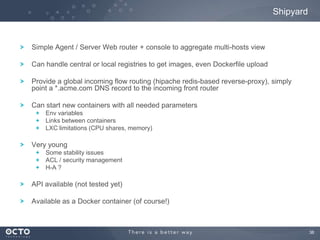

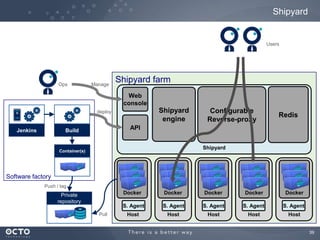

This document discusses enterprise software management with Docker containers. It begins with an overview of a traditional software factory model, then examines how Docker could be integrated at various points, including the developer workstation, continuous integration servers, and production servers. Several example Docker-based platforms are described, along with considerations around configuration management and deployment orchestration. The key takeaways are that there is no single integration pattern, so hybrid approaches may be needed; that integration and topology tools are still maturing; and that image-based deployments could initially be easier than rebuilding applications from source in each environment.