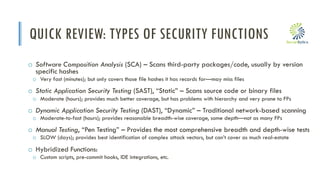

The document discusses the evolution of DevSecOps within the context of DevOps and hyper-automation, addressing the challenges and lessons learned in integrating security into the software development lifecycle. It outlines the shift from traditional security approaches to more automated and integrated security practices as a response to the rapid changes in technology and development practices. Key points include the necessity of a mature pipeline, effective tool selection, and the importance of addressing security holistically without compromising on automation or functionality.

![i

WHAT DO WE REALLY NEED TO TEST?

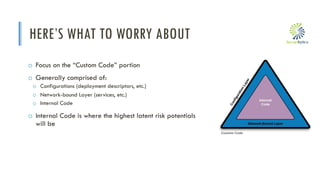

o The Code Pyramid:

o Third-Party – The lion’s share, can be scanned

with SCA

o WARNING: Not all SCAs scan as deeply

o Configuration/IaC – can also be scanned with

(certain) SCAs [mostly commercial]

o Custom Code – this is where we should be

spending most of our time and resources

o This is the Intellectual Property we’ve developed

Moderate-

Hard

Quick

Quick-ish](https://image.slidesharecdn.com/issa-mke-devsecops20190108-210703094426/85/DevSecOps-A-Secure-SDLC-in-the-Age-of-DevOps-and-Hyper-Automation-22-320.jpg)
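To get a rough sense of how a repository splits across the three tiers of the code pyramid, a small script can bucket file paths by tier. The sketch below is illustrative only and is not from the slides: the directory names, file suffixes, and the `classify` and `tally` helpers are all assumptions, and a real SCA tool inspects dependency manifests and package contents rather than path names.

```python
from collections import Counter
from pathlib import PurePosixPath

# Hypothetical heuristics (assumptions, not from the slides); adjust per repository.
THIRD_PARTY_DIRS = {"node_modules", "vendor", "site-packages", "third_party"}
CONFIG_SUFFIXES = {".tf", ".yaml", ".yml", ".json", ".toml", ".ini"}
CONFIG_NAMES = {"Dockerfile"}


def classify(path: str) -> str:
    """Assign a repository path to one tier of the code pyramid."""
    p = PurePosixPath(path)
    # Anything under a known dependency directory counts as third-party,
    # even if the file itself looks like configuration.
    if THIRD_PARTY_DIRS.intersection(p.parts):
        return "third-party"
    if p.name in CONFIG_NAMES or p.suffix in CONFIG_SUFFIXES:
        return "configuration/IaC"
    # Everything else is treated as the team's own custom code.
    return "custom"


def tally(paths):
    """Count how many paths fall into each tier."""
    return Counter(classify(p) for p in paths)


if __name__ == "__main__":
    sample = [
        "node_modules/lodash/index.js",
        "vendor/github.com/pkg/errors/errors.go",
        "infra/main.tf",
        "Dockerfile",
        "src/app/handlers.py",
    ]
    for tier, count in tally(sample).items():
        print(f"{tier}: {count}")
```

A tally like this only shows where the bulk of the files sits; it says nothing about risk. Its use is to make the slide's point concrete: the third-party and configuration tiers can be handed to scanners, while the custom tier is where manual review effort goes.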