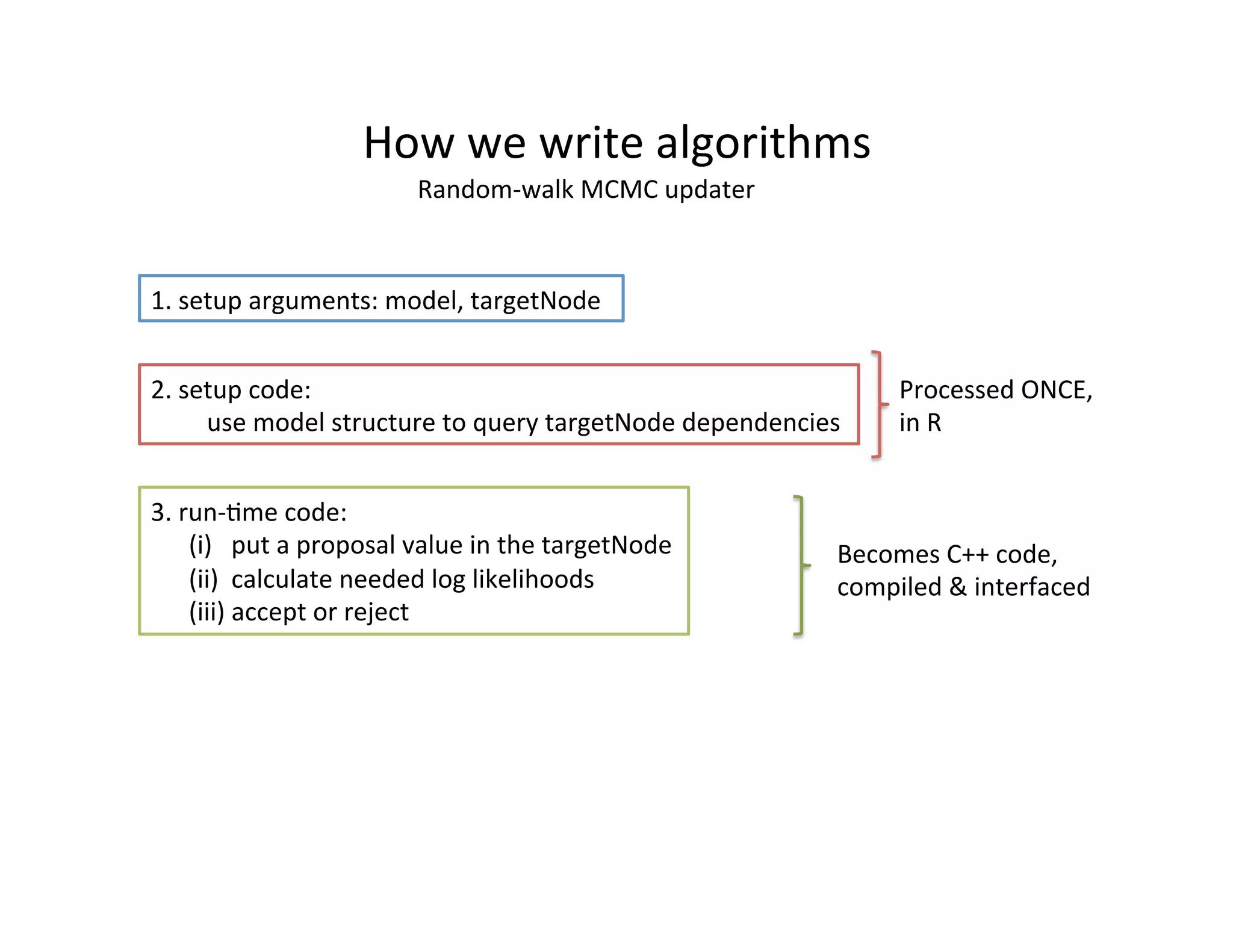

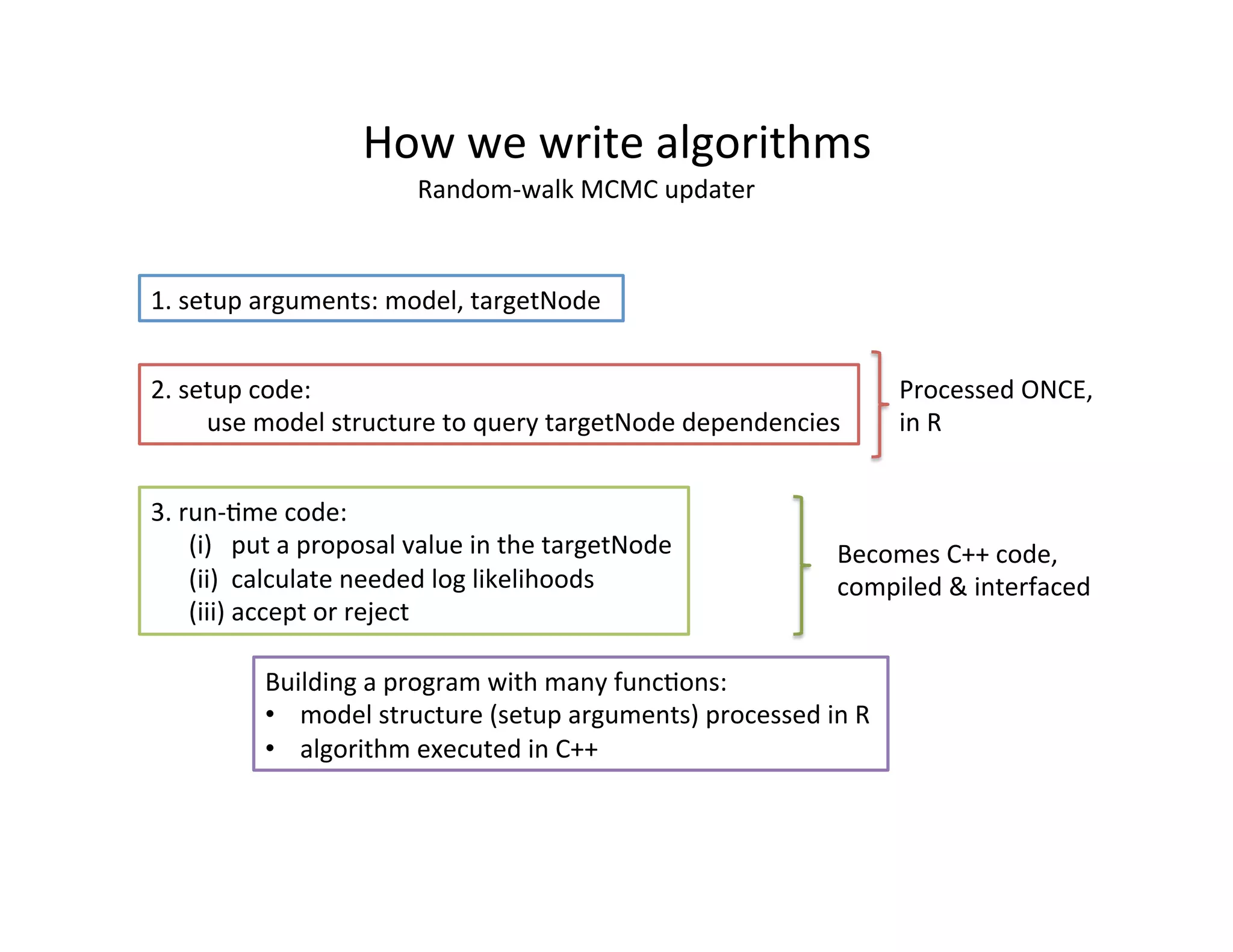

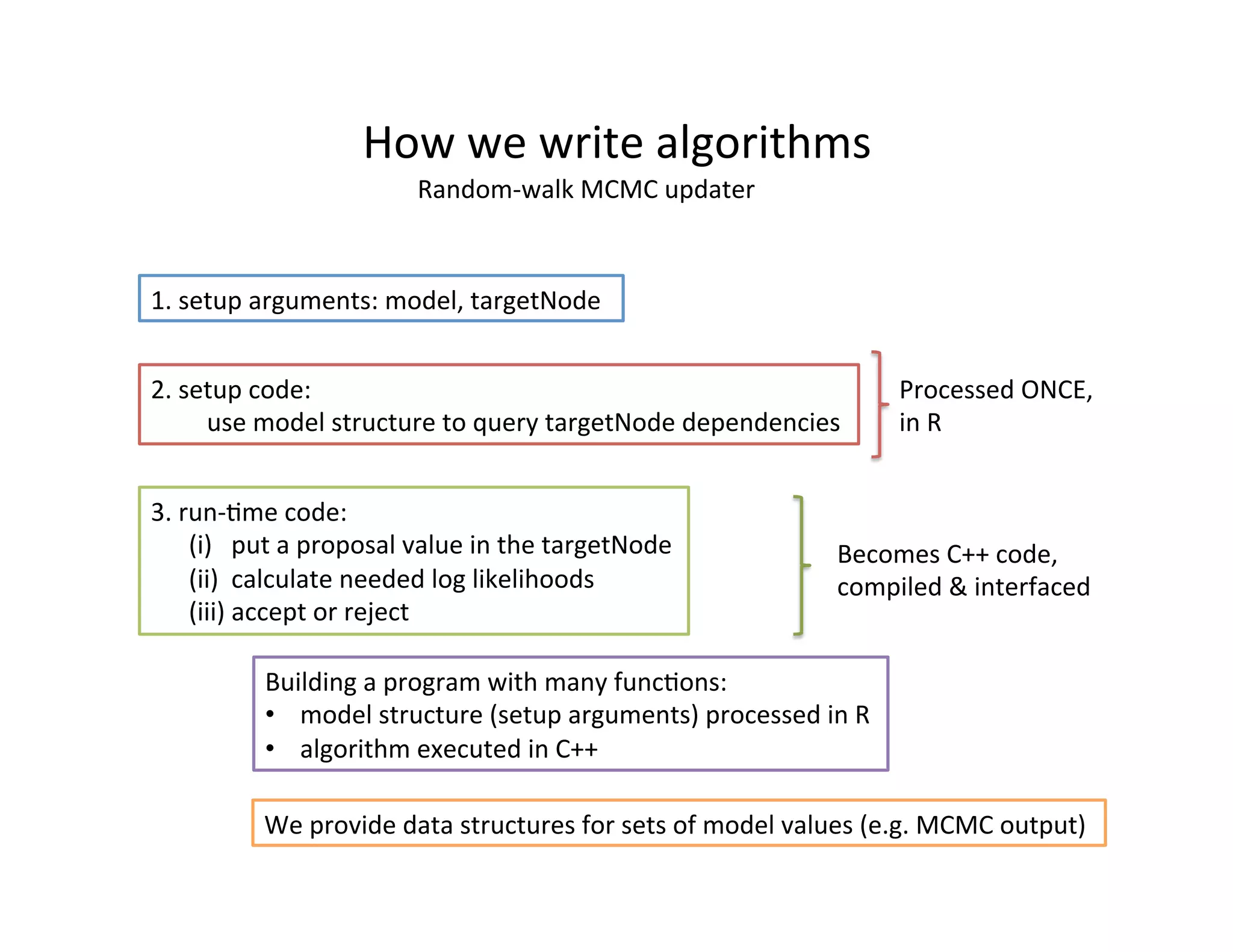

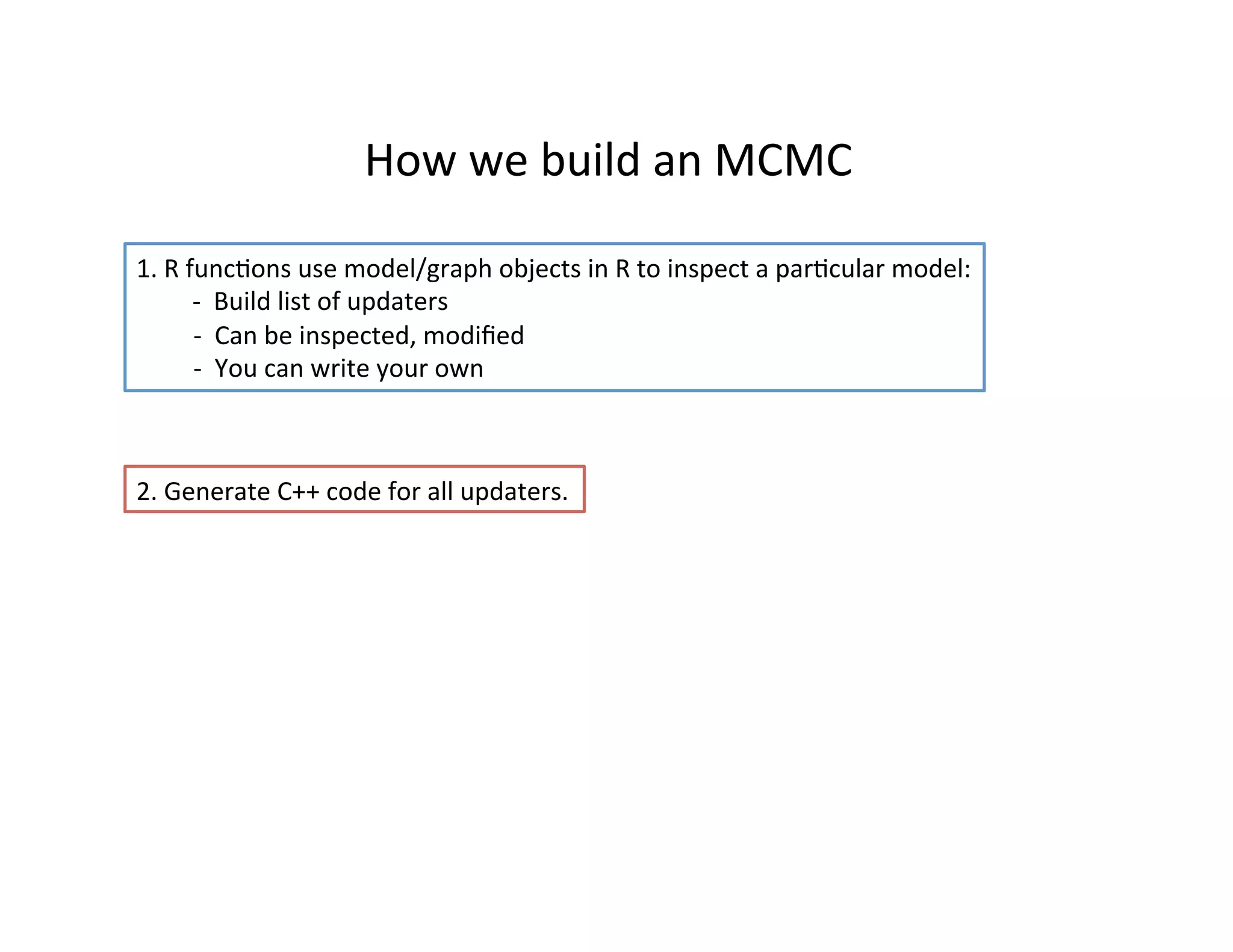

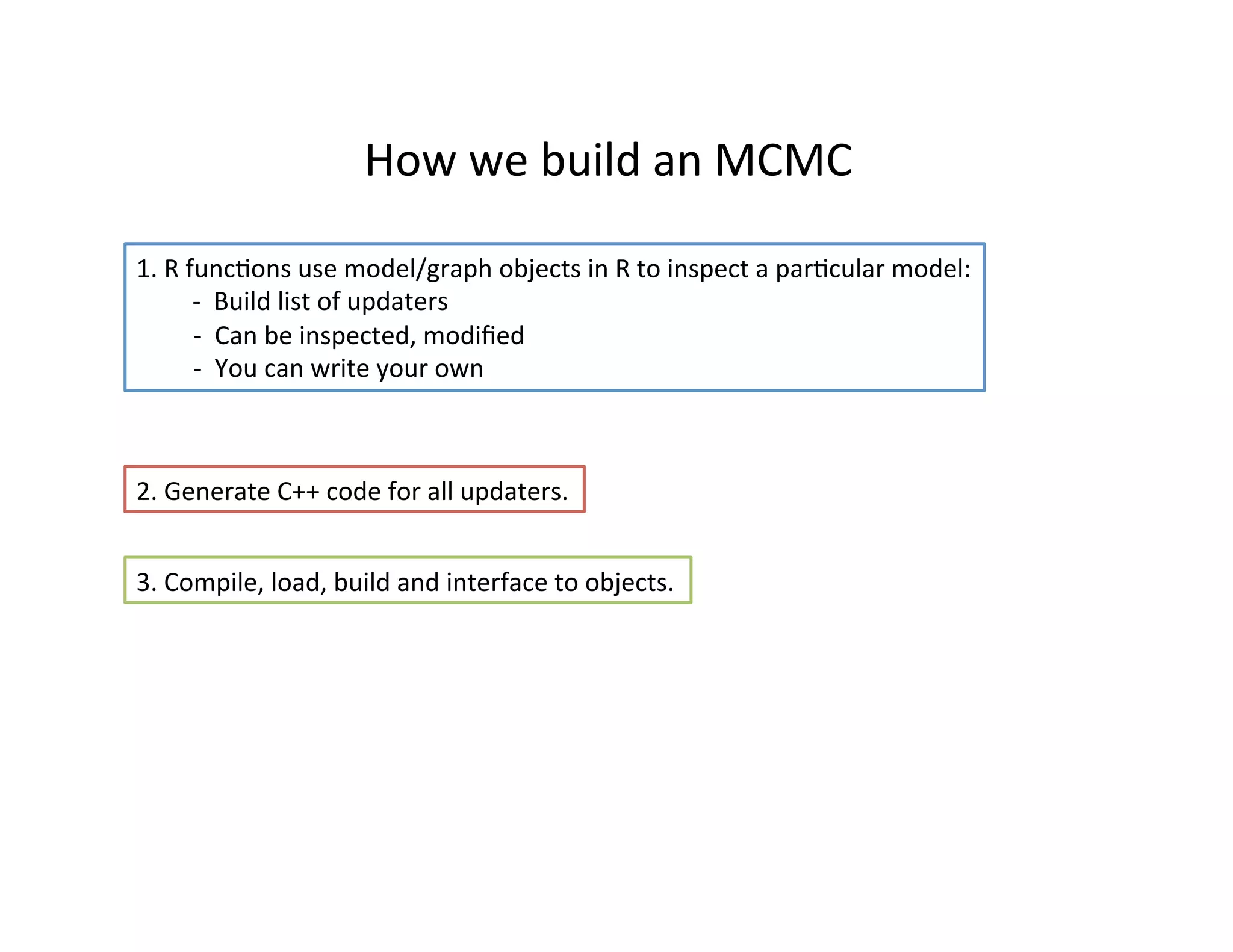

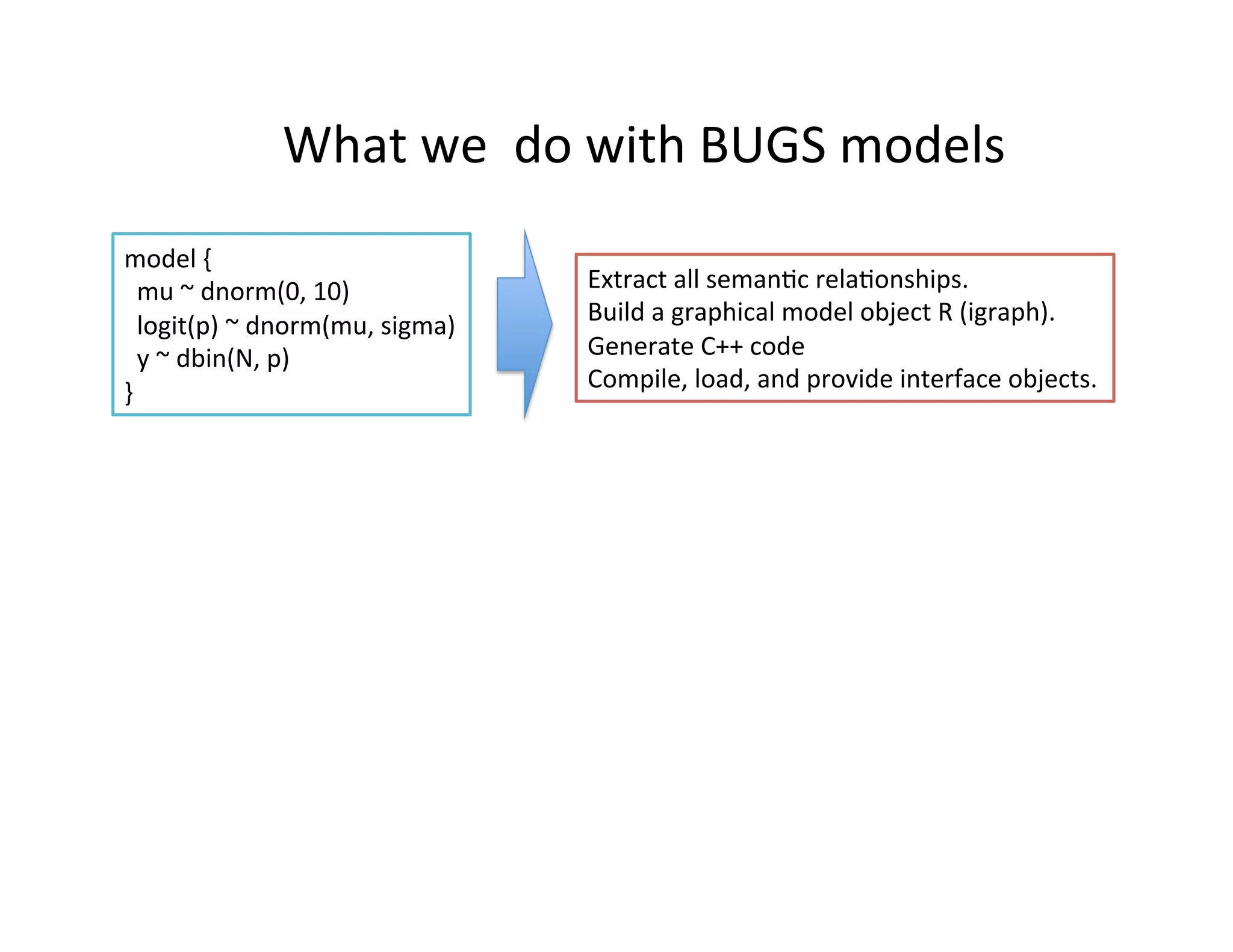

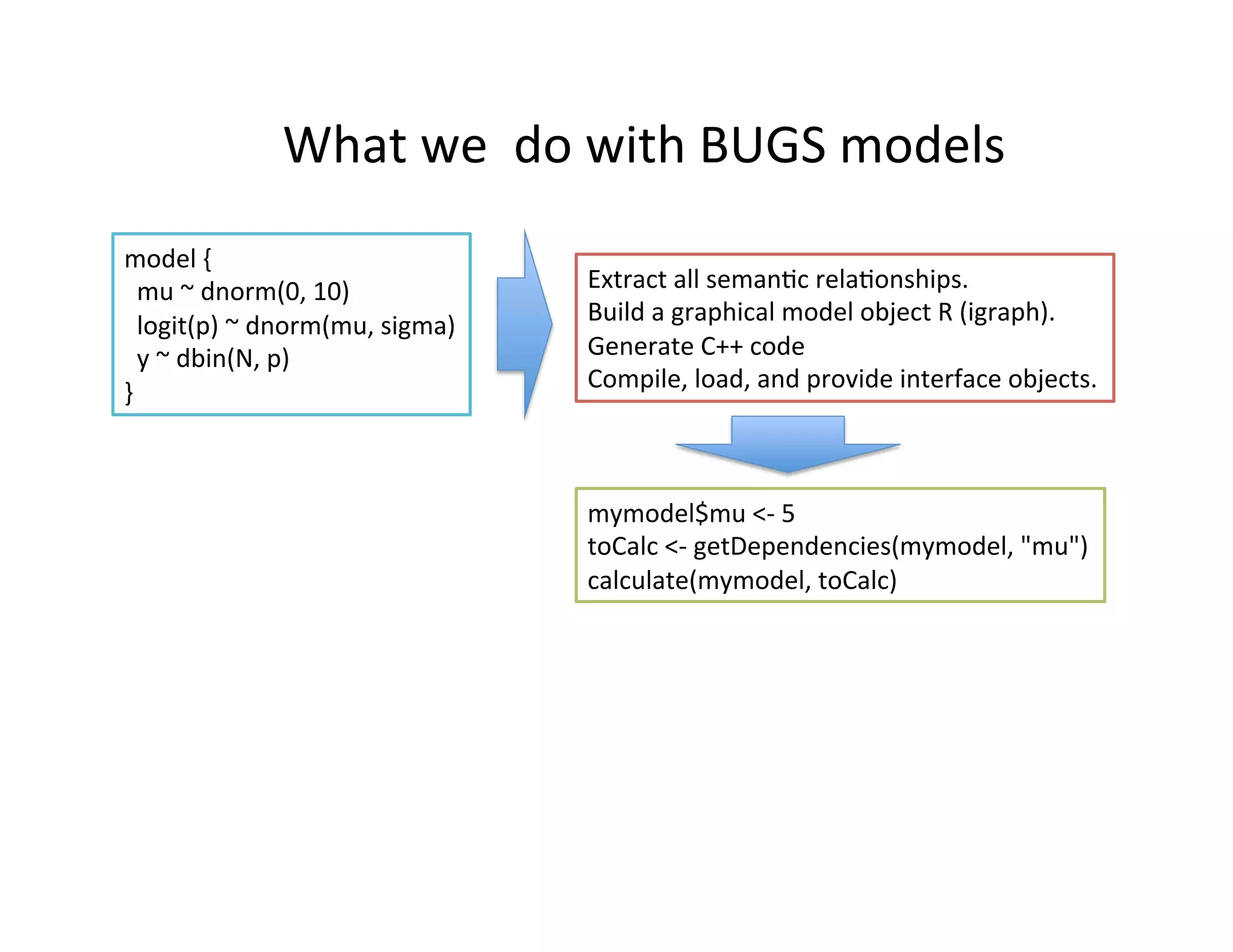

NIMBLE is a modeling language and algorithm programming framework for Bayesian and likelihood-based statistical analysis. It allows users to write custom algorithms that operate on statistical models specified in the BUGS language. The framework processes BUGS models to extract relationships, builds a graphical model object, generates C++ code, and provides interfaces to compiled algorithm functions. This allows for flexible and distributed development of advanced Bayesian computational methods.
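The first two stages of that pipeline, extracting relationships and building a graph, can be illustrated with a toy parser. What follows is a minimal Python sketch under stated assumptions, not NIMBLE's implementation: the regex-based parsing and the names `parse_declarations` and `build_children` are hypothetical, and only simple one-line declarations are handled.

```python
import re

# Three BUGS-style declarations, used here purely as illustrative input.
MODEL = """
mu ~ dnorm(0, 10)
logit(p) ~ dnorm(mu, sigma)
y ~ dbin(N, p)
"""

IDENT = re.compile(r"[A-Za-z_][A-Za-z0-9_.]*")


def parse_declarations(text):
    """Map each declared node to the set of symbols on its right-hand side."""
    parents = {}
    for line in text.strip().splitlines():
        lhs, rhs = re.split(r"~|<-", line, maxsplit=1)
        # Reduce a link function such as logit(p) to the node name p.
        link = re.fullmatch(r"\s*[A-Za-z_]\w*\((\w+)\)\s*", lhs)
        node = link.group(1) if link else lhs.strip()
        # Keep identifiers that are not function names (not followed by "(").
        symbols = {
            m.group(0)
            for m in IDENT.finditer(rhs)
            if not rhs[m.end():].lstrip().startswith("(")
        }
        parents[node] = symbols
    return parents


def build_children(parents):
    """Invert the parent map into directed edges between declared nodes."""
    children = {node: set() for node in parents}
    for node, symbols in parents.items():
        for symbol in symbols:
            if symbol in parents:  # undeclared symbols (N, sigma) are skipped
                children[symbol].add(node)
    return children


parents = parse_declarations(MODEL)
children = build_children(parents)
print(children)
```

Symbols that never appear on a left-hand side (`N` and `sigma` here) are treated as constants and get no graph node; that is a simplification chosen for this sketch.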

![de Valpine, NIMBLE, slide 6: What we do with BUGS models](https://image.slidesharecdn.com/devalpinenimble-140401233405-phpapp02/75/de-Valpine-NIMBLE-6-2048.jpg)

What we do with BUGS models

Some "automatic" extensions of BUGS

1. Expressions as arguments
2. Multiple parameterizations for distributions (e.g. precision or std. deviation)
3. Compile-time if-then-else
4. Single-line motifs: `y[1:10] ~ glmm(X * A + (1 | Group), family = "binomial")`

Example model:

    model {
        mu ~ dnorm(0, 10)
        logit(p) ~ dnorm(mu, sigma)
        y ~ dbin(N, p)
    }

Extract all semantic relationships.

Build a graphical model object in R (igraph).

Generate C++ code.

Compile, load, and provide interface objects.

    mymodel$mu <- 5
    toCalc <- getDependencies(mymodel, "mu")
    calculate(mymodel, toCalc)
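The `getDependencies` / `calculate` pattern at the end of the slide (set a value, find the nodes affected, recompute only those) can be sketched outside NIMBLE. The Python below is a hedged illustration, not NIMBLE's code: the node table, the helper names, the fixed values of `N` and `sigma`, and the reading of the second `dnorm` argument as a standard deviation are all assumptions. The traversal rule used here (include the node itself, pass through deterministic nodes, stop at the first stochastic descendants) is likewise an assumption of the sketch.

```python
import math


def norm_logpdf(x, mean, sd):
    """Log-density of a normal distribution parameterized by mean and sd."""
    return -0.5 * math.log(2 * math.pi * sd * sd) - (x - mean) ** 2 / (2 * sd * sd)


def binom_logpmf(k, n, p):
    """Log-probability of k successes in n Bernoulli(p) trials."""
    return math.log(math.comb(n, k)) + k * math.log(p) + (n - k) * math.log(1 - p)


# Hypothetical constants and current node values (not from the slide).
CONST = {"N": 20, "sigma": 1.0}
values = {"mu": 0.0, "logit_p": 0.0, "p": 0.5, "y": 7}

# name -> (kind, parents, function of the value table).
# Stochastic functions return a log-density; deterministic ones return a value.
NODES = {
    "mu": ("stoch", [], lambda v: norm_logpdf(v["mu"], 0.0, 10.0)),
    "logit_p": ("stoch", ["mu"],
                lambda v: norm_logpdf(v["logit_p"], v["mu"], CONST["sigma"])),
    "p": ("determ", ["logit_p"], lambda v: 1.0 / (1.0 + math.exp(-v["logit_p"]))),
    "y": ("stoch", ["p"], lambda v: binom_logpmf(v["y"], CONST["N"], v["p"])),
}
ORDER = ["mu", "logit_p", "p", "y"]  # a topological order of the graph


def get_dependencies(node):
    """Return the node plus its descendants, stopping at stochastic nodes."""
    deps = {node}
    frontier = [node]
    while frontier:
        current = frontier.pop()
        for name in ORDER:
            kind, parents, _ = NODES[name]
            if current in parents and name not in deps:
                deps.add(name)
                if kind == "determ":  # only deterministic nodes are expanded
                    frontier.append(name)
    return [name for name in ORDER if name in deps]


def calculate(nodes):
    """Update deterministic nodes and sum log-densities of stochastic ones."""
    total = 0.0
    for name in nodes:
        kind, _, fn = NODES[name]
        if kind == "determ":
            values[name] = fn(values)
        else:
            total += fn(values)
    return total


values["mu"] = 5.0                 # analogous to: mymodel$mu <- 5
to_calc = get_dependencies("mu")   # analogous to: getDependencies(mymodel, "mu")
log_prob = calculate(to_calc)      # analogous to: calculate(mymodel, toCalc)
print(to_calc, log_prob)
```

Changing `mu` touches only `mu` and `logit_p` in this sketch, so the binomial term for `y` is not re-evaluated; avoiding that unnecessary work is the reason for asking the graph which nodes depend on a change.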