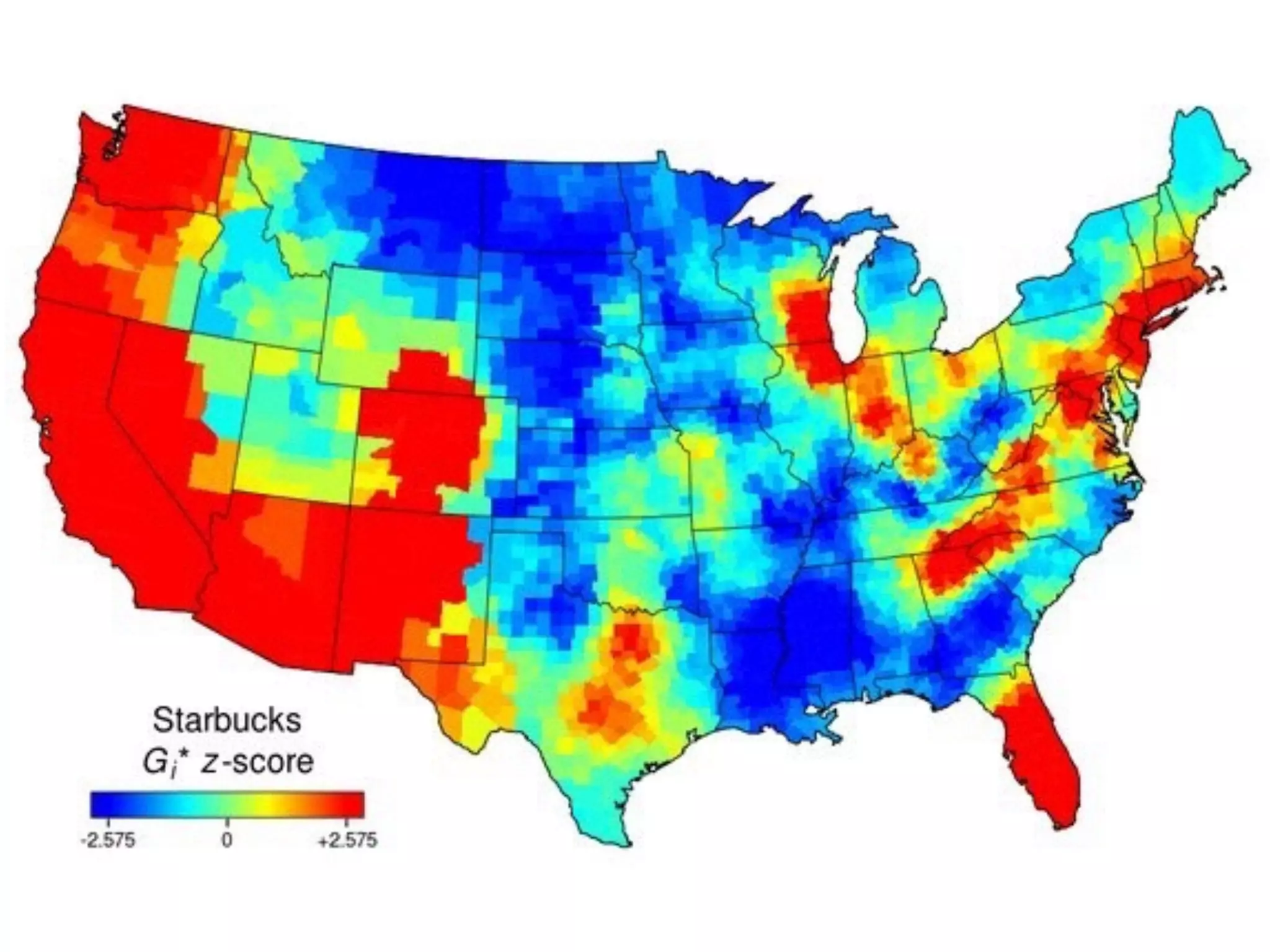

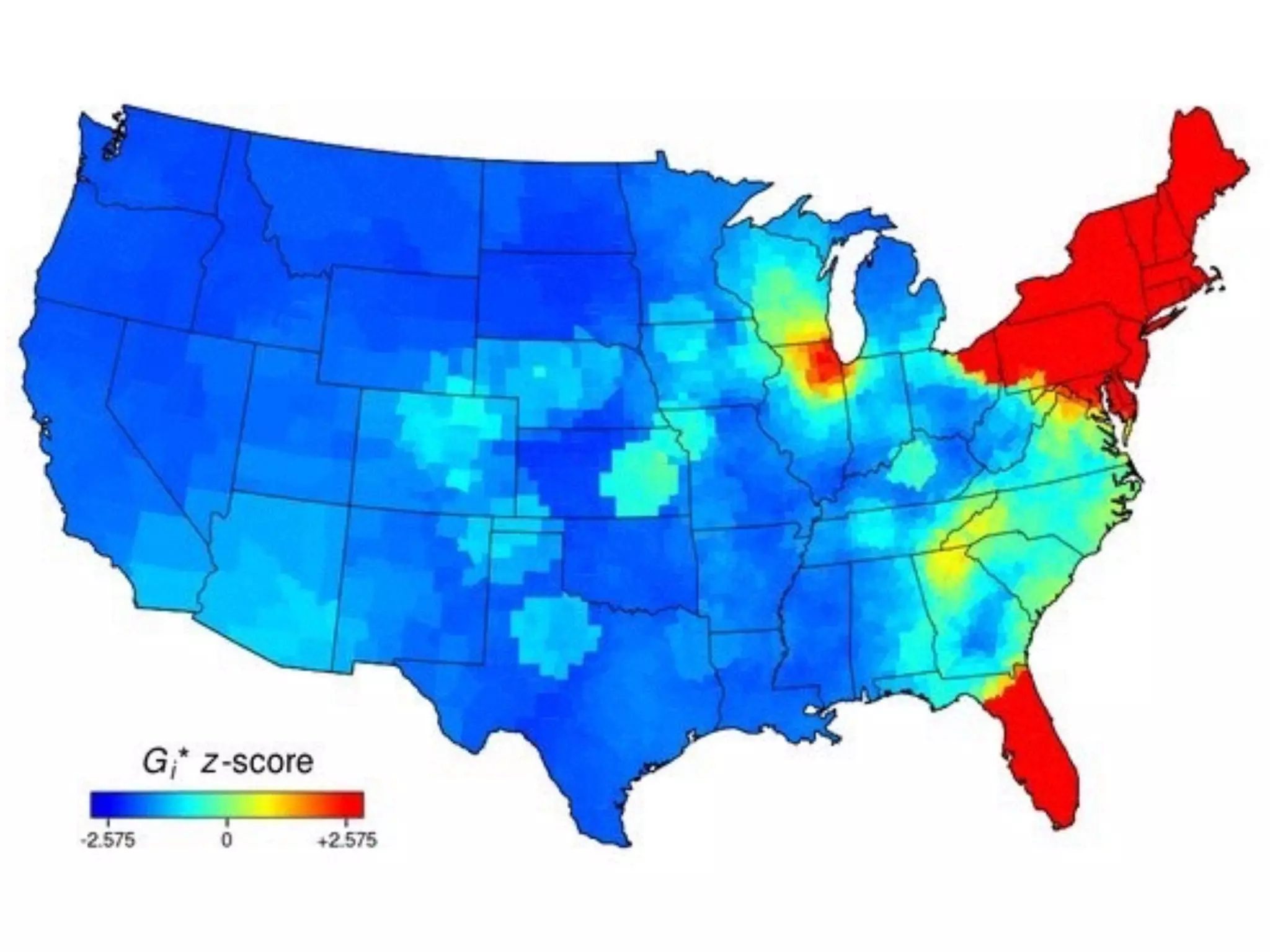

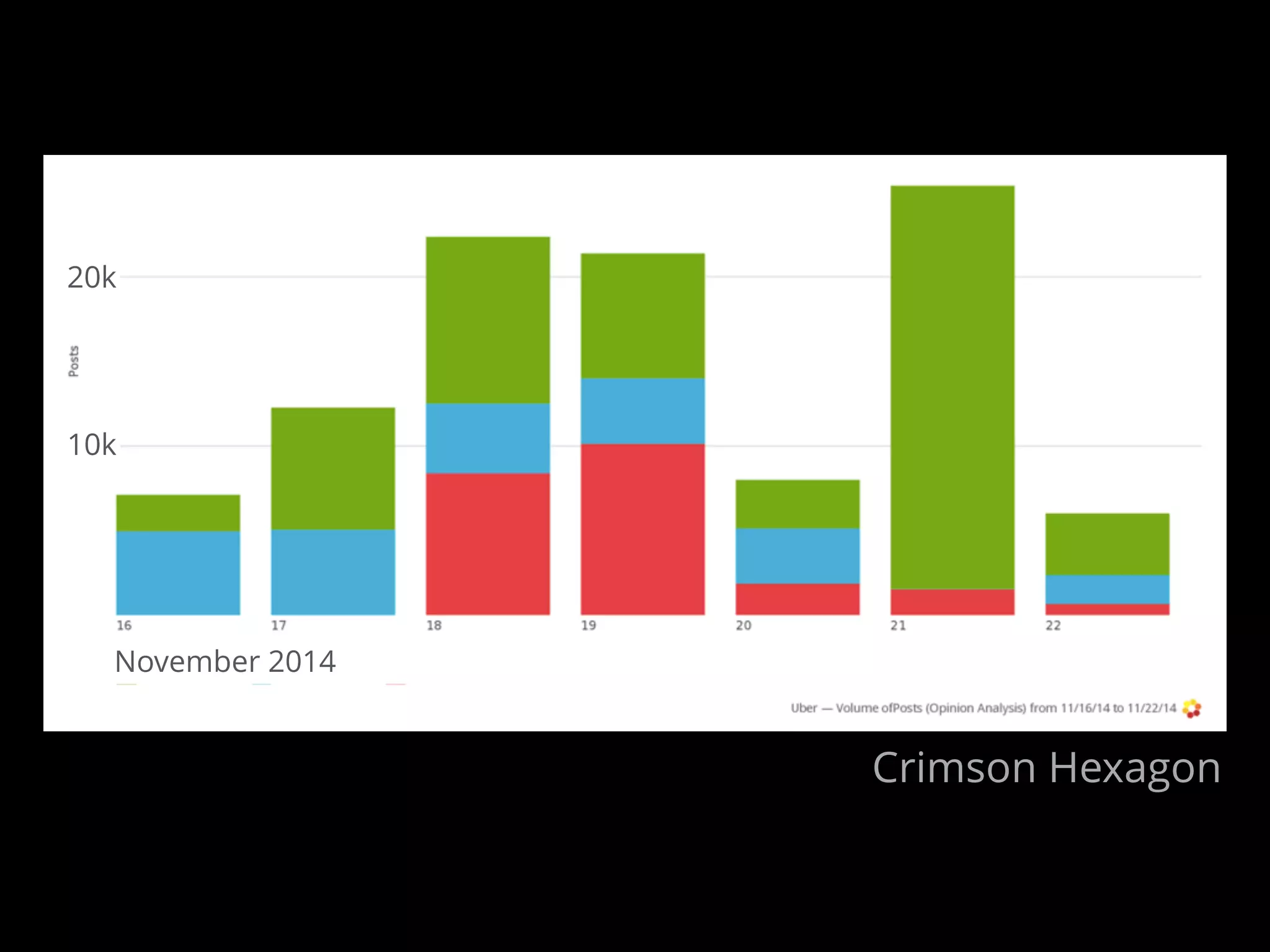

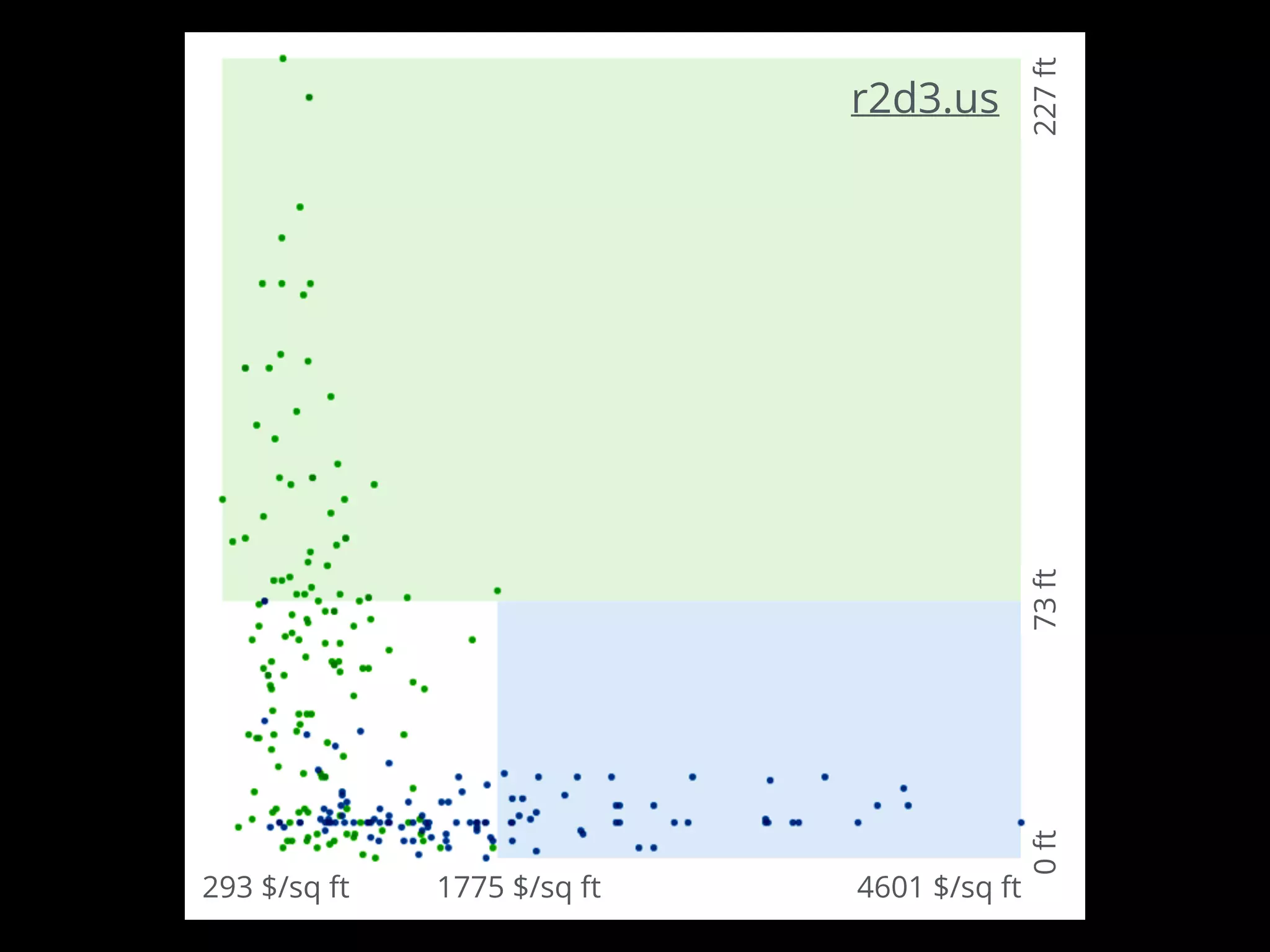

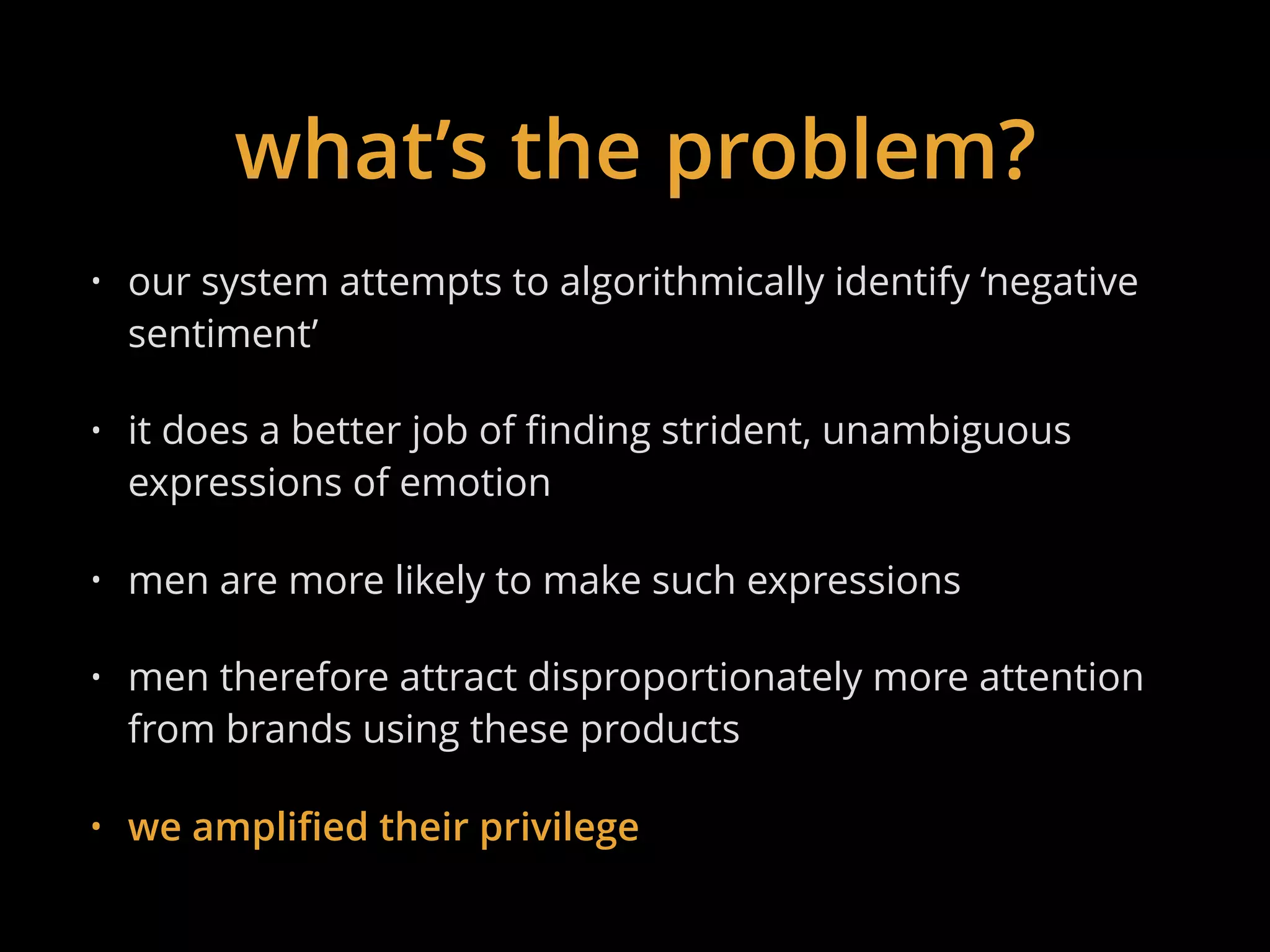

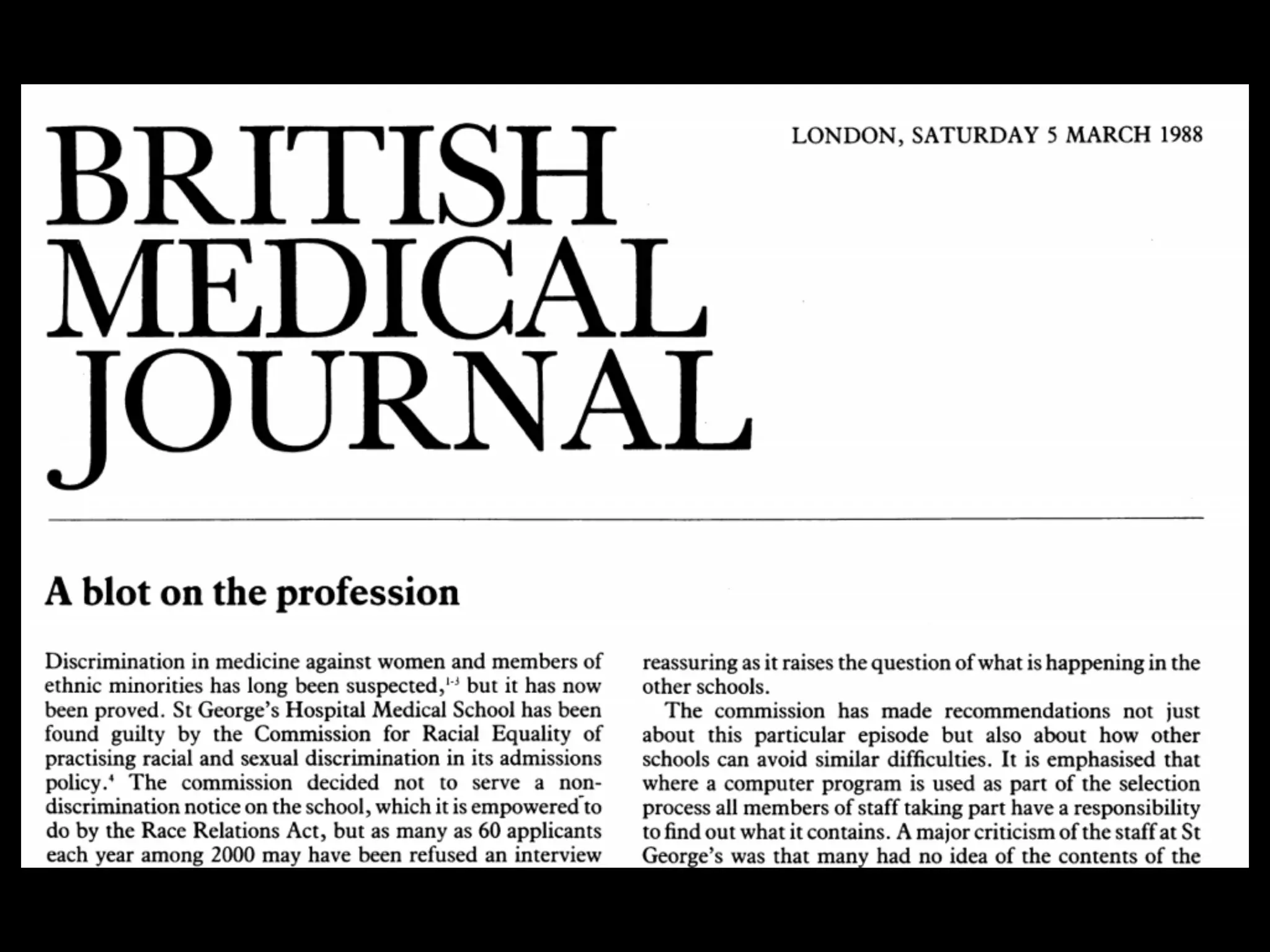

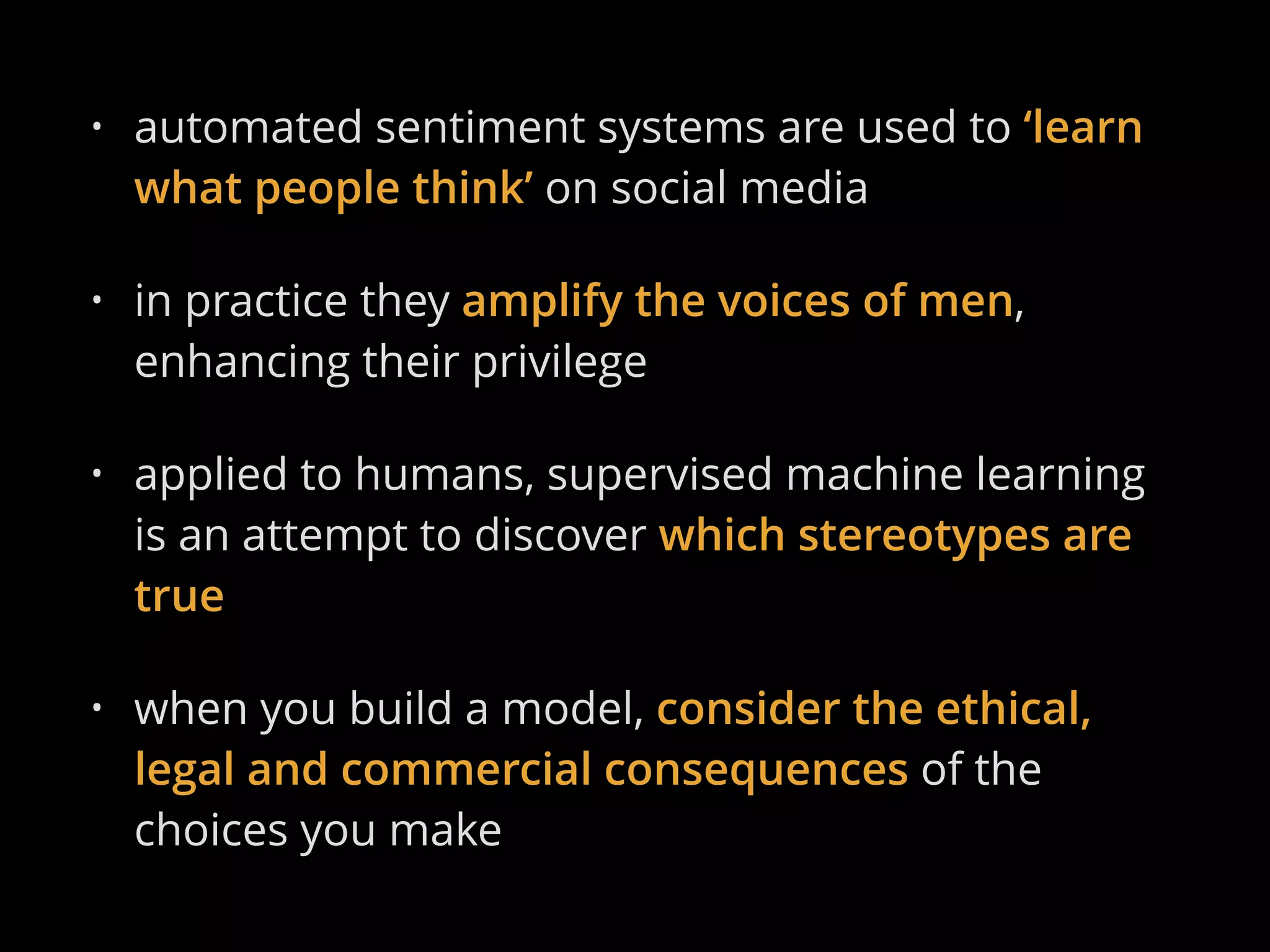

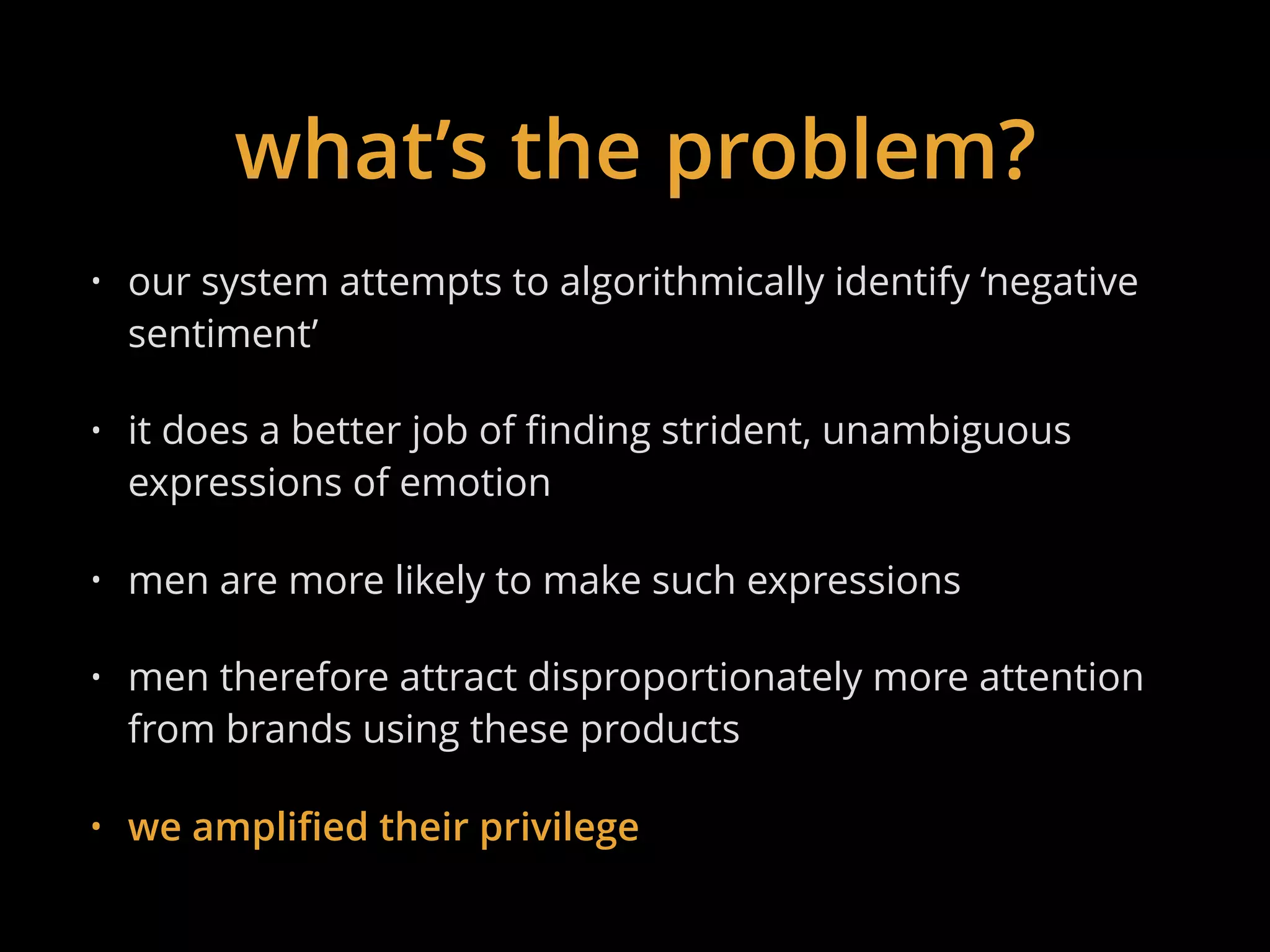

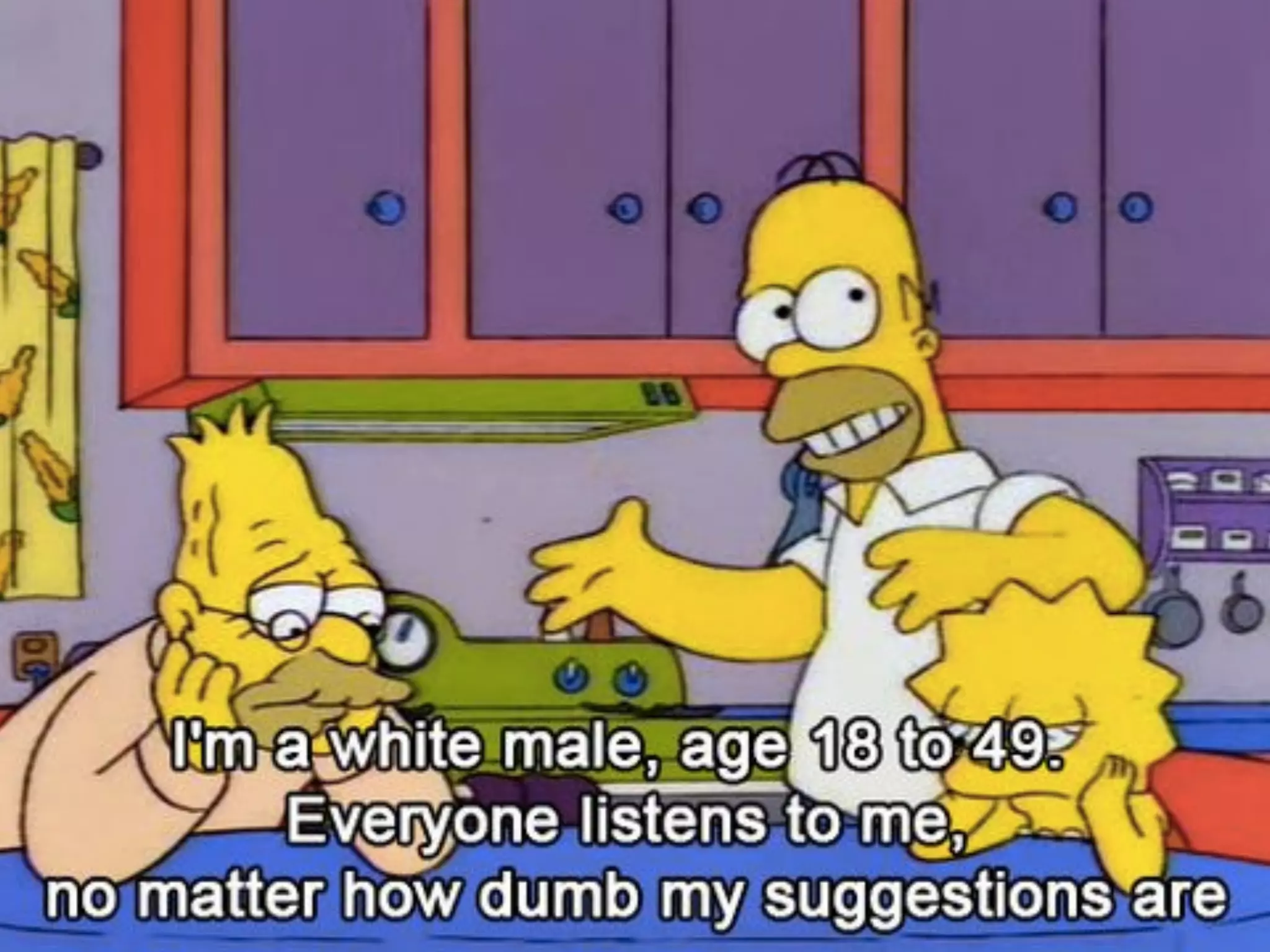

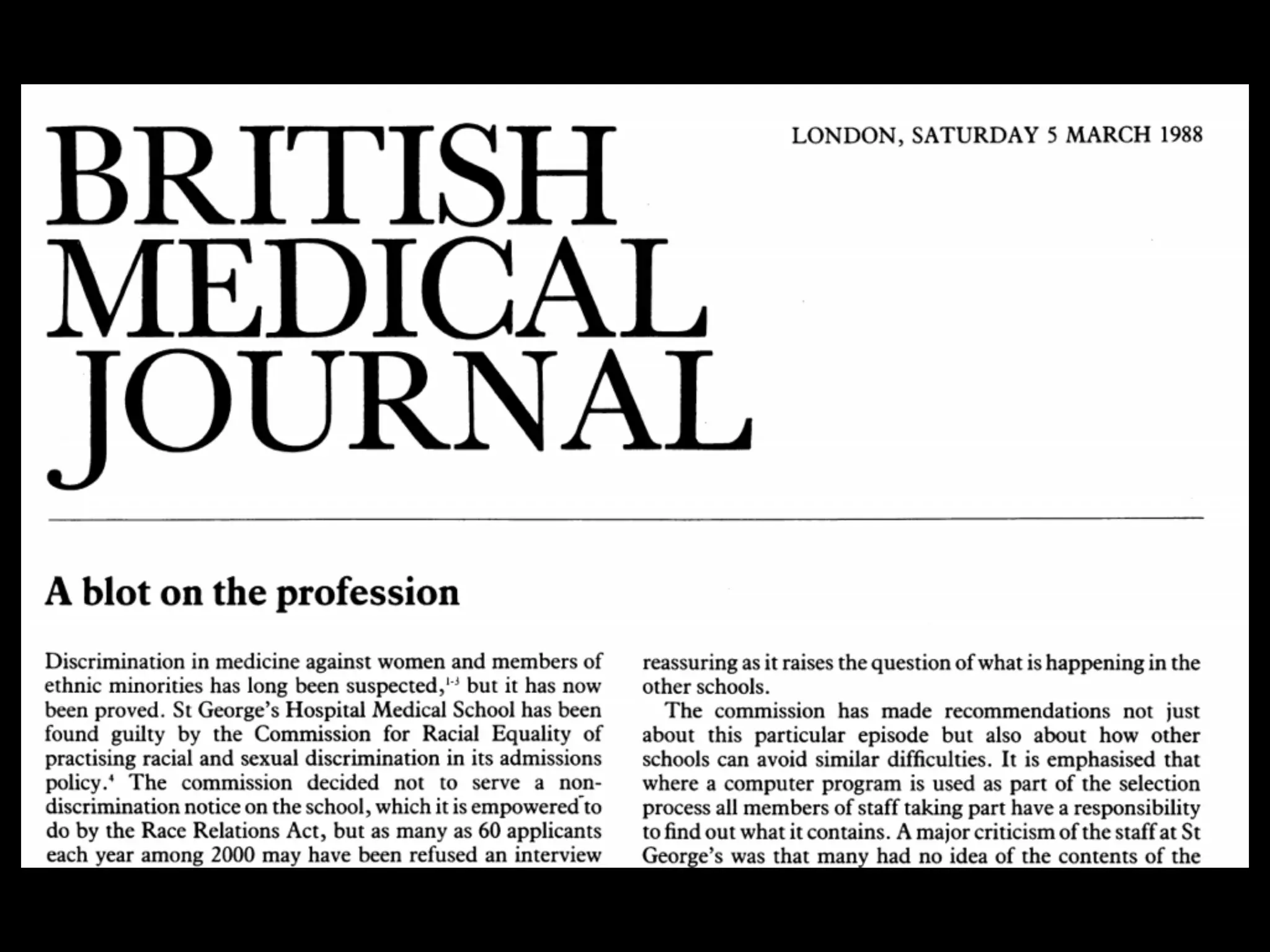

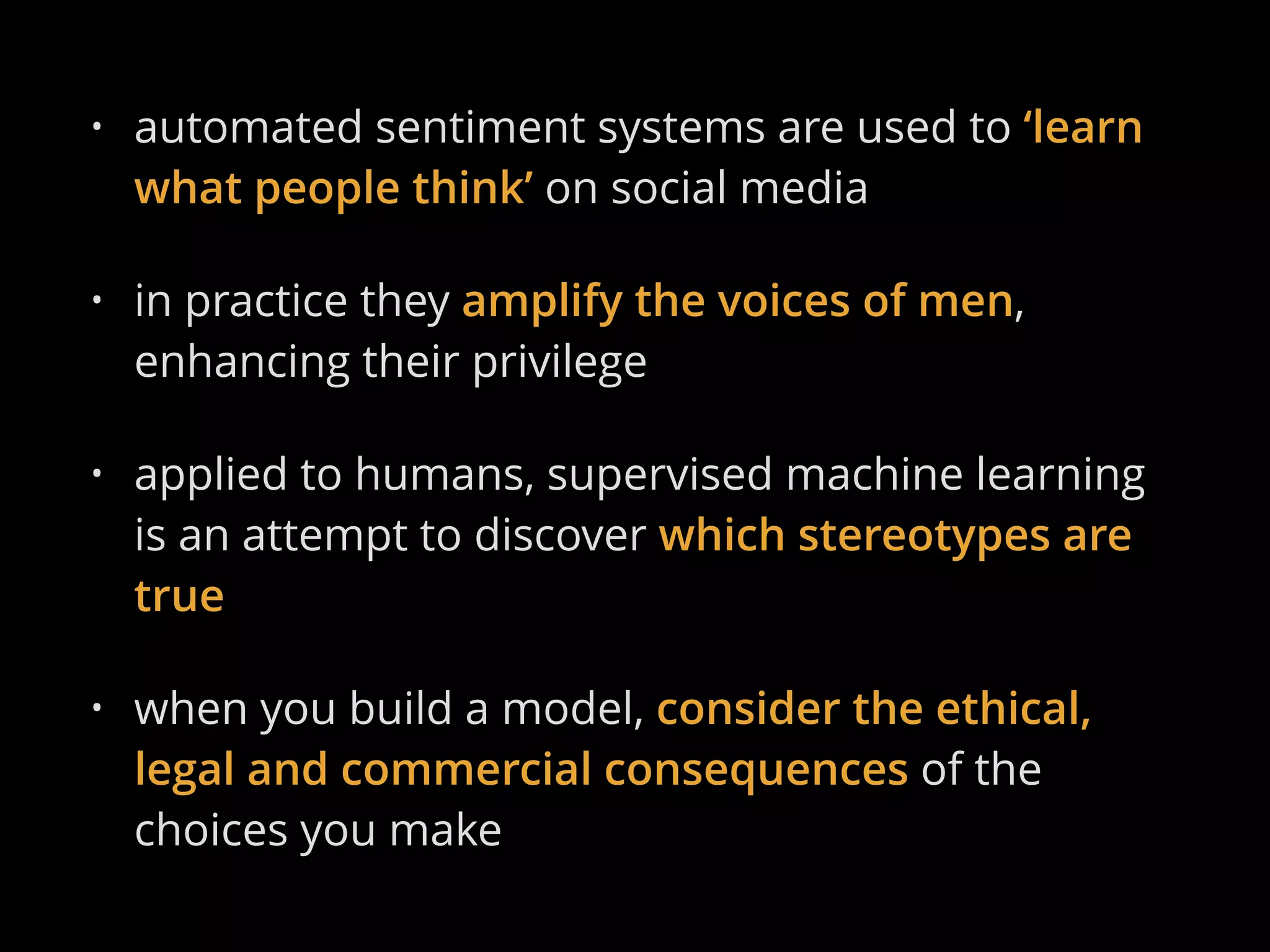

Mike Williams discusses bias and ethics in supervised machine learning, particularly in sentiment analysis of social media. He highlights how algorithms that focus on clear expressions of emotion can amplify men's voices and privilege, recapitulating historical biases in the training data. The talk emphasizes the need to consider the ethical, legal, and commercial consequences of building such models.