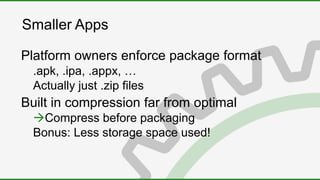

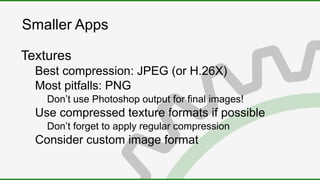

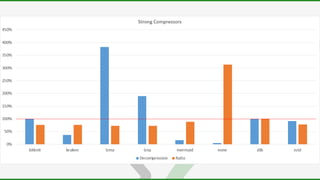

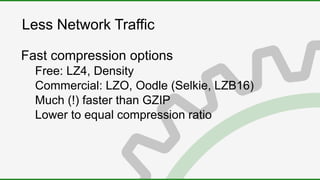

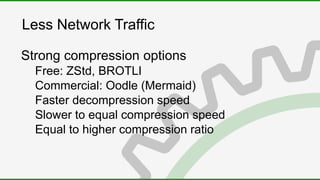

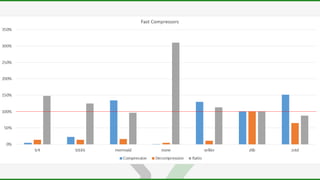

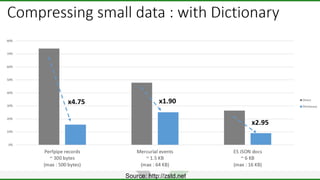

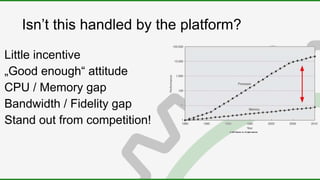

The document discusses why data compression matters for smaller app sizes and reduced network traffic. Compression can cut data costs and improve performance by shrinking downloads and shortening load times. Platforms aim for "good enough" compression, but many techniques can beat defaults such as DEFLATE. These include lossless algorithms such as Brotli, which typically compresses more densely, and LZ4, which trades ratio for much higher speed, as well as lossy compression of textures, 3D models, and sounds. The document gives examples of how to compress different data types and weighs compression speed against compression ratio.
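The speed-versus-ratio tradeoff mentioned above can be measured directly. The following is a minimal sketch, not taken from the slides: it uses only Python's standard library, so `zlib` stands in for DEFLATE and `lzma` stands in for a denser but slower codec (Brotli and LZ4 need third-party packages). The `measure` helper and the sample `payload` are hypothetical names chosen for illustration.

```python
import lzma
import time
import zlib


def measure(name, compress, data):
    """Return (name, compression ratio, elapsed seconds) for one compressor."""
    start = time.perf_counter()
    packed = compress(data)
    elapsed = time.perf_counter() - start
    return name, len(data) / len(packed), elapsed


# Hypothetical sample payload: highly repetitive JSON-like text.
payload = b'{"id": 12345, "name": "example", "tags": ["a", "b", "c"]}\n' * 20000

# zlib implements DEFLATE; levels 1..9 trade speed for ratio.
# lzma is a stand-in for a "denser but slower" codec, not for Brotli itself.
candidates = [
    ("zlib level 1", lambda d: zlib.compress(d, 1)),
    ("zlib level 6", lambda d: zlib.compress(d, 6)),
    ("zlib level 9", lambda d: zlib.compress(d, 9)),
    ("lzma preset 6", lambda d: lzma.compress(d, preset=6)),
]

results = [measure(name, fn, payload) for name, fn in candidates]
for name, ratio, seconds in results:
    print(f"{name:14s} ratio {ratio:8.1f}x  time {seconds * 1000:8.2f} ms")
```

The numbers depend heavily on the input, so real assets (textures, JSON, binaries) should be measured rather than a synthetic payload like this one.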

![Compression in theory. Wikipedia: "[...] encoding information using fewer bits than the original representation"](https://image.slidesharecdn.com/datacompression2020-200219091845/85/Data-Compression-2020-4-320.jpg)