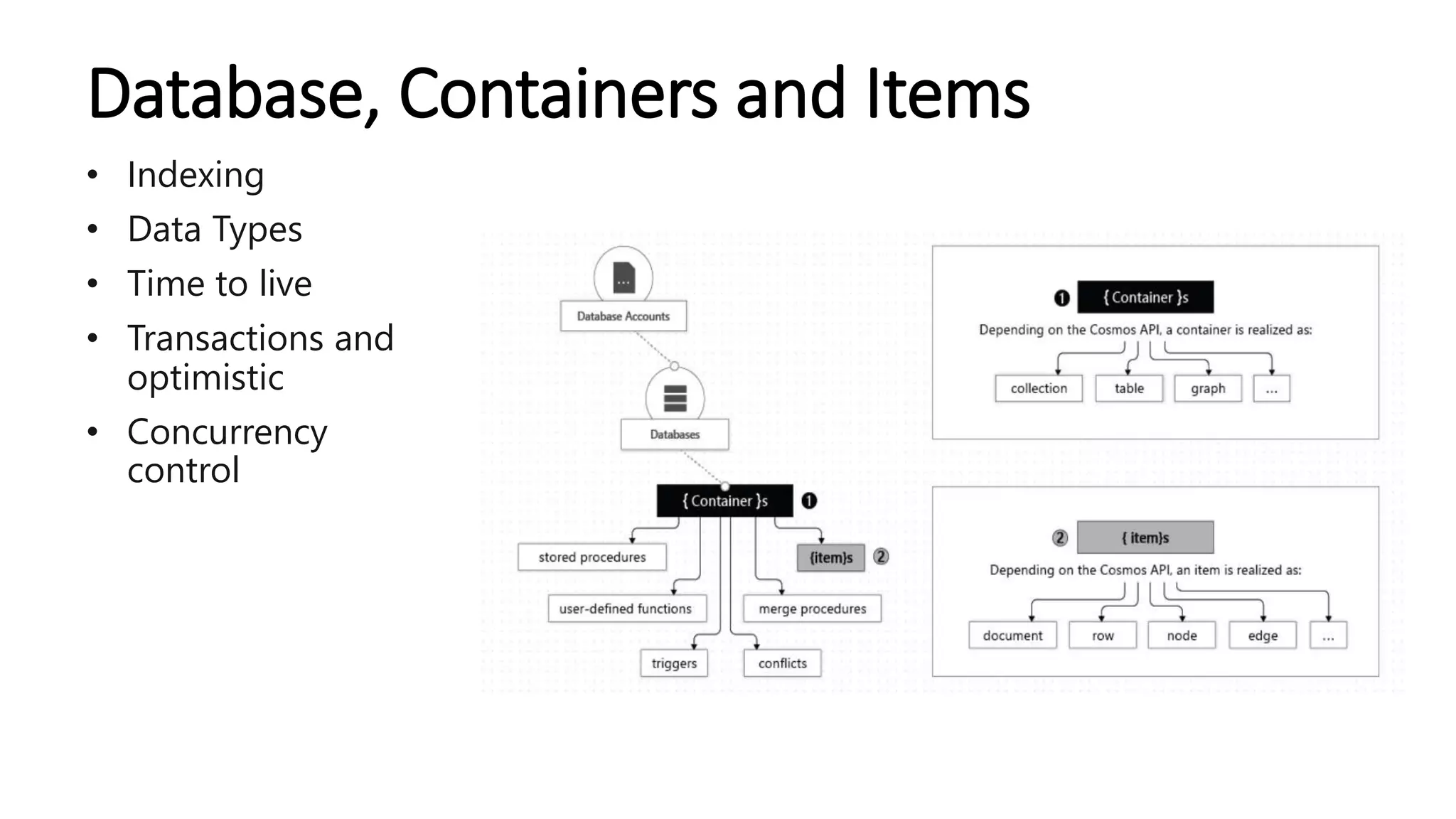

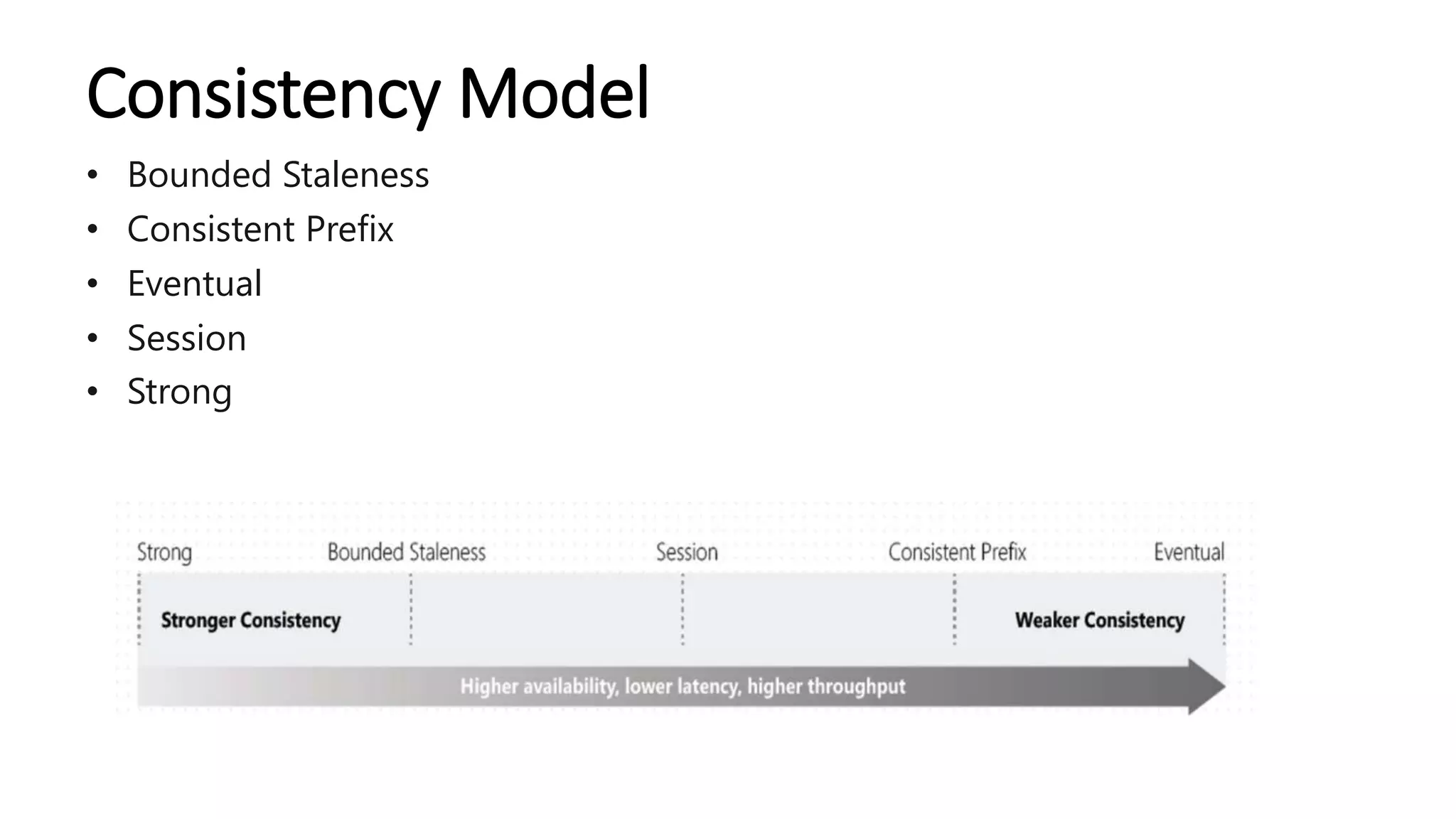

The document gives an overview of Azure Cosmos DB. It covers the supported APIs (SQL, MongoDB, Gremlin, and Cassandra) and the service's ability to handle high volumes of dynamic, real-time data. It highlights the platform's main benefits: global distribution, high availability, a choice of consistency models, data movement integrations, and operational management, including an emulator. The agenda also covers data indexing, transactions, and integration options with other Azure services.