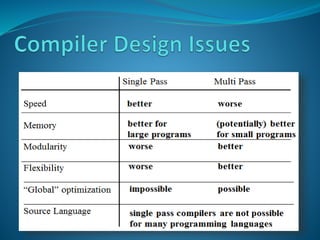

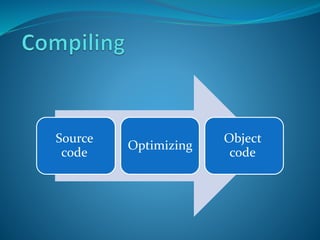

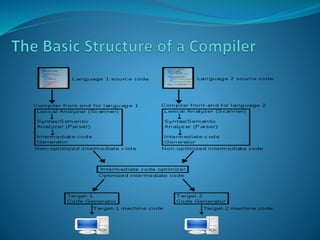

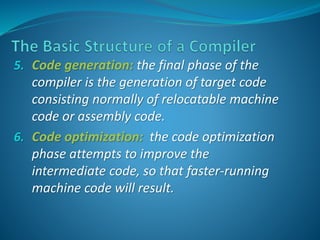

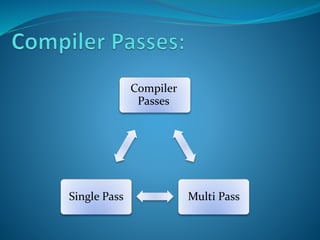

A compiler is a program that translates source code written in one programming language into an equivalent program in a target language. It works in phases: lexical analysis, parsing, semantic analysis, optimization, and code generation. The front end checks syntax and semantics, the middle end performs optimizations, and the back end generates target code such as assembly or object code. Compilers can be single-pass or multi-pass and are typically used to translate high-level languages such as C into machine-executable object code.
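To make the phases concrete, here is a minimal sketch of a compiler for simple `+`/`-` expressions. It is an illustration under stated assumptions, not a reference design: all function names (`tokenize`, `parse`, `check`, `fold`, `generate`, `compile_expression`) are hypothetical, the target is an assumed stack machine with `LOADL`, `LOAD d[SB]`, and `CALL` instructions, and the symbol table is simplified to a plain dictionary from identifier to address.

```python
import re

# One token per match: a number, an identifier, or any other single character.
TOKEN_RE = re.compile(r"\s*(?:(\d+)|([A-Za-z_]\w*)|(.))")


def tokenize(source):
    """Lexical analysis: turn characters into (kind, value) tokens."""
    tokens = []
    for number, name, symbol in TOKEN_RE.findall(source):
        if number:
            tokens.append(("num", int(number)))
        elif name:
            tokens.append(("id", name))
        elif symbol.strip():  # skip stray whitespace
            tokens.append(("op", symbol))
    return tokens


def parse(tokens):
    """Parsing: build an AST for  expr ::= operand (('+' | '-') operand)*."""
    pos = 0

    def operand():
        nonlocal pos
        if pos >= len(tokens) or tokens[pos][0] == "op":
            raise SyntaxError("expected a number or identifier")
        token = tokens[pos]
        pos += 1
        return token

    tree = operand()
    while pos < len(tokens):
        kind, value = tokens[pos]
        if kind != "op" or value not in "+-":
            raise SyntaxError(f"unexpected token {value!r}")
        pos += 1
        tree = ("binop", value, tree, operand())
    return tree


def check(tree, symbols):
    """Semantic analysis: every identifier must be declared."""
    if tree[0] == "id" and tree[1] not in symbols:
        raise NameError(f"undeclared identifier {tree[1]!r}")
    if tree[0] == "binop":
        check(tree[2], symbols)
        check(tree[3], symbols)


def fold(tree):
    """Optimization (middle end): fold operations on two constants."""
    if tree[0] != "binop":
        return tree
    _, op, left, right = tree
    left, right = fold(left), fold(right)
    if left[0] == "num" and right[0] == "num":
        return ("num", left[1] + right[1] if op == "+" else left[1] - right[1])
    return ("binop", op, left, right)


def generate(tree, symbols):
    """Code generation (back end): emit code for the assumed stack machine."""
    if tree[0] == "num":
        return [f"LOADL {tree[1]}"]
    if tree[0] == "id":
        return [f"LOAD {symbols[tree[1]]}[SB]"]
    _, op, left, right = tree
    routine = "add" if op == "+" else "sub"
    return generate(left, symbols) + generate(right, symbols) + [f"CALL {routine}"]


def compile_expression(source, symbols):
    """Run the phases in order: front end, middle end, back end."""
    tree = parse(tokenize(source))
    check(tree, symbols)
    return generate(fold(tree), symbols)


if __name__ == "__main__":
    for instruction in compile_expression("n + 1", {"n": 0}):
        print(instruction)
```

Each phase is a separate function over an explicit intermediate form (tokens, then a tree, then instructions), which is the multi-pass style; a single-pass compiler would interleave these steps and emit code while parsing.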

![Source program, object code, and symbol table](https://image.slidesharecdn.com/compilerpresentaion-141017065108-conversion-gate02/85/Compiler-presentaion-18-320.jpg)

Source program:

    let var n: integer;
        var c: char
    in begin
      c := '&';
      n := n+1
    end

Object code:

    PUSH 2
    LOADL 38
    STORE 1[SB]
    LOAD 0[SB]
    LOADL 1
    CALL add
    STORE 0[SB]
    POP 2
    HALT

Symbol table:

| Ident | Type | Address |
| --- | --- | --- |
| n | int | 0[SB] |
| c | char | 1[SB] |
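The object code on this slide can be traced with a small interpreter. The sketch below is a guess at the instruction semantics, not a specification of the real machine: it assumes `PUSH k` reserves `k` zero-initialized words, `LOADL` pushes a literal, `LOAD d[SB]` and `STORE d[SB]` read and write the word at offset `d` from the stack base, `CALL add` replaces the top two words with their sum, and `POP k` discards `k` words. The names `run` and `address` are hypothetical.

```python
import re

# Object code copied from the slide.
PROGRAM = [
    "PUSH 2",       # reserve space for n (0[SB]) and c (1[SB])
    "LOADL 38",     # 38 is the character code of '&'
    "STORE 1[SB]",  # c := '&'
    "LOAD 0[SB]",   # push n
    "LOADL 1",      # push 1
    "CALL add",     # n + 1
    "STORE 0[SB]",  # n := n + 1
    "POP 2",        # release n and c
    "HALT",
]


def address(operand):
    """Extract d from an operand of the form d[SB]."""
    match = re.fullmatch(r"(\d+)\[SB\]", operand)
    if match is None:
        raise ValueError(f"unsupported operand {operand!r}")
    return int(match.group(1))


def run(program):
    """Execute the program; return (instruction, stack snapshot) pairs."""
    stack = []
    trace = []
    for line in program:
        op, _, arg = line.partition(" ")
        if op == "PUSH":
            stack.extend([0] * int(arg))  # assumption: new words start at 0
        elif op == "LOADL":
            stack.append(int(arg))
        elif op == "LOAD":
            stack.append(stack[address(arg)])
        elif op == "STORE":
            value = stack.pop()
            stack[address(arg)] = value
        elif op == "CALL" and arg == "add":
            right = stack.pop()
            left = stack.pop()
            stack.append(left + right)
        elif op == "POP":
            del stack[len(stack) - int(arg):]
        elif op == "HALT":
            break
        else:
            raise ValueError(f"unsupported instruction {line!r}")
        trace.append((line, list(stack)))
    return trace


if __name__ == "__main__":
    for instruction, snapshot in run(PROGRAM):
        print(f"{instruction:<12} {snapshot}")
```

Under these assumptions, the stack holds `n = 1` and `c = 38` just before `POP 2` releases both variables. The source program never initializes `n`, so the starting value of 0 comes from the interpreter, not from the language.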