Chapter 2 Program language translation.pptx

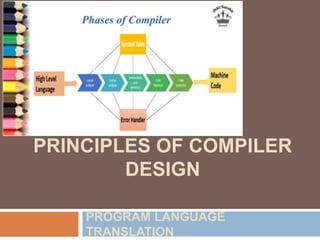

- 1. CHAPTER 2: PRINCIPLES OF COMPILER DESIGN PROGRAM LANGUAGE TRANSLATION

- 2. Why Study Compilers? Reason #1: understand compilers and languages. Understand the code structure and language semantics. Understand relation between source code and generated machine code. Allow to become a better programmer and increase programmer productivity and portability Reason #2: nice balance of theory and practice. Theory: Mathematical models: regular expressions, automata, grammars, graphs. Algorithms that use these models. Practice: Apply theoretical notions to build a real compiler. Reason #3: programming experience. Creating a compiler entails writing a large computer program which manipulates complex data structures and implement sophisticated algorithm Increasing programming capability

- 3. Language Processors: Computer programs are generally written in high-level languages (like C++, Python, and Java). A language processor, or language translator, is a computer program that convert source code from one programming language to another language or human readable language. They also find errors during translation.

- 4. Language Processors: Language Processors includes – Compilers and translators, – Interpreters, – Pre-processors, – Assemblers, – Linkers, – Loaders

- 5. Compilers Compiler is a program which translates source program written in one language to an equivalent program in other language (the target language). Usually the source language is a high level language like Java, C, C++ etc. whereas the target language is machine code or "code" that a computer's processor understands. An important role of the compiler is to report any errors in the source program that it detects during the translation process.

- 6. Compilers Figure 2.1: A compiler

- 7. Compilers If the target program is an executable machine-language program, it can then be called by the user to process inputs and produce outputs; Interpreters - translate programs written in high-level languages into machine code that a computer understands. .

- 8. Example 2.1: Java language processors combine compilation and interpretation. A Java source program may first be compiled into an intermediate form called bytecodes. The bytecodes are then interpreted by a virtual machine. A benefit of this arrangement is that bytecodes compiled on one machine can be interpreted on another machine, perhaps across a network. In order to achieve faster processing of inputs to outputs, some Java compilers, called just-in-time compilers, translate the bytecodes into machine language immediately before they run the intermediate program to process the input.

- 9. Example 2.1: In order to achieve faster processing of inputs to outputs, some Java compilers, called just-in-time compilers, translate the bytecodes into machine language immediately before they run the intermediate program to process the input.

- 10. Introduction to Compilers…. The machine-language target program produced by a compiler is usually much faster than an interpreter at mapping inputs to outputs . An interpreter, however, can usually give better error diagnostics than a compiler, because it executes the source program statement by statement.

- 11. Introduction to Compilers …. The source language is optimized for humans. – It is more user-friendly, to some extent platform- independent. – They are easier to read, write, and maintain, and hence it is easy to avoid errors. A program written in any language must be translated into a form that is understood by the computer. – This form is typically known as Machine Language (ML) or Machine Code, or Object Code. Consists of streams of 0’s and 1’s

- 12. Introduction to Compilers …. Some examples of compilers are: – A Java compiler for the Apple Macintosh – A COBOL compiler for the SUN – A C++ compiler for the Apple Macintosh If a portion of the input to a Java compiler looked like this: – a = b + c ∗ d; – the output corresponding to this input might look something like this:

- 13. Sample Problem 2.1.1 Show the output of a Java native code compiler, in any typical assembly language, for the following Java input string: while (x<a+b) x = 2*x;

- 14. Introduction to Compilers …. Figure 2.4: A Compiler and Interpreter produce very different output for the same input.

- 15. Introduction to Compilers …. The input to an interpreter is a program written in a high-level language, but rather than generating a machine language program, the interpreter actually carries out the computations specified in the source program. In other words, the output of a compiler is a program, whereas the output of an interpreter is the source program’s output.

- 16. Sample Problem 2.1.2 Show the compiler output and the interpreter output for the following Java source code: for (i=1; i<=4; i++) System.out.println (i*3);

- 17. Compiler vs. Interpreter Compiler Interpreter A compiler is a program that converts the entire source code of a programming language into executable machine code. An interpreter takes a source program and runs it line by line, translating each line as it comes to it. The compiler takes a large amount of time to analyze the entire source code but the overall execution time of the program is comparatively faster. An interpreter takes less amount of time to analyze the source code but the overall execution time of the program is slower. The compiler generates the error message only after scanning the whole program, so debugging is comparatively hard as the error can be present anywhere in the program. Its Debugging is easier as it continues translating the program until the error is met. The compiler requires a lot of memory for generating object codes. It requires less memory than a compiler because no object code is generated. Generates intermediate object code. No intermediate object code is

- 18. Big C notation for compilers It is important to remember that a compiler is a program, and it must be written in some language (machine, assembly, high- level). In describing this program, we are dealing with three languages: 1) The source language, i.e. the input to the compiler, 2) The object language, i.e. the output of the compiler, 3) The language in which the compiler is written

- 19. Sample Problem 2.1.3 Using the big C notation, show each of the following compilers: 1) An Ada compiler which runs on the PC and compiles to the PC machine language. 2) An Ada compiler which compiles to the PC machine language, but which is written in Ada. 3) An Ada compiler which compiles to the PC machine language, but which runs on a Sun.

- 20. Error handling in Compilers Other kinds of errors not generally detected by the compiler are called run-time errors. Compile time errors Syntax errors are reported by the compiler at compile time.

- 21. Assemblers- Assemblers- translate programs written in low-level or assembly language into machine code. Assembly language is called low-level language. Because there is one to one correspondence between the assembly language statements and machine language statements. Symbolic form of the machine language, makes it easy to translate Compiler generates assembly language as its target language and assembler translate it into object code.

- 22. Assemblers- Assembler is basically the 1st interface that is able to communicate humans with the machine.

- 23. Linker Linker - is responsible for taking object code generated by the compiler and linking it with libraries and other object code to create a final executable program. It resolves references to external symbols and creates the complete executable. Common examples include the GNU linker (ld) and the Microsoft Visual C++ linker.

- 24. Loader Loader - is responsible for loading an executable program into memory for execution. It prepares the memory space, reads the binary code, and resolves any dynamic linking or loading of shared libraries. This is often done by the operating system's loader component.

- 25. A language-processing system In addition to compiler, several other programs may be required to create an executable target program. A source program may be divided into modules stored in separate files. The task of collecting the source program is the responsibility of another program called preprocessor. The target program created by the compiler may require further processing before it can be run.

- 26. The Phases of a Compiler The structure of a compiler includes; Lexical analysis, Syntax analysis, Semantic Analysis, Intermediate code generation, Code Optimization, Code generation, Bookkeeping and Error handling

- 27. The Phases of a Compiler

- 29. 1. Lexical Analysis The first phase of a compiler is lexical analysis, also known as scanning. The lexical phase reads the characters in the source program and groups them into a stream of tokens in which each token represents a logically cohesive sequence of characters, such as, an identifier, keyword, punctuation character. The character sequence forming a token is called the lexeme for the token.

- 30. Lexical Analysis A token is basically the arrangement of characters that defines a unit of information in the source code. NOTE: In computer science, a program that executes the process of lexical analysis is called a scanner, tokenizer, or lexer.

- 31. Lexical Analysis Roles and Responsibilities of Lexical Analyzer It is accountable for terminating the comments and white spaces from the source program. It helps in identifying the tokens. Categorization of lexical units.

- 32. Lexical Analysis A token, also known as a lexeme, a lexical item, or a lexical token, is a string of input characters which is taken as a unit and passed on to the next phase of compilation. Examples key words - while, void, if, for, ... identifiers - declared by the programmer operators - +, -, *, /, =, ==, ... numeric constants - numbers such as 124, 12.35, 0.09E-23, etc.

- 33. Lexical Analysis Examples character constants - single characters or strings of characters enclosed in quotes special characters - characters used as delimiters such as . ( ) , ; : comments - ignored by subsequent phases. These must be identified by the scanner, but are not included in the output.

- 35. 2. Syntax Analysis The second phase of a compiler is syntax analysis, also known as parsing. This phase takes the stream of tokens generated by the lexical analysis phase and checks whether they conform to the grammar of the programming language. The output of this phase is usually an Abstract Syntax Tree (AST).

- 36. Syntax Analysis It accepts tokens as input and provides a parse tree as output. It is also known as parsing in a compiler.

- 37. The parser will check for proper syntax, issue appropriate error messages, and determine the underlying structure of the source program. The output of this phase may be a stream of atoms or a collection of syntax trees. An atom is an atomic operation, or one that is generally available with one machine language instruction(s) on most target machines. For example, MULT, ADD, and MOVE could represent atomic operations for multiplication, addition, and moving data in memory.

- 38. Each atom consists of three or four parts: an operation, one or two operands, and a result. Example – (ADD, A, B, C)

- 39. Sample Problem 2.2.5 Show the atoms corresponding to the following Java statement: a = b + c * d ; Solution: (MULT, c, d, temp1) (ADD, b, temp1, temp2) (MOVE, temp2, a)

- 40. Syntax Analysis Roles and Responsibilities of Syntax Analyzer Note syntax errors. Helps in building a parse tree. Acquire tokens from the lexical analyzer. Scan the syntax errors, if any.

- 41. Semantic Analysis This phase checks whether the code is semantically correct, i.e., whether it conforms to the language’s type system and other semantic rules. Roles and Responsibilities of Semantic Analyzer: Saving collected data to symbol tables or syntax trees. It notifies semantic errors. Scanning for semantic errors.

- 42. Intermediate Code Generation This phase generates an intermediate representation of the source code that can be easily translated into machine code. A middle-level language code generated by a compiler at the time of the translation of a source program into the object code is known as intermediate code or text. Roles and Responsibilities: Helps in maintaining the priority ordering of the source language. Translate the intermediate code into the machine code. Having operands of instructions.

- 43. Optimization This phase applies various optimization techniques to the intermediate code to improve the performance of the generated machine code. Roles and Responsibilities: Remove the unused variables and unreachable code. Enhance runtime and execution of the program. Produce streamlined code from the intermediate expression.

- 44. Code Generation The final phase of a compiler is code generation. This phase takes the optimized intermediate code and generates the actual machine code that can be executed by the target hardware. Roles and Responsibilities: Translate the intermediate code to target machine code. Select and allocate memory spots and registers.

- 45. Phases in Compiler Generally, phases are divided into two parts: Front End phases: These phases are source language-dependent and target machine, independents. These generally consist of lexical analysis, semantic analysis, syntactic analysis, symbol table creation, and intermediate code generation. The front-end part also includes the error handling that goes along with each of the phases. Back End phases: The portions of compilers that depend on the target machine and do not depend on the source language. In the back end, code generation and code optimization phases, along with error handling and symbol table operations.

- 46. Symbol Table The symbol table is mainly known as the data structure of the compiler. It helps in storing the identifiers with their name and types. It stores: Information about the identifier such as, – its type, (by semantic and intermediate code) – its scope, (by semantic and intermediate code) – storage allocation, (by code generation) – number of arguments and its type for procedure, the type returned. It stores the literal constants and strings. It helps in storing the function names. It also prefers to store variable names and constants. It stores labels in source languages.

- 47. Error Detecting and Reporting Each phase encounters errors. Lexical phase determine the input that do not form token. Syntax phase determine the token that violates the syntax rule. Semantic phase detects the constructs that have no meaning to operand.

- 48. Example These phases are illustrated by considering the following statement: position := initial + rate *60

- 49. Compiler writing tools The compiler writer can use some specialized tools that help in implementing various phases of a compiler. These tools assist in the creation of an entire compiler or its parts. Some commonly used compiler construction tools include: Parser generators. YACC, Scanner generators. LEX Syntax-directed translation engines.