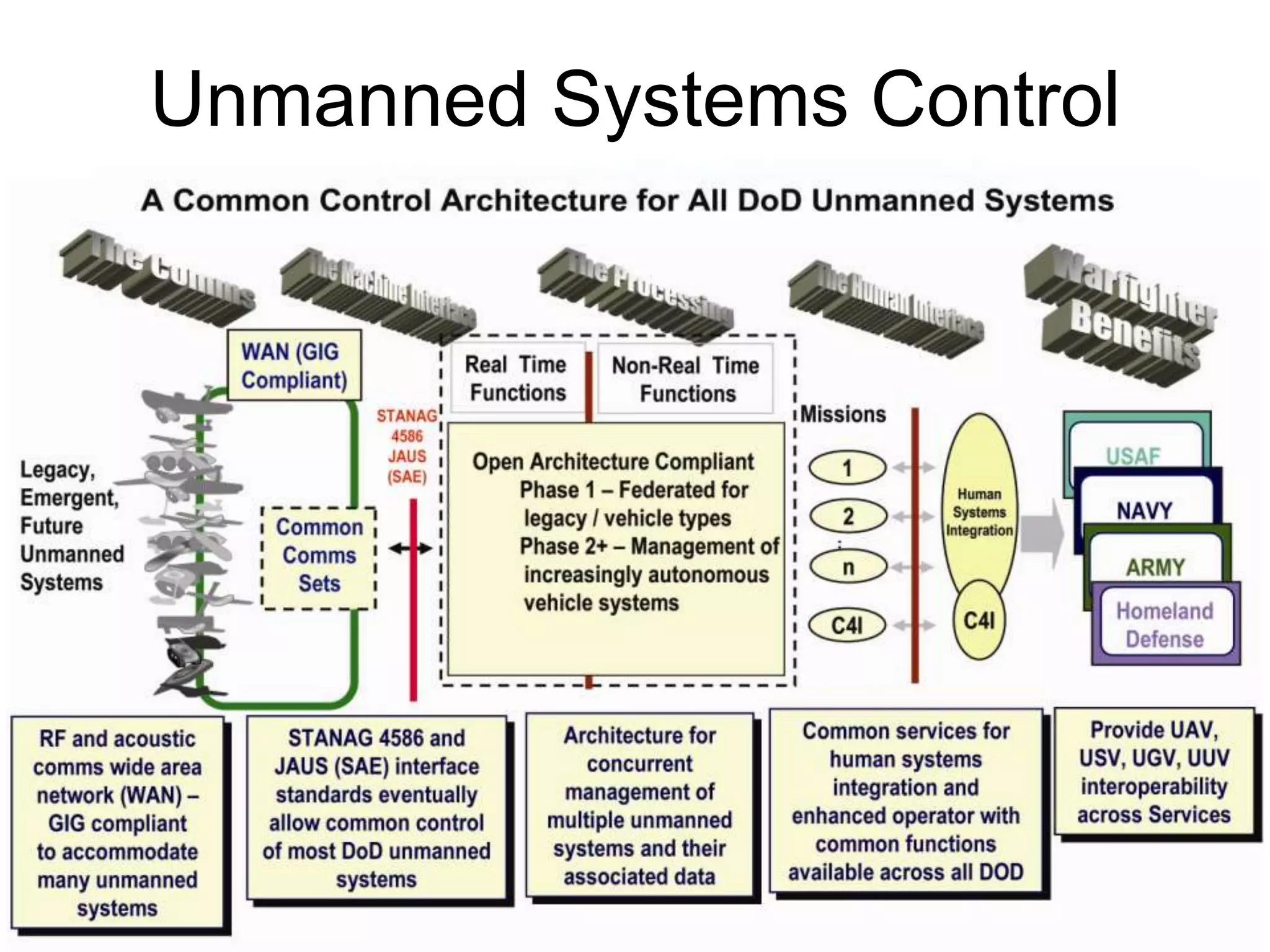

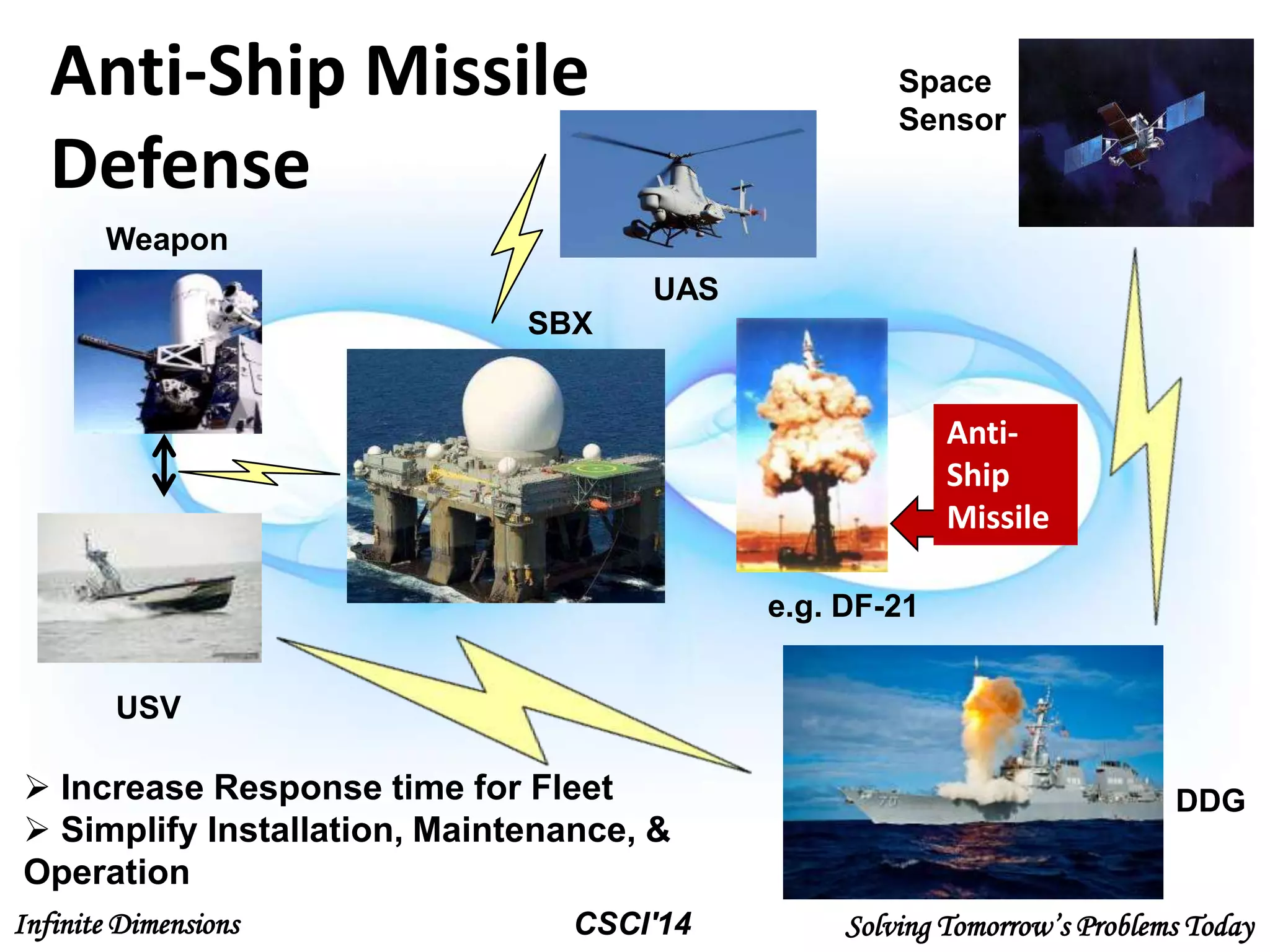

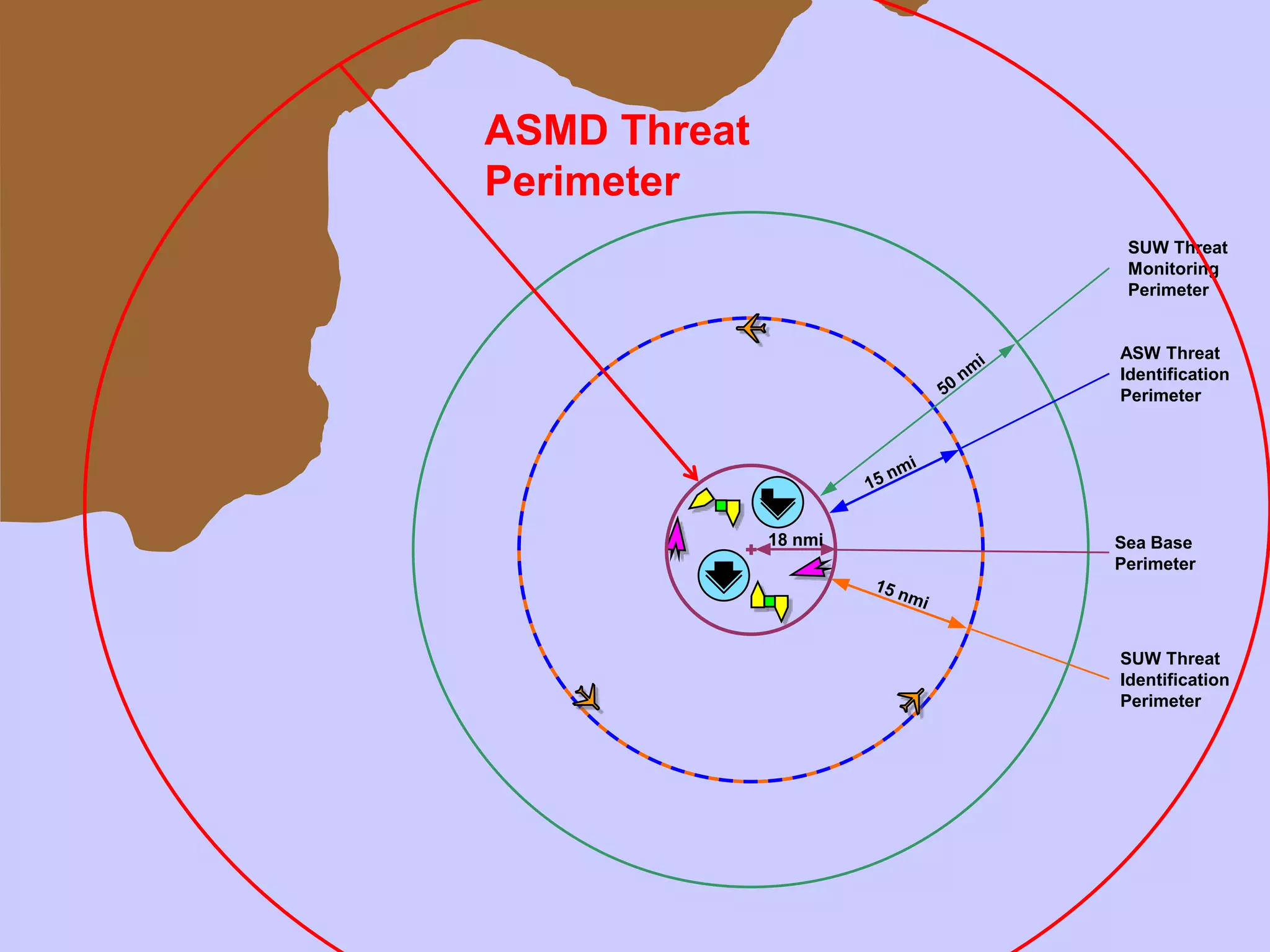

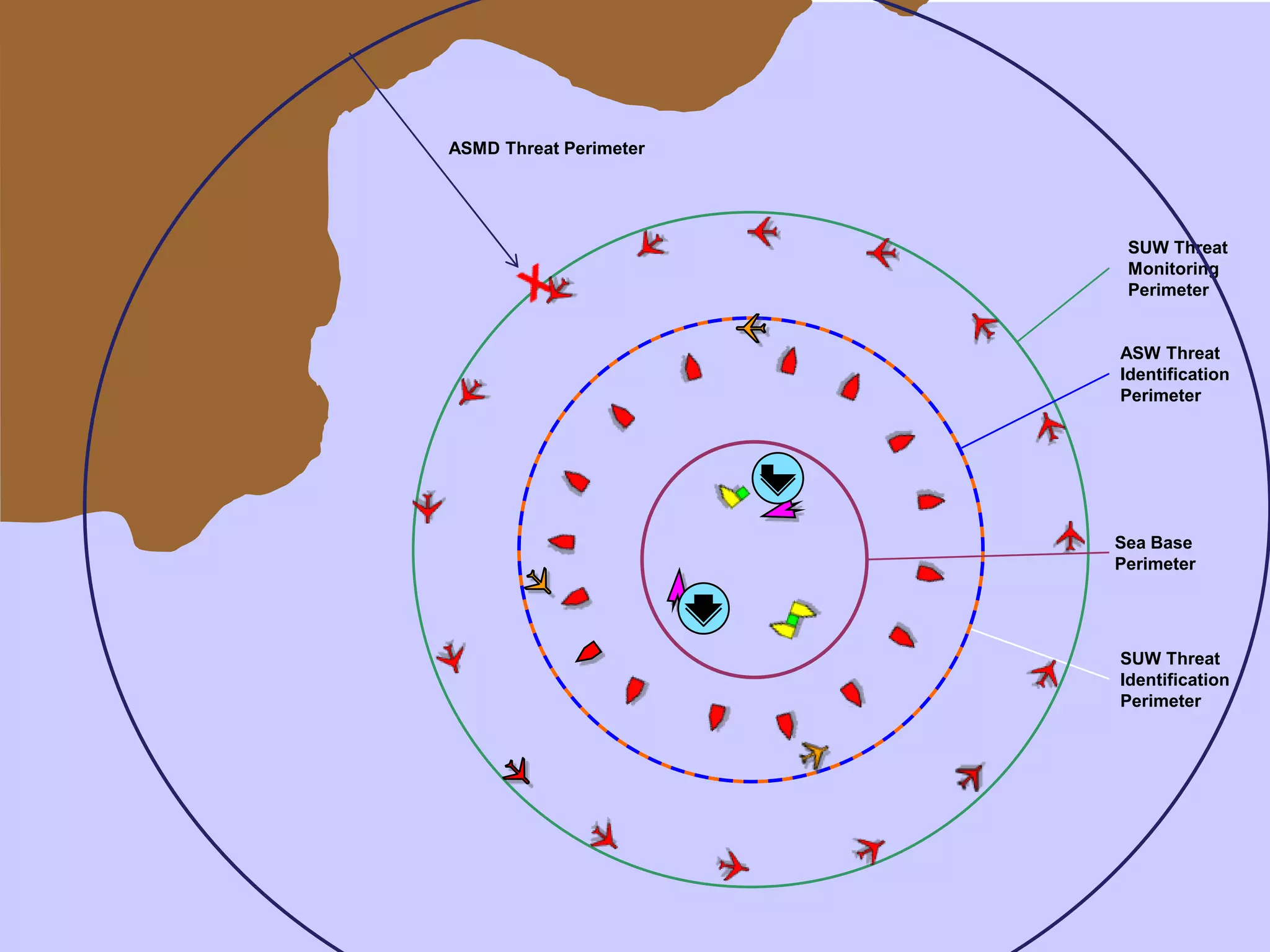

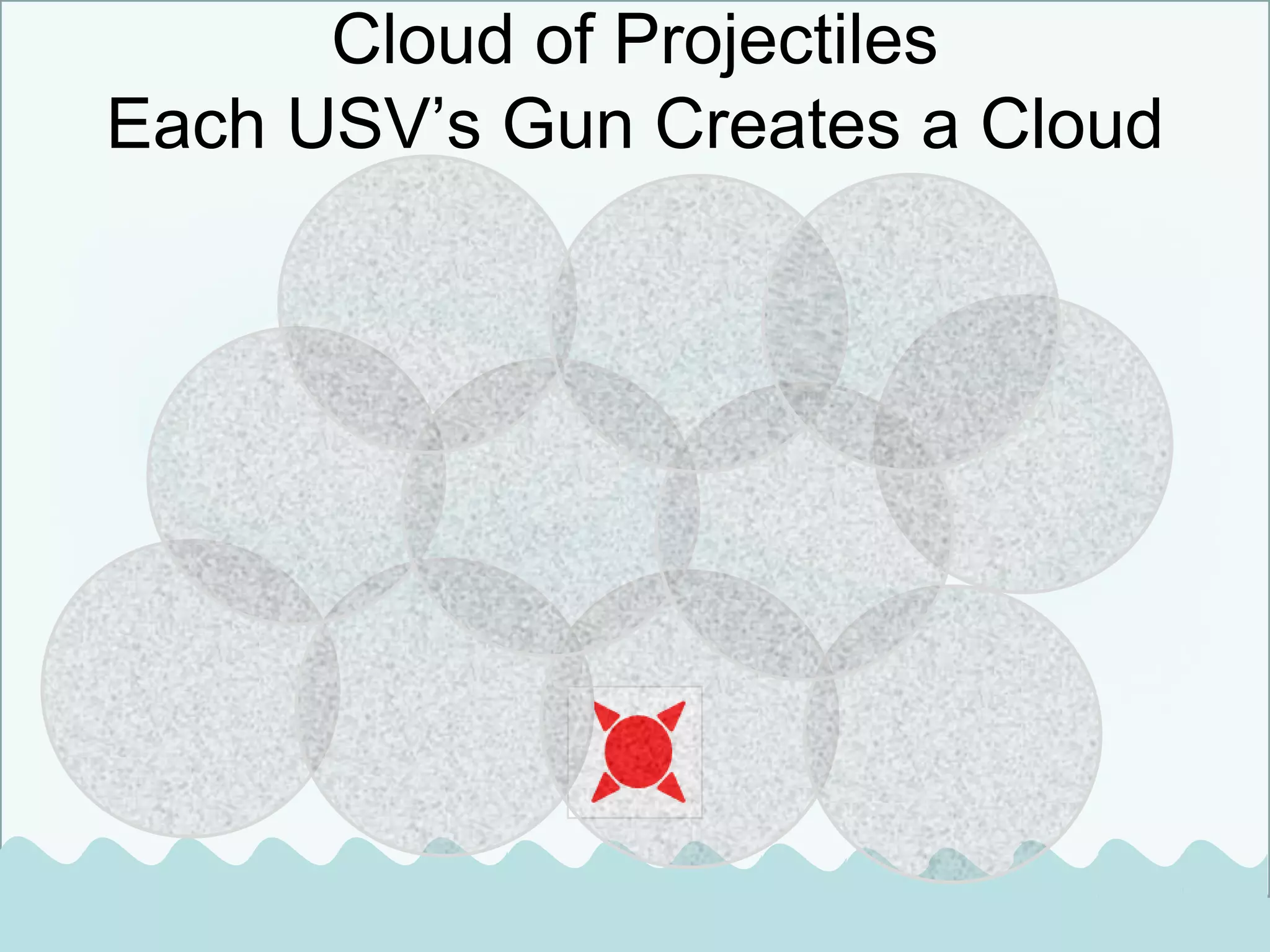

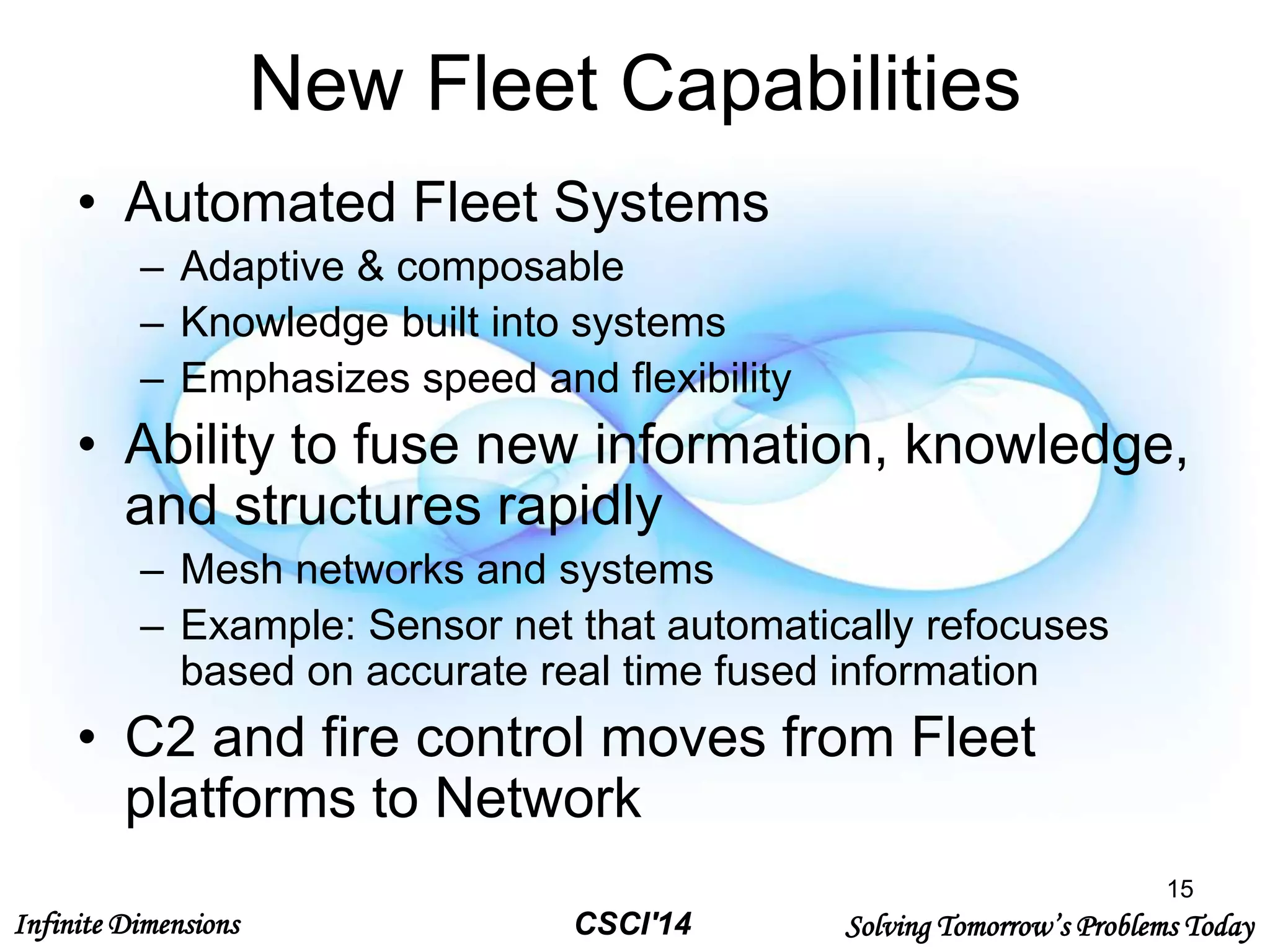

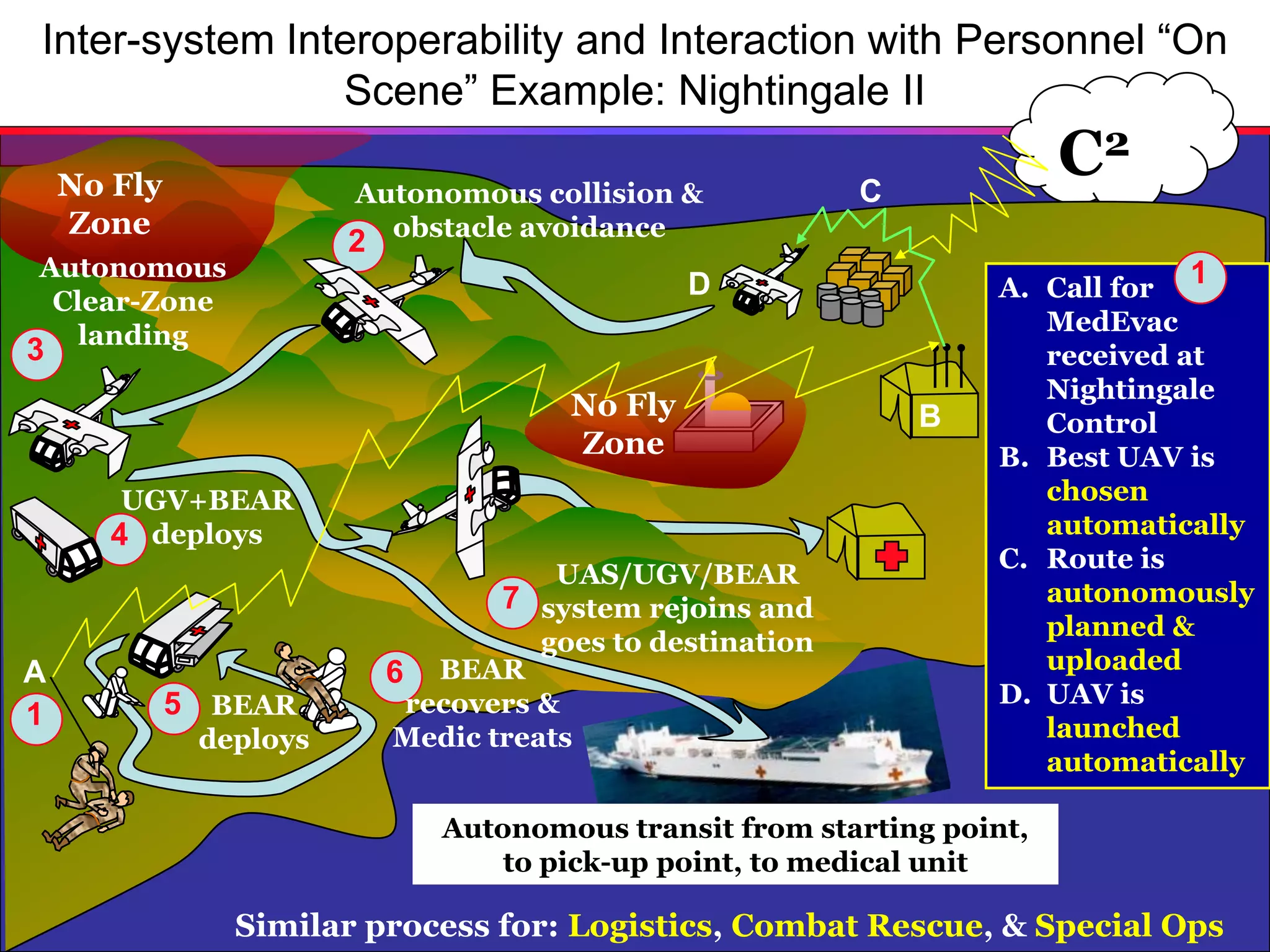

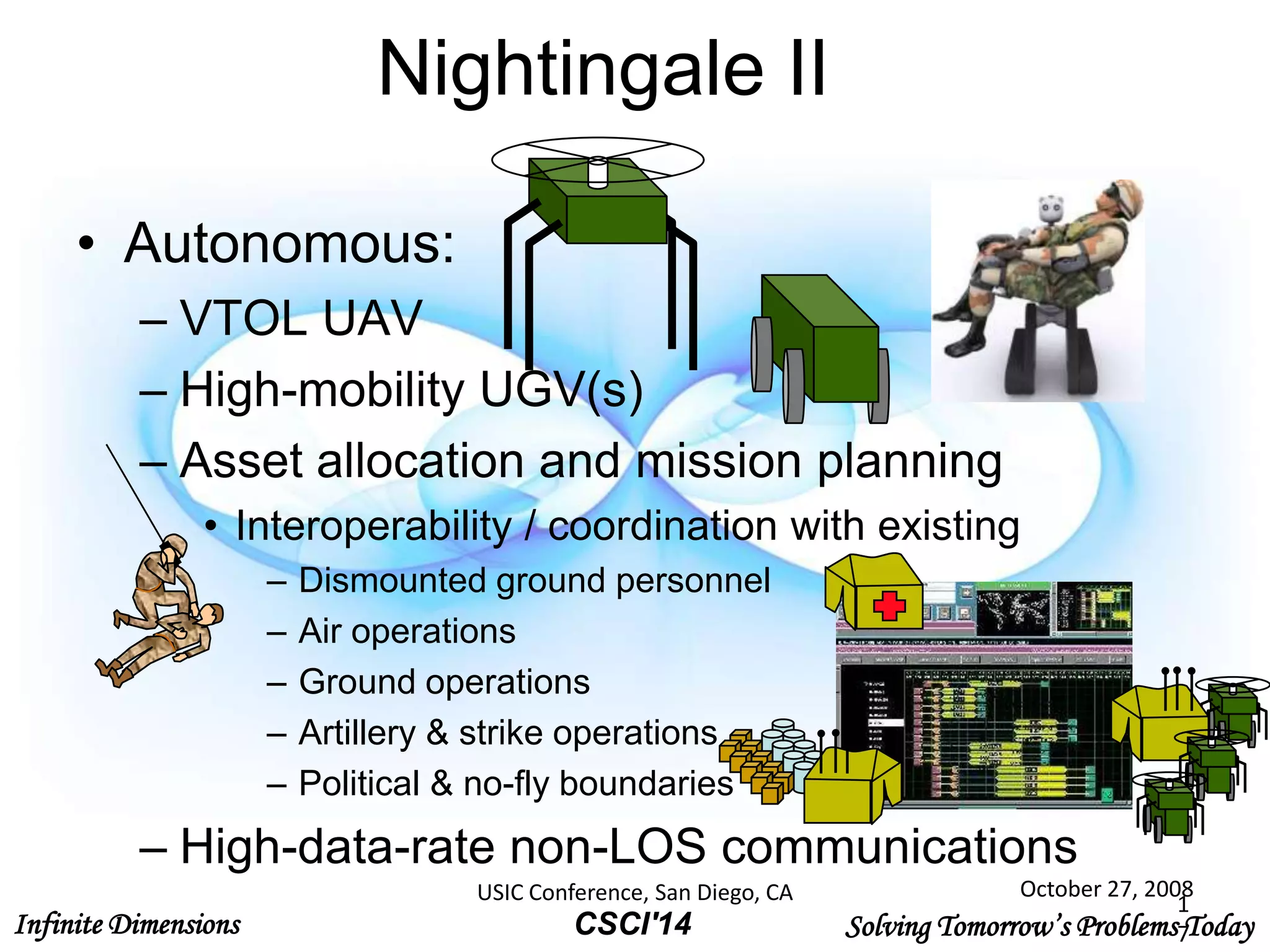

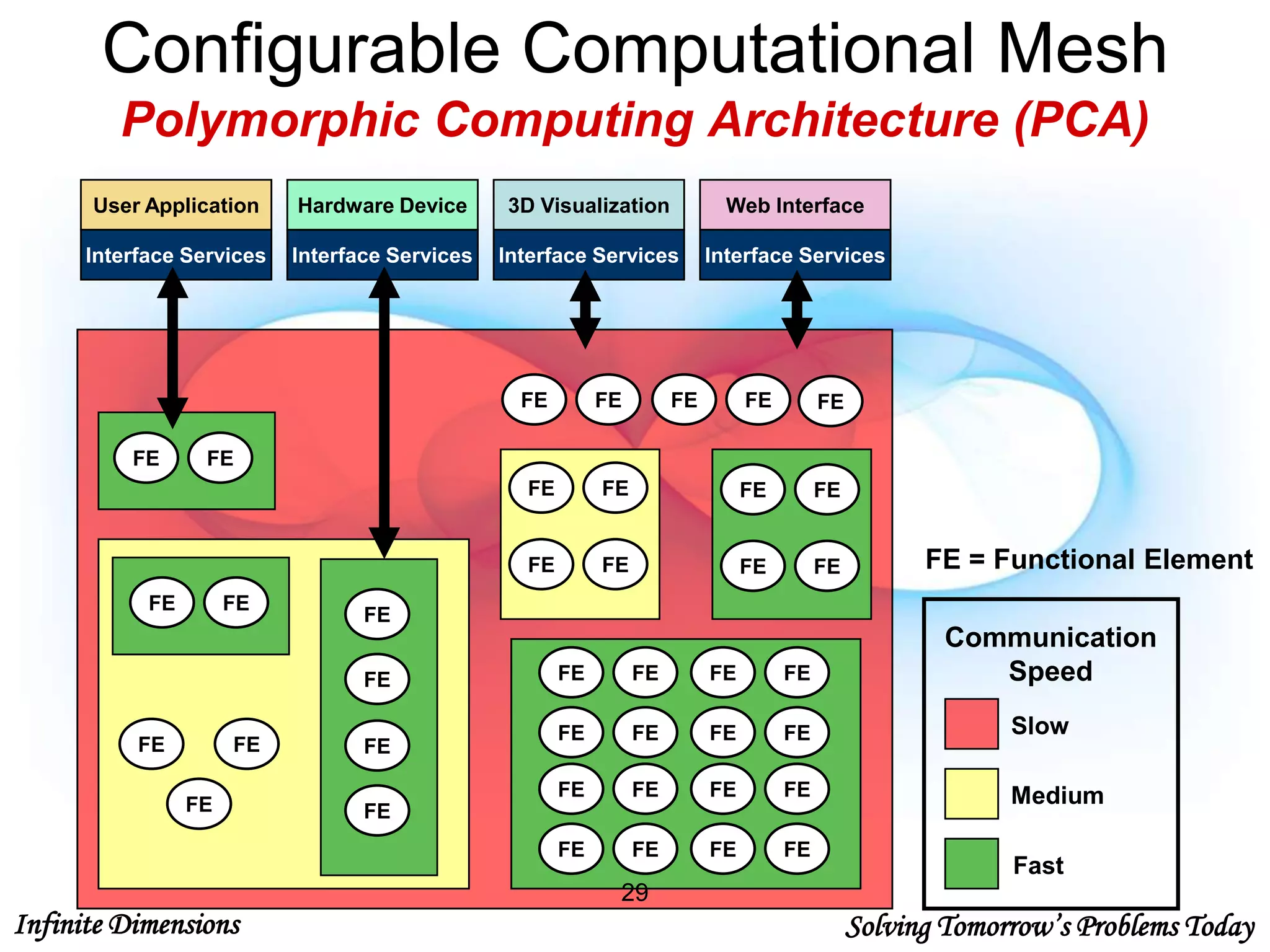

The document discusses integrating cloud computing and intelligent systems to address complex challenges in unmanned systems control, anti-ship missile defense, and battlefield medical extraction. It outlines a collaborative environment in which government, academic, and industry partners develop innovative solutions and improve system interoperability. Key technical challenges include real-time operations, autonomous controls, and dynamic system adaptation.