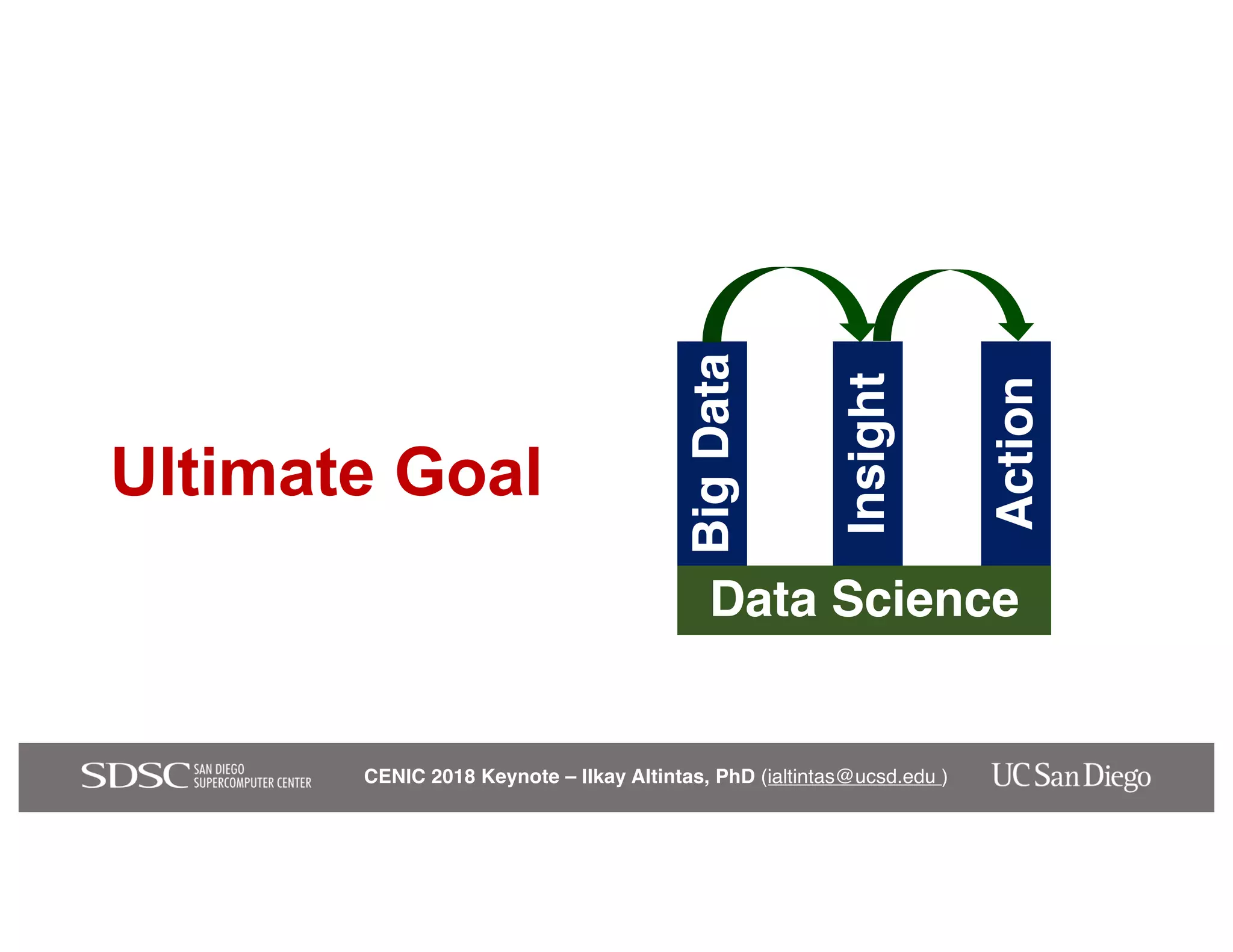

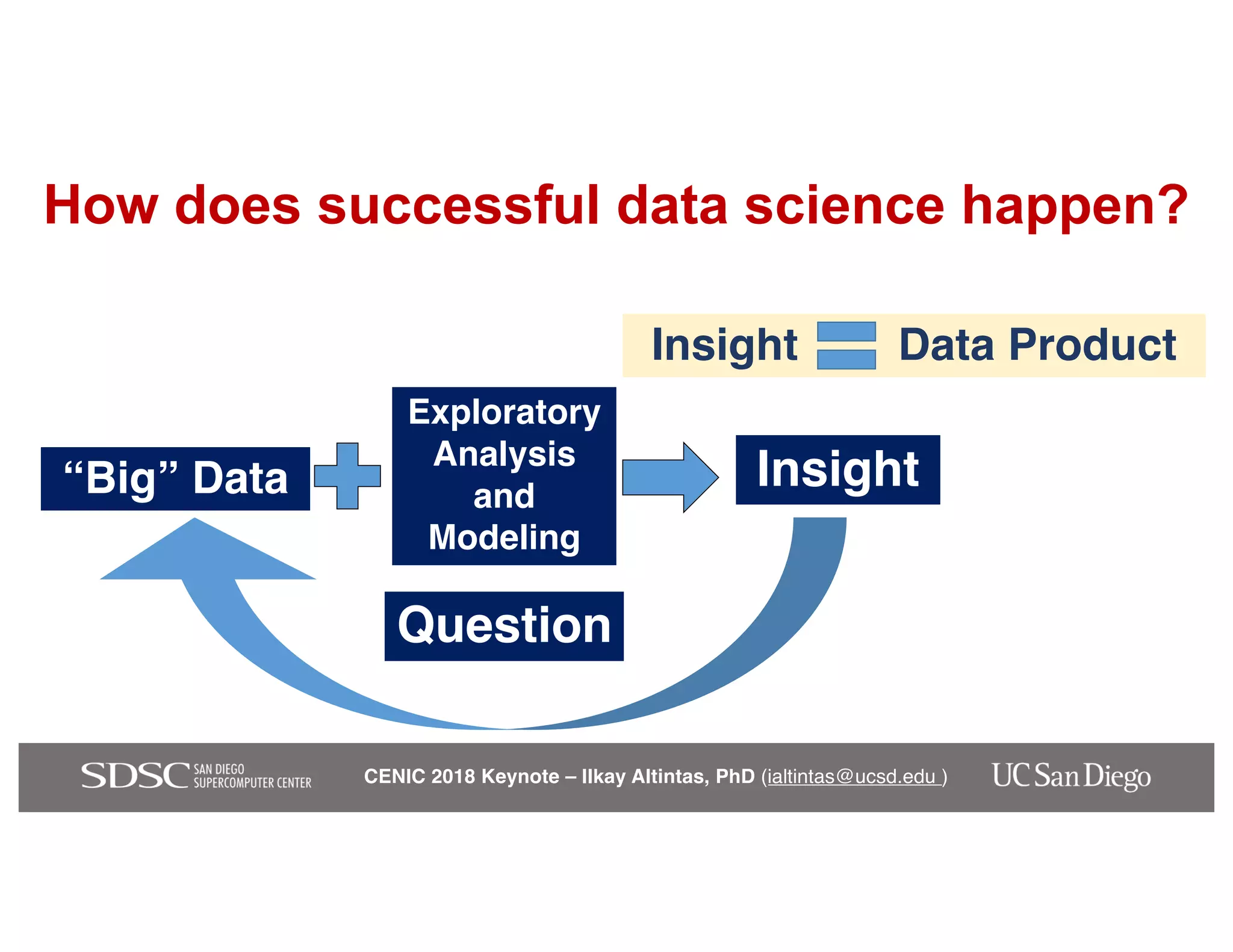

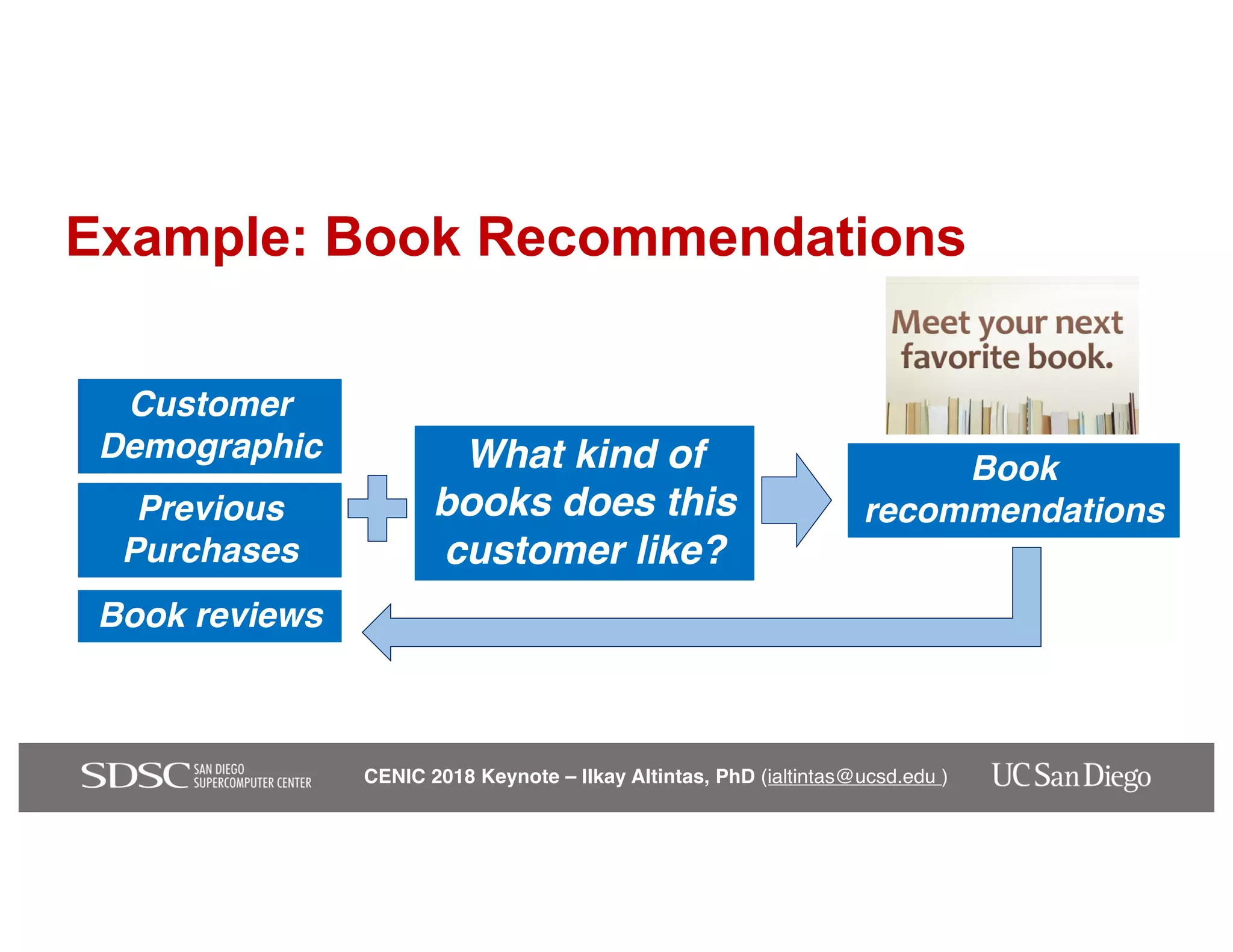

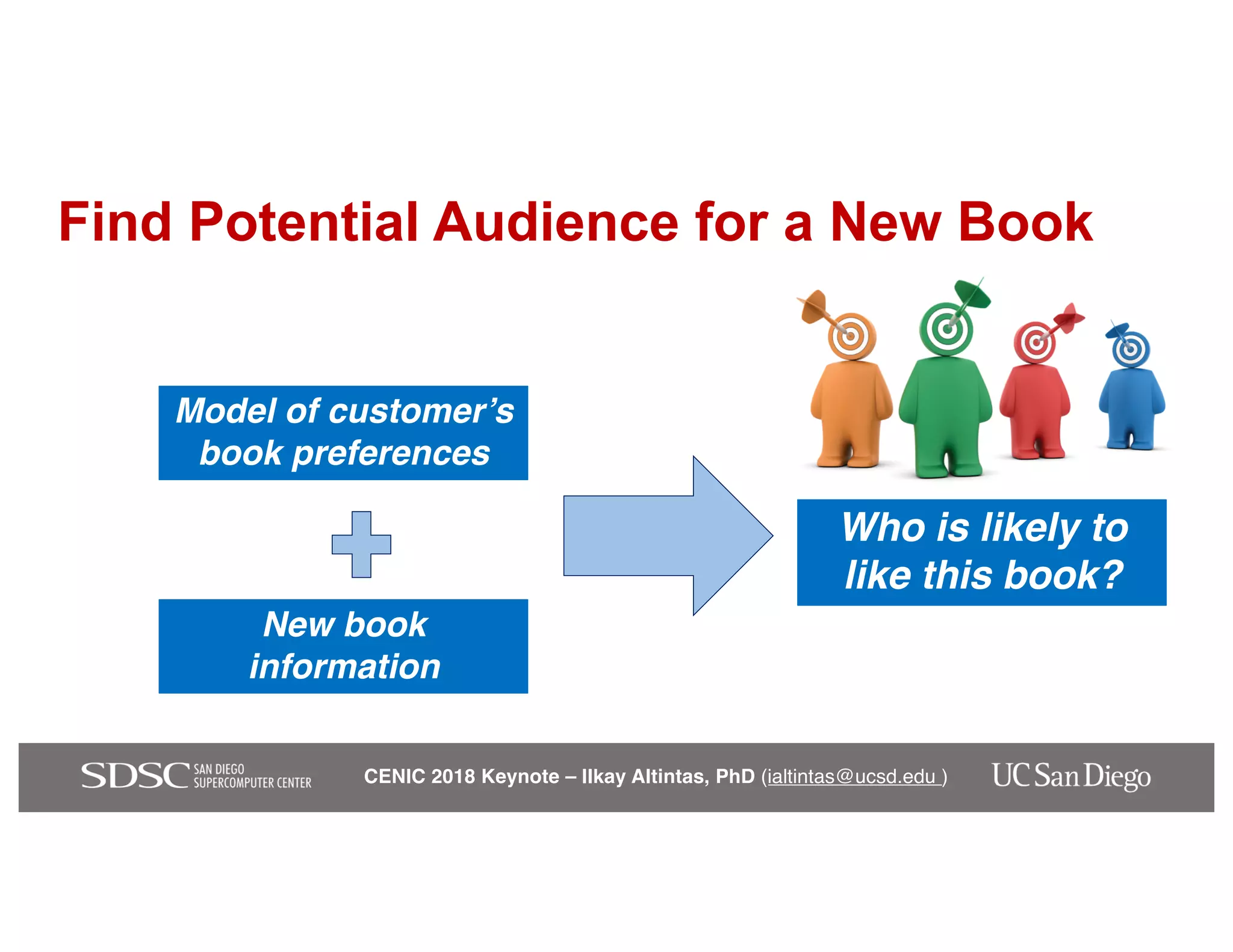

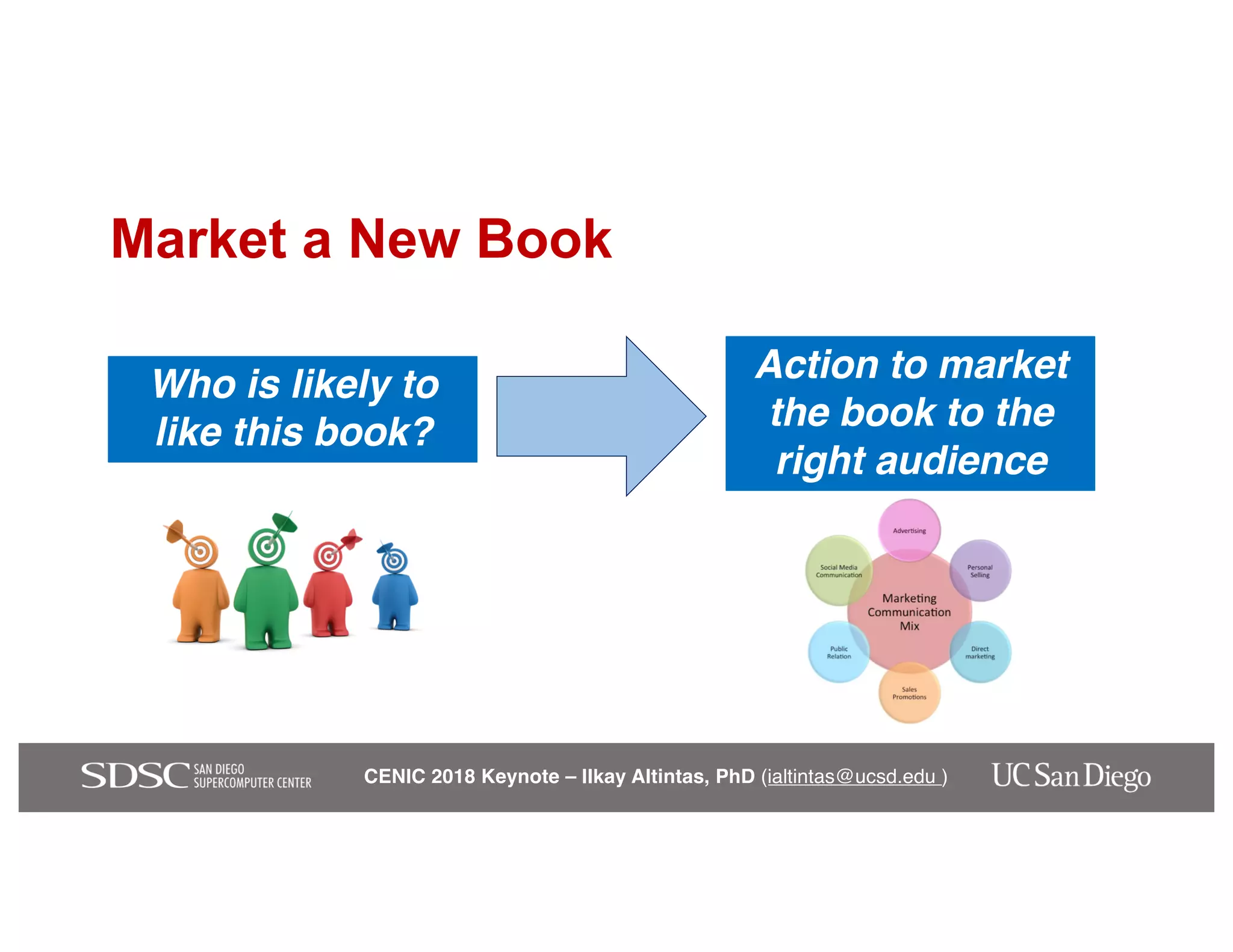

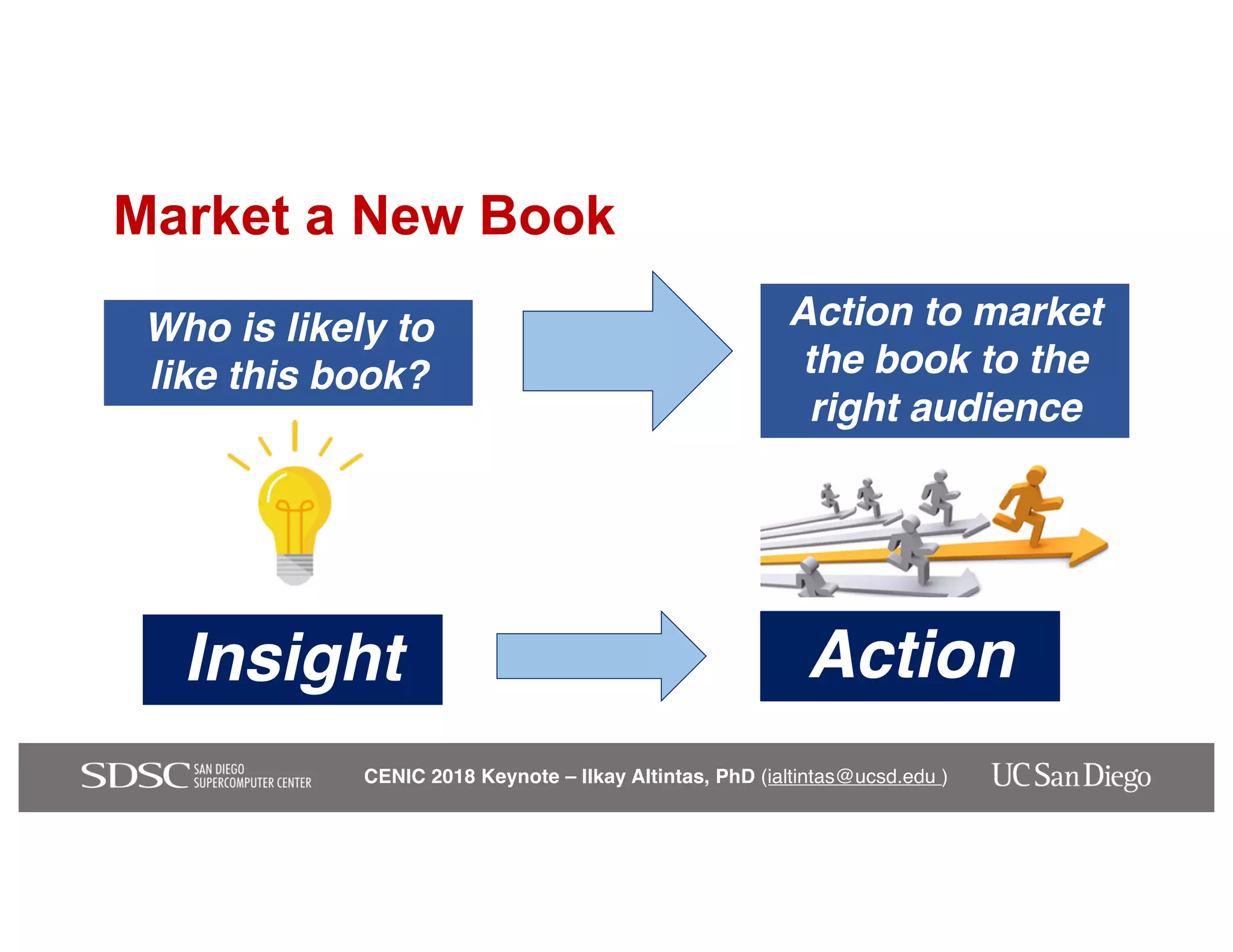

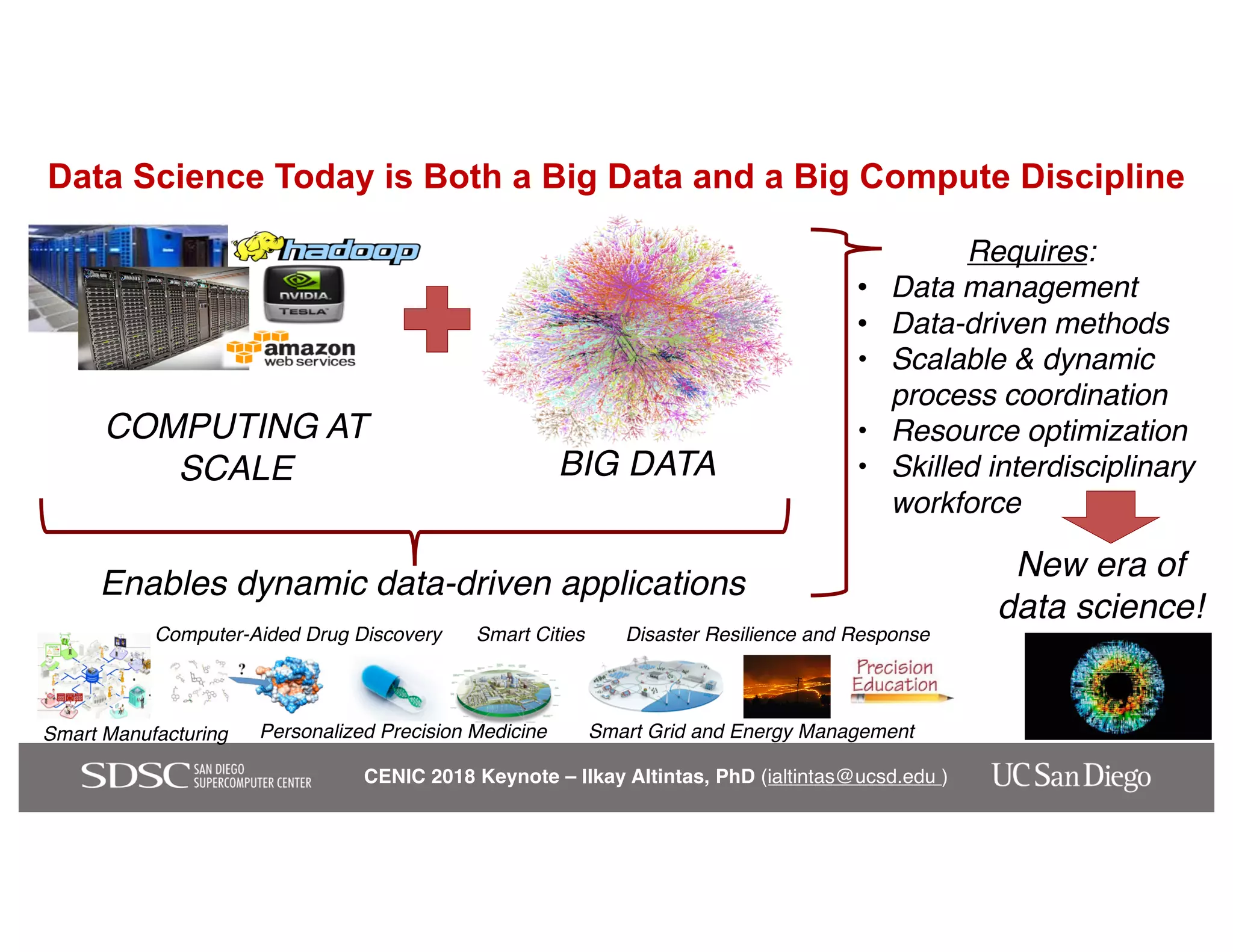

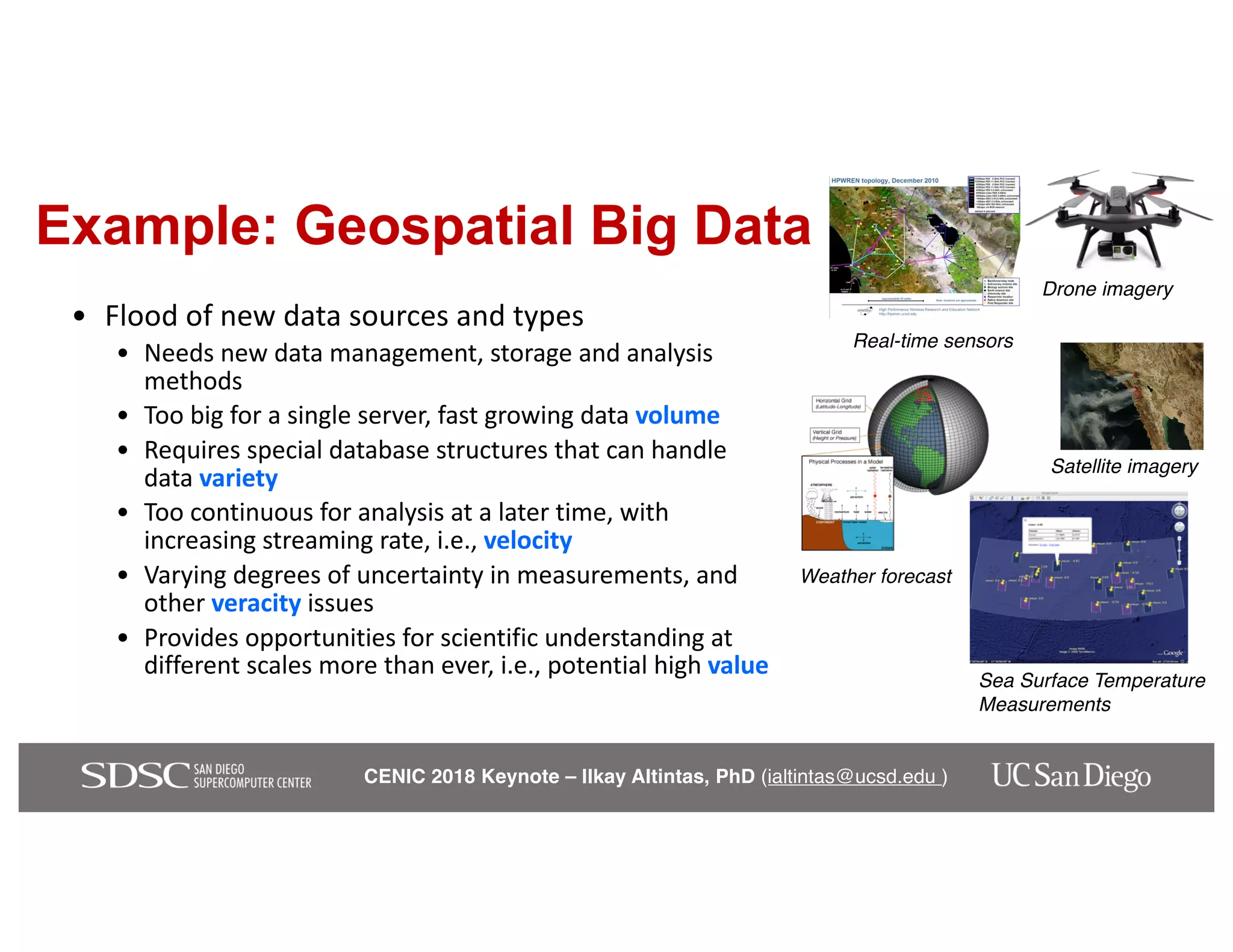

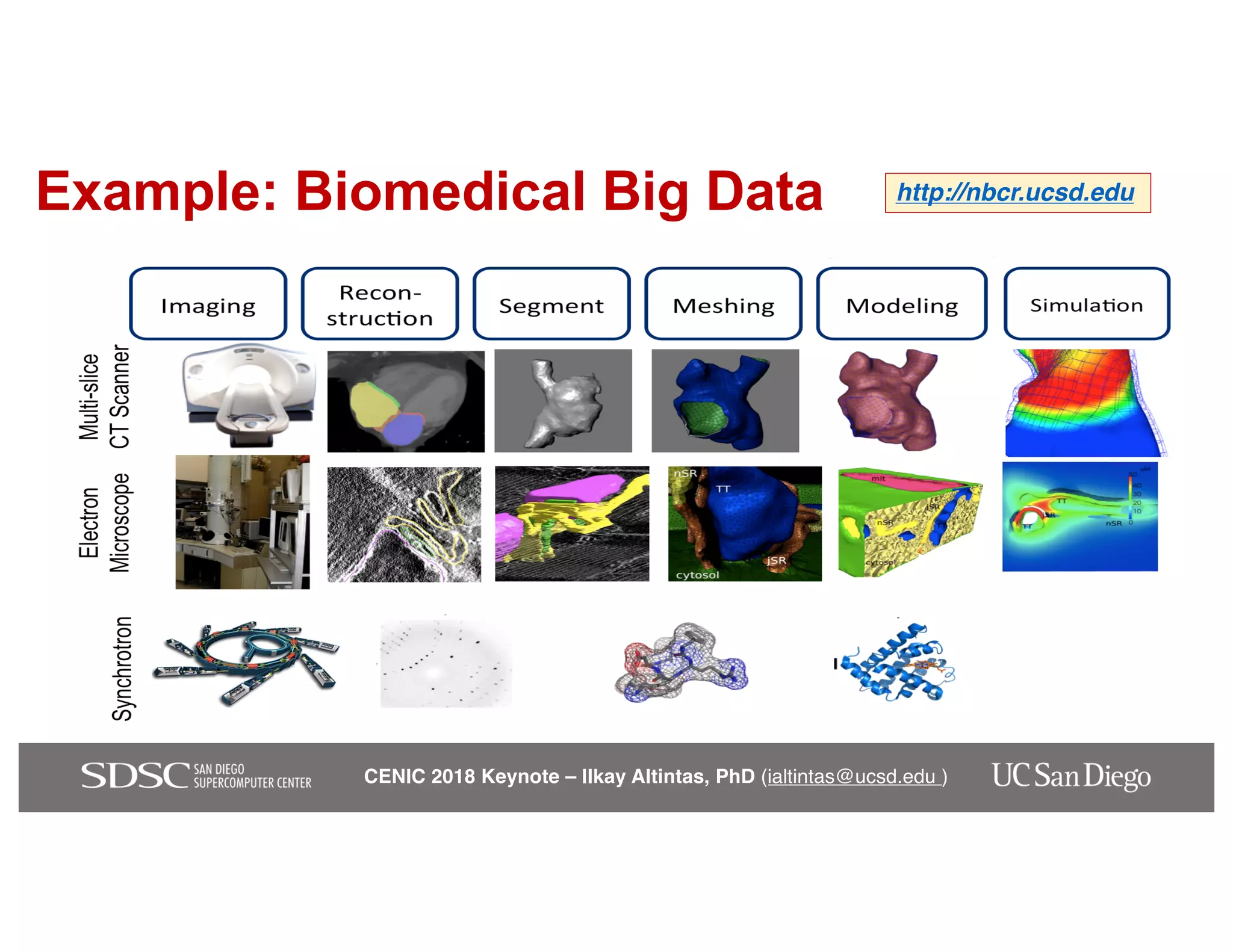

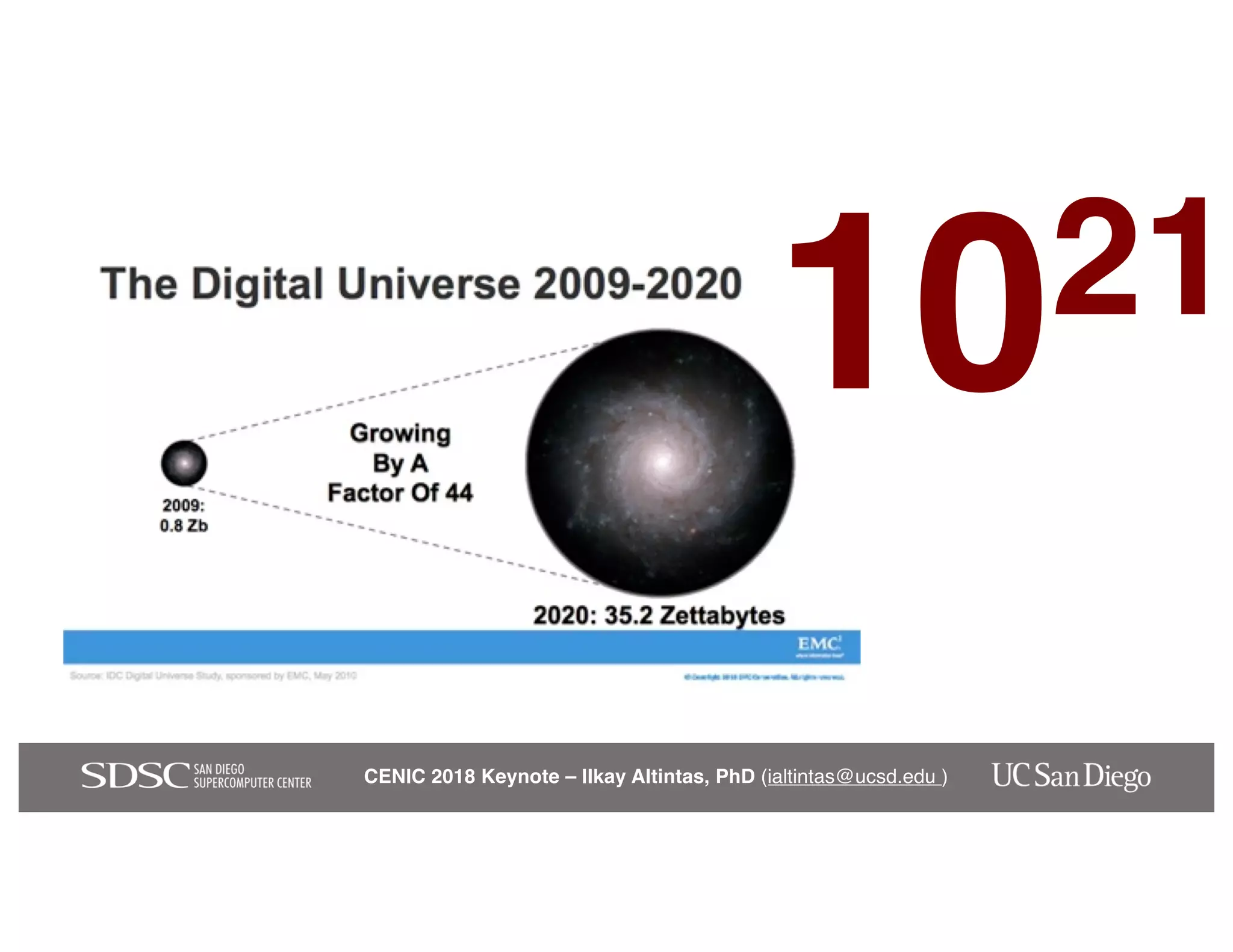

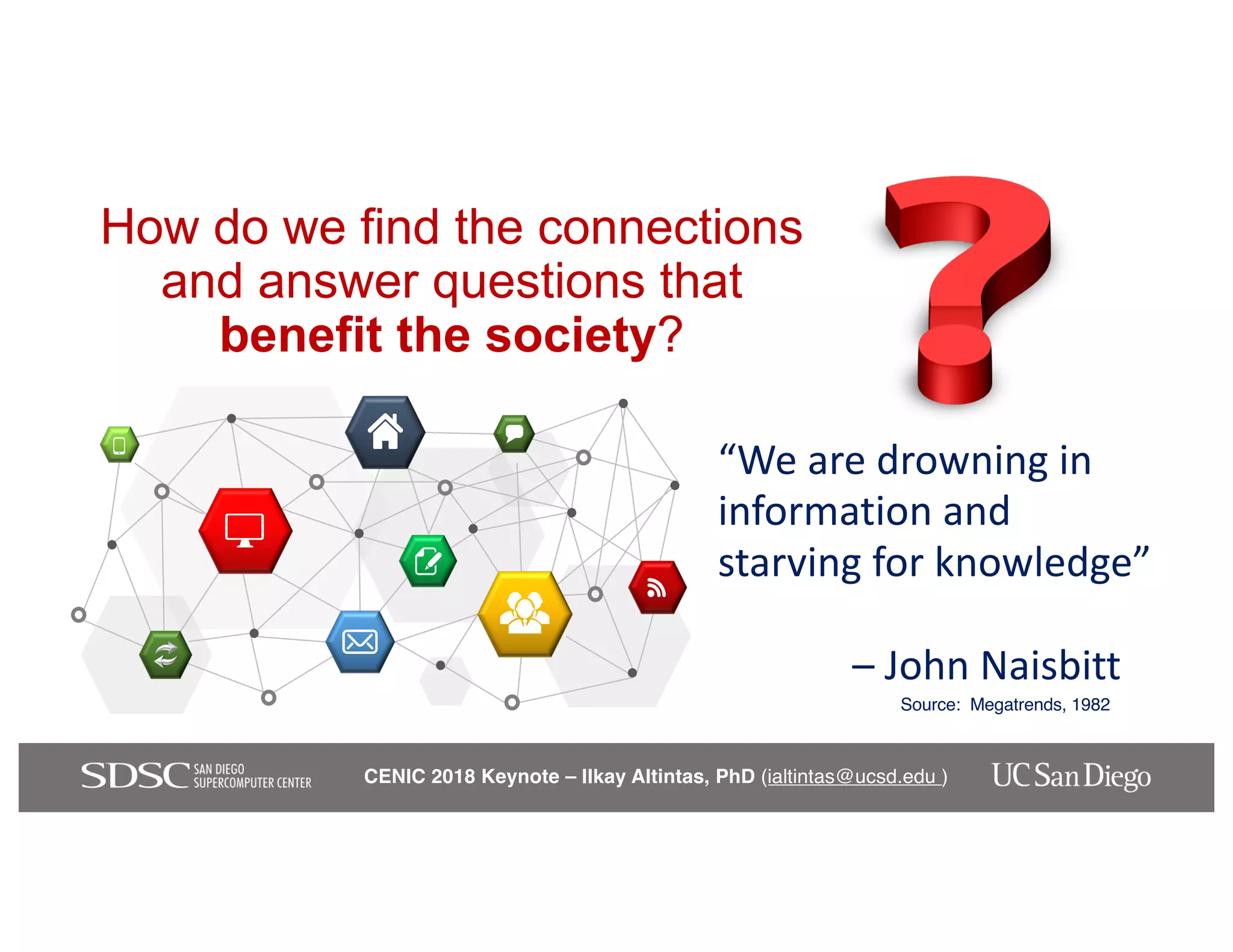

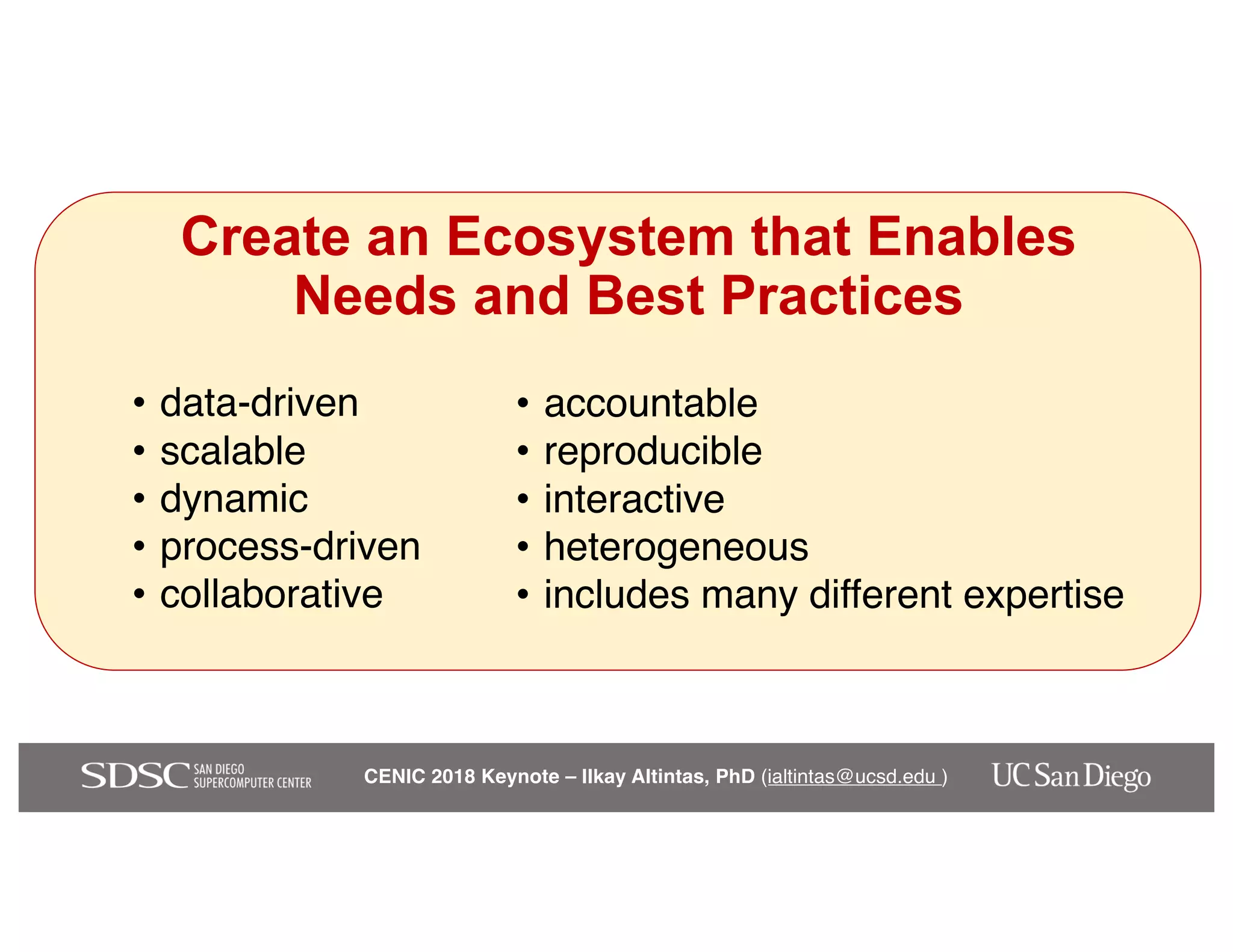

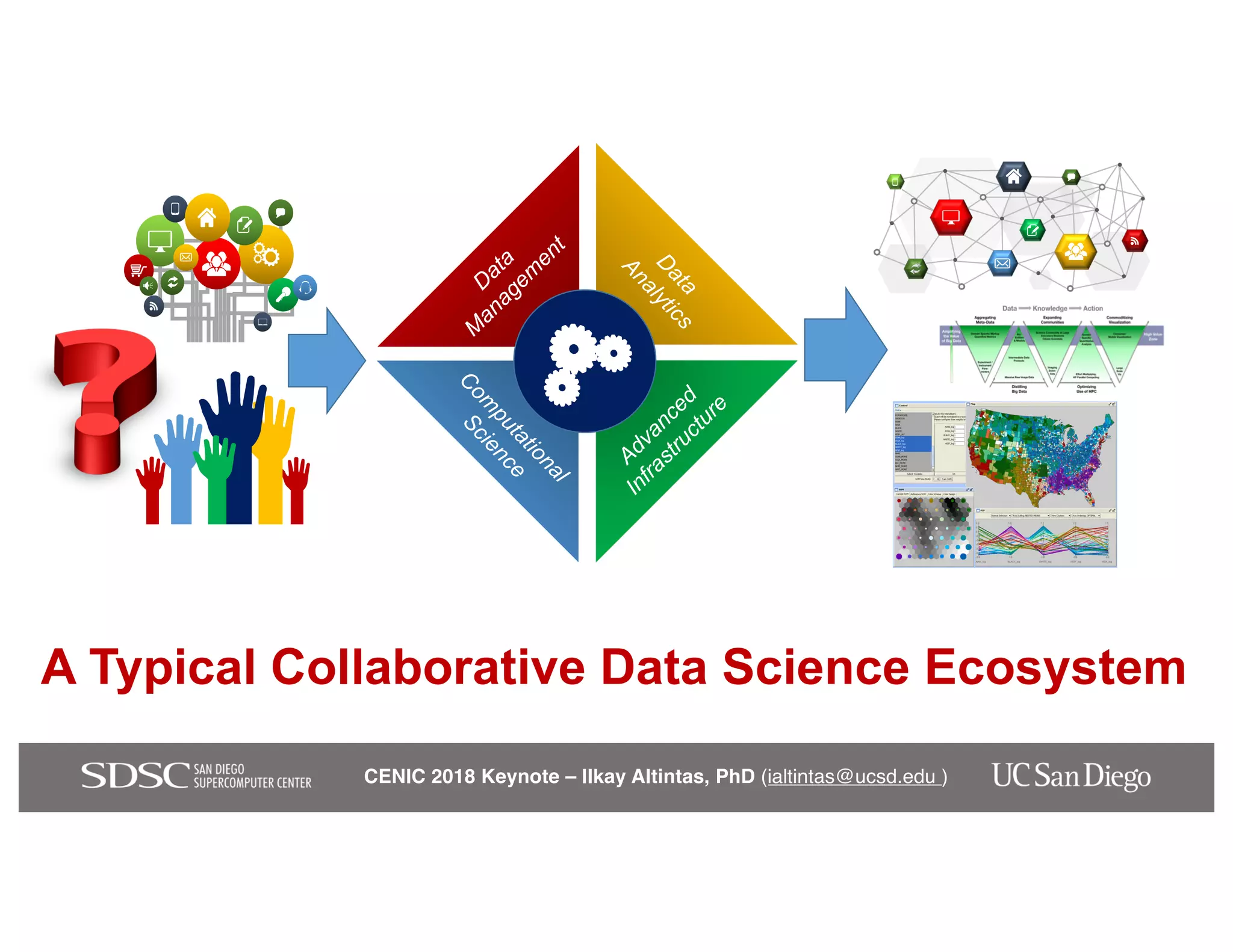

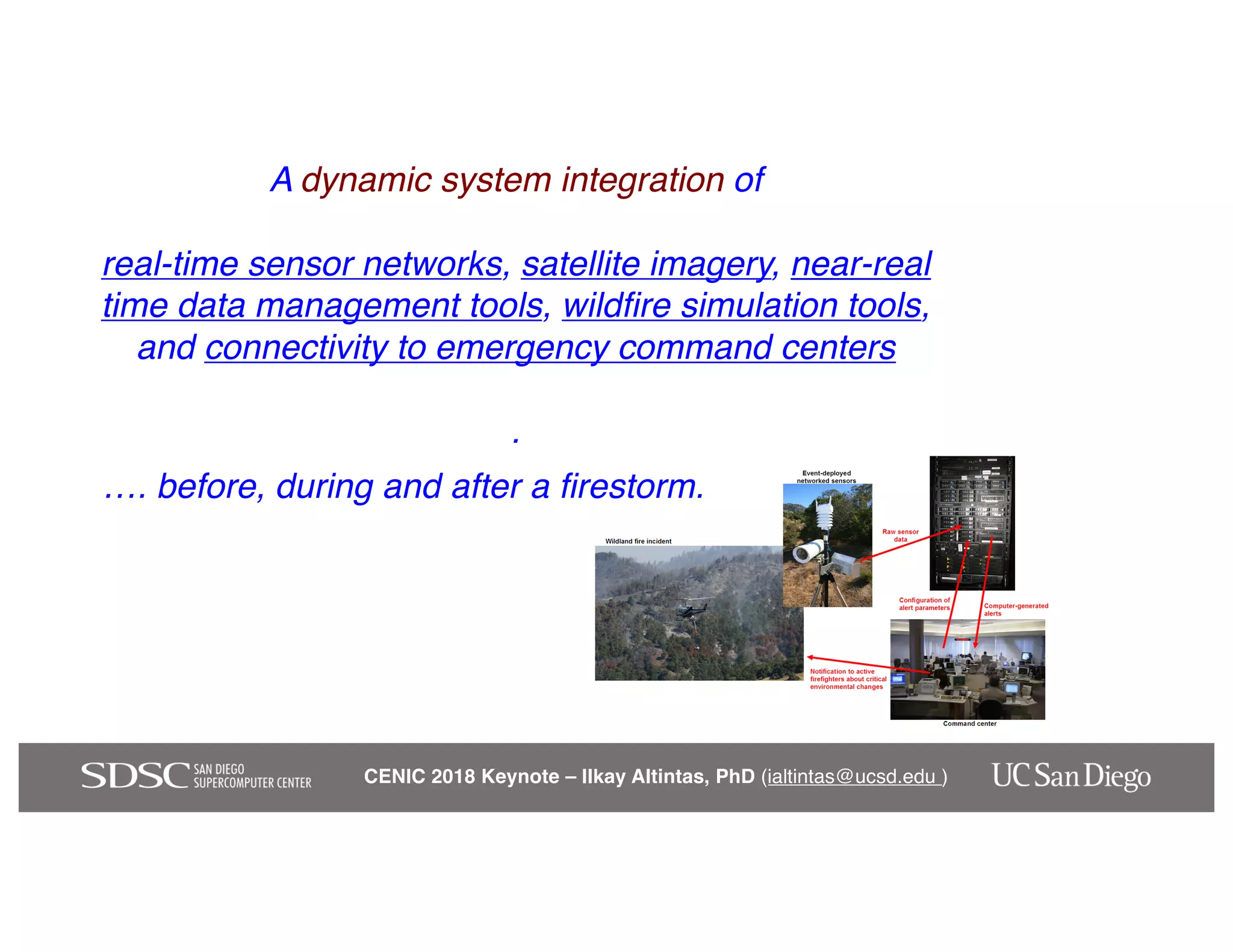

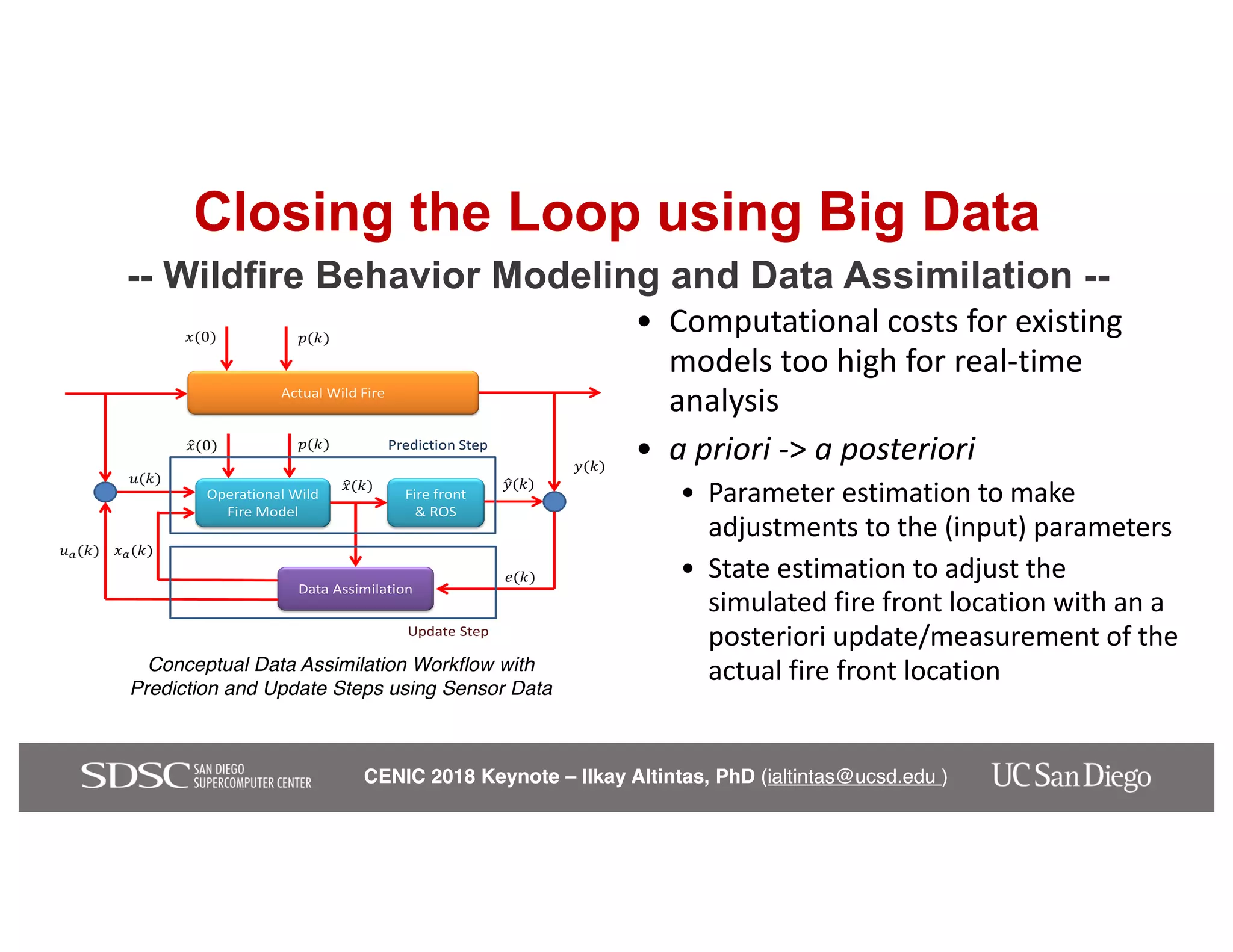

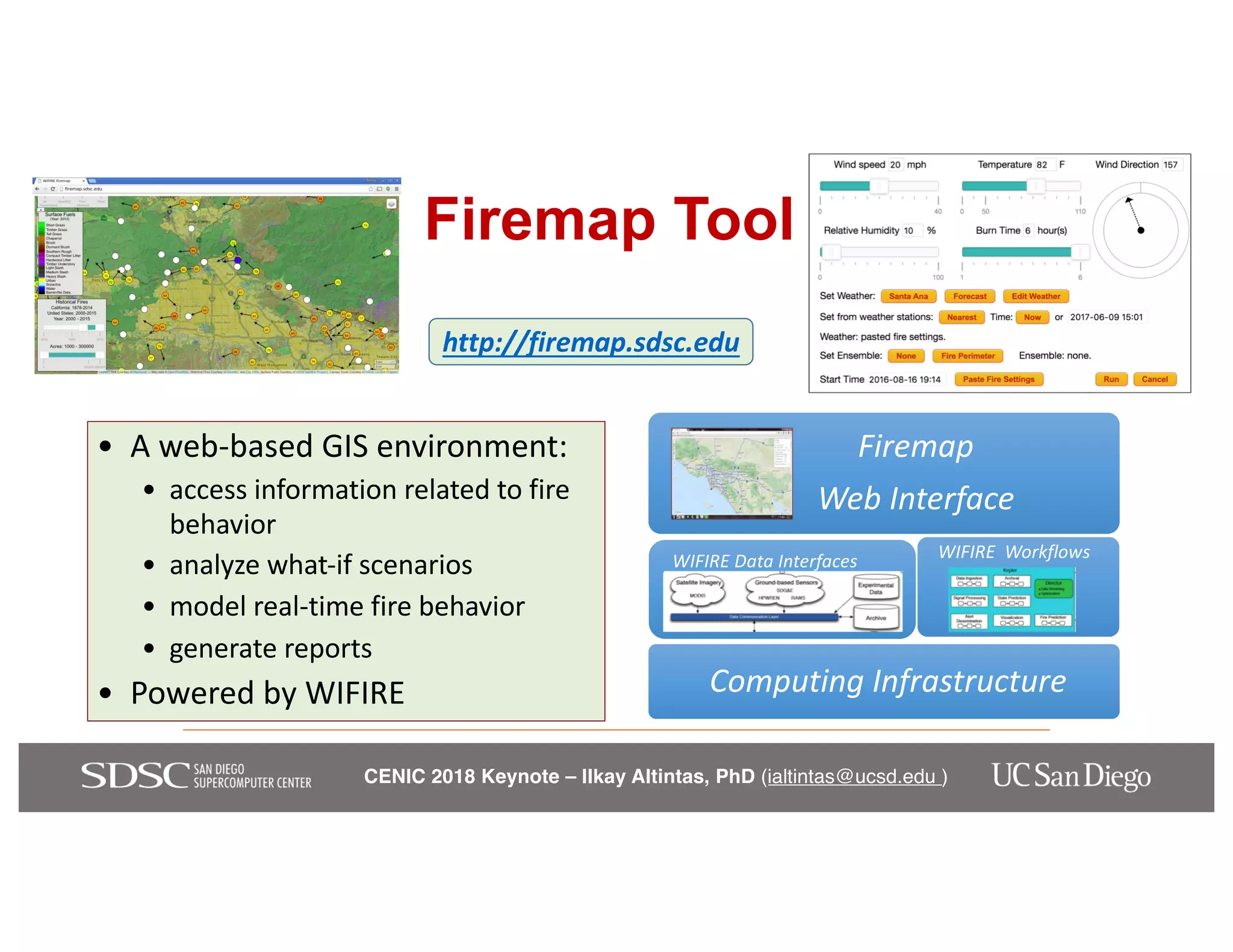

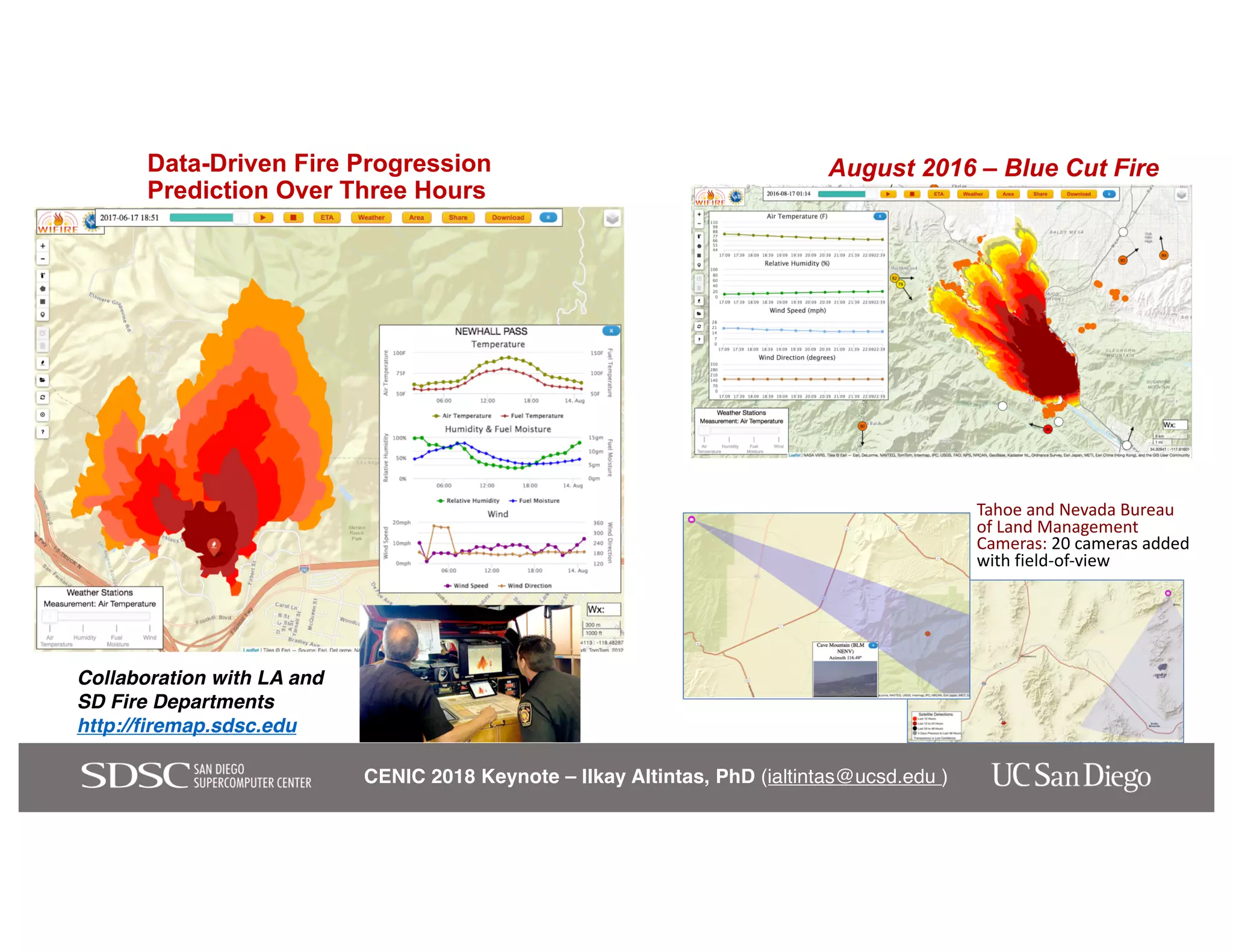

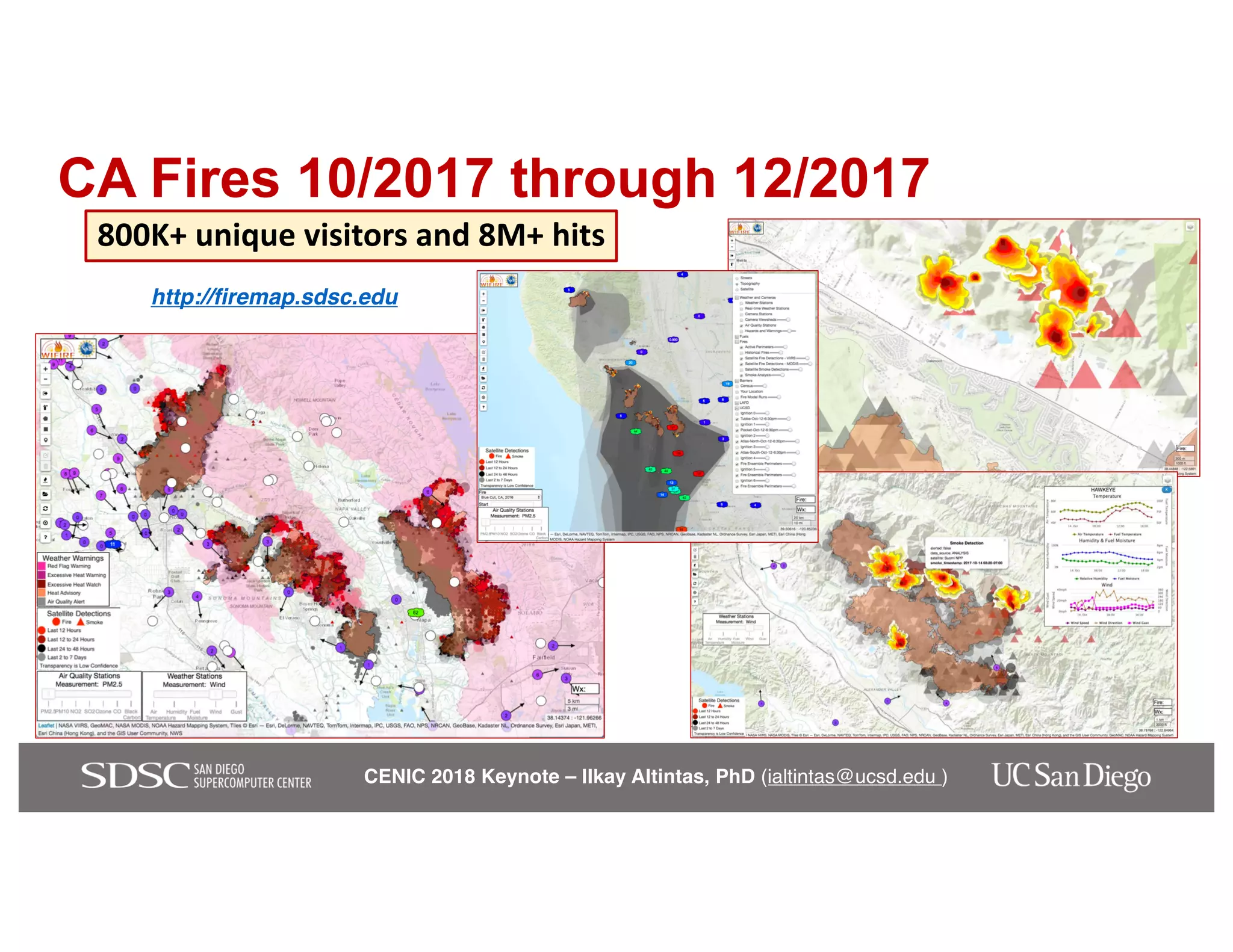

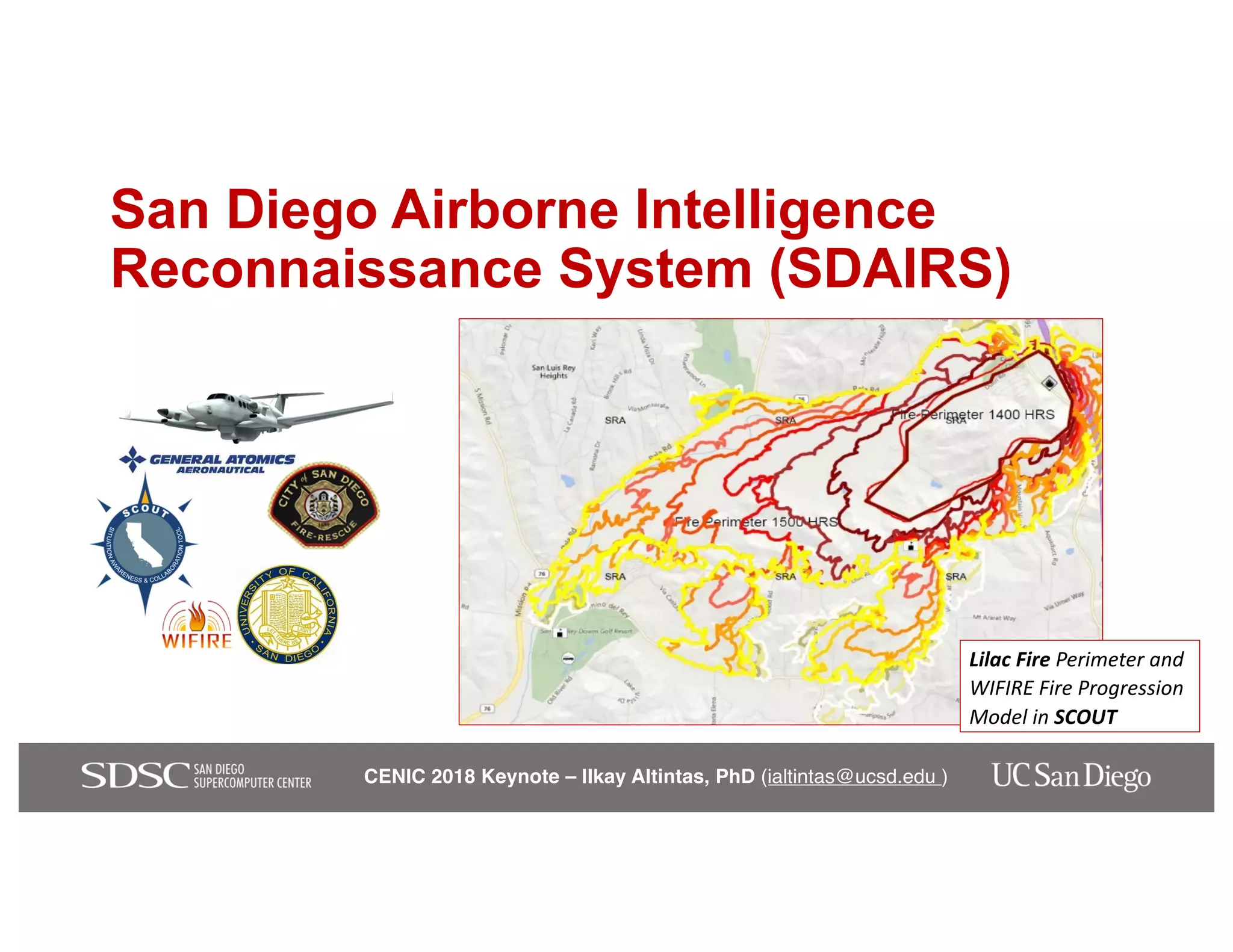

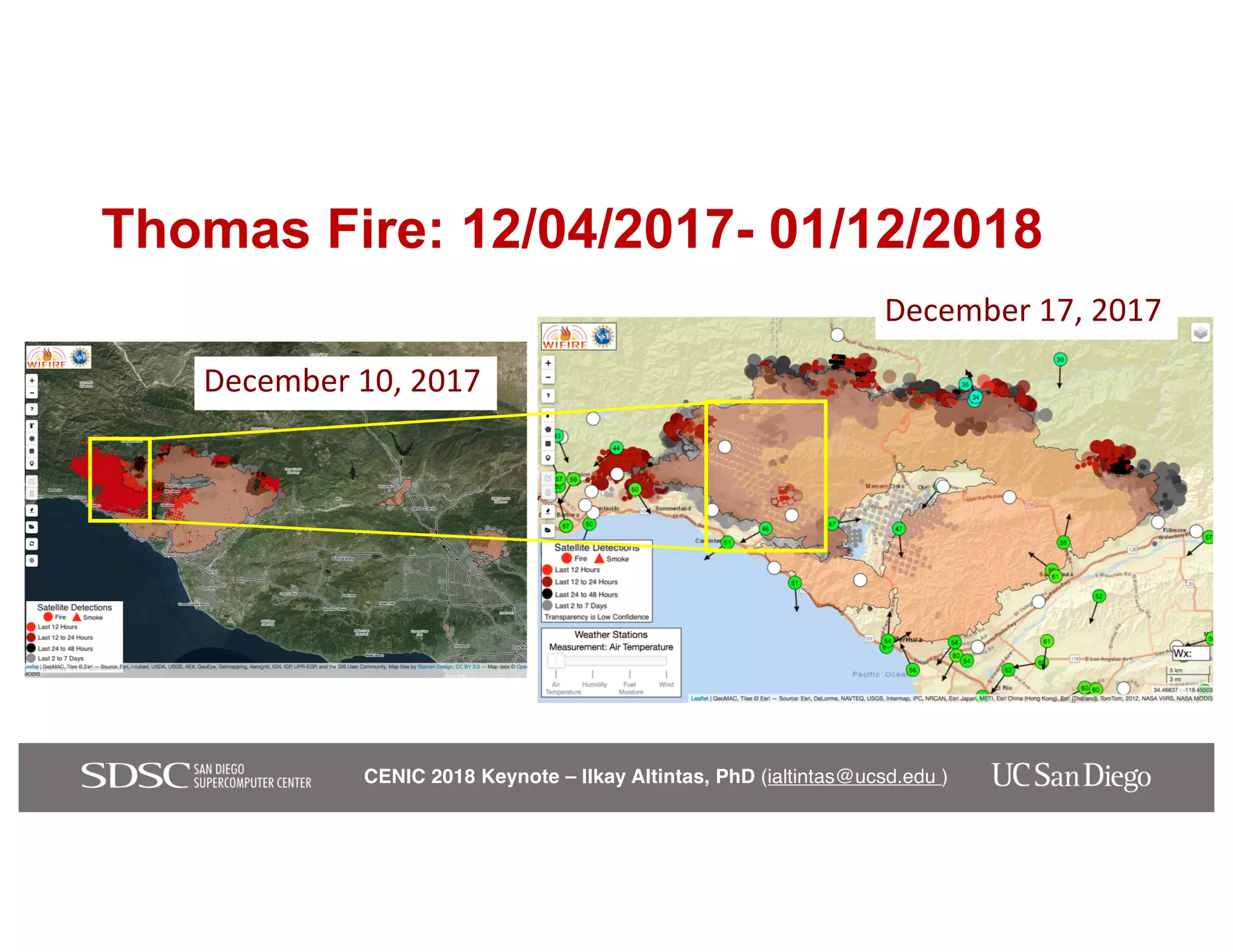

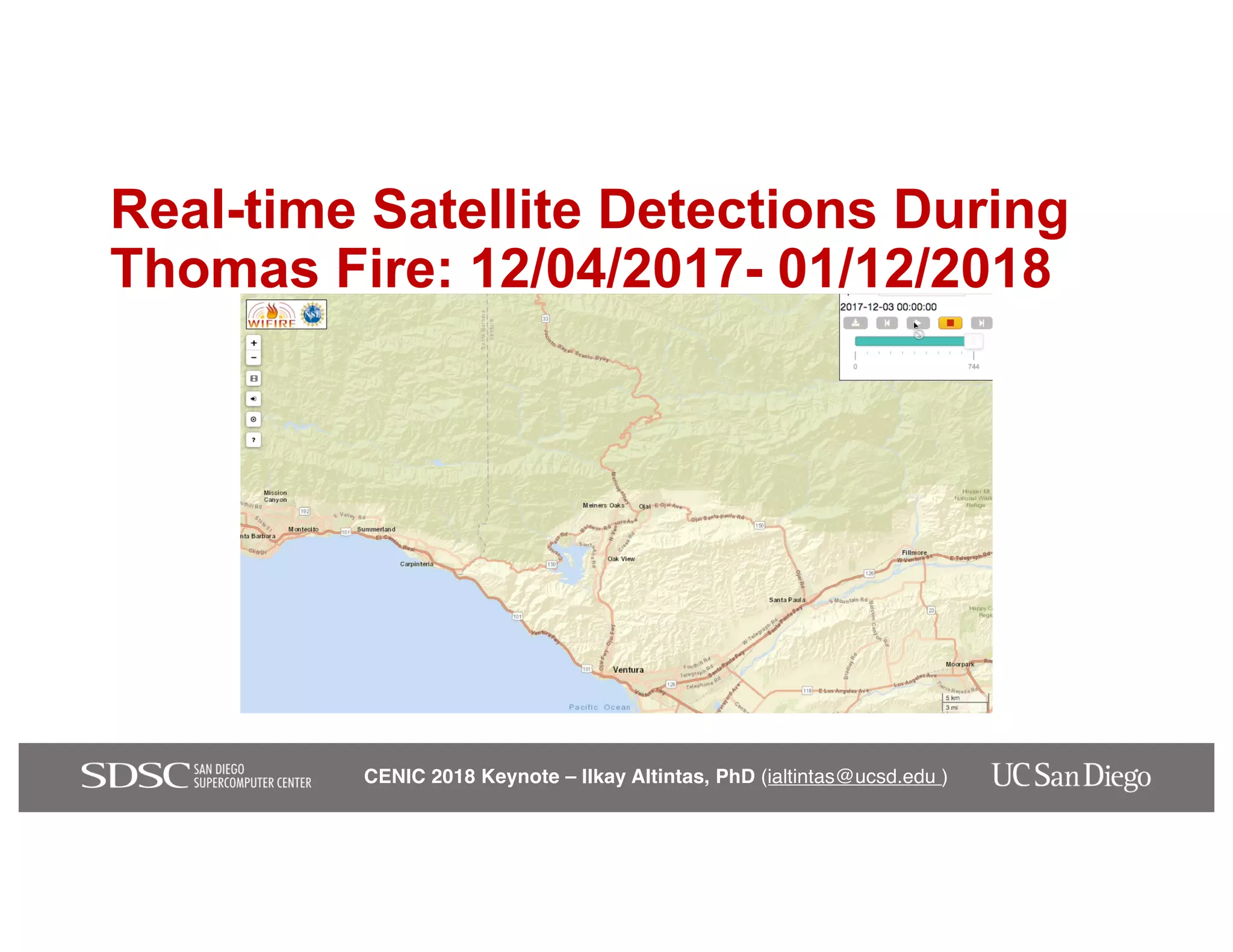

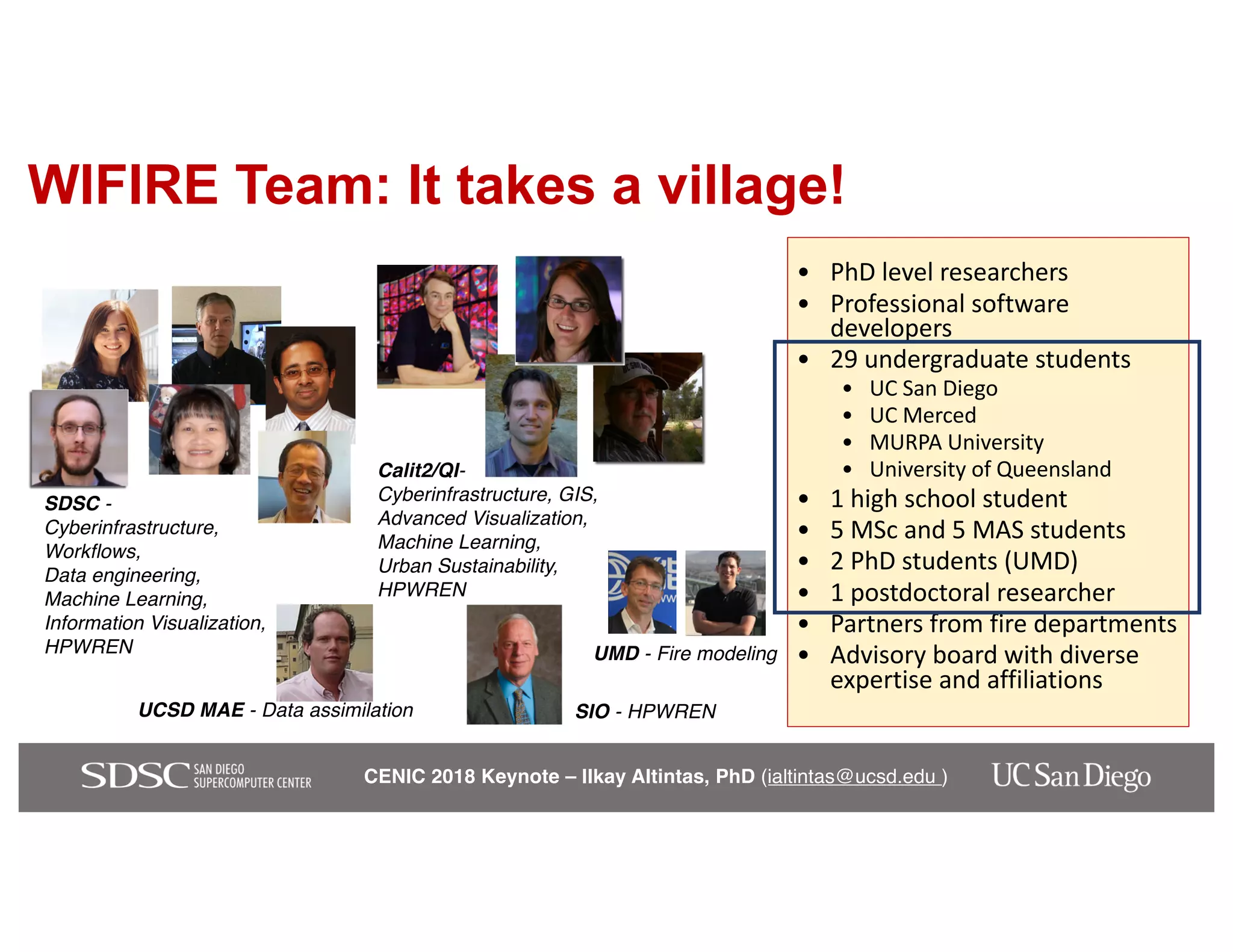

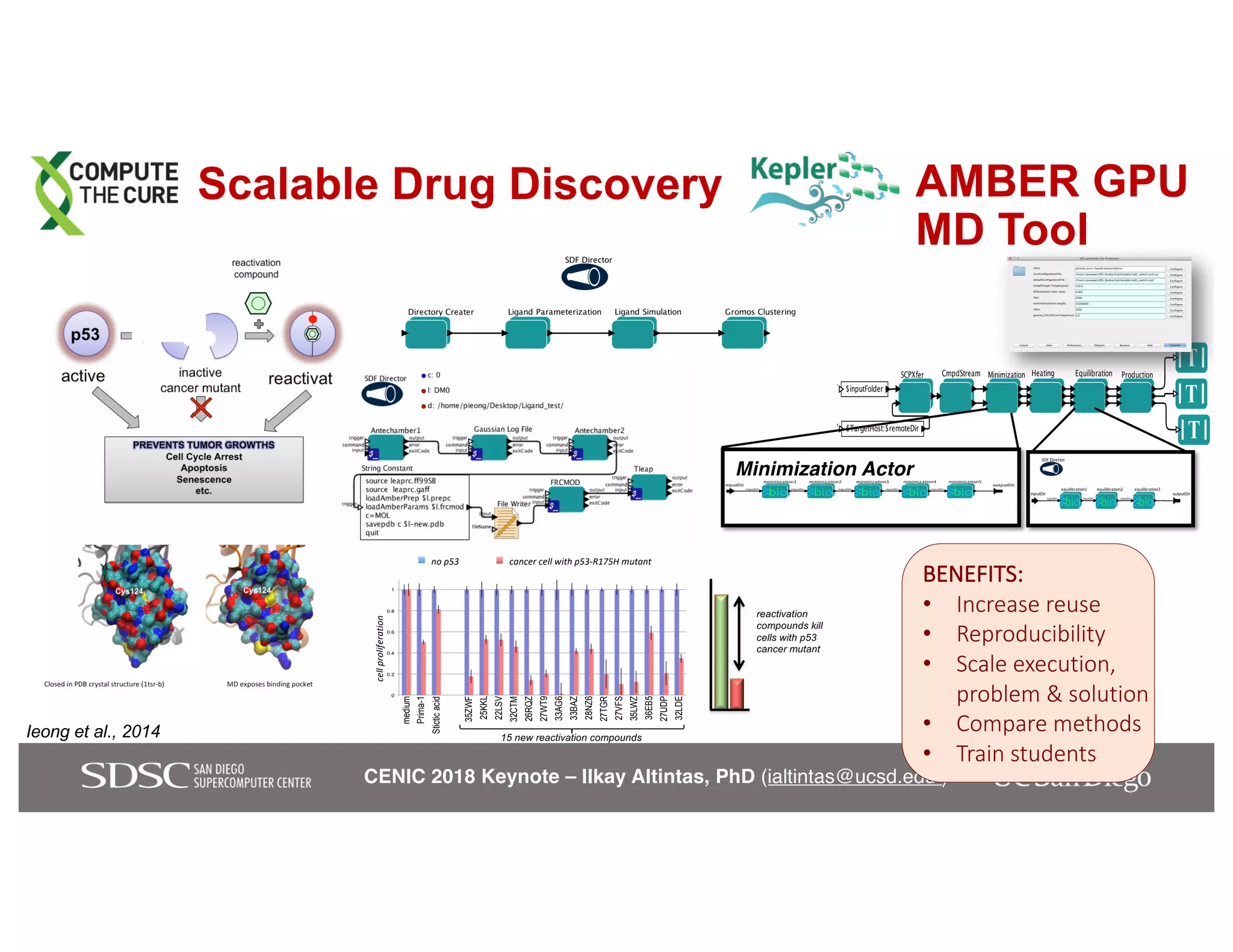

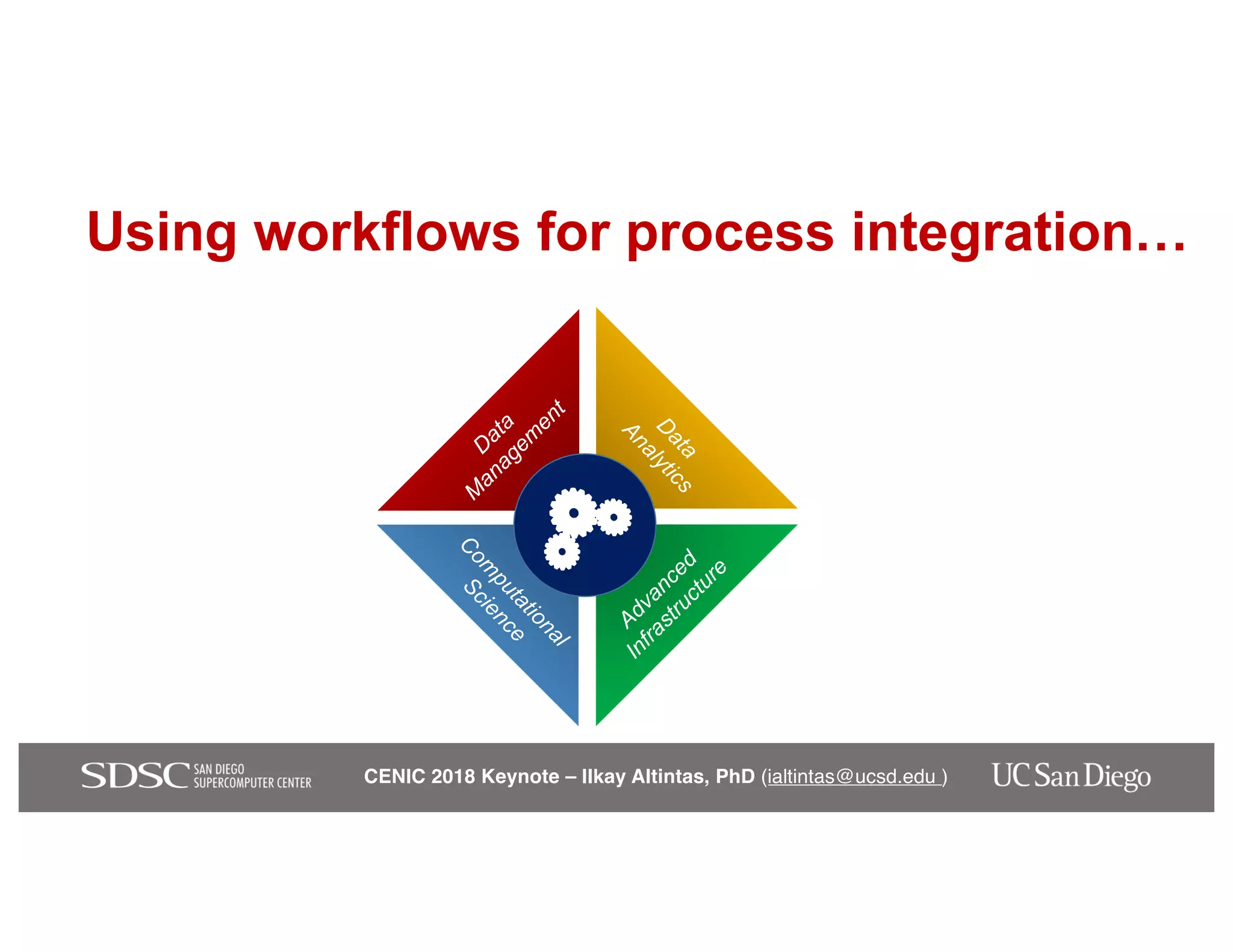

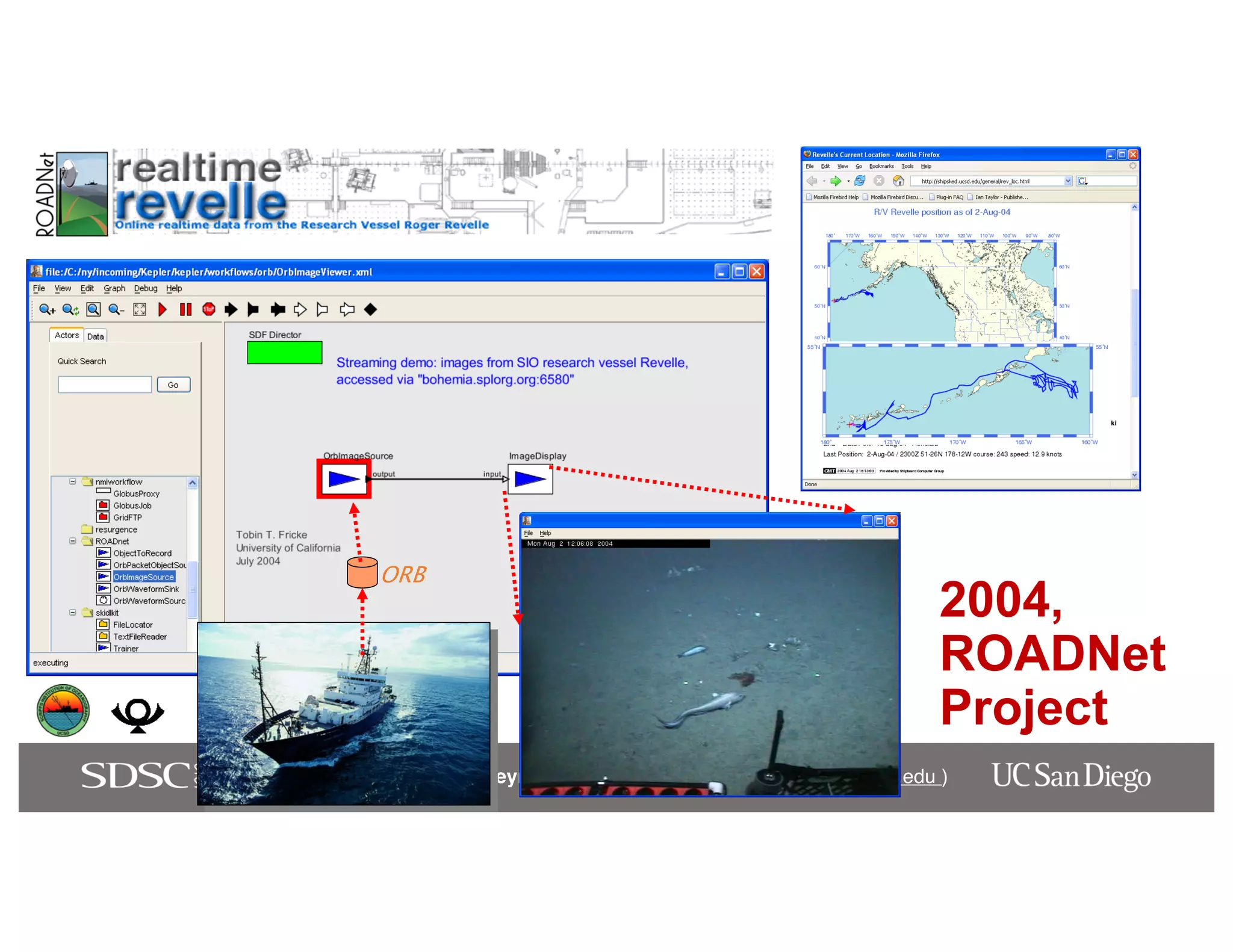

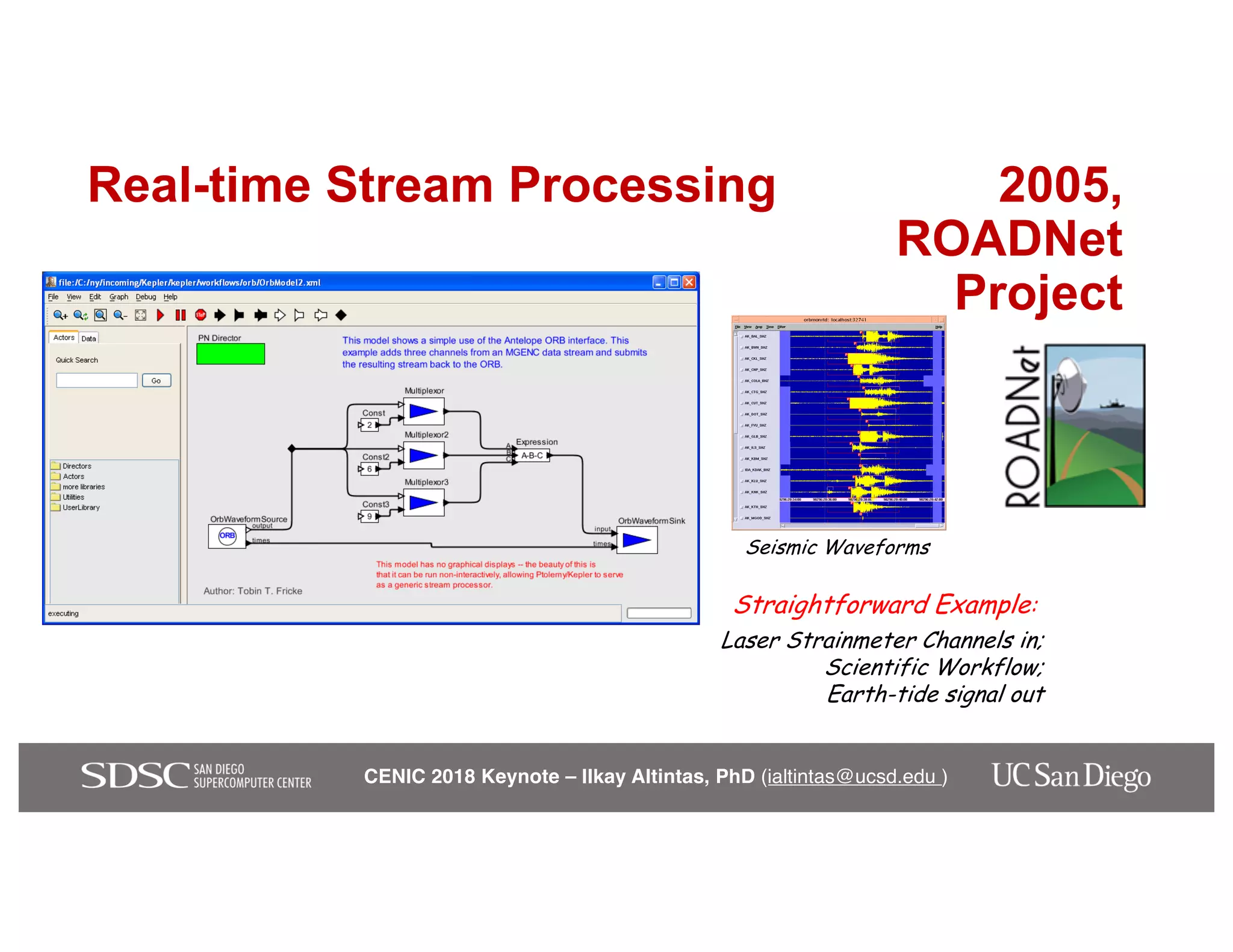

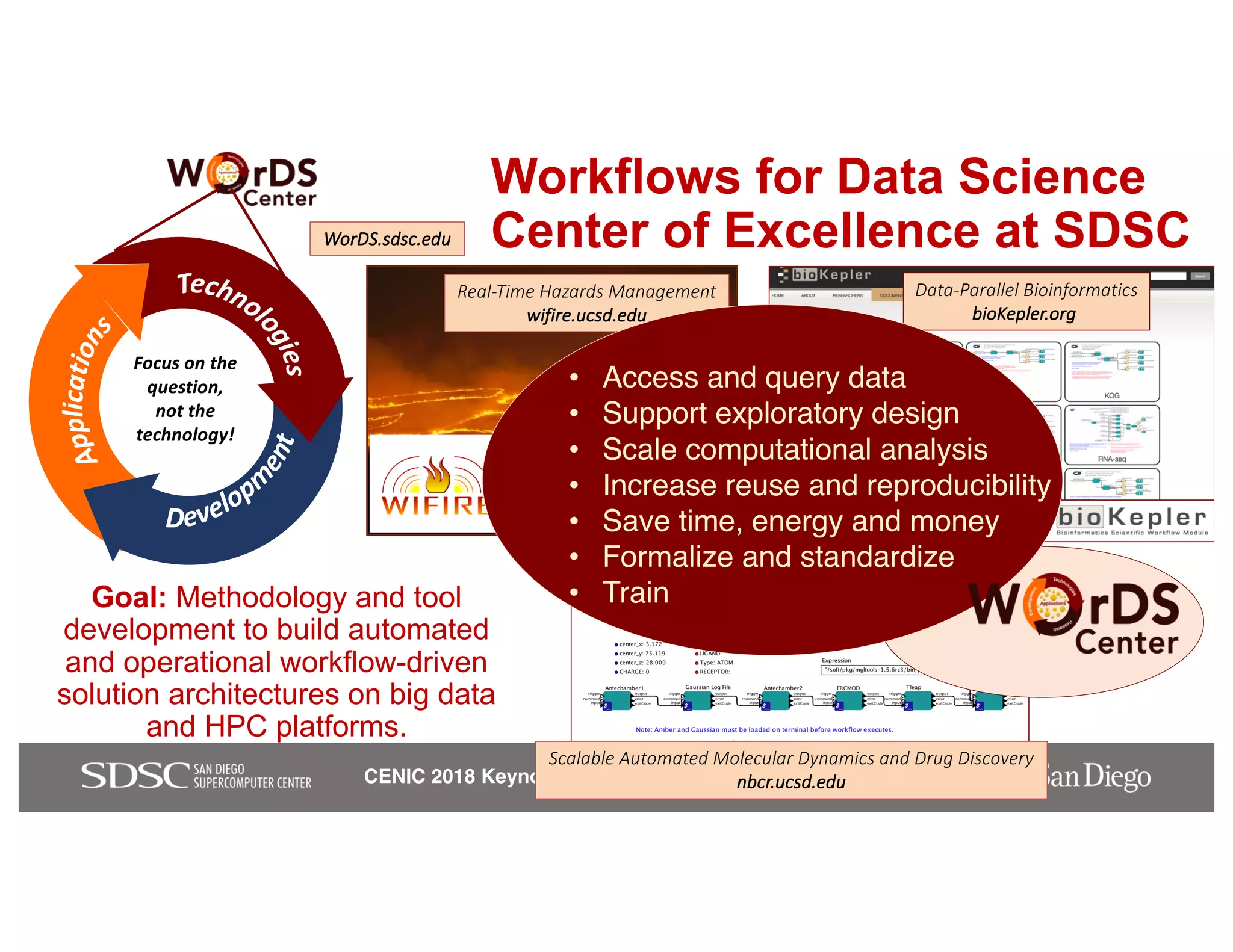

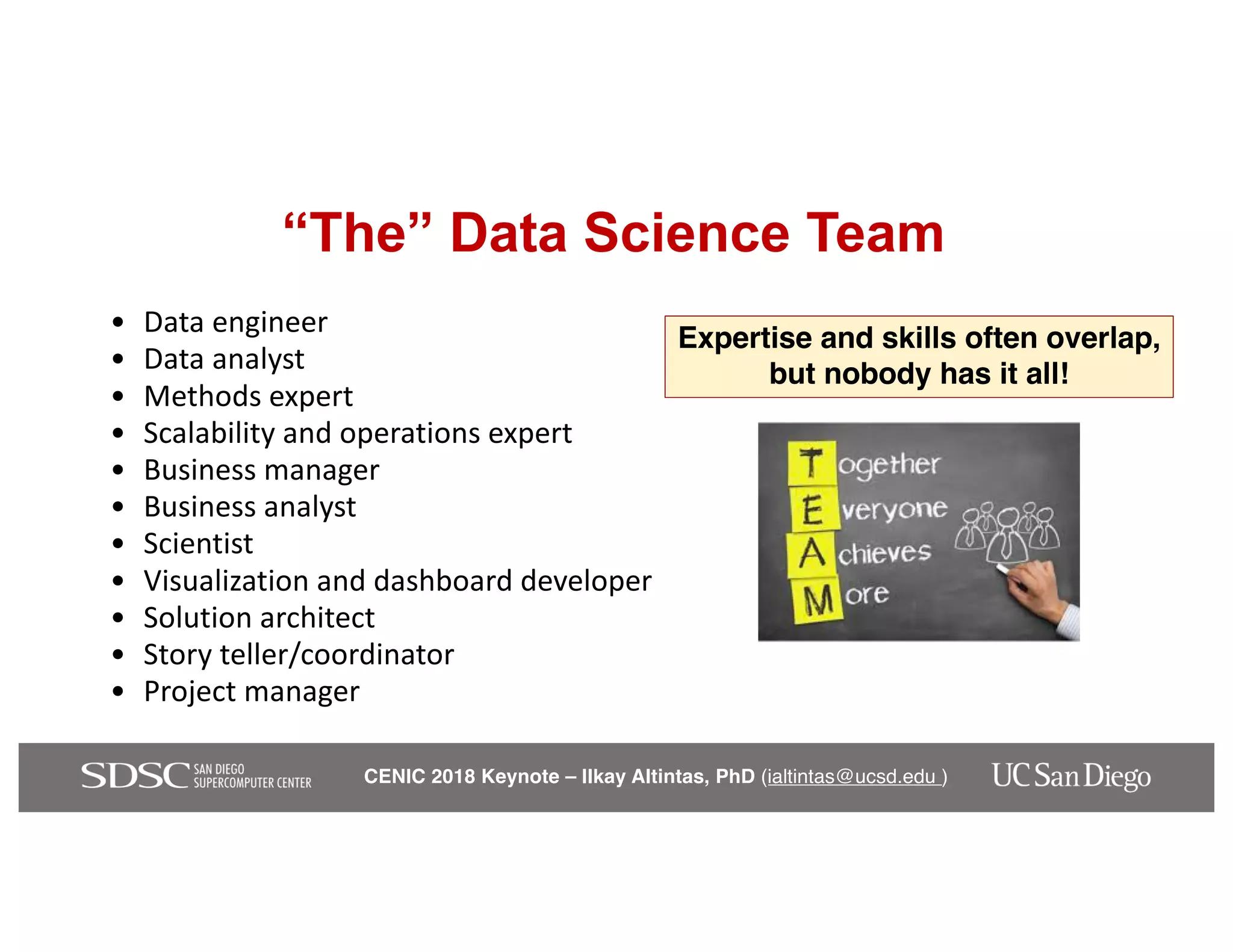

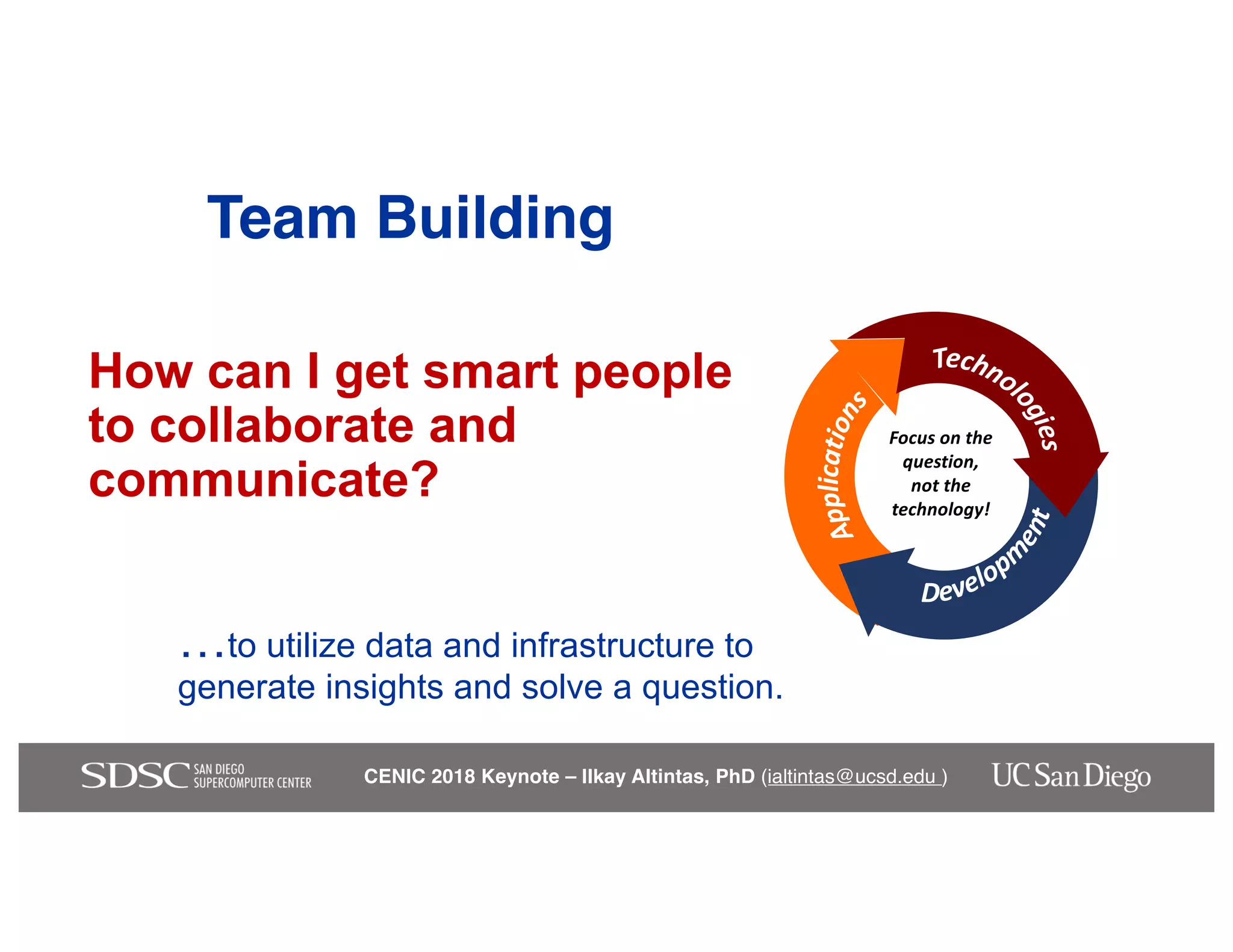

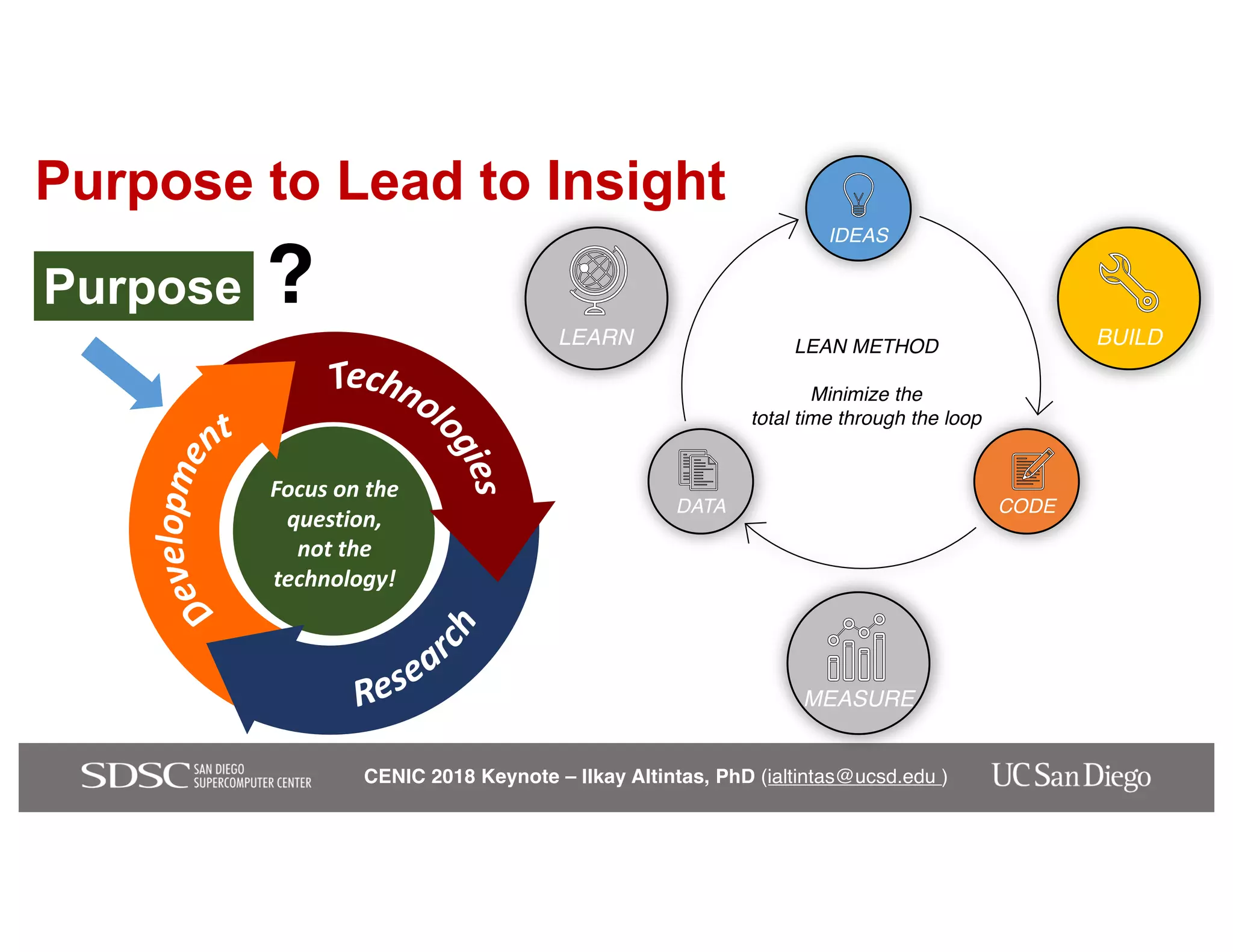

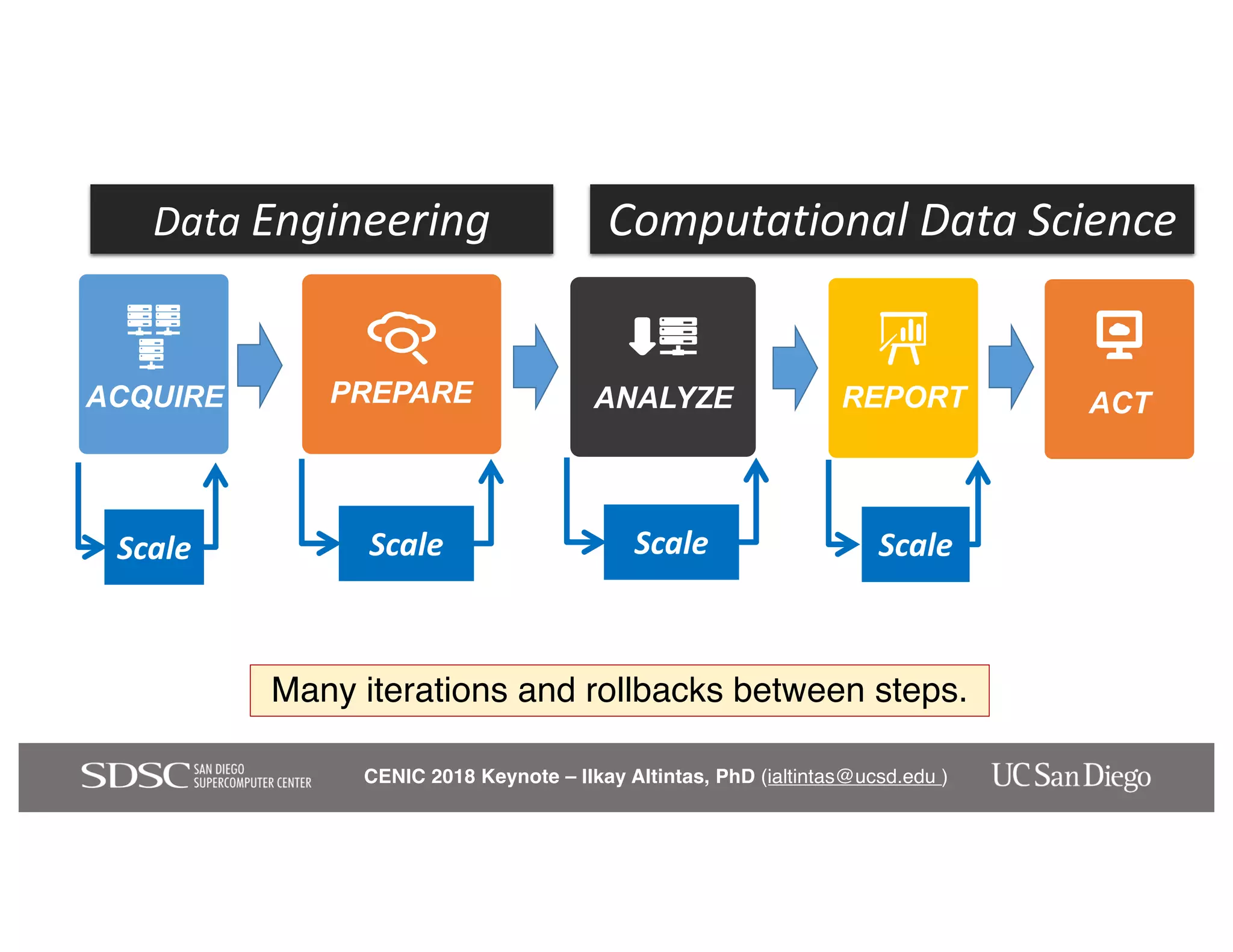

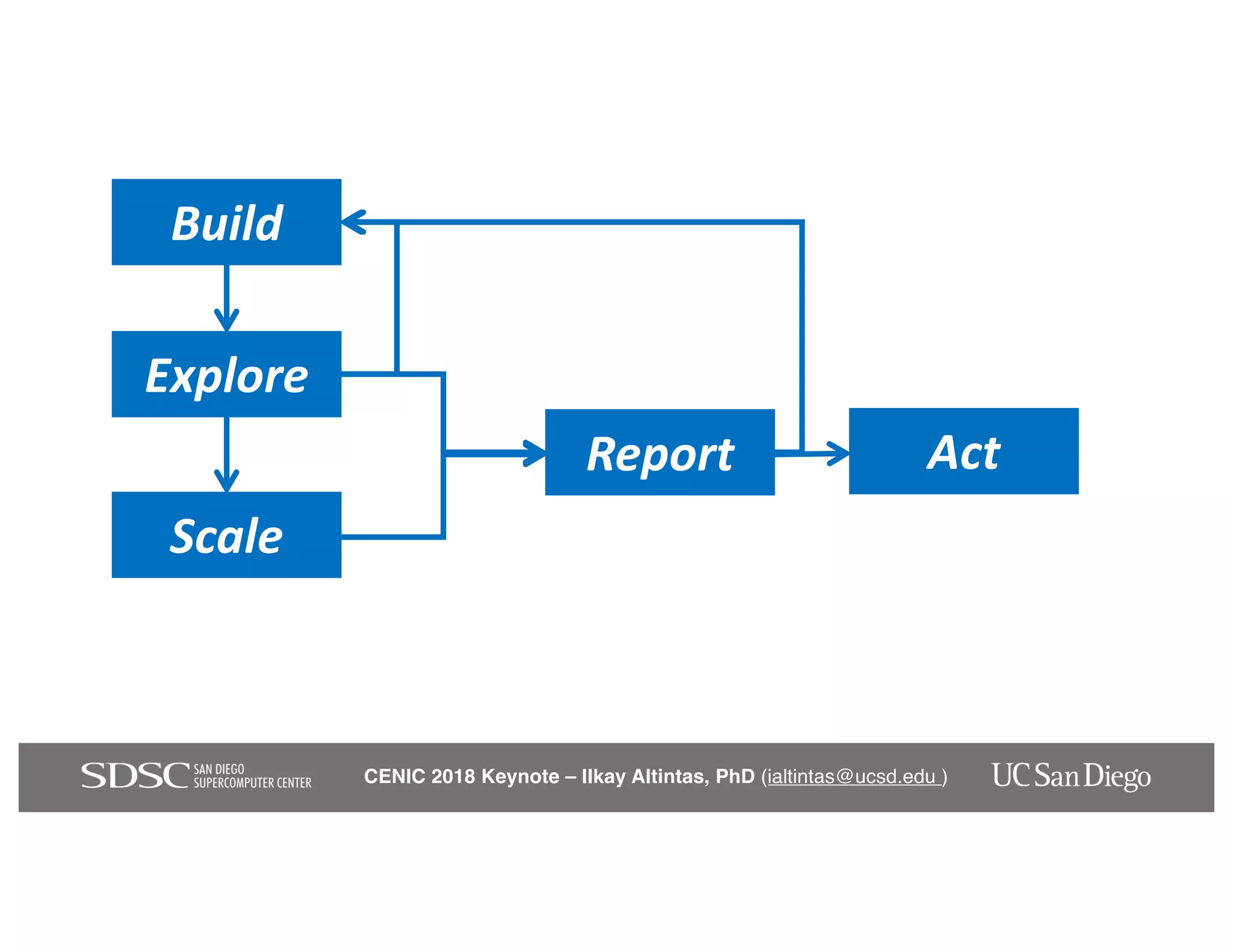

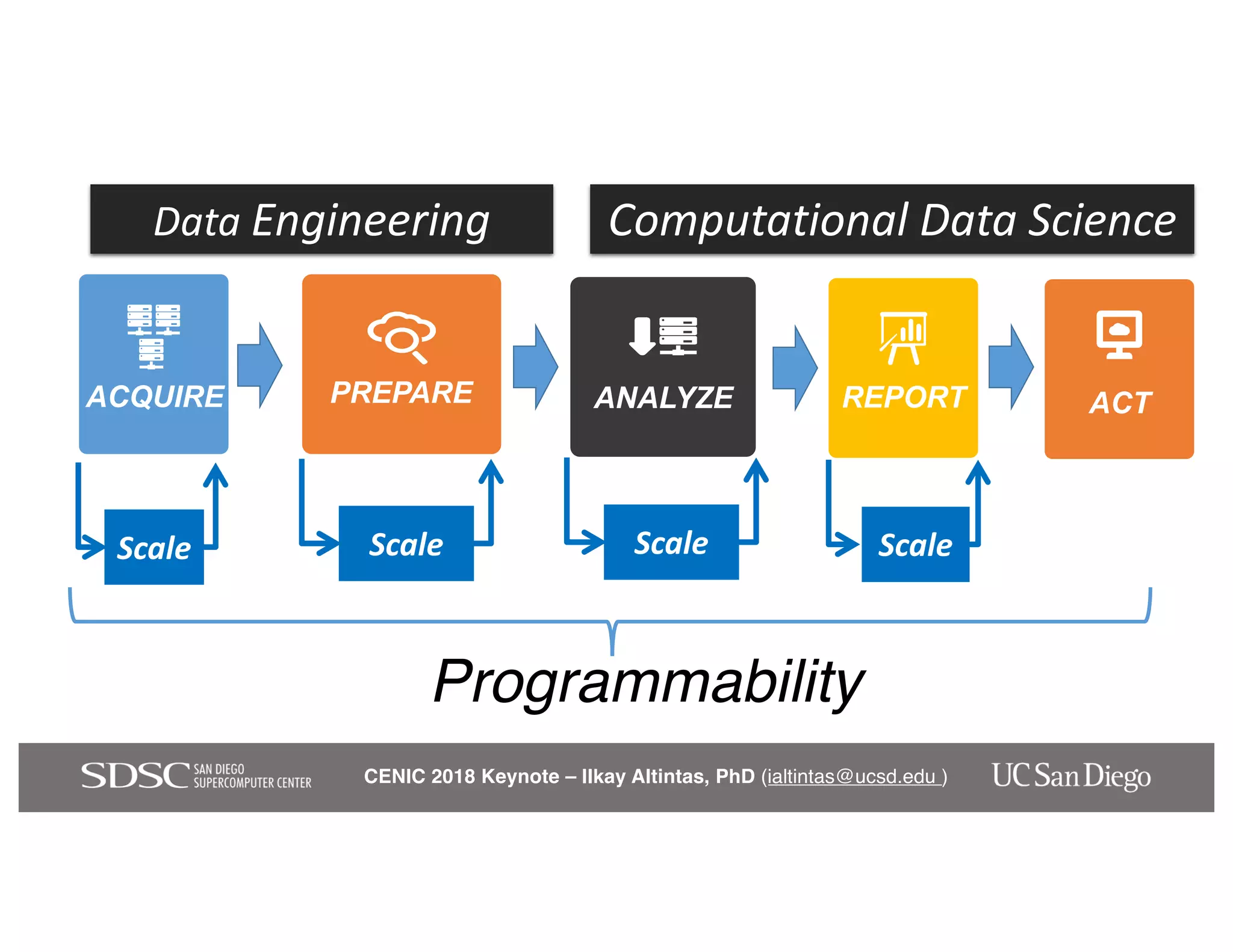

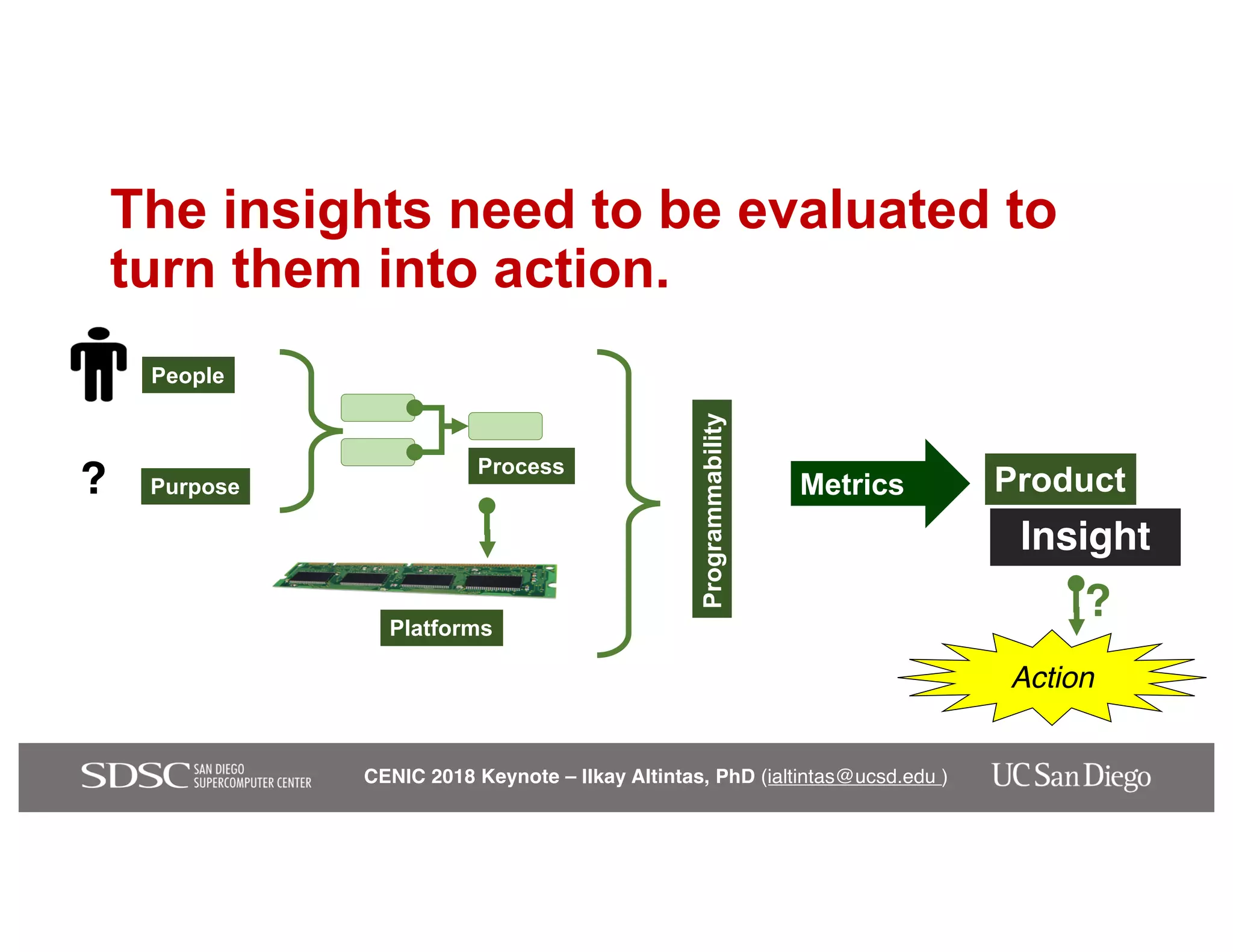

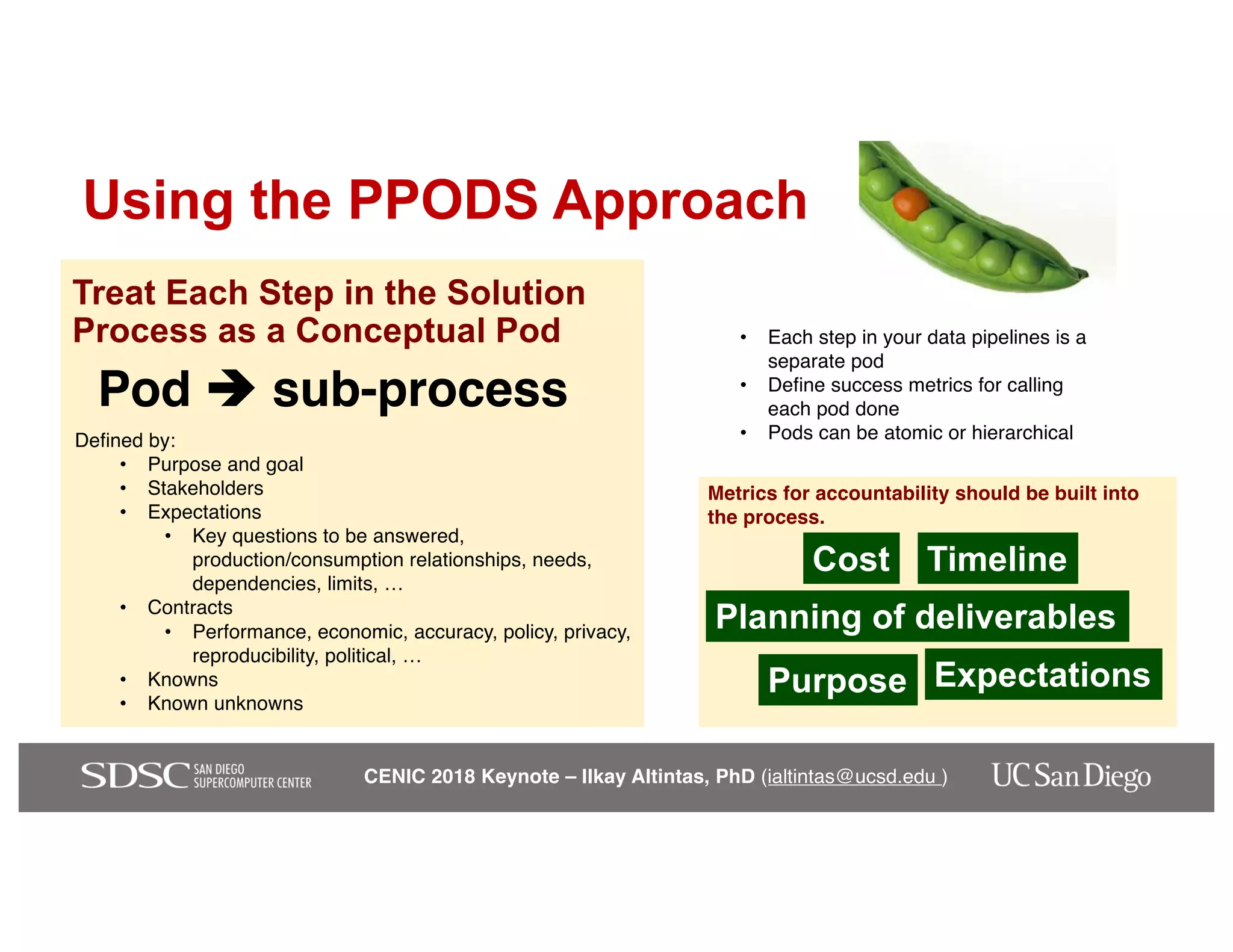

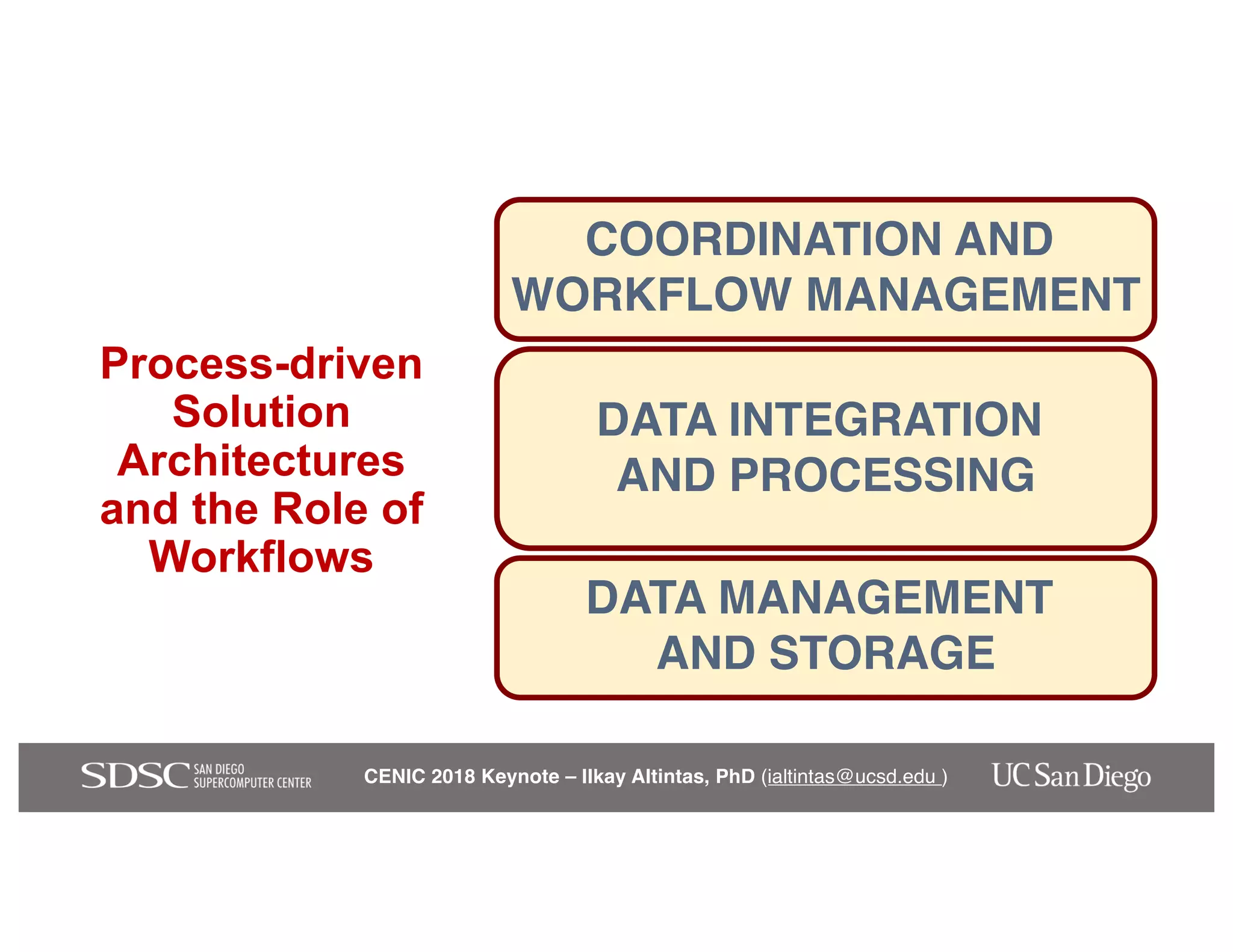

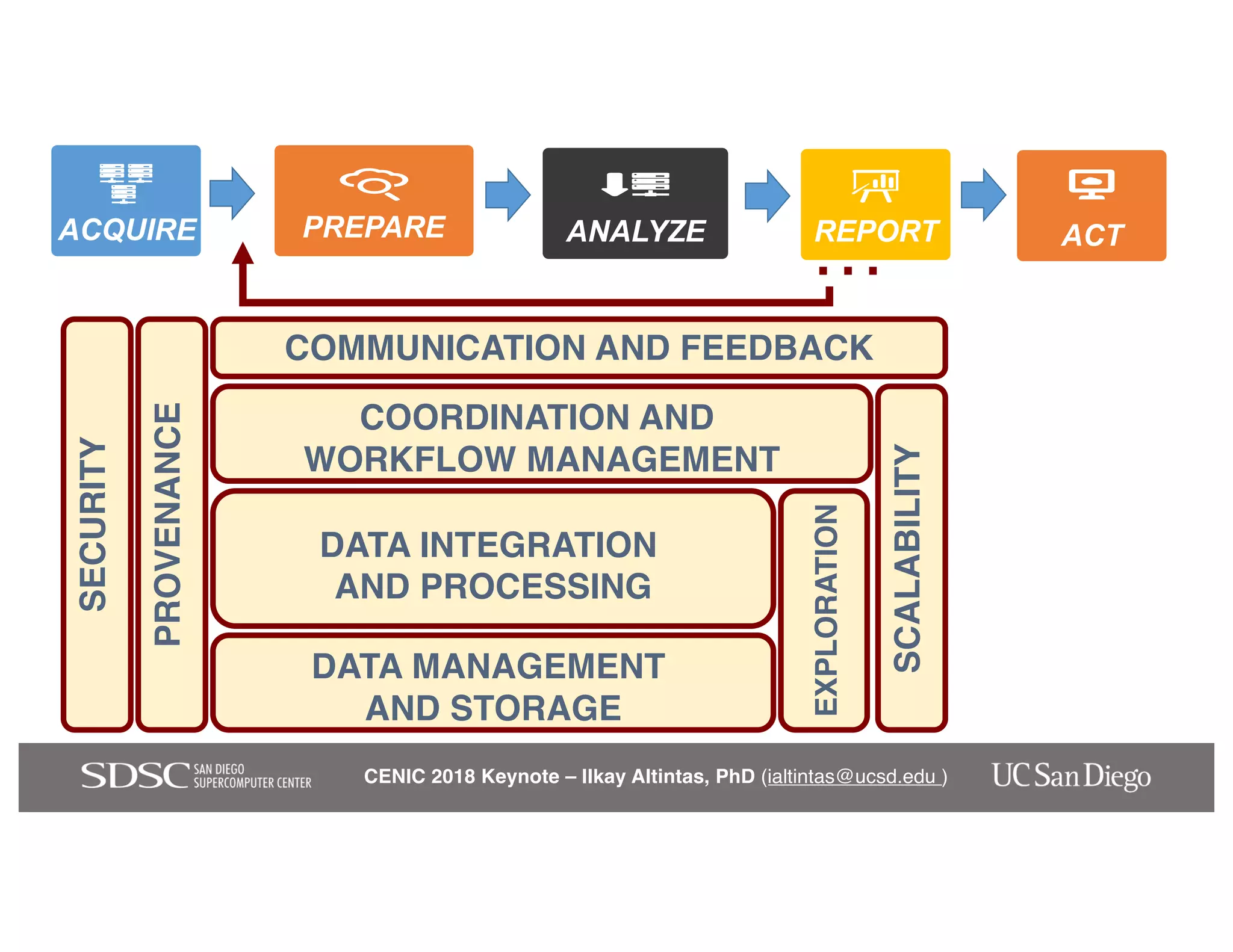

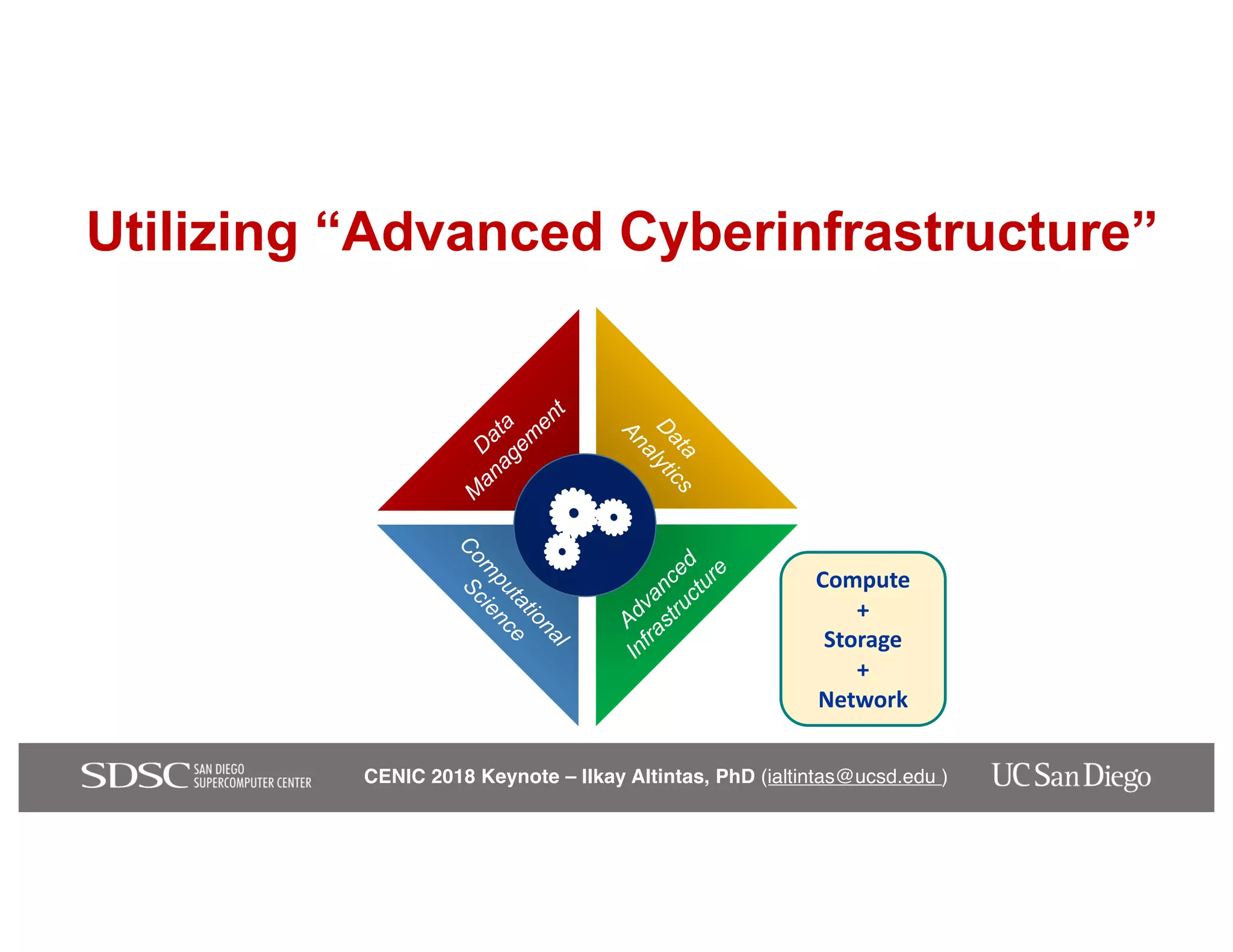

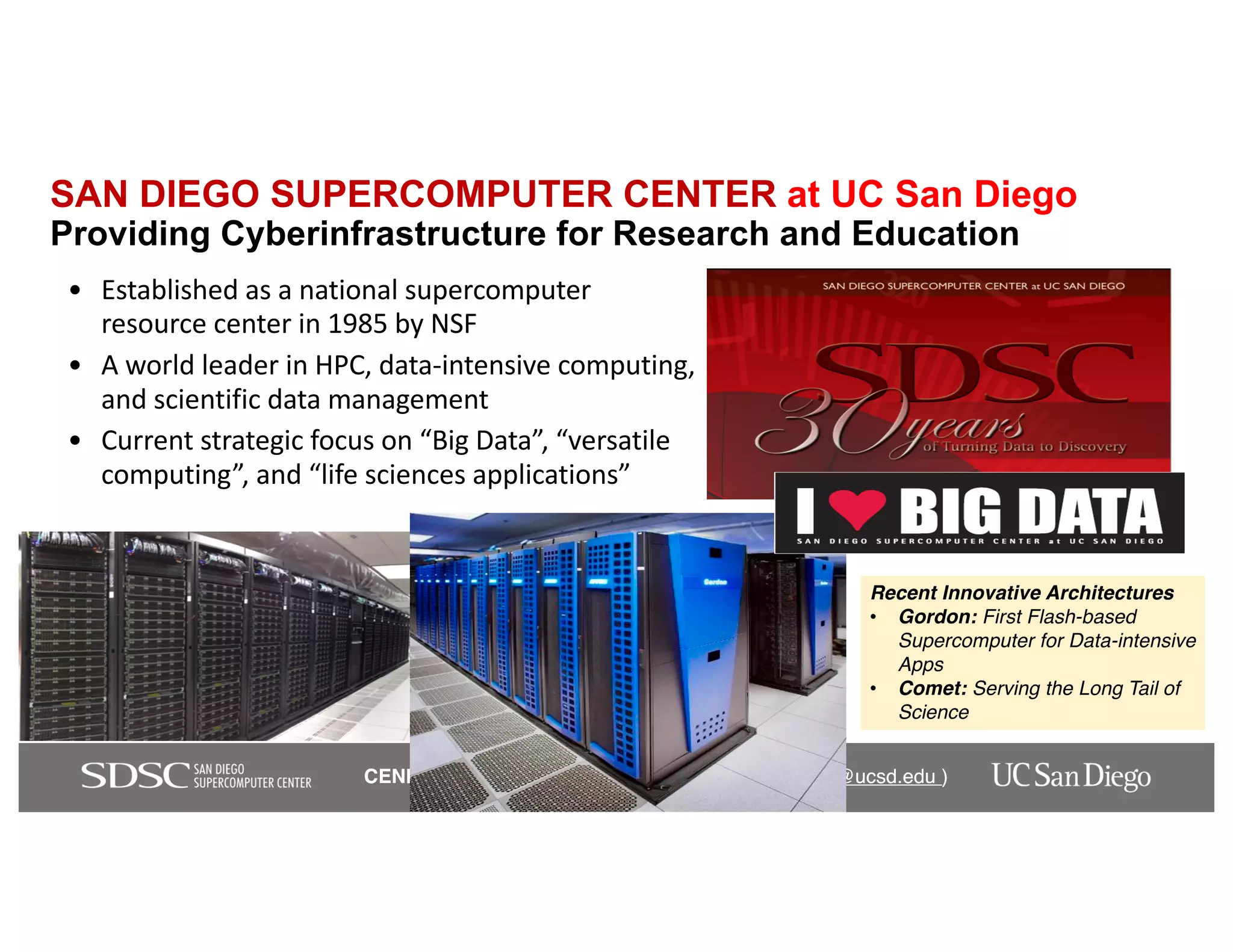

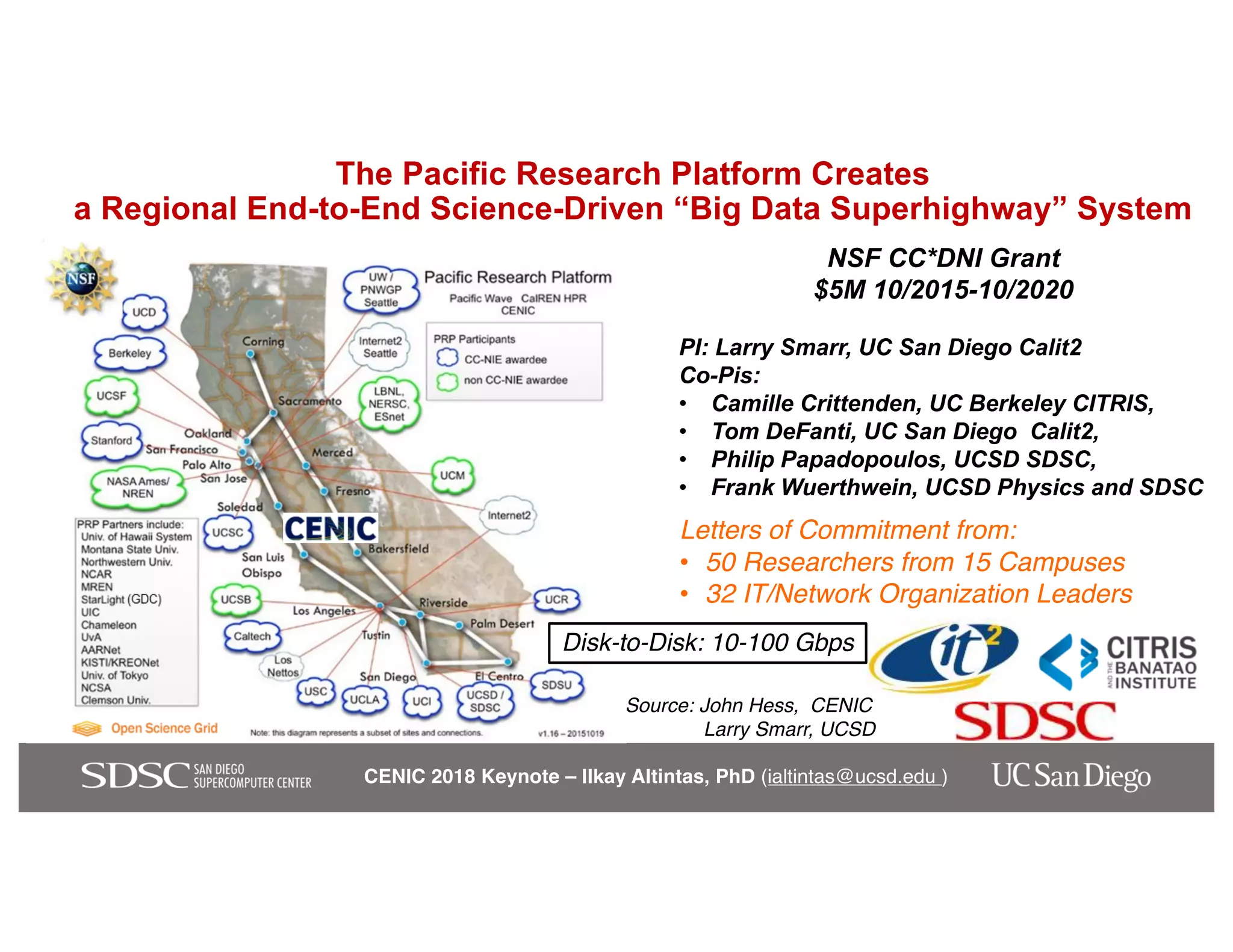

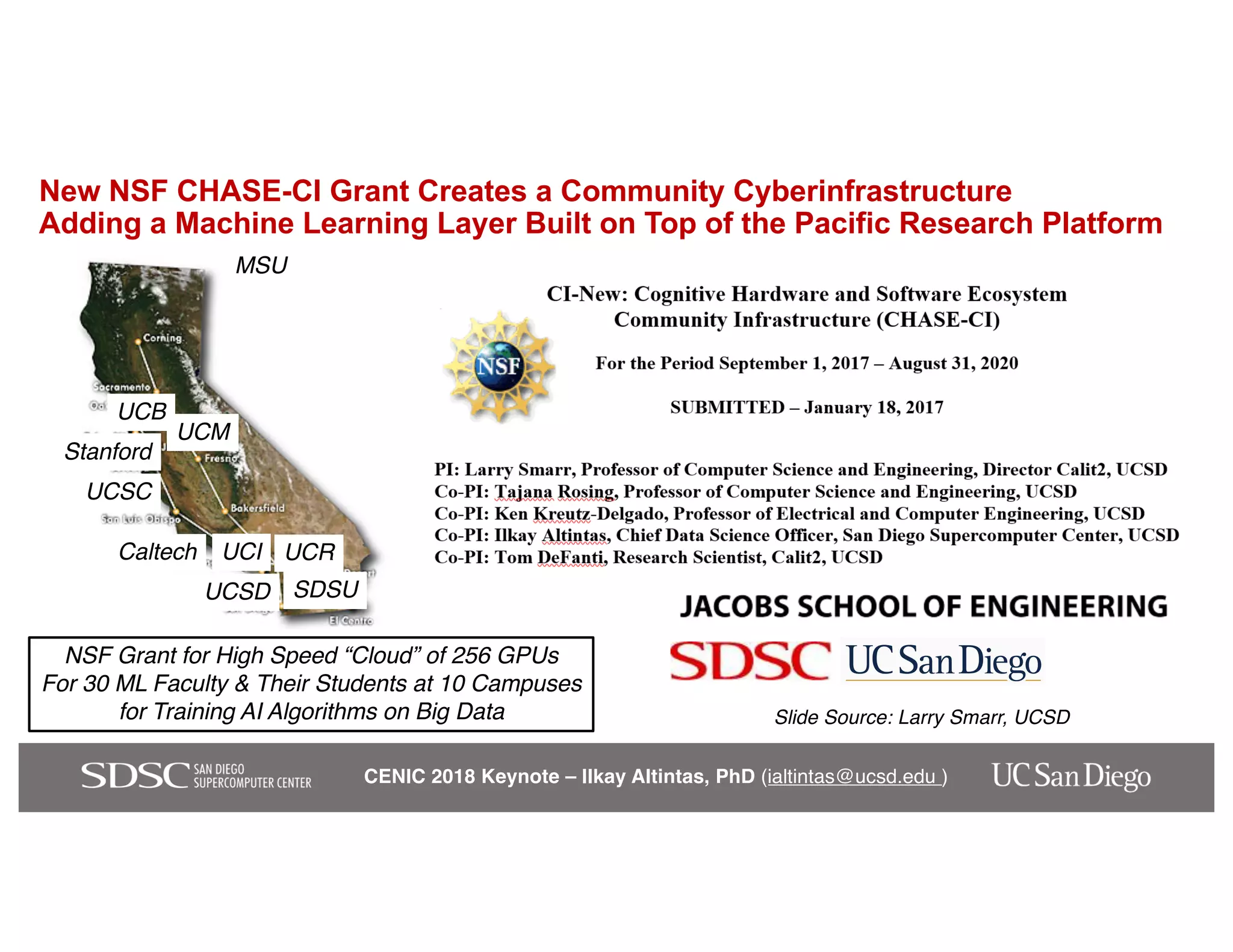

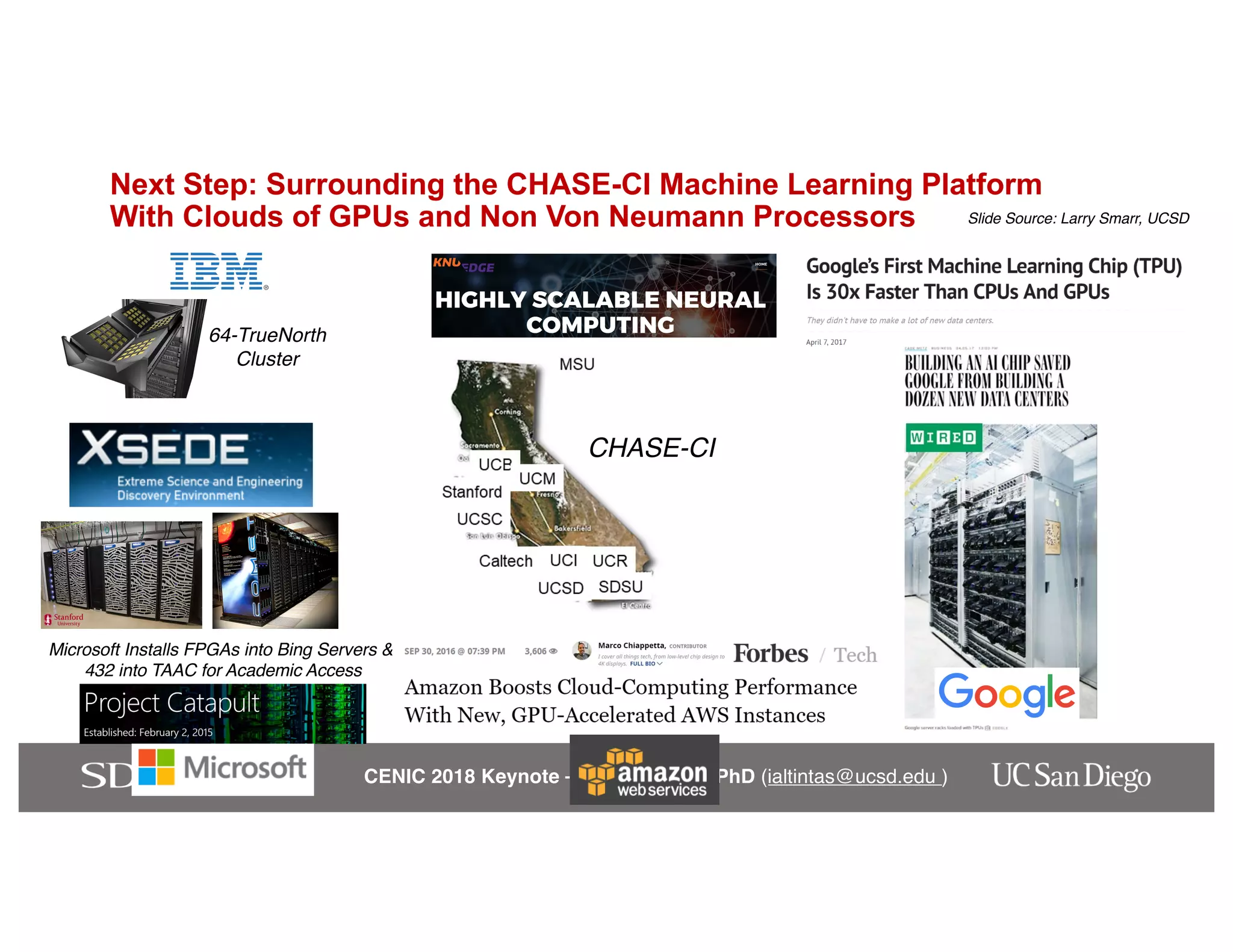

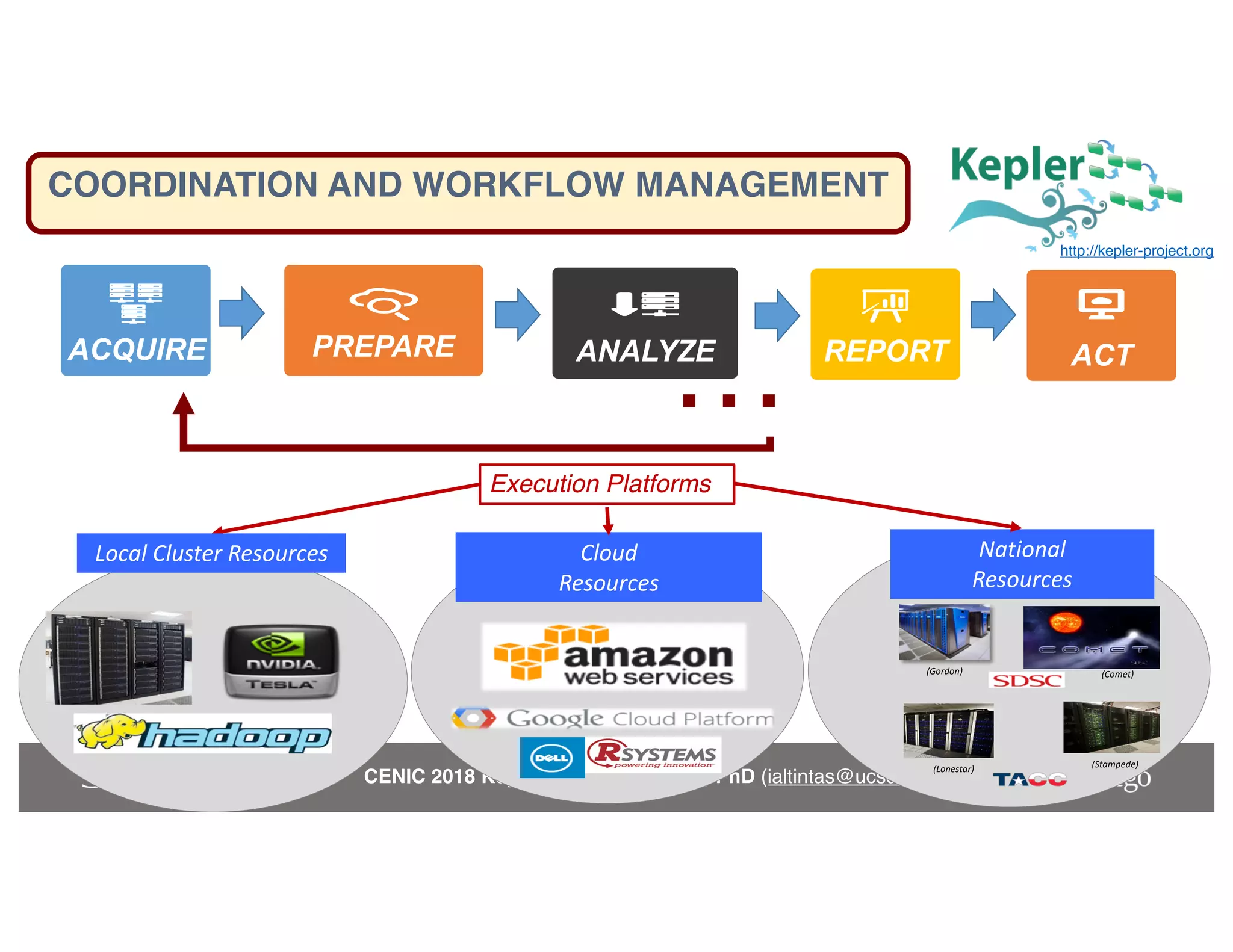

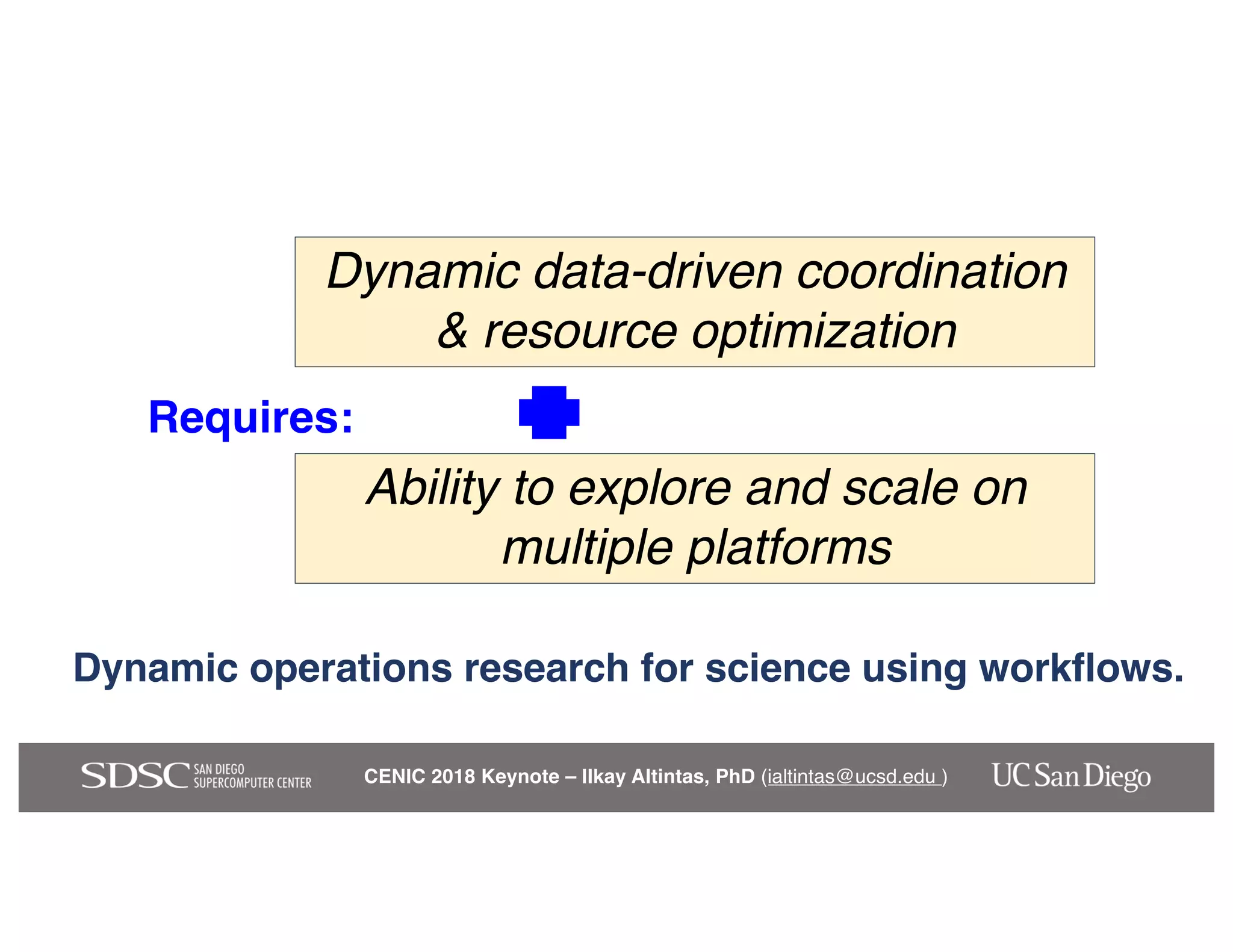

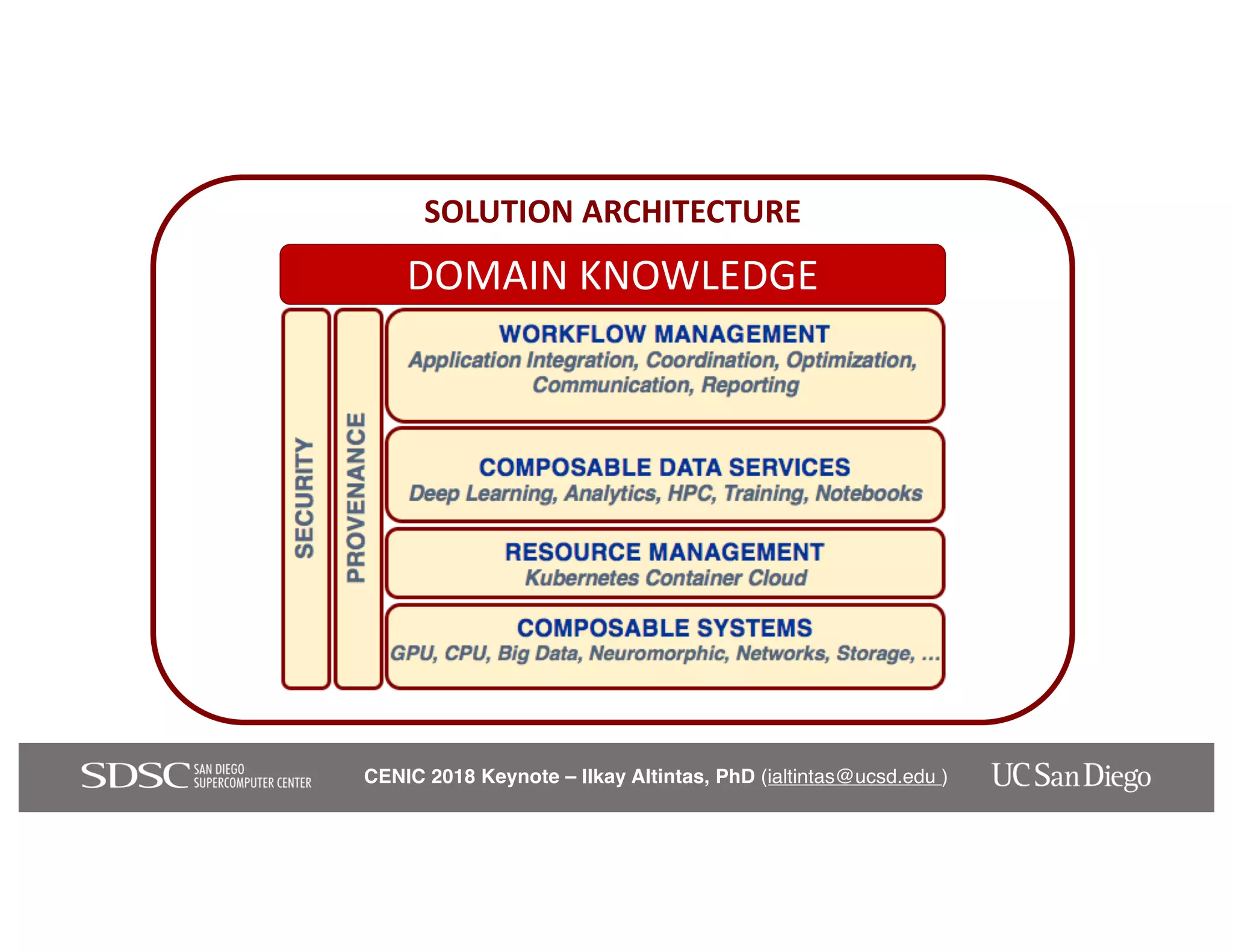

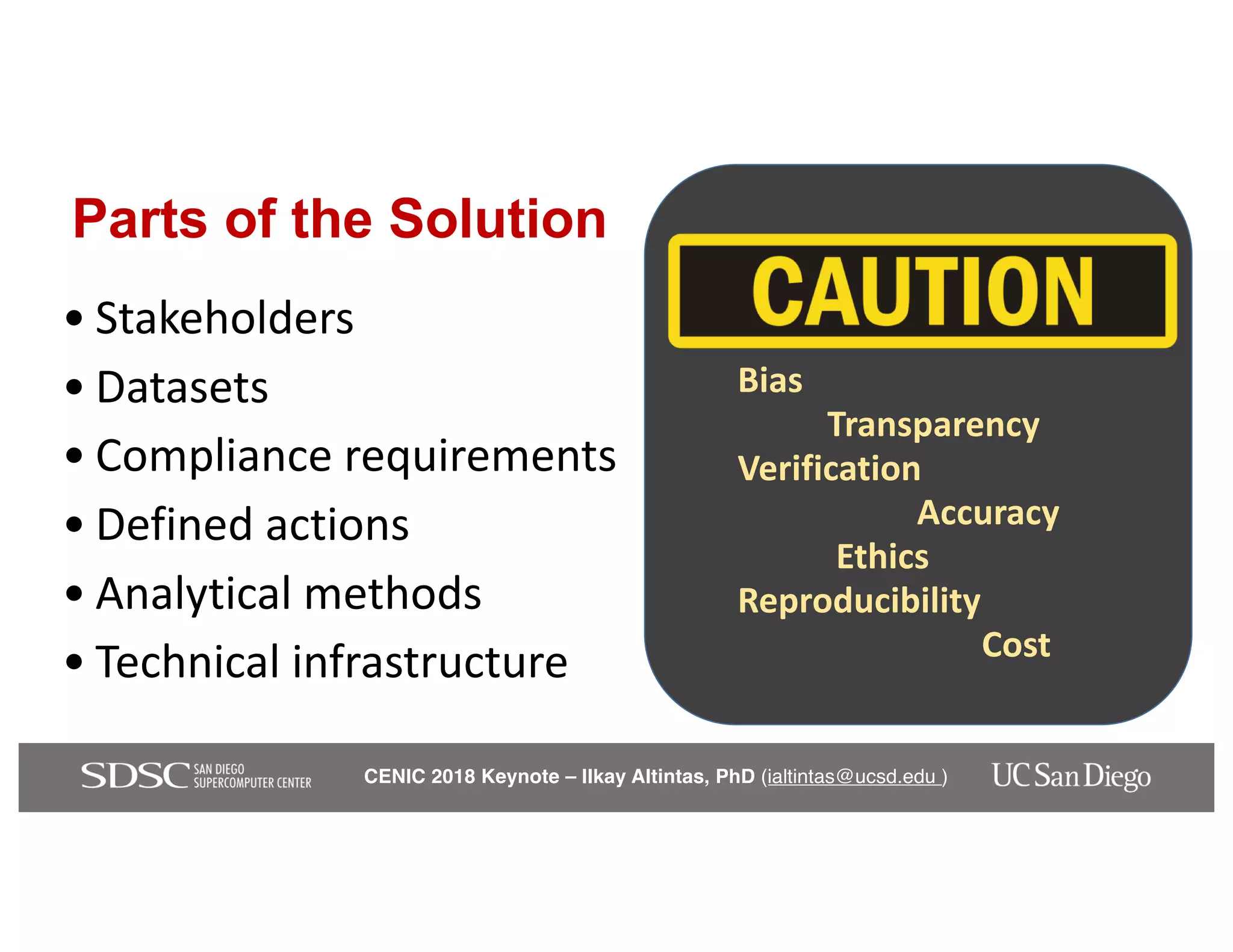

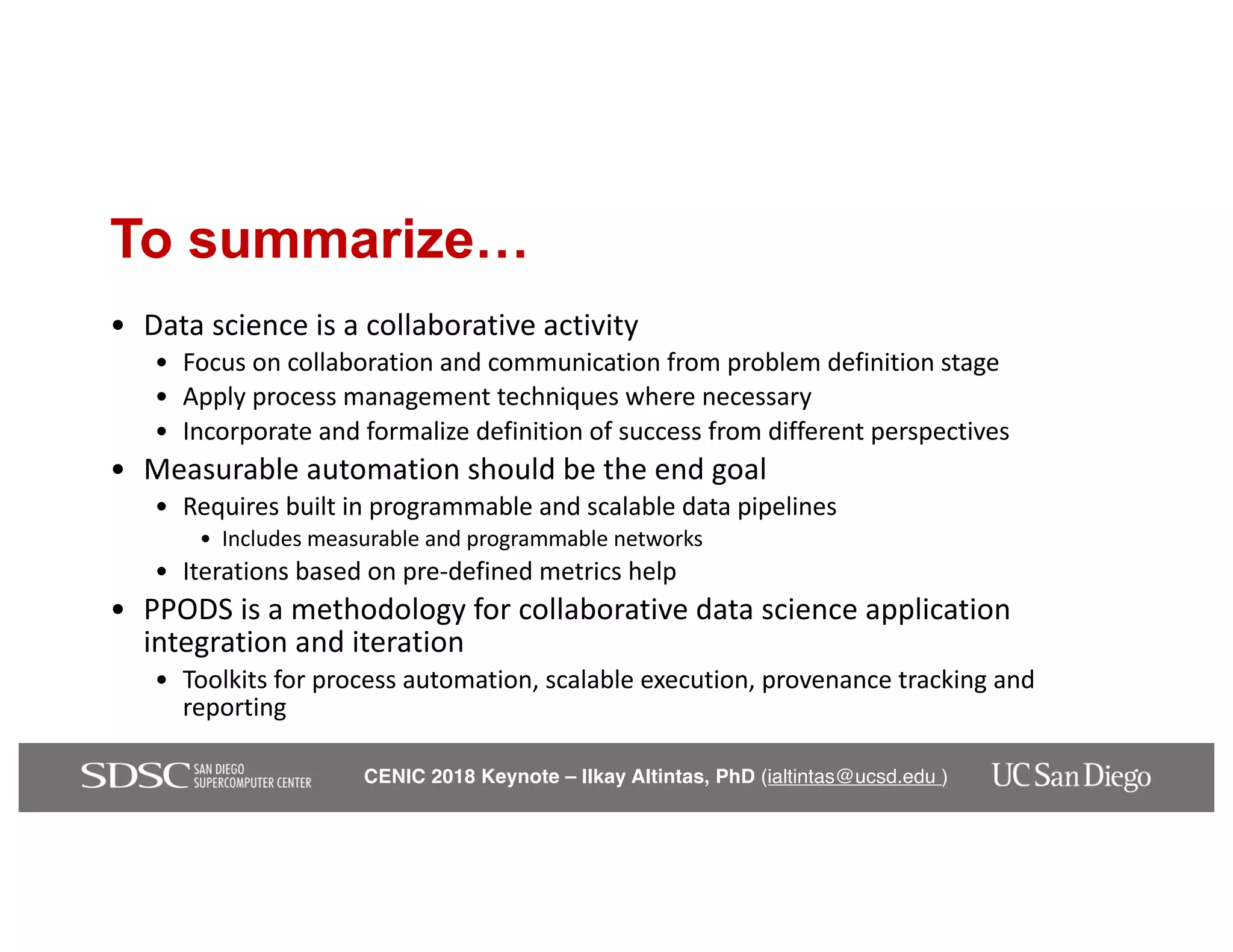

The keynote by Ilkay Altintas emphasizes the importance of collaborative data science in a highly networked environment for improving decision-making and predictive analytics. It covers the transformative impact of big data and advanced computing in sectors such as smart cities and wildfire management. The talk outlines the components of an effective data science ecosystem and the interdisciplinary teamwork required to reach actionable insights and solutions.