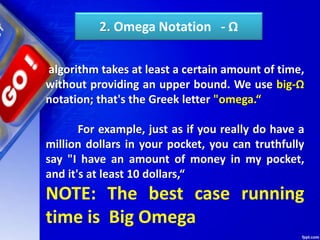

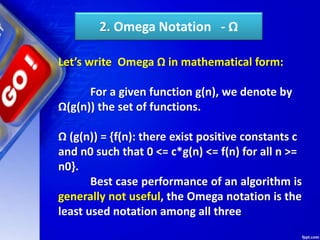

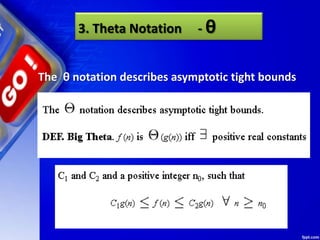

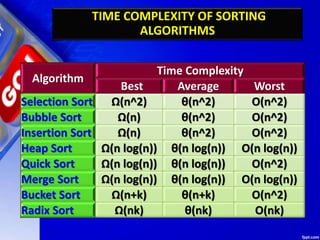

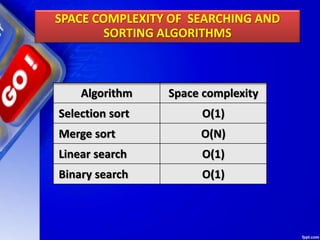

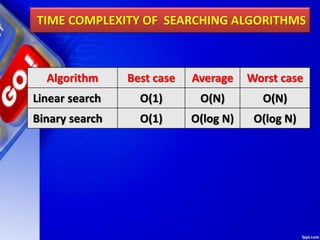

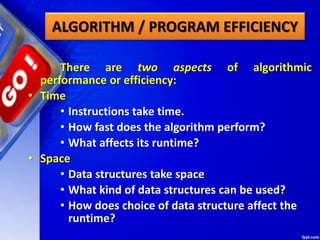

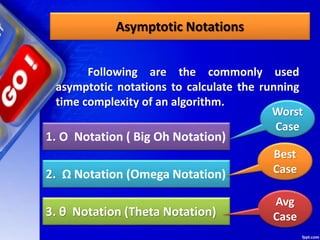

The document provides an introduction to algorithms and their analysis. It defines an algorithm as a step-by-step procedure for solving a problem in a finite amount of time. The analysis of algorithms aims to predict the cost of an algorithm in terms of resources and performance. There are two main aspects of algorithmic performance: time complexity, which measures how running time grows with input size, and space complexity, which measures how much memory an algorithm requires. Common asymptotic notations used to represent time complexity include Big-O, Omega, and Theta. The time complexity of common algorithms, such as searching, sorting, and traversing data structures, is discussed.
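As a rough illustration of the difference between time and space complexity, the sketch below contrasts two ways of adding up a list. Both function names are hypothetical and are not taken from the slides. Each makes one pass over the input, so each takes O(n) time, but only the second allocates memory that grows with the input.

```python
def total_in_place(values):
    """Sum with one running variable: O(n) time, O(1) extra space."""
    total = 0
    for v in values:
        total += v
    return total


def running_totals(values):
    """Store every partial sum in a new list: O(n) time, O(n) extra space."""
    totals = []
    total = 0
    for v in values:
        total += v
        totals.append(total)  # the output list grows with the input
    return totals


print(total_in_place([1, 2, 3, 4]))
print(running_totals([1, 2, 3, 4]))
```

The first call returns a single number regardless of input length, while the second returns a list with one entry per input item.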

![1. O Notation (Big Oh Notation)](https://image.slidesharecdn.com/chapter09designandanalysisofalgorithms-190528091701/85/Chapter-09-design-and-analysis-of-algorithms-67-320.jpg)

**2. O(n)**

    def printAllElementOfArray(lst2, size):
        # Print each of the first `size` items exactly once.
        for i in range(0, size):
            print(lst2[i])

    # Assumes lst2 is a list and size == len(lst2).
    printAllElementOfArray(lst2, size)

This function runs in O(n) time (or "linear time"), where n is the number of items in the array. If the array has 10 items, we have to print 10 times. If it has 1000 items, we have to print 1000 times.
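One way to check the linear-time claim is to count loop iterations instead of printing. This is only a sketch: `count_linear_steps` is a hypothetical helper introduced here for illustration, with each increment standing in for one print call.

```python
def count_linear_steps(items):
    """Count how many times a single loop body runs over `items`."""
    steps = 0
    for _ in items:
        steps += 1  # stands in for one print call
    return steps


for n in (10, 1000):
    print(n, count_linear_steps(range(n)))
```

The step count equals the input size in both cases, which is what O(n) describes.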

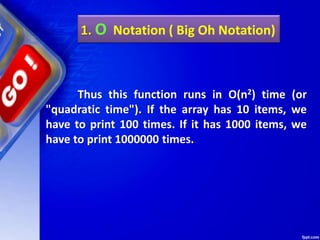

![1. O Notation (Big Oh Notation)](https://image.slidesharecdn.com/chapter09designandanalysisofalgorithms-190528091701/85/Chapter-09-design-and-analysis-of-algorithms-68-320.jpg)

**3. O(n²)**

Here we're nesting two loops. If our array has n items, our outer loop runs n times and our inner loop runs n times for each iteration of the outer loop, giving us n² total prints.

    def printAllPossibleOrderedPairs(lst2, size):
        for i in range(0, size):
            # The inner loop runs `size` times per outer iteration.
            for j in range(0, size):
                print(lst2[i], lst2[j])
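The n × n count can be confirmed by building the ordered pairs and measuring how many there are. The sketch below uses `itertools.product` from the standard library rather than the explicit nested loops on the slide. `ordered_pairs` is a hypothetical name chosen for this example.

```python
from itertools import product


def ordered_pairs(items):
    """Return every ordered pair (a, b) drawn from `items`, including (a, a)."""
    return list(product(items, repeat=2))


pairs = ordered_pairs(["a", "b", "c"])
print(len(pairs))   # 3 items give 3 * 3 pairs
print(pairs[:4])
```

With 10 items the same function returns 100 pairs, so a tenfold increase in input gives a hundredfold increase in work.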

![1. O Notation (Big Oh Notation)](https://image.slidesharecdn.com/chapter09designandanalysisofalgorithms-190528091701/85/Chapter-09-design-and-analysis-of-algorithms-71-320.jpg)

**5. Drop the constants**

When you're calculating the big O complexity of something, you just throw out the constants. Like:

    def printAllItemsTwice(lst2, size):
        # First pass: size prints.
        for i in range(0, size):
            print(lst2[i])
        # Second pass: another size prints.
        for i in range(0, size):
            print(lst2[i])

This is O(2n), which we just call O(n).
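A short sketch of why the factor of 2 can be dropped: when the input grows tenfold, the two-pass step count also grows tenfold, exactly as a single pass would. `count_two_pass_steps` is a hypothetical helper that counts iterations in place of the print calls.

```python
def count_two_pass_steps(items):
    """Count loop iterations for two back-to-back passes over `items`."""
    steps = 0
    for _ in items:  # first pass
        steps += 1
    for _ in items:  # second pass
        steps += 1
    return steps


small = count_two_pass_steps(range(100))
large = count_two_pass_steps(range(1000))
print(small, large, large / small)
```

The constant changes the absolute count (200 and 2000 steps here) but not the growth factor of 10, and the growth factor is what big O notation records.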

![1. O Notation (Big Oh Notation)](https://image.slidesharecdn.com/chapter09designandanalysisofalgorithms-190528091701/85/Chapter-09-design-and-analysis-of-algorithms-72-320.jpg)

**5. Drop the constants**

    def printlist(lst2, size):
        print("First element =", lst2[0])  # 1 step
        for i in range(0, size // 2):      # n/2 steps
            print(lst2[i])
        for i in range(0, 100):            # 100 steps
            print("Hi")

    # Assumes lst2 is a non-empty list and size == len(lst2).
    printlist(lst2, size)

This is O(1 + n/2 + 100), which we just call O(n).

Remember, for big O notation we're looking at what happens as n gets arbitrarily large. As n gets really big, adding 100 or dividing by 2 has a decreasingly significant effect.
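The shrinking effect of the added 100 can be seen by evaluating the step count 1 + n/2 + 100 for growing n. The sketch below assumes integer division for the n/2 term. `printlist_steps` is a hypothetical helper, not part of the slides.

```python
def printlist_steps(n):
    """Steps for the example: 1 print, then n // 2 prints, then 100 prints."""
    return 1 + n // 2 + 100


for n in (10, 1_000, 1_000_000):
    steps = printlist_steps(n)
    print(n, steps, steps / n)
```

For n = 10 the count is 106, dominated by the constant 100. For n = 1,000,000 it is 500,101, and the ratio of steps to n settles near 0.5, so the count grows in proportion to n.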

![1. O Notation (Big Oh Notation)](https://image.slidesharecdn.com/chapter09designandanalysisofalgorithms-190528091701/85/Chapter-09-design-and-analysis-of-algorithms-73-320.jpg)

**6. Drop the less significant terms**

    def printAllNumbersThenAllPairSums(lst2, size):
        # Single loop: n prints.
        for i in range(0, size):
            print(lst2[i])
        # Nested loops: n * n prints.
        for i in range(0, size):
            for j in range(0, size):
                print(lst2[i] + lst2[j])

Here our runtime is O(n + n²), which we just call O(n²).
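To see why the linear term can be dropped, the sketch below computes the total n + n² and the share of it contributed by the single loop. `numbers_then_pair_sums_steps` is a hypothetical helper that returns the step count without doing any printing.

```python
def numbers_then_pair_sums_steps(n):
    """Steps for one single loop (n) followed by one nested loop (n * n)."""
    return n + n * n


for n in (10, 100, 1000):
    total = numbers_then_pair_sums_steps(n)
    linear_share = n / total  # fraction of the work done by the single loop
    print(n, total, linear_share)
```

The single loop accounts for about 9% of the steps at n = 10 and about 0.1% at n = 1000, so the n² term alone describes the growth.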