Centralized Model Organism Database (Biocuration 2014 poster)


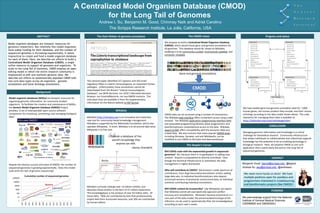

A Centralized Model Organism Database (CMOD) for the Long Tail of Genomes. Presented at Biocuration 2014 in Toronto (http://biocuration2014.events.oicr.on.ca/). See related slides at http://www.slideshare.net/andrewsu/20140116-gmod-short


Recommended

FAIR Agronomy, where are we? The KnetMiner Use Case

Marco Brandizi and Keywan Hassani-Pak, Rothamsted Research, Invited Presentation at SWAT4HCLS 2022.

FAIR data principles are a driving force in the life sciences and other scientific domains. They help researchers share their data and realize its full potential for integrating information and making novel discoveries. Knowledge graphs are an increasingly popular paradigm for modeling data according to these principles, and technologies such as graph databases are emerging as complements to approaches like linked data. All of this applies to the agronomy, farming, and food domains as well. How far has the adoption of sound data management policies advanced in these domains, and how does it compare with other life sciences? In this presentation, we will talk about our practical experience with KnetMiner, a gene and molecular biology discovery platform. KnetMiner builds and publishes knowledge graphs according to the FAIR principles, using a mix of linked data standards for the life sciences and recent graph database and API technologies. We welcome questions from the audience and discussion of similar experiences.
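The following toy sketch makes the knowledge-graph idea concrete. It assumes nothing about KnetMiner's actual stack, which the abstract describes as graph databases plus linked data standards. Facts are stored as (subject, predicate, object) triples and queried by pattern matching with wildcards, roughly what a SPARQL basic graph pattern does. All identifiers (`ex:geneA`, `ex:encodes`, `ex:participatesIn`, and so on) are hypothetical.

```python
from typing import Optional

# A triple is (subject, predicate, object); all identifiers here are hypothetical.
Triple = tuple[str, str, str]


def match(triples: list[Triple], s: Optional[str] = None,
          p: Optional[str] = None, o: Optional[str] = None) -> list[Triple]:
    """Return the triples that fit the pattern; None acts as a wildcard."""
    return [
        t for t in triples
        if (s is None or t[0] == s)
        and (p is None or t[1] == p)
        and (o is None or t[2] == o)
    ]


def genes_in_pathway(triples: list[Triple], pathway: str) -> list[str]:
    """Two-hop join: gene -ex:encodes-> protein -ex:participatesIn-> pathway."""
    proteins = {s for s, _, _ in match(triples, p="ex:participatesIn", o=pathway)}
    return sorted(s for s, _, o in match(triples, p="ex:encodes") if o in proteins)


kg: list[Triple] = [
    ("ex:geneA", "ex:encodes", "ex:proteinA"),
    ("ex:proteinA", "ex:participatesIn", "ex:pathway1"),
    ("ex:geneB", "ex:encodes", "ex:proteinB"),
    ("ex:proteinB", "ex:participatesIn", "ex:pathway1"),
    ("ex:geneC", "ex:encodes", "ex:proteinC"),
]

print(genes_in_pathway(kg, "ex:pathway1"))  # ['ex:geneA', 'ex:geneB']
```

A real system would use a graph database or an indexed RDF store rather than a linear scan. The list comprehension here only shows the matching logic.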

AgriSchemas: Sharing Agrifood data with Bioschemas

AgriSchemas short report for the ELIXIR workshop on Data and interoperability for plant sciences.

Genomic Big Data Management, Integration and Mining - Emanuel Weitschek

Thanks to Next Generation Sequencing (NGS), a technology that is lowering the cost and time of reading DNA, we face huge amounts of biomedical data. Research laboratories collect these data continuously, and worldwide consortia often organize them and release many public databases. One of the main aims of bioinformatics is to address fundamental questions in biomedical research (e.g., how cancer arises) starting from big genomic data and their analysis. In this talk I will give an overview of big genomic data management, integration, and mining.

Technology R&D Theme 2: From Descriptive to Predictive Networks

National Resource for Network Biology's TR&D Theme 2: Genomics is mapping complex data about human biology and promises major medical advances. However, the routine use of genomics data in medical research is still in its infancy, mainly because of the challenges of working with highly complex "big data". In this theme, we will use network information to help organize, analyze and integrate these data into models that can be used to make clinically relevant diagnoses and predictions about an individual.

Fabricio Silva: Cloud Computing Technologies for Genomic Big Data Analysis

Talk by Fabricio Silva at the 1st Symposium of Big Data and Public Health, 2013

Introduction to Bioinformatics.

The slides explain why bioinformatics appeared, who bioinformaticians are, what they do, and what interesting applications and challenges exist in bioinformatics.

Slides were prepared for the Bioinformatics seminar 2016, Institute of Computer Science, University of Tartu.

Wikidata and the Semantic Web of Food

A 10-minute introduction to Wikidata, the Gene Wiki project, and the Semantic Web. Presented at the IC-Foods inaugural conference at UC Davis.

Interoperable Data for KnetMiner and DFW Use Cases

Summary of the AgriSchemas work done so far, used for the ELIXIR BioHackathon 2021.

Introduction to Bioinformatics Slides

This HIBB presentation provides background information on bases, amino acids, proteins, nucleotides, and DNA. It then explains what bioinformatics is, lists some examples, and demonstrates some tools. These include tools that compare parts of human and chimpanzee genes and tools that illustrate drug-resistance analysis and HIV subtype analysis. Finally, it discusses some ethical and clinical aspects of bioinformatics.

Bioinformatics

A description of how technology has changed the face of biology, especially in the fields of genetics, proteomics, and evolution.

It includes a brief history, examples of usage, and a look into the future.

BIOINFORMATICS Applications And Challenges

This is a complete summary of an introduction to bioinformatics, its applications, and its challenges, for advanced biology students.

Bioinformatics: What, Why and Where?

Introducing Bioinformatics

Bioinformatics in the Big Data Era

How to get into Bioinformatics?

How to learn and practice Bioinformatics?

Bioinformatics Careers and Salaries Worldwide

Applications of Bioinformatics

Take-Home Messages

Bioinformatics principles and applications

South African National Bioinformatics Institute at the University of the Western Cape

Computational Biology and Bioinformatics

Computational biology and bioinformatics is a rapidly developing multidisciplinary field. Genomics and proteomics technologies have made the systematic acquisition of data possible, and this has created a tremendous gap between the available data and their biological interpretation.

Career opportunities in the field of Bioinformatics

Career prospects in the advancing field of bioinformatics.

Data sharing - Data management - The SysMO-SEEK Story

Professor Carole Goble, University of Manchester, talks at the RIN "Research data: policies & behaviour" event as part of a series on Research Information in Transition.

Globus Genomics: Democratizing NGS Analysis

We describe Globus Genomics, a service developed and operated by the Computation Institute at the University of Chicago.

2016 bmdid-mappings

Bio2RDF is an open-source project that offers a large, connected knowledge graph of Life Science Linked Data. Each dataset is expressed using its own vocabulary. This hinders integration, search, querying, and browsing across similar or identical types of data. As source data grow and change, maintaining mappings manually has proven untenable. The aim of this work is to develop a (semi)automated procedure to generate high-quality mappings between Bio2RDF and SIO using BioPortal ontologies. Our preliminary results demonstrate that the approach is promising: it can find new mappings by computing a transitive closure over ontology mappings. Further development of the methodology, coupled with improvements to the ontology, will offer a better-integrated view of Life Science Linked Data.
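The following small sketch illustrates the transitive-closure step mentioned in the abstract. It is not the Bio2RDF/SIO pipeline itself. It assumes that each mapping is an undirected equivalence between two term identifiers and groups terms into connected components, which gives the transitive closure of that relation. It then reports the source-to-target pairs that the closure implies but that were not asserted directly. The prefixes and identifiers (`bio2rdf:ex_Gene`, `bioportal:ex_gene`, `sio:ex_gene`, etc.) are hypothetical placeholders.

```python
from collections import defaultdict


def transitive_mappings(pairs: list[tuple[str, str]]) -> list[set[str]]:
    """Group terms linked by mapping pairs (assumed symmetric) into
    equivalence sets, i.e. the transitive closure of the mapping relation."""
    graph: dict[str, set[str]] = defaultdict(set)
    for a, b in pairs:
        graph[a].add(b)
        graph[b].add(a)

    seen: set[str] = set()
    groups: list[set[str]] = []
    for start in graph:
        if start in seen:
            continue
        # Depth-first traversal collects every term reachable from `start`.
        stack = [start]
        component: set[str] = set()
        while stack:
            term = stack.pop()
            if term in component:
                continue
            component.add(term)
            stack.extend(graph[term] - component)
        seen |= component
        groups.append(component)
    return groups


def new_mappings(pairs: list[tuple[str, str]], source_prefix: str,
                 target_prefix: str) -> set[tuple[str, str]]:
    """Return (source, target) pairs implied by the closure but not asserted directly."""
    direct = {frozenset(p) for p in pairs}
    inferred: set[tuple[str, str]] = set()
    for group in transitive_mappings(pairs):
        for s in group:
            if not s.startswith(source_prefix):
                continue
            for t in group:
                if t.startswith(target_prefix) and frozenset((s, t)) not in direct:
                    inferred.add((s, t))
    return inferred


pairs = [
    ("bio2rdf:ex_Gene", "bioportal:ex_gene"),  # hypothetical Bio2RDF -> BioPortal mapping
    ("bioportal:ex_gene", "sio:ex_gene"),      # hypothetical BioPortal -> SIO mapping
    ("bio2rdf:ex_Protein", "sio:ex_protein"),  # already mapped directly
]
print(new_mappings(pairs, "bio2rdf:", "sio:"))  # {('bio2rdf:ex_Gene', 'sio:ex_gene')}
```

The key assumption here is that every mapping is a symmetric equivalence. If the input mixes in looser relations (broader, narrower, related), the closure can chain them into incorrect equivalences, so inferred pairs would still need review before use.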

Web services for sharing germplasm data sets, at FAO in Rome (2006)

Sharing germplasm datasets with web services. Food and Agriculture Organization of the United Nations (FAO), 20 February 2006.

Gene Wiki and Mark2Cure update for BD2K

An introduction to the Gene Wiki project with an emphasis on the use of the new Wikidata project. It also describes Mark2Cure, a citizen science initiative focused on biomedical text mining.

Web-based servers and software for genome analysis

Biological data have many characteristics that make the management of biological information a particularly challenging problem. Here we focus mainly on these characteristics of biological information and on the multidisciplinary field called bioinformatics. Bioinformatics now has graduate degree programs at several universities.

Similar to Centralized Model Organism Database (Biocuration 2014 poster)

20 years of evolution in data production in health and life sciences

I share feedback from my 20 years as the scientific head of a genomics core facility and as a facilities manager. I go through the evolution of the high-throughput sequencing field and discuss data storage and sharing.

Celsi®, CELL SIGNALING

Celsi® is a computer-assisted platform for simulating Signal Transduction (ST) pathways in living cells. In these pathways, an extracellular stimulus triggers a cascade of protein reactions that carries the signal to the interior of the cell, causing gene expression and producing a cellular response.

Celsi®, a virtual simulation software for cell signaling pathways

CELSI® (CELl SIgnaling) is a computer-assisted platform for simulating Signal Transduction (ST) pathways that occur in living cells after an extracellular stimulus triggers a cascade of protein reactions. The cascade carries the signal to the interior of the cell, causing gene expression and producing a cellular response.

Big Data, The Community and The Commons (May 12, 2014)

These are the slides from a keynote I gave at The Cancer Genome Atlas (TCGA) Third Annual Scientific Symposium on May 12, 2014.

A consistent and efficient graphical User Interface Design and Querying Organ...

We propose a software layer called GUEDOS-DB on top of an Object-Relational Database Management System (ORDMS). In this work we apply it to molecular biology, more precisely to complete organelle genomes. We aim to give biologists unified access to information spread across heterogeneous genome databanks. The first goal of this paper is to provide a visual schema graph, illustrated through a number of examples. The human-computer interaction technique we adopted for visual design and querying makes it much easier for biologists to formulate database queries than a linear textual query representation does.

SFSCON23 - Michele Finelli - Management of large genomic data with free software

South Tyrol Free Software Conference

The suite of free software tools created within the OpenCB (Open Computational Biology, https://github.com/opencb) initiative makes it possible to manage large genomic databases efficiently.

These tools are not widely used, because the complexity of the software stack makes for quite a steep learning curve. However, they may be very cost-effective for hospitals, research institutions, and similar organizations.

The objective of the talk is to show the potential of the OpenCB suite, provide the information needed to start using it, and present the advantages for end users. BioDec is currently deploying a large OpenCGA installation for the Genetic Unit of one of the main Italian hospitals, where bioinformaticians will manage and analyze hundreds of terabytes of data.

Software Pipelines: The Good, The Bad and The Ugly

Presentation at the "Whole genome sequencing for clinical microbiology: Translation into routine applications" Symposium, Basel, Switzerland, 2 Sep 2017

Big Data and AI in Fighting Against COVID-19

Website: https://learn.xnextcon.com/event/eventdetails/W20070810

As the COVID-19 pandemic sweeps the globe, big data and AI have emerged as crucial tools for everything from diagnosis and epidemiology to therapeutic and vaccine development.

In this talk, we collect and review how big data is being used to fight back against COVID-19. We also take a deep dive into two use cases: 1) using NLP and BERT to answer scientific questions, and 2) COVID-19 data lakes from Databricks, Google, and Amazon.

Agenda:

Introduction

Supercomputers for Scientific Research

Covid-19 Tracking and Prediction

Covid-19 Research and Diagnosis

Use Case 1 NLP and BERT to answer scientific questions

Use Case 2 Covid-19 Data Lake and Platform

Life Technologies' Journey to the Cloud (ENT208) | AWS re:Invent 2013

Life Technologies initially planned to build out its own data center infrastructure. A cost analysis then revealed that using Amazon Web Services would save the company $325,000 in hardware alone for a single new initiative, so it decided to use AWS instead. Within 6 months of adopting AWS, Life Technologies launched its Digital Hub platform in production, which now underpins Life Technologies' entire instrumentation product suite. This immediately began to decrease its time-to-market and enhance its customers' user experience. In this session, we provide an overview of our path to the AWS cloud, with particular focus on the evaluation criteria used to make a cloud vendor decision. We also discuss the lessons learned since going into production.

What is Biological Computing And How It Will Change Our World

When you look at the origin of the word computer—“one who calculates”—you learn electronics aren’t necessarily a required component even though most of us would imagine the modern-day desktop or laptop when we hear the term.

2011Field talk at iEVOBIO 2011

A keynote talk at the iEVOBIO 2011 meeting (http://ievobio.org/). It was a great meeting.

MMTF-Spark: Interactive, Scalable, and Reproducible Datamining of 3D Macromo...

Presented at the NIH/NCI Informatics Technology for Cancer Research (ITCR) 2018 meeting (https://itcr.cancer.gov/).

Advances in Structural Bioinformatics are driven by the fast growth in experimental 3D structures and integration with even larger sets of sequence and protein function data. At the same time, the field of Data Science has created new technologies for re-engineering legacy software pipelines to make them scalable, easy to use, reproducible, reusable, and sharable.

Here, we describe the MMTF-Spark/PySpark [1] project that combines three key components to create such an infrastructure: 1. Interactive Jupyter notebooks to run ad hoc analyses, data mining, machine learning, and visualization of 3D structure and sequence datasets, 2. A scalable compute infrastructure to run these analyses interactively across large datasets, e.g., the entire PDB, using previously developed efficient data representations [2, 3] and the Apache Spark framework for distributed parallel computing, 3. A library of methods for data mining and analysis of 3D structure and sequence data, capitalizing on the rich data analytics, visualization, and machine/deep learning tools available in the Python ecosystem.

Scientists face a number of complex and time-consuming barriers when applying structural bioinformatics analysis. These include complex software setups, non-interoperable data formats and software applications, and a lack of documentation, simple examples, and tutorials. Given the large datasets, biologists routinely apply computational tools and automation pipelines in their research. However, there is a long tail of ad hoc, one-off questions that biologists ask that cannot be answered using available web resources or workflow systems focused on common tasks. In this project, we provide a self-contained programming environment that caters to scientists with varying computational skills and needs. These range from biologists with basic programming skills, to structural and computational biologists who want to share their work, to data scientists who seek access to bioinformatics datasets to benchmark new machine learning methods. A key advantage of this environment is interactivity, which enables iterative exploration. By combining documentation, datasets, analysis code, results, and interactive visualizations in Jupyter notebooks, the steps of an interactive session can be captured, reproduced, and shared.

Acknowledgements

This project was supported by the NCI of the NIH under award number U01 CA198942.

References

1. https://github.com/sbl-sdsc/mmtf-spark, https://github.com/sbl-sdsc/mmtf-pyspark

2. Bradley AR, et al. (2017) MMTF - an efficient file format for the transmission, visualization, and analysis of macromolecular structures. PLOS Computational Biology 13(6): e1005575.

3. Valasatava Y, et al. (2017) Towards an efficient compression of 3D coordinates of macromolecular structures. PLOS ONE 12(3): e0174846.

Semantics for Bioinformatics: What, Why and How of Search, Integration and An...

Amit Sheth's Keynote at Semantic Web Technologies for Science and Engineering Workshop (held in conjunction with ISWC2003), Sanibel Island, FL, October 20, 2003.

More from Andrew Su

Wikidata as a FAIR knowledge graph for the life sciences

Talk given at the Workshop on Advanced Knowledge Technologies for Science in a FAIR World (AKTS)

https://www.isi.edu/ikcap/akts/akts2019/

The Gene Wiki: Using Wikipedia and Wikidata to organize biomedical knowledge

Presented via video at Wikimedia Research Showcase August 23, 2017. Video available at https://www.youtube.com/watch?v=Fa0Ztv2iF4w&t=33m

BOSC2017: Using Wikidata as an open, community-maintained database of biomedi...

Talk given at BOSC 2017 in Prague, July 22, 2017

Citizen Science and Rare Disease Research

Talk given at "Personalized Health in the Digital Age" September 22, 2016 at Campus Biotech in Geneva, Switzerland https://www.personalizedhealth2016.ch/

Open biomedical knowledge using crowdsourcing and citizen science

Talk given at UCSD's Genetics & Genomics / Bioinformatics & Systems Biology joint seminar series on November 5, 2015.

Heart BD2K, Biocuration, and Citizen Science

Talk given at Big Data in Biomedicine Conference May 21, 2015.

Panel on Citizen Science and Crowdsourcing Games - March 27, 2015

Federal Community of Practice for Crowdsourcing and Citizen Science meeting on Games

Using Citizen Science to organize biomedical knowledge

Talk on "Using Citizen Science to organize biomedical knowledge" given March 5, 2015 at the Future of Genomic Medicine conference in La Jolla, CA

UCSD / DBMI seminar 2015-02-6

Presentation given at UCSD Division of Biomedical Informatics on Feb 6, 2015.

Crowdsourcing and Learning from Crowd Data (Tutorial @ PSB2015)

Tutorial for the PSB2015 session on "Crowdsourcing and learning from Crowd Data"

Slides created by Robert Leaman, Benjamin Good, and Andrew Su.

Microtask crowdsourcing for annotating diseases in PubMed abstracts (ASHG 2014)

Presentation on "Microtask crowdsourcing for annotating diseases in PubMed abstracts" at ASHG14 session on "Cloudy with a chance of big data".

Crowdsourcing Biology: The Gene Wiki, BioGPS, and Citizen Science

Screencast video now at: https://www.youtube.com/watch?v=oe7pjHJU-z4

Talk info at http://1.usa.gov/1kPcRxC

A Centralized Model Organism Database (CMOD) for the Long Tail of Sequenced G...

Keynote talk given at GMOD 2014

Video of talk at: https://www.youtube.com/watch?v=RVijs5ry05E

Video of QA at: https://www.youtube.com/watch?v=dGHXo-iNsyU

Blog post: http://sulab.org/2013/06/creating-a-centralized-model-organism-database-cmod/

Crowdsourcing Biology: The Gene Wiki, BioGPS and GeneGames.org

Given at DBMI seminar series at UCSD. http://dbmi.ucsd.edu/display/DBMI/Seminars

NCBO Webinar: Translating unstructured, crowdsourced content into structured ...

The use of crowdsourcing in biology is gaining popularity as a mechanism for tackling challenges of massive scale. However, to maximize participation and lower the barriers to entry, contributions to crowdsourcing efforts are typically not well structured, which makes computing on these data difficult. The presentation will discuss strategies for translating this unstructured content into structured data. Three vignettes (in varying degrees of completion) will be described, one each from our Gene Wiki [1], BioGPS [2], and serious gaming [3] initiatives.

[1]: http://en.wikipedia.org/wiki/Portal:Gene_Wiki

[2]: http://biogps.org

[3]: http://genegames.org

Building and mining a heterogeneous biomedical knowledge graph

Open data, compound repurposing, and rare diseases (ISCB)

Open data, compound repurposing, and rare diseases -- Point Loma Nazarene Uni...

Centralized Model Organism Database (Biocuration 2014 poster)

A Centralized Model Organism Database (CMOD) for the Long Tail of Genomes

Andrew I. Su, Benjamin M. Good, Chinmay Naik and Adriel Carolino
The Scripps Research Institute, La Jolla, California, USA

ABSTRACT

Model organism databases are fantastic resources for genomics researchers. However, relatively few model organisms have stable funding for their database, and the number of sequenced genomes is increasing exponentially. It seems impractical to create and fund a model organism database for each of them. Here, we describe our efforts to build a Centralized Model Organism Database (CMOD), a single online resource that supports all genomes and organisms. To scale to the Long Tail of Genomes, CMOD uses an open editing model in which the entire research community can edit and maintain genomic data. We describe our efforts to systematically populate CMOD with two core data types across all organisms: genome annotations and Gene Ontology annotations.

Background

Model organism databases (MODs) are fantastic resources for organizing genomic information about commonly studied organisms. To facilitate the creation and maintenance of MODs, the Generic Model Organism Database (GMOD) Project provides “a set of interoperable open-source software components for visualizing, annotating, and managing biological data.”

Despite the obvious success and value of GMOD, the number of sequenced genomes is growing exponentially. Does this model scale with the rate of genome sequencing?

[Figure: Cumulative number of sequenced genomes for Bacteria, Eukaryotes and Archaea, log scale from 1 to 1,000,000, years 1997 to 2025. Figure courtesy Scott Cain.]

Wikidata

“Provide a database of the world’s knowledge that anyone can edit.” (Denny Vrandečić)

Wikidata (http://wikidata.org) is an innovative and important new tool for community-based knowledge management. It is supported by the Wikimedia Foundation, which also operates Wikipedia. In short, Wikidata is to structured data what Wikipedia is to free text.

As of 2014, Wikidata catalogs over 14 million entities and describes them in 27 million statements. This knowledgebase is the product of over 50 million edits. About 90% of those edits are contributed by bots that predominantly import data from structured resources, and about 10% are contributed by human editors.

The CMOD vision

We propose to build a Centralized Model Organism Database (CMOD) that would house gene and genome annotations for all genomes. This database would be based on Wikidata. That foundation would make it community-curated, continuously updated, and computer-readable.

[Figure: CMOD gene and genome annotations data model]

CMOD data can be accessed through several mechanisms. The Wikidata web interface offers convenient access from a web browser. The Wikidata application programming interface (API) and associated programming libraries give programmers and bioinformaticians computational access to the data. Wikidata's export to RDF offers compatibility with the Semantic Web and Linked Data. We also envision that many popular GMOD tools, including GBrowse, JBrowse, and WebApollo, could be modified to use CMOD as their back-end data warehouse.

The Dark Matter of genome annotation

A seminal paper identified 517 operons and 103 small regulatory RNAs in Listeria monocytogenes, an important human pathogen. Unfortunately, none of the following resources offers these annotations for download: the Broad's “Listeria monocytogenes Database”, NCBI Genome, UCSC's Microbial Genome Browser, EnsemblBacteria, or any GMOD instance. The annotations are available only as PDF Supplementary Information on the Nature website.

Progress and status

We have loaded gene and genome annotation data into Wikidata following the data model shown above. These data cover ~1000 human genes, the human proteins they encode, and their mouse orthologs. The code repository for managing these data is available at https://bitbucket.org/sulab/wikidatagenebot.

The Skeptic's Corner

Will CMOD scale with the exponential growth in sequenced genomes? Yes, because there is no gatekeeper to adding new content. Anyone can contribute directly. Even though the technical infrastructure is centralized, data management is highly distributed.

Who will contribute to CMOD? We envision a wide spectrum of contributors. Large biocuration and annotation centers would add large data sets. Individual bioinformaticians would deposit structured versions of previously unstructured data. Individual scientists would contribute individual annotations.

Will CMOD content be trustworthy? As with Wikipedia, we expect that Wikidata overall will asymptotically approach perfect accuracy and completeness. Moreover, provenance is a core part of the data model. Users can therefore filter the knowledgebase systematically, according to their own needs, based on whether a reference is present and what type it is.

CONCLUSION

Managing genomic information and knowledge is a critical challenge for biomedical research. Community infrastructure that lets individuals collaboratively and collectively organize knowledge has the potential to be an enabling technology in biological research. Here, we propose CMOD as one such application, focused in particular on the Long Tail of sequenced genomes.

FUNDING

We acknowledge support from the National Institute of General Medical Sciences (GM089820 and GM083924).

CONTACT

Benjamin Good: bgood@scripps.edu, @bgood
Andrew Su: asu@scripps.edu, @andrewsu

We need more hands on deck! We have multiple positions open for postdocs and programmers interested in crowdsourcing and bioinformatics projects like CMOD.