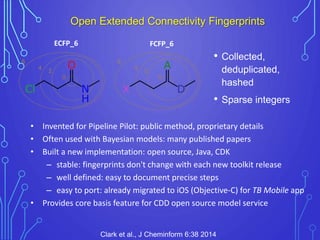

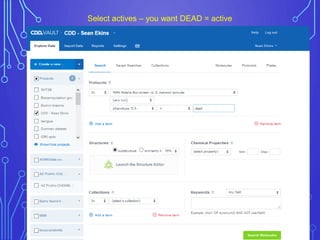

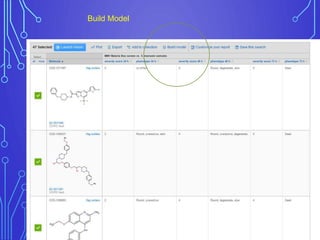

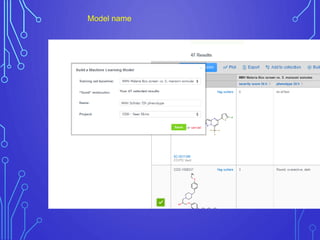

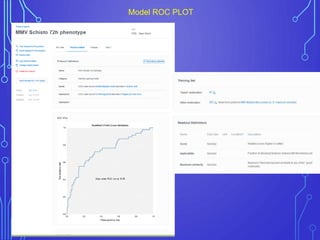

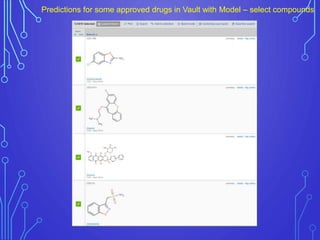

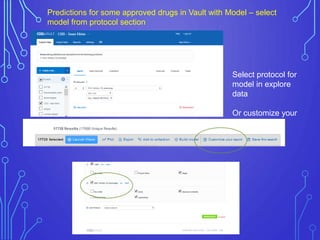

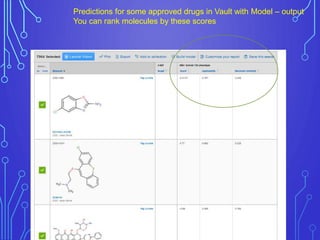

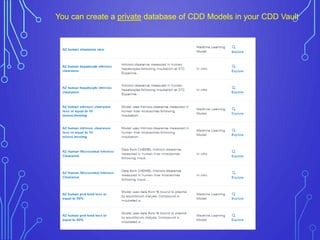

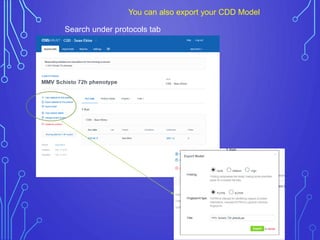

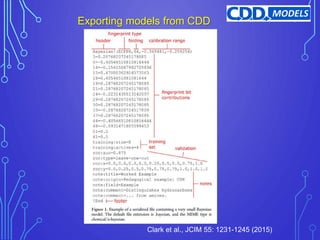

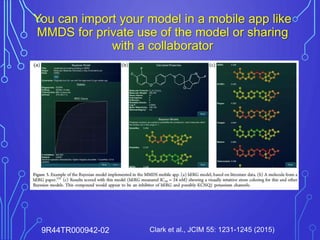

The case study outlines methods for building machine learning models with the Collaborative Drug Discovery (CDD) Vault and public datasets. It covers how to export models for internal use or for sharing, and gives examples of using specific datasets, such as MMV, to make predictions for drug compounds. It emphasizes the benefits of open-source implementations and collaboration in drug discovery research.