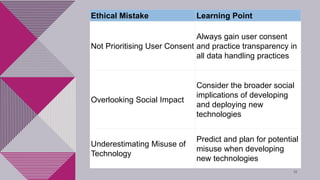

This document discusses ethical issues in computer science through several examples. It describes how Cambridge Analytica collected Facebook user data without users' consent, raising concerns about privacy and consent. It also discusses Google's work on a Pentagon AI project, which led some employees to resign over concerns about the use of AI in military applications. The document then covers deepfake technology, noting that AI can be used to manipulate videos and, as a result, to spread misinformation. Finally, it summarizes lessons from these cases, such as prioritizing user consent, considering social impact, and planning for potential misuse of new technologies.