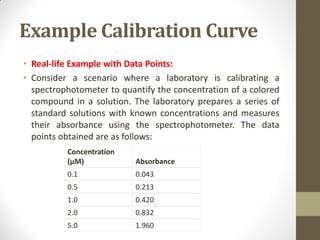

Calibration is a systematic process for ensuring the accuracy and reliability of measurement instruments in industries such as manufacturing, healthcare, and environmental monitoring. A calibration curve relates known concentrations of a standard to the instrument's response; it lets an analyst convert a measured response into a concentration and check that the instrument is performing as expected. Sound calibration practice supports quality assurance and regulatory compliance, and helps trace measurement-accuracy problems to their source.
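To make the idea of a calibration curve concrete, here is a minimal sketch in Python. It assumes the instrument responds linearly over the range of the standards, which is not guaranteed for every instrument, and fits a straight line by ordinary least squares. The function names and the standard values are hypothetical, chosen only for illustration.

```python
from statistics import mean


def fit_line(concentrations, responses):
    """Ordinary least-squares fit of: response = slope * concentration + intercept."""
    x_bar = mean(concentrations)
    y_bar = mean(responses)
    sxx = sum((x - x_bar) ** 2 for x in concentrations)
    sxy = sum((x - x_bar) * (y - y_bar)
              for x, y in zip(concentrations, responses))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept


def concentration_from_response(response, slope, intercept):
    """Invert the fitted line to estimate the concentration of an unknown sample."""
    return (response - intercept) / slope


# Hypothetical standards: known concentrations and the readings they produced.
standards_conc = [0.0, 1.0, 2.0, 4.0, 8.0]
standards_resp = [0.02, 0.21, 0.40, 0.79, 1.61]

slope, intercept = fit_line(standards_conc, standards_resp)

# Estimate the concentration of an unknown sample from its measured response.
unknown = concentration_from_response(0.55, slope, intercept)
print(f"slope={slope:.4f}, intercept={intercept:.4f}, unknown={unknown:.2f}")
```

The fitted line should only be used to quantify samples whose response falls within the range spanned by the standards; outside that range the linearity assumption is untested.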