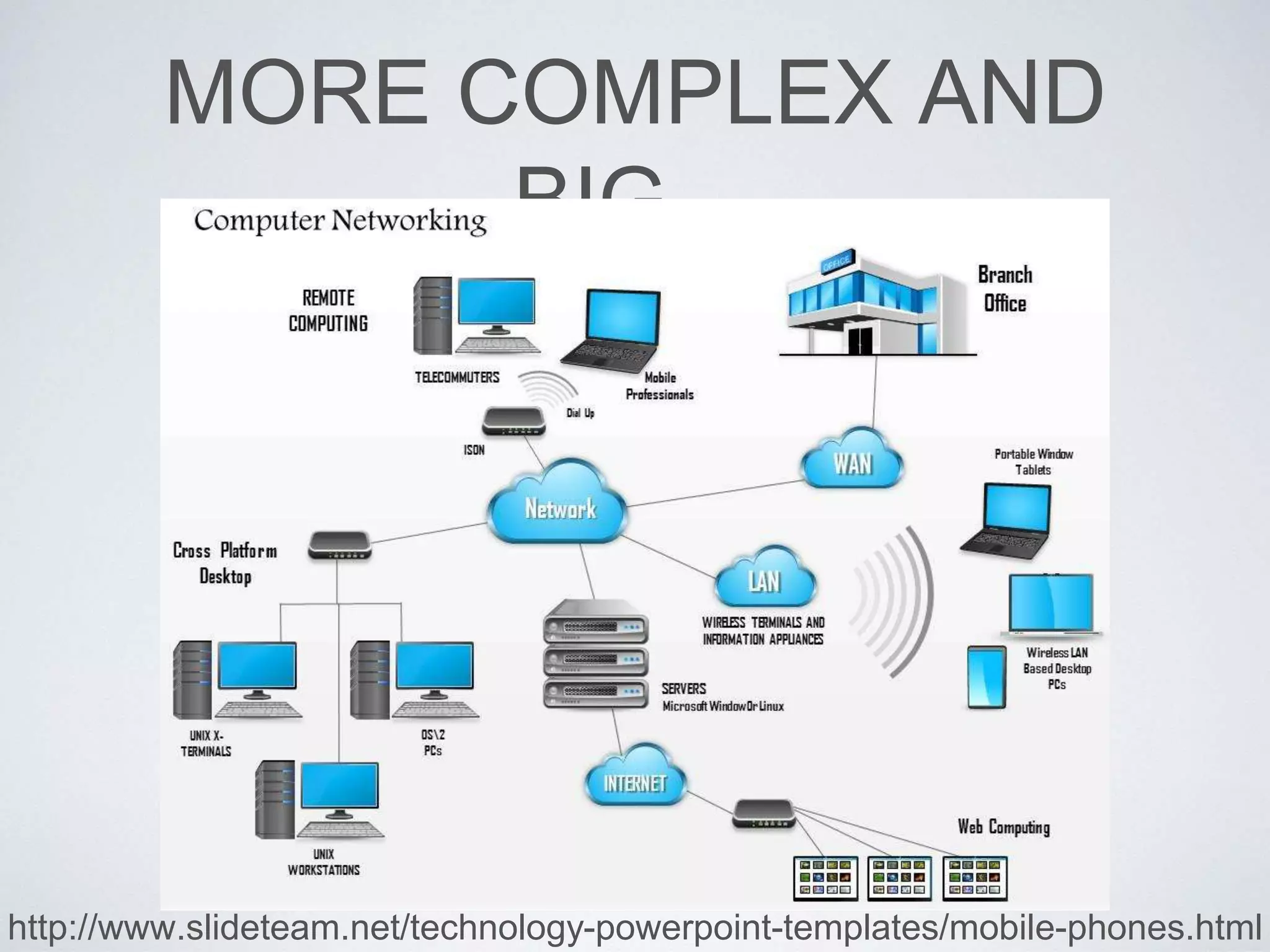

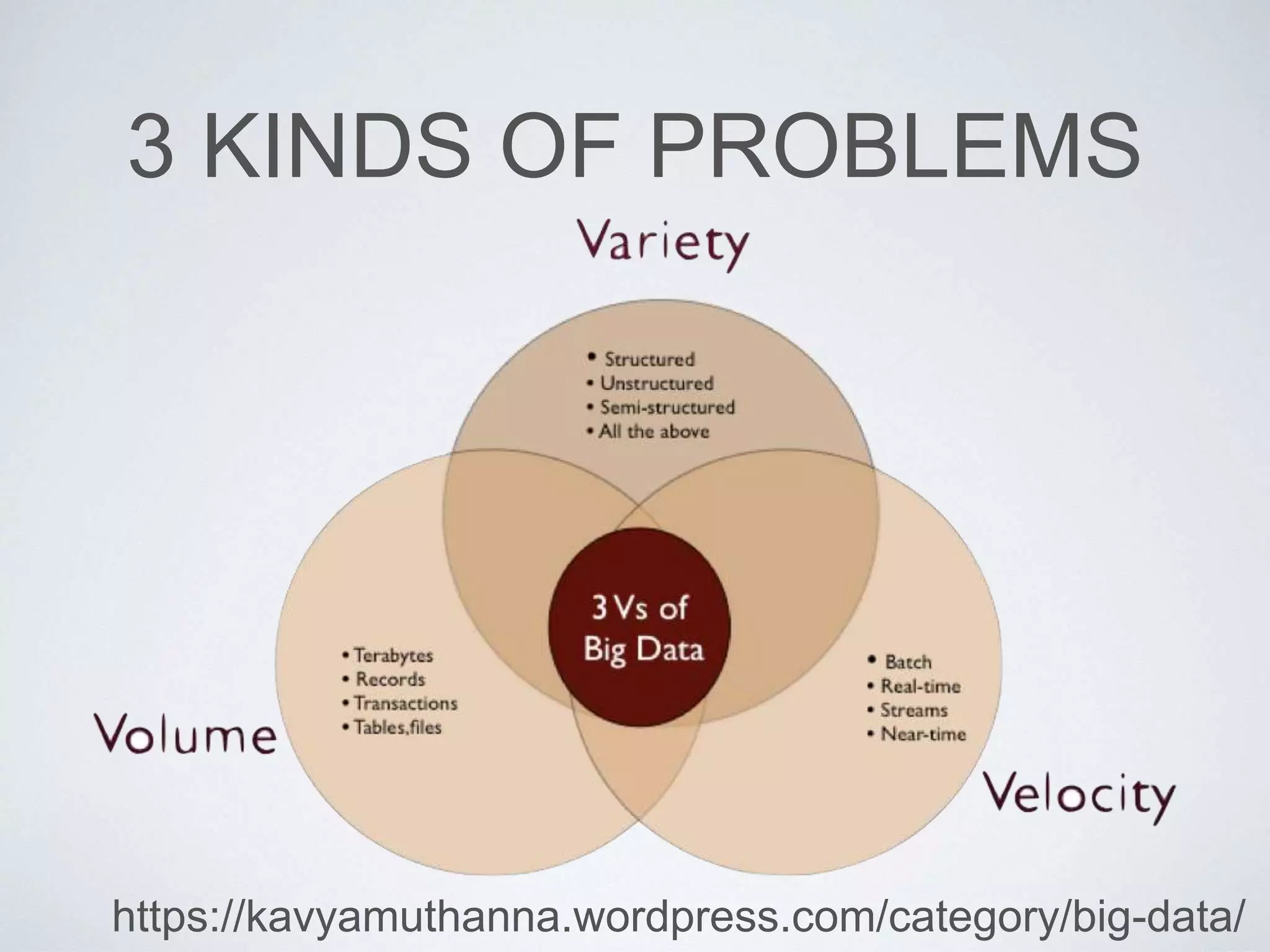

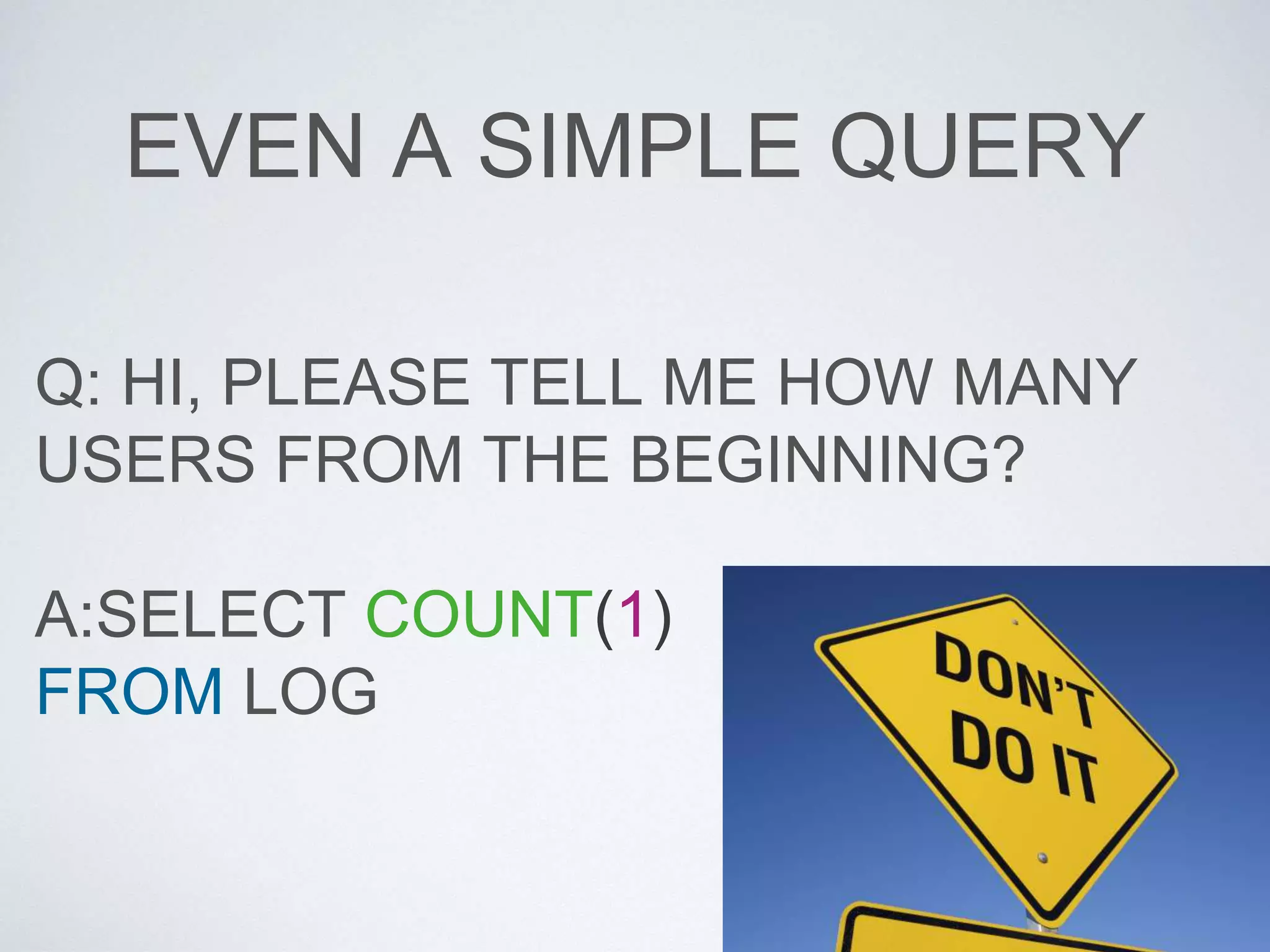

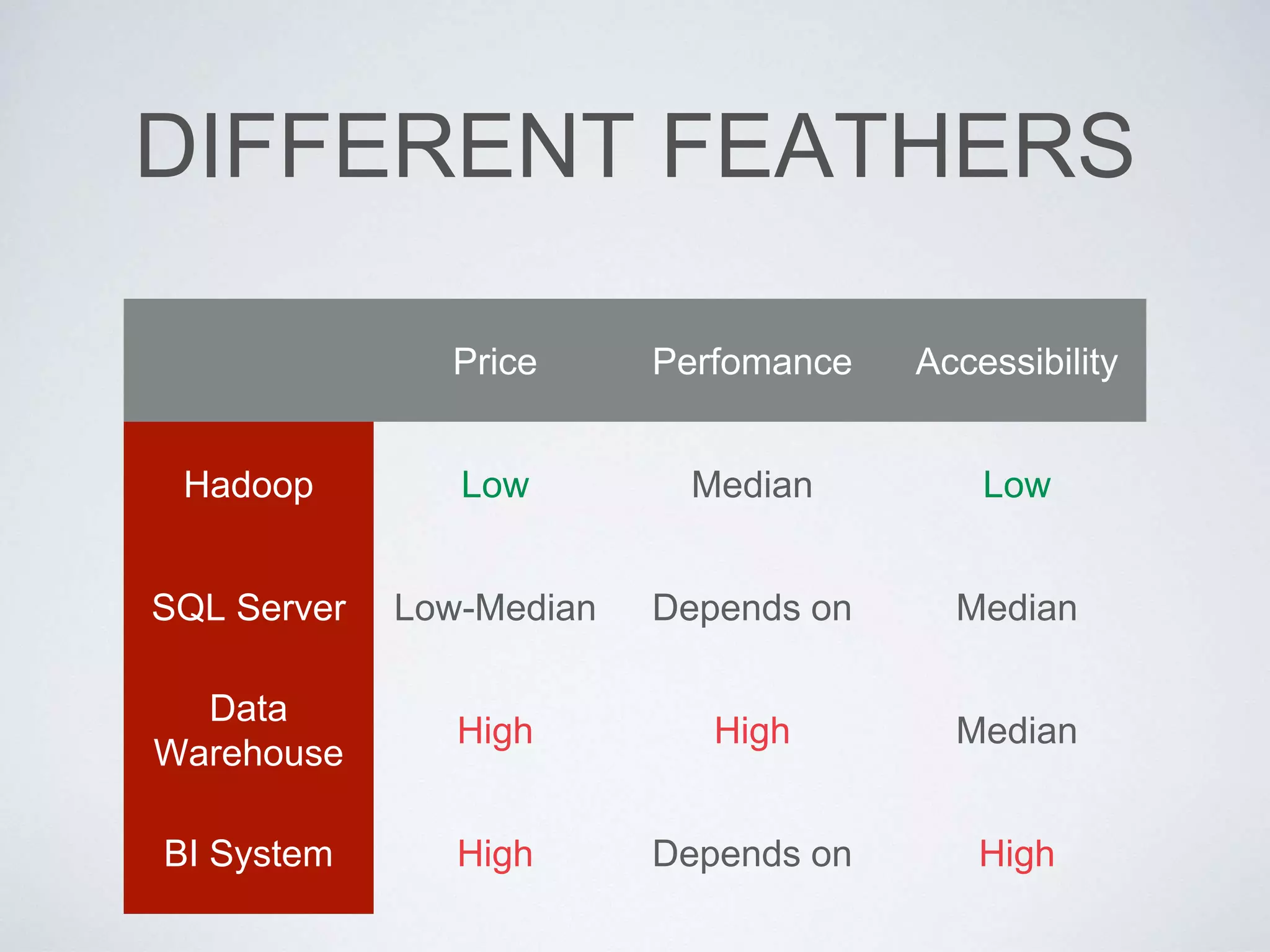

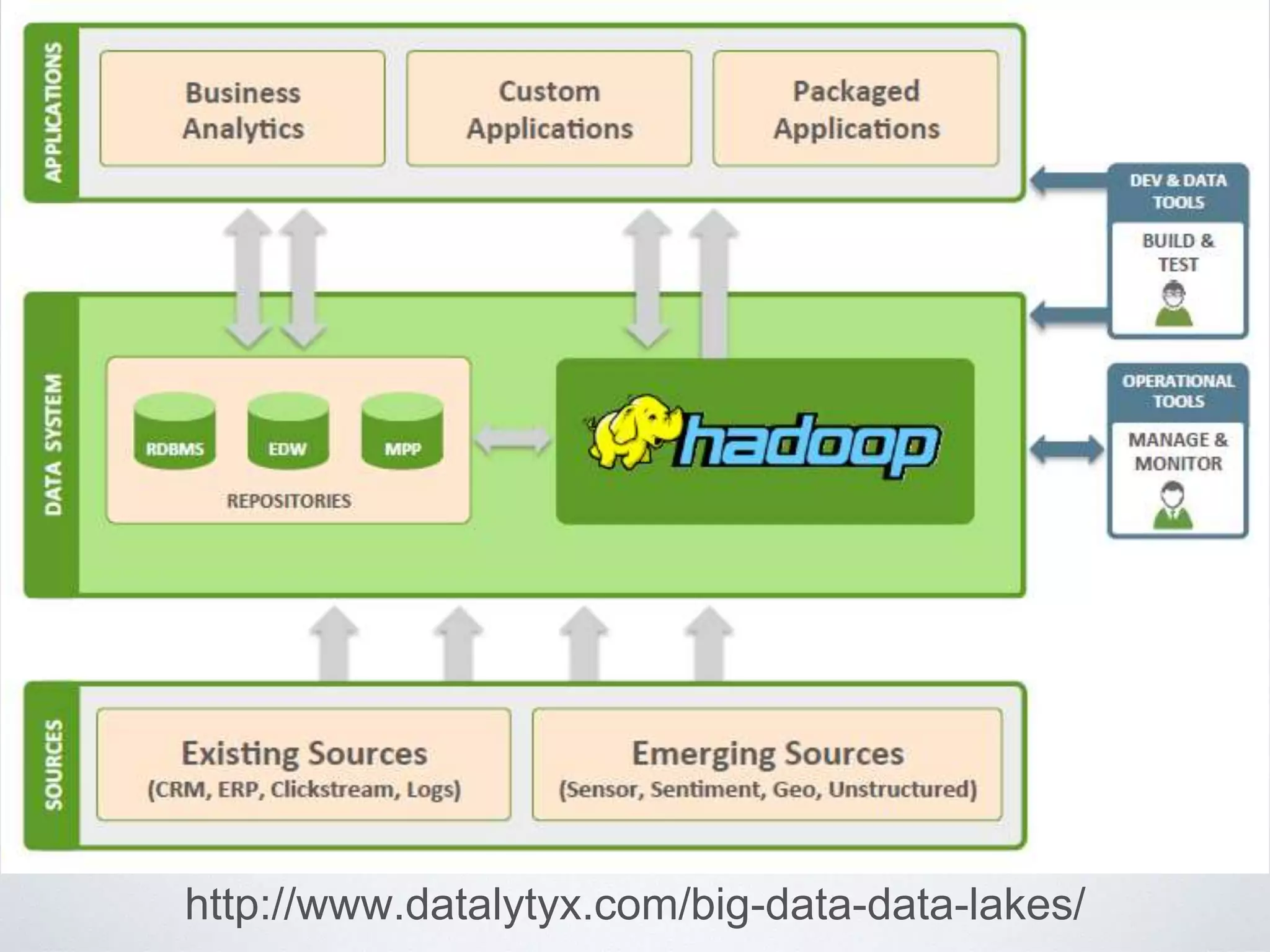

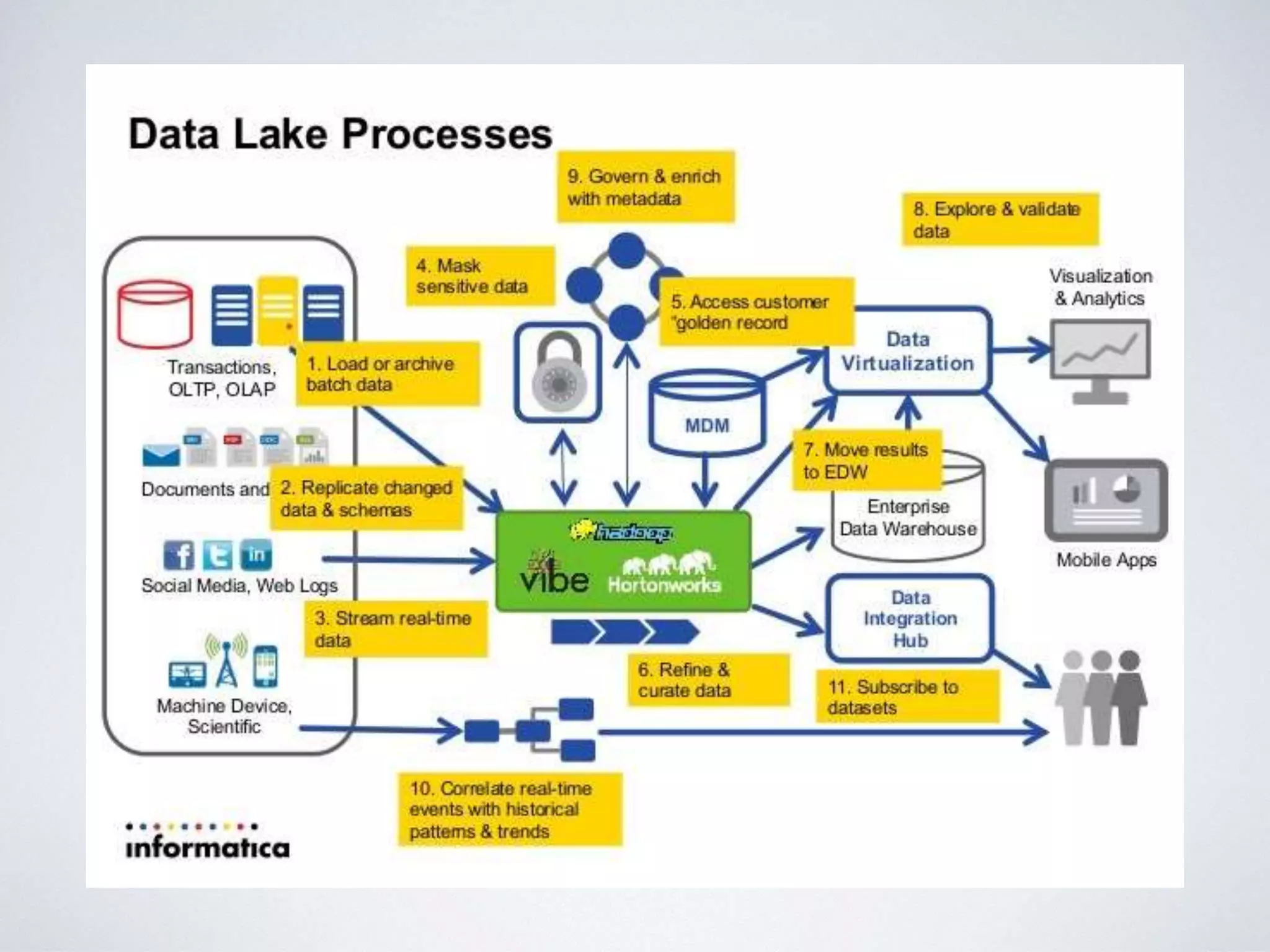

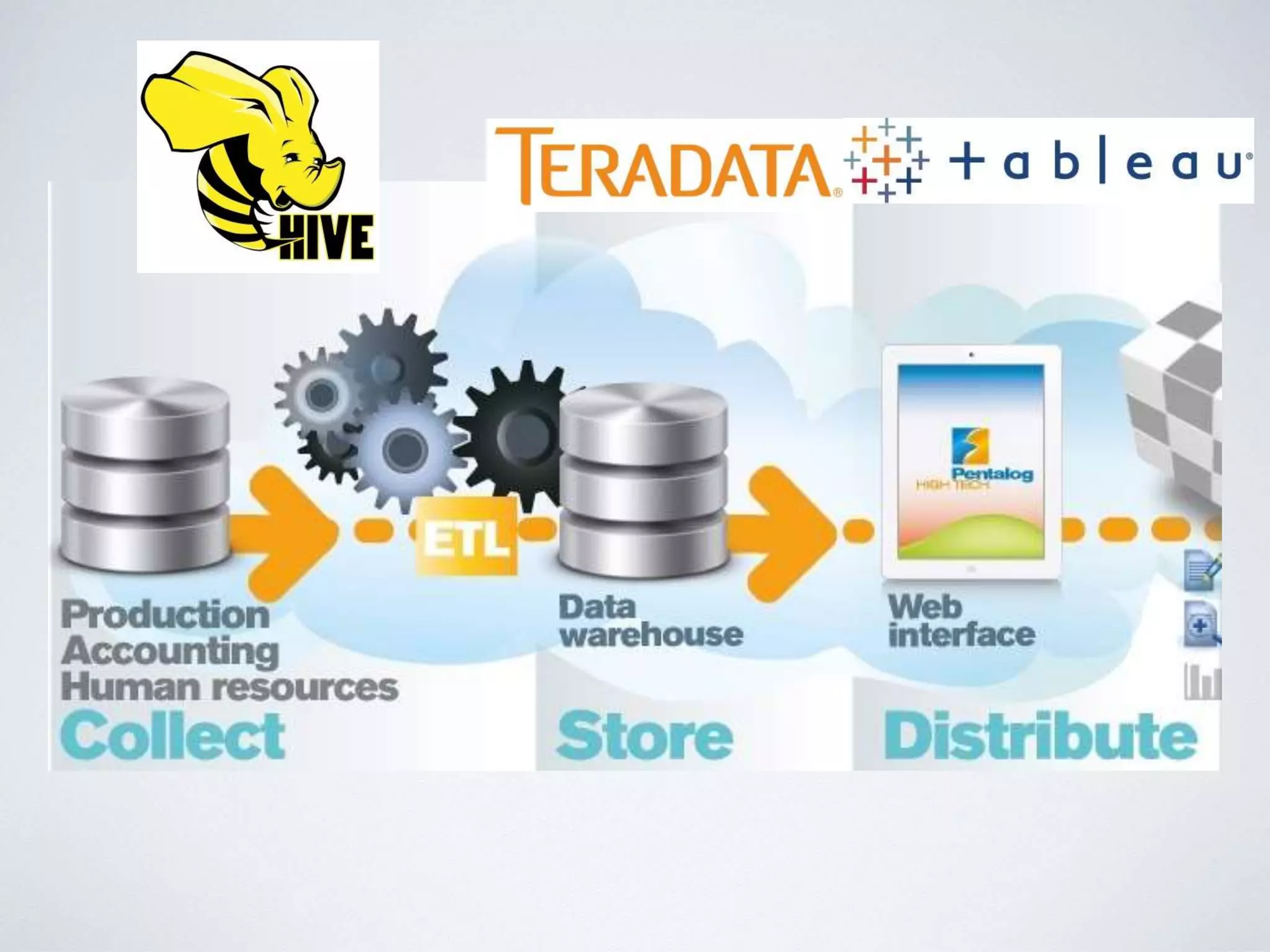

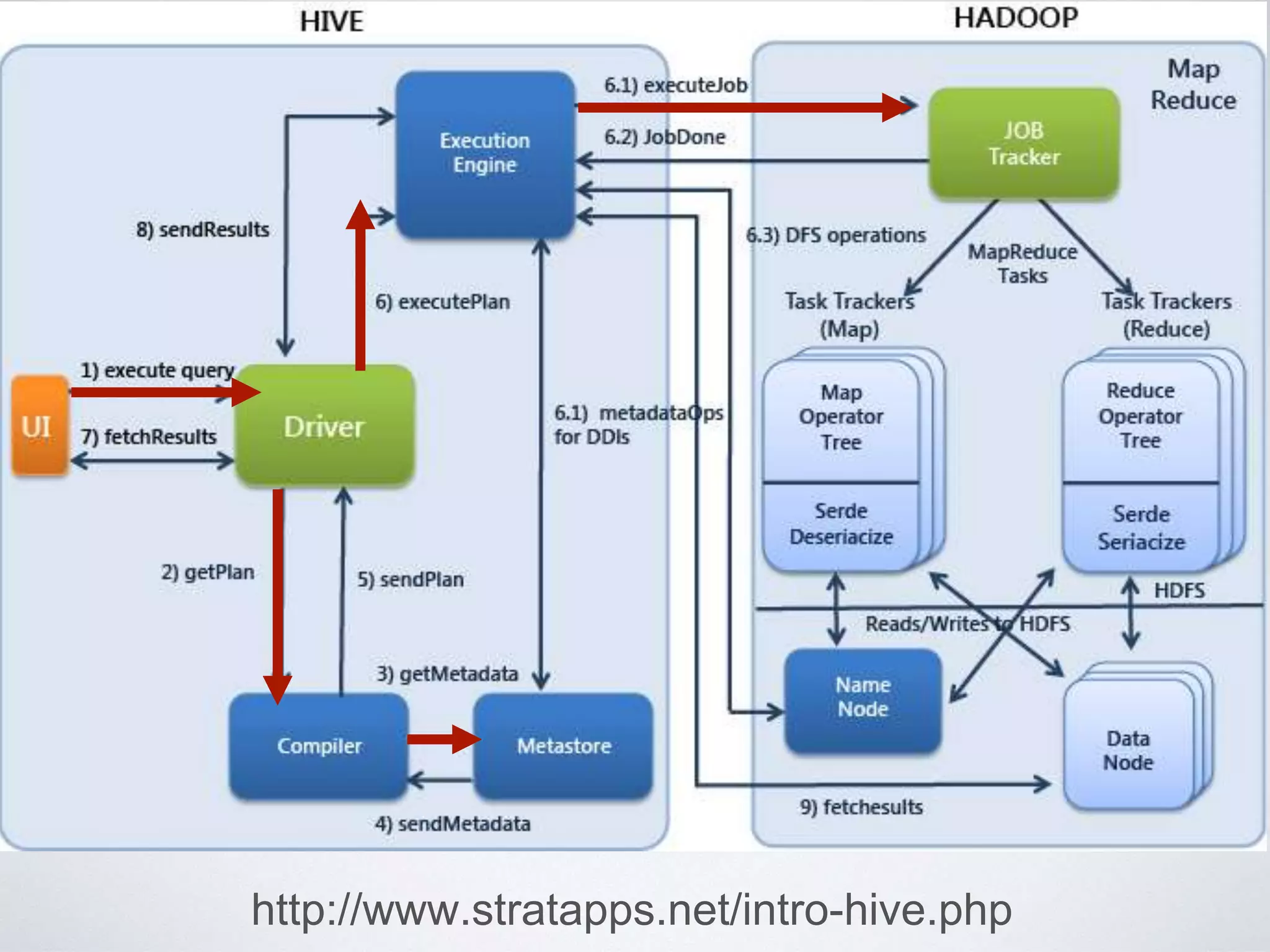

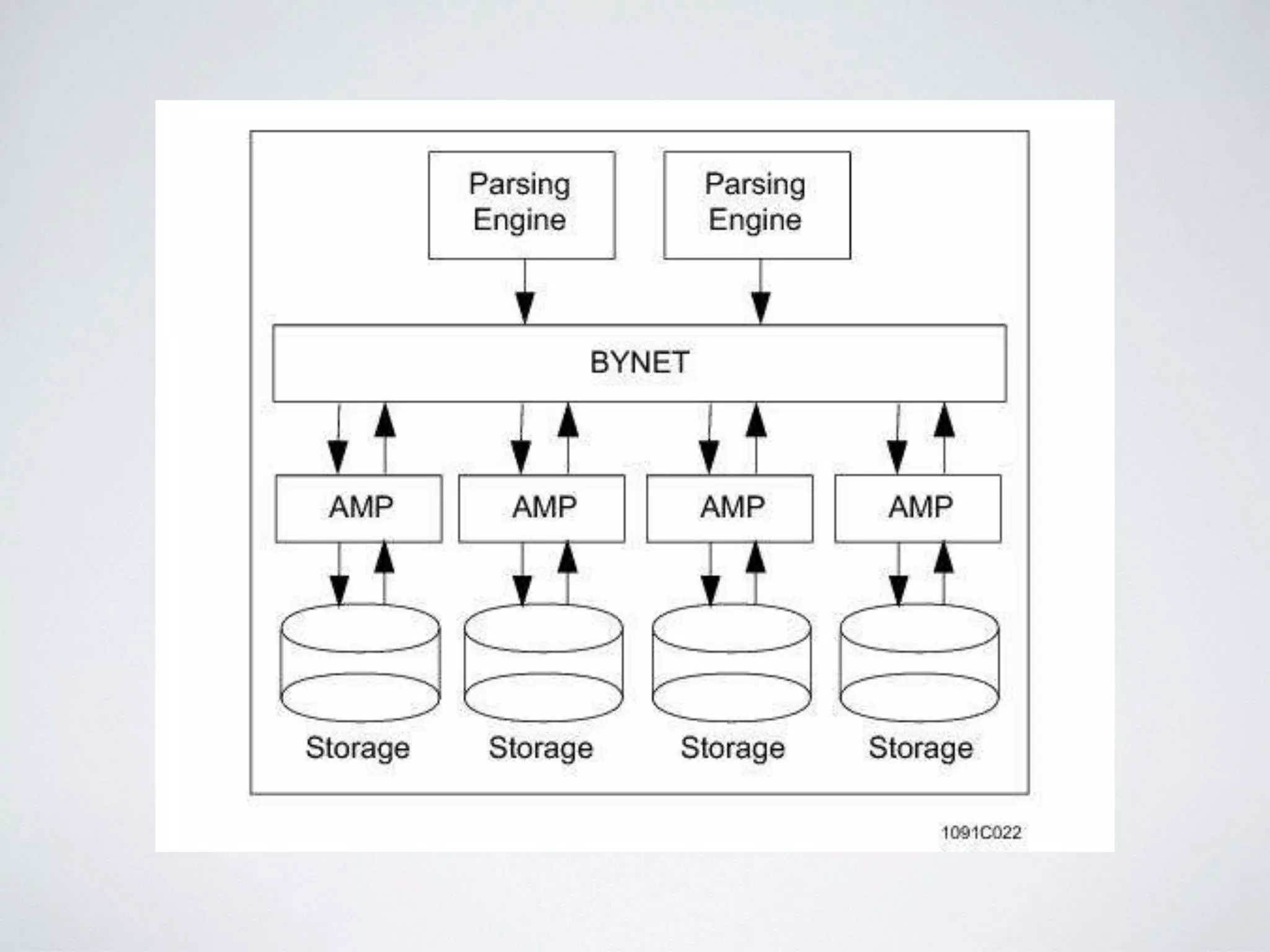

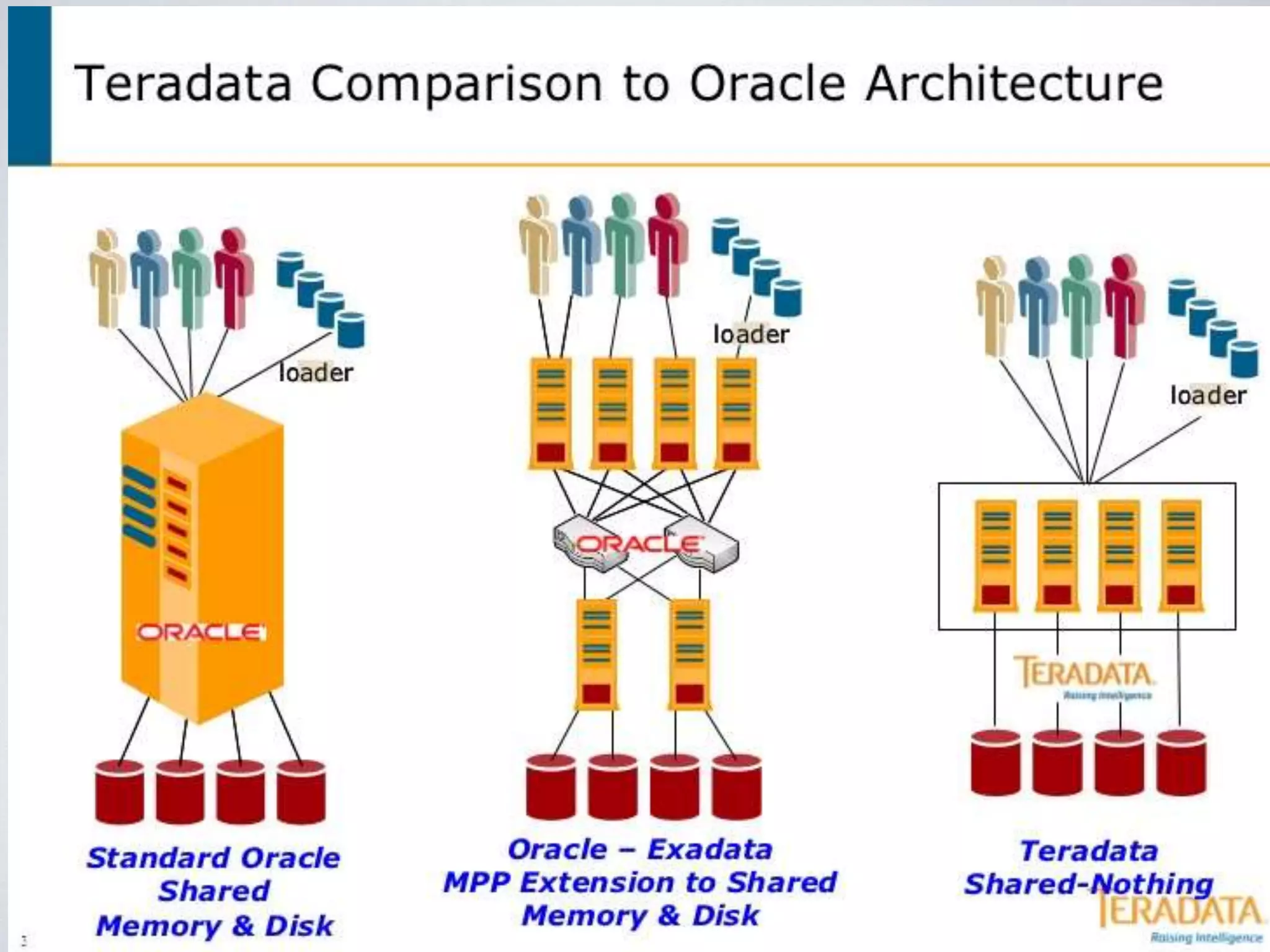

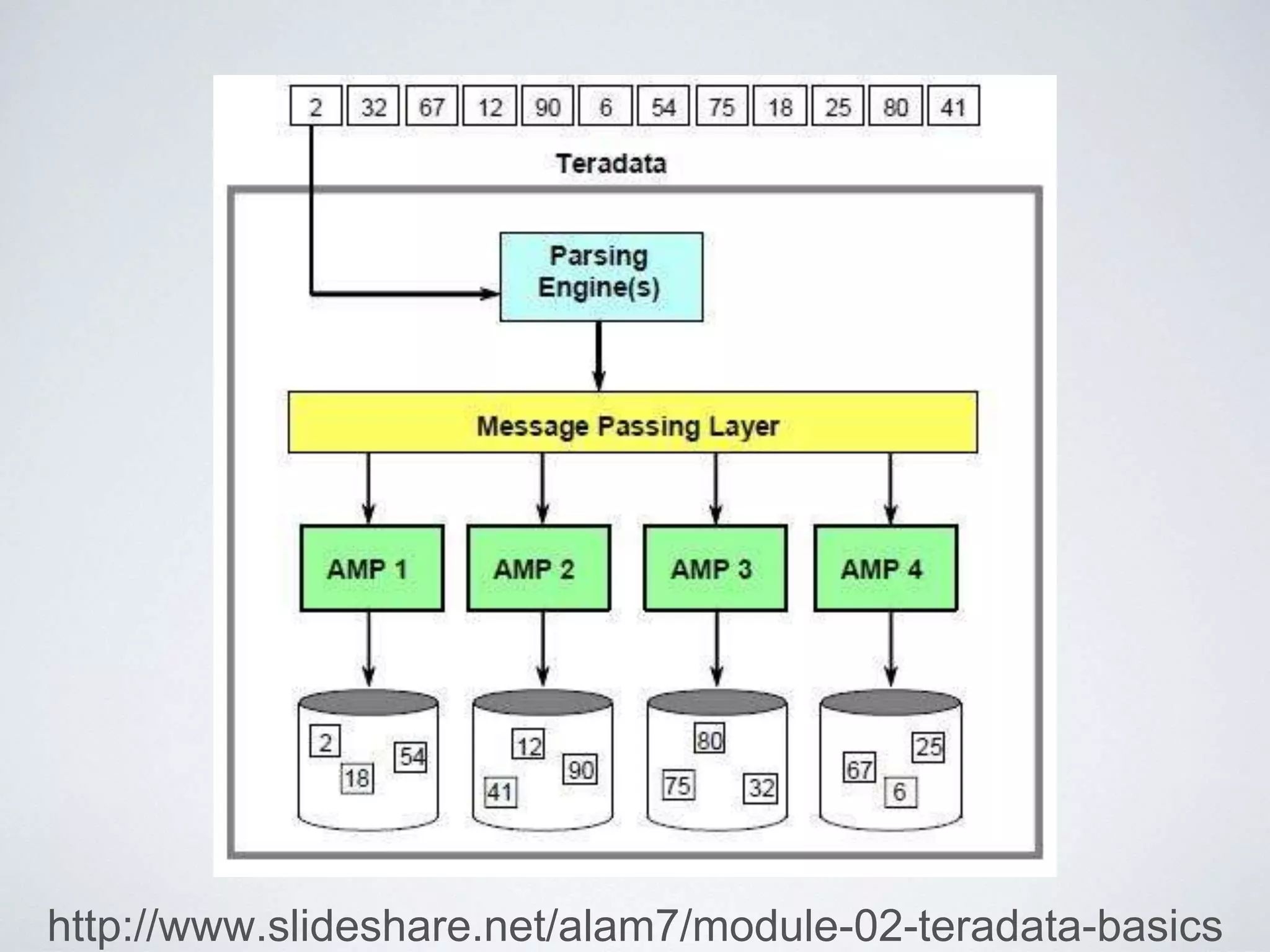

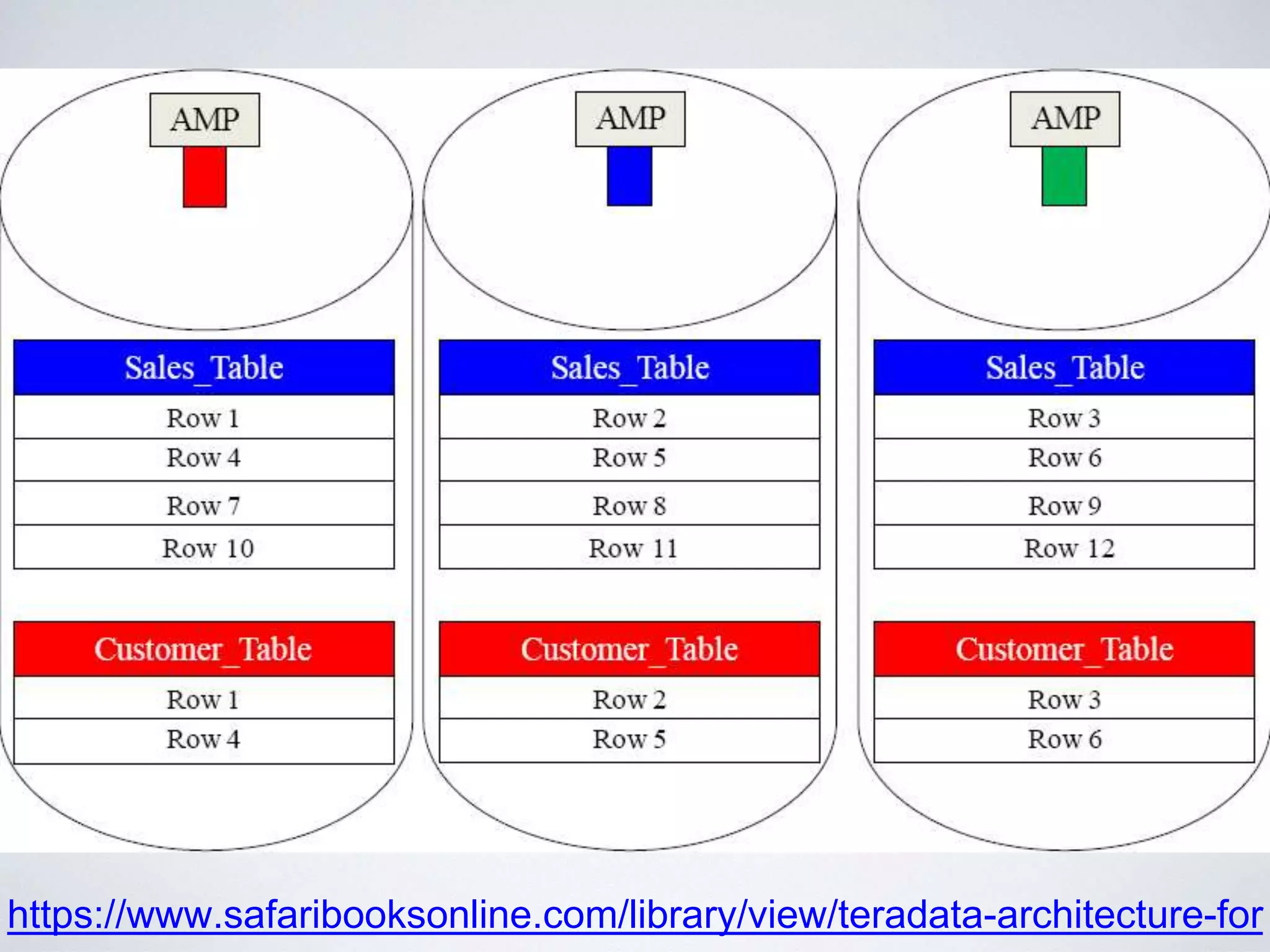

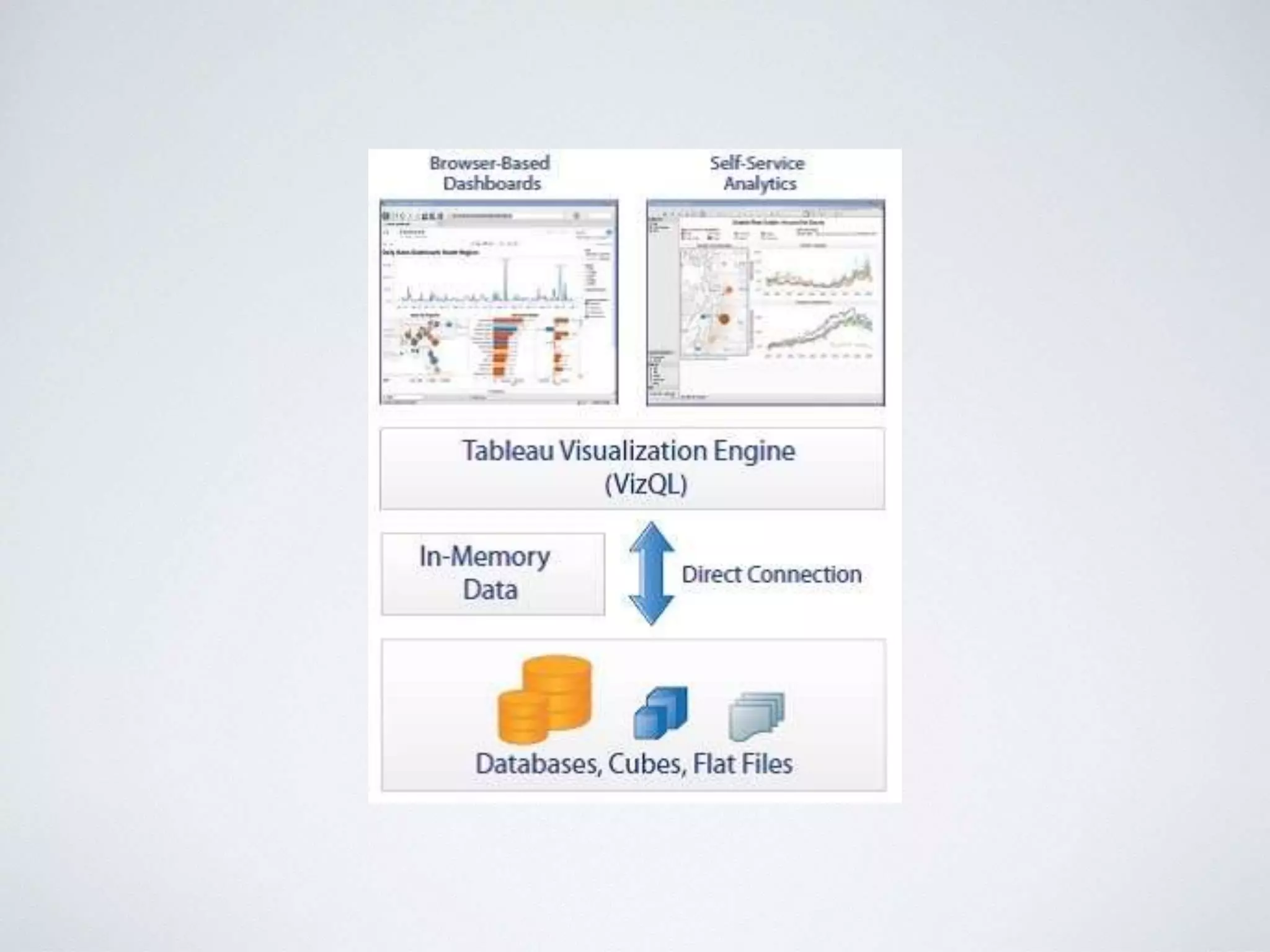

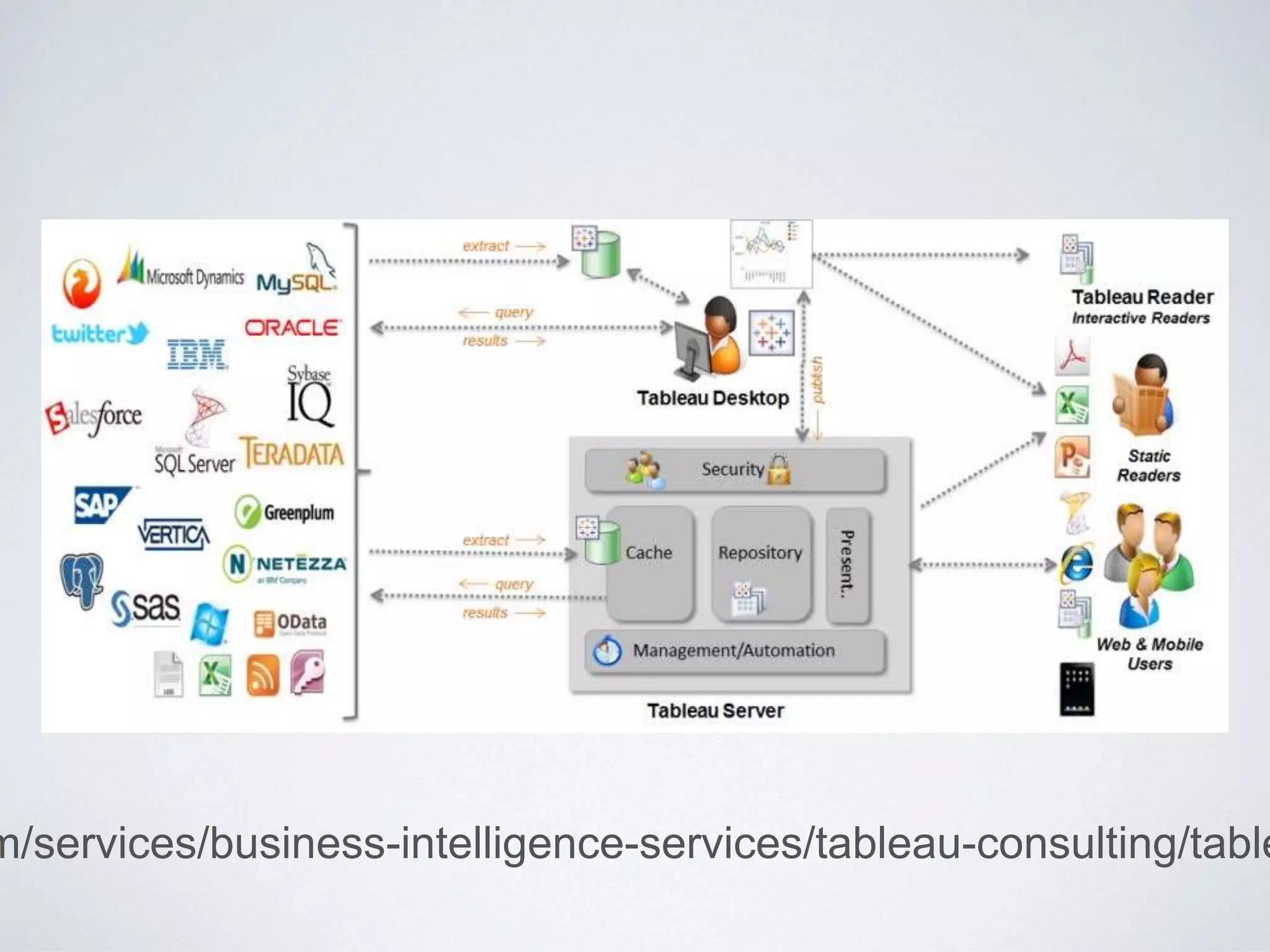

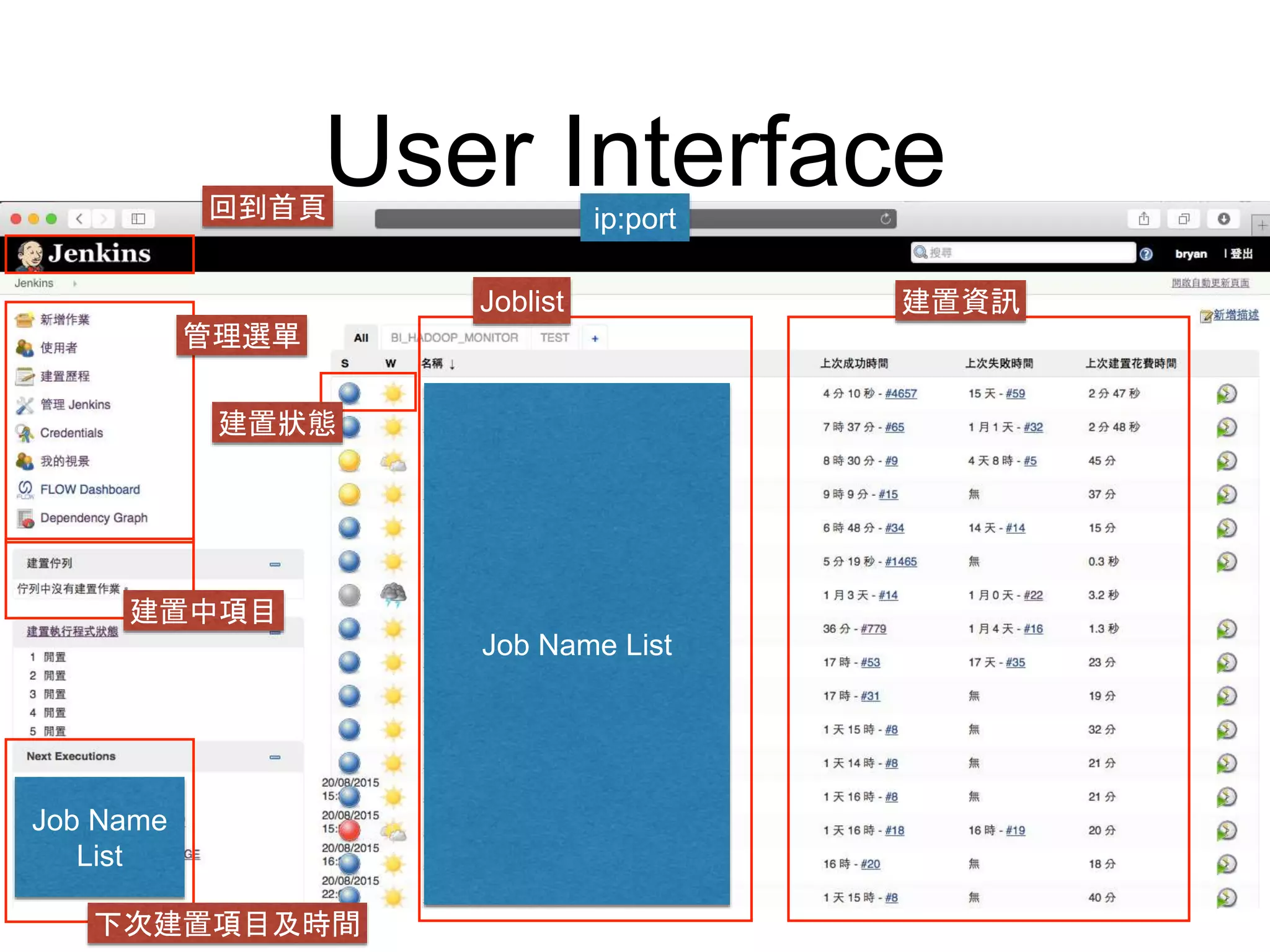

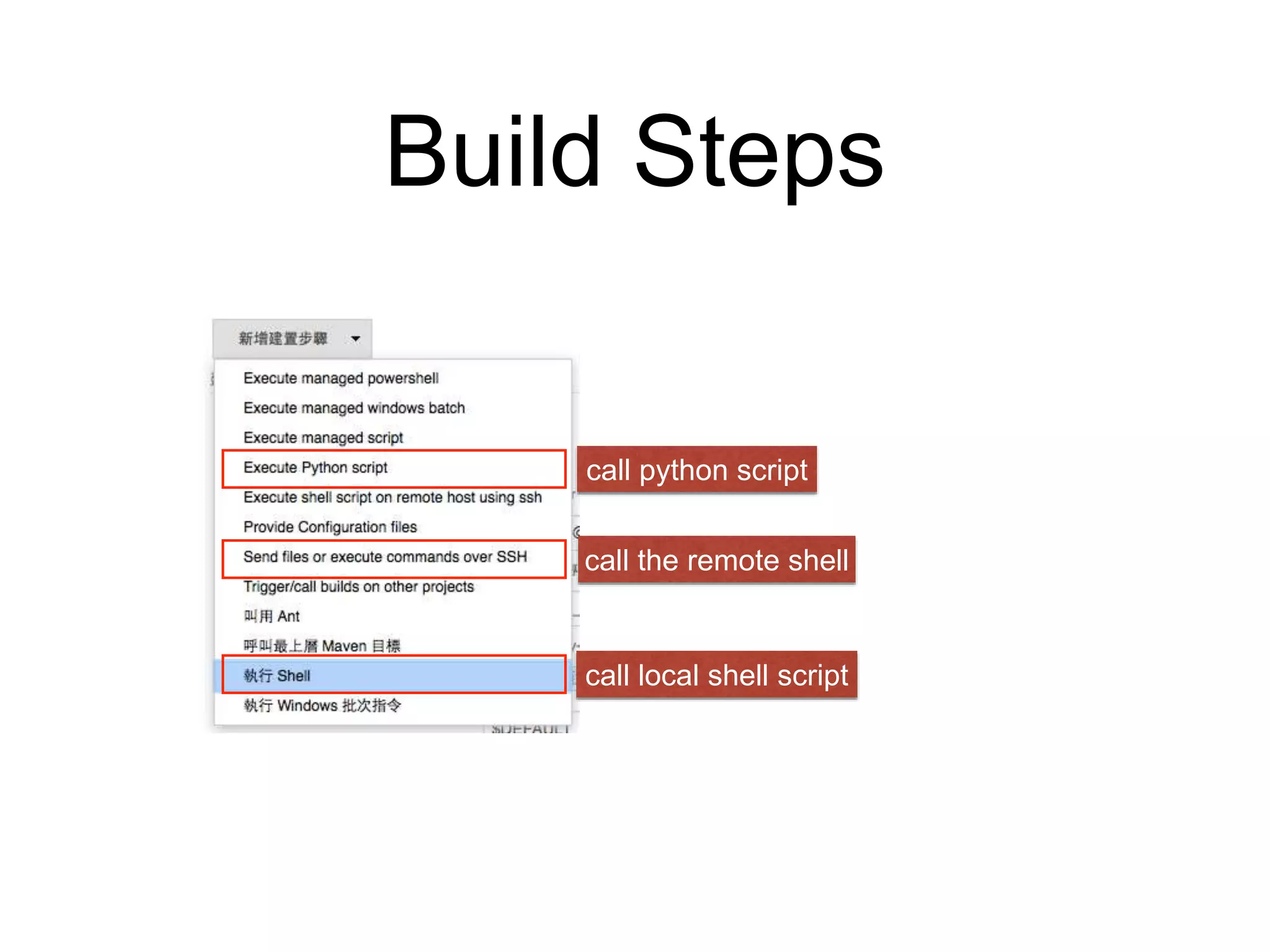

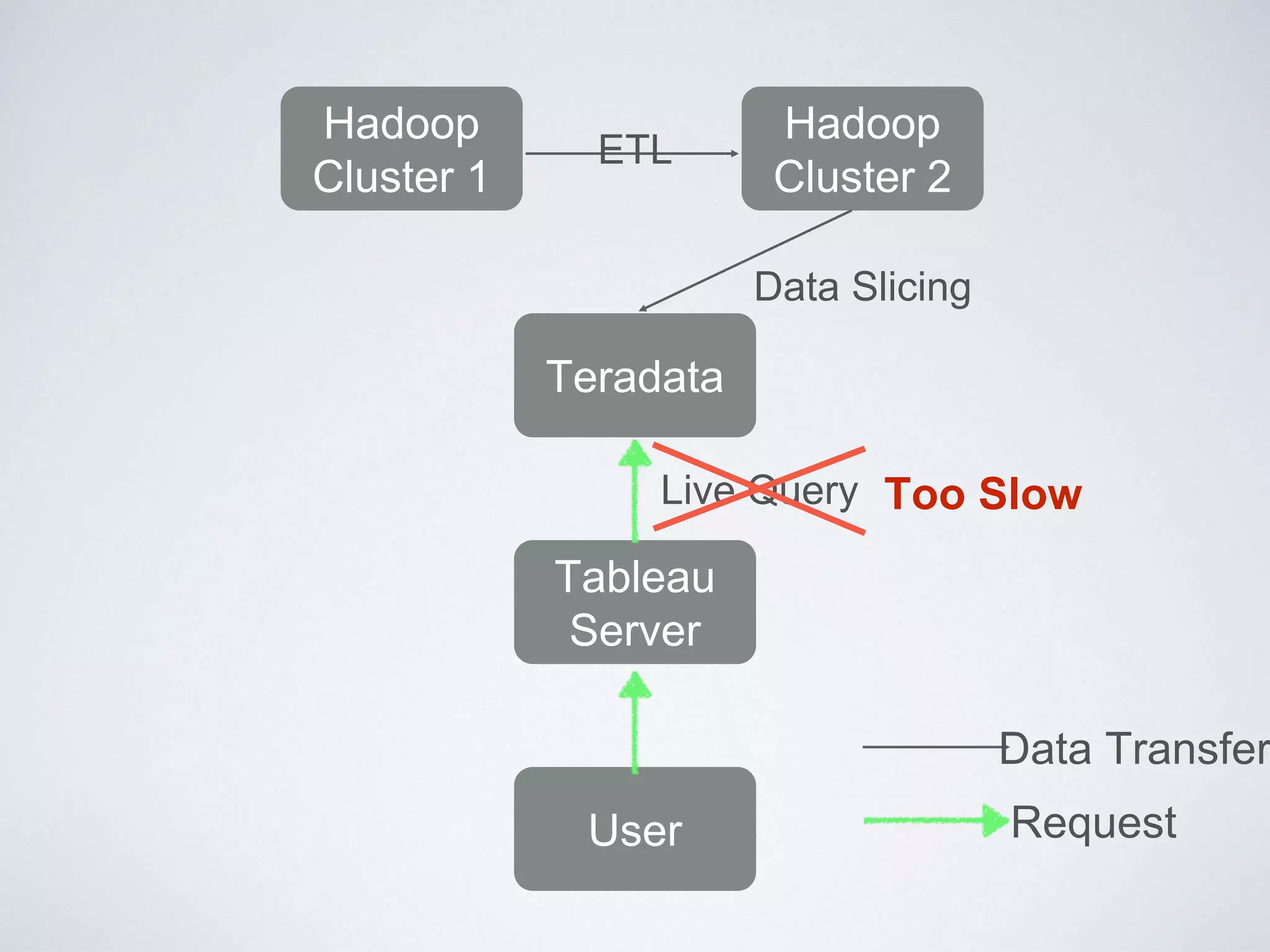

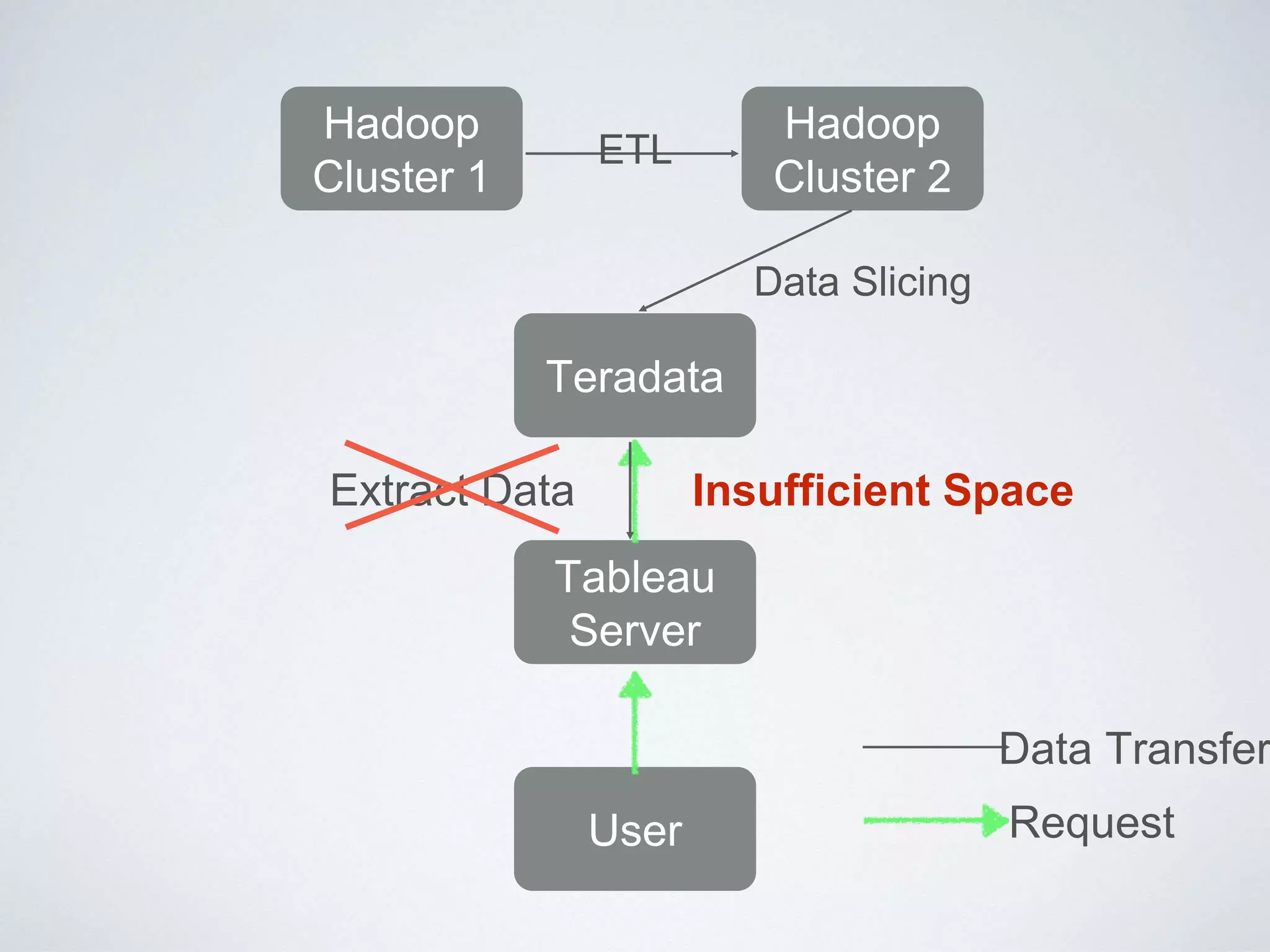

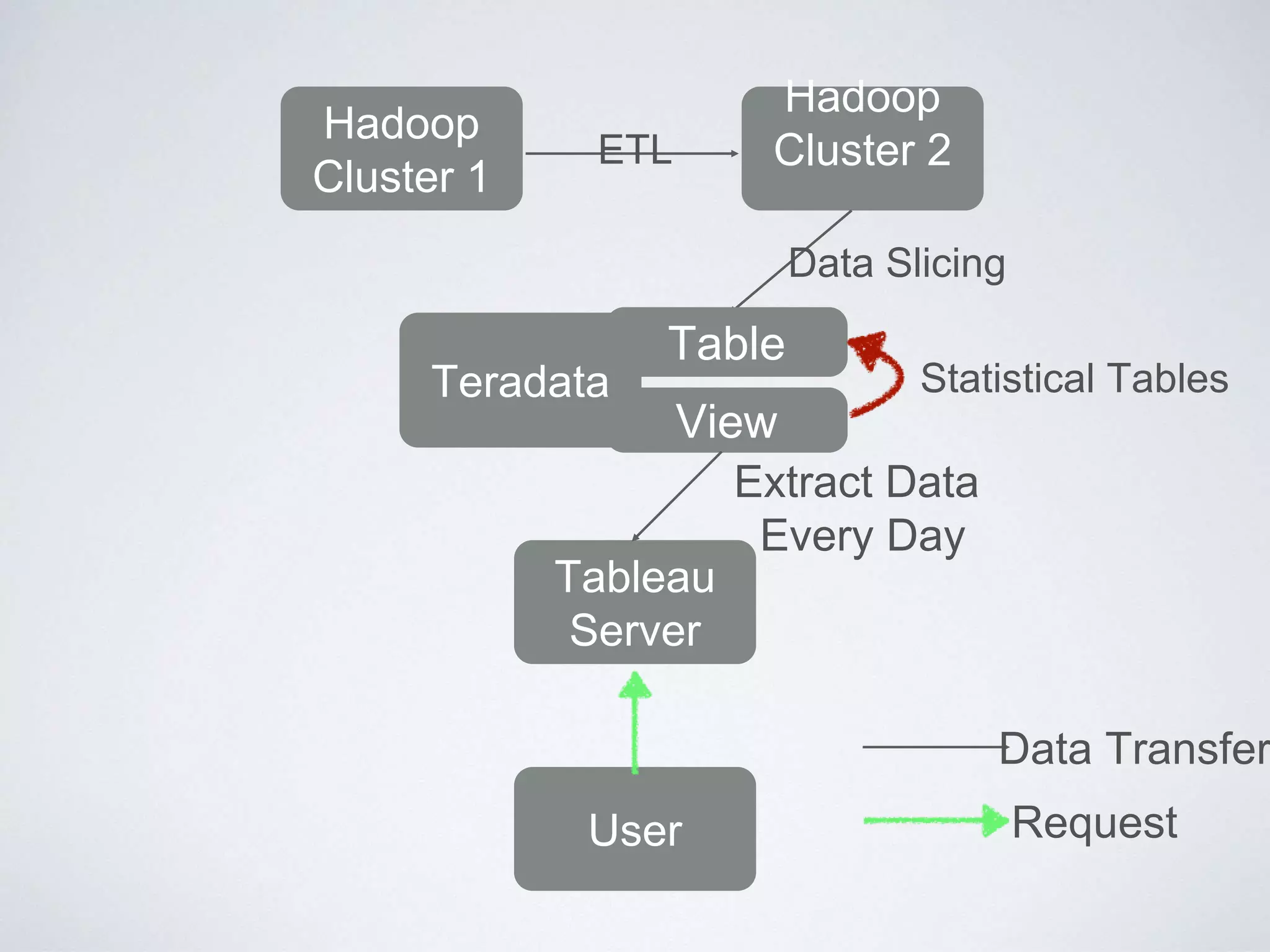

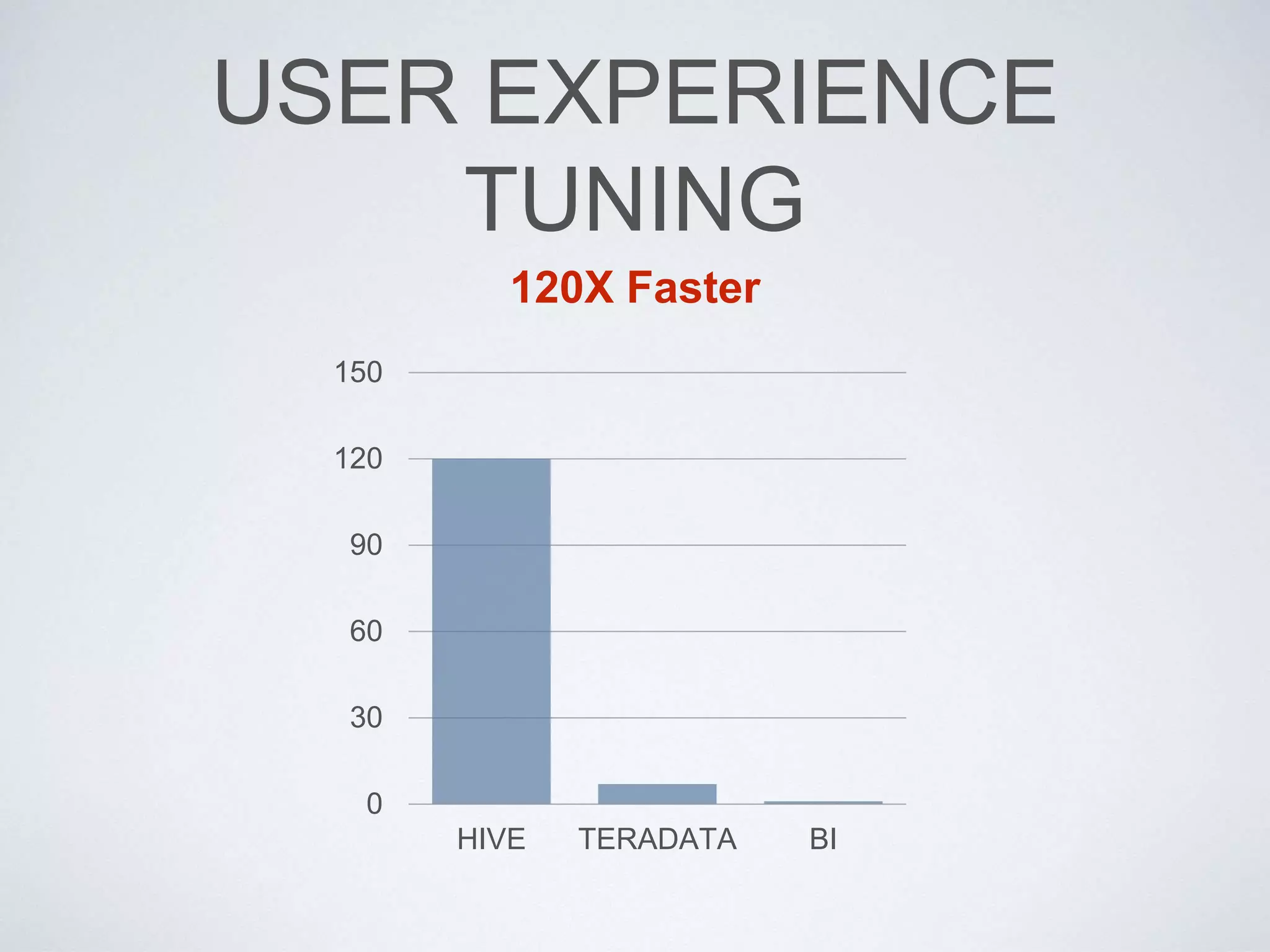

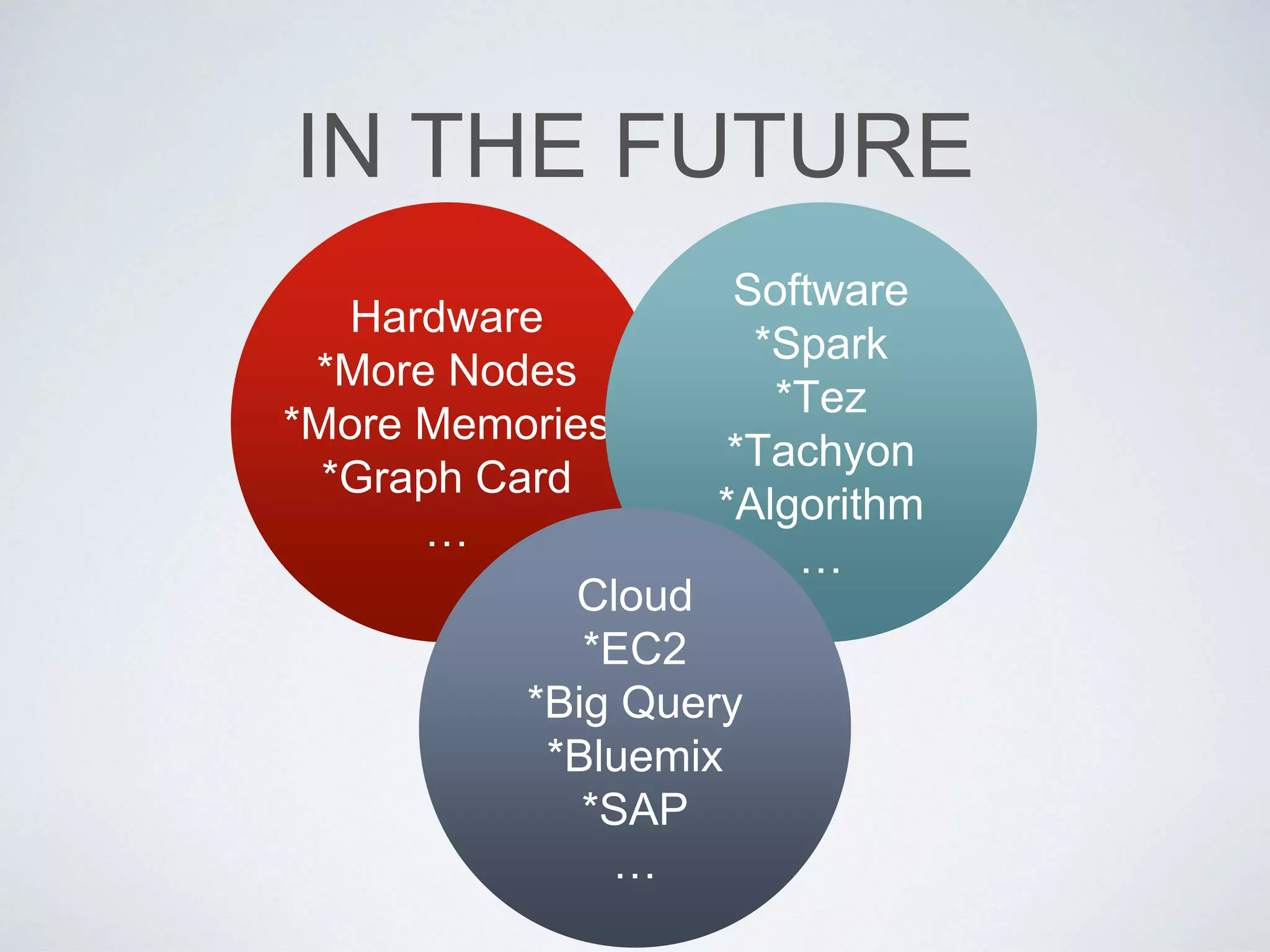

This document gives an overview of building a business intelligence (BI) system on a data lake ecosystem that combines Hadoop, Hive, Teradata, Tableau, and Jenkins. The goal is to handle the big data problems of high data volume, variety, and velocity in a cost-effective way. It proposes sample architectures covering ETL processes, data storage and querying, and visualization. Key considerations when choosing components include storage and management costs, processing time, and the balance between needs and costs. It concludes by suggesting that a good framework can support business growth over time within a given cost curve.